deeplearning.ai assignment: Class 1 Week 4 assignment4_1

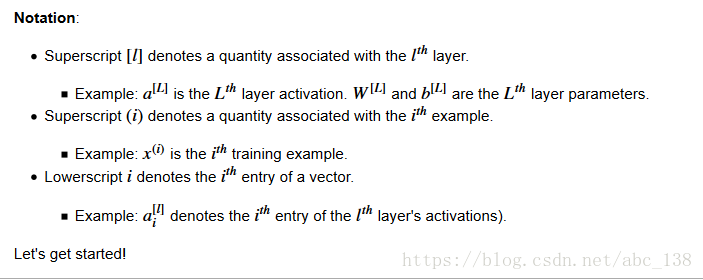

Building your Deep Neural Network: Step by Step

Welcome to your week 4 assignment (part 1 of 2)! You have previously trained a 2-layer Neural Network (with a single hidden layer). This week, you will build a deep neural network, with as many layers as you want!

- In this notebook, you will implement all the functions required to build a deep neural network.

- In the next assignment, you will use these functions to build a deep neural network for image classification.

After this assignment you will be able to:

- Use non-linear units like ReLU to improve your model

- Build a deeper neural network (with more than 1 hidden layer)

- Implement an easy-to-use neural network class

1 - Packages

Let's first import all the packages that you will need during this assignment.

- numpy is the main package for scientific computing with Python.

- matplotlib is a library to plot graphs in Python.

- dnn_utils provides some necessary functions for this notebook.

- testCases provides some test cases to assess the correctness of your functions

- np.random.seed(1) is used to keep all the random function calls consistent. It will help us grade your work. Please don't change the seed.
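As a small illustration of why the seed matters, the sketch below reseeds NumPy's global generator and draws the same array twice. The array shape is an arbitrary choice made for this example.

```python
import numpy as np

np.random.seed(1)
first = np.random.randn(2, 3)

np.random.seed(1)  # reseeding restarts the same pseudo-random sequence
second = np.random.randn(2, 3)

# Both draws are identical because they start from the same seed
same = np.array_equal(first, second)
print(same)
```

This is why changing the seed would change the printed values of the randomly initialized weights later in the notebook.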

import numpy as np

import h5py

import matplotlib.pyplot as plt

from testCases_v2 import *

from dnn_utils_v2 import sigmoid, sigmoid_backward, relu, relu_backward

%matplotlib inline

plt.rcParams['figure.figsize'] = (5.0, 4.0) # set default size of plots

plt.rcParams['image.interpolation'] = 'nearest'

plt.rcParams['image.cmap'] = 'gray'

%load_ext autoreload

%autoreload 2

np.random.seed(1)

2 - Outline of the Assignment

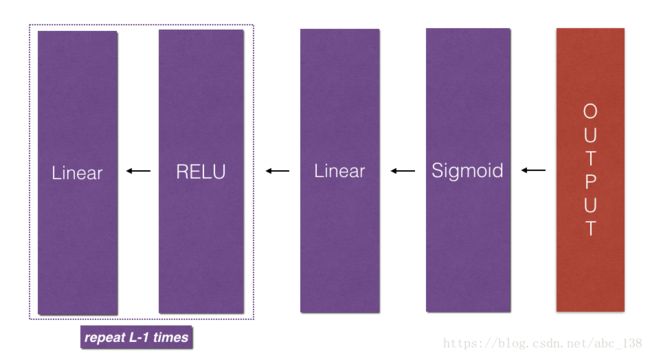

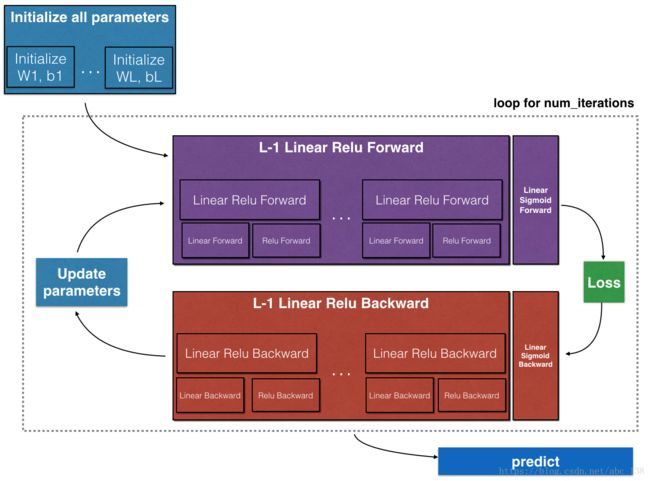

To build your neural network, you will implement several "helper functions". These helper functions will be used in the next assignment to build a two-layer neural network and an L-layer neural network. Each helper function comes with detailed instructions that walk you through the necessary steps. Here is an outline of this assignment. You will:

- Initialize the parameters for a two-layer network and for an L-layer neural network.

- Implement the forward propagation module (shown in purple in the figure below).

- Complete the LINEAR part of a layer's forward propagation step (resulting in $Z^{[l]}$).

- We give you the ACTIVATION function (relu/sigmoid).

- Combine the previous two steps into a new [LINEAR->ACTIVATION] forward function.

- Stack the [LINEAR->RELU] forward function L-1 times (for layers 1 through L-1) and add a [LINEAR->SIGMOID] at the end (for the final layer L). This gives you a new L_model_forward function.

- Compute the loss.

- Implement the backward propagation module (denoted in red in the figure below).

- Complete the LINEAR part of a layer's backward propagation step.

- We give you the gradient of the ACTIVATION function (relu_backward/sigmoid_backward).

- Combine the previous two steps into a new [LINEAR->ACTIVATION] backward function.

- Stack [LINEAR->RELU] backward L-1 times and add [LINEAR->SIGMOID] backward in a new L_model_backward function

- Finally update the parameters.

Note that for every forward function, there is a corresponding backward function. That is why at every step of your forward module you will be storing some values in a cache. The cached values are useful for computing gradients. In the backpropagation module you will then use the cache to calculate the gradients. This assignment will show you exactly how to carry out each of these steps.

3 - Initialization

You will write two helper functions that will initialize the parameters for your model. The first function will be used to initialize parameters for a two-layer model. The second one will generalize this initialization process to L layers.

3.1 - 2-layer Neural Network

Exercise: Create and initialize the parameters of the 2-layer neural network.

Instructions:

- The model's structure is: LINEAR -> RELU -> LINEAR -> SIGMOID.

- Use random initialization for the weight matrices. Use np.random.randn(shape) * 0.01 with the correct shape.

- Use zero initialization for the biases. Use np.zeros(shape).

# Initialize the parameters of a 2-layer neural network
def initialize_parameters(n_x, n_h, n_y):
    """
    Argument:
    n_x -- size of the input layer
    n_h -- size of the hidden layer
    n_y -- size of the output layer

    Returns:
    parameters -- python dictionary containing your parameters:
                    W1 -- weight matrix of shape (n_h, n_x)
                    b1 -- bias vector of shape (n_h, 1)
                    W2 -- weight matrix of shape (n_y, n_h)
                    b2 -- bias vector of shape (n_y, 1)
    """
    np.random.seed(1)

    # Small random weights break symmetry; biases can start at zero
    W1 = np.random.randn(n_h, n_x) * 0.01
    b1 = np.zeros((n_h, 1))
    W2 = np.random.randn(n_y, n_h) * 0.01
    b2 = np.zeros((n_y, 1))

    assert (W1.shape == (n_h, n_x))
    assert (b1.shape == (n_h, 1))
    assert (W2.shape == (n_y, n_h))
    assert (b2.shape == (n_y, 1))

    parameters = {"W1": W1,
                  "b1": b1,
                  "W2": W2,
                  "b2": b2}

    return parameters

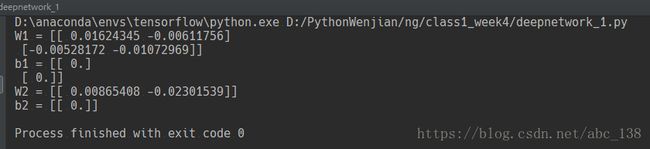

parameters = initialize_parameters(2,2,1)

print("W1 = " + str(parameters["W1"]))

print("b1 = " + str(parameters["b1"]))

print("W2 = " + str(parameters["W2"]))

print("b2 = " + str(parameters["b2"]))

3.2 - L-layer Neural Network

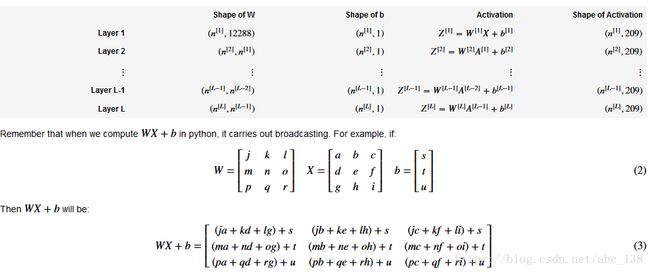

The initialization for a deeper L-layer neural network is more complicated because there are many more weight matrices and bias vectors. When completing initialize_parameters_deep, you should make sure that your dimensions match between each layer. Recall that $n^{[l]}$ is the number of units in layer l. Thus, for example, if the size of our input X is (12288, 209) (with m = 209 examples), then $W^{[l]}$ has shape $(n^{[l]}, n^{[l-1]})$, $b^{[l]}$ has shape $(n^{[l]}, 1)$, and $Z^{[l]}$ and $A^{[l]}$ have shape $(n^{[l]}, 209)$, with $n^{[0]} = 12288$.
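To make the dimension bookkeeping concrete, here is a minimal sketch that lists the expected shapes for each layer. The helper name layer_shapes and the hidden layer sizes are hypothetical choices for illustration; only the input size 12288 and m = 209 come from the text.

```python
def layer_shapes(layer_dims, m):
    """Return the expected shapes of W, b and Z for layers 1..L."""
    shapes = {}
    for l in range(1, len(layer_dims)):
        shapes[l] = {
            "W": (layer_dims[l], layer_dims[l - 1]),
            "b": (layer_dims[l], 1),
            "Z": (layer_dims[l], m),  # A has the same shape as Z
        }
    return shapes

# Hypothetical hidden sizes; 12288 input features and 209 examples as in the text
shapes = layer_shapes([12288, 20, 7, 5, 1], m=209)
for l, s in shapes.items():
    print(l, s)
```

Each W has as many columns as the previous layer has units, which is what lets the matrix product with the previous activations go through.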

Exercise: Implement initialization for an L-layer Neural Network.

Instructions:

- The model's structure is [LINEAR -> RELU] × (L-1) -> LINEAR -> SIGMOID. That is, it has L-1 layers using a ReLU activation function followed by an output layer with a sigmoid activation function.

- Use random initialization for the weight matrices. Use np.random.randn(shape) * 0.01.

- Use zeros initialization for the biases. Use np.zeros(shape).

- We will store $n^{[l]}$, the number of units in different layers, in a variable layer_dims. For example, the layer_dims for the "Planar Data classification model" from last week would have been [2,4,1]: there were two inputs, one hidden layer with 4 hidden units, and an output layer with 1 output unit. This means W1's shape was (4,2), b1 was (4,1), W2 was (1,4) and b2 was (1,1). Now you will generalize this to L layers!

- Here is the implementation for L = 1 (one-layer neural network). It should inspire you to implement the general case (L-layer neural network).

if L == 1:
    parameters["W" + str(L)] = np.random.randn(layer_dims[1], layer_dims[0]) * 0.01
    parameters["b" + str(L)] = np.zeros((layer_dims[1], 1))

# Parameter initialization for an L-layer neural network
def initialize_parameters_deep(layer_dims):
    """
    Arguments:
    layer_dims -- python array (list) containing the dimensions of each layer in our network

    Returns:
    parameters -- python dictionary containing your parameters "W1", "b1", ..., "WL", "bL":
                    Wl -- weight matrix of shape (layer_dims[l], layer_dims[l-1])
                    bl -- bias vector of shape (layer_dims[l], 1)
    """
    np.random.seed(3)
    parameters = {}
    L = len(layer_dims)  # number of layers, counting the input layer

    for l in range(1, L):
        parameters['W' + str(l)] = np.random.randn(layer_dims[l], layer_dims[l-1]) * 0.01
        parameters['b' + str(l)] = np.zeros((layer_dims[l], 1))

        assert (parameters['W' + str(l)].shape == (layer_dims[l], layer_dims[l-1]))
        assert (parameters['b' + str(l)].shape == (layer_dims[l], 1))

    return parameters

parameters = initialize_parameters_deep([5,4,3])

print("W1 = " + str(parameters["W1"]))

print("b1 = " + str(parameters["b1"]))

print("W2 = " + str(parameters["W2"]))

print("b2 = " + str(parameters["b2"]))

4 - Forward propagation module

Now that you have initialized your parameters, you will do the forward propagation module. You will start by implementing some basic functions that you will use later when implementing the model. You will complete three functions in this order:

- LINEAR

- LINEAR -> ACTIVATION where ACTIVATION will be either ReLU or Sigmoid.

- [LINEAR -> RELU] × (L-1) -> LINEAR -> SIGMOID (whole model)

4.1 - Linear Forward

The linear forward module (vectorized over all the examples) computes the following equation:

$$Z^{[l]} = W^{[l]} A^{[l-1]} + b^{[l]}$$

where $A^{[0]} = X$.
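As a rough illustration of the vectorized linear step Z = W A + b, the sketch below compares the one-line matrix form against an explicit loop over examples. The layer sizes and random inputs are made up for this example.

```python
import numpy as np

rng = np.random.default_rng(0)
A_prev = rng.standard_normal((3, 5))  # 3 units in the previous layer, 5 examples
W = rng.standard_normal((4, 3))       # 4 units in the current layer
b = rng.standard_normal((4, 1))

# Vectorized form: b of shape (4, 1) is broadcast across the 5 columns
Z = W @ A_prev + b

# Same computation, one example (column) at a time
Z_loop = np.stack(
    [W @ A_prev[:, i] + b[:, 0] for i in range(A_prev.shape[1])], axis=1
)

print(Z.shape, np.allclose(Z, Z_loop))
```

The bias is stored as a column vector precisely so that NumPy broadcasting adds it to every example without an explicit loop.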

# Forward propagation: linear part
def linear_forward(A, W, b):
    """
    Implement the linear part of a layer's forward propagation.

    Arguments:
    A -- activations from previous layer (or input data): (size of previous layer, number of examples)
    W -- weights matrix: numpy array of shape (size of current layer, size of previous layer)
    b -- bias vector, numpy array of shape (size of the current layer, 1)

    Returns:
    Z -- the input of the activation function, also called pre-activation parameter
    cache -- a python tuple containing "A", "W" and "b"; stored for computing the backward pass efficiently
    """
    Z = np.dot(W, A) + b

    assert (Z.shape == (W.shape[0], A.shape[1]))
    cache = (A, W, b)

    return Z, cache

A, W, b = linear_forward_test_case()

Z, linear_cache = linear_forward(A, W, b)

print("Z = " + str(Z))

# Implement the LINEAR -> ACTIVATION forward step (sigmoid or relu)
def linear_activation_forward(A_prev, W, b, activation):
    """
    Implement the forward propagation for the LINEAR->ACTIVATION layer

    Arguments:
    A_prev -- activations from previous layer (or input data): (size of previous layer, number of examples)
    W -- weights matrix: numpy array of shape (size of current layer, size of previous layer)
    b -- bias vector, numpy array of shape (size of the current layer, 1)
    activation -- the activation to be used in this layer, stored as a text string: "sigmoid" or "relu"

    Returns:
    A -- the output of the activation function, also called the post-activation value
    cache -- a python tuple containing "linear_cache" and "activation_cache";
             stored for computing the backward pass efficiently
    """
    if activation == "sigmoid":
        Z, linear_cache = linear_forward(A_prev, W, b)
        A, activation_cache = sigmoid(Z)
    elif activation == "relu":
        Z, linear_cache = linear_forward(A_prev, W, b)
        A, activation_cache = relu(Z)
    else:
        # Fail early instead of hitting an undefined variable below
        raise ValueError("activation must be 'sigmoid' or 'relu'")

    assert (A.shape == (W.shape[0], A_prev.shape[1]))
    cache = (linear_cache, activation_cache)

    return A, cache

A_prev, W, b = linear_activation_forward_test_case()

A, linear_activation_cache = linear_activation_forward(A_prev, W, b, activation = "sigmoid")

print("With sigmoid: A = " + str(A))

A, linear_activation_cache = linear_activation_forward(A_prev, W, b, activation = "relu")

print("With ReLU: A = " + str(A))

Note: In deep learning, the "[LINEAR->ACTIVATION]" computation is counted as a single layer in the neural network, not two layers.

d) L-Layer Model

For even more convenience when implementing the L-layer Neural Net, you will need a function that replicates the previous one (linear_activation_forward with RELU) L-1 times, then follows that with one linear_activation_forward with SIGMOID.

# Forward propagation: [LINEAR -> RELU] for the first L-1 layers, then [LINEAR -> SIGMOID] for the last layer
def L_model_forward(X, parameters):
    """
    Implement forward propagation for the [LINEAR->RELU]*(L-1)->LINEAR->SIGMOID computation

    Arguments:
    X -- data, numpy array of shape (input size, number of examples)
    parameters -- output of initialize_parameters_deep()

    Returns:
    AL -- last post-activation value
    caches -- list of caches containing:
                every cache of linear_relu_forward() (there are L-1 of them, indexed from 0 to L-2)
                the cache of linear_sigmoid_forward() (there is one, indexed L-1)
    """
    caches = []
    A = X
    L = len(parameters) // 2  # number of layers in the neural network

    # Implement [LINEAR -> RELU]*(L-1). Add "cache" to the "caches" list
    for l in range(1, L):
        A_prev = A
        A, cache = linear_activation_forward(A_prev, parameters["W" + str(l)], parameters["b" + str(l)], activation="relu")
        caches.append(cache)

    # Implement LINEAR -> SIGMOID. Add "cache" to the "caches" list
    AL, cache = linear_activation_forward(A, parameters["W" + str(L)], parameters["b" + str(L)], activation="sigmoid")
    caches.append(cache)

    assert (AL.shape == (1, X.shape[1]))

    return AL, caches

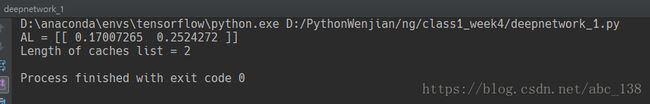

X,parameters = L_model_forward_test_case()

AL,caches = L_model_forward(X,parameters)

print("AL = " + str(AL))

print("Length of caches list = " + str(len(caches)))

5 - Cost function

Now you will implement forward and backward propagation. You need to compute the cost, because you want to check if your model is actually learning.

Exercise: Compute the cross-entropy cost J, using the following formula:

$$J = -\frac{1}{m} \sum_{i=1}^{m} \left( y^{(i)} \log\left(a^{[L](i)}\right) + \left(1 - y^{(i)}\right) \log\left(1 - a^{[L](i)}\right) \right) \tag{7}$$

# Cost function
def compute_cost(AL, Y):
    """
    Implement the cost function defined by equation (7).

    Arguments:
    AL -- probability vector corresponding to your label predictions, shape (1, number of examples)
    Y -- true "label" vector (for example: containing 0 if non-cat, 1 if cat), shape (1, number of examples)

    Returns:
    cost -- cross-entropy cost
    """
    m = Y.shape[1]

    # The dot products sum the per-example terms of equation (7)
    cost = (1. / m) * (-np.dot(Y, np.log(AL).T) - np.dot(1 - Y, np.log(1 - AL).T))

    cost = np.squeeze(cost)  # turns [[17]] into 17
    assert (cost.shape == ())

    return cost
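As a quick check of the cross-entropy cost, the sketch below evaluates it in two equivalent ways: as an element-wise sum over examples and as the dot-product form used in compute_cost. The probabilities and labels are made-up values for illustration.

```python
import numpy as np

AL = np.array([[0.8, 0.9, 0.4]])  # made-up predicted probabilities
Y = np.array([[1, 1, 0]])         # made-up labels
m = Y.shape[1]

# Element-wise form: average the per-example losses
cost_sum = -np.sum(Y * np.log(AL) + (1 - Y) * np.log(1 - AL)) / m

# Dot-product form: the row-by-column product performs the same sum
cost_dot = (-(Y @ np.log(AL).T) - ((1 - Y) @ np.log(1 - AL).T)) / m
cost_dot = float(np.squeeze(cost_dot))

print(cost_sum, cost_dot)
```

Both forms agree; the dot-product version simply avoids creating the intermediate element-wise array.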

Y,AL = compute_cost_test_case()

print("cost = " + str(compute_cost(AL, Y)))

6 - Backward propagation module

Just like with forward propagation, you will implement helper functions for backpropagation. Remember that back propagation is used to calculate the gradient of the loss function with respect to the parameters.

Reminder:

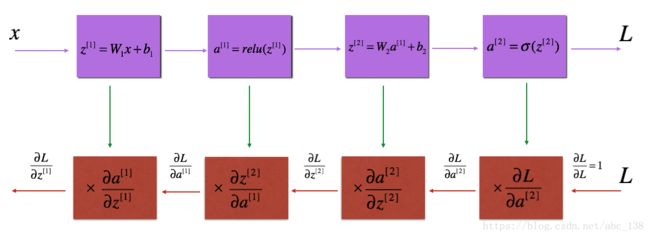

Figure 3 : Forward and Backward propagation for LINEAR->RELU->LINEAR->SIGMOID

The purple blocks represent the forward propagation, and the red blocks represent the backward propagation.

Now, similar to forward propagation, you are going to build the backward propagation in three steps:

- LINEAR backward

- LINEAR -> ACTIVATION backward where ACTIVATION computes the derivative of either the ReLU or sigmoid activation

- [LINEAR -> RELU] × (L-1) -> LINEAR -> SIGMOID backward (whole model)
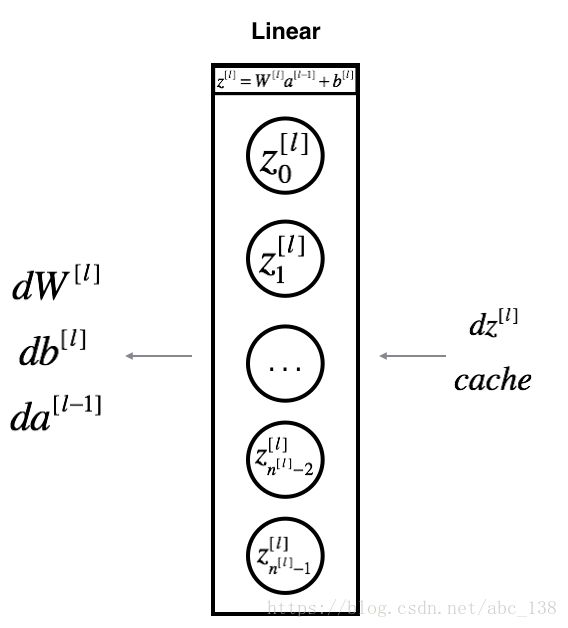

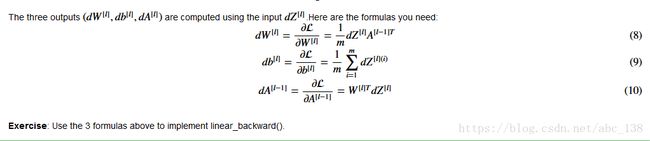

def linear_backward(dZ, cache):
    """
    Implement the linear portion of backward propagation for a single layer (layer l)

    Arguments:
    dZ -- Gradient of the cost with respect to the linear output (of current layer l)
    cache -- tuple of values (A_prev, W, b) coming from the forward propagation in the current layer

    Returns:
    dA_prev -- Gradient of the cost with respect to the activation (of the previous layer l-1), same shape as A_prev
    dW -- Gradient of the cost with respect to W (current layer l), same shape as W
    db -- Gradient of the cost with respect to b (current layer l), same shape as b
    """
    A_prev, W, b = cache
    m = A_prev.shape[1]  # number of examples

    dW = np.dot(dZ, A_prev.T) / m
    db = np.sum(dZ, axis=1, keepdims=True) / m
    dA_prev = np.dot(W.T, dZ)

    assert (dA_prev.shape == A_prev.shape)
    assert (dW.shape == W.shape)
    assert (db.shape == b.shape)

    return dA_prev, dW, db
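One way to gain confidence in the dW and db formulas is a numerical gradient check. The sketch below is an illustration under stated assumptions, not part of the assignment: it uses a toy per-example loss 0.5 * ||z||^2 averaged over m examples, and the helper name linear_backward_sketch is hypothetical. With that convention, dZ holds the per-example loss gradient and the division by m in dW and db produces the gradient of the averaged cost.

```python
import numpy as np

def linear_backward_sketch(dZ, A_prev, W):
    """Same formulas as linear_backward, taking the cached arrays directly."""
    m = A_prev.shape[1]
    dW = dZ @ A_prev.T / m
    db = np.sum(dZ, axis=1, keepdims=True) / m
    dA_prev = W.T @ dZ
    return dA_prev, dW, db

rng = np.random.default_rng(0)
A_prev = rng.standard_normal((3, 4))
W = rng.standard_normal((2, 3))
b = rng.standard_normal((2, 1))
m = A_prev.shape[1]

def toy_cost(W, b):
    # Toy cost: average over examples of 0.5 * ||z||^2
    Z = W @ A_prev + b
    return np.sum(0.5 * Z ** 2) / m

dZ = W @ A_prev + b  # gradient of 0.5 * ||z||^2 with respect to z is z
_, dW, db = linear_backward_sketch(dZ, A_prev, W)

def numerical_grad(f, x, eps=1e-6):
    """Central-difference estimate of df/dx, one entry at a time."""
    grad = np.zeros_like(x)
    for idx in np.ndindex(x.shape):
        plus, minus = x.copy(), x.copy()
        plus[idx] += eps
        minus[idx] -= eps
        grad[idx] = (f(plus) - f(minus)) / (2 * eps)
    return grad

dW_num = numerical_grad(lambda w: toy_cost(w, b), W)
db_num = numerical_grad(lambda v: toy_cost(W, v), b)
print(np.allclose(dW, dW_num, atol=1e-6), np.allclose(db, db_num, atol=1e-6))
```

The same finite-difference idea can be applied to a full network, at the price of one pair of forward passes per parameter.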

# Set up some test inputs

dZ, linear_cache = linear_backward_test_case()

dA_prev, dW, db = linear_backward(dZ, linear_cache)

print ("dA_prev = "+ str(dA_prev))

print ("dW = " + str(dW))

print ("db = " + str(db))

6.2 - Linear-Activation backward

Next, you will create a function, linear_activation_backward, that merges the two helper steps: linear_backward and the backward step for the activation.

To help you implement linear_activation_backward, we provided two backward functions:

sigmoid_backward: Implements the backward propagation for SIGMOID unit. You can call it as follows:

dZ = sigmoid_backward(dA, activation_cache)

relu_backward: Implements the backward propagation for RELU unit. You can call it as follows:

dZ = relu_backward(dA, activation_cache)

If g(.) is the activation function, sigmoid_backward and relu_backward compute

$$dZ^{[l]} = dA^{[l]} * g'(Z^{[l]})$$

where * denotes the element-wise product.
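The provided sigmoid_backward and relu_backward live in dnn_utils_v2, which is not shown here. The sketch below is a guess at what such helpers compute, assuming the activation cache is simply the pre-activation Z; the function names with the _sketch suffix are hypothetical.

```python
import numpy as np

def sigmoid_backward_sketch(dA, Z):
    """dZ = dA * g'(Z) with g the sigmoid, so g'(Z) = s * (1 - s)."""
    s = 1.0 / (1.0 + np.exp(-Z))
    return dA * s * (1.0 - s)

def relu_backward_sketch(dA, Z):
    """dZ = dA * g'(Z) with g the ReLU, so g'(Z) is 1 where Z > 0 and 0 elsewhere."""
    return dA * (Z > 0)

Z = np.array([[-1.0, 0.5], [2.0, -3.0]])  # made-up pre-activations
dA = np.ones_like(Z)                      # made-up upstream gradient

dZ_relu = relu_backward_sketch(dA, Z)
dZ_sigmoid = sigmoid_backward_sketch(dA, Z)
print(dZ_relu)
print(dZ_sigmoid)
```

In both cases the upstream gradient dA is scaled element-wise by the local slope of the activation, which is why Z has to be cached during the forward pass.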

Exercise: Implement the backpropagation for the LINEAR->ACTIVATION layer.

def linear_activation_backward(dA, cache, activation):
    """
    Implement the backward propagation for the LINEAR->ACTIVATION layer.

    Arguments:
    dA -- post-activation gradient for current layer l
    cache -- tuple of values (linear_cache, activation_cache) we store for computing backward propagation efficiently
    activation -- the activation to be used in this layer, stored as a text string: "sigmoid" or "relu"

    Returns:
    dA_prev -- Gradient of the cost with respect to the activation (of the previous layer l-1), same shape as A_prev
    dW -- Gradient of the cost with respect to W (current layer l), same shape as W
    db -- Gradient of the cost with respect to b (current layer l), same shape as b
    """
    linear_cache, activation_cache = cache

    if activation == "relu":
        dZ = relu_backward(dA, activation_cache)
        dA_prev, dW, db = linear_backward(dZ, linear_cache)
    elif activation == "sigmoid":
        dZ = sigmoid_backward(dA, activation_cache)
        dA_prev, dW, db = linear_backward(dZ, linear_cache)
    else:
        # Fail early instead of hitting an undefined variable below
        raise ValueError("activation must be 'sigmoid' or 'relu'")

    return dA_prev, dW, db

AL, linear_activation_cache = linear_activation_backward_test_case()

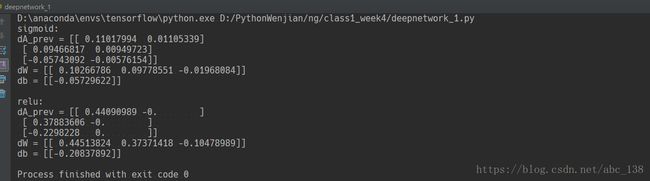

dA_prev, dW, db = linear_activation_backward(AL, linear_activation_cache, activation = "sigmoid")

print ("sigmoid:")

print ("dA_prev = "+ str(dA_prev))

print ("dW = " + str(dW))

print ("db = " + str(db) + "\n")

dA_prev, dW, db = linear_activation_backward(AL, linear_activation_cache, activation = "relu")

print ("relu:")

print ("dA_prev = "+ str(dA_prev))

print ("dW = " + str(dW))

print ("db = " + str(db))

6.3 - L-Model Backward

Now you will implement the backward function for the whole network. Recall that when you implemented the L_model_forward function, at each iteration you stored a cache which contains (X, W, b, and Z). In the backpropagation module, you will use those variables to compute the gradients. Therefore, in the L_model_backward function, you will iterate through all the hidden layers backward, starting from layer L. On each step, you will use the cached values for layer l to backpropagate through layer l. Figure 5 below shows the backward pass.

def L_model_backward(AL, Y, caches):
    """
    Implement the backward propagation for the [LINEAR->RELU] * (L-1) -> LINEAR -> SIGMOID group

    Arguments:
    AL -- probability vector, output of the forward propagation (L_model_forward())
    Y -- true "label" vector (containing 0 if non-cat, 1 if cat)
    caches -- list of caches containing:
                every cache of linear_activation_forward() with "relu" (it's caches[l], for l in range(L-1) i.e l = 0...L-2)
                the cache of linear_activation_forward() with "sigmoid" (it's caches[L-1])

    Returns:
    grads -- A dictionary with the gradients
             grads["dA" + str(l)] = ...
             grads["dW" + str(l)] = ...
             grads["db" + str(l)] = ...
    """
    grads = {}
    L = len(caches)  # the number of layers
    m = AL.shape[1]
    Y = Y.reshape(AL.shape)  # after this line, Y is the same shape as AL

    # Initializing the backpropagation
    ### START CODE HERE ### (1 line of code)
    dAL = -(np.divide(Y, AL) - np.divide((1 - Y), (1 - AL)))
    ### END CODE HERE ###

    # Lth layer (SIGMOID -> LINEAR) gradients. Inputs: "AL, Y, caches". Outputs: "grads["dAL"], grads["dWL"], grads["dbL"]"
    # Note: with this indexing, grads["dA" + str(l)] holds the gradient with respect to
    # the input activation of layer l (the dA_prev returned for that layer).
    ### START CODE HERE ### (approx. 2 lines)
    current_cache = caches[L - 1]
    grads["dA" + str(L)], grads["dW" + str(L)], grads["db" + str(L)] = linear_activation_backward(dAL, current_cache, "sigmoid")
    ### END CODE HERE ###

    for l in reversed(range(L - 1)):
        # lth layer: (RELU -> LINEAR) gradients.
        # Inputs: "grads["dA" + str(l + 2)], caches". Outputs: "grads["dA" + str(l + 1)], grads["dW" + str(l + 1)], grads["db" + str(l + 1)]"
        ### START CODE HERE ### (approx. 5 lines)
        current_cache = caches[l]
        dA_prev_temp, dW_temp, db_temp = linear_activation_backward(grads["dA" + str(l + 2)], current_cache, "relu")
        grads["dA" + str(l + 1)] = dA_prev_temp
        grads["dW" + str(l + 1)] = dW_temp
        grads["db" + str(l + 1)] = db_temp
        ### END CODE HERE ###

    return grads

AL, Y_assess, caches = L_model_backward_test_case()

grads = L_model_backward(AL, Y_assess, caches)

print ("dW1 = "+ str(grads["dW1"]))

print ("db1 = "+ str(grads["db1"]))

print ("dA1 = "+ str(grads["dA1"]))
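The backward pass in L_model_backward starts from dAL, the derivative of the cross-entropy loss with respect to the output AL. A useful sanity check, sketched below with made-up numbers, is that pushing dAL through the sigmoid derivative collapses to the familiar AL - Y.

```python
import numpy as np

Z = np.array([[-2.0, 0.3, 1.5]])  # made-up pre-activations of the output layer
Y = np.array([[0.0, 1.0, 1.0]])   # made-up labels
AL = 1.0 / (1.0 + np.exp(-Z))     # sigmoid output

# Derivative of the cross-entropy loss with respect to AL
dAL = -(np.divide(Y, AL) - np.divide(1 - Y, 1 - AL))

# Chain rule through the sigmoid: g'(Z) = AL * (1 - AL)
dZL = dAL * AL * (1 - AL)

print(np.allclose(dZL, AL - Y))
```

Some implementations use AL - Y directly for the last layer, which avoids dividing by values of AL close to 0 or 1.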

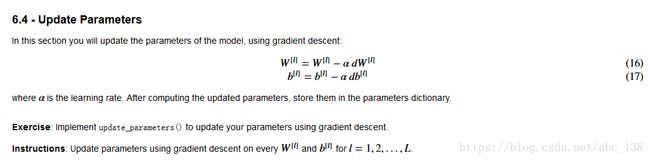

"""

更新参数

"""

def update_parameters(parameters, grads, learning_rate):

"""

Update parameters using gradient descent

Arguments:

parameters -- python dictionary containing your parameters

grads -- python dictionary containing your gradients, output of L_model_backward

Returns:

parameters -- python dictionary containing your updated parameters

parameters["W" + str(l)] = ...

parameters["b" + str(l)] = ...

"""

L = len(parameters) // 2 # number of layers in the neural network

# Update rule for each parameter. Use a for loop.

### START CODE HERE ### (≈ 3 lines of code)

for l in range(L):

parameters["W" + str(l + 1)] = parameters["W" + str(l + 1)] - learning_rate * grads["dW" + str(l + 1)]

parameters["b" + str(l + 1)] = parameters["b" + str(l + 1)] - learning_rate * grads["db" + str(l + 1)]

### END CODE HERE ###

return parameters
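To see the update rule in isolation, here is a toy sketch that applies the same step, parameter minus learning rate times gradient, to a single scalar. The cost (w - 3)^2, the starting point, and the helper name gradient_descent_step are made up for this illustration.

```python
def gradient_descent_step(w, dw, learning_rate):
    """One gradient descent update: move against the gradient."""
    return w - learning_rate * dw

w = 0.0  # arbitrary starting point
for _ in range(50):
    dw = 2.0 * (w - 3.0)  # gradient of the toy cost (w - 3)^2
    w = gradient_descent_step(w, dw, learning_rate=0.1)

print(w)  # approaches the minimizer w = 3
```

update_parameters does exactly this for every W and b in the dictionary, using the gradients returned by L_model_backward.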

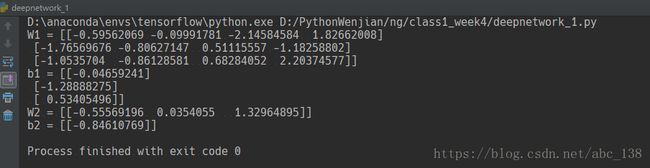

parameters, grads = update_parameters_test_case()

parameters = update_parameters(parameters, grads, 0.1)

print ("W1 = "+ str(parameters["W1"]))

print ("b1 = "+ str(parameters["b1"]))

print ("W2 = "+ str(parameters["W2"]))

print ("b2 = "+ str(parameters["b2"]))

7 - Conclusion

Congrats on implementing all the functions required for building a deep neural network!

We know it was a long assignment but going forward it will only get better. The next part of the assignment is easier.

In the next assignment you will put all these together to build two models:

- A two-layer neural network

- An L-layer neural network

You will in fact use these models to classify cat vs non-cat images!