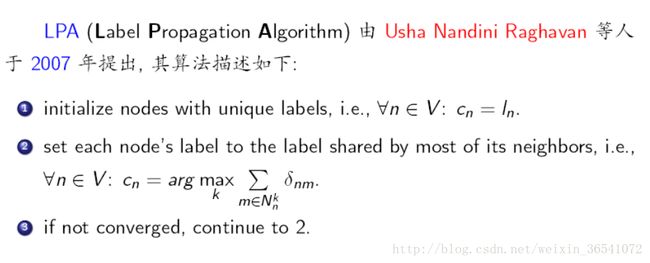

Community Detection Algorithms: Label Propagation (LPA)

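The label propagation algorithm (LPA) is fairly simple:

Step 1: assign every node a unique label.

Step 2: refresh the labels of all nodes round by round until the convergence criterion is met. In each round, a node's label is updated by the following rule:

For a given node, look at the labels of all its neighbors and count them, then assign the most frequent label to the current node. When several labels tie for the highest count, pick one of them at random.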


Note: in the algorithm, the notation $N_n^k$ denotes the set of all neighbors of node n whose label is k.
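The update rule above can be sketched in a few lines of plain Python. This is a minimal sketch under stated assumptions, not a reference implementation: the graph is assumed to be a dict that maps each node to a list of its neighbors, the names `dominant_labels` and `lpa_sweep` are made up for illustration, and a node keeps its current label when that label is already among the most frequent ones (an assumption that makes the stopping test well defined).

```python
import random
from collections import Counter


def dominant_labels(adj, labels, node):
    """Return every label that is most frequent among the neighbors of `node`."""
    counts = Counter(labels[v] for v in adj[node])
    best = max(counts.values())
    return [lab for lab, c in counts.items() if c == best]


def lpa_sweep(adj, labels, rng=random):
    """One asynchronous round: visit nodes in random order, update in place.

    Returns True if at least one label changed during the round.
    """
    order = list(adj)
    rng.shuffle(order)
    changed = False
    for node in order:
        if not adj[node]:                 # isolated node: nothing to count
            continue
        candidates = dominant_labels(adj, labels, node)
        if labels[node] not in candidates:
            labels[node] = rng.choice(candidates)   # random tie-break
            changed = True
    return changed


# Complete graph on 4 nodes; three nodes already share label "a".
adj = {0: [1, 2, 3], 1: [0, 2, 3], 2: [0, 1, 3], 3: [0, 1, 2]}
labels = {0: "a", 1: "a", 2: "a", 3: "b"}
first = lpa_sweep(adj, labels)    # node 3 switches to "a"
second = lpa_sweep(adj, labels)   # nothing left to change
print(labels, first, second)
```

Running sweeps until one returns `False` corresponds to "until the convergence criterion is met" in step 2.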

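The core idea of label propagation (LP) is very simple: similar data points should have the same label. The LP algorithm has two main steps: 1) construct the similarity matrix; 2) propagate boldly.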

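The LP algorithm is graph-based, so we first need to build a graph. We build one graph over all the data: each node is a data point, covering both labeled and unlabeled data, and the edge between node i and node j represents their similarity. There are many ways to construct this graph; here we assume it is fully connected, with the edge weight between node i and node j given by a Gaussian (RBF) similarity:

$$w_{ij} = \exp\left(-\frac{\|x_i - x_j\|^2}{\alpha^2}\right)$$

Here, α is a hyperparameter.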

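Another very common way to build the graph is the kNN graph: keep only the weights to each node's k nearest neighbors and set all others to 0, meaning no edge exists. The resulting similarity matrix is therefore sparse.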
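The two graph constructions can be sketched with NumPy as follows. This is only an illustration: it assumes a Gaussian (RBF) weight with bandwidth α, the helper names `rbf_weights` and `knn_sparsify` are hypothetical, and the kNN step here excludes a node from its own neighbor list, which is a design choice rather than something the text prescribes.

```python
import numpy as np


def rbf_weights(X, alpha):
    """Dense weights w_ij = exp(-||x_i - x_j||^2 / alpha^2) for all pairs."""
    sq_norms = np.sum(X ** 2, axis=1)
    # ||a - b||^2 = ||a||^2 + ||b||^2 - 2 a.b, clipped at 0 against round-off
    sq_dist = np.maximum(sq_norms[:, None] + sq_norms[None, :] - 2.0 * X @ X.T, 0.0)
    return np.exp(-sq_dist / alpha ** 2)


def knn_sparsify(W, k):
    """Keep, in each row, only the k largest weights to other nodes; zero the rest."""
    W = W.copy()
    np.fill_diagonal(W, 0.0)                  # a node is not its own neighbor here
    keep = np.argsort(-W, axis=1)[:, :k]      # column indices of the k largest weights
    mask = np.zeros_like(W, dtype=bool)
    np.put_along_axis(mask, keep, True, axis=1)
    return np.where(mask, W, 0.0)


# Four points on a line: two close pairs that are far from each other.
X = np.array([[0.0, 0.0], [1.0, 0.0], [5.0, 0.0], [6.0, 0.0]])
W = rbf_weights(X, alpha=1.0)
W_knn = knn_sparsify(W, k=1)
print(np.count_nonzero(W_knn, axis=1))        # one surviving edge per row
```

Note that a kNN graph built this way is not necessarily symmetric: i can be among the nearest neighbors of j without the reverse being true.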


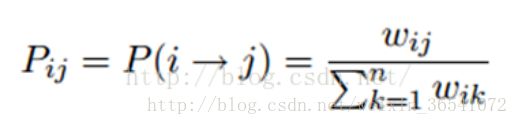

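The label propagation algorithm is very simple: labels spread along the edges between nodes. The larger an edge's weight, the more similar its two nodes are, and the more easily a label propagates across it. We define an N×N probability transition matrix P by normalizing each row of the weights:

$$P_{ij} = P(i \to j) = \frac{w_{ij}}{\sum_{k=1}^{N} w_{ik}}$$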

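$P_{ij}$ is the probability of moving from node i to node j. Suppose there are C classes and L labeled samples. We define an L×C label matrix $Y_L$ whose i-th row is the label indicator vector of the i-th sample: if sample i belongs to class j, the j-th element of that row is 1 and all others are 0. Likewise, we give the U unlabeled samples a U×C label matrix $Y_U$. Stacking the two gives an N×C soft label matrix $F = [Y_L; Y_U]$, where N = L + U.

"Soft label" means that we keep, for each sample i, the probability of belonging to every class, rather than a mutually exclusive assignment in which the sample belongs to exactly one class with probability 1. Of course, when the class of sample i is finally decided, we take the max, that is, the class with the highest probability. F contains $Y_U$, which is unknown at the start, so what should its initial value be? It does not matter; any value will do.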
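As a small illustration of these definitions, the sketch below row-normalizes a weight matrix into P and stacks a one-hot $Y_L$ on top of an arbitrary $Y_U$. The function names and the `fill` parameter are made up for this example, and class labels are assumed to be integers in 0 .. C-1.

```python
import numpy as np


def transition_matrix(W):
    """Row-normalize weights so that each row of P sums to 1."""
    return W / W.sum(axis=1, keepdims=True)


def initial_soft_labels(labels, num_unlabeled, num_classes, fill=0.0):
    """Stack one-hot Y_L on top of an arbitrary Y_U to form F = [Y_L; Y_U]."""
    Y_L = np.zeros((len(labels), num_classes))
    Y_L[np.arange(len(labels)), labels] = 1.0        # one 1 per labeled row
    Y_U = np.full((num_unlabeled, num_classes), fill)
    return np.vstack([Y_L, Y_U])


W = np.array([[1.0, 3.0], [2.0, 2.0]])
P = transition_matrix(W)          # rows: [0.25, 0.75] and [0.5, 0.5]
F = initial_soft_labels([0, 1], num_unlabeled=2, num_classes=2)
print(P)
print(F)
```

The `fill` value plays the role of "any value will do": it only sets the starting point of the unlabeled rows.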

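At long last, the simple LP algorithm is as follows:

1) Propagate: $F = PF$

2) Reset the labels of the labeled samples in F: $F_L = Y_L$

3) Repeat steps 1) and 2) until F converges.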

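Step 1) multiplies the matrix P by the matrix F; in this step every node spreads its label to the other nodes with the probabilities given by P. The more similar two nodes are (the closer they are in Euclidean space), the more easily one takes on the other's label, that is, the more readily they band together. Step 2) is crucial: the labels of the labeled data are fixed in advance and must not be carried away, so after every propagation they have to return to their original values. As the labeled data keep pushing their labels outward, the class boundaries end up passing over the high-density regions and settling in the low-density gaps. In effect, the labeled samples of each class carve out their own spheres of influence.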
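To make the three steps concrete, here is a minimal worked example on a four-node chain. The graph, the node ordering (labeled nodes first) and the tolerance are assumptions chosen for illustration only.

```python
import numpy as np

# Chain graph 0 - 2 - 3 - 1 with unit edge weights.
# Nodes 0 and 1 are labeled (class 0 and class 1); nodes 2 and 3 are unlabeled.
W = np.zeros((4, 4))
for i, j in [(0, 2), (2, 3), (3, 1)]:
    W[i, j] = W[j, i] = 1.0
P = W / W.sum(axis=1, keepdims=True)     # row-normalized transition matrix

Y_L = np.eye(2)                          # one-hot labels of nodes 0 and 1
F = np.vstack([Y_L, np.zeros((2, 2))])   # unlabeled rows start at an arbitrary value

for _ in range(200):
    F_new = P @ F                        # step 1: propagate
    F_new[:2] = Y_L                      # step 2: clamp the labeled rows
    converged = np.abs(F_new - F).sum() < 1e-12
    F = F_new
    if converged:                        # step 3: stop once F no longer changes
        break

print(np.round(F[2:], 4))                # soft labels of nodes 2 and 3
print(F[2:].argmax(axis=1))              # hard labels
```

On this chain the unlabeled nodes settle at (2/3, 1/3) and (1/3, 2/3): each one leans toward the labeled node it sits next to, while still keeping some probability for the other class.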

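A transformed LP algorithm

Each iteration computes the soft label matrix $F = [Y_L; Y_U]$, but $Y_L$ is known, so computing it is pointless, and step 2) has to restore it anyway. All we care about is $Y_U$, so can we compute only $Y_U$? Yes. We partition the matrix P into blocks for the labeled (L) and unlabeled (U) nodes:

$$P = \begin{bmatrix} P_{LL} & P_{LU} \\ P_{UL} & P_{UU} \end{bmatrix}$$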
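Writing P in blocks as [[P_LL, P_LU], [P_UL, P_UU]] (labeled nodes first) and reading off the unlabeled rows of F = PF with the labeled rows held at Y_L gives the update F_U = P_UU F_U + P_UL Y_L. The sketch below iterates that update on a small chain graph chosen for illustration; the variable names are assumptions, and the direct linear solve at the end is included only as a cross-check of the fixed point, not as part of the algorithm described in the text.

```python
import numpy as np

num_labeled = 2
# Chain graph 0 - 2 - 3 - 1; same ordering as F = [Y_L; Y_U]: labeled nodes first.
P = np.array([
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
    [0.5, 0.0, 0.0, 0.5],
    [0.0, 0.5, 0.5, 0.0],
])
Y_L = np.eye(2)                           # one-hot labels of nodes 0 and 1

P_UL = P[num_labeled:, :num_labeled]      # rows: unlabeled nodes, columns: labeled nodes
P_UU = P[num_labeled:, num_labeled:]      # rows and columns: unlabeled nodes

F_U = np.zeros((2, 2))                    # arbitrary start
for _ in range(200):
    F_U = P_UU @ F_U + P_UL @ Y_L         # only the unlabeled rows are updated

# Fixed point of the update, solved directly as a cross-check.
F_U_direct = np.linalg.solve(np.eye(2) - P_UU, P_UL @ Y_L)
print(np.round(F_U, 4))
print(np.allclose(F_U, F_U_direct))
```

Compared with the full iteration, each step multiplies a U×U block instead of the whole N×N matrix, and no clamping step is needed.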

The code is as follows:

    import numpy as np

    # Return the indices of the k nearest neighbors of `query` in `dataSet`.
    # If `query` is itself a row of `dataSet`, it is returned as its own nearest neighbor.
    def navie_knn(dataSet, query, k):
        numSamples = dataSet.shape[0]

        ## step 1: calculate the squared Euclidean distance to every sample
        diff = np.tile(query, (numSamples, 1)) - dataSet
        squaredDiff = diff ** 2
        squaredDist = np.sum(squaredDiff, axis=1)  # sum is performed by row

        ## step 2: sort the distances
        sortedDistIndices = np.argsort(squaredDist)
        if k > len(sortedDistIndices):
            k = len(sortedDistIndices)

        return sortedDistIndices[0:k]

    # Build a big graph (row-normalized weight matrix).
    def buildGraph(MatX, kernel_type, rbf_sigma=None, knn_num_neighbors=None):
        num_samples = MatX.shape[0]
        affinity_matrix = np.zeros((num_samples, num_samples), np.float32)
        if kernel_type == 'rbf':
            if rbf_sigma is None:
                raise ValueError('You should input a sigma of rbf kernel!')
            for i in range(num_samples):
                row_sum = 0.0
                for j in range(num_samples):
                    diff = MatX[i, :] - MatX[j, :]
                    affinity_matrix[i][j] = np.exp(np.sum(diff**2) / (-2.0 * rbf_sigma**2))
                    row_sum += affinity_matrix[i][j]
                affinity_matrix[i][:] /= row_sum  # each row sums to 1
        elif kernel_type == 'knn':
            if knn_num_neighbors is None:
                raise ValueError('You should input a k of knn kernel!')
            for i in range(num_samples):
                k_neighbors = navie_knn(MatX, MatX[i, :], knn_num_neighbors)
                # equal weight per neighbor; dividing by the actual neighbor count
                # keeps the row sum at 1 even when k exceeds the number of samples
                affinity_matrix[i][k_neighbors] = 1.0 / len(k_neighbors)
        else:
            raise NameError('Not support kernel type! You can use knn or rbf!')

        return affinity_matrix

    # label propagation
    def labelPropagation(Mat_Label, Mat_Unlabel, labels, kernel_type='rbf', rbf_sigma=1.5,
                         knn_num_neighbors=10, max_iter=500, tol=1e-3):
        # initialize
        num_label_samples = Mat_Label.shape[0]
        num_unlabel_samples = Mat_Unlabel.shape[0]
        num_samples = num_label_samples + num_unlabel_samples
        labels_list = np.unique(labels)
        num_classes = len(labels_list)

        MatX = np.vstack((Mat_Label, Mat_Unlabel))
        # one-hot rows for the labeled samples; labels must be integers 0 .. num_classes-1
        clamp_data_label = np.zeros((num_label_samples, num_classes), np.float32)
        for i in range(num_label_samples):
            clamp_data_label[i][labels[i]] = 1.0

        label_function = np.zeros((num_samples, num_classes), np.float32)
        label_function[0:num_label_samples] = clamp_data_label
        label_function[num_label_samples:num_samples] = -1  # arbitrary initial value

        # graph construction
        affinity_matrix = buildGraph(MatX, kernel_type, rbf_sigma, knn_num_neighbors)

        # start to propagate
        iteration = 0
        pre_label_function = np.zeros((num_samples, num_classes), np.float32)
        changed = np.abs(pre_label_function - label_function).sum()
        while iteration < max_iter and changed > tol:
            print("---> Iteration %d/%d, changed: %f" % (iteration, max_iter, changed))

            pre_label_function = label_function
            iteration += 1

            # propagation
            label_function = np.dot(affinity_matrix, label_function)

            # clamp
            label_function[0:num_label_samples] = clamp_data_label

            # check convergence
            changed = np.abs(pre_label_function - label_function).sum()

        # get the final labels of the unlabeled data
        unlabel_data_labels = np.zeros(num_unlabel_samples)
        for i in range(num_unlabel_samples):
            unlabel_data_labels[i] = np.argmax(label_function[i + num_label_samples])

        return unlabel_data_labels

Test code:

    import math

    import numpy as np

    from labelPropagation import labelPropagation

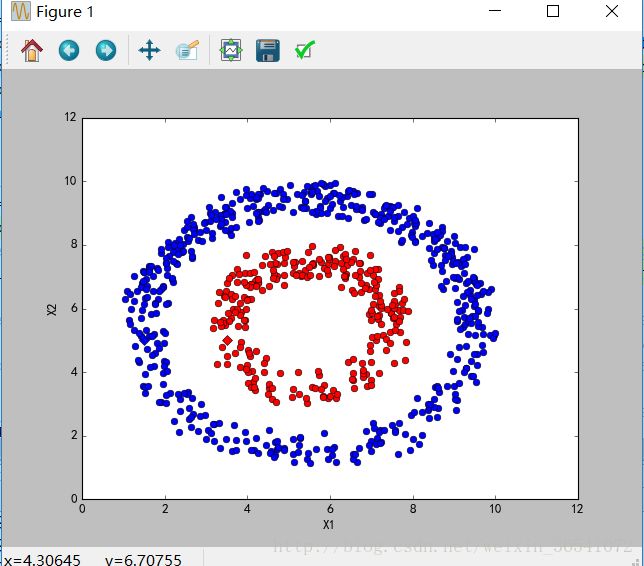

    # Plot labeled points as diamonds and unlabeled points as circles, colored by class.
    def show(Mat_Label, labels, Mat_Unlabel, unlabel_data_labels):
        import matplotlib.pyplot as plt

        for i in range(Mat_Label.shape[0]):
            if int(labels[i]) == 0:
                plt.plot(Mat_Label[i, 0], Mat_Label[i, 1], 'Dr')
            elif int(labels[i]) == 1:
                plt.plot(Mat_Label[i, 0], Mat_Label[i, 1], 'Db')
            else:
                plt.plot(Mat_Label[i, 0], Mat_Label[i, 1], 'Dy')

        for i in range(Mat_Unlabel.shape[0]):
            if int(unlabel_data_labels[i]) == 0:
                plt.plot(Mat_Unlabel[i, 0], Mat_Unlabel[i, 1], 'or')
            elif int(unlabel_data_labels[i]) == 1:
                plt.plot(Mat_Unlabel[i, 0], Mat_Unlabel[i, 1], 'ob')
            else:
                plt.plot(Mat_Unlabel[i, 0], Mat_Unlabel[i, 1], 'oy')

        plt.xlabel('X1'); plt.ylabel('X2')
        plt.xlim(0.0, 12.)
        plt.ylim(0.0, 12.)
        plt.show()

    # Two noisy concentric rings, with one labeled point on each ring.
    def loadCircleData(num_data):
        center = np.array([5.0, 5.0])
        radiu_inner = 2
        radiu_outer = 4
        num_inner = num_data // 3  # integer division, so that range() accepts it
        num_outer = num_data - num_inner

        data = []
        theta = 0.0
        for i in range(num_inner):
            pho = (theta % 360) * math.pi / 180
            tmp = np.zeros(2, np.float32)
            tmp[0] = radiu_inner * math.cos(pho) + np.random.rand() + center[0]
            tmp[1] = radiu_inner * math.sin(pho) + np.random.rand() + center[1]
            data.append(tmp)
            theta += 2

        theta = 0.0
        for i in range(num_outer):
            pho = (theta % 360) * math.pi / 180
            tmp = np.zeros(2, np.float32)
            tmp[0] = radiu_outer * math.cos(pho) + np.random.rand() + center[0]
            tmp[1] = radiu_outer * math.sin(pho) + np.random.rand() + center[1]
            data.append(tmp)
            theta += 1

        Mat_Label = np.zeros((2, 2), np.float32)
        Mat_Label[0] = center + np.array([-radiu_inner + 0.5, 0])
        Mat_Label[1] = center + np.array([-radiu_outer + 0.5, 0])
        labels = [0, 1]
        Mat_Unlabel = np.vstack(data)
        return Mat_Label, labels, Mat_Unlabel

    # Two horizontal bands of random points, each centered on one labeled point.
    def loadBandData(num_unlabel_samples):
        #Mat_Label = np.array([[5.0, 2.], [5.0, 8.0]])
        #labels = [0, 1]
        #Mat_Unlabel = np.array([[5.1, 2.], [5.0, 8.1]])

        Mat_Label = np.array([[5.0, 2.], [5.0, 8.0]])
        labels = [0, 1]
        num_dim = Mat_Label.shape[1]
        half = num_unlabel_samples // 2  # integer division, so it can be used as a slice index
        Mat_Unlabel = np.zeros((num_unlabel_samples, num_dim), np.float32)
        Mat_Unlabel[:half, :] = (np.random.rand(half, num_dim) - 0.5) * np.array([3, 1]) + Mat_Label[0]
        Mat_Unlabel[half:, :] = (np.random.rand(num_unlabel_samples - half, num_dim) - 0.5) * np.array([3, 1]) + Mat_Label[1]
        return Mat_Label, labels, Mat_Unlabel

    if __name__ == "__main__":
        num_unlabel_samples = 800
        #Mat_Label, labels, Mat_Unlabel = loadBandData(num_unlabel_samples)
        Mat_Label, labels, Mat_Unlabel = loadCircleData(num_unlabel_samples)

        ## Notice: when using 'rbf' as the kernel, the choice of the hyperparameter 'sigma' is very important! It should be
        ## chosen according to your dataset, specifically the distance between data points. I think it should ensure that
        ## each point has about 10 nearest neighbors, or that w_i,j is large enough. It also influences the speed of
        ## convergence. So the 'knn' kernel may be the better choice!
        #unlabel_data_labels = labelPropagation(Mat_Label, Mat_Unlabel, labels, kernel_type = 'rbf', rbf_sigma = 0.2)
        unlabel_data_labels = labelPropagation(Mat_Label, Mat_Unlabel, labels, kernel_type = 'knn', knn_num_neighbors = 10, max_iter = 400)
        show(Mat_Label, labels, Mat_Unlabel, unlabel_data_labels)

The result is as follows:

Using networkx:

    #coding:utf-8
    '''
    Created on 2017-1-4

    @author: 刘帅
    '''
    import collections
    import random

    import networkx as nx


    class LPA():
        # Assumes the nodes of G are the integers 0 .. n-1.
        def __init__(self, G, max_iter=20):
            self._G = G
            self._n = len(G.nodes)  # number of nodes
            self._max_iter = max_iter

        def can_stop(self):
            # stop once every node carries one of the most frequent labels among its neighbors
            for i in range(self._n):
                node = self._G.nodes[i]
                label = node["label"]
                max_labels = self.get_max_neighbor_label(i)
                if label not in max_labels:
                    return False
            return True

        def get_max_neighbor_label(self, node_index):
            # count the labels of the neighbors and return all labels with the highest count
            m = collections.defaultdict(int)
            for neighbor_index in self._G.neighbors(node_index):
                neighbor_label = self._G.nodes[neighbor_index]["label"]
                m[neighbor_label] += 1
            max_v = max(m.values())
            return [item[0] for item in m.items() if item[1] == max_v]

        # asynchronous update
        def populate_label(self):
            # random visit
            visitSequence = random.sample(list(self._G.nodes()), len(self._G.nodes()))
            for i in visitSequence:
                node = self._G.nodes[i]
                label = node["label"]
                max_labels = self.get_max_neighbor_label(i)
                if label not in max_labels:
                    newLabel = random.choice(max_labels)
                    node["label"] = newLabel

        def get_communities(self):
            communities = collections.defaultdict(lambda: list())
            for node in self._G.nodes(True):
                label = node[1]["label"]
                communities[label].append(node[0])
            return communities.values()

        def execute(self):
            # initial label
            for i in range(self._n):
                self._G.nodes[i]["label"] = i
            iter_time = 0
            # populate label
            # NOTE: the source listing is cut off in the middle of the loop condition;
            # the second half of the condition, the loop body and the return value
            # below are a reconstruction from the rest of the class.
            while not self.can_stop() and iter_time < self._max_iter:
                self.populate_label()
                iter_time += 1
            return self.get_communities()

The result is as follows:

[0, 1, 3, 7, 11, 12, 13, 17, 19, 21]

[5, 6, 16]

[4, 10]

[2, 8, 9, 14, 15, 18, 20, 22, 23, 24, 25, 26, 27, 28, 29, 30, 31, 32, 33]
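For comparison, networkx ships its own asynchronous label propagation routine, `asyn_lpa_communities`. The sketch below runs it on Zachary's karate club graph. That choice of graph is an assumption: the node ids 0 to 33 in the output above are consistent with it, but the source does not show which graph was used. The seed value is arbitrary.

```python
import networkx as nx
from networkx.algorithms.community import asyn_lpa_communities

G = nx.karate_club_graph()                 # 34 nodes, numbered 0 .. 33
communities = [sorted(c) for c in asyn_lpa_communities(G, seed=42)]
for members in communities:
    print(members)
```

Because ties are broken at random, different seeds (or runs without a seed) can produce a different number of communities, so the partition printed here should not be expected to match the one listed above.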