PyTorch Learning (13): Autoencoders (AutoEncoder)

This post introduces the autoencoder (AutoEncoder) in PyTorch and uses one to perform unsupervised learning.
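To make the idea concrete before the full listing, here is a minimal sketch of what an autoencoder computes. The network is a hypothetical, much smaller one than the model in this post, and the input is random data standing in for flattened 28x28 images.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical toy network (not the model from this post): 784 -> 3 -> 784.
encoder = nn.Sequential(nn.Linear(28 * 28, 3))
decoder = nn.Sequential(nn.Linear(3, 28 * 28), nn.Sigmoid())

x = torch.rand(8, 28 * 28)      # stand-in for a batch of 8 flattened images in [0, 1]
code = encoder(x)               # compressed representation, shape (8, 3)
reconstruction = decoder(code)  # decoded back to shape (8, 784)

# The input itself is the training target, so no labels are involved.
loss = nn.MSELoss()(reconstruction, x)
print(code.shape, reconstruction.shape, loss.item())
```

Because the loss compares the reconstruction with the input rather than with a label, training needs no annotations, which is why the approach counts as unsupervised.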

Example code:

import torch
import torch.nn as nn
import torch.utils.data as Data
import torchvision
from torch.autograd import Variable
import matplotlib.pyplot as plt
from mpl_toolkits.mplot3d import Axes3D
from matplotlib import cm
import numpy as np

# Hyperparameters
EPOCH = 10
BATCH_SIZE = 64
LR = 0.005
DOWNLOAD_MNIST = False  # set to True on the first run to download MNIST
N_TEST_IMG = 5

# Load the MNIST data (downloaded first if DOWNLOAD_MNIST is True)
train_data = torchvision.datasets.MNIST(
    root='./mnist/',
    train=True,
    transform=torchvision.transforms.ToTensor(),
    download=DOWNLOAD_MNIST,
)

# Print and plot one sample
# (recent torchvision exposes .data / .targets; .train_data / .train_labels are deprecated)
# print(train_data.data.size())
# print(train_data.targets.size())
# plt.imshow(train_data.data[2].numpy(), cmap='gray')
# plt.title('%i' % train_data.targets[2])
# plt.show()

# Dataloader

train_loader = Data.DataLoader(dataset=train_data, batch_size=BATCH_SIZE, shuffle=True)

class AutoEncoder(nn.Module):
    def __init__(self):
        super(AutoEncoder, self).__init__()
        self.encoder = nn.Sequential(
            nn.Linear(28 * 28, 128),
            nn.Tanh(),
            nn.Linear(128, 64),
            nn.Tanh(),
            nn.Linear(64, 12),
            nn.Tanh(),
            nn.Linear(12, 3),
        )
        self.decoder = nn.Sequential(
            nn.Linear(3, 12),
            nn.Tanh(),
            nn.Linear(12, 64),
            nn.Tanh(),
            nn.Linear(64, 128),
            nn.Tanh(),
            nn.Linear(128, 28 * 28),
            nn.Sigmoid(),
        )

    def forward(self, x):
        encoded = self.encoder(x)
        decoded = self.decoder(encoded)
        return encoded, decoded

autoencoder = AutoEncoder()

optimizer = torch.optim.Adam(autoencoder.parameters(), lr=LR)

loss_func = nn.MSELoss()

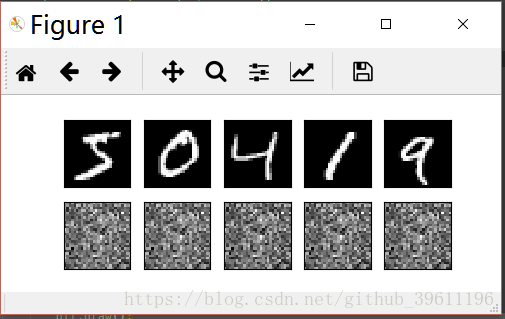

# Initialize the figure
f, a = plt.subplots(2, N_TEST_IMG, figsize=(5, 2))
plt.ion()  # interactive mode, so the plot can be updated during training

# Original data (first row) for viewing
view_data = train_data.data[:N_TEST_IMG].view(-1, 28 * 28).type(torch.FloatTensor) / 255.
for i in range(N_TEST_IMG):
    a[0][i].imshow(np.reshape(view_data.numpy()[i], (28, 28)), cmap='gray')
    a[0][i].set_xticks(())
    a[0][i].set_yticks(())

for epoch in range(EPOCH):

for step, (x, y) in enumerate(train_loader):

b_x = Variable(x.view(-1, 28 * 28))

b_y = Variable(x.view(-1, 28 * 28))

b_label = Variable(y)

encoded, decoded = autoencoder(b_x)

loss = loss_func(decoded, b_y)

optimizer.zero_grad()

loss.backward()

optimizer.step()

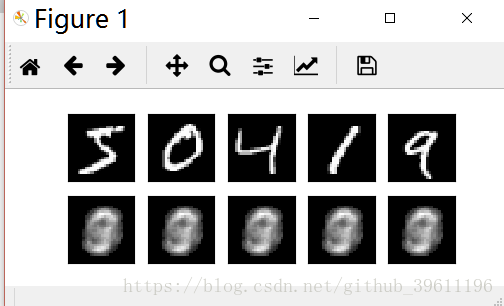

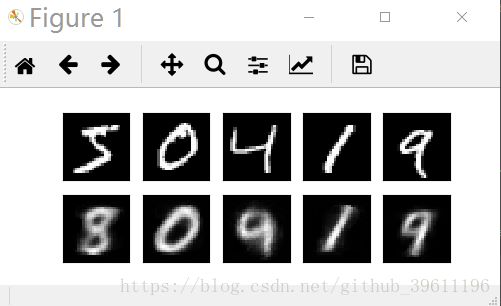

if step % 100 == 0:

print('Epoch: ', epoch, '| train loss: %.4f' % loss.data.numpy())

# plotting decoded image (second row)

_, decoded_data = autoencoder(Variable(view_data))

for i in range(N_TEST_IMG):

a[1][i].clear()

a[1][i].imshow(np.reshape(decoded_data.data.numpy()[i], (28, 28)), cmap='gray')

a[1][i].set_xticks(());

a[1][i].set_yticks(())

plt.draw();

plt.pause(0.05)

plt.ioff()

plt.show()

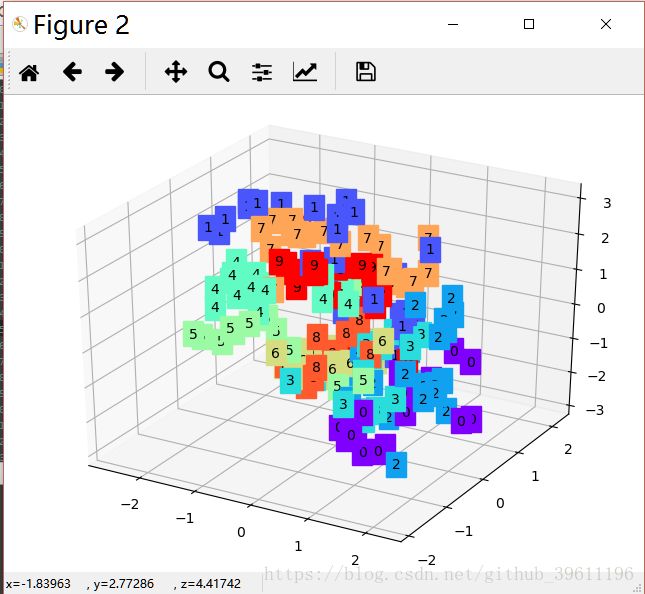

# Visualize the encoded data in a 3D plot
view_data = train_data.data[:200].view(-1, 28 * 28).type(torch.FloatTensor) / 255.
encoded_data, _ = autoencoder(Variable(view_data))
fig = plt.figure(2)
# Axes3D(fig) no longer attaches itself to the figure in recent Matplotlib versions
ax = fig.add_subplot(projection='3d')
X, Y, Z = encoded_data.data[:, 0].numpy(), encoded_data.data[:, 1].numpy(), encoded_data.data[:, 2].numpy()
values = train_data.targets[:200].numpy()
for x, y, z, s in zip(X, Y, Z, values):
    c = cm.rainbow(int(255 * s / 9))  # map the digit label (0-9) to a color
    ax.text(x, y, z, str(s), backgroundcolor=c)
ax.set_xlim(X.min(), X.max())
ax.set_ylim(Y.min(), Y.max())
ax.set_zlim(Z.min(), Z.max())
plt.show()

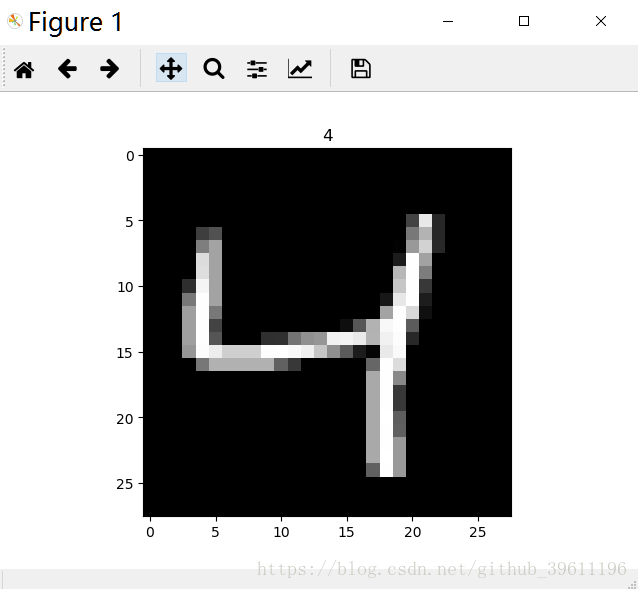

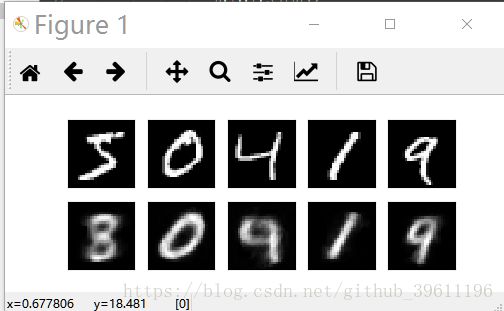
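The 3D plot uses only the encoder half of the network. Below is a minimal sketch of that step in isolation, written without `Variable`, which recent PyTorch versions no longer require. The small untrained `encoder` and the random `images` tensor are stand-ins for illustration, not the trained model or the MNIST data used in this post.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Stand-in encoder with the same input and output sizes as the post's encoder (784 -> 3).
encoder = nn.Sequential(nn.Linear(28 * 28, 12), nn.Tanh(), nn.Linear(12, 3))

# Stand-in for 200 flattened MNIST images scaled to [0, 1].
images = torch.rand(200, 28 * 28)

# Gradients are not needed when the network is only used for visualization.
with torch.no_grad():
    codes = encoder(images)

# One coordinate array per axis of the 3D plot.
X, Y, Z = codes[:, 0].numpy(), codes[:, 1].numpy(), codes[:, 2].numpy()
print(codes.shape)
```

Each image is reduced to three numbers, which is what allows every sample to be placed as a single point in 3D space.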

Sample data:

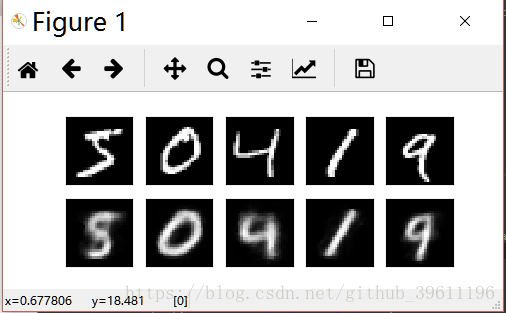

Output:

Epoch: 0 | train loss: 0.2318

Epoch: 0 | train loss: 0.0704

Epoch: 0 | train loss: 0.0680

Epoch: 0 | train loss: 0.0617

Epoch: 0 | train loss: 0.0595

Epoch: 0 | train loss: 0.0512

Epoch: 0 | train loss: 0.0528

Epoch: 0 | train loss: 0.0486

Epoch: 0 | train loss: 0.0498

Epoch: 0 | train loss: 0.0445

Epoch: 1 | train loss: 0.0468

Epoch: 1 | train loss: 0.0459

Epoch: 1 | train loss: 0.0428

Epoch: 1 | train loss: 0.0447

Epoch: 1 | train loss: 0.0455

Epoch: 1 | train loss: 0.0448

Epoch: 1 | train loss: 0.0422

Epoch: 1 | train loss: 0.0489

Epoch: 1 | train loss: 0.0426

Epoch: 1 | train loss: 0.0417

Epoch: 2 | train loss: 0.0470

Epoch: 2 | train loss: 0.0413

Epoch: 2 | train loss: 0.0398

Epoch: 2 | train loss: 0.0419

Epoch: 2 | train loss: 0.0424

Epoch: 2 | train loss: 0.0418

Epoch: 2 | train loss: 0.0426

Epoch: 2 | train loss: 0.0398

Epoch: 2 | train loss: 0.0401

Epoch: 2 | train loss: 0.0401

Epoch: 3 | train loss: 0.0420

Epoch: 3 | train loss: 0.0444

Epoch: 3 | train loss: 0.0396

Epoch: 3 | train loss: 0.0447

Epoch: 3 | train loss: 0.0367

Epoch: 3 | train loss: 0.0384

Epoch: 3 | train loss: 0.0446

Epoch: 3 | train loss: 0.0435

Epoch: 3 | train loss: 0.0434

Epoch: 3 | train loss: 0.0406

Epoch: 4 | train loss: 0.0379

Epoch: 4 | train loss: 0.0382

Epoch: 4 | train loss: 0.0403

Epoch: 4 | train loss: 0.0351

Epoch: 4 | train loss: 0.0377

Epoch: 4 | train loss: 0.0367

Epoch: 4 | train loss: 0.0370

Epoch: 4 | train loss: 0.0397

Epoch: 4 | train loss: 0.0376

Epoch: 4 | train loss: 0.0353

Epoch: 5 | train loss: 0.0402

Epoch: 5 | train loss: 0.0368

Epoch: 5 | train loss: 0.0382

Epoch: 5 | train loss: 0.0395

Epoch: 5 | train loss: 0.0396

Epoch: 5 | train loss: 0.0414

Epoch: 5 | train loss: 0.0373

Epoch: 5 | train loss: 0.0388

Epoch: 5 | train loss: 0.0363

Epoch: 5 | train loss: 0.0382

Epoch: 6 | train loss: 0.0366

Epoch: 6 | train loss: 0.0357

Epoch: 6 | train loss: 0.0360

Epoch: 6 | train loss: 0.0397

Epoch: 6 | train loss: 0.0376

Epoch: 6 | train loss: 0.0364

Epoch: 6 | train loss: 0.0370

Epoch: 6 | train loss: 0.0383

Epoch: 6 | train loss: 0.0360

Epoch: 6 | train loss: 0.0334

Epoch: 7 | train loss: 0.0369

Epoch: 7 | train loss: 0.0324

Epoch: 7 | train loss: 0.0372

Epoch: 7 | train loss: 0.0373

Epoch: 7 | train loss: 0.0360

Epoch: 7 | train loss: 0.0347

Epoch: 7 | train loss: 0.0352

Epoch: 7 | train loss: 0.0322

Epoch: 7 | train loss: 0.0346

Epoch: 7 | train loss: 0.0359

Epoch: 8 | train loss: 0.0354

Epoch: 8 | train loss: 0.0353

Epoch: 8 | train loss: 0.0345

Epoch: 8 | train loss: 0.0303

Epoch: 8 | train loss: 0.0367

Epoch: 8 | train loss: 0.0356

Epoch: 8 | train loss: 0.0364

Epoch: 8 | train loss: 0.0373

Epoch: 8 | train loss: 0.0375

Epoch: 8 | train loss: 0.0357

Epoch: 9 | train loss: 0.0314

Epoch: 9 | train loss: 0.0355

Epoch: 9 | train loss: 0.0375

Epoch: 9 | train loss: 0.0367

Epoch: 9 | train loss: 0.0364

Epoch: 9 | train loss: 0.0347

Epoch: 9 | train loss: 0.0348

Epoch: 9 | train loss: 0.0333

Epoch: 9 | train loss: 0.0395

Epoch: 9 | train loss: 0.0393
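The log shows ten lines per epoch, which follows from the dataset size, the batch size, and the `step % 100 == 0` condition. A quick check, assuming the standard MNIST training split of 60,000 images and the `DataLoader` default of keeping the last partial batch:

```python
import math

TRAIN_SET_SIZE = 60_000  # assumption: the standard MNIST training split
BATCH_SIZE = 64          # same value as in the hyperparameters
LOG_EVERY = 100          # the training loop prints when step % 100 == 0

# The last, smaller batch is kept, so the step count is rounded up.
steps_per_epoch = math.ceil(TRAIN_SET_SIZE / BATCH_SIZE)
logged_steps = [step for step in range(steps_per_epoch) if step % LOG_EVERY == 0]

print(steps_per_epoch)    # 938
print(len(logged_steps))  # 10
```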