Pytorch 中的学习率调整方法

Pytorch中的学习率调整有两种方式:

- 直接修改optimizer中的lr参数;

- 利用lr_scheduler()提供的几种衰减函数

1. 修改optimizer中的lr:

import torch

import matplotlib.pyplot as plt

%matplotlib inline

from torch.optim import *

import torch.nn as nn

class net(nn.Module):

def __init__(self):

super(net,self).__init__()

self.fc = nn.Linear(1,10)

def forward(self,x):

return self.fc(x)

model = net()

LR = 0.01

optimizer = Adam(model.parameters(),lr = LR)

lr_list = []

for epoch in range(100):

if epoch % 5 == 0:

for p in optimizer.param_groups:

p['lr'] *= 0.9

lr_list.append(optimizer.state_dict()['param_groups'][0]['lr'])

plt.plot(range(100),lr_list,color = 'r')

2. lr_scheduler

2.1 torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda, last_epoch=-1)

lr_lambda 会接收到一个int参数:epoch,然后根据epoch计算出对应的lr。如果设置多个lambda函数的话,会分别作用于Optimizer中的不同的params_group

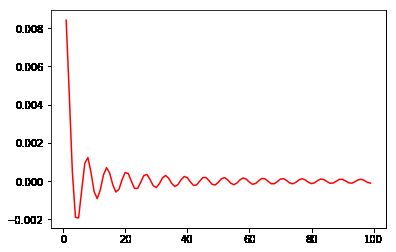

import numpy as np

lr_list = []

model = net()

LR = 0.01

optimizer = Adam(model.parameters(),lr = LR)

lambda1 = lambda epoch:np.sin(epoch) / epoch

scheduler = lr_scheduler.LambdaLR(optimizer,lr_lambda = lambda1)

for epoch in range(100):

scheduler.step()

lr_list.append(optimizer.state_dict()['param_groups'][0]['lr'])

plt.plot(range(100),lr_list,color = 'r')

2.2 torch.optim.lr_scheduler.StepLR(optimizer, step_size, gamma=0.1, last_epoch=-1)

每个一定的epoch,lr会自动乘以gamma

lr_list = []

model = net()

LR = 0.01

optimizer = Adam(model.parameters(),lr = LR)

scheduler = lr_scheduler.StepLR(optimizer,step_size=5,gamma = 0.8)

for epoch in range(100):

scheduler.step()

lr_list.append(optimizer.state_dict()['param_groups'][0]['lr'])

plt.plot(range(100),lr_list,color = 'r')

2.3 torch.optim.lr_scheduler.MultiStepLR(optimizer, milestones, gamma=0.1, last_epoch=-1)

三段式lr,epoch进入milestones范围内即乘以gamma,离开milestones范围之后再乘以gamma

这种衰减方式也是在学术论文中最常见的方式,一般手动调整也会采用这种方法。

lr_list = []

model = net()

LR = 0.01

optimizer = Adam(model.parameters(),lr = LR)

scheduler = lr_scheduler.MultiStepLR(optimizer,milestones=[20,80],gamma = 0.9)

for epoch in range(100):

scheduler.step()

lr_list.append(optimizer.state_dict()['param_groups'][0]['lr'])

plt.plot(range(100),lr_list,color = 'r')

2.4 torch.optim.lr_scheduler.ExponentialLR(optimizer, gamma, last_epoch=-1)

每个epoch中lr都乘以gamma

lr_list = []

model = net()

LR = 0.01

optimizer = Adam(model.parameters(),lr = LR)

scheduler = lr_scheduler.ExponentialLR(optimizer, gamma=0.9)

for epoch in range(100):

scheduler.step()

lr_list.append(optimizer.state_dict()['param_groups'][0]['lr'])

plt.plot(range(100),lr_list,color = 'r')

2.5 torch.optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max, eta_min=0, last_epoch=-1)

T_max 对应1/2个cos周期所对应的epoch数值

eta_min 为最小的lr值,默认为0

lr_list = []

model = net()

LR = 0.01

optimizer = Adam(model.parameters(),lr = LR)

scheduler = lr_scheduler.CosineAnnealingLR(optimizer, T_max = 20)

for epoch in range(100):

scheduler.step()

lr_list.append(optimizer.state_dict()['param_groups'][0]['lr'])

plt.plot(range(100),lr_list,color = 'r')

2.6 torch.optim.lr_scheduler.ReduceLROnPlateau(optimizer, mode='min', factor=0.1, patience=10, verbose=False, threshold=0.0001, threshold_mode='rel', cooldown=0, min_lr=0, eps=1e-08)

在发现loss不再降低或者acc不再提高之后,降低学习率。各参数意义如下:

mode:'min'模式检测metric是否不再减小,'max'模式检测metric是否不再增大;

factor: 触发条件后lr*=factor;

patience:不再减小(或增大)的累计次数;

verbose:触发条件后print;

threshold:只关注超过阈值的显著变化;

threshold_mode:有rel和abs两种阈值计算模式,rel规则:max模式下如果超过best(1+threshold)为显著,min模式下如果低于best(1-threshold)为显著;abs规则:max模式下如果超过best+threshold为显著,min模式下如果低于best-threshold为显著;

cooldown:触发一次条件后,等待一定epoch再进行检测,避免lr下降过速;

min_lr:最小的允许lr;

eps:如果新旧lr之间的差异小与1e-8,则忽略此次更新。

一起讨论机器学习与Pytorch,可以加群747537854