PyTorch: RNN实战详解之生成名字

版权声明:博客文章都是作者辛苦整理的,转载请注明出处,谢谢! http://blog.csdn.net/m0_37306360/article/details/79316964

介绍

上一篇我们讲了如何在PyTorch框架下用RNN分类名字http://blog.csdn.net/m0_37306360/article/details/79316013,本文讲如何用RNN生成特定语言(类别)的名字。我们使用上一篇同样的数据集。不同的是,不是根据输入的名字来预测此名字是那种语言的(读完名字的所有字母之后,我们不是预测一个类别)。而是一次输入一个类别并输出一个字母。 反复预测字符以生成名字。

PyTorch之RNN实战生成

定义网络

class RNN(nn.Module):

def __init__(self, input_size, hidden_size, output_size):

super(RNN, self).__init__()

self.hidden_size = hidden_size

self.i2h = nn.Linear(n_categories + input_size + hidden_size, hidden_size)

self.i2o = nn.Linear(n_categories + input_size + hidden_size, output_size)

self.o2o = nn.Linear(hidden_size + output_size, output_size)

self.dropout = nn.Dropout(0.1)

self.softmax = nn.LogSoftmax(dim=1)

def forward(self, category, input, hidden):

input_combined = torch.cat((category, input, hidden), 1)

output = self.i2o(input_combined)

hidden = self.i2h(input_combined)

output_combined = torch.cat((output, hidden), 1)

output = self.o2o(output_combined)

output = self.dropout(output)

output = self.softmax(output)

return output, hidden

def initHidden(self):

return Variable(torch.zeros(1, self.hidden_size))数据预处理

其他的数据预处理和上一篇文章类似。主要的不同是如何构建训练样本对。

对于每个时间步(即训练词中的每个字母),网络的输入是(类别,当前字母,隐藏状态),输出将是(下一个字母,下一个隐藏状态)。 因此,对于每个训练集,我们需要:类别,一组输入字母和一组输出(目标)字母。

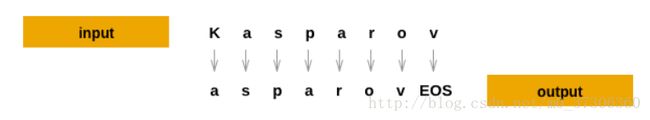

我们可以很简单的获取(类别,名字(字母序列)),但是我们如何用这些数据构建输入字母序列和输出字母序列呢?由于我们预测了每个时间步的当前字母的下一个字母,因此字母对是来自行的连续字母的组 - 例如对于序列(“ABCD”),构建(“A”, B”), (“B”,“C”), (“C”, “D”), (“D”,“EOS”). 如图:

训练网络

与仅使用最后一个输出的分类相比,我们在每一步都进行了预测,因此我们需要计算每一步的损失。

for i in range(input_line_tensor.size()[0]):

output, hidden = rnn(category_tensor, input_line_tensor[i], hidden)

loss += criterion(output, target_line_tensor[i])采样

为了取样,我们给网络输入一个字母,得到下一个字母,把它作为下一个字母的输入,并重复,直到返回EOS结束符。

整个生成过程如下:

1. 为输入类别,开始字母和空的隐藏状态创建张量

2. 用开始字母创建一个字符串:output_name

3. 达到最大输出长度:

(1).将当前的字母送入网络

(2).从输出获取下一个字母和下一个隐藏状态

(3).如果这个字符是EOS,生成结束

(4).如果是普通字母,添加到output_name并继续

4.返回最后生成的名字

完整代码

from io import open

import glob

import unicodedata

import string

all_letters = string.ascii_letters + " .,;'-"

n_letters = len(all_letters) + 1 # Plus EOS marker

def findFiles(path): return glob.glob(path)

# Turn a Unicode string to plain ASCII, thanks to http://stackoverflow.com/a/518232/2809427

def unicodeToAscii(s):

return ''.join(

c for c in unicodedata.normalize('NFD', s)

if unicodedata.category(c) != 'Mn'

and c in all_letters

)

# Read a file and split into lines

def readLines(filename):

lines = open(filename, encoding='utf-8').read().strip().split('\n')

return [unicodeToAscii(line) for line in lines]

# Build the category_lines dictionary, a list of lines per category

category_lines = {}

all_categories = []

for filename in findFiles('nlpdata/data/names/*.txt'):

category = filename.split('/')[-1].split('.')[0]

category = category.split('\\')[1]

all_categories.append(category)

lines = readLines(filename)

category_lines[category] = lines

n_categories = len(all_categories)

# print('# categories:', n_categories, all_categories)

# print(unicodeToAscii("O'Néàl"))

import torch

import torch.nn as nn

from torch.autograd import Variable

class RNN(nn.Module):

def __init__(self, input_size, hidden_size, output_size):

super(RNN, self).__init__()

self.hidden_size = hidden_size

self.i2h = nn.Linear(n_categories + input_size + hidden_size, hidden_size)

self.i2o = nn.Linear(n_categories + input_size + hidden_size, output_size)

self.o2o = nn.Linear(hidden_size + output_size, output_size)

self.dropout = nn.Dropout(0.1)

self.softmax = nn.LogSoftmax(dim=1)

def forward(self, category, input, hidden):

input_combined = torch.cat((category, input, hidden), 1)

output = self.i2o(input_combined)

hidden = self.i2h(input_combined)

output_combined = torch.cat((output, hidden), 1)

output = self.o2o(output_combined)

output = self.dropout(output)

output = self.softmax(output)

return output, hidden

def initHidden(self):

return Variable(torch.zeros(1, self.hidden_size))

# Training

# Preparing for Training (get random pairs of (category, line))

import random

# Random item from a list

def randomChoice(l):

return l[random.randint(0, len(l) - 1)]

# Get a random category and random line from that category

def randomTrainingPair():

category = randomChoice(all_categories)

line = randomChoice(category_lines[category])

return category, line

# One-hot vector for category

def categoryTensor(category):

li = all_categories.index(category)

tensor = torch.zeros(1, n_categories) # 1*18

tensor[0][li] = 1

return tensor

# One-hot matrix of first to last letters (not including EOS) for input

def inputTensor(line):

tensor = torch.zeros(len(line), 1, n_letters) # len(line)*1*59

for li in range(len(line)):

letter = line[li]

tensor[li][0][all_letters.find(letter)] = 1

return tensor

# LongTensor of second letter to end (EOS) for target

def targetTensor(line):

letter_indexes = [all_letters.find(line[li]) for li in range(1, len(line))]

letter_indexes.append(n_letters - 1) # EOS

return torch.LongTensor(letter_indexes)

# Make category, input, and target tensors from a random category, line pair

def randomTrainingExample():

category, line = randomTrainingPair()

category_tensor = Variable(categoryTensor(category))

input_line_tensor = Variable(inputTensor(line))

target_line_tensor = Variable(targetTensor(line))

return category_tensor, input_line_tensor, target_line_tensor

# our model

rnn = RNN(n_letters, 128, n_letters)

# Training the Network

criterion = nn.NLLLoss()

learning_rate = 0.0005

def train(category_tensor, input_line_tensor, target_line_tensor):

hidden = rnn.initHidden()

rnn.zero_grad()

loss = 0

for i in range(input_line_tensor.size()[0]):

output, hidden = rnn(category_tensor, input_line_tensor[i], hidden)

loss += criterion(output, target_line_tensor[i])

loss.backward()

for p in rnn.parameters():

p.data.add_(-learning_rate, p.grad.data)

return output, loss.data[0] / input_line_tensor.size()[0]

import time

import math

def timeSince(since):

now = time.time()

s = now - since

m = math.floor(s / 60)

s -= m * 60

return '%dm %ds' % (m, s)

n_iters = 100000

print_every = 5000

plot_every = 500

all_losses = []

total_loss = 0 # Reset every plot_every iters

start = time.time()

for iter in range(1, n_iters + 1):

output, loss = train(*randomTrainingExample())

total_loss += loss

if iter % print_every == 0:

print('%s (%d %d%%) %.4f' % (timeSince(start), iter, iter / n_iters * 100, loss))

if iter % plot_every == 0:

all_losses.append(total_loss / plot_every)

total_loss = 0

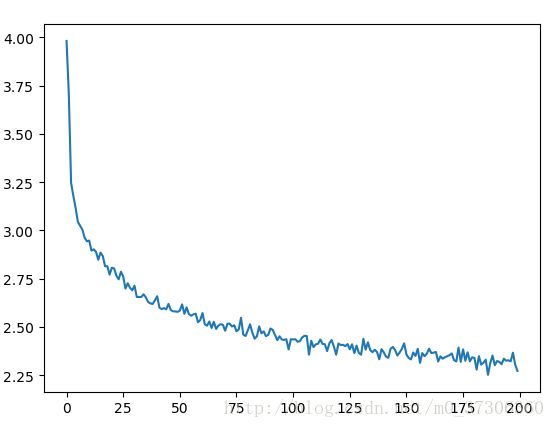

# 训练loss变化

import matplotlib.pyplot as plt

import matplotlib.ticker as ticker

plt.figure()

plt.plot(all_losses)

plt.show()

# Sample network

max_length = 20

# Sample from a category and starting letter

def sample(category, start_letter='A'):

category_tensor = Variable(categoryTensor(category))

input = Variable(inputTensor(start_letter))

hidden = rnn.initHidden()

output_name = start_letter

# 最大也只生成max_length长度

for i in range(max_length):

output, hidden = rnn(category_tensor, input[0], hidden)

topv, topi = output.data.topk(1)

topi = topi[0][0]

# 如果是EOS,停止

if topi == n_letters - 1:

break

else:

letter = all_letters[topi]

output_name += letter

# 否则,将这个时刻输出的字母作为下个时刻的输入字母

input = Variable(inputTensor(letter))

return output_name

# Get multiple samples from one category and multiple starting letters

def samples(category, start_letters='ABC'):

for start_letter in start_letters:

print(sample(category, start_letter))

samples('Russian', 'RUS')

samples('German', 'GER')

samples('Spanish', 'SPA')

samples('Chinese', 'CHI')

输出结果:

0m 32s (5000 5%) 2.3348

1m 1s (10000 10%) 3.0012

1m 29s (15000 15%) 2.7776

2m 2s (20000 20%) 2.7482

2m 38s (25000 25%) 1.3141

3m 10s (30000 30%) 2.5318

3m 37s (35000 35%) 2.4345

4m 3s (40000 40%) 2.7806

4m 30s (45000 45%) 2.0744

4m 57s (50000 50%) 2.7273

5m 23s (55000 55%) 5.1529

5m 49s (60000 60%) 2.0862

6m 15s (65000 65%) 2.5506

6m 41s (70000 70%) 3.4072

7m 8s (75000 75%) 2.6554

7m 34s (80000 80%) 2.1122

8m 12s (85000 85%) 2.2132

8m 44s (90000 90%) 1.9226

9m 11s (95000 95%) 2.8443

9m 37s (100000 100%) 2.4129

Roskin

Uakinov

Santovov

Gerran

Eren

Romer

Sara

Parez

Aller

Chan

Han

Iun

Process finished with exit code 0

loss变化:

参考:http://pytorch.org/tutorials/intermediate/char_rnn_generation_tutorial.html