AdaBoost Meta-Algorithm Study Notes

1. How the Algorithm Works

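A meta-algorithm improves performance by combining several algorithms. AdaBoost is one of the most popular meta-algorithms.

Meta-algorithms come in several forms: an ensemble of different algorithms, an ensemble of the same algorithm under different settings, or an ensemble in which different parts of the dataset are assigned to different algorithms.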

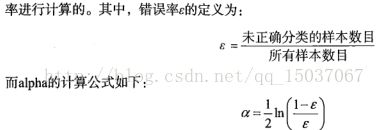

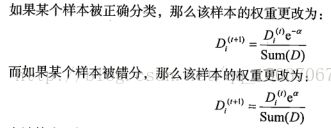

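AdaBoost runs as follows. Each sample in the dataset carries a weight, and these weights form a vector D whose entries all start at the same value. A weak classifier is trained on the dataset and its error rate is computed. Another weak classifier is then trained on the same dataset with adjusted weights: samples that the first classifier got right have their weights decreased, and samples that it got wrong have their weights increased. To combine the weak classifiers into a final result, each weak classifier is assigned its own weight (alpha in the code), computed from that classifier's error rate.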
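
To make the update concrete, here is a minimal sketch of a single boosting round on plain NumPy arrays. The labels match the toy dataset used in these notes; the prediction vector `preds` is a hypothetical weak-classifier output chosen for illustration, and the formulas are the standard AdaBoost ones that the `adaBoostTrainDS` code in these notes also uses.

```python
import numpy as np

# Toy labels and one weak classifier's predictions.
# `preds` is a hypothetical output chosen for illustration: only sample 0 is wrong.
labels = np.array([1.0, 1.0, -1.0, -1.0, 1.0])
preds = np.array([-1.0, 1.0, -1.0, -1.0, 1.0])

m = labels.size
D = np.full(m, 1.0 / m)  # every sample starts with the same weight

# Weighted error: total weight of the misclassified samples.
eps = float(np.sum(D[preds != labels]))  # 0.2

# Classifier weight: large when the error is small.
alpha = 0.5 * np.log((1.0 - eps) / max(eps, 1e-16))  # ln(2), about 0.693

# Shrink the weights of correct samples, grow the weights of wrong ones,
# then renormalize so the weights sum to 1 again.
D_new = D * np.exp(-alpha * labels * preds)
D_new /= D_new.sum()

print(alpha)
print(D_new)  # [0.5 0.125 0.125 0.125 0.125]
```

After this round the single misclassified sample holds half of the total weight, so the next weak classifier is pushed to get it right.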

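Once D has been updated, AdaBoost starts the next iteration, and it keeps iterating until the training error rate reaches 0 or the number of iterations reaches the user-specified limit. Next, we implement AdaBoost with single-level decision trees (decision stumps) as the weak classifiers.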
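
The stopping rule can be sketched on its own, separate from training. In the snippet below, the `(alpha, predictions)` pairs in `rounds` are hypothetical values chosen for illustration, not the output of a real training run, and `max_rounds` stands in for the user-specified iteration limit.

```python
import numpy as np

labels = np.array([1.0, 1.0, -1.0, -1.0, 1.0])

# Hypothetical (alpha, predictions) pairs, one per boosting round.
rounds = [
    (0.69, np.array([-1.0, 1.0, -1.0, -1.0, 1.0])),
    (0.97, np.array([1.0, 1.0, -1.0, -1.0, -1.0])),
    (0.90, np.array([1.0, 1.0, 1.0, 1.0, 1.0])),
    (0.50, np.array([1.0, 1.0, 1.0, 1.0, 1.0])),  # never reached in this example
]
max_rounds = 40  # stand-in for the user-specified iteration limit

agg = np.zeros_like(labels)  # running alpha-weighted vote
history = []                 # training error rate after each round
for alpha, preds in rounds[:max_rounds]:
    agg += alpha * preds
    error_rate = float(np.mean(np.sign(agg) != labels))
    history.append(error_rate)
    if error_rate == 0.0:    # stop as soon as the ensemble is perfect on the training set
        break

print(history)  # error rates: 0.2, 0.2, 0.0
```

Note that the error being tested is the error of the combined vote, not of the latest weak classifier: here no single round is perfect, yet the weighted sum classifies every sample correctly after three rounds.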

    import numpy as np
    import matplotlib.pyplot as pl

    def loadSimpData():
        """Return a five-point toy dataset and its class labels (+1 / -1)."""
        datMat = np.matrix([[1. , 2.1],
                            [2. , 1.1],
                            [1.3, 1. ],
                            [1. , 1. ],
                            [2. , 1. ]])
        classLabels = [1.0, 1.0, -1.0, -1.0, 1.0]
        return datMat, classLabels

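    def stumpClassify(dataMat, dimen, threshVal, threshIneq):
        """Classify by thresholding a single feature; returns an (m, 1) array of +1 / -1."""
        retArray = np.ones((np.shape(dataMat)[0], 1))
        if threshIneq == 'lt':
            # 'lt': samples at or below the threshold get -1
            retArray[dataMat[:, dimen] <= threshVal] = -1.0
        else:
            # 'gt': samples above the threshold get -1
            retArray[dataMat[:, dimen] > threshVal] = -1.0
        return retArray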

    def buildStump(dataArr, Labels, D):
        """Find the decision stump with the lowest weighted error under the weights D."""
        # np.asmatrix replaces np.mat, which was removed in NumPy 2.0
        dataMat = np.asmatrix(dataArr)
        labelsMat = np.asmatrix(Labels).T
        m, n = np.shape(dataMat)
        numSteps = 10.0
        bestStump = {}
        bestClassEst = np.asmatrix(np.zeros((m, 1)))
        minError = np.inf
        for i in range(n):  # every feature
            rangeMin = dataMat[:, i].min()
            rangeMax = dataMat[:, i].max()
            stepSize = (rangeMax - rangeMin) / numSteps
            # every threshold, from one step below the minimum up to the maximum
            for j in range(-1, int(numSteps) + 1):
                for inequal in ['lt', 'gt']:  # both inequality directions
                    threshVal = rangeMin + float(j) * stepSize
                    predictedVals = stumpClassify(dataMat, i, threshVal, inequal)
                    errArr = np.asmatrix(np.ones((m, 1)))
                    errArr[predictedVals == labelsMat] = 0  # 1 where wrong, 0 where right
                    # D.T * errArr is a 1x1 matrix; take its single entry as a scalar
                    weightedError = (D.T * errArr)[0, 0]
                    if weightedError < minError:
                        minError = weightedError
                        bestClassEst = predictedVals.copy()
                        bestStump['dim'] = i
                        bestStump['thresh'] = threshVal
                        bestStump['ineq'] = inequal
        return bestStump, minError, bestClassEst

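    def adaBoostTrainDS(dataArr, classLabels, numIt=40):
        """Train up to numIt decision stumps; returns a list of stump dicts with 'alpha'."""
        weakClassArr = []
        m = np.shape(dataArr)[0]
        # np.asmatrix replaces np.mat, which was removed in NumPy 2.0
        D = np.asmatrix(np.ones((m, 1)) / m)  # uniform initial sample weights
        labelsMat = np.asmatrix(classLabels).T
        aggClassEst = np.asmatrix(np.zeros((m, 1)))
        for i in range(numIt):
            bestStump, error, classEst = buildStump(dataArr, classLabels, D)
            # classifier weight; max(...) guards against division by zero
            alpha = float(0.5 * np.log((1.0 - error) / max(error, 1e-16)))
            bestStump['alpha'] = alpha
            weakClassArr.append(bestStump)
            # exponent is -alpha for correctly classified samples, +alpha for wrong ones
            expon = np.multiply(-1 * alpha * labelsMat, classEst)
            D = np.multiply(D, np.exp(expon))
            D = D / D.sum()  # renormalize so the weights sum to 1
            # running alpha-weighted vote of all stumps so far
            aggClassEst += alpha * classEst
            aggErrors = np.multiply(np.sign(aggClassEst) != labelsMat, np.ones((m, 1)))
            errorRate = aggErrors.sum() / m
            if errorRate == 0.0:
                break
        return weakClassArr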

    def adaClassify(datToClass, ClassifierArr):
        """Classify samples by the sign of the alpha-weighted vote of the trained stumps."""
        # np.asmatrix replaces np.mat, which was removed in NumPy 2.0
        dataMat = np.asmatrix(datToClass)
        m = np.shape(dataMat)[0]
        aggClassEst = np.asmatrix(np.zeros((m, 1)))
        for classifier in ClassifierArr:
            classEst = stumpClassify(dataMat, classifier['dim'],
                                     classifier['thresh'], classifier['ineq'])
            aggClassEst += classifier['alpha'] * classEst
        return np.sign(aggClassEst)
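
To show what the final weighted vote looks like in use, here is a self-contained sketch of the classification step on plain NumPy arrays. The helper names `stump_predict` and `vote` are hypothetical, and the three entries in `stumps` are hand-written illustrative values in the same dictionary layout as the trained stumps above, not the result of an actual training run.

```python
import numpy as np

def stump_predict(X, dim, thresh, ineq):
    """Threshold one feature; returns a 1-D array of +1 / -1 (same rule as stumpClassify)."""
    preds = np.ones(X.shape[0])
    if ineq == 'lt':
        preds[X[:, dim] <= thresh] = -1.0
    else:
        preds[X[:, dim] > thresh] = -1.0
    return preds

# Hypothetical stumps, written by hand for illustration.
stumps = [
    {'dim': 0, 'thresh': 1.3, 'ineq': 'lt', 'alpha': 0.69},
    {'dim': 1, 'thresh': 1.0, 'ineq': 'lt', 'alpha': 0.97},
    {'dim': 0, 'thresh': 0.9, 'ineq': 'lt', 'alpha': 0.90},
]

def vote(X, stumps):
    """Sign of the alpha-weighted sum of all stump predictions."""
    X = np.atleast_2d(np.asarray(X, dtype=float))
    agg = np.zeros(X.shape[0])
    for s in stumps:
        agg += s['alpha'] * stump_predict(X, s['dim'], s['thresh'], s['ineq'])
    return np.sign(agg)

result = vote([[0.0, 0.0], [5.0, 5.0]], stumps)
print(result)  # [-1.  1.]
```

With these values all three stumps agree on both points, so the vote is unanimous; on less clear-cut points the stumps with larger alpha dominate the result.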