I. Kubernetes Overview

1. Basic objects

Pod

A Pod is the smallest deployable unit. It consists of one or more containers that share storage and network, and all of them run on the same Docker host.

Service

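A Service is the abstraction of an application service: it defines a logical set of Pods and the policy for accessing that set.

To clients, a Service presents the set of Pods as a single access point. It is assigned a cluster IP address, and requests sent to that IP are load-balanced to the containers in the backend Pods.

A Service uses a label selector to choose the group of Pods that serves its traffic.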

Volume

A data volume holds data shared by the containers in a Pod.

Namespace

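Namespaces partition objects logically, for example by project or by user, so that each partition can be managed separately and given its own control policies. This is how multi-tenancy is achieved.

Namespaces are also called virtual clusters.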

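Label

Labels are key/value pairs used to distinguish objects such as Pods and Services. Each object can carry several labels, and objects are associated with one another through their labels.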
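To make the Pod/Service/Label relationship concrete, here is a small sketch (not part of the original walkthrough) that writes a Deployment and a Service into `nginx-demo.yaml`. The names `nginx-demo` and the label `app: nginx-demo` are hypothetical. What matters is that the Service's `selector` matches the labels in the Pod template, because that is how the Service finds its backend Pods.

```shell
#!/bin/bash
# Sketch only: generate a Deployment and a Service whose selector matches the
# Pod labels. All names (nginx-demo, app: nginx-demo) are hypothetical.
cat > nginx-demo.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-demo
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx-demo      # the Deployment manages Pods carrying this label
  template:
    metadata:
      labels:
        app: nginx-demo    # label attached to every Pod it creates
    spec:
      containers:
      - name: nginx
        image: nginx
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-demo
spec:
  selector:
    app: nginx-demo        # the Service forwards traffic to Pods with this label
  ports:
  - port: 80
    targetPort: 80
EOF

# On a working cluster the file would be applied with:
#   kubectl apply -f nginx-demo.yaml
```

If the selector and the Pod labels do not match, the Service is still created but has no endpoints.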

2. Master components

kube-apiserver

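The Kubernetes API: the single entry point of the cluster and the coordinator between components. It serves an HTTP API, and every create, read, update, delete and watch operation on object resources is handled by the API server before being persisted to etcd.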

kube-controller-manager

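Runs the routine background tasks of the cluster. Each resource type has its own controller, and the controller manager is responsible for managing these controllers.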

kube-scheduler

Selects a Node for each newly created Pod according to its scheduling algorithm.

3. Node components

kubelet

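kubelet is the Master's agent on each Node. It manages the lifecycle of the containers running on its host: creating containers, mounting volumes for Pods, downloading secrets, reporting container and node status, and so on. kubelet turns each Pod into a set of containers.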

kube-proxy

Implements the Pod network proxy on each Node, maintaining the network rules and doing layer-4 load balancing.

docker

Runs the containers.

4. Third-party services

etcd

A distributed key/value store used to persist the cluster state, such as Pod and Service objects.

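II. Environment

CentOS 7

Master node: 190.168.3.230

Node1: 190.168.3.231

Node2: 190.168.3.232

etcd: a highly available three-node cluster

Before you start, make sure the clocks of the three hosts are synchronized, and disable SELinux and firewalld.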
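The preparation above can be sketched as a script for CentOS 7. This is an assumption-laden sketch, not the author's exact steps: the original does not say which time-sync tool was used, so chrony is assumed here, and the `run`/`prepare_host` helpers are hypothetical. With `DRY_RUN=1` (the default) it only prints the commands; set `DRY_RUN=0` and run it as root on each host to apply them.

```shell
#!/bin/bash
# Host preparation sketch for CentOS 7 (assumption: chrony for time sync).
# DRY_RUN=1 (default) only prints the commands instead of running them.
DRY_RUN=${DRY_RUN:-1}

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

prepare_host() {
  # Stop firewalld now and keep it off after reboot
  run systemctl stop firewalld
  run systemctl disable firewalld
  # Switch SELinux to permissive now, and disable it permanently
  run setenforce 0
  run sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config
  # Keep the clocks of the three hosts in sync
  run yum install -y chrony
  run systemctl enable chronyd
  run systemctl start chronyd
}

prepare_host
```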

III. Building Kubernetes

1. Set up etcd

Create self-signed SSL certificates for etcd and build an etcd cluster on the three nodes.

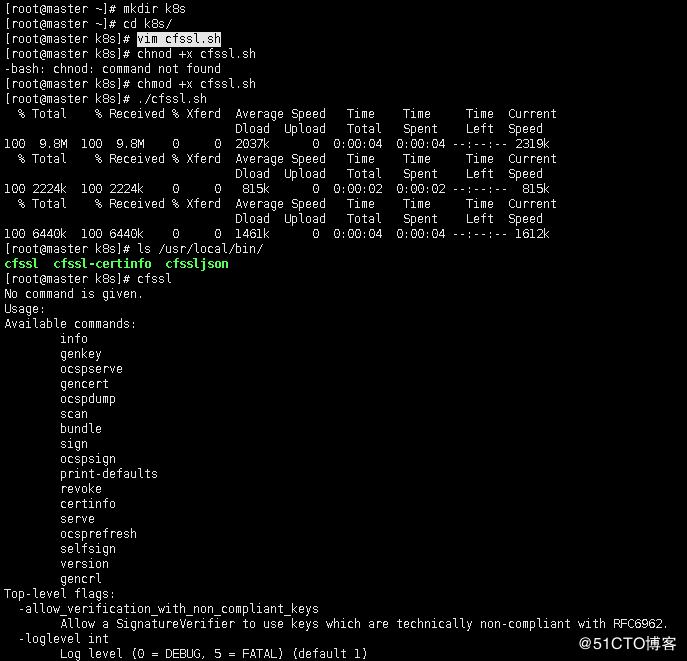

a. Download the certificate tools and check that they installed correctly

Create cfssl.sh (`vim cfssl.sh`) with the following content and run it:

```shell
curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl
curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson
curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo
chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo
```
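One way to check the installation is a small function like the one below (the name `check_tools` is hypothetical). It reports any tool that is not on PATH and returns non-zero if something is missing. Running `cfssl version` afterwards should also print the installed version.

```shell
#!/bin/bash
# Sketch: confirm that each named tool is on PATH and executable.
# Prints every missing tool and returns non-zero if any is missing.
check_tools() {
  local missing=0 tool
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing: $tool"
      missing=1
    fi
  done
  return "$missing"
}

check_tools cfssl cfssljson cfssl-certinfo && echo "cfssl tools OK"
```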

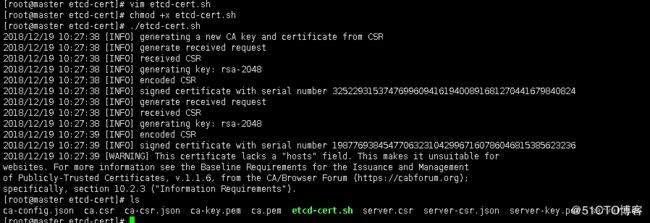

b. Generate certificates for etcd

Create etcd-cert.sh on the master:

```shell
[root@master etcd-cert]# vim etcd-cert.sh
```

```shell
cat > ca-config.json <<EOF
{
  "signing": {
    "default": {
      "expiry": "87600h"
    },
    "profiles": {
      "www": {
        "expiry": "87600h",
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ]
      }
    }
  }
}
EOF

cat > ca-csr.json <<EOF
{
  "CN": "etcd CA",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "Beijing",
      "ST": "Beijing"
    }
  ]
}
EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#-----------------------

cat > server-csr.json <<EOF
{
  "CN": "etcd",
  "hosts": [
    "190.168.3.230",
    "190.168.3.231",
    "190.168.3.232"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing"
    }
  ]
}
EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server
```

Note: the `hosts` list in server-csr.json must contain the addresses of all three etcd cluster members.
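If the member list changes, the `hosts` entry is easy to get wrong by hand. The helper below is a hypothetical sketch (not part of etcd-cert.sh) that builds server-csr.json from the member IPs passed as arguments, keeping the same CN, key and names fields as the script above.

```shell
#!/bin/bash
# Hypothetical helper: write the etcd server CSR with a "hosts" list built from
# the member IPs given as arguments, so the certificate covers every member.
make_etcd_server_csr() {
  local hosts="" ip
  for ip in "$@"; do
    # Comma-separate the quoted addresses
    if [ -n "$hosts" ]; then hosts+=","; fi
    hosts+="\"${ip}\""
  done
  cat <<EOF
{
  "CN": "etcd",
  "hosts": [${hosts}],
  "key": {"algo": "rsa", "size": 2048},
  "names": [{"C": "CN", "L": "BeiJing", "ST": "BeiJing"}]
}
EOF
}

make_etcd_server_csr 190.168.3.230 190.168.3.231 190.168.3.232 > server-csr.json
cat server-csr.json
```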

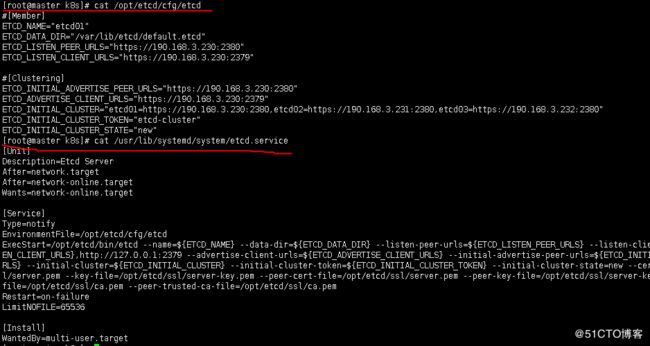

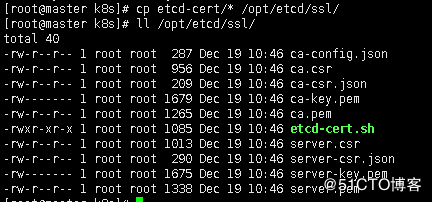

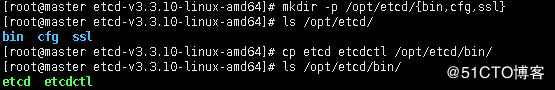

Create the etcd directories /opt/etcd/{cfg,bin,ssl} (the script below expects all three), copy the etcd and etcdctl binaries into the working directory /opt/etcd/bin/, and copy the certificates generated above into /opt/etcd/ssl/.

Create etcd.sh, the script that generates the configuration file and the systemd unit:

```shell
[root@master k8s]# vim etcd.sh
[root@master k8s]# chmod +x etcd.sh
```

```shell
#!/bin/bash
# example: ./etcd.sh etcd01 192.168.1.10 etcd02=https://192.168.1.11:2380,etcd03=https://192.168.1.12:2380

ETCD_NAME=$1
ETCD_IP=$2
ETCD_CLUSTER=$3

WORK_DIR=/opt/etcd

# The local member is listed under its own name (ETCD_NAME) rather than a fixed
# "etcd01", so the same script produces a correct list on node1 and node2.
cat <<EOF >$WORK_DIR/cfg/etcd
#[Member]
ETCD_NAME="${ETCD_NAME}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ETCD_IP}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ETCD_IP}:2379"

#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ETCD_IP}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ETCD_IP}:2379"
ETCD_INITIAL_CLUSTER="${ETCD_NAME}=https://${ETCD_IP}:2380,${ETCD_CLUSTER}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF

cat <<EOF >/usr/lib/systemd/system/etcd.service
[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target

[Service]
Type=notify
EnvironmentFile=${WORK_DIR}/cfg/etcd
ExecStart=${WORK_DIR}/bin/etcd \
--name=\${ETCD_NAME} \
--data-dir=\${ETCD_DATA_DIR} \
--listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \
--listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \
--advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \
--initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \
--initial-cluster=\${ETCD_INITIAL_CLUSTER} \
--initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \
--initial-cluster-state=new \
--cert-file=${WORK_DIR}/ssl/server.pem \
--key-file=${WORK_DIR}/ssl/server-key.pem \
--peer-cert-file=${WORK_DIR}/ssl/server.pem \
--peer-key-file=${WORK_DIR}/ssl/server-key.pem \
--trusted-ca-file=${WORK_DIR}/ssl/ca.pem \
--peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable etcd
systemctl restart etcd
```

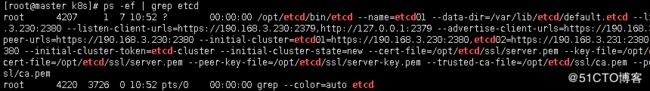

```shell
[root@master k8s]# ./etcd.sh etcd01 190.168.3.230 etcd02=https://190.168.3.231:2380,etcd03=https://190.168.3.232:2380
```
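The value that ends up in ETCD_INITIAL_CLUSTER must list every member under its correct name, with each host naming itself by its own ETCD_NAME. The hypothetical helper below (not part of etcd.sh) builds the full list from name=IP pairs using the peer port 2380, which makes it easy to compare against /opt/etcd/cfg/etcd on each host. etcd does not care about the order of the entries, only that they are complete and correct.

```shell
#!/bin/bash
# Sketch: build an ETCD_INITIAL_CLUSTER value from "name=ip" pairs.
# The peer port 2380 matches etcd.sh; the function name is hypothetical.
build_initial_cluster() {
  local out="" pair name ip
  for pair in "$@"; do
    name=${pair%%=*}   # text before the first '='
    ip=${pair#*=}      # text after the first '='
    if [ -n "$out" ]; then out+=","; fi
    out+="${name}=https://${ip}:2380"
  done
  echo "$out"
}

build_initial_cluster etcd01=190.168.3.230 etcd02=190.168.3.231 etcd03=190.168.3.232
# → etcd01=https://190.168.3.230:2380,etcd02=https://190.168.3.231:2380,etcd03=https://190.168.3.232:2380
```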

拷贝完后启动服务,第一次主节点启动服务比较慢

[root@master k8s]# systemctl start etcd

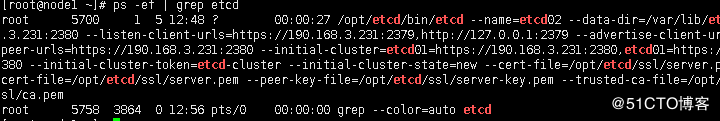

查看mster节点的etcd已经启动

[root@master k8s]# systemctl enable etcd 开机自启

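c. Install etcd on the nodes

Copy the files already configured on the master directly to the other two nodes.

On node1, run the same etcd.sh script:

```shell
[root@node1 ~]# ./etcd.sh etcd02 190.168.3.231 etcd01=https://190.168.3.230:2380,etcd03=https://190.168.3.232:2380
```

Check that node1's etcd configuration file is correct, in particular that ETCD_NAME is etcd02 and that ETCD_INITIAL_CLUSTER lists all three members under their own names, then start etcd:

```shell
[root@node1 ~]# vim /opt/etcd/cfg/etcd
[root@node1 ~]# systemctl start etcd
[root@node1 ~]# systemctl enable etcd
```

Run the script on node2 in the same way as on node1:

```shell
[root@node2 ~]# ./etcd.sh etcd03 190.168.3.232 etcd01=https://190.168.3.230:2380,etcd02=https://190.168.3.231:2380
[root@node2 ~]# systemctl start etcd
[root@node2 ~]# systemctl enable etcd
```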

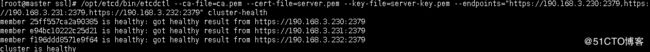

Check the cluster health from the directory that holds the certificates:

```shell
[root@master ssl]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://190.168.3.230:2379,https://190.168.3.231:2379,https://190.168.3.232:2379" cluster-health
member 25ff557ca2a90385 is healthy: got healthy result from https://190.168.3.230:2379
member e94bc10222c25d21 is healthy: got healthy result from https://190.168.3.231:2379
member f196ddd8571e9f64 is healthy: got healthy result from https://190.168.3.232:2379
cluster is healthy
```
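When this check is scripted, for example before moving on to flannel, the output can be tested instead of read by eye. A minimal sketch, assuming the v2 `etcdctl cluster-health` output format shown above; the function name is hypothetical.

```shell
#!/bin/bash
# Sketch: count the healthy members reported by `etcdctl ... cluster-health`
# (read from stdin) and succeed only if all expected members are healthy.
check_etcd_health() {
  local expected=$1 healthy
  # grep -c prints 0 and exits 1 when nothing matches; "|| true" keeps the 0
  healthy=$(grep -c '^member .* is healthy' || true)
  echo "healthy members: ${healthy}/${expected}"
  [ "$healthy" -eq "$expected" ]
}

# The output shown above, used as sample input
sample='member 25ff557ca2a90385 is healthy: got healthy result from https://190.168.3.230:2379
member e94bc10222c25d21 is healthy: got healthy result from https://190.168.3.231:2379
member f196ddd8571e9f64 is healthy: got healthy result from https://190.168.3.232:2379
cluster is healthy'

check_etcd_health 3 <<< "$sample"
# → healthy members: 3/3
```

On the master, the etcdctl command above can be piped straight into `check_etcd_health 3`.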

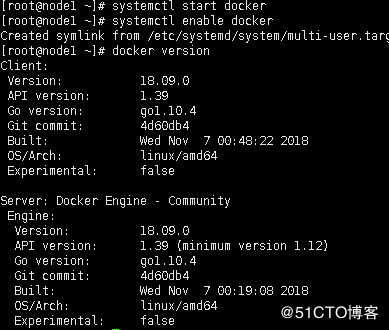

2.node节点搭建docker

两个节点做同样的操作

在官网上下载最新版本

https://docs.docker.com/install/linux/docker-ce/centos/

安装docker依赖

yum install -y yum-utils \

device-mapper-persistent-data \

lvm2

添加docker源仓库

yum-config-manager \

--add-repo \

https://download.docker.com/linux/centos/docker-ce.repo

安装docker

yum install docker-ce -y

systemctl start docker

systemctl enable docker

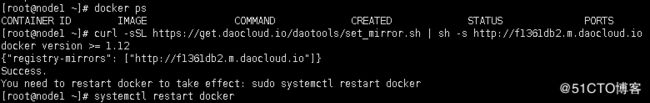

设置使用国内镜像

https://www.daocloud.io/mirror

curl -sSL https://get.daocloud.io/daotools/set_mirror.sh | sh -s http://f1361db2.m.daocloud.io

3. Deploy the flannel network

a. Write the subnet configuration into etcd for flannel to use

Change into the etcd-cert certificate directory:

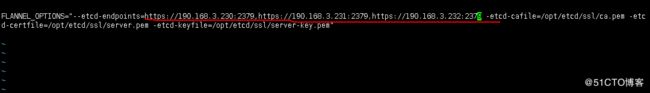

```shell
[root@master etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://190.168.3.230:2379,https://190.168.3.231:2379,https://190.168.3.232:2379" set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'
{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}
```
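With `Network` set to 172.17.0.0/16, flannel leases one smaller subnet to each host, and that host's docker0 bridge takes its addresses from it. The configuration above does not set `SubnetLen`, so flannel's default of /24 is assumed here. How many host subnets fit is just a power of two, as this tiny sketch shows (the function name is hypothetical):

```shell
#!/bin/bash
# Sketch: number of per-host subnets flannel can lease from its Network.
# Assumption: SubnetLen defaults to 24 because the config above does not set it.
subnet_count() {
  local network_prefix=$1 subnet_len=${2:-24}
  echo $(( 1 << (subnet_len - network_prefix) ))
}

subnet_count 16   # 172.17.0.0/16 split into /24 subnets → 256
```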

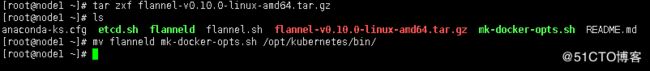

b.下载部署flannel包并部署

创建kubernetes下的flannel目录

[root@node1 ~]# mkdir -p /opt/kubernetes/{bin,cfg,ssl}

下载flannel包和编写部署脚本

将解压后的flanneld mk-docker-opts.sh拷贝到/opt/kubernetes/bin下

查看flannel.sh脚本

[root@node1 ~]# vim flannel.sh

#!/bin/bash

ETCD_ENDPOINTS=${1:-"http://127.0.0.1:2379"}

cat <

FLANNEL_OPTIONS="--etcd-endpoints=${ETCD_ENDPOINTS} \

-etcd-cafile=/opt/etcd/ssl/ca.pem \

-etcd-certfile=/opt/etcd/ssl/server.pem \

-etcd-keyfile=/opt/etcd/ssl/server-key.pem"

EOF

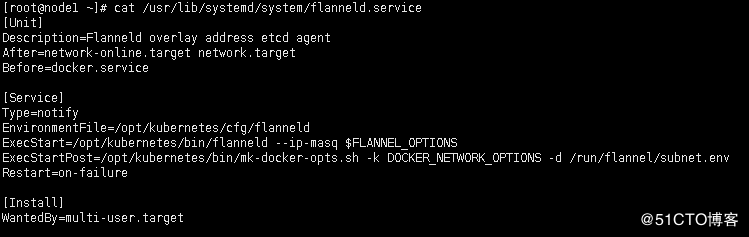

cat <

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq \$FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

cat <

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/run/flannel/subnet.env

ExecStart=/usr/bin/dockerd \$DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP \$MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable flanneld

systemctl restart flanneld

systemctl restart dockerd

执行flannel.sh脚本

[root@node1 ~]# ./flannel.sh

执行完修改配置文件为以下参数

[root@node1 ~]# vim /opt/kubernetes/cfg/flanneld

[root@node1 ~]# systemctl start flanneld

[root@node1 ~]# systemctl enable flanneld

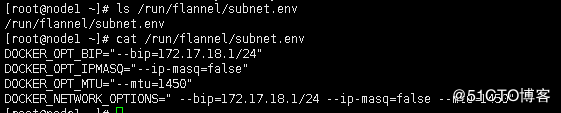

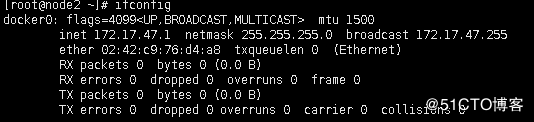

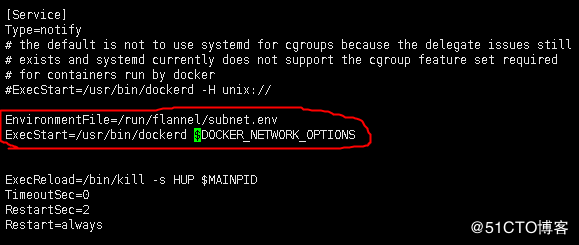

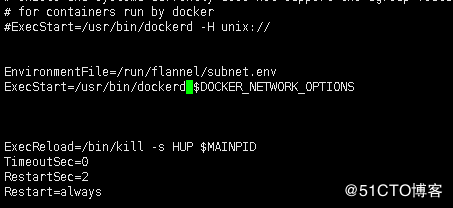

c. Make Docker use the flannel subnet at startup

Add the following settings from flannel.sh to Docker's systemd unit:

```shell
[root@node1 ~]# cat flannel.sh
...
EnvironmentFile=/run/flannel/subnet.env
ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS
...
[root@node1 ~]# vim /usr/lib/systemd/system/docker.service
```

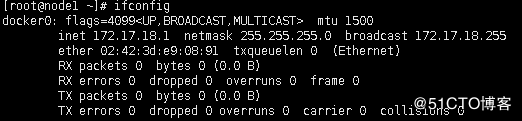

Check the result:

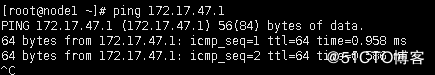

After `systemctl daemon-reload` and `systemctl restart docker`, Docker is on the flannel subnet.
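What links the two services is /run/flannel/subnet.env: flanneld writes its lease there as FLANNEL_NETWORK, FLANNEL_SUBNET, FLANNEL_MTU and FLANNEL_IPMASQ, and mk-docker-opts.sh turns those values into DOCKER_NETWORK_OPTIONS for dockerd. The function below is a simplified re-implementation of that idea for illustration only. It is not the real mk-docker-opts.sh, whose output differs in detail, and the input values are made up.

```shell
#!/bin/bash
# Simplified sketch of the idea behind mk-docker-opts.sh (NOT its real code):
# read the subnet file written by flanneld and turn it into dockerd options.
docker_opts_from_flannel_env() {
  local FLANNEL_NETWORK FLANNEL_SUBNET FLANNEL_MTU FLANNEL_IPMASQ
  # The file holds KEY=value lines, so it can be sourced into the locals above
  . "$1"
  local opts="--bip=${FLANNEL_SUBNET} --mtu=${FLANNEL_MTU}"
  # flanneld runs with --ip-masq, so Docker should not masquerade a second time
  if [ "$FLANNEL_IPMASQ" = "true" ]; then
    opts+=" --ip-masq=false"
  fi
  echo "DOCKER_NETWORK_OPTIONS=\"${opts}\""
}

# Illustrative input in the format flanneld writes (values are made up)
example=$(mktemp)
cat > "$example" <<'EOF'
FLANNEL_NETWORK=172.17.0.0/16
FLANNEL_SUBNET=172.17.43.1/24
FLANNEL_MTU=1450
FLANNEL_IPMASQ=true
EOF

docker_opts_from_flannel_env "$example"
# → DOCKER_NETWORK_OPTIONS="--bip=172.17.43.1/24 --mtu=1450 --ip-masq=false"
```

The MTU of 1450 leaves room for the 50-byte VXLAN header inside a 1500-byte Ethernet frame.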

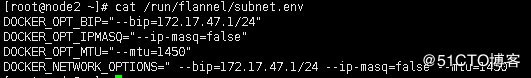

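d. Configure the flannel network on node2

Copy the /opt/kubernetes directory from node1 to node2 as-is, together with the flanneld.service unit in /usr/lib/systemd/system/.

The certificates are the ones in etcd's ssl directory created earlier (/opt/etcd/ssl), which node2 already has.

```shell
[root@node2 ~]# systemctl start flanneld
[root@node2 ~]# systemctl enable flanneld
[root@node2 ~]# vim /usr/lib/systemd/system/docker.service
```

Edit docker.service the same way as on node1. After restarting Docker, node2 is also on the flannel subnet.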

4. Deploy the master components

Self-signed SSL certificates

kube-apiserver

kube-controller-manager

kube-scheduler

a. Generate self-signed SSL certificates with the k8s-cert.sh script:

cat > ca-config.json <

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json <

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#-----------------------

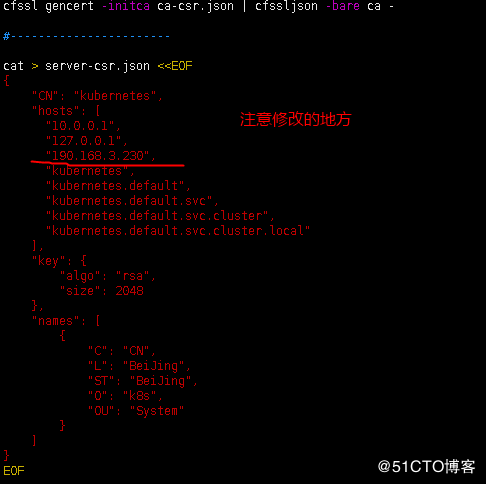

cat > server-csr.json <

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"190.168.3.250",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

#-----------------------

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"190.168.3.230",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

#-----------------------

cat > admin-csr.json <

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

#-----------------------

cat > kube-proxy-csr.json <

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

执行创建脚本

[root@master k8s-cert]# chmod +x k8s-cert.sh

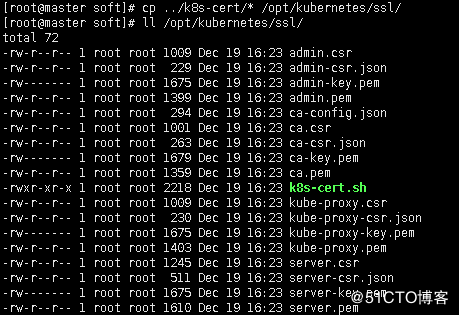

[root@master k8s-cert]# ./k8s-cert.sh ![]()

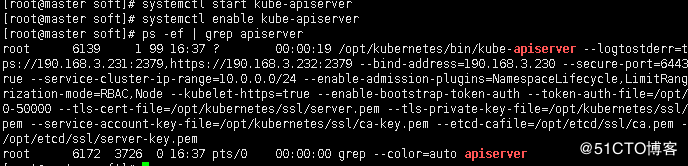
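Why 10.0.0.1 in particular: the API server gives the first usable address of `--service-cluster-ip-range` (10.0.0.0/24 in apiserver.sh below) to the built-in `kubernetes` Service, and Pods reach the API server through that address, so it must be covered by the server certificate. The sketch below computes that address for any CIDR; the helper names are hypothetical.

```shell
#!/bin/bash
# Sketch: first usable address of a CIDR such as the service range 10.0.0.0/24.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

int_to_ip() {
  local n=$1
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

first_service_ip() {
  local net=${1%/*} prefix=${1#*/} mask
  # Clear the host bits of the given address, then add 1
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  int_to_ip $(( ( $(ip_to_int "$net") & mask ) + 1 ))
}

first_service_ip 10.0.0.0/24   # → 10.0.0.1
```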

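b. Deploy kube-apiserver

```shell
[root@master k8s]# mkdir soft
[root@master k8s]# cd soft/
```

Copy the required binaries into /opt/kubernetes/bin/ (create /opt/kubernetes/{bin,cfg,ssl} on the master first if it does not exist yet):

```shell
[root@master bin]# cp kube-apiserver kubectl kube-controller-manager kube-scheduler /opt/kubernetes/bin/
```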

The apiserver deployment script:

```shell
[root@master soft]# cat apiserver.sh
#!/bin/bash

MASTER_ADDRESS=$1
ETCD_SERVERS=$2

cat <<EOF >/opt/kubernetes/cfg/kube-apiserver
KUBE_APISERVER_OPTS="--logtostderr=true \
--v=4 \
--etcd-servers=${ETCD_SERVERS} \
--bind-address=${MASTER_ADDRESS} \
--secure-port=6443 \
--advertise-address=${MASTER_ADDRESS} \
--allow-privileged=true \
--service-cluster-ip-range=10.0.0.0/24 \
--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \
--authorization-mode=RBAC,Node \
--kubelet-https=true \
--enable-bootstrap-token-auth \
--token-auth-file=/opt/kubernetes/cfg/token.csv \
--service-node-port-range=30000-50000 \
--tls-cert-file=/opt/kubernetes/ssl/server.pem \
--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \
--client-ca-file=/opt/kubernetes/ssl/ca.pem \
--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \
--etcd-cafile=/opt/etcd/ssl/ca.pem \
--etcd-certfile=/opt/etcd/ssl/server.pem \
--etcd-keyfile=/opt/etcd/ssl/server-key.pem"
EOF

cat <<EOF >/usr/lib/systemd/system/kube-apiserver.service
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver
ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-apiserver
systemctl restart kube-apiserver
```

Copy the certificates generated earlier for the master into /opt/kubernetes/ssl/.

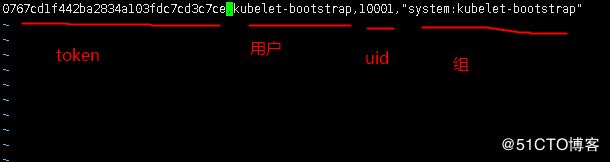

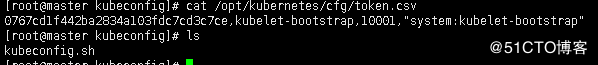

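Create the token file referenced by `--token-auth-file` (format: token,user,uid,"group"):

```shell
[root@master soft]# vim /opt/kubernetes/cfg/token.csv
```

```text
0767cd1f442ba2834a103fdc7cd3c7ce,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
```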
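The token itself is just 16 random bytes in hex; kubeconfig.sh below contains the generating command in a commented-out line. The sketch wraps that same command in a hypothetical `make_token_csv` function that writes token.csv into a directory of your choice (a temporary one here, so it is safe to try). Whatever token ends up in token.csv must also be used as BOOTSTRAP_TOKEN in kubeconfig.sh.

```shell
#!/bin/bash
# Sketch: generate a random bootstrap token and write token.csv in the
# format used above: token,user,uid,"group". On the master the file
# belongs in /opt/kubernetes/cfg/.
make_token_csv() {
  local dir=${1:-.} token
  # 16 random bytes printed as hex without spaces → 32 hex characters
  token=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')
  echo "${token},kubelet-bootstrap,10001,\"system:kubelet-bootstrap\"" > "${dir}/token.csv"
  echo "$token"
}

tmpdir=$(mktemp -d)
make_token_csv "$tmpdir"
cat "$tmpdir/token.csv"
```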

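Generate the kube-apiserver configuration and service file, passing the master address and the etcd endpoints:

```shell
[root@master soft]# chmod +x apiserver.sh
[root@master soft]# ./apiserver.sh 190.168.3.230 https://190.168.3.230:2379,https://190.168.3.231:2379,https://190.168.3.232:2379
```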

c. Deploy and start kube-controller-manager

The controller-manager configuration script:

```shell
[root@master soft]# cat controller-manager.sh
#!/bin/bash

MASTER_ADDRESS=$1

cat <<EOF >/opt/kubernetes/cfg/kube-controller-manager
KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \
--v=4 \
--master=${MASTER_ADDRESS}:8080 \
--leader-elect=true \
--address=127.0.0.1 \
--service-cluster-ip-range=10.0.0.0/24 \
--cluster-name=kubernetes \
--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \
--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \
--root-ca-file=/opt/kubernetes/ssl/ca.pem \
--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \
--experimental-cluster-signing-duration=87600h0m0s"
EOF

cat <<EOF >/usr/lib/systemd/system/kube-controller-manager.service
[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager
ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-controller-manager
systemctl restart kube-controller-manager
```

Run it with the address of the API server's local insecure port, which listens on 127.0.0.1:8080 by default: `./controller-manager.sh 127.0.0.1`.

d. Start the kube-scheduler service

The scheduler configuration script:

```shell
[root@master soft]# cat scheduler.sh
#!/bin/bash

MASTER_ADDRESS=$1

cat <<EOF >/opt/kubernetes/cfg/kube-scheduler
KUBE_SCHEDULER_OPTS="--logtostderr=true \
--v=4 \
--master=${MASTER_ADDRESS}:8080 \
--leader-elect"
EOF

cat <<EOF >/usr/lib/systemd/system/kube-scheduler.service
[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler
ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-scheduler
systemctl restart kube-scheduler
```

As with the controller manager, run it as `./scheduler.sh 127.0.0.1`.

e. Check that the master components are healthy

```shell
[root@master soft]# /opt/kubernetes/bin/kubectl get cs
```

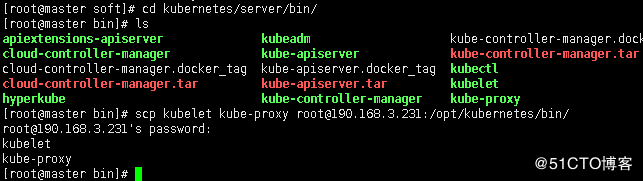

5. Deploy the node components

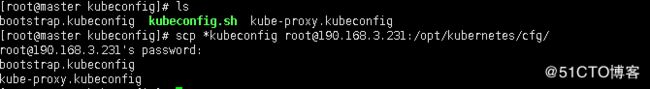

a. Create the kubeconfig files

Copy the kubelet and kube-proxy binaries from the Kubernetes package on the master to /opt/kubernetes/bin on node1.

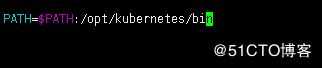

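Set up the environment on the master: add /opt/kubernetes/bin to PATH so that kubectl can be called directly, which the kubeconfig script below relies on.

```shell
[root@master kubeconfig]# vim /etc/profile
[root@master kubeconfig]# source /etc/profile
```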

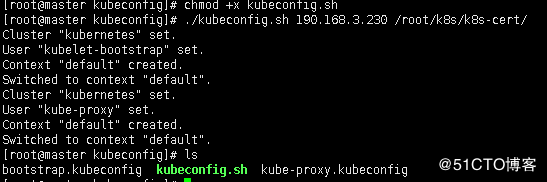

将之前生成的token写入到kubeconfig.sh文件里

修改配置文件

[root@master kubeconfig]# vim kubeconfig.sh

创建 TLS Bootstrapping Token

#BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')

#BOOTSTRAP_TOKEN=0fb61c46f8991b718eb38d27b605b008

#cat > token.csv <

#EOF

#----------------------

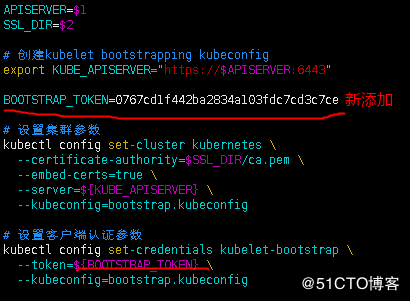

APISERVER=$1

SSL_DIR=$2

创建kubelet bootstrapping kubeconfig

export KUBE_APISERVER="https://$APISERVER:6443"

BOOTSTRAP_TOKEN=0767cd1f442ba2834a103fdc7cd3c7ce

设置集群参数

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

设置客户端认证参数

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=bootstrap.kubeconfig

设置上下文参数

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

设置默认上下文

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#----------------------

创建kube-proxy kubeconfig文件

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=$SSL_DIR/kube-proxy.pem \

--client-key=$SSL_DIR/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

b. Bind the kubelet-bootstrap user to the system cluster role

```shell
[root@master k8s-cert]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap
```

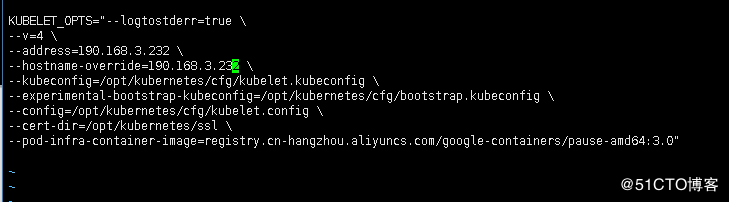

c. Configure kubelet on node1

The configuration script:

```shell
[root@node1 ~]# cat kubelet.sh
#!/bin/bash

NODE_ADDRESS=$1
DNS_SERVER_IP=${2:-"10.0.0.2"}

cat <<EOF >/opt/kubernetes/cfg/kubelet
KUBELET_OPTS="--logtostderr=true \
--v=4 \
--address=${NODE_ADDRESS} \
--hostname-override=${NODE_ADDRESS} \
--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \
--experimental-bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \
--config=/opt/kubernetes/cfg/kubelet.config \
--cert-dir=/opt/kubernetes/ssl \
--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"
EOF

cat <<EOF >/opt/kubernetes/cfg/kubelet.config
kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: ${NODE_ADDRESS}
port: 10250
cgroupDriver: cgroupfs
clusterDNS:
  - ${DNS_SERVER_IP}
clusterDomain: cluster.local.
failSwapOn: false
EOF

cat <<EOF >/usr/lib/systemd/system/kubelet.service
[Unit]
Description=Kubernetes Kubelet
After=docker.service
Requires=docker.service

[Service]
EnvironmentFile=/opt/kubernetes/cfg/kubelet
ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS
Restart=on-failure
KillMode=process

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kubelet
systemctl restart kubelet
```

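Run the script:

```shell
[root@node1 ~]# ./kubelet.sh 190.168.3.231 10.0.0.2
```

The second argument is the cluster DNS address; DNS itself is deployed later.

Note: before this step, the kubelet-bootstrap user must already be bound to the system cluster role (step b); otherwise the kubelet cannot submit its certificate request.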

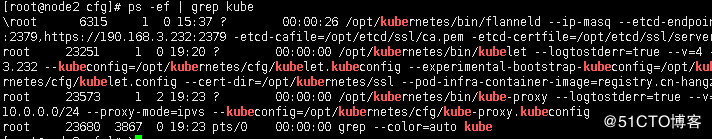

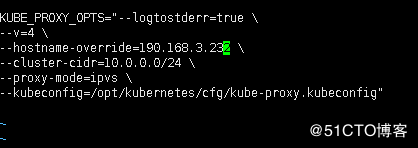

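d. Deploy kube-proxy on node1

```shell
[root@node1 ~]# cat proxy.sh
#!/bin/bash

NODE_ADDRESS=$1

cat <<EOF >/opt/kubernetes/cfg/kube-proxy
KUBE_PROXY_OPTS="--logtostderr=true \
--v=4 \
--hostname-override=${NODE_ADDRESS} \
--cluster-cidr=172.17.0.0/16 \
--proxy-mode=ipvs \
--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"
EOF

cat <<EOF >/usr/lib/systemd/system/kube-proxy.service
[Unit]
Description=Kubernetes Proxy
After=network.target

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy
ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-proxy
systemctl restart kube-proxy
```

`--cluster-cidr` is the Pod network, that is the flannel network 172.17.0.0/16 written to etcd earlier, not the Service range 10.0.0.0/24. `--proxy-mode=ipvs` needs the IPVS kernel modules; if they are not loaded, kube-proxy falls back to iptables mode. Run the script with node1's address: `./proxy.sh 190.168.3.231`.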

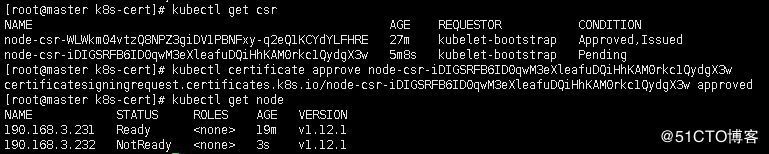

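On the master, approve the node's certificate request so that it joins the cluster (`kubectl get csr` lists the pending requests):

```shell
[root@master k8s-cert]# kubectl certificate approve node-csr-WLWkm04vtzQ8NPZ3giDV1PBNFxy-q2eQ1KCYdYLFHRE
```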
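Copying CSR names by hand gets tedious once node2 joins as well. A minimal sketch that extracts the names of Pending requests from `kubectl get csr` output, assuming CONDITION is the last column (as in the `NAME AGE REQUESTOR CONDITION` layout of this kubectl generation). The function name and the second CSR in the sample are made up for illustration.

```shell
#!/bin/bash
# Sketch: print the names of Pending CSRs from `kubectl get csr` output on stdin.
# Assumption: CONDITION is the last column of the table.
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Illustrative output; the second CSR name is made up
sample='NAME                                                   AGE   REQUESTOR           CONDITION
node-csr-WLWkm04vtzQ8NPZ3giDV1PBNFxy-q2eQ1KCYdYLFHRE   2m    kubelet-bootstrap   Pending
node-csr-example-already-approved                      9m    kubelet-bootstrap   Approved,Issued'

pending_csrs <<< "$sample"
# → node-csr-WLWkm04vtzQ8NPZ3giDV1PBNFxy-q2eQ1KCYdYLFHRE

# On the master, approve every pending request in one go:
#   kubectl get csr | pending_csrs | xargs -r kubectl certificate approve
```

Review what you approve: any client holding the bootstrap token can submit a CSR.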

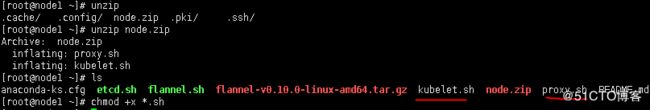

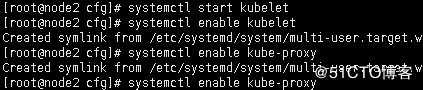

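e. Configure kubelet and kube-proxy on node2

node2 needs the same /opt/kubernetes directory as node1, so copy it over directly, together with the kubelet.service and kube-proxy.service unit files.

Switch to node2.

Change the address in the following files to node2's address, 190.168.3.232:

```shell
[root@node2 cfg]# vim kubelet
[root@node2 cfg]# vim kube-proxy
```

On node2, delete the certificates copied from node1. They were issued to node1, and node2's kubelet must request its own:

```shell
[root@node2 cfg]# rm -f /opt/kubernetes/ssl/*
```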

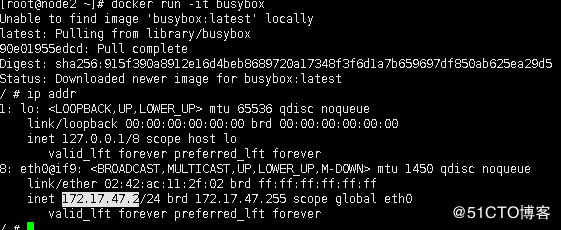

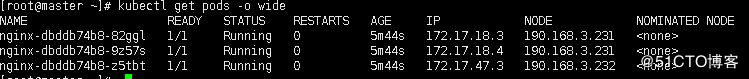

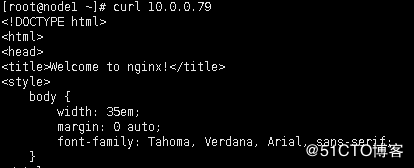

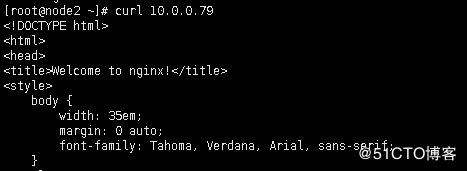

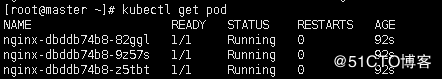

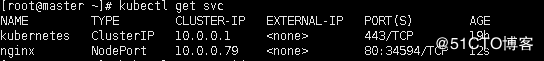

IV. Test with a sample application

Run nginx with 3 replicas:

```shell
[root@master ~]# kubectl run nginx --image=nginx --replicas=3
```

Expose it as a NodePort service on port 80:

```shell
[root@master ~]# kubectl expose deployment nginx --port=80 --target-port=80 --type=NodePort
```
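The Service gets a NodePort from the range set by `--service-node-port-range=30000-50000` in apiserver.sh, and nginx is then reachable on that port on every node. On the master the port can be read with `kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'`. The hypothetical helper below checks the port against that range and prints the URL for each node; 43210 is only an example port.

```shell
#!/bin/bash
# Sketch: print the URL of a NodePort service on each node, after checking the
# port lies in the 30000-50000 range configured in apiserver.sh.
nodeport_urls() {
  local port=$1 ip
  shift
  if [ "$port" -lt 30000 ] || [ "$port" -gt 50000 ]; then
    echo "port $port is outside --service-node-port-range" >&2
    return 1
  fi
  for ip in "$@"; do
    echo "http://${ip}:${port}"
  done
}

nodeport_urls 43210 190.168.3.231 190.168.3.232
# → http://190.168.3.231:43210
#   http://190.168.3.232:43210
```

Each URL can then be opened with `curl` or a browser to reach the nginx welcome page.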

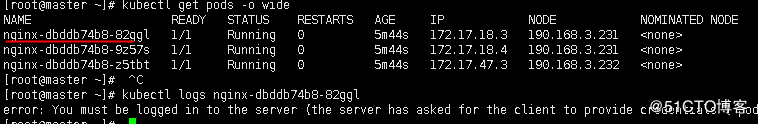

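Fixing the missing permission to view logs

Viewing the logs of one of the Pods fails with an error.

The cause is a missing permission.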

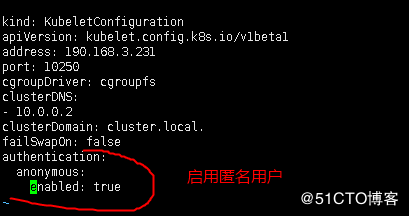

Allow anonymous access to the logs

Enable anonymous authentication in the kubelet.config file on node1 and node2, then restart kubelet:

```shell
[root@node1 ~]# vim /opt/kubernetes/cfg/kubelet.config
[root@node1 ~]# systemctl restart kubelet
```
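The edit itself is a small addition to the KubeletConfiguration file: `authentication.anonymous.enabled: true`. As one way to make it repeatable on both nodes, here is a sketch with a hypothetical `enable_kubelet_anonymous` function. It assumes the file has no existing top-level `authentication:` block, which holds for the file written by kubelet.sh above, and it is demonstrated on a temporary copy rather than /opt/kubernetes/cfg/kubelet.config.

```shell
#!/bin/bash
# Sketch: append the anonymous-authentication setting to a kubelet config file.
# Assumption: the file has no top-level "authentication:" block yet.
enable_kubelet_anonymous() {
  local cfg=$1
  if grep -q '^authentication:' "$cfg"; then
    echo "authentication block already present in $cfg; edit it by hand" >&2
    return 1
  fi
  cat >> "$cfg" <<'EOF'
authentication:
  anonymous:
    enabled: true
EOF
}

# Demonstration on a temporary file shaped like the one kubelet.sh writes
cfg=$(mktemp)
printf 'kind: KubeletConfiguration\napiVersion: kubelet.config.k8s.io/v1beta1\nfailSwapOn: false\n' > "$cfg"
enable_kubelet_anonymous "$cfg"
cat "$cfg"
```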

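The request still fails: it is now accepted as the anonymous user, but that user has no permissions, so grant it the cluster-admin role. This gives every unauthenticated request full administrator rights on the cluster, so it is only acceptable in a test environment like this one.

```shell
[root@master ~]# kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=system:anonymous
```

The test now succeeds.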
