Web Scraping Notes (1): Scraping Download Links from 电影天堂 (dy2018.com)

Python learners are welcome to join our study and discussion group: 667279387

Web scraping notes series:

Part 1: Scraping download links from 电影天堂

Part 2: Scraping app information from the 360 app market

The scraper is written in Python 3.5 and relies on three libraries: requests, BeautifulSoup and eventlet.

1. Parsing a single movie's detail page

Take this page as an example: http://www.dy2018.com/i/98477.html. We want the movie's title and its download link, so first open the page and examine its source.
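The extraction relies on two facts about the page source quoted in this section: the title is the page's `<h1>`, and the download link is the text of the `<a>` inside `<td style="WORD-WRAP: break-word">`. As a dependency-free illustration (not the article's BeautifulSoup approach), here is a minimal sketch using the standard library's `html.parser`. `FilmDetailParser` and `film_name` are hypothetical names, and the sketch assumes the page bytes have already been decoded from GBK to text.

```python
import re
from html.parser import HTMLParser


class FilmDetailParser(HTMLParser):
    """Collect the first <h1> text and the first link text in the download cell.

    Assumption: the layout quoted in this article, where the download link is an
    <a> inside <td style="WORD-WRAP: break-word">.
    """

    def __init__(self):
        super().__init__()
        self.title = None  # text of the first <h1> that contains text
        self.link = None   # text of the first <a> inside the download cell
        self._in_h1 = False
        self._in_cell = False
        self._in_link = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "h1" and self.title is None:
            self._in_h1 = True
        elif tag == "td" and attrs.get("style") == "WORD-WRAP: break-word":
            self._in_cell = True
        elif tag == "a" and self._in_cell and self.link is None:
            self._in_link = True

    def handle_endtag(self, tag):
        if tag == "h1":
            self._in_h1 = False
        elif tag == "a":
            self._in_link = False
        elif tag == "td":
            self._in_cell = False

    def handle_data(self, data):
        # Text may arrive in several chunks, so append instead of overwriting
        if self._in_h1:
            self.title = (self.title or "") + data
        elif self._in_link:
            self.link = (self.link or "") + data


def film_name(title):
    """Return the part of the title between 《 and 》.

    Same regex as the article's commented-out line; raises IndexError if the
    title contains no 《》 pair.
    """
    return re.findall(r'[^《》]+', title)[1]
```

Typical use: create a parser, call `parser.feed(r.content.decode('gbk', 'ignore'))`, then read `parser.title` and `parser.link`; both stay `None` when the page uses a different layout.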

The element containing the movie title:

```html
<div class="title_all"><h1>2017年欧美7.0分科幻片《猩球崛起3:终极之战》HD中英双字</h1></div>
```

The element containing the download link:

```html
<td style="WORD-WRAP: break-word" bgcolor="#fdfddf"><a href="ftp://d:[email protected]:12311/[电影天堂www.dy2018.com]猩球崛起3:终极之战HD中英双字.rmvb">ftp://d:[email protected]:12311/[电影天堂www.dy2018.com]猩球崛起3:终极之战HD中英双字.rmvb</a></td>
```

Getting the title and download link of a single movie:

```python
import re

import requests
from bs4 import BeautifulSoup

url = 'http://www.dy2018.com/i/98477.html'
headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:38.0) Gecko/20100101 Firefox/38.0'}


def get_one_film_detail(url):
    # print("one_film doing:%s" % url)
    r = requests.get(url, headers=headers)
    # The site is GBK-encoded, so decode the raw bytes explicitly
    bsObj = BeautifulSoup(r.content.decode('gbk', 'ignore'), "html.parser")
    td = bsObj.find('td', attrs={'style': 'WORD-WRAP: break-word'})
    if td is None:  # A few pages use a different layout: no download cell, return None
        return None, None
    url_a = td.find('a')
    if url_a is None:  # Download cell without a link: treat it the same way
        return None, None
    url_a = url_a.string
    title = bsObj.find('h1')
    title = title.string
    # Uncomment to return only the film name; in this example: 猩球崛起3:终极之战
    # title = re.findall(r'[^《》]+', title)[1]
    return title, url_a


print(get_one_film_detail(url))
```

2. Parsing all movie links on a list page

First open a movie list page, for example: http://www.dy2018.com/2/index_2.html

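In the page source, each movie entry begins with markup like the following, followed by a short synopsis. We don't need the synopsis, only the link to the movie's detail page inside this markup; the goal is to collect the detail-page links of every movie listed on the page.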
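The filter the BeautifulSoup code applies can also be sketched with the standard library: keep `<a>` tags whose class includes `ulink` and that carry a `title` attribute, and turn each site-relative `href` into an absolute URL. This is an illustrative alternative, not the article's code; `FilmLinkParser` is a hypothetical name, and the base URL `http://www.dy2018.com` is taken from the article.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class FilmLinkParser(HTMLParser):
    """Collect detail-page URLs from a list page (sketch).

    Keeps <a> tags with class "ulink" that also have a title attribute, so
    category links such as [最新电影] (no title) are skipped.
    """

    def __init__(self, base_url="http://www.dy2018.com"):
        super().__init__()
        self.base_url = base_url
        self.urls = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if "ulink" in classes and "title" in attrs and attrs.get("href"):
            # hrefs are site-relative (e.g. /i/98256.html); make them absolute
            self.urls.append(urljoin(self.base_url, attrs["href"]))
```

Feeding it the entry markup quoted in this section yields a single URL, `http://www.dy2018.com/i/98256.html`; the `[最新电影]` category link is ignored because it has no `title`.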

```html
<td height="26">
<b>
<a class=ulink href='/html/gndy/dyzz/'>[最新电影]</a>
<a href="/i/98256.html" class="ulink" title="2017年印度7.1分动作片《巴霍巴利王(下):终结》BD中英双字">2017年印度7.1分动作片《巴霍巴利王(下):终结》BD中英双字</a>
</b>
</td>
```

Collecting every detail-page link on one list page:

```python
import re

import requests
from bs4 import BeautifulSoup

page_url = 'http://www.dy2018.com/2/index_22.html'
headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:38.0) Gecko/20100101 Firefox/38.0'}


def get_one_page_urls(page_url):
    # print("one_page doing:%s" % page_url)
    urls = []
    base_url = "http://www.dy2018.com"
    r = requests.get(page_url, headers=headers)
    bsObj = BeautifulSoup(r.content, "html.parser")
    # Movie links are <a class="ulink"> tags with a title attribute; the
    # category link ([最新电影]) has no title and is therefore skipped
    url_all = bsObj.find_all('a', attrs={'class': "ulink", 'title': re.compile('.*')})
    for a_url in url_all:
        a_url = a_url.get('href')
        a_url = base_url + a_url  # hrefs are site-relative, e.g. /i/98256.html
        urls.append(a_url)
    return urls


print(get_one_page_urls(page_url))
```

3. Crawling multiple pages

With the two pieces above in place, we can crawl data from many pages.
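The list pages of a category follow a simple scheme: the first page is `http://www.dy2018.com/2/` and later pages are `index_2.html`, `index_3.html`, and so on. The sketch below builds that list and fetches it through the standard library's `ThreadPoolExecutor`, a different technique from the article's eventlet green threads, to show how a fixed `max_workers` caps how many requests reach the server at once. `build_page_urls` and `crawl_pages` are hypothetical names. One caveat for eventlet: green threads only overlap network waits when the standard library has been patched with `eventlet.monkey_patch()`, and `eventlet.GreenPool` also accepts a `size` argument to cap concurrency; a thread pool needs no patching.

```python
from concurrent.futures import ThreadPoolExecutor


def build_page_urls(base="http://www.dy2018.com/2/", last_page=99):
    """Hypothetical helper: every list-page URL of one category.

    Assumes the scheme used in this article: page 1 is the bare category URL,
    later pages are index_2.html, index_3.html, ... up to last_page.
    """
    urls = [base]
    for i in range(2, last_page + 1):
        urls.append("%sindex_%d.html" % (base, i))
    return urls


def crawl_pages(page_urls, fetch, max_workers=4):
    """Run fetch(url) for every URL with at most max_workers calls in flight.

    Executor.map returns results in input order, as GreenPool.imap does.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fetch, page_urls))
```

With the article's function, `crawl_pages(build_page_urls(), get_one_page_urls, max_workers=4)` would return one list of detail-page URLs per list page; a small `max_workers` trades speed for fewer simultaneous connections.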

```python
import re
import time

import eventlet
import requests
from bs4 import BeautifulSoup

headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:38.0) Gecko/20100101 Firefox/38.0'}


def get_one_film_detail(url):
    print("one_film doing:%s" % url)
    r = requests.get(url, headers=headers)
    bsObj = BeautifulSoup(r.content.decode('gbk', 'ignore'), "html.parser")
    td = bsObj.find('td', attrs={'style': re.compile('.*')})
    if td is None:
        return None, None
    url_a = td.find('a')
    if url_a is None:  # cell without a link: treat it like a missing cell
        return None, None
    url_a = url_a.string
    title = bsObj.find('h1')
    title = title.string
    # title = re.findall(r'[^《》]+', title)[1]
    return title, url_a


def get_one_page_urls(page_url):
    print("one_page doing:%s" % page_url)
    urls = []
    base_url = "http://www.dy2018.com"
    r = requests.get(page_url, headers=headers)
    bsObj = BeautifulSoup(r.content, "html.parser")
    url_all = bsObj.find_all('a', attrs={'class': "ulink", 'title': re.compile('.*')})
    for a_url in url_all:
        a_url = a_url.get('href')
        a_url = base_url + a_url
        urls.append(a_url)
    return urls


pool = eventlet.GreenPool()
start = time.time()

with open("download.txt", "w") as f:
    for i in range(2, 100):
        page_url = 'http://www.dy2018.com/2/index_%s.html' % i
        # Fetch every detail page of this list page through the green pool
        for title, url_a in pool.imap(get_one_film_detail, get_one_page_urls(page_url)):
            if title is None:  # skip pages whose layout could not be parsed
                continue
            f.write("%s:%s\n\n" % (title, url_a))

end = time.time()
print('total time cost:')
print(end - start)
```

Presumably because the green threads crawled too fast, the server started closing the connection on its own after 20-odd pages, with the following error:

```
raise RemoteDisconnected("Remote end closed connection without"
http.client.RemoteDisconnected: Remote end closed connection without response
```

The optimized code:

```python
import re
import time

import eventlet
import requests
from bs4 import BeautifulSoup

headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:38.0) Gecko/20100101 Firefox/38.0'}


def get_one_film_detail(urls):
    # Use a Session so TCP connections to the server are reused across requests
    req = requests.Session()
    req.headers.update(headers)
    # Open the output file once per batch instead of once per movie
    with open("download.txt", "a") as f:
        for url in urls:
            print("one_film doing:%s" % url)
            r = req.get(url)
            bsObj = BeautifulSoup(r.content.decode('gbk', 'ignore'), "html.parser")
            td = bsObj.find('td', attrs={'style': re.compile('.*')})
            if td is None:
                continue
            url_a = td.find('a')
            if url_a is None:
                continue
            url_a = url_a.string
            title = bsObj.find('h1')
            title = title.string
            # title = re.findall(r'[^《》]+', title)[1]
            f.write("%s:%s\n\n" % (title, url_a))


def get_one_page_urls(page_url):
    print("one_page doing:%s" % page_url)
    urls = []
    base_url = "http://www.dy2018.com"
    r = requests.get(page_url, headers=headers)
    bsObj = BeautifulSoup(r.content, "html.parser")
    url_all = bsObj.find_all('a', attrs={'class': "ulink", 'title': re.compile('.*')})
    for a_url in url_all:
        a_url = a_url.get('href')
        a_url = base_url + a_url
        urls.append(a_url)
    return urls


pool = eventlet.GreenPool()
start = time.time()

# The first list page has no index number; later pages are index_2.html ... index_99.html
page_urls = ['http://www.dy2018.com/2/']
for i in range(2, 100):
    page_url = 'http://www.dy2018.com/2/index_%s.html' % i
    page_urls.append(page_url)

# List pages go through the green pool; each page's detail pages are then fetched one by one
for urls in pool.imap(get_one_page_urls, page_urls):
    get_one_film_detail(urls)

end = time.time()
print('total time cost:')
print(end - start)
```
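If the server still drops connections, a common mitigation that is not part of the article's code is to retry a failed request after an exponentially growing delay. The sketch below is generic and hypothetical (`fetch_with_retry` is not a requests API). Its default `retry_on=(ConnectionError,)` catches `http.client.RemoteDisconnected`, which subclasses `ConnectionResetError`, when that error is raised directly; requests, however, wraps it in `requests.exceptions.ConnectionError`, which is not a built-in `ConnectionError`, so pass that type explicitly when using requests.

```python
import time


def fetch_with_retry(fetch, url, retries=3, base_delay=1.0,
                     retry_on=(ConnectionError,), sleep=time.sleep):
    """Call fetch(url); on one of the retry_on exceptions, wait and try again.

    The wait doubles after each failure: base_delay, 2 * base_delay, ...
    After `retries` extra attempts the last exception is re-raised.
    """
    for attempt in range(retries + 1):
        try:
            return fetch(url)
        except retry_on:
            if attempt == retries:
                raise  # out of attempts: let the caller see the error
            sleep(base_delay * 2 ** attempt)
```

With a requests session this might look like `fetch_with_retry(req.get, url, retry_on=(requests.exceptions.ConnectionError,))`. The `sleep` parameter exists only so the delays can be observed or shortened without real waiting.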

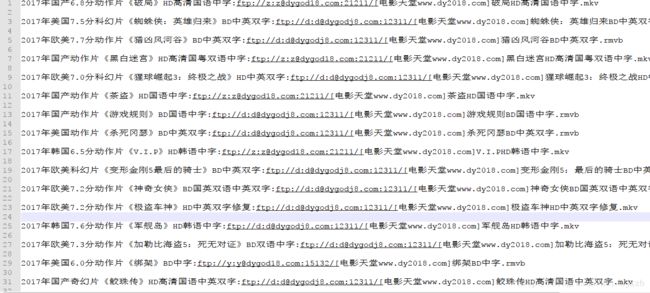

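After the optimization, the crawler in effect fetches all of the list pages concurrently, then fetches each movie's download link in a single thread. Collecting the download links for all the action movies on 电影天堂 took a total of:

```
total time cost:
304.44839310646057
```

That is roughly 304 seconds, or about five minutes. If this source code was useful to you, please leave a comment on the blog to say thanks!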
