卷积神经网络常见架构AlexNet、ZFNet、VGGNet、GoogleNet和ResNet模型

目前的常见的卷积网络结构有AlexNet、ZF Net、VGGNet、Inception、ResNet等等,接下来我们对这些架构一一详解。

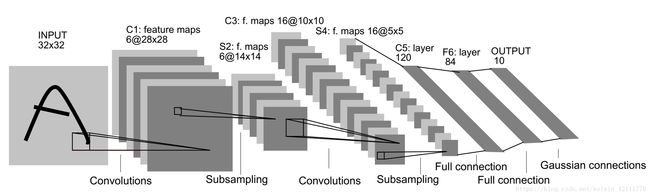

LeNet-5

LeNet-5模型诞生于1998年,是Yann LeCun教授在论文Gradient-based learning applied to document recognition中提出的,它是第一个成功应用于数字识别问题的卷积神经网络,麻雀虽小五脏俱全,它包含了深度学习的基本模块:卷积层,池化层,全连接层。是其他深度学习模型的基础。代码见:使用LeNet-5和类LeNet-5网络识别手写字体

LeNet除了输入层,总共有7层。输入是32*32大小的图像

- C1是卷积层,卷积层的过滤器尺寸大小为5*5,深度为6,不使用全0补充,步长为1,所以这一层的输出尺寸为32-5+1=28,深度为6。

- S2层是一个池化层,这一层的输入是C1层的输出,是一个28286的结点矩阵,过滤器大小为22,步长为2,所以本层的输出为1414*6

- C3层也是一个卷积层,本层的输入矩阵为14146,过滤器大小为55,深度为16,不使用全0补充,步长为1,故输出为1010*16

- S4层是一个池化层 ,输入矩阵大小为101016,过滤器大小为22,步长为2,故输出矩阵大小为55*16

- C5层是一个卷积层,过滤器大小为55,和全连接层没有区别,这里可看做全连接层。输入为5516矩阵,将其拉直为一个长度为55*16的向量,即将一个三维矩阵拉直到一维向量空间中,输出结点为120。

- F6层是全连接层 ,输入结点为120个,输出结点为84个

- 输出层也是全连接层,输入结点为84个,输出节点个数为10。

# coding=utf-8

import tensorflow as tf

from tensorflow.contrib.layers import flatten

# 定义LeNet网络

def LeNet(input_tensor):

# C1 conv Input=32*32*1, Output=28*28*6

conv1_w = tf.Variable(tf.truncated_normal(shape=[5, 5, 1, 6], mean=0, stddev=0.1))

conv1_b = tf.Variable(tf.zeros(6))

conv1 = tf.nn.conv2d(input_tensor, conv1_w, strides=[1, 1, 1, 1], padding='VALID')+conv1_b

conv1 = tf.nn.relu(conv1)

# S2 Pooling Input=28*28*6 Output=14*14*6

pool_1 = tf.nn.max_pool(conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='VALID')

# C3 conv Input=14*14*6 Output=10*10*6

conv2_w = tf.Variable(tf.truncated_normal(shape=[5, 5, 6, 16], mean=0, stddev=0.1))

conv2_b = tf.Variable(tf.zeros(16))

conv2 = tf.nn.conv2d(pool_1, conv2_w, strides=[1, 1, 1, 1], padding='VALID')+conv2_b

conv2 = tf.nn.relu(conv2)

# S4 Pooling Input=10*10*6 OutPut=5*5*16

pool_2 = tf.nn.max_pool(conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='VALID')

# Flatten Input=5*5*16 Output=400

fc1 = flatten(pool_2)

# C5 conv Input=5*5*16=400 Output=120

fc1_w = tf.Variable(tf.truncated_normal(shape=(400, 120), mean=0, stddev=0.1))

fc1_b = tf.Variable(tf.zeros(120))

fc1 = tf.matmul(fc1, fc1_w) + fc1_b

# F6 Input=120 OutPut=84

fc2_w = tf.Variable(tf.truncated_normal(shape=(120, 84), mean=0, stddev=0.1))

fc2_b = tf.Variable(tf.zeros(84))

fc2 = tf.matmul(fc1, fc2_w)+fc2_b

fc2 = tf.nn.relu(fc2)

# F7 Input=84 Output=10

fc3_w = tf.Variable(tf.truncated_normal(shape=(84, 10), mean=0, stddev=0.1))

fc3_b = tf.Variable(tf.zeros(10))

logits = tf.matmul(fc2, fc3_w) + fc3_b

return logits

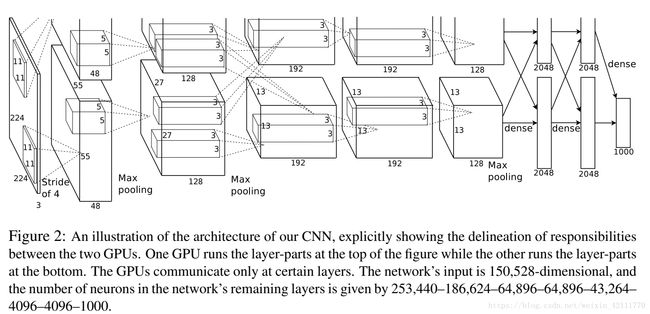

AlexNet

LeNet提出并成功地应用手写数字识别,但是很快,CNN的锋芒被SVM和手工设计的局部特征所掩盖。2012年,AlexNet在ImageNet图像分类任务竞赛中获得冠军,一鸣惊人,从此开创了深度神经网络空前的高潮。论文ImageNet Classification with Deep Convolutional Neural Networks

AlexNet优势在于:

- 使用了非线性激活函数ReLU(如果不是很了解激活函数,可以参考我的另一篇博客 激活函数Activation Function,并验证其效果在较深的网络超过Sigmoid,成功解决了Sigmoid在网络较深时的梯度弥散问题。

- 提出了LRN(Local Response Normalization),局部响应归一化,LRN一般用在激活和池化函数后,对局部神经元的活动创建竞争机制,使其中响应比较大对值变得相对更大,并抑制其他反馈较小的神经元,增强了模型的泛化能力

- 使用CUDA加速深度神经卷积网络的训练,利用GPU强大的并行计算能力,处理神经网络训练时大量的矩阵运算

- 在CNN中使用重叠的最大池化,AlexNet全部使用最大池化,避免平均池化的模糊化效果。AlexNet中提出让步长比池化核的尺寸小,这样池化层的输出之间会有重叠和覆盖,提升了特征的丰富性。

- 使用数据增广(data agumentation)和Dropout防止过拟合。【数据增广】随机地从256256的原始图像中截取224224大小的区域,相当于增加了2048倍的数据量;【Dropout】AlexNet在后面的三个全连接层中使用Dropout,随机忽略一部分神经元,以避免模型过拟合。

AlexNet总共包含8层,其中有5个卷积层和3个全连接层,有60M个参数,神经元个数为650k,分类数目为1000,LRN层出现在第一个和第二个卷积层后面,最大池化层出现在两个LRN层及最后一个卷积层后。

1. 第一层输入图像规格为2272273,过滤器(卷积核)大小为1111,深度为96,步长为4,则卷积后的输出为555596,分成两组,进行池化运算处理,过滤器大小为33,步长为2,池化后的每组输出为272748

2. 第二层的输入为272796,分成两组,为272748,填充为2,过滤器大小为55,深度为128,步长为1,则每组卷积后的输出为2727128;然后进行池化运算,过滤器大小为33,步长为2,则池化后的每组输出为1313128

3. 第三层两组中每组的输入为1313128,填充为1,过滤器尺寸为33,深度为192,步长为1,卷积后输出为1313192。**在C3这里做了通道的合并,也就是一种串联操作,所以一个卷积核卷积的不再是单张显卡上的图像,而是两张显卡的图像串在一起之后的图像,所以卷积核的厚度为256,这也是为什么图上会画两个33128的卷积核。**

4. 第四层两组中每组的输入为1313192,填充为1,过滤器尺寸为33,深度为192,步长为1,卷积后输出为1313192

5. 第五层输入为上层的两组1313192,填充为1,过滤器大小为33,深度为128,步长为1,卷积后的输出为1313128;然后进行池化处理,过滤器大小为33,步长为2,则池化后的每组输出为66128

6. 第六层为全连接层,上一层总共的输出为66256,所以这一层的输入为66256,采用66256尺寸的过滤器对输入数据进行卷积运算,每个过滤器对输入数据进行卷积运算生成一个结果,通过一个神经元输出,设置4096个过滤器,所以输出结果为4096

7. 第六层的4096个数据与第七层的4096个神经元进行全连接

8. 第八层的输入为4096,输出为1000(类别数目)

下面的代码运行在一块GPU上

def AlexNet(images):

# 图像规格227*227*3,卷积核大小为11*11,步长为4,深度为96, 输出为55*55*96

with tf.name_scope('conv_1'):

conv1_w = tf.Variable(tf.truncated_normal(shape=[11, 11, 3, 96], dtype=tf.float32, mean=0,

stddev=0.1), name='weights')

conv1_b = tf.Variable(tf.constant(value=0.0, shape=[96], dtype=tf.float32),

trainable=True, name='biases')

conv_1 = tf.nn.conv2d(images, conv1_w, strides=[1, 4, 4, 1], padding='VALID') + conv1_b

conv_1 = tf.nn.relu(conv_1, name='conv1')

# 输入55*55*96,过滤器大小为3*3,步长为2,输出为27*27*96

with tf.name_scope('lrn1_and_pooling1'):

lrn_1 = tf.nn.lrn(conv_1, 4, bias=1.0, alpha=0.001 / 9.0, beta=0.75, name='LRN_1')

pooling_1 = tf.nn.max_pool(lrn_1, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1],

padding='VALID', name='pooling_1')

# 输入为27*27*96,卷积核大小为5*5,步长为1,填充为2,深度为256,输出为27*27*256

with tf.name_scope('conv_2'):

conv2_w = tf.Variable(tf.truncated_normal(shape=[5, 5, 96, 256], dtype=tf.float32, mean=0,

stddev=0.1), name='weights')

conv2_b = tf.Variable(tf.constant(value=0.0, shape=[256], dtype=tf.float32),

trainable=True, name='biases')

conv_2 = tf.nn.conv2d(pooling_1, conv2_w, strides=[1, 1, 1, 1], padding='SAME') + conv2_b

conv_2 = tf.nn.relu(conv_2, name='conv2')

# 输入为27*27*256,过滤器大小为3*3,步长为2,输出为13*13*256

with tf.name_scope('lrn2_and_pooling2'):

lrn_2 = tf.nn.lrn(conv_2, 4, bias=1.0, alpha=0.001 / 9.0, beta=0.75, name='lrn_2')

pooling_2 = tf.nn.max_pool(lrn_2, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1],

padding='VALID', name='pooling_2')

# 输入为13*13*256,卷积核大小为3*3,步长为1,填充为1,输出为13*13*384

with tf.name_scope('conv_3'):

conv3_w = tf.Variable(tf.truncated_normal(shape=[3, 3, 256, 384], dtype=tf.float32, mean=0,

stddev=0.1), name='weights')

conv3_b = tf.Variable(tf.constant(value=0.0, shape=[384], dtype=tf.float32),

trainable=True, name='biases')

conv_3 = tf.nn.conv2d(pooling_2, conv3_w, strides=[1, 1, 1, 1], padding='SAME') + conv3_b

conv_3 = tf.nn.relu(conv_3, name='conv_3')

# 输入为13*13*384,卷积核大小为3*3,步长为1,填充为1,输出为13*13*384

with tf.name_scope('conv_4'):

conv4_w = tf.Variable(tf.truncated_normal(shape=[3, 3, 384, 384], dtype=tf.float32, mean=0,

stddev=0.1), name='weights')

conv4_b = tf.Variable(tf.constant(value=0.0, shape=[384], dtype=tf.float32), name='biases')

conv_4 = tf.nn.conv2d(conv_3, conv4_w, strides=[1, 1, 1, 1], padding='SAME') + conv4_b

conv_4 = tf.nn.relu(conv_4, name='conv_4')

# 输入为13*13*384,卷积核大小为3*3,步长为1,填充为1,输出为13*13*256

with tf.name_scope('conv_5'):

conv5_w = tf.Variable(tf.truncated_normal(shape=[3, 3, 384, 256], dtype=tf.float32, mean=0,

stddev=0.1), name='weights')

conv5_b = tf.Variable(tf.constant(value=0.0, shape=[256], dtype=tf.float32), name='biases')

conv_5 = tf.nn.conv2d(conv_4, conv5_w, strides=[1, 1, 1, 1], padding='SAME') + conv5_b

conv_5 = tf.nn.relu(conv_5, name='conv_5')

#输入为13*13*256,过滤器大小为3*3,步长为2,输出为6*6*256

with tf.name_scope('pooling_3'):

pooling_3 = tf.nn.max_pool(conv_5, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1],

padding='VALID', name='pooling_3')

print('pooling_3:', pooling_3.shape)

fc_1 = flatten(pooling_3)

dim = fc_1.shape[1].value

with tf.name_scope('full_connection_1'):

fc1_w = tf.Variable(tf.truncated_normal(shape=[dim, 4096], dtype=tf.float32, mean=0,

stddev=0.1), name='weights')

fc1_b = tf.Variable(tf.constant(value=0.0, dtype=tf.float32, shape=[4096]), name='biases')

fc1 = tf.matmul(fc_1, fc1_w) + fc1_b

with tf.name_scope('full_connection_2'):

fc2_w = tf.Variable(tf.truncated_normal(shape=[4096, 4096], dtype=tf.float32, mean=0,

stddev=0.1), name='weights')

fc2_b = tf.Variable(tf.constant(value=0.0, dtype=tf.float32, shape=[4096]), name='biases')

fc2 = tf.matmul(fc1, fc2_w) + fc2_b

with tf.name_scope('full_connection_3'):

fc3_w = tf.Variable(tf.truncated_normal(shape=[4096, 1000], dtype=tf.float32, mean=0,

stddev=0.1), name='weights')

fc3_b = tf.Variable(tf.constant(value=0.0, dtype=tf.float32, shape=[1000]), name='biases')

logits = tf.matmul(fc2, fc3_w) + fc3_b

return logits

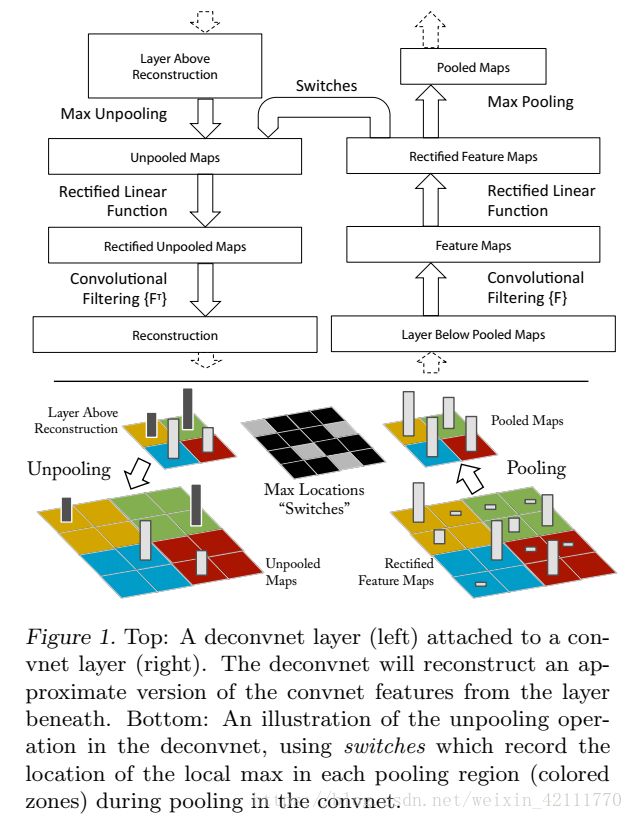

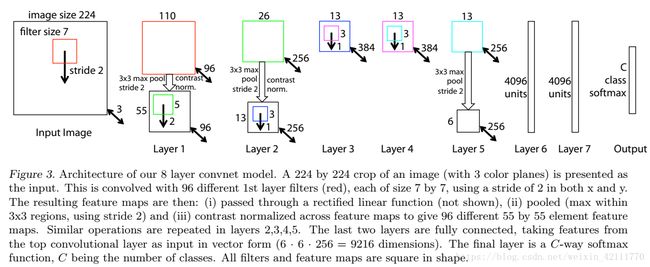

ZF Net

ZF论文 Visualizing and Understanding Convolutional Networks 最大的贡献在于通过使用可视化技术揭示了神经网络各层到底在干什么,起到了什么作用。

可视化技术依赖于反卷积操作,即卷积的逆过程,将特征映射到像素上。具体过程如下图所示:

- Unpooling:在卷积神经网络中,最大池化是不可逆的,作者采用近似的实现,使用一组转换变量switch 记录每个池化区域最大值的位置。在反池化的时候,将最大值返回到其所应该在的位置,其他位置用0补充。

- Rectification:反卷积的时候也同样利用ReLU激活函数

- Filtering:解卷积网络中利用卷积网络中相同的filter的转置应用到Rectified Unpooled Maps。也就是对filter进行水平方向和垂直方向的翻转。

可视化不仅能够看到一个训练完的模型的内部操作,而且还能够帮助改进网络结构从而提高网络性能。ZFNet模型是在AlexNet基础上进行改动,网络结构上并没有太大的突破。差异表现在, AlexNet是用两块GPU的稀疏连接结构,而ZFNet只用了一块GPU的稠密链接结构;改变了AleNet的第一层,将过滤器的大小由1111变成77,并且将步长由4变成2,使用更小的卷积核和步长,保留更多特征;将3,4,5层变成了全连接。

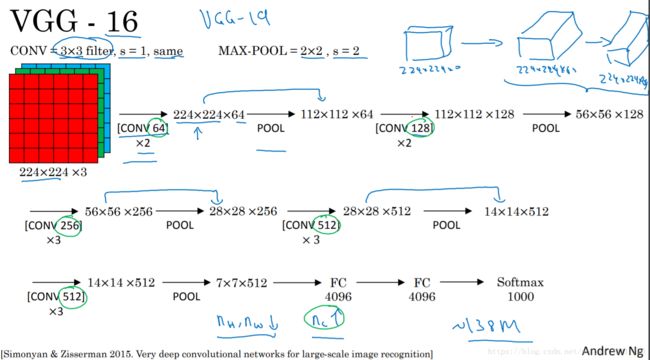

VGG Net

VGG模型是牛津大学VGG组提出的。论文为Very Deep Convolutional Networks for Large-Scale Image Recognition VGG全部使用了33的卷积核和22最大池化核通过不断加深网络结构来提神性能。采用堆积的小卷积核优于采用大的卷积核,因为多层非线性层可以增加网络深层来保证学习更复杂的模式,而且所需的参数还比较少。

- 两个堆叠的卷积层(卷积核为33)有限感受野是55,三个堆叠的卷积层(卷积核为33)的感受野为77,故可以堆叠含有小尺寸卷积核的卷积层来代替具有大尺寸的卷积核的卷积层,并且能够使得感受野大小不变,而且多个3*3的卷积核比一个大尺寸卷积核有更多的非线性(每个堆叠的卷积层中都包含激活函数),使得decision function更加具有判别性。

- 假设一个3层的33卷积层的输入和输出都有C channels,堆叠的卷积层的参数个数为 3 × ( 3 2 ⋅ C 2 ) = 27 C 2 3\times \left ( 3^{2}\cdot C^{2} \right ) = 27C^{2} 3×(32⋅C2)=27C2,而等同的一个单层的77卷积层的参数为 7 2 ⋅ C 2 = 49 C 2 7^{2} \cdot C^{2} =49C^{2} 72⋅C2=49C2

可以看到VGG-D使用了一种块结构:多次重复使用统一大小的卷积核来提取更复杂和更具有表达性的特征。VGG系列中,最多使用是VGG-16,下图来自Andrew Ng深度学习里面对VGG-16架构的描述。如图所示,在VGG-16的第三、四、五块:256、512、512个过滤器依次用来提取复杂的特征,其效果就等于一个带有3各卷积层的大型512*512大分类器。

下面陈列出VGG-16(D架构的代码),每层的输入格式和输出格式与上图一样:

import tensorflow as tf

from tensorflow.contrib.layers import xavier_initializer_conv2d

from tensorflow.contrib.layers import flatten

# 卷积层定义

def conv_op(input_op, filter_size, channel_out, step, name):

channel_in = input_op.get_shape()[-1].value

with tf.name_scope(name) as scope:

weights = tf.get_variable(shape=[filter_size, filter_size, channel_in, channel_out], dtype=tf.float32,

initializer=xavier_initializer_conv2d(), name=scope + 'weights')

biases = tf.Variable(tf.constant(value=0.0, shape=[channel_out], dtype=tf.float32),

trainable=True, name='biases')

conv = tf.nn.conv2d(input_op, weights, strides=[1, step, step, 1], padding='SAME') + biases

conv = tf.nn.relu(conv, name=scope)

return conv

# 最大池化层

def maxPool_op(input_op, filter_size, step, name):

return tf.nn.max_pool(input_op, ksize=[1, filter_size, filter_size, 1], strides=[1, step, step, 1],

padding='SAME', name=name)

# 全连接层

def full_connection(input_op, channel_out, name):

channel_in = input_op.get_shape()[-1].value

with tf.name_scope(name) as scope:

weight = tf.get_variable(shape=[channel_in, channel_out], dtype=tf.float32,

initializer=xavier_initializer_conv2d(), name=scope + 'weight')

bias = tf.Variable(tf.constant(value=0.0, shape=[channel_out], dtype=tf.float32), name='bias')

fc = tf.nn.relu_layer(input_op, weight, bias, name=scope)

return fc

# 定义VGG-16网络

def VGGNet_16(images, keep_prob):

# 第一个块结构,包括两个conv3-64

with tf.name_scope('block_1'):

conv1_1 = conv_op(images, filter_size=3, channel_out=64, step=1, name='conv1_1')

conv1_2 = conv_op(conv1_1, filter_size=3, channel_out=64, step=1, name='conv1_2')

pool1 = maxPool_op(conv1_2, filter_size=2, step=2, name='pooling_1')

# 第二个块结构,包括两个conv3-128

with tf.name_scope('block_2'):

conv2_1 = conv_op(pool1, filter_size=3, channel_out=128, step=1, name='conv2_1')

conv2_2 = conv_op(conv2_1, filter_size=3, channel_out=128, step=1, name='conv2_2')

pool2 = maxPool_op(conv2_2, filter_size=2, step=2, name='pooling_2')

# 第三个块结构,包括三个conv3-256

with tf.name_scope('block_3'):

conv3_1 = conv_op(pool2, filter_size=3, channel_out=256, step=1, name='conv3_1')

conv3_2 = conv_op(conv3_1, filter_size=3, channel_out=256, step=1, name='conv3_2')

conv3_3 = conv_op(conv3_2, filter_size=3, channel_out=256, step=1, name='conv3_3')

pool3 = maxPool_op(conv3_3, filter_size=2, step=2, name='pooling_3')

# 第四个块结构,包括三个conv3-512

with tf.name_scope('block_4'):

conv4_1 = conv_op(pool3, filter_size=3, channel_out=512, step=1, name='conv4_1')

conv4_2 = conv_op(conv4_1, filter_size=3, channel_out=512, step=1, name='conv4_2')

conv4_3 = conv_op(conv4_2, filter_size=3, channel_out=512, step=1, name='conv4_3')

pool4 = maxPool_op(conv4_3, filter_size=2, step=2, name='pooling_4')

# 第五个块结构,包括三个conv3-512

with tf.name_scope('block_5'):

conv5_1 = conv_op(pool4, filter_size=3, channel_out=512, step=1, name='conv5_1')

conv5_2 = conv_op(conv5_1, filter_size=3, channel_out=512, step=1, name='conv5_2')

conv5_3 = conv_op(conv5_2, filter_size=3, channel_out=512, step=1, name='conv5_2')

pool5 = maxPool_op(conv5_3, filter_size=2, step=2, name='pooling_5')

# flatten

fc1 = flatten(pool5)

dim = fc1.shape[1].value

with tf.name_scope('FC1_4096'):

fc1 = full_connection(fc1, channel_out=4096, name='FC1_4096')

fc1_drop = tf.nn.dropout(fc1, keep_prob=keep_prob, name='fc1_drop')

with tf.name_scope('FC2_4096'):

fc2 = full_connection(fc1_drop, channel_out=4096, name='FC1_4096')

fc2_drop = tf.nn.dropout(fc2, keep_prob=keep_prob, name='fc2_drop')

with tf.name_scope('FC_1000'):

fc3 = full_connection(fc2_drop, channel_out=1000, name='FC_1000')

logits = tf.nn.softmax(fc3)

return logits

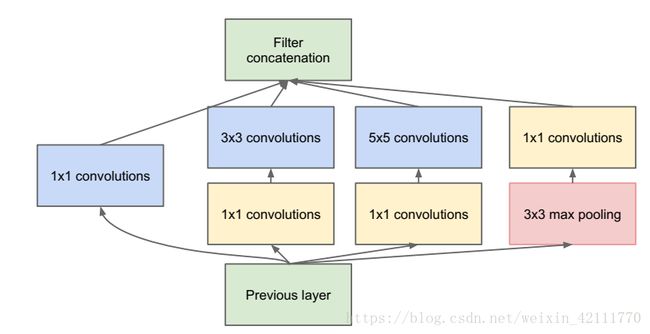

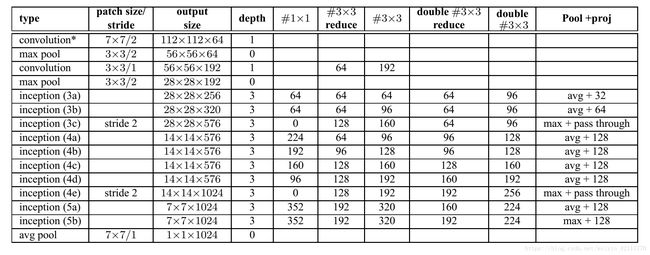

Inception

提高深度神经网络性能最直接的办法是增加它们尺寸,不仅仅包括深度(网络层数),还包括它的宽度,即每一层的单元个数。但是这种简单直接的解决方法存在两个重大的缺点,更大的网络意味着更多的参数,使得网络更加容易过拟合,而且还会导致计算资源的增大。经过多方面的思量,考虑到将稀疏矩阵聚类成相对稠密子空间来倾向于对稀疏矩阵的优化,因而提出inception结构。Google Inception Net是一个大家族,包括:

- **Inception v1结构(GoogLeNet): **论文为Going deeper with ConvolutionsGoogleNet为ILSVRC 2014比赛中的第一名,提出了Inception Module结构

- **Inception V2结构: ** 论文Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift Inception V2结构用两个33的卷积替代55的大卷机,提出了Batch Normalization(简称BN)正则化方法

- **Inception v3结构: ** 论文Rethinking the Inception Architecture for Computer Vision Inception V3 网络主要有两方面的改造:一是引入Factorization into small convolutions的思想,将较大的二维卷积拆分成两个较小的一维卷积,二是优化了Inception Module结构。

- **Inception v4结构: ** 论文Inception-ResNet and the Impact of Residual Connections on learning Inception v4结构主要结合了微软的ResNet。

Inception架构的主要思想是考虑怎样用容易获得密集组件近似覆盖卷积视觉网络的最优稀疏结构。Inception模型的基本结构如下,在多个不同尺寸的卷积核上同时进行卷积运算后再进行聚合,并使用1*1的卷积进行降维减少计算成本。 Inception Module使用了3中不同尺寸的卷积和1个最大池化,增加了网络对不同尺度的适应性。传统的卷集层的输入数据只和一种尺寸的卷积核进行运算,输出固定维度的特征数据(例如128),而在Inception模块中,输入和多个尺寸的卷积核(11,33和55)分别进行卷积运算,然后再聚合,输出的128特征中包括11卷积核的 a 1 a_{1} a1 个输出特征,33卷积核的 a 2 a_{2} a2个输出特征和55卷积核的 a 3 a_{3} a3个输出特征 ( a 1 + a 2 + a 3 = 128 ) \left(a_{1}+a_{2}+a_{3}=128\right) (a1+a2+a3=128)。Inception结构将相关性强的特征汇聚在一起,同样是128个输出特征,Inception得到的特征“冗余”的信息比较少,所以收敛速度就会更快。

GoogleNet采用9个Inception模块化的结构,共22层,除了最后一层的输出,其中间结点的分类效果也很好。还使用了辅助类结点(auxiliary classifiers),将中间某一层的输出用作分类,并按一个较小的权重加到最终分类结果中。这样相当于做了模型融合,同时给网络增加了反向传播的梯度信号,也提供了额外的正则化,对于整个网络的训练大有益处。下图是网络的结构图:

注:每一个Inception结构模块包含两层

Inception V2结构 Inception V2参考VGG,用两个33的卷积代替55的大卷积,还提出著名的Batch Normalization(BN)方法。BN是一个非常有效的正则化方法,可以让大型卷积网络的训练速度加快很多倍,同时收敛后的分类准确率也可以得到大幅提高。BN用于神经网络某层时,会对每一个mini-batch数据进行标准化(normalization)处理,使输出规范化到N(0,1)的正态分布,减少了Internal Covariate shift(内部神经元的改变) 。

单纯地使用BN获得的增益还不明显,还需要对网络做一些相应的调整:增大学习率并加快学习衰减速度;去除Dropout和LRN并减轻 L 2 L_{2} L2正则化;更彻底地对训练样本进行shuffle;减少数据增强中图片的光度失真,(BN网络训练很快,每一个样本训练样本训练次数更少,我们希望训练者关注更多真实的图片)

Inception v3结构 引入 Factorization into small convolutions的思想,将一个较大的二维卷积拆分成两个较小的一维卷积,比如将33卷积拆分成13和31卷积,一方面节约了大量参数,加速运算并减轻过拟合,同时增加了一层非线性扩展模型表达能力。这种非对称的卷积结构拆分,其结果比对称地拆为几个相同的小卷积核效果更明显,可以处理更多,更复杂的空间特征,增加特征多样性。另一方面,Inception V3优化了Inception Module结构,现在Inception Module有3535、1717和88三种不同的结构。

Inception v4结构 将Inception Module与Residual connection相结合,加速训练速度并提升性能。下面节介绍的是ResNet网络。

import tensorflow as tf

from tensorflow.contrib.layers import flatten

def Inception_Module(input, filter_1x1, fileter_3x3_reduce,

filter_3x3, filter_5x5_reduce, filter_5x5, filter_pool_proj, scope):

with tf.variable_scope(scope):

conv_1x1 = tf.layers.conv2d(inputs=input, filters=filter_1x1, kernel_size=1, padding='SAME',

activation=tf.nn.relu, name='conv_1x1')

conv_3x3_reduce = tf.layers.conv2d(inputs=input, filters=fileter_3x3_reduce, kernel_size=1, padding='SAME',

activation=tf.nn.relu, name='conv_3x3_reduce')

conv_3x3 = tf.layers.conv2d(inputs=conv_3x3_reduce, filters=filter_3x3, kernel_size=3, padding='SAME',

activation=tf.nn.relu, name='conv_3x3')

conv_5x5_reduce = tf.layers.conv2d(inputs=input, filters=filter_5x5_reduce, kernel_size=1, padding='SAME',

activation=tf.nn.relu, name='conv_5x5_reduce')

conv_5x5 = tf.layers.conv2d(inputs=conv_5x5_reduce, filters=filter_5x5, kernel_size=5, padding='SAME',

activation=tf.nn.relu, name='conv_5x5')

maxpool = tf.layers.max_pooling2d(inputs=input, pool_size=3, strides=1, padding='SAME', name='max_pool')

maxpool_proj = tf.layers.conv2d(inputs=maxpool, filters=filter_pool_proj, kernel_size=1, padding='SAME',

activation=tf.nn.relu, name='maxpool_proj')

inception_output = tf.concat((conv_1x1, conv_3x3, conv_5x5, maxpool_proj),

axis=-1, name='inception_output')

return inception_output

def GoogleNet(images):

with tf.variable_scope('GoogleNet'):

conv_1 = tf.layers.conv2d(inputs=images, filters=64, kernel_size=7, strides=2,

activation=tf.nn.relu, padding='SAME', name='conv_1')

max_pool_1 = tf.layers.max_pooling2d(inputs=conv_1, pool_size=3, strides=2,

padding='SAME', name='max_pool_1')

print('conv_1:', conv_1.shape, 'max_pool_1:', max_pool_1.shape)

conv_2 = tf.layers.conv2d(inputs=max_pool_1, filters=192, kernel_size=3, strides=1, padding='SAME',

activation=tf.nn.relu, name='conv_2')

max_pool_2 = tf.layers.max_pooling2d(inputs=conv_2, pool_size=3, strides=2, padding='SAME', name='max_pool_2')

print('conv_2:', conv_2.shape, 'max_pooling_2', max_pool_2.shape)

inception_3a = Inception_Module(max_pool_2, 64, 96, 128, 16, 32, 32, scope='inception_3a')

inception_3b = Inception_Module(inception_3a, 128, 128, 192, 32, 96, 64, scope='inception_3b')

max_pool_3 = tf.layers.max_pooling2d(inputs=inception_3b, pool_size=3, strides=2, padding='SAME', name='max_pool_3')

print('inception_3a:', inception_3a.shape, 'inception_3b:', inception_3b.shape, 'max_pool_3:', max_pool_3.shape)

inception_4a = Inception_Module(max_pool_3, 192, 96, 208, 16, 48, 64, scope='inception_4a')

inception_4b = Inception_Module(inception_4a, 160, 112, 224, 24, 64, 64, scope='inception_4b')

inception_4c = Inception_Module(inception_4b, 128, 128, 256, 24, 64, 64, scope='inception_4c')

inception_4d = Inception_Module(inception_4c, 112, 144, 288, 32, 64, 64, scope='inception_4d')

inception_4e = Inception_Module(inception_4d, 256, 160, 320, 32, 128, 128, scope='inception_4e')

max_pool_4 = tf.layers.max_pooling2d(inputs=inception_4e, pool_size=3, strides=2, padding='SAME', name='max_pool_4')

print('inception_4a', inception_4a.shape,

'inception_4b', inception_4b.shape,

'inception_4c', inception_4c.shape,

'inception_4d', inception_4d.shape,

'inception_4e', inception_4e.shape,

'max_pool_4:', max_pool_4.shape)

inception_5a = Inception_Module(max_pool_4, 256, 160, 320, 32, 128, 128, scope='inception_5a')

inception_5b = Inception_Module(inception_5a, 384, 192, 384, 48, 128, 128, scope='inception_5b')

avg_pool = tf.layers.average_pooling2d(inputs=inception_5b, pool_size=7, strides=1, padding='SAME', name='avg_pool')

print('inception_5a:', inception_5a.shape, 'inception_5b', inception_5b.shape, 'avg_pool:', avg_pool.shape)

drop_out = tf.layers.dropout(inputs=avg_pool, rate=0.4)

flattern_layer = flatten(drop_out)

print(flattern_layer.shape)

linear = tf.layers.dense(inputs=flattern_layer, units=1000, name='linear')

print(linear.shape)

logits = tf.nn.softmax(linear)

return logits

batch_size = 128

def main(argv=None):

images = tf.zeros(shape=[batch_size, 224, 224, 3], dtype=tf.float32)

GoogleNet(images)

if __name__ == '__main__':

tf.app.run()

输出结果:

conv_1: (128, 112, 112, 64), max_pool_1: (128, 56, 56, 64)

conv_2: (128, 56, 56, 192) ,max_pooling_2 (128, 28, 28, 192)

inception_3a: (128, 28, 28, 256), inception_3b: (128, 28, 28, 480) ,max_pool_3: (128, 14, 14, 480)

inception_4a (128, 14, 14, 512), inception_4b (128, 14, 14, 512), inception_4c (128, 14, 14, 512) ,inception_4d (128, 14, 14, 528) ,inception_4e (128, 14, 14, 832) ,max_pool_4: (128, 7, 7, 832)

inception_5a: (128, 7, 7, 832) ,inception_5b :(128, 7, 7, 1024), avg_pool: (128, 7, 7, 1024)

(128, 50176)

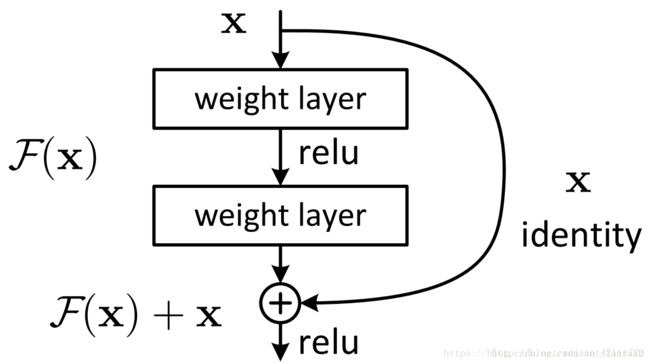

ResNet

论文Deep Residual Learning for Image Recognition

随着网络的加深,出现了训练集准确率下降,错误率上升的现象,就是所谓的“退化”问题。按理说更深的模型不应当比它浅的模型产生更高的错误率,这不是由于过拟合产生的,而是由于模型复杂时,SGD的优化变得更加困难,导致模型达不到好的学习效果。ResNet就是针对这个问题应运而生的。

ResNet,深度残差网络,基本思想是引入了能够跳过一层或多层的“shortcut connection”,如下图所示,即图中的“弯弯的弧线”。ResNet中提出了两种mapping:一种是identity mapping,另一种是residual mapping。最后的输出为y=F(x)+x,顾名思义,identity mapping指的是自身,也就是x,而residual mapping,残差,指的就是y-x=F(x)。这个简单的加法并不会给网络增加额外的参数和计算量,同时却能够大大增加模型的训练速度,提高训练效果,并且当模型的层数加深时,这个简单的结构能够很好的解决退化问题。

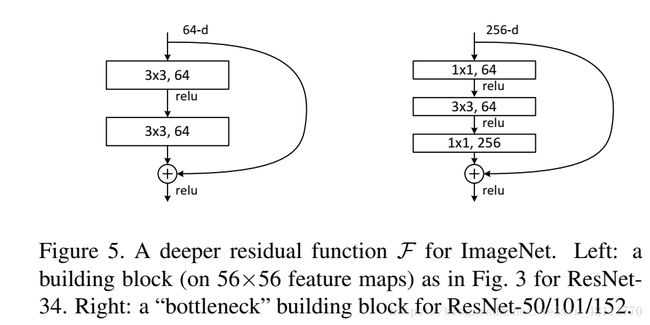

有两种基础的残差块的设计如下图:

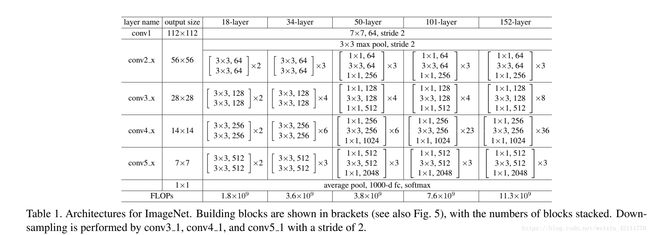

这两种结构是分别针对ResNet34和ResNet50/101/152,右边的“bottleneck design”要比左边的“building block”多了1层,增添1*1的卷积目的就是为了降低参数的数目,减少计算量。所以浅层次的网络,可使用“building block”,对于深层次的网络,为了减少计算量,bottleneck desigh 是更好的选择。

再将x添加到F(x)中,还需考虑到x的维度与F(x)维度可能不匹配的情况,论文中给出三种方案:

A: 输入输出一致的情况下,使用恒等映射,不一致的情况下,则用0填充(zero-padding shortcuts)

B: 输入输出一致时使用恒等映射,不一致时使用 projection shortcuts

C: 在两种情况下均使用 projection shortcuts

经实验验证,虽然C要稍优于B,B稍优于A,但是A/B/C之间的稍许差异对解决“退化”问题并没有多大的贡献,而且使用0填充时,不添加额外的参数,可以保证模型的复杂度更低,这对更深的网络非常有利的,因此方法C被作者舍弃。

最后,放出一张图片展示常见的ResNet网络架构的组成,如下所示:

下面以50-layer的残差网络为例,展示ResNet的网络架构:

import tensorflow as tf

# a ‘bottleneck’ building block

def bottleneck(input, channel_out, stride, scope):

channel_in = input.get_shape()[-1]

channel = channel_out / 4

with tf.variable_scope(scope):

first_layer = tf.layers.conv2d(input, filters=channel, kernel_size=1, strides=stride,

padding='SAME', activation=tf.nn.relu, name='conv1_1x1')

second_layer = tf.layers.conv2d(first_layer, filters=channel, kernel_size=3, strides=1,

padding='SAME', activation=tf.nn.relu, name='conv2_3x3')

third_layer = tf.layers.conv2d(second_layer, filters=channel_out, kernel_size=1, strides=1,

padding='SAME', name='conv3_1x1')

if channel_in != channel_out:

# projection (option B)

shortcut = tf.layers.conv2d(input, filters=channel_out, kernel_size=1,

strides=stride, name='projection')

else:

shortcut = input # identify

output = tf.nn.relu(shortcut + third_layer)

return output

# 每一个大卷积层的残差块

def residual_block(input, channel_out, stride, n_bottleneck, down_sampling, scope):

with tf.variable_scope(scope):

if down_sampling:

out = bottleneck(input, channel_out, stride=2, scope='bottleneck_1')

else:

out = bottleneck(input, channel_out, stride, scope='bottleneck_1')

for i in range(1, n_bottleneck):

out = bottleneck(out, channel_out, stride, scope='bottleneck_%i' % (i+1))

return out

# layer_50残差网络架构

def ResNet_50(images):

with tf.variable_scope('Layer_50'):

# conv_1

conv_1 = tf.layers.conv2d(images, filters=64, kernel_size=7, strides=2, padding='SAME',

activation=tf.nn.relu, name='conv1')

# conv2_x

max_pooling = tf.layers.max_pooling2d(conv_1, pool_size=3, strides=2,

padding='SAME', name='max_pooling')

conv_2 = residual_block(max_pooling, 256, stride=1, n_bottleneck=3, down_sampling=False, scope='conv2')

# conv3_x

conv_3 = residual_block(conv_2, 512, stride=1, n_bottleneck=4, down_sampling=True, scope='conv_3')

# conv4_x

conv_4 = residual_block(conv_3, 1024, stride=1, n_bottleneck=6, down_sampling=True, scope='conv4')

# conv5_x

conv_5 = residual_block(conv_4, 2048, stride=1, n_bottleneck=3, down_sampling=True, scope='conv5')

average_pooling = tf.layers.average_pooling2d(conv_5, pool_size=7, strides=1, name='avg_pooling')

full_connection = tf.layers.flatten(average_pooling)

logits = tf.nn.softmax(tf.layers.dense(full_connection, 1000, name='full_connection'))

return logits

总结

- LeNet是第一个成功应用于手写字体识别的卷积神经网络 ALexNet展示了卷积神经网络的强大性能,开创了卷积神经网络空前的高潮

- ZFNet通过可视化展示了卷积神经网络各层的功能和作用

- VGG采用堆积的小卷积核替代采用大的卷积核,堆叠的小卷积核的卷积层等同于单个的大卷积核的卷积层,不仅能够增加决策函数的判别性还能减少参数量

- GoogleNet增加了卷积神经网络的宽度,在多个不同尺寸的卷积核上进行卷积后再聚合,并使用1*1卷积降维减少参数量

- ResNet解决了网络模型的退化问题,允许神经网络更深

注:关于AlexNet,GoogleNet,VGG和ResNet网络架构的demo代码见:https://github.com/skloisMary/demo-Network.git