A Casual Talk on (BP) Neural Networks: TensorFlow and PyTorch in Practice

BP neural networks in practice

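Some time ago I studied BP (backpropagation) neural networks and used them for regression prediction. Below I describe three approaches.

Method 1: a lightly modified version of the Boston housing price prediction example. Without further ado, here is the source code. The comments are detailed, so it should be easy to follow: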

```python
# Imports
from sklearn.metrics import mean_squared_error   # mean squared error
from sklearn.metrics import mean_absolute_error  # mean absolute error
from sklearn.metrics import r2_score             # R squared
import tensorflow.compat.v1 as tf  # TF1-style graph API (placeholders, sessions); also runs on TF 2.x
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd  # reads regular-sized files quickly; high-performance, easy-to-use data structures
from sklearn.utils import shuffle  # shuffles arrays in unison and returns new, reordered copies

tf.disable_v2_behavior()  # required on TF 2.x so that placeholders and sessions work

# Number of training epochs
train_epochs = 1
# Learning rate
learning_rate = 0.01


# Read the data file
def get_data():
    mydf = pd.read_csv('temp.csv', sep=r'\s+', encoding='utf-8')
    print(mydf)
    # Convert to a float NumPy array so the in-place normalization below
    # is not truncated when a column happens to be stored as integers
    df = mydf.values.astype(np.float64)
    return df


# Data normalization
def normal(df):
    # Min-max normalize the feature columns 0-9 to the range [0, 1]
    for i in range(10):
        df[:, i] = (df[:, i] - df[:, i].min()) / (df[:, i].max() - df[:, i].min())
    # x_data: the first 10 (normalized) feature columns
    x_data = df[:, :10]
    # y_data: the last column (column 10) holds the label
    y_data = df[:, 10]
    return x_data, y_data


def model():
    # Model definition: placeholders for features and labels.
    # None in the shape means the number of rows is decided at run time, so anything
    # from single-sample SGD to mini-batch SGD works.
    # A placeholder only reserves a slot in the graph; no data is passed in yet.
    # Data is fed later through feed_dict when the graph is run in a session.
    x = tf.placeholder(tf.float32, [None, 10], name="X")  # 10 features (10 columns)
    y = tf.placeholder(tf.float32, [None, 1], name="Y")   # 1 label (1 column)

    with tf.name_scope("Model"):
        # w: weights drawn from a normal distribution with stddev 0.01
        w = tf.Variable(tf.random_normal([10, 1], stddev=0.01), name="W")
        # b: bias, initialized to 1.0
        b = tf.Variable(1.0, name="b")

        def linear(x, w, b):
            return tf.matmul(x, w) + b

        # Forward computation node: pred = xw + b
        pred = linear(x, w, b)
    print("y_pred is %s" % pred)

    # Mean squared error loss: tf.pow(., 2) squares element-wise, reduce_mean averages.
    # y is the true value, pred the prediction f(x) = xw + b.
    with tf.name_scope("LossFunction"):
        loss_function = tf.reduce_mean(tf.pow(y - pred, 2))
    print("loss_function is", loss_function)

    # Create the optimizer
    optimizer = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss_function)
    return loss_function, optimizer, pred, x, y, b, w


def run_model(loss_function, optimizer, pred, x_data, y_data, x, y, b, w):
    sess = tf.Session()
    # Op that initializes all variables
    init = tf.global_variables_initializer()

    # Prepare data for TensorBoard: log directory
    logdir = './test'
    # Scalar summary of the loss, shown under SCALARS in TensorBoard
    # (tf.summary.scalar is typically used for loss/accuracy curves)
    sum_loss_op = tf.summary.scalar("loss", loss_function)
    # Merge all summaries so they can be written in one go
    merged = tf.summary.merge_all()

    sess.run(init)
    # Summary writer; it also writes the graph, shown under GRAPHS in TensorBoard
    writer = tf.summary.FileWriter(logdir, sess.graph)

    loss_list = []    # average loss of each epoch
    b0 = []           # value of b after each epoch
    Add_train_y = []  # training RMSE after each epoch
    step = 0          # global step for TensorBoard

    for epoch in range(train_epochs):
        loss_sum = 0.0
        # One gradient-descent step per sample (stochastic gradient descent)
        for xs, ys in zip(x_data, y_data):
            xs = xs.reshape(1, 10)  # one row, ten columns
            ys = ys.reshape(1, 1)   # one row, one column
            # _ discards the return value of the optimizer op
            _, summary_str, loss = sess.run([optimizer, sum_loss_op, loss_function],
                                            feed_dict={x: xs, y: ys})
            writer.add_summary(summary_str, step)
            step += 1
            loss_sum = loss_sum + loss

        # Shuffle the samples before the next epoch
        x_data, y_data = shuffle(x_data, y_data)

        # eval() evaluates a variable in the given session and returns its value
        b0temp = b.eval(session=sess)  # current bias b
        w0temp = w.eval(session=sess)  # current weights w
        loss_average = loss_sum / len(y_data)  # average loss of this epoch
        b0.append(b0temp)
        loss_list.append(loss_average)
        print("epoch=", epoch + 1, "loss=", loss_average, "b=", b0temp, "w=", w0temp)

        # Training RMSE: square root of sklearn's mean_squared_error.
        # feed_dict assigns values to placeholders (more generally it temporarily replaces
        # the output of any op) for the duration of this run() call only.
        Add_train_y.append(np.sqrt(mean_squared_error(y_data, sess.run(pred, feed_dict={x: x_data}))))
    print("Add_train_y is %s" % Add_train_y)
    writer.close()
    return loss_list, pred, Add_train_y, sess


def plot_all(loss_list, x_data, y_data, pred, Add_train_y, sess, x, y):
    # Loss curve, one point per epoch
    plt.plot(loss_list)

    # Apply the model to one sample
    # n = np.random.randint(6)
    n = 1
    x_test = x_data[n].reshape(1, 10)
    print("x_test is %s" % x_test)
    predict = sess.run(pred, feed_dict={x: x_test})
    print("Predicted value: %f" % predict[0, 0])
    target = y_data[n]
    print("Label value: %f" % target)
    plt.show()

    # Predicted vs. true values: points close to the diagonal indicate a good fit
    test_predictions = sess.run(pred, feed_dict={x: x_data})
    plt.scatter(y_data, test_predictions)
    plt.xlabel('True Values')
    plt.ylabel('Predictions')
    plt.axis('equal')
    plt.xlim(plt.xlim())
    plt.ylim(plt.ylim())
    _ = plt.plot([-100, 100], [-100, 100])
    plt.show()

    # Training RMSE per epoch
    plt.figure()
    plt.xlabel('Epoch')
    plt.ylabel('Root Mean Squared Error')
    plt.plot(np.arange(1, len(Add_train_y) + 1), Add_train_y, label='RMSE')
    plt.legend()
    plt.ylim([0, 10])
    plt.show()


def main():
    df = get_data()
    x_data, y_data = normal(df)
    print("X_data is %s, y_data is %s" % (x_data, y_data))
    # loss_function is the mean squared error
    loss_function, optimizer, pred, x, y, b, w = model()
    loss_list, pred, Add_train_y, sess = run_model(loss_function, optimizer, pred, x_data, y_data, x, y, b, w)
    plot_all(loss_list, x_data, y_data, pred, Add_train_y, sess, x, y)


if __name__ == '__main__':
    main()
```
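The Method 1 graph has no hidden layer. It is a single linear layer, pred = xw + b, trained with one gradient-descent step per sample on the squared error. The framework-free sketch below illustrates what each `sess.run([optimizer, ...])` call does. The function name `sgd_linear_regression` and the synthetic data in the usage note are made up for illustration. The sketch is not the author's code.

```python
import random


def sgd_linear_regression(xs, ys, learning_rate=0.01, epochs=1, seed=0):
    """Per-sample gradient descent on the squared error (y - (w.x + b))**2."""
    rng = random.Random(seed)
    # Same initialization idea as the TF graph: small random weights, bias 1.0
    w = [rng.gauss(0.0, 0.01) for _ in range(len(xs[0]))]
    b = 1.0
    data = list(zip(xs, ys))
    losses = []
    for _ in range(epochs):
        loss_sum = 0.0
        for x, y in data:
            pred = sum(wi * xi for wi, xi in zip(w, x)) + b
            err = pred - y
            loss_sum += err * err
            # d/dw (pred - y)^2 = 2 * (pred - y) * x ;  d/db (pred - y)^2 = 2 * (pred - y)
            w = [wi - learning_rate * 2 * err * xi for wi, xi in zip(w, x)]
            b -= learning_rate * 2 * err
        losses.append(loss_sum / len(data))  # average loss of the epoch
        rng.shuffle(data)  # reshuffle before the next epoch, like sklearn's shuffle above
    return w, b, losses
```

For example, with noise-free data generated from y = 2*x0 - x1 + 0.5 on a grid in [0, 1]², a learning rate of 0.1 and 200 epochs recover the weights and bias closely.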

The experimental results are as follows:
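The script imports `mean_squared_error`, `mean_absolute_error` and `r2_score`, but it only uses the first one, to plot the RMSE. A fit can also be summarized with all three numbers. The plain-Python sketch below spells out the standard definitions those sklearn functions implement. The helper name `regression_metrics` is hypothetical. R² is undefined when all labels are equal, because the denominator is then zero.

```python
import math


def regression_metrics(y_true, y_pred):
    """RMSE, MAE and R^2 for two equally long sequences of numbers."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    sse = sum(e * e for e in errors)             # sum of squared errors
    mae = sum(abs(e) for e in errors) / n        # mean absolute error
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    return {"rmse": math.sqrt(sse / n), "mae": mae, "r2": 1 - sse / ss_tot}
```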

Method 2: TensorFlow with regularization.
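Method 2 adds a penalty on the weight matrices to the sum of squared errors. Only the `w` matrices are penalized, not the biases. The sketch below writes out the two penalties in plain Python, following the definitions of TensorFlow's `l1_regularizer` (rate times the sum of |w|) and `l2_regularizer` (rate times `tf.nn.l2_loss(w)`, i.e. rate times the sum of w² divided by 2). The function names are illustrative, and weights are modeled as nested lists.

```python
def l1_penalty(weight_matrices, rate):
    # rate * sum(|w|) over every weight of every layer
    return rate * sum(abs(v) for m in weight_matrices for row in m for v in row)


def l2_penalty(weight_matrices, rate):
    # rate * sum(w ** 2) / 2, the same scaling as tf.nn.l2_loss
    return rate * sum(v * v for m in weight_matrices for row in m for v in row) / 2


def total_loss(y_true, y_pred, weight_matrices, regularization="L2", rate=0.7):
    data_loss = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))  # sum of squared errors
    if regularization == "None":
        return data_loss
    if regularization == "L1":
        return data_loss + l1_penalty(weight_matrices, rate)
    if regularization == "L2":
        return data_loss + l2_penalty(weight_matrices, rate)
    raise ValueError("unknown regularization: %s" % regularization)
```

With `regularization_rate = 0.7`, as in the script, the penalty can easily dominate the data term when the data term is small. The two terms are worth printing separately while tuning.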

import tensorflow as tf

import pandas as pd

import numpy as np

createVar = locals()

'''

建立一个网络结构可变的BP神经网络通用代码:

在训练时各个参数的意义:

hidden_floors_num:隐藏层的个数

every_hidden_floor_num:每层隐藏层的神经元个数

learning_rate:学习速率

activation:激活函数

regularization:正则化方式

regularization_rate:正则化比率

total_step:总的训练次数

train_data_path:训练数据路径

model_save_path:模型保存路径

利用训练好的模型对验证集进行验证时各个参数的意义:

model_save_path:模型保存路径

validate_data_path:验证集路径

precision:精度

利用训练好的模型进行预测时各个参数的意义:

model_save_path:模型的保存路径

predict_data_path:预测数据路径

predict_result_save_path:预测结果保存路径

'''

# 训练模型全局参数

hidden_floors_num = 1

every_hidden_floor_num = [50]

learning_rate = 0.00001

activation = 'tanh'

regularization = 'L2'

regularization_rate = 0.7

total_step = 100000

train_data_path = './sum.csv'

model_save_path = './model/predict_model'

# 利用模型对验证集进行验证返回正确率

# model_save_path = 'C:/Users/zhangxing/Desktop/BP_regression/model/predict_model'

# validate_data_path = 'C:/Users/zhangxing/Desktop/BP_regression/validate.csv'

precision = 0.5

# 利用模型进行预测全局参数

model_save_path = './model/predict_model'

predict_data_path = './data2.csv'

predict_result_save_path = './result.csv'

def inputs(train_data_path):

train_data = pd.read_csv(train_data_path)

# train_data= train_data.replace(to_replace=r'^\s*$',value=np.nan,regex=True,inplace=True)

# train_data=pd.DataFrame(train_data)

# train_data=train_data.strip()

print(train_data)

#X是前面的每行的三个数,y是最后一个的预测值

X = np.array(train_data.iloc[:, :-1])

Y = np.array(train_data.iloc[:, -1:])

return X, Y

def make_hidden_layer(pre_lay_num, cur_lay_num, floor):

createVar['w' + str(floor)] = tf.Variable(tf.random_normal([pre_lay_num, cur_lay_num], stddev=1))

createVar['b' + str(floor)] = tf.Variable(tf.random_normal([cur_lay_num], stddev=1))

return eval('w'+str(floor)), eval('b'+str(floor))

def initial_w_and_b(all_floors_num):

# 初始化隐藏层的w, b

for floor in range(2, hidden_floors_num+3):

pre_lay_num = all_floors_num[floor-2]

cur_lay_num = all_floors_num[floor-1]

w_floor, b_floor = make_hidden_layer(pre_lay_num, cur_lay_num, floor)

createVar['w' + str(floor)] = w_floor

createVar['b' + str(floor)] = b_floor

def cal_floor_output(x, floor):

w_floor = eval('w'+str(floor))

b_floor = eval('b'+str(floor))

if activation == 'sigmoid':

output = tf.sigmoid(tf.matmul(x, w_floor) + b_floor)

if activation == 'tanh':

output = tf.tanh(tf.matmul(x, w_floor) + b_floor)

if activation == 'relu':

output = tf.nn.relu(tf.matmul(x, w_floor) + b_floor)

return output

def inference(x):

output = x

for floor in range(2, hidden_floors_num+2):

output = cal_floor_output(output, floor)

floor = hidden_floors_num+2

w_floor = eval('w'+str(floor))

b_floor = eval('b'+str(floor))

output = tf.matmul(output, w_floor) + b_floor

return output

#计算total-loss值

#默认l1正则化

def loss(x, y_real):

y_pre = inference(x)

if regularization == 'None':

total_loss = tf.reduce_sum(tf.squared_difference(y_real, y_pre))

if regularization == 'L1':

total_loss = 0

for floor in range(2, hidden_floors_num + 3):

w_floor = eval('w' + str(floor))

total_loss = total_loss + tf.contrib.layers.l1_regularizer(regularization_rate)(w_floor)

# total_loss = total_loss + tf.reduce_sum(tf.squared_difference(y_real, y_pre))

total_loss = total_loss + tf.reduce_sum(tf.squared_difference(tf.clip_by_value(y_real,1e-8,tf.reduce_max(y_real)), tf.clip_by_value(y_pre,1e-8,tf.reduce_max(y_pre))))

if regularization == 'L2':

total_loss = 0

for floor in range(2, hidden_floors_num + 3):

w_floor = eval('w' + str(floor))

total_loss = total_loss + tf.contrib.layers.l2_regularizer(regularization_rate)(w_floor)

total_loss = total_loss + tf.reduce_sum(tf.squared_difference(tf.clip_by_value(y_real,1e-8,tf.reduce_max(y_real)), tf.clip_by_value(y_pre,1e-8,tf.reduce_max(y_pre))))

return total_loss

def train(total_loss):

train_op = tf.train.GradientDescentOptimizer(learning_rate).minimize(total_loss)

return train_op

# 训练模型

def train_model(hidden_floors_num, every_hidden_floor_num, learning_rate, activation, regularization,

regularization_rate, total_step, train_data_path, model_save_path):

X, Y = inputs(train_data_path)

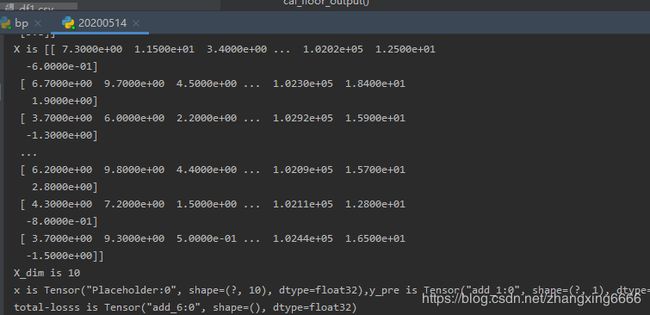

print("X is %s,Y is %s"%(X,Y))

#X_dim是指有几个特征,这里是三个,x1,x2,x3

print("X is %s"%(X))

X_dim = X.shape[1]

print("X_dim is %s"%(X_dim))

all_floors_num = [X_dim] + every_hidden_floor_num + [1]

# 将参数保存到和model_save_path相同的文件夹下, 恢复模型进行预测时加载这些参数创建神经网络

temp = model_save_path.split('/')

model_name = temp[-1]

parameter_path = ''

for i in range(len(temp)-1):

parameter_path = parameter_path + temp[i] + '/'

parameter_path = parameter_path + model_name + '_parameter.txt'

with open(parameter_path, 'w') as f:

f.write("all_floors_num:")

for i in all_floors_num:

f.write(str(i) + ' ')

f.write('\n')

f.write('activation:')

f.write(str(activation))

#占位

x = tf.placeholder(dtype=tf.float32, shape=[None, X_dim])

y_real = tf.placeholder(dtype=tf.float32, shape=[None, 1])

initial_w_and_b(all_floors_num)

y_pre = inference(x)

print("x is %s,y_pre is %s,y_real is %s"%(x,y_pre,y_real))

total_loss = loss(x, y_real)

print("total-losss is %s"%(total_loss))

#得到随机梯度值

train_op = train(total_loss)

print("train_op is %s"%(train_op))

# 记录在训练集上的正确率

train_accuracy = tf.reduce_mean(tf.cast(tf.abs(y_pre - y_real) < precision, tf.float32))

# 保存模型

saver = tf.train.Saver()

# 在一个会话对象中启动数据流图,搭建流程

sess = tf.Session()

init = tf.global_variables_initializer()

sess.run(init)

for step in range(total_step):

sess.run([train_op], feed_dict={x: X[0:, :], y_real: Y[0:, :]})

if step % 1000 == 0:

saver.save(sess, model_save_path)

total_loss_value = sess.run(total_loss, feed_dict={x: X[0:, :], y_real: Y[0:, :]})

print('train step is ', step, ', total loss value is ', total_loss_value,

', train_accuracy', sess.run(train_accuracy, feed_dict={x: X, y_real: Y}),

', precision is ', precision)

saver.save(sess, model_save_path)

print("训练已经完成")

sess.close()

# def validate(model_save_path, validate_data_path, precision):

# # **********************根据model_save_path推出模型参数路径, 解析出all_floors_num和activation****************

# temp = model_save_path.split('/')

# model_name = temp[-1]

# parameter_path = ''

# for i in range(len(temp)-1):

# parameter_path = parameter_path + temp[i] + '/'

# parameter_path = parameter_path + model_name + '_parameter.txt'

# with open(parameter_path, 'r') as f:

# lines = f.readlines()

#

# # 从读取的内容中解析all_floors_num

# temp = lines[0].split(':')[-1].split(' ')

# all_floors_num = []

# for i in range(len(temp)-1):

# all_floors_num = all_floors_num + [int(temp[i])]

#

# # 从读取的内容中解析activation

# activation = lines[1].split(':')[-1]

# hidden_floors_num = len(all_floors_num) - 2

#

# # **********************读取验证数据*************************************

# X, Y = inputs(validate_data_path)

# X_dim = X.shape[1]

#

# # **********************创建神经网络************************************

# x = tf.placeholder(dtype=tf.float32, shape=[None, X_dim])

# y_real = tf.placeholder(dtype=tf.float32, shape=[None, 1])

# initial_w_and_b(all_floors_num)

# y_pre = inference(x)

#

# # 记录在验证集上的正确率

# validate_accuracy = tf.reduce_mean(tf.cast(tf.abs(y_pre - y_real) < precision, tf.float32))

#

# sess = tf.Session()

# saver = tf.train.Saver()

# with tf.Session() as sess:

# # 读取模型

# try:

# saver.restore(sess, model_save_path)

# print('模型载入成功!')

# except:

# print('模型不存在,请先训练模型!')

# return

# validate_accuracy_value = sess.run(validate_accuracy, feed_dict={x: X, y_real: Y})

# print('validate_accuracy is ', validate_accuracy_value)

#

# return validate_accuracy_value

def predict(model_save_path, predict_data_path, predict_result_save_path):

# **********************根据model_save_path推出模型参数路径, 解析出all_floors_num和activation****************

temp = model_save_path.split('/')

model_name = temp[-1]

parameter_path = ''

for i in range(len(temp)-1):

parameter_path = parameter_path + temp[i] + '/'

parameter_path = parameter_path + model_name + '_parameter.txt'

with open(parameter_path, 'r') as f:

lines = f.readlines()

# 从读取的内容中解析all_floors_num

temp = lines[0].split(':')[-1].split(' ')

all_floors_num = []

for i in range(len(temp)-1):

all_floors_num = all_floors_num + [int(temp[i])]

# 从读取的内容中解析activation

activation = lines[1].split(':')[-1]

hidden_floors_num = len(all_floors_num) - 2

# **********************读取预测数据*************************************

predict_data = pd.read_csv(predict_data_path)

X = np.array(predict_data.iloc[:, :])

X_dim = X.shape[1]

# **********************创建神经网络************************************

x = tf.placeholder(dtype=tf.float32, shape=[None, X_dim])

initial_w_and_b(all_floors_num)

y_pre = inference(x)

sess = tf.Session()

saver = tf.train.Saver()

with tf.Session() as sess:

# 读取模型

try:

saver.restore(sess, model_save_path)

print('模型载入成功!')

except:

print('模型不存在,请先训练模型!')

return

y_pre_value = sess.run(y_pre, feed_dict={x: X[0:, :]})

# 将预测结果写入csv文件

predict_data_columns = list(predict_data.columns) + ['predict']

data = np.column_stack([X, y_pre_value])

result = pd.DataFrame(data, columns=predict_data_columns)

result.to_csv(predict_result_save_path, index=False)

print('预测结果保存在:', predict_result_save_path)

if __name__ == '__main__':

mode = 'train'

if mode == 'train':

# 训练模型

train_model(hidden_floors_num, every_hidden_floor_num, learning_rate, activation, regularization,

regularization_rate, total_step, train_data_path, model_save_path)

# if mode == 'validate':

# # 利用模型对验证集进行正确性测试

# validate(model_save_path, validate_data_path, precision)

if mode == 'predict':

# 利用模型进行预测

predict(model_save_path, predict_data_path, predict_result_save_path)

实验结果
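`inference()` builds the network from `all_floors_num`, e.g. `[3, 50, 1]`. Every hidden layer applies the chosen activation and the output layer stays linear. `train_accuracy` counts a prediction as correct when it lies within `precision` of the label. The sketch below reproduces both ideas in plain Python with nested lists, so the shapes can be checked by hand. All names here (`dense`, `forward`, `layer_shapes`, `accuracy_within`) are illustrative and do not come from the script.

```python
import math

ACTIVATIONS = {
    "sigmoid": lambda z: 1 / (1 + math.exp(-z)),
    "tanh": math.tanh,
    "relu": lambda z: max(0.0, z),
}


def layer_shapes(all_floors_num):
    # [3, 50, 1] -> [(3, 50), (50, 1)]: the (input, output) size of each weight matrix
    return list(zip(all_floors_num[:-1], all_floors_num[1:]))


def dense(x, w, b):
    # x @ w + b, with w stored as [len(x)][units] and b as [units]
    return [sum(xi * w[i][j] for i, xi in enumerate(x)) + b[j] for j in range(len(b))]


def forward(x, layers, activation="tanh"):
    # layers: list of (w, b); every layer except the last uses the activation
    act = ACTIVATIONS[activation]
    out = x
    for w, b in layers[:-1]:
        out = [act(z) for z in dense(out, w, b)]
    w, b = layers[-1]
    return dense(out, w, b)  # linear output layer


def accuracy_within(y_true, y_pred, precision=0.5):
    # Share of predictions whose absolute error is below `precision`
    hits = sum(1 for t, p in zip(y_true, y_pred) if abs(p - t) < precision)
    return hits / len(y_true)
```

Because this "accuracy" depends on the scale of the labels, the same `precision = 0.5` can be strict for one dataset and loose for another.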

Method 3: PyTorch in practice

dataset.py

```python
from torch.utils.data import Dataset, DataLoader
import pandas as pd
import numpy as np
import torch


class MyDataset(Dataset):
    def __init__(self, data_dir):
        # Whitespace-separated file: 10 feature columns followed by 1 label column
        df = pd.read_csv(data_dir, sep=r'\s+')
        # Float array, so the normalization below is not truncated for integer columns
        datas = df.to_numpy(dtype=np.float32)
        # Min-max normalize each of the 10 feature columns to [0, 1]
        for i in range(10):
            col = datas[:, i]
            col_max = np.max(col)
            col_min = np.min(col)
            datas[:, i] = (col - col_min) / (col_max - col_min)
        self.xs = torch.from_numpy(np.array(datas[:, :10], dtype=np.float32))
        self.ys = torch.from_numpy(np.array(datas[:, 10:], dtype=np.float32))

    def __len__(self):
        return len(self.ys)

    def __getitem__(self, item):
        return self.xs[item], self.ys[item]


mydataset = MyDataset('temp.csv')
# Mini-batches of 2000 samples, reshuffled every epoch
train_Dataloder = DataLoader(dataset=mydataset, batch_size=2000, shuffle=True)

if __name__ == '__main__':
    # Quick check: print every label
    for x, y in mydataset:
        print(y)
```
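Both `MyDataset` and `normal()` in Method 1 compute the minimum and maximum over the whole file. A constant column then yields 0/0, which is NaN in NumPy. New data must be scaled with the training minimum and maximum, not with its own. The plain-Python sketch below separates "fit" from "apply" and maps constant columns to 0.0. The helper names and the 0.0 convention are assumptions made for illustration.

```python
def fit_min_max(rows, n_features):
    # Column-wise minimum and maximum of the first n_features columns
    mins = [min(r[i] for r in rows) for i in range(n_features)]
    maxs = [max(r[i] for r in rows) for i in range(n_features)]
    return mins, maxs


def apply_min_max(rows, mins, maxs):
    out = []
    for r in rows:
        scaled = []
        for i, (lo, hi) in enumerate(zip(mins, maxs)):
            span = hi - lo
            # Constant column: avoid 0/0 by mapping every value to 0.0
            scaled.append((r[i] - lo) / span if span != 0 else 0.0)
        out.append(scaled + list(r[len(mins):]))  # label columns are left unscaled
    return out
```

Values outside the training range map outside [0, 1]. For example, 20 on a column whose training range was 0 to 10 becomes 2.0. That is expected behaviour, not an error.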

train.py

```python
import torch
from torch import nn, optim

from regress.dataset import train_Dataloder  # the DataLoader defined in dataset.py


class Net(nn.Module):
    def __init__(self):
        super().__init__()
        # Three Linear -> BatchNorm1d -> LeakyReLU blocks: 10 -> 32 -> 64 -> 128
        self.feature = nn.Sequential(
            nn.Linear(in_features=10, out_features=32),
            nn.BatchNorm1d(32),
            nn.LeakyReLU(),
            nn.Linear(in_features=32, out_features=64),
            nn.BatchNorm1d(64),
            nn.LeakyReLU(),
            nn.Linear(in_features=64, out_features=128),
            nn.BatchNorm1d(128),
            nn.LeakyReLU(),
        )
        # Linear output layer producing one regression value
        self.output = nn.Linear(128, 1)

    def forward(self, x):
        feature = self.feature(x)
        output = self.output(feature)
        return output


if __name__ == '__main__':
    # Use the GPU when available, otherwise fall back to the CPU
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
    net = Net().to(device)
    loss_func = nn.MSELoss()
    opt = optim.Adam(net.parameters(), lr=0.0001)
    for epoch in range(1000):
        net.train()
        for index, (xs, ys) in enumerate(train_Dataloder):
            xs = xs.to(device)
            ys = ys.to(device)
            output = net(xs)
            loss = loss_func(output, ys)
            opt.zero_grad()
            loss.backward()
            opt.step()
        print(epoch, loss.item())
```
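Each `nn.BatchNorm1d` layer in `Net` normalizes every feature over the current mini-batch during training. It subtracts the batch mean, divides by sqrt(biased variance + eps) with eps = 1e-5 by default, then applies a learnable scale and shift that start at 1 and 0. The plain-Python sketch below, under those defaults, shows why a batch holding a single sample carries no information. Every output becomes 0, and PyTorch refuses such a batch in training mode. For prediction the network should be switched to `net.eval()` so that the running statistics are used. The function name is illustrative.

```python
import math


def batch_norm_train(batch, eps=1e-5, gamma=None, beta=None):
    """Training-mode batch normalization of a list of equally long rows."""
    n, d = len(batch), len(batch[0])
    gamma = gamma if gamma is not None else [1.0] * d  # learnable scale, initialized to 1
    beta = beta if beta is not None else [0.0] * d     # learnable shift, initialized to 0
    out = [[0.0] * d for _ in range(n)]
    for j in range(d):
        col = [row[j] for row in batch]
        mean = sum(col) / n
        var = sum((v - mean) ** 2 for v in col) / n  # biased variance over the batch
        for i, v in enumerate(col):
            out[i][j] = gamma[j] * (v - mean) / math.sqrt(var + eps) + beta[j]
    return out
```

With `batch_size=2000`, a final batch of exactly one sample would only occur when the dataset size leaves a remainder of 1. Passing `drop_last=True` to `DataLoader` avoids it, at the cost of skipping the last partial batch.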

If you need the dataset, feel free to email me:

Github
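To try Method 1 or the PyTorch code without the original data, a synthetic file in the layout the code expects may help. That layout is a whitespace-separated `temp.csv` with a header line, then 10 feature columns and 1 label column per row. The sketch below generates one. The column names, value ranges and the linear relationship behind the label are all made-up assumptions. The file is only useful for checking that the pipeline runs and says nothing about the author's results.

```python
import random


def synthetic_rows(n_rows=100, seed=0):
    """Lines of a hypothetical temp.csv: a header, then 10 features and 1 label per row."""
    rng = random.Random(seed)
    # Made-up linear relationship, only so that the label is learnable
    true_w = [rng.uniform(-1.0, 1.0) for _ in range(10)]
    lines = [" ".join([f"x{i}" for i in range(10)] + ["y"])]
    for _ in range(n_rows):
        x = [rng.uniform(0.0, 100.0) for _ in range(10)]
        y = sum(w * v for w, v in zip(true_w, x)) + rng.gauss(0.0, 1.0)
        lines.append(" ".join(f"{v:.6f}" for v in x + [y]))
    return lines


def write_synthetic_temp_csv(path="temp.csv", n_rows=100, seed=0):
    with open(path, "w", encoding="utf-8") as f:
        f.write("\n".join(synthetic_rows(n_rows, seed)) + "\n")
```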