TensorFlow分布式训练:单机多卡训练MirroredStrategy、多机训练MultiWorkerMirroredStrategy

日萌社

人工智能AI:Keras PyTorch MXNet TensorFlow PaddlePaddle 深度学习实战(不定时更新)

ResNet模型在GPU上的并行实践

TensorFlow分布式训练:单机多卡训练MirroredStrategy、多机训练MultiWorkerMirroredStrategy

4.8 分布式训练

当我们拥有大量计算资源时,通过使用合适的分布式策略,我们可以充分利用这些计算资源,从而大幅压缩模型训练的时间。针对不同的使用场景,TensorFlow 在 tf.distribute.Strategy`中为我们提供了若干种分布式策略,使得我们能够更高效地训练模型。

4.8.1 TensorFlow 分布式的分类

-

图间并行(又称数据并行)

- 每个机器上都会有一个完整的模型,将数据分散到各个机器,分别计算梯度。

-

图内并行(又称模型并行)

- 每个机器分别负责整个模型的一部分计算任务。

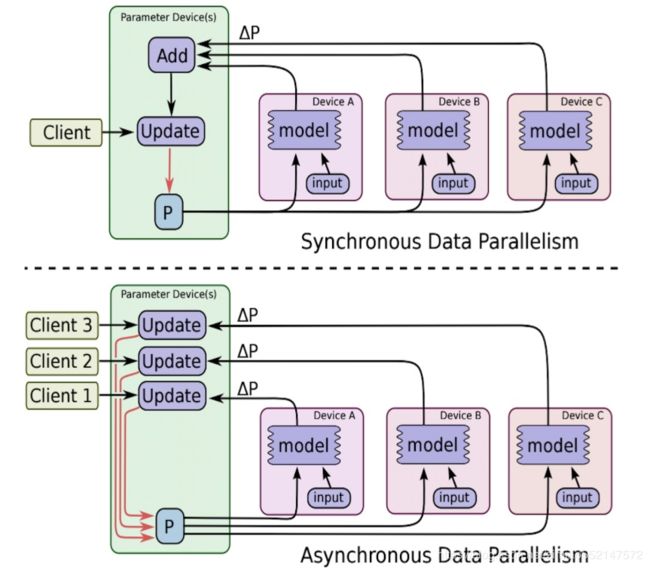

1、图间并行用的非常多,会包含两种方式进行更新

同步:收集到足够数量的梯度,一同更新,下图上方

异步:即同步方式下,更新需求的梯度数量为1,下图下方

注:PS(parameter server):维护全局共享的模型参数的服务器

2、实现方式

- 单机多卡

- 多机单卡

TensorFlow多机多卡实现思路。多级多卡的分布式有很多实现方式,比如:

1. 将每个GPU当做一个worker

2. 同一个机器的各个GPU进行图内并行

3. 同一个机器的各个GPU进行图间并行

比如说第三种:模型实现封装成函数、将数据分成GPU数量的份数、在每个GPU下,进行一次模型forward计算,并使用优化器算出梯度、reduce每个GPU下的梯度,并将梯度传入到分布式中的优化器中

4.8.1 单机多卡训练: MirroredStrategy

tf.distribute.MirroredStrategy 是一种简单且高性能的,数据并行的同步式分布式策略,主要支持多个 GPU 在同一台主机上训练。

-

1、MirroredStrategy运行原理:

- 1、训练开始前,该策略在所有 N 个计算设备(GPU)上均各复制一份完整的模型

- 2、每次训练传入一个批次的数据时,将数据分成 N 份,分别传入 N 个计算设备(即数据并行)

- 3、使用分布式计算的 All-reduce 操作,在计算设备间高效交换梯度数据并进行求和,使得最终每个设备都有了所有设备的梯度之和,使用梯度求和的结果更新本地变量

- 当所有设备均更新本地变量后,进行下一轮训练(即该并行策略是同步的)。默认情况下,TensorFlow 中的 MirroredStrategy 策略使用 NVIDIA NCCL 进行 All-reduce 操作。

-

2、构建代码步骤:

1、使用这种策略时,我们只需实例化一个 MirroredStrategy 策略:

strategy = tf.distribute.MirroredStrategy()

2、并将模型构建的代码放入 strategy.scope() 的上下文环境中:

with strategy.scope():

# 模型构建代码

或者可以在参数中指定设备,如:

strategy = tf.distribute.MirroredStrategy(devices=["/gpu:0", "/gpu:1"])即指定只使用第 0、1 号 GPU 参与分布式策略。

4.8.1.1 MirroredStrategy进行分类模型训练

以下代码展示了使用 MirroredStrategy 策略,在 TensorFlow Datasets 中的部分图像数据集上使用 Keras 训练 MobileNetV2 的过程:

import tensorflow as tf

import tensorflow_datasets as tfds

num_epochs = 5

batch_size_per_replica = 64

learning_rate = 0.001

strategy = tf.distribute.MirroredStrategy()

print('Number of devices: %d' % strategy.num_replicas_in_sync) # 输出设备数量

batch_size = batch_size_per_replica * strategy.num_replicas_in_sync

# 载入数据集并预处理

def resize(image, label):

image = tf.image.resize(image, [224, 224]) / 255.0

return image, label

# 当as_supervised为True时,返回image和label两个键值

dataset = tfds.load("cats_vs_dogs", split=tfds.Split.TRAIN, as_supervised=True)

dataset = dataset.map(resize).shuffle(1024).batch(batch_size)

with strategy.scope():

model = tf.keras.applications.MobileNetV2()

model.compile(

optimizer=tf.keras.optimizers.Adam(learning_rate=learning_rate),

loss=tf.keras.losses.sparse_categorical_crossentropy,

metrics=[tf.keras.metrics.sparse_categorical_accuracy]

)

model.fit(dataset, epochs=num_epochs)

这个是在线下测试的结果

-

epoch=5,batch_size=64

-

2块 NVIDIA GeForce GTX 1080 Ti 显卡进行单机多卡的模型训练。

| 数据集 | 单机无分布式(Batch Size 为 64) | 单机多卡(总 Batch Size 为 64) | 单机多卡(总 Batch Size 为 256) |

|---|---|---|---|

| cats_vs_dogs | 150s/epoch | 36s/epoch | 30s/epoch |

4.8.2 多机训练: MultiWorkerMirroredStrategy

多机训练的方法和单机多卡类似,将 MirroredStrategy 更换为适合多机训练的 MultiWorkerMirroredStrategy 即可。不过,由于涉及到多台计算机之间的通讯,还需要进行一些额外的设置。

1、需要设置环境变量 TF_CONFIG ,示例如下:

os.environ['TF_CONFIG'] = json.dumps({

'cluster': {

'worker': ["localhost:8888", "localhost:9999"]

},

'task': {'type': 'worker', 'index': 0}

})

2、TF_CONFIG 由 cluster 和 task 两部分组成:

-

cluster 说明了整个多机集群的结构和每台机器的网络地址(IP + 端口号)。对于每一台机器,cluster 的值都是相同的。

-

task 说明了当前机器的角色。例如, {'type': 'worker', 'index': 0} 说明当前机器是 cluster 中的第 0 个 worker(即 localhost:20000 )。每一台机器的 task 值都需要针对当前主机进行分别的设置。

3、运行过程

-

1、在所有的机器上逐个运行训练代码即可。先运行的代码在尚未与其他主机连接时会进入监听状态,待整个集群的连接建立完毕后,所有的机器即会同时开始训练。

-

2、假设有两台机器,即首先在两台机器上均部署这个程序,唯一的区别是 task 部分,第一台机器设置为 {'type': 'worker', 'index': 0} ,第二台机器设置为 {'type': 'worker', 'index': 1} 。接下来,在两台机器上依次运行程序,待通讯成功后,即会自动开始训练流程。

多机训练代码

同样对于同一个数据及进行训练的时候,指定多机代码:

import tensorflow_datasets as tfds

import os

import json

num_epochs = 5

batch_size_per_replica = 64

learning_rate = 0.001

num_workers = 2

# 1、指定集群环境

os.environ['TF_CONFIG'] = json.dumps({

'cluster': {

'worker': ["localhost:20000", "localhost:20001"]

},

'task': {'type': 'worker', 'index': 0}

})

batch_size = batch_size_per_replica * num_workers

def resize(image, label):

image = tf.image.resize(image, [224, 224]) / 255.0

return image, label

dataset = tfds.load("cats_vs_dogs", split=tfds.Split.TRAIN, as_supervised=True)

dataset = dataset.map(resize).shuffle(1024).batch(batch_size)

# 2、初始化集群

strategy = tf.distribute.experimental.MultiWorkerMirroredStrategy()

# 3、上下文环境定义模型

with strategy.scope():

model = tf.keras.applications.MobileNetV2()

model.compile(

optimizer=tf.keras.optimizers.Adam(learning_rate=learning_rate),

loss=tf.keras.losses.sparse_categorical_crossentropy,

metrics=[tf.keras.metrics.sparse_categorical_accuracy]

)

model.fit(dataset, epochs=num_epochs)

测试结果比较:单张 NVIDIA Tesla K80 显卡,使用单机单卡时,Batch Size 设置为 64;使用双机单卡时,测试总 Batch Size 为 64(分发到单台机器的 Batch Size 为 32)和总 Batch Size 为 128(分发到单台机器的 Batch Size 为 64)两种情况。

| 数据集 | 单机单卡(Batch Size 为 64) | 双机单卡(总 Batch Size 为 64) | 双机单卡(总 Batch Size 为 128) |

|---|---|---|---|

| cats_vs_dogs | 1622s | 858s | 755s |

4.8.3 总结

- TensorFlow的分布式训练接口使用

6-3,使用单GPU训练模型

深度学习的训练过程常常非常耗时,一个模型训练几个小时是家常便饭,训练几天也是常有的事情,有时候甚至要训练几十天。

训练过程的耗时主要来自于两个部分,一部分来自数据准备,另一部分来自参数迭代。

当数据准备过程还是模型训练时间的主要瓶颈时,我们可以使用更多进程来准备数据。

当参数迭代过程成为训练时间的主要瓶颈时,我们通常的方法是应用GPU或者Google的TPU来进行加速。

详见《用GPU加速Keras模型——Colab免费GPU使用攻略》

https://zhuanlan.zhihu.com/p/68509398

无论是内置fit方法,还是自定义训练循环,从CPU切换成单GPU训练模型都是非常方便的,无需更改任何代码。当存在可用的GPU时,如果不特意指定device,tensorflow会自动优先选择使用GPU来创建张量和执行张量计算。

但如果是在公司或者学校实验室的服务器环境,存在多个GPU和多个使用者时,为了不让单个同学的任务占用全部GPU资源导致其他同学无法使用(tensorflow默认获取全部GPU的全部内存资源权限,但实际上只使用一个GPU的部分资源),我们通常会在开头增加以下几行代码以控制每个任务使用的GPU编号和显存大小,以便其他同学也能够同时训练模型。

在Colab笔记本中:修改->笔记本设置->硬件加速器 中选择 GPU

注:以下代码只能在Colab 上才能正确执行。

可通过以下colab链接测试效果《tf_单GPU》:

https://colab.research.google.com/drive/1r5dLoeJq5z01sU72BX2M5UiNSkuxsEFe

%tensorflow_version 2.x

import tensorflow as tf

print(tf.__version__)from tensorflow.keras import *

#打印时间分割线

@tf.function

def printbar():

ts = tf.timestamp()

today_ts = ts%(24*60*60)

hour = tf.cast(today_ts//3600+8,tf.int32)%tf.constant(24)

minite = tf.cast((today_ts%3600)//60,tf.int32)

second = tf.cast(tf.floor(today_ts%60),tf.int32)

def timeformat(m):

if tf.strings.length(tf.strings.format("{}",m))==1:

return(tf.strings.format("0{}",m))

else:

return(tf.strings.format("{}",m))

timestring = tf.strings.join([timeformat(hour),timeformat(minite),

timeformat(second)],separator = ":")

tf.print("=========="*8,end = "")

tf.print(timestring)

一,GPU设置

gpus = tf.config.list_physical_devices("GPU")

if gpus:

gpu0 = gpus[0] #如果有多个GPU,仅使用第0个GPU

tf.config.experimental.set_memory_growth(gpu0, True) #设置GPU显存用量按需使用

# 或者也可以设置GPU显存为固定使用量(例如:4G)

#tf.config.experimental.set_virtual_device_configuration(gpu0,

# [tf.config.experimental.VirtualDeviceConfiguration(memory_limit=4096)])

tf.config.set_visible_devices([gpu0],"GPU") 比较GPU和CPU的计算速度

printbar()

with tf.device("/gpu:0"):

tf.random.set_seed(0)

a = tf.random.uniform((10000,100),minval = 0,maxval = 3.0)

b = tf.random.uniform((100,100000),minval = 0,maxval = 3.0)

c = a@b

tf.print(tf.reduce_sum(tf.reduce_sum(c,axis = 0),axis=0))

printbar()================================================================================17:37:01

2.24953778e+11

================================================================================17:37:01printbar()

with tf.device("/cpu:0"):

tf.random.set_seed(0)

a = tf.random.uniform((10000,100),minval = 0,maxval = 3.0)

b = tf.random.uniform((100,100000),minval = 0,maxval = 3.0)

c = a@b

tf.print(tf.reduce_sum(tf.reduce_sum(c,axis = 0),axis=0))

printbar()================================================================================17:37:34

2.24953795e+11

================================================================================17:37:40二,准备数据

MAX_LEN = 300

BATCH_SIZE = 32

(x_train,y_train),(x_test,y_test) = datasets.reuters.load_data()

x_train = preprocessing.sequence.pad_sequences(x_train,maxlen=MAX_LEN)

x_test = preprocessing.sequence.pad_sequences(x_test,maxlen=MAX_LEN)

MAX_WORDS = x_train.max()+1

CAT_NUM = y_train.max()+1

ds_train = tf.data.Dataset.from_tensor_slices((x_train,y_train)) \

.shuffle(buffer_size = 1000).batch(BATCH_SIZE) \

.prefetch(tf.data.experimental.AUTOTUNE).cache()

ds_test = tf.data.Dataset.from_tensor_slices((x_test,y_test)) \

.shuffle(buffer_size = 1000).batch(BATCH_SIZE) \

.prefetch(tf.data.experimental.AUTOTUNE).cache()

三,定义模型

tf.keras.backend.clear_session()

def create_model():

model = models.Sequential()

model.add(layers.Embedding(MAX_WORDS,7,input_length=MAX_LEN))

model.add(layers.Conv1D(filters = 64,kernel_size = 5,activation = "relu"))

model.add(layers.MaxPool1D(2))

model.add(layers.Conv1D(filters = 32,kernel_size = 3,activation = "relu"))

model.add(layers.MaxPool1D(2))

model.add(layers.Flatten())

model.add(layers.Dense(CAT_NUM,activation = "softmax"))

return(model)

model = create_model()

model.summary()

Model: "sequential"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

embedding (Embedding) (None, 300, 7) 216874

_________________________________________________________________

conv1d (Conv1D) (None, 296, 64) 2304

_________________________________________________________________

max_pooling1d (MaxPooling1D) (None, 148, 64) 0

_________________________________________________________________

conv1d_1 (Conv1D) (None, 146, 32) 6176

_________________________________________________________________

max_pooling1d_1 (MaxPooling1 (None, 73, 32) 0

_________________________________________________________________

flatten (Flatten) (None, 2336) 0

_________________________________________________________________

dense (Dense) (None, 46) 107502

=================================================================

Total params: 332,856

Trainable params: 332,856

Non-trainable params: 0

_________________________________________________________________四,训练模型

optimizer = optimizers.Nadam()

loss_func = losses.SparseCategoricalCrossentropy()

train_loss = metrics.Mean(name='train_loss')

train_metric = metrics.SparseCategoricalAccuracy(name='train_accuracy')

valid_loss = metrics.Mean(name='valid_loss')

valid_metric = metrics.SparseCategoricalAccuracy(name='valid_accuracy')

@tf.function

def train_step(model, features, labels):

with tf.GradientTape() as tape:

predictions = model(features,training = True)

loss = loss_func(labels, predictions)

gradients = tape.gradient(loss, model.trainable_variables)

optimizer.apply_gradients(zip(gradients, model.trainable_variables))

train_loss.update_state(loss)

train_metric.update_state(labels, predictions)

@tf.function

def valid_step(model, features, labels):

predictions = model(features)

batch_loss = loss_func(labels, predictions)

valid_loss.update_state(batch_loss)

valid_metric.update_state(labels, predictions)

def train_model(model,ds_train,ds_valid,epochs):

for epoch in tf.range(1,epochs+1):

for features, labels in ds_train:

train_step(model,features,labels)

for features, labels in ds_valid:

valid_step(model,features,labels)

logs = 'Epoch={},Loss:{},Accuracy:{},Valid Loss:{},Valid Accuracy:{}'

if epoch%1 ==0:

printbar()

tf.print(tf.strings.format(logs,

(epoch,train_loss.result(),train_metric.result(),valid_loss.result(),valid_metric.result())))

tf.print("")

train_loss.reset_states()

valid_loss.reset_states()

train_metric.reset_states()

valid_metric.reset_states()

train_model(model,ds_train,ds_test,10)================================================================================17:13:26

Epoch=1,Loss:1.96735072,Accuracy:0.489200622,Valid Loss:1.64124215,Valid Accuracy:0.582813919

================================================================================17:13:28

Epoch=2,Loss:1.4640888,Accuracy:0.624805152,Valid Loss:1.5559175,Valid Accuracy:0.607747078

================================================================================17:13:30

Epoch=3,Loss:1.20681274,Accuracy:0.68581605,Valid Loss:1.58494771,Valid Accuracy:0.622439921

================================================================================17:13:31

Epoch=4,Loss:0.937500894,Accuracy:0.75361836,Valid Loss:1.77466083,Valid Accuracy:0.621994674

================================================================================17:13:33

Epoch=5,Loss:0.693960547,Accuracy:0.822199941,Valid Loss:2.00267363,Valid Accuracy:0.6197685

================================================================================17:13:35

Epoch=6,Loss:0.519614,Accuracy:0.870296121,Valid Loss:2.23463202,Valid Accuracy:0.613980412

================================================================================17:13:37

Epoch=7,Loss:0.408562034,Accuracy:0.901246965,Valid Loss:2.46969271,Valid Accuracy:0.612199485

================================================================================17:13:39

Epoch=8,Loss:0.339028627,Accuracy:0.920062363,Valid Loss:2.68585229,Valid Accuracy:0.615316093

================================================================================17:13:41

Epoch=9,Loss:0.293798745,Accuracy:0.92930305,Valid Loss:2.88995624,Valid Accuracy:0.613535166

================================================================================17:13:43

Epoch=10,Loss:0.263130337,Accuracy:0.936651051,Valid Loss:3.09705234,Valid Accuracy:0.6126446726-4,使用多GPU训练模型

如果使用多GPU训练模型,推荐使用内置fit方法,较为方便,仅需添加2行代码。

在Colab笔记本中:修改->笔记本设置->硬件加速器 中选择 GPU

注:以下代码只能在Colab 上才能正确执行。

可通过以下colab链接测试效果《tf_多GPU》:

https://colab.research.google.com/drive/1j2kp_t0S_cofExSN7IyJ4QtMscbVlXU-

MirroredStrategy过程简介:

-

训练开始前,该策略在所有 N 个计算设备上均各复制一份完整的模型;

-

每次训练传入一个批次的数据时,将数据分成 N 份,分别传入 N 个计算设备(即数据并行);

-

N 个计算设备使用本地变量(镜像变量)分别计算自己所获得的部分数据的梯度;

-

使用分布式计算的 All-reduce 操作,在计算设备间高效交换梯度数据并进行求和,使得最终每个设备都有了所有设备的梯度之和;

-

使用梯度求和的结果更新本地变量(镜像变量);

-

当所有设备均更新本地变量后,进行下一轮训练(即该并行策略是同步的)。

%tensorflow_version 2.x

import tensorflow as tf

print(tf.__version__)

from tensorflow.keras import * #此处在colab上使用1个GPU模拟出两个逻辑GPU进行多GPU训练

gpus = tf.config.experimental.list_physical_devices('GPU')

if gpus:

# 设置两个逻辑GPU模拟多GPU训练

try:

tf.config.experimental.set_virtual_device_configuration(gpus[0],

[tf.config.experimental.VirtualDeviceConfiguration(memory_limit=1024),

tf.config.experimental.VirtualDeviceConfiguration(memory_limit=1024)])

logical_gpus = tf.config.experimental.list_logical_devices('GPU')

print(len(gpus), "Physical GPU,", len(logical_gpus), "Logical GPUs")

except RuntimeError as e:

print(e)一,准备数据

MAX_LEN = 300

BATCH_SIZE = 32

(x_train,y_train),(x_test,y_test) = datasets.reuters.load_data()

x_train = preprocessing.sequence.pad_sequences(x_train,maxlen=MAX_LEN)

x_test = preprocessing.sequence.pad_sequences(x_test,maxlen=MAX_LEN)

MAX_WORDS = x_train.max()+1

CAT_NUM = y_train.max()+1

ds_train = tf.data.Dataset.from_tensor_slices((x_train,y_train)) \

.shuffle(buffer_size = 1000).batch(BATCH_SIZE) \

.prefetch(tf.data.experimental.AUTOTUNE).cache()

ds_test = tf.data.Dataset.from_tensor_slices((x_test,y_test)) \

.shuffle(buffer_size = 1000).batch(BATCH_SIZE) \

.prefetch(tf.data.experimental.AUTOTUNE).cache()

二,定义模型

tf.keras.backend.clear_session()

def create_model():

model = models.Sequential()

model.add(layers.Embedding(MAX_WORDS,7,input_length=MAX_LEN))

model.add(layers.Conv1D(filters = 64,kernel_size = 5,activation = "relu"))

model.add(layers.MaxPool1D(2))

model.add(layers.Conv1D(filters = 32,kernel_size = 3,activation = "relu"))

model.add(layers.MaxPool1D(2))

model.add(layers.Flatten())

model.add(layers.Dense(CAT_NUM,activation = "softmax"))

return(model)

def compile_model(model):

model.compile(optimizer=optimizers.Nadam(),

loss=losses.SparseCategoricalCrossentropy(from_logits=True),

metrics=[metrics.SparseCategoricalAccuracy(),metrics.SparseTopKCategoricalAccuracy(5)])

return(model)三,训练模型

#增加以下两行代码

strategy = tf.distribute.MirroredStrategy()

with strategy.scope():

model = create_model()

model.summary()

model = compile_model(model)

history = model.fit(ds_train,validation_data = ds_test,epochs = 10) WARNING:tensorflow:NCCL is not supported when using virtual GPUs, fallingback to reduction to one device

INFO:tensorflow:Using MirroredStrategy with devices ('/job:localhost/replica:0/task:0/device:GPU:0', '/job:localhost/replica:0/task:0/device:GPU:1')

Model: "sequential"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

embedding (Embedding) (None, 300, 7) 216874

_________________________________________________________________

conv1d (Conv1D) (None, 296, 64) 2304

_________________________________________________________________

max_pooling1d (MaxPooling1D) (None, 148, 64) 0

_________________________________________________________________

conv1d_1 (Conv1D) (None, 146, 32) 6176

_________________________________________________________________

max_pooling1d_1 (MaxPooling1 (None, 73, 32) 0

_________________________________________________________________

flatten (Flatten) (None, 2336) 0

_________________________________________________________________

dense (Dense) (None, 46) 107502

=================================================================

Total params: 332,856

Trainable params: 332,856

Non-trainable params: 0

_________________________________________________________________

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

Train for 281 steps, validate for 71 steps

Epoch 1/10

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:GPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:GPU:0', '/job:localhost/replica:0/task:0/device:GPU:1').

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:GPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:GPU:0', '/job:localhost/replica:0/task:0/device:GPU:1').

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:GPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:GPU:0', '/job:localhost/replica:0/task:0/device:GPU:1').

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:GPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:GPU:0', '/job:localhost/replica:0/task:0/device:GPU:1').

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:GPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:GPU:0', '/job:localhost/replica:0/task:0/device:GPU:1').

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:GPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:GPU:0', '/job:localhost/replica:0/task:0/device:GPU:1').

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:GPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:GPU:0', '/job:localhost/replica:0/task:0/device:GPU:1').

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:CPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:CPU:0',).

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:GPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:GPU:0', '/job:localhost/replica:0/task:0/device:GPU:1').

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:GPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:GPU:0', '/job:localhost/replica:0/task:0/device:GPU:1').

INFO:tensorflow:Reduce to /job:localhost/replica:0/task:0/device:GPU:0 then broadcast to ('/job:localhost/replica:0/task:0/device:GPU:0', '/job:localhost/replica:0/task:0/device:GPU:1').

281/281 [==============================] - 15s 53ms/step - loss: 2.0270 - sparse_categorical_accuracy: 0.4653 - sparse_top_k_categorical_accuracy: 0.7481 - val_loss: 1.7517 - val_sparse_categorical_accuracy: 0.5481 - val_sparse_top_k_categorical_accuracy: 0.7578

Epoch 2/10

281/281 [==============================] - 4s 14ms/step - loss: 1.5206 - sparse_categorical_accuracy: 0.6045 - sparse_top_k_categorical_accuracy: 0.7938 - val_loss: 1.5715 - val_sparse_categorical_accuracy: 0.5993 - val_sparse_top_k_categorical_accuracy: 0.7983

Epoch 3/10

281/281 [==============================] - 4s 14ms/step - loss: 1.2178 - sparse_categorical_accuracy: 0.6843 - sparse_top_k_categorical_accuracy: 0.8547 - val_loss: 1.5232 - val_sparse_categorical_accuracy: 0.6327 - val_sparse_top_k_categorical_accuracy: 0.8112

Epoch 4/10

281/281 [==============================] - 4s 13ms/step - loss: 0.9127 - sparse_categorical_accuracy: 0.7648 - sparse_top_k_categorical_accuracy: 0.9113 - val_loss: 1.6527 - val_sparse_categorical_accuracy: 0.6296 - val_sparse_top_k_categorical_accuracy: 0.8201

Epoch 5/10

281/281 [==============================] - 4s 14ms/step - loss: 0.6606 - sparse_categorical_accuracy: 0.8321 - sparse_top_k_categorical_accuracy: 0.9525 - val_loss: 1.8791 - val_sparse_categorical_accuracy: 0.6158 - val_sparse_top_k_categorical_accuracy: 0.8219

Epoch 6/10

281/281 [==============================] - 4s 14ms/step - loss: 0.4919 - sparse_categorical_accuracy: 0.8799 - sparse_top_k_categorical_accuracy: 0.9725 - val_loss: 2.1282 - val_sparse_categorical_accuracy: 0.6037 - val_sparse_top_k_categorical_accuracy: 0.8112

Epoch 7/10

281/281 [==============================] - 4s 14ms/step - loss: 0.3947 - sparse_categorical_accuracy: 0.9051 - sparse_top_k_categorical_accuracy: 0.9814 - val_loss: 2.3033 - val_sparse_categorical_accuracy: 0.6046 - val_sparse_top_k_categorical_accuracy: 0.8094

Epoch 8/10

281/281 [==============================] - 4s 14ms/step - loss: 0.3335 - sparse_categorical_accuracy: 0.9207 - sparse_top_k_categorical_accuracy: 0.9863 - val_loss: 2.4255 - val_sparse_categorical_accuracy: 0.5993 - val_sparse_top_k_categorical_accuracy: 0.8099

Epoch 9/10

281/281 [==============================] - 4s 14ms/step - loss: 0.2919 - sparse_categorical_accuracy: 0.9304 - sparse_top_k_categorical_accuracy: 0.9911 - val_loss: 2.5571 - val_sparse_categorical_accuracy: 0.6020 - val_sparse_top_k_categorical_accuracy: 0.8126

Epoch 10/10

281/281 [==============================] - 4s 14ms/step - loss: 0.2617 - sparse_categorical_accuracy: 0.9342 - sparse_top_k_categorical_accuracy: 0.9937 - val_loss: 2.6700 - val_sparse_categorical_accuracy: 0.6077 - val_sparse_top_k_categorical_accuracy: 0.8148

CPU times: user 1min 2s, sys: 8.59 s, total: 1min 10s

Wall time: 58.5 s6-5,使用TPU训练模型

如果想尝试使用Google Colab上的TPU来训练模型,也是非常方便,仅需添加6行代码。

在Colab笔记本中:修改->笔记本设置->硬件加速器 中选择 TPU

注:以下代码只能在Colab 上才能正确执行。

可通过以下colab链接测试效果《tf_TPU》:

https://colab.research.google.com/drive/1XCIhATyE1R7lq6uwFlYlRsUr5d9_-r1s

%tensorflow_version 2.x

import tensorflow as tf

print(tf.__version__)

from tensorflow.keras import * 一,准备数据

MAX_LEN = 300

BATCH_SIZE = 32

(x_train,y_train),(x_test,y_test) = datasets.reuters.load_data()

x_train = preprocessing.sequence.pad_sequences(x_train,maxlen=MAX_LEN)

x_test = preprocessing.sequence.pad_sequences(x_test,maxlen=MAX_LEN)

MAX_WORDS = x_train.max()+1

CAT_NUM = y_train.max()+1

ds_train = tf.data.Dataset.from_tensor_slices((x_train,y_train)) \

.shuffle(buffer_size = 1000).batch(BATCH_SIZE) \

.prefetch(tf.data.experimental.AUTOTUNE).cache()

ds_test = tf.data.Dataset.from_tensor_slices((x_test,y_test)) \

.shuffle(buffer_size = 1000).batch(BATCH_SIZE) \

.prefetch(tf.data.experimental.AUTOTUNE).cache()二,定义模型

tf.keras.backend.clear_session()

def create_model():

model = models.Sequential()

model.add(layers.Embedding(MAX_WORDS,7,input_length=MAX_LEN))

model.add(layers.Conv1D(filters = 64,kernel_size = 5,activation = "relu"))

model.add(layers.MaxPool1D(2))

model.add(layers.Conv1D(filters = 32,kernel_size = 3,activation = "relu"))

model.add(layers.MaxPool1D(2))

model.add(layers.Flatten())

model.add(layers.Dense(CAT_NUM,activation = "softmax"))

return(model)

def compile_model(model):

model.compile(optimizer=optimizers.Nadam(),

loss=losses.SparseCategoricalCrossentropy(from_logits=True),

metrics=[metrics.SparseCategoricalAccuracy(),metrics.SparseTopKCategoricalAccuracy(5)])

return(model)三,训练模型

#增加以下6行代码

import os

resolver = tf.distribute.cluster_resolver.TPUClusterResolver(tpu='grpc://' + os.environ['COLAB_TPU_ADDR'])

tf.config.experimental_connect_to_cluster(resolver)

tf.tpu.experimental.initialize_tpu_system(resolver)

strategy = tf.distribute.experimental.TPUStrategy(resolver)

with strategy.scope():

model = create_model()

model.summary()

model = compile_model(model)

WARNING:tensorflow:TPU system 10.26.134.242:8470 has already been initialized. Reinitializing the TPU can cause previously created variables on TPU to be lost.

WARNING:tensorflow:TPU system 10.26.134.242:8470 has already been initialized. Reinitializing the TPU can cause previously created variables on TPU to be lost.

INFO:tensorflow:Initializing the TPU system: 10.26.134.242:8470

INFO:tensorflow:Initializing the TPU system: 10.26.134.242:8470

INFO:tensorflow:Clearing out eager caches

INFO:tensorflow:Clearing out eager caches

INFO:tensorflow:Finished initializing TPU system.

INFO:tensorflow:Finished initializing TPU system.

INFO:tensorflow:Found TPU system:

INFO:tensorflow:Found TPU system:

INFO:tensorflow:*** Num TPU Cores: 8

INFO:tensorflow:*** Num TPU Cores: 8

INFO:tensorflow:*** Num TPU Workers: 1

INFO:tensorflow:*** Num TPU Workers: 1

INFO:tensorflow:*** Num TPU Cores Per Worker: 8

INFO:tensorflow:*** Num TPU Cores Per Worker: 8

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:localhost/replica:0/task:0/device:CPU:0, CPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:localhost/replica:0/task:0/device:CPU:0, CPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:localhost/replica:0/task:0/device:XLA_CPU:0, XLA_CPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:localhost/replica:0/task:0/device:XLA_CPU:0, XLA_CPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:CPU:0, CPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:CPU:0, CPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:0, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:0, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:1, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:1, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:2, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:2, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:3, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:3, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:4, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:4, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:5, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:5, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:6, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:6, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:7, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU:7, TPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU_SYSTEM:0, TPU_SYSTEM, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:TPU_SYSTEM:0, TPU_SYSTEM, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:XLA_CPU:0, XLA_CPU, 0, 0)

INFO:tensorflow:*** Available Device: _DeviceAttributes(/job:worker/replica:0/task:0/device:XLA_CPU:0, XLA_CPU, 0, 0)

Model: "sequential"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

embedding (Embedding) (None, 300, 7) 216874

_________________________________________________________________

conv1d (Conv1D) (None, 296, 64) 2304

_________________________________________________________________

max_pooling1d (MaxPooling1D) (None, 148, 64) 0

_________________________________________________________________

conv1d_1 (Conv1D) (None, 146, 32) 6176

_________________________________________________________________

max_pooling1d_1 (MaxPooling1 (None, 73, 32) 0

_________________________________________________________________

flatten (Flatten) (None, 2336) 0

_________________________________________________________________

dense (Dense) (None, 46) 107502

=================================================================

Total params: 332,856

Trainable params: 332,856

Non-trainable params: 0

_________________________________________________________________history = model.fit(ds_train,validation_data = ds_test,epochs = 10)Train for 281 steps, validate for 71 steps

Epoch 1/10

281/281 [==============================] - 12s 43ms/step - loss: 3.4466 - sparse_categorical_accuracy: 0.4332 - sparse_top_k_categorical_accuracy: 0.7180 - val_loss: 3.3179 - val_sparse_categorical_accuracy: 0.5352 - val_sparse_top_k_categorical_accuracy: 0.7195

Epoch 2/10

281/281 [==============================] - 6s 20ms/step - loss: 3.3251 - sparse_categorical_accuracy: 0.5405 - sparse_top_k_categorical_accuracy: 0.7302 - val_loss: 3.3082 - val_sparse_categorical_accuracy: 0.5463 - val_sparse_top_k_categorical_accuracy: 0.7235

Epoch 3/10

281/281 [==============================] - 6s 20ms/step - loss: 3.2961 - sparse_categorical_accuracy: 0.5729 - sparse_top_k_categorical_accuracy: 0.7280 - val_loss: 3.3026 - val_sparse_categorical_accuracy: 0.5499 - val_sparse_top_k_categorical_accuracy: 0.7217

Epoch 4/10

281/281 [==============================] - 5s 19ms/step - loss: 3.2751 - sparse_categorical_accuracy: 0.5924 - sparse_top_k_categorical_accuracy: 0.7276 - val_loss: 3.2957 - val_sparse_categorical_accuracy: 0.5543 - val_sparse_top_k_categorical_accuracy: 0.7217

Epoch 5/10

281/281 [==============================] - 5s 19ms/step - loss: 3.2655 - sparse_categorical_accuracy: 0.6008 - sparse_top_k_categorical_accuracy: 0.7290 - val_loss: 3.3022 - val_sparse_categorical_accuracy: 0.5490 - val_sparse_top_k_categorical_accuracy: 0.7231

Epoch 6/10

281/281 [==============================] - 5s 19ms/step - loss: 3.2616 - sparse_categorical_accuracy: 0.6041 - sparse_top_k_categorical_accuracy: 0.7295 - val_loss: 3.3015 - val_sparse_categorical_accuracy: 0.5503 - val_sparse_top_k_categorical_accuracy: 0.7235

Epoch 7/10

281/281 [==============================] - 6s 21ms/step - loss: 3.2595 - sparse_categorical_accuracy: 0.6059 - sparse_top_k_categorical_accuracy: 0.7322 - val_loss: 3.3064 - val_sparse_categorical_accuracy: 0.5454 - val_sparse_top_k_categorical_accuracy: 0.7266

Epoch 8/10

281/281 [==============================] - 6s 21ms/step - loss: 3.2591 - sparse_categorical_accuracy: 0.6063 - sparse_top_k_categorical_accuracy: 0.7327 - val_loss: 3.3025 - val_sparse_categorical_accuracy: 0.5481 - val_sparse_top_k_categorical_accuracy: 0.7231

Epoch 9/10

281/281 [==============================] - 5s 19ms/step - loss: 3.2588 - sparse_categorical_accuracy: 0.6062 - sparse_top_k_categorical_accuracy: 0.7332 - val_loss: 3.2992 - val_sparse_categorical_accuracy: 0.5521 - val_sparse_top_k_categorical_accuracy: 0.7257

Epoch 10/10

281/281 [==============================] - 5s 18ms/step - loss: 3.2577 - sparse_categorical_accuracy: 0.6073 - sparse_top_k_categorical_accuracy: 0.7363 - val_loss: 3.2981 - val_sparse_categorical_accuracy: 0.5516 - val_sparse_top_k_categorical_accuracy: 0.7306

CPU times: user 18.9 s, sys: 3.86 s, total: 22.7 s

Wall time: 1min 1s