Python实战——选择最佳旅游攻略,让旅游更加便捷(爬虫实战)

前言: 打算和老弟去西安来一个说走就走的旅行,但是网上攻略太多了看得头皮发麻,但是仔细看的话每条旅游攻略都有特定的参数条件的,比如人数、价钱、游玩时间,也就是说我们可以通过筛选这些条件初步获取我们满意的攻略。

1 前期准备

这次爬的是去哪儿网,网站大概长这样

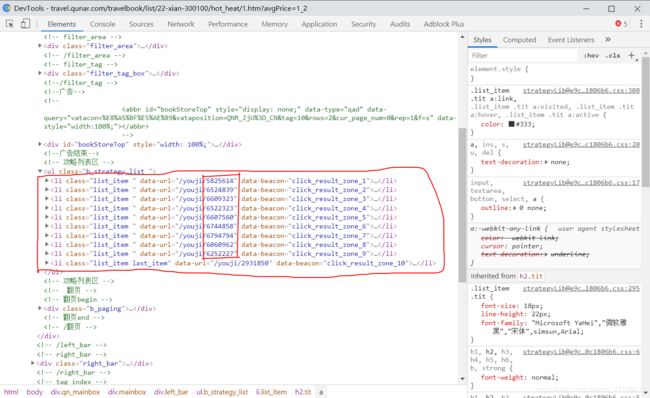

如果我们搜一个旅行地点,会得到这么一个网页

网页的网址为:http://travel.qunar.com/travelbook/list/22-xian-300100/hot_heat/1.htm?avgPrice=1_2

其中有这么几个参数需要我们注意:

- “22-xian-300100”:这个与我们选择的旅游目的地有关;

- “1”:“hot_heat”之后的那个1代表页码,这是翻页逻辑,很重要,码住;

- “avgPrice”:这个参数的取值为1_2,意思是费用为第一个“1-999”与第二个“1000-2999”,可以根据自己需要选择。

还有一个很重要的,每一个攻略都对应于一个编号,那么这个编号怎么获取呢?我们看一下网页的源码:

红色框框框住的就是第一个的10个旅游攻略对应的编码,获取的代码我是看了一篇公众号文章才知道的,侵删。

好了,知道这些之后就可以开始正式爬取了。

2 数据爬取

这一块直接上代码了,就不多说了。

2.1 旅游攻略编号获取

# -*- coding: utf-8 -*-

import requests

from bs4 import BeautifulSoup

import time

import random

from fake_useragent import UserAgent

#import re

#爬取每个网址的分页

fb = open(r'url.txt','w')

# --------------------所有城市------------------------- #

#url = 'http://travel.qunar.com/travelbook/list.htm?page={}&order=hot_heat&avgPrice=1_2'

#请求头,cookies在电脑网页中可以查到

#ua = UserAgent()

#headers={'user-agent':ua.random,

# 'cookies':'JSESSIONID=5E9DCED322523560401A95B8643B49DF; QN1=00002b80306c204d8c38c41b; QN300=s%3Dbaidu; QN99=2793; QN205=s%3Dbaidu; QN277=s%3Dbaidu; QunarGlobal=10.86.213.148_-3ad026b5_17074636b8f_-44df|1582508935699; QN601=64fd2a8e533e94d422ac3da458ee6e88; _i=RBTKSueZDCmVnmnwlQKbrHgrodMx; QN269=D32536A056A711EA8A2FFA163E642F8B; QN48=6619068f-3a3c-496c-9370-e033bd32cbcc; fid=ae39c42c-66b4-4e2d-880f-fb3f1bfe72d0; QN49=13072299; csrfToken=51sGhnGXCSQTDKWcdAWIeIrhZLG86cka; QN163=0; Hm_lvt_c56a2b5278263aa647778d304009eafc=1582513259,1582529930,1582551099,1582588666; viewdist=298663-1; uld=1-300750-1-1582590496|1-300142-1-1582590426|1-298663-1-1582590281|1-300698-1-1582514815; _vi=6vK5Gry4UmXDT70IFohKyFF8R8Mu0SvtUfxawwaKYRTq9NKud1iKUt8qkTLGH74E80hXLLVOFPYqRGy52OuTFnhpWvBXWEbkOJaDGaX_5L6CnyiQPPOYb2lFVxrJXsVd-W4NGHRzYtRQ5cJmiAbasK8kbNgDDhkJVTC9YrY6Rfi2; viewbook=7562814|7470570|7575429|7470584|7473513; QN267=675454631c32674; Hm_lpvt_c56a2b5278263aa647778d304009eafc=1582591567; QN271=c8712b13-2065-4aa7-a70b-e6156f6fc216',

# 'referer':'http://travel.qunar.com/travelbook/list.htm?page=1&order=hot_heat&avgPrice=1'}

# -------------------以西安为例-------------------------#

url = 'http://travel.qunar.com/travelbook/list/22-xian-300100/hot_heat/{}.htm?avgPrice=1_2'

#请求头,cookies在电脑网页中可以查到

ua = UserAgent()

headers = {

'user-agent':ua.random,

'cookies':'JSESSIONID=5E9DCED322523560401A95B8643B49DF; QN1=00002b80306c204d8c38c41b; QN300=s%3Dbaidu; QN99=2793; QN205=s%3Dbaidu; QN277=s%3Dbaidu; QunarGlobal=10.86.213.148_-3ad026b5_17074636b8f_-44df|1582508935699; QN601=64fd2a8e533e94d422ac3da458ee6e88; _i=RBTKSueZDCmVnmnwlQKbrHgrodMx; QN269=D32536A056A711EA8A2FFA163E642F8B; QN48=6619068f-3a3c-496c-9370-e033bd32cbcc; fid=ae39c42c-66b4-4e2d-880f-fb3f1bfe72d0; QN49=13072299; csrfToken=51sGhnGXCSQTDKWcdAWIeIrhZLG86cka; QN163=0; Hm_lvt_c56a2b5278263aa647778d304009eafc=1582513259,1582529930,1582551099,1582588666; viewdist=298663-1; uld=1-300750-1-1582590496|1-300142-1-1582590426|1-298663-1-1582590281|1-300698-1-1582514815; _vi=6vK5Gry4UmXDT70IFohKyFF8R8Mu0SvtUfxawwaKYRTq9NKud1iKUt8qkTLGH74E80hXLLVOFPYqRGy52OuTFnhpWvBXWEbkOJaDGaX_5L6CnyiQPPOYb2lFVxrJXsVd-W4NGHRzYtRQ5cJmiAbasK8kbNgDDhkJVTC9YrY6Rfi2; viewbook=7562814|7470570|7575429|7470584|7473513; QN267=675454631c32674; Hm_lpvt_c56a2b5278263aa647778d304009eafc=1582591567; QN271=c8712b13-2065-4aa7-a70b-e6156f6fc216',

'referer':'http://travel.qunar.com/travelbook/list/22-xian-300100/hot_heat/1.htm?avgPrice=1_2'

}

# -------------------网址爬取--------------------------#

count = 1

#共200页

for i in range(1,201):

url_ = url.format(i)

try:

response = requests.get(url=url_,headers = headers)

response.encoding = 'utf-8'

html = response.text

soup = BeautifulSoup(html,'lxml')

#print(soup)

all_url = soup.find_all('li',attrs={'class': 'list_item'})

#print(all_url[0])

'''

for i in range(len(all_url)):

#p = re.compile(r'data-url="/youji/\d+">')

url = re.findall('data-url="(.*?)"', str(i), re.S)

#url = re.search(p,str(i))

print(url)

'''

print('正在爬取第%s页' % count)

for each in all_url:

each_url = each.find('h2')['data-bookid']

print(each_url)

fb.write(each_url)

fb.write('\n')

#last_url = each.find('li', {"class": "list_item last_item"})['data-url']

#print(last_url)

time.sleep(random.randint(3,5))

count+=1

except Exception as e:

print(e)

fb.close() # 关闭文件

上面的代码一定要有cookies,要不然爬不下来数据。获取的数据大概长这样:

5825614

6524839

6609323

6522323

6607560

6744858

6794794

6060962

6252227

2931850

这些都是旅游攻略的编号。好了,现在有了编号,接下来就可以爬取关键信息了。

2.2 关键信息获取

数据地址为:去哪儿网->攻略->攻略库->热门游记,获取的信息主要是下图中所示的

主要有:出发时间、旅游天数、人均费用、结伴人物、玩法、一条简介、游记的浏览量、爬下来的数据都存到csv文件中去了,代码如下:

# -*- coding: utf-8 -*-

import requests

from bs4 import BeautifulSoup

import time

import csv

import random

from fake_useragent import UserAgent

# ------------------网址生成-----------------------#

url_list = []

with open('url.txt','r') as f:

for i in f.readlines():

i = i.strip()

url_list.append(i)

the_url_list = []

for i in range(len(url_list)):

url = 'http://travel.qunar.com/youji/'

the_url = url + str(url_list[i])

the_url_list.append(the_url)

last_list = []

# 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/76.0.3809.87 Safari/537.360'

# --------------------信息获取--------------------#

ua = UserAgent()

def spider():

headers = {

'user-agent': ua.random,

'cookies': 'QN1=00002b80306c204d8c38c41b; QN300=s%3Dbaidu; QN99=2793; QN205=s%3Dbaidu; QN277=s%3Dbaidu; QunarGlobal=10.86.213.148_-3ad026b5_17074636b8f_-44df|1582508935699; QN601=64fd2a8e533e94d422ac3da458ee6e88; _i=RBTKSueZDCmVnmnwlQKbrHgrodMx; QN269=D32536A056A711EA8A2FFA163E642F8B; QN48=6619068f-3a3c-496c-9370-e033bd32cbcc; fid=ae39c42c-66b4-4e2d-880f-fb3f1bfe72d0; QN49=13072299; csrfToken=51sGhnGXCSQTDKWcdAWIeIrhZLG86cka; QN163=0; Hm_lvt_c56a2b5278263aa647778d304009eafc=1582513259,1582529930,1582551099,1582588666; viewdist=298663-1; uld=1-300750-1-1582590496|1-300142-1-1582590426|1-298663-1-1582590281|1-300698-1-1582514815; viewbook=7575429|7473513|7470584|7575429|7470570; QN267=67545462d93fcee; _vi=vofWa8tPffFKNx9MM0ASbMfYySr3IenWr5QF22SjnOoPp1MKGe8_-VroXhkC0UNdM0WdUnvQpqebgva9VacpIkJ3f5lUEBz5uyCzG-xVsC-sIV-jEVDWJNDB2vODycKN36DnmUGS5tvy8EEhfq_soX6JF1OEwVFXk2zow0YZQ2Dr; Hm_lpvt_c56a2b5278263aa647778d304009eafc=1582603181; QN271=fc8dd4bc-3fe6-4690-9823-e27d28e9718c',

'Host': 'travel.qunar.com'

}

count = 1

for i in range(len(the_url_list)):

try:

print('正在爬取第%s页'% count)

response = requests.get(url=the_url_list[i],headers = headers)

response.encoding = 'utf-8'

html = response.text

soup = BeautifulSoup(html,'lxml')

information = soup.find('p',attrs={'class': 'b_crumb_cont'}).text.strip().replace(' ','')

info = information.split('>')

if len(info)>2:

location = info[1].replace('\xa0','').replace('旅游攻略','')

introduction = info[2].replace('\xa0','')

else:

location = info[0].replace('\xa0','')

introduction = info[1].replace('\xa0','')

#print(location)

#print(introduction)

# --------------------数据预处理---------------------------------#

other_information = soup.find('ul',attrs={'class': 'foreword_list'})

when = other_information.find('li',attrs={'class': 'f_item when'})

time1 = when.find('p',attrs={'class': 'txt'}).text.replace('出发日期','').strip()

howlong = other_information.find('li',attrs={'class': 'f_item howlong'})

day = howlong.find('p', attrs={'class': 'txt'}).text.replace('天数','').replace('/','').replace('天','').strip()

howmuch = other_information.find('li',attrs={'class': 'f_item howmuch'})

money = howmuch.find('p', attrs={'class': 'txt'}).text.replace('人均费用','').replace('/','').replace('元','').strip()

who = other_information.find('li',attrs={'class': 'f_item who'})

people = who.find('p',attrs={'class': 'txt'}).text.replace('人物','').replace('/','').strip()

how = other_information.find('li',attrs={'class': 'f_item how'})

play = how.find('p',attrs={'class': 'txt'}).text.replace('玩法','').replace('/','').strip()

Look = soup.find('span',attrs={'class': 'view_count'}).text.strip()

if time1:

Time = time1

else:

Time = '-'

if day:

Day = day

else:

Day = '-'

if money:

Money = money

else:

Money = '-'

if people:

People = people

else:

People = '-'

if play:

Play = play

else:

Play = '-'

last_list.append([location,introduction,Time,Day,Money,People,Play,Look])

#设置爬虫时间

time.sleep(random.randint(3,5))

count+=1

except Exception as e :

print(e)

#写入csv

with open('Travel.csv', 'a', encoding='utf-8-sig', newline='') as csvFile:

csv.writer(csvFile).writerow(['地点', '短评', '出发时间', '天数','人均费用','人物','玩法','浏览量'])

for rows in last_list:

csv.writer(csvFile).writerow(rows)

if __name__ == '__main__':

spider()