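Datawhale Introduction to Data Mining from Scratch: Task 4, Modeling and Hyperparameter Tuning

4.1 Learning objectives

Understand the commonly used machine learning models, and master the workflow for building and tuning them

Complete the corresponding study check-in task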

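4.2 Content overview

1. Linear regression models:

Feature requirements of linear regression;

Handling long-tailed distributions;

Understanding the linear regression model;

2. Validating model performance:

Evaluation functions vs. objective functions;

Cross-validation;

Leave-one-out validation;

Validation for time-series problems;

Plotting learning curves;

Plotting validation curves;

3. Embedded feature selection:

Lasso regression;

Ridge regression;

Decision trees;

4. Model comparison:

Common linear models;

Common nonlinear models;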

4.3 Code

Import packages

```python
import pandas as pd
import numpy as np
import warnings

warnings.filterwarnings('ignore')
```

```python
def reduce_mem_usage(df):
    """Iterate over every column of df and downcast its dtype to reduce memory usage."""
    # Initial memory usage of df: .sum() is in bytes; dividing by 1024 twice gives MB
    start_mem = df.memory_usage().sum() / 1024 / 1024
    print('Memory usage of dataframe is {:.2f} MB'.format(start_mem))

    for col in df.columns:
        col_type = df[col].dtype

        # For numeric (non-object) columns, find the column's minimum and maximum values
        if col_type != object:
            c_min = df[col].min()
            c_max = df[col].max()

            # Integers: starting from the smallest type, check whether the column's
            # value range fits inside that type's range
            if str(col_type)[:3] == 'int':
                if c_min > np.iinfo(np.int8).min and c_max < np.iinfo(np.int8).max:
                    df[col] = df[col].astype(np.int8)
                elif c_min > np.iinfo(np.int16).min and c_max < np.iinfo(np.int16).max:
                    df[col] = df[col].astype(np.int16)
                elif c_min > np.iinfo(np.int32).min and c_max < np.iinfo(np.int32).max:
                    df[col] = df[col].astype(np.int32)
                elif c_min > np.iinfo(np.int64).min and c_max < np.iinfo(np.int64).max:
                    df[col] = df[col].astype(np.int64)
            # Floats: same procedure, starting from float16 (float32 is usually large enough).
            # Note: float16 keeps only about 3 significant decimal digits, so this step can lose precision
            else:
                if c_min > np.finfo(np.float16).min and c_max < np.finfo(np.float16).max:
                    df[col] = df[col].astype(np.float16)
                elif c_min > np.finfo(np.float32).min and c_max < np.finfo(np.float32).max:
                    df[col] = df[col].astype(np.float32)
                else:
                    df[col] = df[col].astype(np.float64)
        # Object columns: convert to the categorical dtype to save memory
        # (the data has no datetime columns; only the number of days in use was kept)
        else:
            df[col] = df[col].astype('category')

    # Memory usage of df after changing each column's dtype
    end_mem = df.memory_usage().sum() / 1024 / 1024
    print('Memory usage after optimization is: {:.2f} MB'.format(end_mem))
    print('Decreased by {:.1f}%'.format(100 * (start_mem - end_mem) / start_mem))
    return df
```

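Read the data

```python
# The rest of this section refers to this DataFrame as sample_feature
sample_feature = reduce_mem_usage(pd.read_csv('data_for_tree.gz'))
```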

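```
Memory usage of dataframe is 60507328.00 MB
Memory usage after optimization is: 15724107.00 MB
Decreased by 74.0%
```

The first two figures in this recorded output are byte counts rather than MB (60507328 B ≈ 57.70 MB and 15724107 B ≈ 15.00 MB); the 74.0% reduction is unaffected.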
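For comparison, pandas has a built-in downcasting helper: `pd.to_numeric(series, downcast='integer')` picks the smallest integer dtype that holds the values, and `downcast='float'` goes no lower than float32, which avoids the precision loss of float16. A minimal sketch on a small synthetic DataFrame (the column names are illustrative, not from the dataset):

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({
    'small_int': np.arange(100, dtype=np.int64),   # values 0..99 fit in int8
    'ratio': np.linspace(0.0, 1.0, 100),           # float64 by default
    'label': ['a', 'b'] * 50,                      # object column with 2 distinct values
})
before = df.memory_usage().sum()

# Let pandas choose the smallest fitting numeric dtype
df['small_int'] = pd.to_numeric(df['small_int'], downcast='integer')
df['ratio'] = pd.to_numeric(df['ratio'], downcast='float')
# Low-cardinality strings are stored as integer codes plus a small lookup table
df['label'] = df['label'].astype('category')
after = df.memory_usage().sum()

print(df.dtypes.to_dict())
print(f'{before} B -> {after} B')
```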

4.3.1 Linear regression

Basic modeling

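```python
from sklearn.linear_model import LinearRegression
from matplotlib import pyplot as plt

# Build the training set: continuous features + target, rows with NaN dropped
# Assumption: every numeric column except the target 'price' is used as a continuous feature
continuous_feature_names = [c for c in sample_feature.select_dtypes('number').columns if c != 'price']
train = sample_feature[continuous_feature_names + ['price']].dropna()
train_X = train[continuous_feature_names]
train_y = train['price']

# normalize=True was removed in scikit-learn 1.2; it does not change the predictions
# of ordinary least squares, so a plain LinearRegression is used here
model = LinearRegression()
model = model.fit(train_X, train_y)
```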

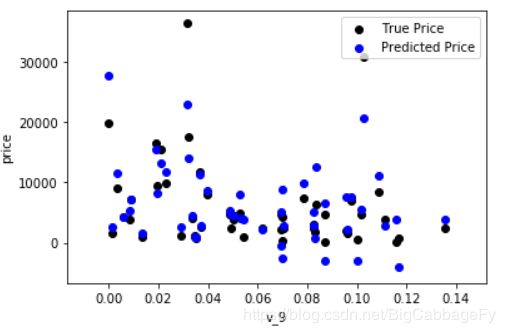
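To connect `LinearRegression` with the model it fits: ordinary least squares chooses the intercept and coefficients that minimize the sum of squared residuals, so the same numbers can be recovered by solving the least-squares problem on the feature matrix with a column of ones prepended. A sketch on synthetic data (`X`, `y` and `w_true` are illustrative, not the car-price features):

```python
import numpy as np
from sklearn.linear_model import LinearRegression

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
w_true = np.array([1.5, -2.0, 0.5])
# True intercept 4.0 plus a little Gaussian noise
y = X @ w_true + 4.0 + rng.normal(scale=0.1, size=200)

# Fit with scikit-learn
lr = LinearRegression().fit(X, y)

# Same fit by solving [1, X] @ beta ≈ y in the least-squares sense
X_aug = np.column_stack([np.ones(len(X)), X])
beta, *_ = np.linalg.lstsq(X_aug, y, rcond=None)

print('sklearn:', lr.intercept_, lr.coef_)
print('lstsq  :', beta[0], beta[1:])
```

Both lines agree to numerical precision and land close to the true intercept 4.0 and weights [1.5, -2.0, 0.5].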

plt.scatter(train_X['v_9'][subsample_index], train_y[subsample_index], color='black')

plt.scatter(train_X['v_9'][subsample_index], model.predict(train_X.loc[subsample_index]), color='blue')

plt.xlabel('v_9')

plt.ylabel('price')

plt.legend(['True Price','Predicted Price'],loc='upper right')

print('The predicted price is obvious different from true price')

plt.show()

import seaborn as sns

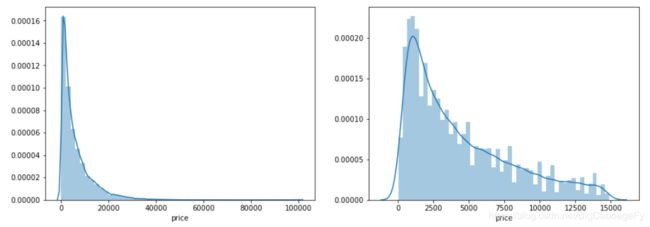

print('It is clear to see the price shows a typical exponential distribution')

plt.figure(figsize=(15,5))

plt.subplot(1,2,1)

sns.distplot(train_y)

plt.subplot(1,2,2)

sns.distplot(train_y[train_y < np.quantile(train_y, 0.9)])

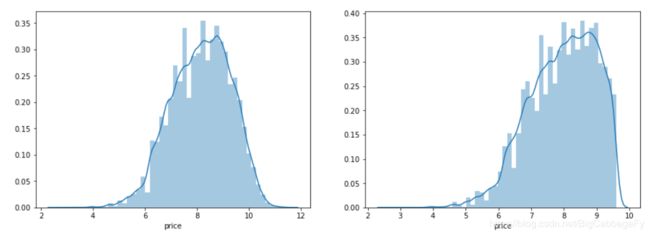

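```python
# The log(x + 1) transform compresses the long tail (np.log1p(train_y) is equivalent)
train_y_ln = np.log(train_y + 1)

# Plot the transformed target rather than the original price again
print('Distribution of log(price + 1)')
plt.figure(figsize=(15, 5))
plt.subplot(1, 2, 1)
sns.histplot(train_y_ln, kde=True)
plt.subplot(1, 2, 2)
sns.histplot(train_y_ln[train_y_ln < np.quantile(train_y_ln, 0.9)], kde=True)
plt.show()
```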

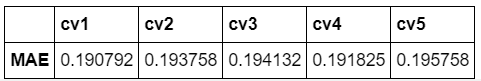
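The effect of the transform can be checked numerically. This is a sketch on synthetic log-normal data standing in for `train_y` (an assumption, not the competition data). `np.log1p` is equivalent to `np.log(x + 1)` and pulls the sample skewness close to zero. `np.expm1` is the inverse, which is needed to turn predictions made on the log scale back into prices.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
# Synthetic long-tailed "price" column (log-normal), standing in for train_y
price = pd.Series(rng.lognormal(mean=8.0, sigma=1.0, size=10_000))

price_ln = np.log1p(price)  # same as np.log(price + 1)
print('skew before:', round(price.skew(), 2))
print('skew after :', round(price_ln.skew(), 2))

# A model trained on log1p(price) predicts on the log scale; expm1 maps back to prices
pred_ln = price_ln.iloc[:5]  # hypothetical stand-in for model.predict(...) output
pred_price = np.expm1(pred_ln)
```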

4.3.2 Five-fold cross-validation

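```python
from sklearn.model_selection import cross_val_score
from sklearn.metrics import mean_absolute_error, make_scorer

def log_transfer(func):
    """Wrap a metric so that it is computed on log-transformed targets and predictions."""
    def wrapper(y, yhat):
        # np.log of a negative prediction is NaN; np.nan_to_num maps NaN to 0
        # (and -inf from a zero prediction to a large negative finite number)
        result = func(np.log(y), np.nan_to_num(np.log(yhat)))
        return result
    return wrapper

# Train on the raw price, but evaluate MAE on the log scale.
# make_scorer defaults to greater_is_better=True, so these are plain (positive) MAE values
scores = cross_val_score(model, X=train_X, y=train_y, verbose=1, cv=5,
                         scoring=make_scorer(log_transfer(mean_absolute_error)))
print('AVG:', np.mean(scores))

# Train directly on log(price + 1) and evaluate MAE on that same scale
scores = cross_val_score(model, X=train_X, y=train_y_ln, verbose=1, cv=5,
                         scoring=make_scorer(mean_absolute_error))
print('AVG:', np.mean(scores))

# Tabulate the five per-fold scores
scores = pd.DataFrame(scores.reshape(1, -1))
scores.columns = ['cv' + str(x) for x in range(1, 6)]
scores.index = ['MAE']
scores
```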
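The outline also lists leave-one-out validation, which is not run above. In scikit-learn it is the `LeaveOneOut` splitter passed as `cv`. Each sample is held out exactly once, so n samples mean n model fits, which is why it only suits small datasets. A sketch on a small synthetic regression problem (illustrative data, not the car-price set):

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import LinearRegression
from sklearn.metrics import make_scorer, mean_absolute_error
from sklearn.model_selection import LeaveOneOut, cross_val_score

# Small synthetic problem: LOO fits one model per sample
X, y = make_regression(n_samples=50, n_features=3, noise=5.0, random_state=0)

# Each "fold" validates on a single held-out row, so each score is one absolute error
loo_scores = cross_val_score(LinearRegression(), X, y, cv=LeaveOneOut(),
                             scoring=make_scorer(mean_absolute_error))
print(len(loo_scores), 'folds, mean MAE:', loo_scores.mean())
```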

4.3.3 Simulating real business conditions

Plotting learning curves and validation curves

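```python
# Hold out the last 1/5 of the rows as the validation set. This mimics training on the past
# and predicting the future, assuming the rows are ordered by time.
sample_feature = sample_feature.reset_index(drop=True)
split_point = len(sample_feature) // 5 * 4

# Positional .iloc slicing: .loc[:split_point] is label-inclusive, so row split_point
# would otherwise appear in both the training and the validation set
train = sample_feature.iloc[:split_point].dropna()
val = sample_feature.iloc[split_point:].dropna()

train_X = train[continuous_feature_names]
train_y_ln = np.log(train['price'] + 1)
val_X = val[continuous_feature_names]
val_y_ln = np.log(val['price'] + 1)

model = model.fit(train_X, train_y_ln)
mean_absolute_error(val_y_ln, model.predict(val_X))
```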
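The single chronological split above can be extended to several folds with scikit-learn's `TimeSeriesSplit`, which always validates on rows that come after the training rows (an expanding training window). A sketch on synthetic data, assuming the rows are already sorted by time:

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import LinearRegression
from sklearn.metrics import make_scorer, mean_absolute_error
from sklearn.model_selection import TimeSeriesSplit, cross_val_score

# Synthetic data; pretend row order is chronological
X, y = make_regression(n_samples=100, n_features=3, noise=5.0, random_state=0)

tscv = TimeSeriesSplit(n_splits=4)
folds = list(tscv.split(X))
for train_idx, val_idx in folds:
    # Every validation block lies strictly after its training rows
    print(f'train rows 0..{train_idx.max()}, validate rows {val_idx.min()}..{val_idx.max()}')

scores = cross_val_score(LinearRegression(), X, y, cv=tscv,
                         scoring=make_scorer(mean_absolute_error))
```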

```python
from sklearn.model_selection import learning_curve, validation_curve

def plot_learning_curve(estimator, title, X, y, ylim=None, cv=None, n_jobs=1, train_size=np.linspace(.1, 1.0, 5)):
    """Plot training and cross-validation MAE against the number of training examples."""
    plt.figure()
    plt.title(title)
    if ylim is not None:
        plt.ylim(*ylim)
    plt.xlabel('Training examples')
    plt.ylabel('score (MAE)')
    train_sizes, train_scores, test_scores = learning_curve(
        estimator, X, y, cv=cv, n_jobs=n_jobs, train_sizes=train_size,
        scoring=make_scorer(mean_absolute_error))
    # Mean and standard deviation across CV folds at each training-set size
    train_scores_mean = np.mean(train_scores, axis=1)
    train_scores_std = np.std(train_scores, axis=1)
    test_scores_mean = np.mean(test_scores, axis=1)
    test_scores_std = np.std(test_scores, axis=1)
    plt.grid()
    # Shaded bands show mean ± one standard deviation
    plt.fill_between(train_sizes, train_scores_mean - train_scores_std,
                     train_scores_mean + train_scores_std, alpha=0.1, color="r")
    plt.fill_between(train_sizes, test_scores_mean - test_scores_std,
                     test_scores_mean + test_scores_std, alpha=0.1, color="g")
    plt.plot(train_sizes, train_scores_mean, 'o-', color='r', label="Training score")
    plt.plot(train_sizes, test_scores_mean, 'o-', color="g", label="Cross-validation score")
    plt.legend(loc="best")
    return plt

# Learning curve of a plain linear model on the first 1000 training rows
plot_learning_curve(LinearRegression(), 'Linear_model', train_X.iloc[:1000], train_y_ln.iloc[:1000],
                    ylim=(0.0, 0.5), cv=5, n_jobs=1)
```

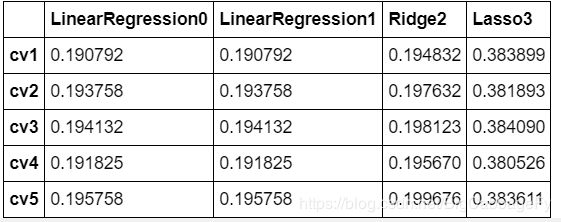
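`validation_curve` is imported above but never called. It scores a model across a range of values for one hyperparameter, instead of across training-set sizes. A sketch tuning Ridge's `alpha` on synthetic data (the data and `param_range` are illustrative assumptions):

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import validation_curve

X, y = make_regression(n_samples=200, n_features=10, noise=10.0, random_state=0)
param_range = np.logspace(-3, 3, 7)  # candidate alpha values 0.001 .. 1000

train_scores, val_scores = validation_curve(
    Ridge(), X, y, param_name='alpha', param_range=param_range,
    cv=5, scoring='neg_mean_absolute_error')

# Rows = alpha values, columns = CV folds; flip the sign of the negated MAE
train_mae = -train_scores.mean(axis=1)
val_mae = -val_scores.mean(axis=1)
for a, tr, va in zip(param_range, train_mae, val_mae):
    print(f'alpha={a:g}: train MAE={tr:.2f}, val MAE={va:.2f}')
```

Plotting `train_mae` and `val_mae` against `param_range` on a log-scaled x axis, with the same `fill_between` bands as in `plot_learning_curve`, gives the validation curve. The alpha with the lowest validation MAE is the candidate to keep.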

4.3.4 Comparing multiple models

Linear models & embedded feature selection

```python
# Rebuild the full training set: continuous features + target, rows with NaN dropped
train = sample_feature[continuous_feature_names + ['price']].dropna()
train_X = train[continuous_feature_names]
train_y = train['price']
train_y_ln = np.log(train_y + 1)

from sklearn.linear_model import LinearRegression, Ridge, Lasso

models = [LinearRegression(),
          Ridge(),
          Lasso()]

result = dict()
for model in models:
    model_name = str(model).split('(')[0]
    # 5-fold cross-validated MAE on the log(price + 1) scale
    scores = cross_val_score(model, X=train_X, y=train_y_ln, verbose=0, cv=5,
                             scoring=make_scorer(mean_absolute_error))
    result[model_name] = scores
    print(model_name + ' is finished')

# One row per fold, one column per model
result = pd.DataFrame(result)
result.index = ['cv' + str(x) for x in range(1, 6)]
result
```

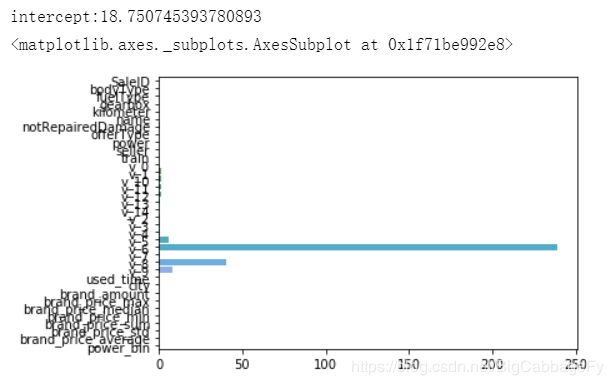

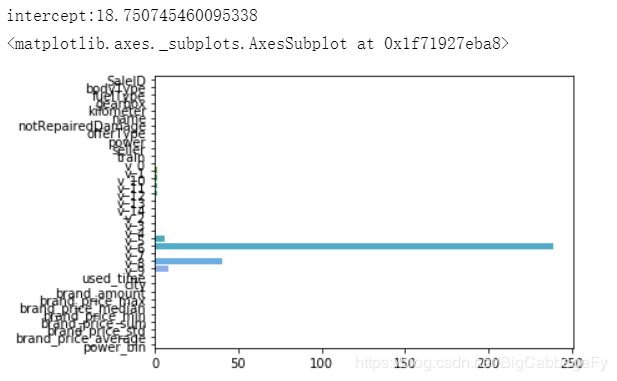

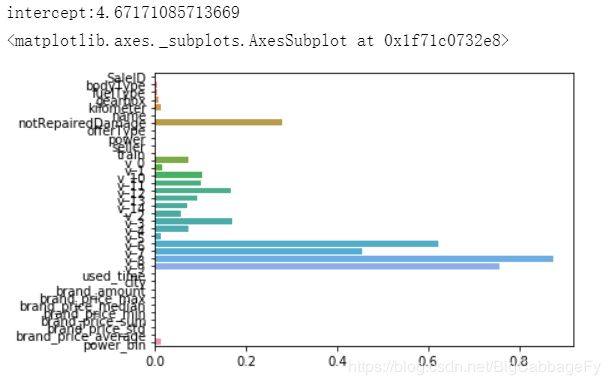

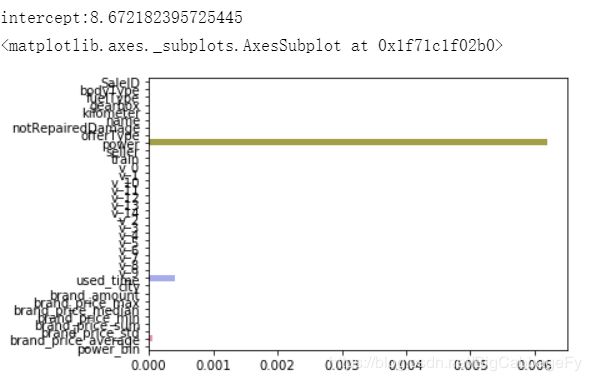
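The outline also lists common nonlinear models. The comparison loop above works unchanged for them. A sketch on scikit-learn's synthetic Friedman #1 data, whose target contains sine and squared terms that a linear model cannot capture exactly (the data and the model settings are illustrative choices, not the tutorial's):

```python
import numpy as np
import pandas as pd
from sklearn.datasets import make_friedman1
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.linear_model import LinearRegression
from sklearn.metrics import make_scorer, mean_absolute_error
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeRegressor

# Friedman #1: nonlinear synthetic regression target
X, y = make_friedman1(n_samples=500, noise=0.5, random_state=0)

models = [LinearRegression(),
          DecisionTreeRegressor(max_depth=6, random_state=0),
          RandomForestRegressor(n_estimators=50, random_state=0),
          GradientBoostingRegressor(n_estimators=100, random_state=0)]

result = dict()
for model in models:
    model_name = type(model).__name__
    # Same 5-fold MAE scoring as the linear-model comparison
    result[model_name] = cross_val_score(model, X, y, cv=5,
                                         scoring=make_scorer(mean_absolute_error))

result = pd.DataFrame(result)
result.index = ['cv' + str(x) for x in range(1, 6)]
print(result.mean())
```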

Plot the importance of each feature (absolute coefficient size)

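```python
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Absolute coefficient size as a rough per-feature importance for each model.
# LinearRegression(normalize=True) was removed in scikit-learn 1.2; standardizing the
# features in a pipeline is the usual replacement and expresses every coefficient per
# standard deviation of its feature, which makes the magnitudes comparable.
fitted = {
    'LinearRegression': LinearRegression().fit(train_X, train_y_ln),
    'LinearRegression (standardized X)': make_pipeline(StandardScaler(), LinearRegression()).fit(train_X, train_y_ln),
    'Ridge': Ridge().fit(train_X, train_y_ln),
    'Lasso': Lasso().fit(train_X, train_y_ln),
}
for name, model in fitted.items():
    reg = model[-1] if hasattr(model, 'steps') else model  # final estimator of a pipeline
    print(name, 'intercept:' + str(reg.intercept_))
    plt.figure()
    plt.title(name)
    # Recent seaborn versions require x/y as keyword arguments
    sns.barplot(x=np.abs(reg.coef_), y=continuous_feature_names)
    plt.show()
```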
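The coefficient plots above rest on the idea of embedded feature selection. The L1 penalty in Lasso drives the coefficients of unhelpful features to exactly zero, while Ridge's L2 penalty only shrinks them. Tree models rank features by impurity-based `feature_importances_` instead. Below is a sketch on synthetic data where only the first two of ten features matter; the data and `alpha=0.1` are illustrative assumptions.

```python
import numpy as np
from sklearn.feature_selection import SelectFromModel
from sklearn.linear_model import Lasso, Ridge
from sklearn.tree import DecisionTreeRegressor

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 10))
# Only features 0 and 1 influence the target; features 2..9 are pure noise
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + rng.normal(scale=0.1, size=200)

lasso = Lasso(alpha=0.1).fit(X, y)
ridge = Ridge(alpha=1.0).fit(X, y)
print('Lasso non-zero coefficients:', np.flatnonzero(lasso.coef_))
print('Ridge non-zero coefficients:', np.flatnonzero(ridge.coef_))

# SelectFromModel keeps the features whose Lasso coefficient is (numerically) non-zero
selector = SelectFromModel(Lasso(alpha=0.1)).fit(X, y)
print('Selected by Lasso:', np.flatnonzero(selector.get_support()))

# A decision tree exposes impurity-based importances instead of coefficients
tree = DecisionTreeRegressor(max_depth=5, random_state=0).fit(X, y)
print('Tree importances:', np.round(tree.feature_importances_, 3))
```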