Cartoon Character Visualization Based on OpenPose

Preface

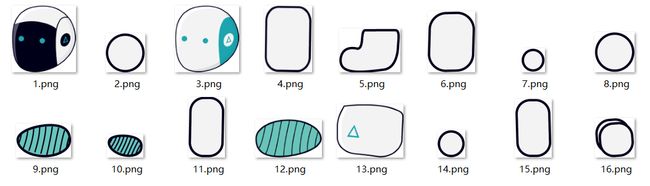

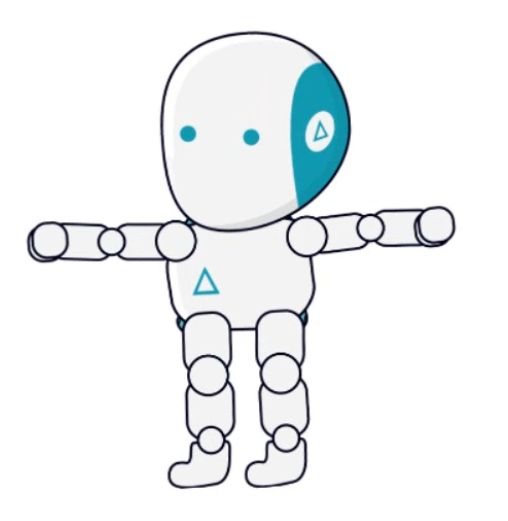

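Last year I wanted to put together a demo based on an off-the-shelf pose estimator. I had already done some recognition work on top of OpenPose, so this time I simply dressed up OpenPose's skeleton with cartoon artwork. For a character whose movements can be tied to a person's, I found Unity's Anima2D sample. I am not very fluent in Unity, so I took a different route:

- In Photoshop, I cut out each body part of the Anima2D robot and saved it as a separate image.
- I loaded the parts with OpenCV and did a bit of math on them.
- I pasted each part onto the skeleton produced by OpenPose.

The result is a simple 2D motion demo. The code is posted below, after a description of my approach. The method is very simple, so please go easy on me.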
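Pasting a part onto the skeleton comes down to alpha-blending its RGBA pixels over the background. The post's cvAdd4cMat_q does this with OpenCV channel arithmetic. The sketch below shows the same per-pixel rule in plain C++ on interleaved 8-bit buffers, rounding once instead of after each OpenCV step.

The names blendChannel and pasteRGBA are hypothetical. There is one deliberate design difference from the post's saveBead: saveBead skips any part that would stick out of the frame, while this sketch clips the part at the border.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Blend one 8-bit channel: alpha = 255 keeps the part's value, alpha = 0 keeps the background.
inline std::uint8_t blendChannel(std::uint8_t bg, std::uint8_t fg, std::uint8_t alpha) {
    const double v = (bg * (255.0 - alpha) + fg * static_cast<double>(alpha)) / 255.0;
    return static_cast<std::uint8_t>(std::lround(v));
}

// Paste an RGBA part (pw x ph, 4 interleaved bytes per pixel) onto an RGB background
// (bw x bh, 3 interleaved bytes per pixel) so that the part's pixel (0, 0) lands on (x0, y0).
// Channel order is not interpreted, so BGR/BGRA (OpenCV's order) works the same way.
// Pixels that fall outside the background are skipped (clipped).
void pasteRGBA(std::vector<std::uint8_t>& bg, int bw, int bh,
               const std::vector<std::uint8_t>& part, int pw, int ph,
               int x0, int y0) {
    for (int y = 0; y < ph; ++y) {
        for (int x = 0; x < pw; ++x) {
            const int bx = x0 + x, by = y0 + y;
            if (bx < 0 || by < 0 || bx >= bw || by >= bh) continue;
            const std::uint8_t* p = &part[static_cast<std::size_t>(y * pw + x) * 4];
            std::uint8_t* b = &bg[static_cast<std::size_t>(by * bw + bx) * 3];
            for (int c = 0; c < 3; ++c) b[c] = blendChannel(b[c], p[c], p[3]);
        }
    }
}
```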

Demo

Approach

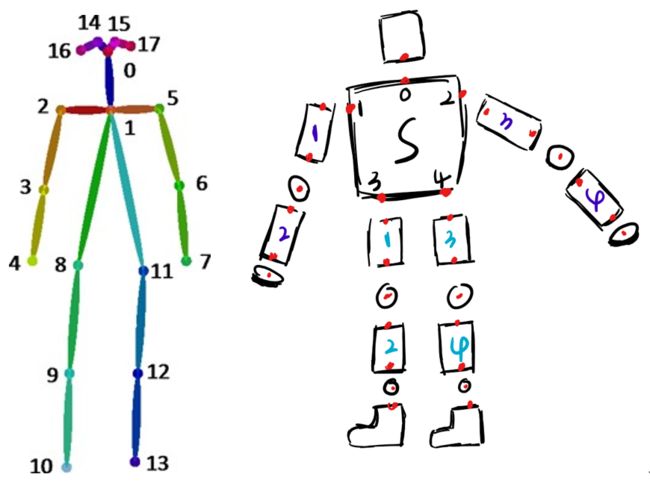

- Split the robot into its individual parts in Photoshop, so that OpenCV can load each part separately and rotate it about its joint.

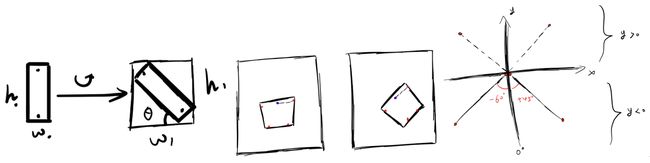
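Rotating a cut-out part about its centre has two consequences. First, the canvas must grow to the rotated bounding box, or the corners get cut off. Second, every anchor point picked on the unrotated part moves with the rotation. The sketch below reproduces that geometry in plain C++ without OpenCV.

It assumes that a positive angle turns the image counter-clockwise on screen, as with cv::getRotationMatrix2D. The names rotatedCanvas and mapPoint are hypothetical. The post's rotate() and getPoint() work with integer image sizes, so their results may differ from these by about a pixel.

```cpp
#include <cassert>
#include <cmath>

constexpr double kPi = 3.14159265358979323846;

struct Size2 { double w, h; };
struct Pt { double x, y; };

// Size of the axis-aligned canvas that fully contains a w x h image rotated by angleDeg.
Size2 rotatedCanvas(double w, double h, double angleDeg) {
    const double r = angleDeg * kPi / 180.0;
    const double c = std::fabs(std::cos(r)), s = std::fabs(std::sin(r));
    return {w * c + h * s, w * s + h * c};
}

// Position on the enlarged canvas of point p picked on the unrotated w x h part.
// Assumes the part is rotated about its centre, counter-clockwise on screen for a positive
// angle (image y axis pointing down), and then centred on the canvas from rotatedCanvas().
Pt mapPoint(Pt p, double w, double h, double angleDeg) {
    const double r = angleDeg * kPi / 180.0;
    const Size2 out = rotatedCanvas(w, h, angleDeg);
    const double dx = p.x - w / 2.0;
    const double dyUp = -(p.y - h / 2.0);  // flip to y-up so the usual rotation formula applies
    const double rx = std::cos(r) * dx - std::sin(r) * dyUp;
    const double ryUp = std::sin(r) * dx + std::cos(r) * dyUp;
    return {rx + out.w / 2.0, -ryUp + out.h / 2.0};  // flip back to y-down
}
```

For example, take a 20 x 100 limb rotated by 90 degrees. The canvas becomes 100 x 20, and the limb's top-centre point (10, 0) lands at (0, 10), the middle of the left edge. The post's getPoint() applies the same flip, rotate, flip-back mapping.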

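- Based on the positions of OpenPose's 18 keypoints, work out a practical plan for rotating the joints. Two facts make this tricky:
  - A rotation turns every other point about one fixed point.
  - Rotating one joint necessarily moves all the points connected to it.

  This part therefore has to be thought through carefully. The drafts I made at the time were mostly about working out these rotations; they are not shown in detail here.

- Next I drew a rough sketch of how the keypoints are connected. Only the connections between keypoints matter, and I had numbered each joint in it.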

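- Following that sketch, I assembled the parts step by step into a complete robot. It may differ a little from the official example, because I left out some of the more troublesome pieces along the way.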
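The dependency between joints can be sketched as a simple chain. Each limb starts at an anchor on the body. Every joint below it is the previous joint plus the next segment, turned by that segment's angle.

Each angle here is absolute, measured from the segment's own two keypoints as getAngle does, so only positions accumulate. The names and the modelling of segments as plain lengths are assumptions for illustration; the post instead measures the offsets on the rotated part images.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

constexpr double kPi = 3.14159265358979323846;

struct Pt { double x, y; };

// Unit direction of a segment under the post's angle convention:
// 0 degrees = hanging straight down, positive = swung towards +x (image coordinates, y down).
Pt segmentDir(double angleDeg) {
    const double r = angleDeg * kPi / 180.0;
    return {std::sin(r), std::cos(r)};
}

// Joint positions of one limb: start at the attachment point on the body (e.g. a shoulder)
// and add each segment in turn, so changing an upper segment's angle moves every joint below it.
std::vector<Pt> chainJoints(Pt root, const std::vector<double>& lengths,
                            const std::vector<double>& anglesDeg) {
    std::vector<Pt> joints{root};
    for (std::size_t i = 0; i < lengths.size() && i < anglesDeg.size(); ++i) {
        const Pt d = segmentDir(anglesDeg[i]);
        const Pt prev = joints.back();
        joints.push_back({prev.x + lengths[i] * d.x, prev.y + lengths[i] * d.y});
    }
    return joints;
}
```

Changing the first angle moves both the elbow and the wrist, which is the dependency the joint plan has to respect. In getRobot the same accumulation appears as sums such as `m_1x - m_0x + body_1_X - body_0_X + center_x`.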

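I won't go through the code in detail; it is just simple rotation math plus some tuning. One possible extension is an SVM that classifies the keypoints into a set of predefined actions, so the animation can react when you perform one of them. The simplest case: wave your arms a couple of times and the robot's head turns from white to black, or the background color changes. I leave such interactive features for you to explore. An SVM is simple and practical for this, and it can be added to the Visual Studio project and compiled directly. The code follows; I added a few things of my own.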
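Before an SVM can map a pose to an action, the raw pixel keypoints should be made independent of where the person stands and how far they are from the camera. A minimal sketch under that assumption:

- Express every keypoint relative to the neck (keypoint 1).
- Divide by the neck-to-mid-hip distance (keypoints 8 and 11 in the COCO layout).

poseFeature is a hypothetical helper, not part of OpenPose.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <vector>

// One COCO-18 pose: (x, y) per keypoint in pixels; OpenPose reports (0, 0) for undetected joints.
using Pose18 = std::array<std::array<float, 2>, 18>;

// Hypothetical helper: translation- and scale-normalised 36-value feature vector.
// Origin = neck (keypoint 1); unit length = neck-to-mid-hip distance (keypoints 8 and 11).
// Undetected joints are encoded as (0, 0). Returns an empty vector if the torso is missing.
std::vector<float> poseFeature(const Pose18& kp) {
    auto missing = [](const std::array<float, 2>& p) { return p[0] == 0.f && p[1] == 0.f; };
    if (missing(kp[1]) || missing(kp[8]) || missing(kp[11])) return {};
    const float hipX = (kp[8][0] + kp[11][0]) / 2.f, hipY = (kp[8][1] + kp[11][1]) / 2.f;
    const float torso = std::hypot(hipX - kp[1][0], hipY - kp[1][1]);
    if (torso <= 0.f) return {};
    std::vector<float> f;
    f.reserve(36);
    for (const auto& p : kp) {
        if (missing(p)) { f.push_back(0.f); f.push_back(0.f); continue; }
        f.push_back((p[0] - kp[1][0]) / torso);
        f.push_back((p[1] - kp[1][1]) / torso);
    }
    return f;
}
```

These rows could be stacked into a CV_32F matrix and used to train OpenCV's `cv::ml::SVM` with the `cv::ml::ROW_SAMPLE` layout. One caveat of this encoding: an undetected joint looks the same as a joint lying exactly on the neck, so a real classifier may want an extra visibility flag per joint.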

The image assets are available on CSDN Download: search for "Anima2D的Robot图片" (Anima2D robot images).

#include <chrono>

#include <thread>

#include <gflags/gflags.h>

#ifndef GFLAGS_GFLAGS_H_

namespace gflags = google;

#endif

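#include <openpose/headers.hpp>

#include <opencv2/opencv.hpp>

#include <cmath>

#include <exception>

#include <memory>

#include <string>

#include <vector>

// A typed constant instead of a lowercase macro named pi, which could clash with identifiers in headers
static const double pi = 3.14159265358979323846;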

// MSVC only: link as a Windows GUI program (no console window) while still starting from main()
#pragma comment(linker, "/subsystem:\"windows\" /entry:\"mainCRTStartup\"" )

void saveBead(cv::Mat *background, cv::Mat *bead, int X_, int Y_, int tarX, int tarY);

int cvAdd4cMat_q(cv::Mat &dst, cv::Mat &scr, double scale);

cv::Mat rotate(cv::Mat src, double angle);

void getPoint(int *point, int srcX, int srcY, double angle, int srcImgCols, int srcImgRows, int tarImgCols, int tarImgRows);

cv::Mat getRobot(op::Array<float> poseKeypoints, double scale);

double getAngle(double p0_x, double p0_y, double p1_x, double p1_y);

void resizeImg(double scale);

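// Robot parameters. Parts are loaded with flag -1 (cv::IMREAD_UNCHANGED) to keep their alpha channel; the two backgrounds are loaded as 3-channel BGR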

int point[2] = { 0,0 };

double scale = 1;

cv::Mat

img0_s = cv::imread("D:/Robot/background0.png"),

img1_s = cv::imread("D:/Robot/1.png", -1),

img2_s = cv::imread("D:/Robot/2.png", -1),

head_s = cv::imread("D:/Robot/3.png", -1),

img4_s = cv::imread("D:/Robot/4.png", -1),

foot_s = cv::imread("D:/Robot/5.png", -1),

leg_s = cv::imread("D:/Robot/6.png", -1),

img7_s = cv::imread("D:/Robot/7.png", -1),

bead_s = cv::imread("D:/Robot/8.png", -1),

m1_s = cv::imread("D:/Robot/9.png", -1),

m2_s = cv::imread("D:/Robot/10.png", -1),

hand_s = cv::imread("D:/Robot/11.png", -1),

neck_s = cv::imread("D:/Robot/12.png", -1),

body_s = cv::imread("D:/Robot/13.png", -1),

circle_s = cv::imread("D:/Robot/14.png", -1),

img15_s = cv::imread("D:/Robot/15.png", -1),

handcenter_s = cv::imread("D:/Robot/16.png", -1), background,

b1= cv::imread("D:/Robot/background1.png")

;

cv::Mat img0 = img0_s.clone(),

img1 = img1_s.clone(),

img2 = img2_s.clone(),

head = head_s.clone(),

img4 = img4_s.clone(),

foot = foot_s.clone(),

leg = leg_s.clone(),

img7 = img7_s.clone(),

bead = bead_s.clone(),

m1 = m1_s.clone(),

m2 = m2_s.clone(),

hand = hand_s.clone(),

neck = neck_s.clone(),

body = body_s.clone(),

circle = circle_s.clone(),

img15 = img15_s.clone(),

handcenter = handcenter_s.clone();

int camera_rows = 720, camera_cols = 1280;

//int ccount = 0;

struct UserDatum : public op::Datum

{

bool boolThatUserNeedsForSomeReason;

UserDatum(const bool boolThatUserNeedsForSomeReason_ = false) :

boolThatUserNeedsForSomeReason{ boolThatUserNeedsForSomeReason_ }

{}

};

class WUserOutput : public op::WorkerConsumer<std::shared_ptr<std::vector<UserDatum>>>

{

public:

void initializationOnThread() {}

void workConsumer(const std::shared_ptr<std::vector<UserDatum>>& datumsPtr)

{

try

{

if (datumsPtr != nullptr && !datumsPtr->empty())

{

const auto& poseKeypoints = datumsPtr->at(0).poseKeypoints;

const auto& poseHeatMaps = datumsPtr->at(0).poseHeatMaps; // not used below

background = img0.clone();

cv::Mat d2 , d1 = datumsPtr->at(0).cvOutputData,temp, d0 = datumsPtr->at(0).cvInputData;

cv::resize(background, temp, cv::Size(640, 360), 0, 0, cv::INTER_LINEAR);

if (poseKeypoints.empty())

{

cv::imshow("Cartoon view", temp);

}

else

{

d2 = getRobot(poseKeypoints, scale);

cv::resize(d2, d2, cv::Size(640, 360), 0, 0, cv::INTER_LINEAR);

cv::imshow("Cartoon view", d2);

}

cv::resize(d0, d0, cv::Size(640, 360), 0, 0, cv::INTER_LINEAR);

cv::imshow("Camera view", d0);

const char key = (char)cv::waitKey(1);

if (key == 27)

this->stop();

}

}

catch (const std::exception& e)

{

this->stop();

op::error(e.what(), __LINE__, __FUNCTION__, __FILE__);

}

}

};

int openPoseDemo()

{

try

{

op::log("Starting OpenPose demo...", op::Priority::High);

const auto timerBegin = std::chrono::high_resolution_clock::now();

op::check(0 <= 3 && 3 <= 255, "Wrong logging_level value.",

__LINE__, __FUNCTION__, __FILE__);

op::ConfigureLog::setPriorityThreshold((op::Priority)3);

op::Profiler::setDefaultX(1000);

const auto outputSize = op::flagsToPoint("-1x-1", "-1x-1");

// netInputSize

const auto netInputSize = op::flagsToPoint("-1x256", "-1x368");

// faceNetInputSize

const auto faceNetInputSize = op::flagsToPoint("368x368", "368x368 (multiples of 16)");

// handNetInputSize

const auto handNetInputSize = op::flagsToPoint("368x368", "368x368 (multiples of 16)");

// producerType

const auto producerSharedPtr = op::flagsToProducer("", "", "", -1,

false, "-1x-1", 30,

"models/cameraParameters/flir/", !false,

(unsigned int)1, -1);

// poseModel

const auto poseModel = op::flagsToPoseModel("COCO");

// JSON saving

//if (!FLAGS_write_keypoint.empty())

// op::log("Flag `write_keypoint` is deprecated and will eventually be removed."

// " Please, use `write_json` instead.", op::Priority::Max);

// keypointScale

const auto keypointScale = op::flagsToScaleMode(0);

// heatmaps to add

const auto heatMapTypes = op::flagsToHeatMaps(false, false,

false);

const auto heatMapScale = op::flagsToHeatMapScaleMode(2);

// >1 camera view?

const auto multipleView = (false || 1 > 1 || false);

// Enabling Google Logging

const bool enableGoogleLogging = true;

op::Wrapper<std::vector<UserDatum>> opWrapper;

auto wUserOutput = std::make_shared<WUserOutput>();

// Add custom processing

const auto workerOutputOnNewThread = true;

opWrapper.setWorkerOutput(wUserOutput, workerOutputOnNewThread);

// Pose configuration (use WrapperStructPose{} for default and recommended configuration)

const op::WrapperStructPose wrapperStructPose{

!false, netInputSize, outputSize, keypointScale, -1, 0,

1, (float)0.3, op::flagsToRenderMode(-1, multipleView),

poseModel, !false, (float)0.6, (float)0.7,

0, "models/", heatMapTypes, heatMapScale, false,

(float)0.05, -1, enableGoogleLogging };

// Face configuration (use op::WrapperStructFace{} to disable it)

const op::WrapperStructFace wrapperStructFace{

false, faceNetInputSize, op::flagsToRenderMode(-1, multipleView, -1),

(float)0.6, (float)0.7, (float)0.2 };

// Hand configuration (use op::WrapperStructHand{} to disable it)

const op::WrapperStructHand wrapperStructHand{

false, handNetInputSize, 1, (float)0.4, false,

op::flagsToRenderMode(-1, multipleView, -1), (float)0.6,

(float)0.7, (float)0.2 };

// Extra functionality configuration (use op::WrapperStructExtra{} to disable it)

const op::WrapperStructExtra wrapperStructExtra{

false, -1, false, -1, 0 };

// Producer (use default to disable any input)

const op::WrapperStructInput wrapperStructInput{producerSharedPtr, 0, (unsigned int)-1, false, false, 0, false };

const auto displayMode = op::DisplayMode::NoDisplay;

const bool guiVerbose = false;

const bool fullScreen = false;

const op::WrapperStructOutput wrapperStructOutput{

displayMode, guiVerbose, fullScreen, "",

op::stringToDataFormat("yml"), "", "",

"", "", "png", "",

30, "", "png", "",

"", "", "8051" };

// Configure wrapper

opWrapper.configure(wrapperStructPose, wrapperStructFace, wrapperStructHand, wrapperStructExtra,

wrapperStructInput, wrapperStructOutput);

// Set to single-thread running (to debug and/or reduce latency)

if (false)

opWrapper.disableMultiThreading();

op::log("Starting thread(s)...", op::Priority::High);

opWrapper.exec();

const auto now = std::chrono::high_resolution_clock::now();

const auto totalTimeSec = (double)std::chrono::duration_cast<std::chrono::nanoseconds>(now - timerBegin).count()

* 1e-9;

const auto message = "OpenPose demo successfully finished. Total time: "

+ std::to_string(totalTimeSec) + " seconds.";

op::log(message, op::Priority::High);

return 0;

}

catch (const std::exception& e)

{

op::error(e.what(), __LINE__, __FUNCTION__, __FILE__);

return -1;

}

}

int main(int argc, char *argv[])

{

resizeImg(0.7);

gflags::ParseCommandLineFlags(&argc, &argv, true);

return openPoseDemo();

}

cv::Mat getRobot(op::Array<float> poseKeypoints, double scale)

{

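//Build a complete robot from the incoming keypoints: starting from the background, the parts are pasted on one at a time. The hardest part is working out how rotating one part drags along the parts attached to it

//Each part's position is specified relative to the part it hangs from

//Parts are pasted through pointers into the shared background, so it must be a fresh clone for every frame (workConsumer does background = img0.clone()); otherwise each frame is drawn over the previous one and leaves a lot of ghosting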

for (int person = 0; person < poseKeypoints.getSize(0); person++)

{

//std::cout << sqrt((poseKeypoints[{person, 1, 0}] - poseKeypoints[{person, 0, 0}])*(poseKeypoints[{person, 1, 0}] - poseKeypoints[{person, 0, 0}]) + (poseKeypoints[{person, 1, 1}] - poseKeypoints[{person, 0, 1}])*(poseKeypoints[{person, 1, 1}] - poseKeypoints[{person, 0, 1}])) << std::endl;

int center_x = background.cols *poseKeypoints[{person, 1, 0}] * 1.0 / camera_cols;

int center_y = background.rows *poseKeypoints[{person, 1, 1}] * 1.0 / camera_rows;

double p0_x, p0_y, p1_x, p1_y, x, y, a;

double bodyAngle = getAngle(poseKeypoints[{person, 1, 0}], poseKeypoints[{person, 1, 1}], (poseKeypoints[{person, 8, 0}] + poseKeypoints[{person, 11, 0}]) / 2.0, (poseKeypoints[{person, 8, 1}] + poseKeypoints[{person, 11, 1}]) / 2.0);

if (poseKeypoints[{person, 1, 0}] == 0 || poseKeypoints[{person, 1, 1}] == 0 || poseKeypoints[{person, 8, 0}] == 0 || poseKeypoints[{person, 11, 0}] == 0 || poseKeypoints[{person, 8, 1}] == 0 || poseKeypoints[{person, 11, 1}] == 0)

bodyAngle = 0;

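//Following OpenPose's keypoint layout, the body's tilt angle above is computed from keypoint 1 (neck) and the midpoint of keypoints 8 and 11 (the hips)

cv::Mat neck_ = rotate(neck, bodyAngle);//the neck tilts with the body

getPoint(point, neck.cols / 2, neck.rows / 2, bodyAngle, neck.cols, neck.rows, neck_.cols, neck_.rows);//anchor point on the rotated part

saveBead(&background, &neck_, center_x, center_y, point[0], point[1]);//paste the rotated part onto the background

cv::Mat body_ = rotate(body, bodyAngle + 7);//rotated image of the body

int body_0_X = body.cols / 2, body_0_Y = 25 * scale, //five anchor points chosen on the original image: the neck joint and the four limb joints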

body_1_X = 15 * scale, body_1_Y = body.rows / 5,

body_2_X = body.cols - 10 * scale, body_2_Y = body.rows / 5,

body_3_X = body.cols / 4, body_3_Y = body.rows * 4 / 5,

body_4_X = body.cols * 3 / 4, body_4_Y = body.rows * 4 / 5;

//coordinates of the five anchor points after the body has been rotated

getPoint(point, body_0_X, body_0_Y, bodyAngle, body.cols, body.rows, body_.cols, body_.rows);

body_0_X = point[0]; body_0_Y = point[1];

getPoint(point, body_1_X, body_1_Y, bodyAngle, body.cols, body.rows, body_.cols, body_.rows);

body_1_X = point[0]; body_1_Y = point[1];

getPoint(point, body_2_X, body_2_Y, bodyAngle, body.cols, body.rows, body_.cols, body_.rows);

body_2_X = point[0]; body_2_Y = point[1];

getPoint(point, body_3_X, body_3_Y, bodyAngle, body.cols, body.rows, body_.cols, body_.rows);

body_3_X = point[0]; body_3_Y = point[1];

getPoint(point, body_4_X, body_4_Y, bodyAngle, body.cols, body.rows, body_.cols, body_.rows);

body_4_X = point[0]; body_4_Y = point[1];

//paste the two green friction pads of the lower body first: the body is drawn over them, so they must go on before it

cv::Mat m1_ = rotate(m1, bodyAngle);

saveBead(&background, &m1_, body_3_X - body_0_X + center_x, 15 * scale + body_3_Y - body_0_Y + center_y, m1_.cols / 2, m1_.rows / 2);

saveBead(&background, &m1_, body_4_X - body_0_X + center_x, 15 * scale + body_4_Y - body_0_Y + center_y, m1_.cols / 2, m1_.rows / 2);

saveBead(&background, &body_, center_x, center_y, body_0_X, body_0_Y);//paste the rotated body onto the background

//Left arm (keypoints 2, 3, 4)

double m_1x, m_1y, m_0x, m_0y, m_2x, m_2y, m_3x, m_3y;

//upper arm

double hand1Angle = getAngle(poseKeypoints[{person, 2, 0}], poseKeypoints[{person, 2, 1}], poseKeypoints[{person, 3, 0}], poseKeypoints[{person, 3, 1}]);

cv::Mat hand1 = rotate(hand, hand1Angle), m2_ = rotate(m2, hand1Angle);

getPoint(point, hand.cols / 2, 0, hand1Angle, hand.cols, hand.rows, hand1.cols, hand1.rows);//top-centre anchor on the rotated part

m_0x = point[0]; m_0y = point[1];

getPoint(point, hand.cols / 2, hand.rows, hand1Angle, hand.cols, hand.rows, hand1.cols, hand1.rows);//bottom-centre anchor on the rotated part

m_1x = point[0]; m_1y = point[1];

//forearm

double hand2Angle = getAngle(poseKeypoints[{person, 3, 0}], poseKeypoints[{person, 3, 1}], poseKeypoints[{person, 4, 0}], poseKeypoints[{person, 4, 1}]);

cv::Mat hand2 = rotate(hand, hand2Angle), hand2center = rotate(handcenter, hand2Angle + 180);

getPoint(point, hand.cols / 2, 0, hand2Angle, hand.cols, hand.rows, hand2.cols, hand2.rows);//top-centre anchor on the rotated part

m_2x = point[0]; m_2y = point[1];

getPoint(point, hand.cols / 2, hand.rows, hand2Angle, hand.cols, hand.rows, hand2.cols, hand2.rows);//bottom-centre anchor on the rotated part

m_3x = point[0]; m_3y = point[1];

//paste the whole arm

saveBead(&background, &hand1, body_1_X - body_0_X + center_x, body_1_Y - body_0_Y + center_y, m_0x, m_0y);

saveBead(&background, &hand2, m_3x - m_2x + m_1x - m_0x + body_1_X - body_0_X + center_x, m_3y - m_2y + m_1y - m_0y + body_1_Y - body_0_Y + center_y, m_3x, m_3y);

saveBead(&background, &circle, m_1x - m_0x + body_1_X - body_0_X + center_x, m_1y - m_0y + body_1_Y - body_0_Y + center_y, circle.cols / 2, circle.rows / 2);

saveBead(&background, &hand2center, m_3x - m_2x + m_1x - m_0x + body_1_X - body_0_X + center_x, m_3y - m_2y + m_1y - m_0y + body_1_Y - body_0_Y + center_y, hand2center.cols / 2, hand2center.rows / 2);

saveBead(&background, &bead, body_1_X - body_0_X + center_x, body_1_Y - body_0_Y + center_y, bead.cols / 2, bead.rows / 2);

//Head (keypoints 0 and 1)

double headAngle = getAngle(poseKeypoints[{person, 0, 0}], poseKeypoints[{person, 0, 1}], poseKeypoints[{person, 1, 0}], poseKeypoints[{person, 1, 1}]);

cv::Mat head_ = rotate(head, headAngle);

getPoint(point, head.cols / 2 - 30 * scale, head.rows, headAngle, head.cols, head.rows, head_.cols, head_.rows);//anchor on the bottom edge of the rotated head

saveBead(&background, &head_, center_x, center_y, point[0], point[1]);//paste the rotated part onto the background

//Right arm (keypoints 5, 6, 7)

//upper arm

double hand3Angle = getAngle(poseKeypoints[{person, 5, 0}], poseKeypoints[{person, 5, 1}], poseKeypoints[{person, 6, 0}], poseKeypoints[{person, 6, 1}]);

cv::Mat hand3 = rotate(hand, hand3Angle);

getPoint(point, hand.cols / 2, 0, hand3Angle, hand.cols, hand.rows, hand3.cols, hand3.rows);//top-centre anchor on the rotated part

m_0x = point[0]; m_0y = point[1];

getPoint(point, hand.cols / 2, hand.rows, hand3Angle, hand.cols, hand.rows, hand3.cols, hand3.rows);//bottom-centre anchor on the rotated part

m_1x = point[0]; m_1y = point[1];

//forearm

double hand4Angle = getAngle(poseKeypoints[{person, 6, 0}], poseKeypoints[{person, 6, 1}], poseKeypoints[{person, 7, 0}], poseKeypoints[{person, 7, 1}]);

cv::Mat hand4 = rotate(hand, hand4Angle), hand4center = rotate(handcenter, hand4Angle);

getPoint(point, hand.cols / 2, 0, hand4Angle, hand.cols, hand.rows, hand4.cols, hand4.rows);//top-centre anchor on the rotated part

m_2x = point[0]; m_2y = point[1];

getPoint(point, hand.cols / 2, hand.rows, hand4Angle, hand.cols, hand.rows, hand4.cols, hand4.rows);//bottom-centre anchor on the rotated part

m_3x = point[0]; m_3y = point[1];

//paste the whole arm

saveBead(&background, &hand3, body_2_X - body_0_X + center_x, body_2_Y - body_0_Y + center_y, m_0x, m_0y);

saveBead(&background, &hand4, m_3x - m_2x + m_1x - m_0x + body_2_X - body_0_X + center_x, m_3y - m_2y + m_1y - m_0y + body_2_Y - body_0_Y + center_y, m_3x, m_3y);

saveBead(&background, &circle, m_1x - m_0x + body_2_X - body_0_X + center_x, m_1y - m_0y + body_2_Y - body_0_Y + center_y, circle.cols / 2, circle.rows / 2);

saveBead(&background, &hand4center, m_3x - m_2x + m_1x - m_0x + body_2_X - body_0_X + center_x, m_3y - m_2y + m_1y - m_0y + body_2_Y - body_0_Y + center_y, hand4center.cols / 2, hand4center.rows / 2);

saveBead(&background, &bead, body_2_X - body_0_X + center_x, body_2_Y - body_0_Y + center_y, bead.cols / 2, bead.rows / 2);

//Left leg (keypoints 8, 9, 10)

//thigh

double leg1Angle = getAngle(poseKeypoints[{person, 8, 0}], poseKeypoints[{person, 8, 1}], poseKeypoints[{person, 9, 0}], poseKeypoints[{person, 9, 1}]);

cv::Mat leg1 = rotate(leg, leg1Angle);

getPoint(point, leg.cols / 2, 0, leg1Angle, leg.cols, leg.rows, leg1.cols, leg1.rows);//top-centre anchor on the rotated part

m_0x = point[0]; m_0y = point[1];

getPoint(point, leg.cols / 2, leg.rows, leg1Angle, leg.cols, leg.rows, leg1.cols, leg1.rows);//bottom-centre anchor on the rotated part

m_1x = point[0]; m_1y = point[1];

//lower leg

double leg2Angle = getAngle(poseKeypoints[{person, 9, 0}], poseKeypoints[{person, 9, 1}], poseKeypoints[{person, 10, 0}], poseKeypoints[{person, 10, 1}]);

cv::Mat leg2 = rotate(leg, leg2Angle), foot2 = rotate(foot, leg2Angle);

getPoint(point, leg.cols / 2, 0, leg2Angle, leg.cols, leg.rows, leg2.cols, leg2.rows);//top-centre anchor on the rotated part

m_2x = point[0]; m_2y = point[1];

getPoint(point, leg.cols / 2, leg.rows, leg2Angle, leg.cols, leg.rows, leg2.cols, leg2.rows);//bottom-centre anchor on the rotated part

m_3x = point[0]; m_3y = point[1];

//paste the whole left leg

double f_x, f_y;

getPoint(point, foot.cols * 3 / 5 + 10 * scale, 0, leg2Angle, foot.cols, foot.rows, foot2.cols, foot2.rows);//anchor on the top edge of the rotated foot, where it attaches to the leg

f_x = point[0]; f_y = point[1];

saveBead(&background, &leg1, body_3_X - body_0_X + center_x, body_3_Y - body_0_Y + center_y, m_0x, m_0y);

saveBead(&background, &leg2, m_1x - m_0x + body_3_X - body_0_X + center_x, m_1y - m_0y + body_3_Y - body_0_Y + center_y, m_2x, m_2y);

saveBead(&background, &bead, m_1x - m_0x + body_3_X - body_0_X + center_x, m_1y - m_0y + body_3_Y - body_0_Y + center_y, bead.cols / 2, bead.rows / 2);

saveBead(&background, &foot2, m_3x - m_2x + m_1x - m_0x + body_3_X - body_0_X + center_x, m_3y - m_2y + m_1y - m_0y + body_3_Y - body_0_Y + center_y, f_x, f_y);

saveBead(&background, &circle, m_3x - m_2x + m_1x - m_0x + body_3_X - body_0_X + center_x, m_3y - m_2y + m_1y - m_0y + body_3_Y - body_0_Y + center_y, circle.cols / 2, circle.rows / 2);

//Right leg (keypoints 11, 12, 13)

//thigh

double leg3Angle = getAngle(poseKeypoints[{person, 11, 0}], poseKeypoints[{person, 11, 1}], poseKeypoints[{person, 12, 0}], poseKeypoints[{person, 12, 1}]);

cv::Mat leg3 = rotate(leg, leg3Angle);

getPoint(point, leg.cols / 2, 0, leg3Angle, leg.cols, leg.rows, leg3.cols, leg3.rows);//top-centre anchor on the rotated part

m_0x = point[0]; m_0y = point[1];

getPoint(point, leg.cols / 2, leg.rows, leg3Angle, leg.cols, leg.rows, leg3.cols, leg3.rows);//bottom-centre anchor on the rotated part

m_1x = point[0]; m_1y = point[1];

//lower leg

double leg4Angle = getAngle(poseKeypoints[{person, 12, 0}], poseKeypoints[{person, 12, 1}], poseKeypoints[{person, 13, 0}], poseKeypoints[{person, 13, 1}]);

cv::Mat leg4 = rotate(leg, leg4Angle), foot4 = rotate(foot, leg4Angle);

getPoint(point, leg.cols / 2, 0, leg4Angle, leg.cols, leg.rows, leg4.cols, leg4.rows);//top-centre anchor on the rotated part

m_2x = point[0]; m_2y = point[1];

getPoint(point, leg.cols / 2, leg.rows, leg4Angle, leg.cols, leg.rows, leg4.cols, leg4.rows);//bottom-centre anchor on the rotated part

m_3x = point[0]; m_3y = point[1];

//paste the whole right leg

getPoint(point, foot.cols * 3 / 5 + 10 * scale, 0, leg4Angle, foot.cols, foot.rows, foot4.cols, foot4.rows);//anchor on the top edge of the rotated foot, where it attaches to the leg

f_x = point[0]; f_y = point[1];

saveBead(&background, &leg3, body_4_X - body_0_X + center_x, body_4_Y - body_0_Y + center_y, m_0x, m_0y);

saveBead(&background, &leg4, m_1x - m_0x + body_4_X - body_0_X + center_x, m_1y - m_0y + body_4_Y - body_0_Y + center_y, m_2x, m_2y);

saveBead(&background, &bead, m_1x - m_0x + body_4_X - body_0_X + center_x, m_1y - m_0y + body_4_Y - body_0_Y + center_y, bead.cols / 2, bead.rows / 2);

saveBead(&background, &foot4, m_3x - m_2x + m_1x - m_0x + body_4_X - body_0_X + center_x, m_3y - m_2y + m_1y - m_0y + body_4_Y - body_0_Y + center_y, f_x, f_y);

saveBead(&background, &circle, m_3x - m_2x + m_1x - m_0x + body_4_X - body_0_X + center_x, m_3y - m_2y + m_1y - m_0y + body_4_Y - body_0_Y + center_y, circle.cols / 2, circle.rows / 2);

}

return background;

}

double getAngle(double p0_x, double p0_y, double p1_x, double p1_y)

{

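//Computes a joint's rotation angle from the coordinates of two keypoints

//Convention chosen from practice: a part hanging vertically has angle 0, tilting to the right gives a positive angle and tilting to the left a negative one

//The sign of the slope and the relative position of the two keypoints are both taken into account, which also tells whether an arm is raised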

double bodyAngle, body_X, body_Y, x, y, a;

x = p1_x - p0_x, y = -(p1_y - p0_y);

a = sqrt(x*x + y*y);

if (a == 0) bodyAngle = 0;

else if (p0_x == 0 || p0_y == 0 || p1_x == 0 || p1_y == 0) bodyAngle = 0;

else

{

bodyAngle = asin(std::fabs(x) / a)*180.0 / pi; // std::fabs: a plain abs() may resolve to the int overload and truncate

if (x / y < 0)

{

if (y>0)

bodyAngle = bodyAngle - 180;

}

else

{

if (y < 0)

bodyAngle = -bodyAngle;

else

bodyAngle = 180 - bodyAngle;

}

}

return bodyAngle;

}

void saveBead(cv::Mat *background, cv::Mat *bead, int X_, int Y_, int tarX, int tarY)

{

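//saveBead pastes the part bead onto background. X_, Y_ is the fixed (anchor) point on the background

//and tarX, tarY is the anchor point inside the part; the part is placed so that the two points coincide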

if (X_ == 0 || Y_ == 0 || tarX == 0 || tarY == 0) return;

if ((X_ - tarX) >= 0 && (Y_ - tarY) >= 0)

{

if ((X_ - tarX) + (*bead).cols > (*background).cols || (Y_ - tarY) + (*bead).rows > (*background).rows) return;

if (X_ - tarX == 0 || Y_ - tarY == 0) return;

cv::Mat middle(

*background,

cv::Rect(

X_ - tarX,

Y_ - tarY,

(*bead).cols,

(*bead).rows

)

);

cvAdd4cMat_q(middle, *bead, 1.0);

}

else return;

}

void getPoint(int *point, int srcX, int srcY, double angle, int srcImgCols, int srcImgRows, int tarImgCols, int tarImgRows)

{

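//Each part exists as an original image and a rotated image. On the original I pick a few points where other parts are to be attached, but rotation moves those points

//getPoint therefore returns the coordinates, in the rotated image, of a point chosen on the original image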

int tarX = cos(angle*(pi / 180.0))*(srcX - srcImgCols / 2)

- sin(angle*(pi / 180.0))*(-(srcY - srcImgRows / 2))

+ tarImgCols / 2,

tarY = -(sin(angle*(pi / 180.0))*(srcX - srcImgCols / 2)

+ cos(angle*(pi / 180.0))*(-(srcY - srcImgRows / 2)))

+ tarImgRows / 2;

point[0] = tarX;

point[1] = tarY;

}

int cvAdd4cMat_q(cv::Mat &dst, cv::Mat &scr, double scale)

{//alpha-blend a 4-channel (BGRA) part onto a 3-channel (BGR) image

if (dst.channels() != 3 || scr.channels() != 4)

{

return true;

}

if (scale < 0.01)

return false;

std::vector<cv::Mat> scr_channels;

std::vector<cv::Mat> dstt_channels;

cv::split(scr, scr_channels);

cv::split(dst, dstt_channels);

CV_Assert(scr_channels.size() == 4 && dstt_channels.size() == 3);

if (scale < 1)

{

scr_channels[3] *= scale;

scale = 1;

}

for (int i = 0; i < 3; i++)

{

dstt_channels[i] = dstt_channels[i].mul(255.0 / scale - scr_channels[3], scale / 255.0);

dstt_channels[i] += scr_channels[i].mul(scr_channels[3], scale / 255.0);

}

merge(dstt_channels, dst);

return true;

}

void resizeImg(double scale)

{

cv::resize(img1_s, img1, cv::Size(img1_s.cols * scale, img1_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(img2_s, img2, cv::Size(img2_s.cols * scale, img2_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(head_s, head, cv::Size(head_s.cols * scale, head_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(img4_s, img4, cv::Size(img4_s.cols * scale, img4_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(foot_s, foot, cv::Size(foot_s.cols * scale, foot_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(leg_s, leg, cv::Size(leg_s.cols * scale, leg_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(img7_s, img7, cv::Size(img7_s.cols * scale, img7_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(bead_s, bead, cv::Size(bead_s.cols * scale, bead_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(m1_s, m1, cv::Size(m1_s.cols * scale, m1_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(m2_s, m2, cv::Size(m2_s.cols * scale, m2_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(hand_s, hand, cv::Size(hand_s.cols * scale, hand_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(neck_s, neck, cv::Size(neck_s.cols * scale, neck_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(body_s, body, cv::Size(body_s.cols * scale, body_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(circle_s, circle, cv::Size(circle_s.cols * scale, circle_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(img15_s, img15, cv::Size(img15_s.cols * scale, img15_s.rows * scale), 0, 0, cv::INTER_LINEAR);

cv::resize(handcenter_s, handcenter, cv::Size(handcenter_s.cols * scale, handcenter_s.rows * scale), 0, 0, cv::INTER_LINEAR);

}

cv::Mat rotate(cv::Mat src, double angle)

{

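//Rotate src by angle degrees: positive turns it counter-clockwise on screen, which swings a downward-hanging part to the right; negative swings it to the left. The canvas is enlarged to the rotated bounding box so nothing is cut off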

cv::Point2f center(src.cols / 2, src.rows / 2);

cv::Mat rot = cv::getRotationMatrix2D(center, angle, 1);

cv::Rect bbox = cv::RotatedRect(center, src.size(), angle).boundingRect();

rot.at<double>(0, 2) += bbox.width / 2.0 - center.x;

rot.at<double>(1, 2) += bbox.height / 2.0 - center.y;

cv::Mat dst;

cv::warpAffine(src, dst, rot, bbox.size());

return dst;

}
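For reference, the branches in getAngle amount to a single atan2 call. Take dx and dy in image coordinates (y pointing down); the angle measured from "straight down" towards +x is then atan2(dx, dy). The sketch below uses the hypothetical name limbAngle and keeps the original's rule of returning 0 when a keypoint is (0, 0).

As far as I can tell, it agrees with getAngle up to rounding everywhere except one case: an exactly horizontal limb pointing left. There, the original's x / y division sends the result to +90 instead of -90.

```cpp
#include <cassert>
#include <cmath>

constexpr double kPi = 3.14159265358979323846;

// Same convention as getAngle(): 0 = pointing straight down the image, positive = towards +x,
// result in (-180, 180]. With image coordinates (y down) this is simply atan2(dx, dy).
double limbAngle(double p0x, double p0y, double p1x, double p1y) {
    // Keep the original's guard: OpenPose reports undetected keypoints as (0, 0).
    if (p0x == 0 || p0y == 0 || p1x == 0 || p1y == 0) return 0.0;
    const double dx = p1x - p0x, dy = p1y - p0y;
    if (dx == 0 && dy == 0) return 0.0;
    return std::atan2(dx, dy) * 180.0 / kPi;
}
```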