BERT study notes: running and reading create_pretraining_data.py

1 Introduction

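This post mainly records my own understanding, so that I don't have to start from scratch when I come back to it later. If you happen to come across it and find it a little helpful, I'm glad; if not, thank you for reading anyway.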

2 Running the script

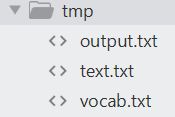

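First, how to run it: watching the output while the script runs makes the code much easier to follow. Create a tmp folder for the three paths that must be passed on the command line: text.txt and vocab.txt (inputs) and output.txt (the output, which the script creates itself).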

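text.txt holds the training text; any text will do. The script expects one sentence per line, with a blank line between documents (see the comments in create_training_instances below). The text I used: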

写诗做文章都是千古之事,而其中甘苦得失只有作者自己心里知道。

各位作者都是不同地位的人,怎么会空有虚名呢?这两句话表达杜甫晚年对诗歌创作的见解,带有总结性质。

上句“千古事”是指留传久远,关系重大,下句“寸心知”是说对于文章,作者本人的理解感知最为明白。

这两句诗虽是以议论入诗,但对仗工整,语言高度概括,而且切中肯綮,含蕴丰富,很有哲理性。

vocab.txt can be found by unzipping the Chinese BERT model released by Google.

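output.txt is the required output file (a file, not a directory); despite the .txt extension it will contain binary TFRecord data.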
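Before running, it can help to check how the script will split text.txt. The following is a minimal sketch of the reading loop in create_training_instances (section 3.3), not the script itself: `read_documents` is a hypothetical helper, and `list(line)` stands in for `tokenizer.tokenize`, on the assumption that the FullTokenizer splits Chinese text into roughly one token per character.

```python
def read_documents(text):
    """Split raw text into documents -> sentences -> tokens.

    Mirrors the reading loop of create_training_instances: one sentence per
    line, and an empty line starts a new document. list(line) is a stand-in
    for tokenizer.tokenize (assumption: about one token per Chinese character).
    """
    all_documents = [[]]
    for line in text.splitlines():
        line = line.strip()
        if not line:  # empty line = document delimiter
            all_documents.append([])
        tokens = list(line)  # stand-in tokenizer
        if tokens:
            all_documents[-1].append(tokens)
    # Drop empty documents (e.g. produced by consecutive blank lines)
    return [doc for doc in all_documents if doc]


sample = "写诗做文章都是千古之事。\n甘苦得失只有作者自己心里知道。\n\n这两句诗对仗工整。\n"
docs = read_documents(sample)
print(len(docs))               # 2
print([len(d) for d in docs])  # [2, 1]
```

Only sentences inside the same document can become an "actual next" pair, so a file with a blank line after every sentence would give one-sentence documents.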

```shell
python create_pretraining_data.py --input_file=tmp/text.txt --output_file=tmp/output.txt --vocab_file=tmp/vocab.txt
```

2 Source code walkthrough

2.1 Required arguments

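```python
import collections
import random

import tensorflow as tf  # TF 1.x APIs: tf.flags, tf.gfile, tf.python_io, tf.logging
import tokenization      # tokenization.py from the BERT repository

flags = tf.flags
FLAGS = flags.FLAGS

flags.DEFINE_string("input_file", None,
                    "Input raw text file (or comma-separated list of files).")

flags.DEFINE_string(
    "output_file", None,
    "Output TF example file (or comma-separated list of files).")

flags.DEFINE_string("vocab_file", None,
                    "The vocabulary file that the BERT model was trained on.")
```

- input_file: path of the input file(s)
- output_file: path of the output file(s)
- vocab_file: path of the vocabulary file (found in the pre-trained model)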

2.2 Optional arguments

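```python
flags.DEFINE_bool(
    "do_lower_case", True,
    "Whether to lower case the input text. Should be True for uncased "
    "models and False for cased models.")

flags.DEFINE_integer("max_seq_length", 128, "Maximum sequence length.")

flags.DEFINE_integer("max_predictions_per_seq", 20,
                     "Maximum number of masked LM predictions per sequence.")
```

- do_lower_case: whether to lowercase the input; default True
- max_seq_length: maximum sequence length in tokens, including [CLS] and [SEP]; default 128
- max_predictions_per_seq: maximum number of tokens the masked LM predicts in one sequence (a count); default 20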

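```python
flags.DEFINE_integer("random_seed", 12345, "Random seed for data generation.")

flags.DEFINE_integer(
    "dupe_factor", 10,
    "Number of times to duplicate the input data (with different masks).")

flags.DEFINE_float("masked_lm_prob", 0.15, "Masked LM probability.")

flags.DEFINE_float(
    "short_seq_prob", 0.1,
    "Probability of creating sequences which are shorter than the "
    "maximum length.")
```

- random_seed: random seed used for data generation
- dupe_factor: number of times the input data is duplicated, each copy with different masks; default 10
- masked_lm_prob: fraction of tokens masked for the masked LM; default 0.15
- short_seq_prob: probability of creating a sequence shorter than the maximum length; default 0.1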
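The masking flags combine in create_masked_lm_predictions (section 3.5) to decide how many tokens are masked per sequence. A small sketch of that formula; the function name `num_to_predict` is borrowed from the variable in the script, and `seq_len` is assumed to count [CLS]/[SEP] as `len(tokens)` does there:

```python
def num_to_predict(seq_len, masked_lm_prob=0.15, max_predictions_per_seq=20):
    """Number of masked positions for a sequence of seq_len tokens.

    Same formula as create_masked_lm_predictions: about masked_lm_prob of the
    tokens, at least 1, capped at max_predictions_per_seq.
    """
    return min(max_predictions_per_seq,
               max(1, int(round(seq_len * masked_lm_prob))))


print(num_to_predict(128))  # 0.15 * 128 = 19.2 -> 19
print(num_to_predict(5))    # 0.75 -> 1
print(num_to_predict(512))  # 76.8 -> 77, capped at 20
```

With the defaults (128 tokens, 0.15) a full-length sequence gets 19 predictions, just under the cap of 20; the cap only matters if max_seq_length is raised without raising max_predictions_per_seq.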

3 Training instances

3.1 A single training instance

```python
class TrainingInstance(object):
  """A single training instance (sentence pair)."""

  # Initialization
  def __init__(self, tokens, segment_ids, masked_lm_positions, masked_lm_labels,
               is_random_next):
    self.tokens = tokens
    self.segment_ids = segment_ids
    self.is_random_next = is_random_next
    self.masked_lm_positions = masked_lm_positions
    self.masked_lm_labels = masked_lm_labels

  # String representation
  def __str__(self):
    s = ""
    s += "tokens: %s\n" % (" ".join(
        [tokenization.printable_text(x) for x in self.tokens]))
    s += "segment_ids: %s\n" % (" ".join([str(x) for x in self.segment_ids]))
    s += "is_random_next: %s\n" % self.is_random_next
    s += "masked_lm_positions: %s\n" % (" ".join(
        [str(x) for x in self.masked_lm_positions]))
    s += "masked_lm_labels: %s\n" % (" ".join(
        [tokenization.printable_text(x) for x in self.masked_lm_labels]))
    s += "\n"
    return s

  def __repr__(self):
    return self.__str__()
```

3.2 Writing training instances to files

```python
def write_instance_to_example_files(instances, tokenizer, max_seq_length,
                                    max_predictions_per_seq, output_files):
  """Create TF example files from `TrainingInstance`s."""
  writers = []
  for output_file in output_files:
    writers.append(tf.python_io.TFRecordWriter(output_file))

  writer_index = 0

  total_written = 0
  for (inst_index, instance) in enumerate(instances):
    # Convert tokens to ids using vocab.txt (see tokenization.py for details)
    input_ids = tokenizer.convert_tokens_to_ids(instance.tokens)
    input_mask = [1] * len(input_ids)
    segment_ids = list(instance.segment_ids)
    assert len(input_ids) <= max_seq_length

    # Pad with 0 up to max_seq_length
    while len(input_ids) < max_seq_length:
      input_ids.append(0)
      input_mask.append(0)
      segment_ids.append(0)

    assert len(input_ids) == max_seq_length
    assert len(input_mask) == max_seq_length
    assert len(segment_ids) == max_seq_length

    masked_lm_positions = list(instance.masked_lm_positions)
    masked_lm_ids = tokenizer.convert_tokens_to_ids(instance.masked_lm_labels)
    masked_lm_weights = [1.0] * len(masked_lm_ids)

    while len(masked_lm_positions) < max_predictions_per_seq:
      masked_lm_positions.append(0)
      masked_lm_ids.append(0)
      masked_lm_weights.append(0.0)

    next_sentence_label = 1 if instance.is_random_next else 0

    features = collections.OrderedDict()
    features["input_ids"] = create_int_feature(input_ids)
    features["input_mask"] = create_int_feature(input_mask)
    features["segment_ids"] = create_int_feature(segment_ids)
    features["masked_lm_positions"] = create_int_feature(masked_lm_positions)
    features["masked_lm_ids"] = create_int_feature(masked_lm_ids)
    features["masked_lm_weights"] = create_float_feature(masked_lm_weights)
    features["next_sentence_labels"] = create_int_feature([next_sentence_label])

    tf_example = tf.train.Example(features=tf.train.Features(feature=features))

    writers[writer_index].write(tf_example.SerializeToString())
    writer_index = (writer_index + 1) % len(writers)

    total_written += 1

    if inst_index < 20:
      tf.logging.info("*** Example ***")
      tf.logging.info("tokens: %s" % " ".join(
          [tokenization.printable_text(x) for x in instance.tokens]))

      for feature_name in features.keys():
        feature = features[feature_name]
        values = []
        if feature.int64_list.value:
          values = feature.int64_list.value
        elif feature.float_list.value:
          values = feature.float_list.value
        tf.logging.info(
            "%s: %s" % (feature_name, " ".join([str(x) for x in values])))

  for writer in writers:
    writer.close()

  tf.logging.info("Wrote %d total instances", total_written)
```

This function writes the formatted training data to TFRecord files; its job is to serialize the already-processed instances into binary files. Each sequence is zero-padded to max_seq_length (input_mask is 1 for real tokens and 0 for padding), the masked LM lists are padded to max_predictions_per_seq with weight 0.0, and instances are spread round-robin over the output files. The first 20 instances are also logged.
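The padding logic can be tried without TensorFlow. A minimal pure-Python sketch, assuming a hypothetical `pad_instance` helper and made-up token ids; it returns plain lists where the script builds tf.train.Feature objects:

```python
def pad_instance(input_ids, segment_ids, masked_lm_positions, masked_lm_ids,
                 is_random_next, max_seq_length, max_predictions_per_seq):
    """Pad one instance the way write_instance_to_example_files does (sketch)."""
    assert len(input_ids) <= max_seq_length
    input_ids = list(input_ids)
    input_mask = [1] * len(input_ids)  # 1 marks a real token
    segment_ids = list(segment_ids)
    while len(input_ids) < max_seq_length:  # pad with 0
        input_ids.append(0)
        input_mask.append(0)
        segment_ids.append(0)

    masked_lm_positions = list(masked_lm_positions)
    masked_lm_ids = list(masked_lm_ids)
    masked_lm_weights = [1.0] * len(masked_lm_ids)  # 1.0 marks a real prediction
    while len(masked_lm_positions) < max_predictions_per_seq:
        masked_lm_positions.append(0)
        masked_lm_ids.append(0)
        masked_lm_weights.append(0.0)  # 0.0 marks a padded slot

    return {
        "input_ids": input_ids,
        "input_mask": input_mask,
        "segment_ids": segment_ids,
        "masked_lm_positions": masked_lm_positions,
        "masked_lm_ids": masked_lm_ids,
        "masked_lm_weights": masked_lm_weights,
        "next_sentence_labels": [1 if is_random_next else 0],
    }


# Made-up token ids for "[CLS] a b [SEP] c [SEP]", with position 2 masked
features = pad_instance([101, 7, 103, 102, 9, 102], [0, 0, 0, 0, 1, 1],
                        [2], [8], is_random_next=True,
                        max_seq_length=8, max_predictions_per_seq=3)
print(features["input_mask"])            # [1, 1, 1, 1, 1, 1, 0, 0]
print(features["masked_lm_weights"])     # [1.0, 0.0, 0.0]
print(features["next_sentence_labels"])  # [1]
```

Note that is_random_next=True becomes label 1 and False becomes 0.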

```python
def create_int_feature(values):
  feature = tf.train.Feature(int64_list=tf.train.Int64List(value=list(values)))
  return feature


def create_float_feature(values):
  feature = tf.train.Feature(float_list=tf.train.FloatList(value=list(values)))
  return feature
```

These helpers wrap the preprocessed lists as tf.train.Feature values (int64 or float), i.e. they convert the data into TensorFlow's Example format.

3.3 Creating training instances

```python
def create_training_instances(input_files, tokenizer, max_seq_length,
                              dupe_factor, short_seq_prob, masked_lm_prob,
                              max_predictions_per_seq, rng):
  """Create `TrainingInstance`s from raw text."""
  all_documents = [[]]

  # Input file format:
  # (1) One sentence per line. These should ideally be actual sentences, not
  # entire paragraphs or arbitrary spans of text. (Because we use the
  # sentence boundaries for the "next sentence prediction" task).
  # (2) Blank lines between documents. Document boundaries are needed so
  # that the "next sentence prediction" task doesn't span between documents.
  #
  # all_documents ends up as a three-level nested list:
  # documents -> sentences -> tokens (one token list per sentence).
  for input_file in input_files:
    with tf.gfile.GFile(input_file, "r") as reader:
      while True:
        line = tokenization.convert_to_unicode(reader.readline())
        if not line:
          break
        line = line.strip()

        # Empty lines are used as document delimiters
        if not line:
          all_documents.append([])
        tokens = tokenizer.tokenize(line)
        if tokens:
          all_documents[-1].append(tokens)

  # Remove empty documents
  all_documents = [x for x in all_documents if x]
  rng.shuffle(all_documents)

  vocab_words = list(tokenizer.vocab.keys())
  instances = []
  # dupe_factor (default 10): number of passes over the data. Each pass draws
  # new random masks (and sentence splits), so the same text yields different
  # training instances.
  for _ in range(dupe_factor):
    for document_index in range(len(all_documents)):
      instances.extend(
          create_instances_from_document(
              all_documents, document_index, max_seq_length, short_seq_prob,
              masked_lm_prob, max_predictions_per_seq, vocab_words, rng))

  rng.shuffle(instances)
  print('instances', len(instances))  # prints how many instances were generated
  return instances
```

3.4 Creating instances from a single document
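The function below is the longest in the script. As a reading aid, here is a simplified sketch of its main loop, under stated simplifications: `make_pairs` is a hypothetical name, and masking, truncate_seq_pair and the [CLS]/[SEP] assembly are left out. It keeps the chunking rule, the random split point a_end, the random-next choice and the step that puts unused sentences back.

```python
import random


def make_pairs(all_documents, document_index, target_seq_length, rng):
    """Simplified core of create_instances_from_document (sketch).

    Returns a list of (tokens_a, tokens_b, is_random_next) tuples.
    """
    document = all_documents[document_index]
    pairs = []
    chunk, length, i = [], 0, 0
    while i < len(document):
        chunk.append(document[i])
        length += len(document[i])
        if i == len(document) - 1 or length >= target_seq_length:
            # A = the first a_end sentences of the chunk
            a_end = rng.randint(1, len(chunk) - 1) if len(chunk) >= 2 else 1
            tokens_a = [t for sent in chunk[:a_end] for t in sent]
            if len(chunk) == 1 or rng.random() < 0.5:
                is_random_next = True
                target_b_length = target_seq_length - len(tokens_a)
                for _ in range(10):  # try to pick a different document
                    rand_index = rng.randint(0, len(all_documents) - 1)
                    if rand_index != document_index:
                        break
                rand_doc = all_documents[rand_index]
                tokens_b = []
                for sent in rand_doc[rng.randint(0, len(rand_doc) - 1):]:
                    tokens_b.extend(sent)
                    if len(tokens_b) >= target_b_length:
                        break
                i -= len(chunk) - a_end  # "put back" the unused sentences
            else:
                is_random_next = False  # B = rest of the chunk
                tokens_b = [t for sent in chunk[a_end:] for t in sent]
            pairs.append((tokens_a, tokens_b, is_random_next))
            chunk, length = [], 0
        i += 1
    return pairs


docs = [[["a1", "a2"], ["b1", "b2"], ["c1", "c2"], ["d1", "d2"]],
        [["x1", "x2", "x3"], ["y1"]]]
for a, b, is_random in make_pairs(docs, 0, target_seq_length=4,
                                  rng=random.Random(12345)):
    print(a, b, "random next" if is_random else "actual next")
```

Putting sentences back is why a random-next pair does not waste text: the sentences that would have formed B are reconsidered as the start of the next chunk.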

```python
def create_instances_from_document(
    all_documents, document_index, max_seq_length, short_seq_prob,
    masked_lm_prob, max_predictions_per_seq, vocab_words, rng):
  """Creates `TrainingInstance`s for a single document."""
  # document is one document (paragraph): a list of sentences
  document = all_documents[document_index]

  # Account for [CLS], [SEP], [SEP]
  max_num_tokens = max_seq_length - 3

  # Most of the time we want to fill the sequence up to the maximum length,
  # but occasionally (with probability short_seq_prob) we use a shorter target
  # to minimize the mismatch between pre-training and fine-tuning.
  # target_seq_length is only a rough target; max_seq_length is a hard limit.
  target_seq_length = max_num_tokens
  if rng.random() < short_seq_prob:
    target_seq_length = rng.randint(2, max_num_tokens)  # random shorter target

  instances = []
  current_chunk = []
  current_length = 0
  i = 0
  while i < len(document):
    segment = document[i]  # segment is the token list of one sentence
    current_chunk.append(segment)
    current_length += len(segment)
    # Close the chunk once it reaches target_seq_length, or at the last
    # sentence so that the end of the document is not dropped
    if i == len(document) - 1 or current_length >= target_seq_length:
      if current_chunk:
        # `a_end` is how many segments from `current_chunk` go into the `A`
        # (first) sentence.
        a_end = 1
        if len(current_chunk) >= 2:
          # random integer in [1, len(current_chunk) - 1], both ends inclusive
          a_end = rng.randint(1, len(current_chunk) - 1)

        # tokens_a: the first a_end sentences of current_chunk
        tokens_a = []
        for j in range(a_end):
          tokens_a.extend(current_chunk[j])

        # tokens_b: if the chunk has only one sentence, or with probability
        # 0.5, take text from a random other document (random next);
        # otherwise take sentences a_end..len(current_chunk)-1 (actual next)
        tokens_b = []
        # Random next
        # is_random_next is written as next_sentence_label: True -> 1, False -> 0
        is_random_next = False
        if len(current_chunk) == 1 or rng.random() < 0.5:
          is_random_next = True
          target_b_length = target_seq_length - len(tokens_a)

          # Pick a random document other than the current one (up to 10 tries)
          for _ in range(10):
            random_document_index = rng.randint(0, len(all_documents) - 1)
            if random_document_index != document_index:
              break

          random_document = all_documents[random_document_index]
          random_start = rng.randint(0, len(random_document) - 1)
          for j in range(random_start, len(random_document)):
            tokens_b.extend(random_document[j])
            if len(tokens_b) >= target_b_length:
              break
          # We didn't actually use these segments so we "put them back" so
          # they don't go to waste.
          num_unused_segments = len(current_chunk) - a_end
          i -= num_unused_segments
        # Actual next
        else:
          is_random_next = False
          for j in range(a_end, len(current_chunk)):
            tokens_b.extend(current_chunk[j])
        # Truncate the pair so that len(tokens_a) + len(tokens_b) <= max_num_tokens
        truncate_seq_pair(tokens_a, tokens_b, max_num_tokens, rng)

        assert len(tokens_a) >= 1
        assert len(tokens_b) >= 1

        # Assemble [CLS] A [SEP] B [SEP] as in the paper: segment id 0 for
        # [CLS], A and the first [SEP]; 1 for B and the final [SEP]
        tokens = []
        segment_ids = []
        tokens.append("[CLS]")
        segment_ids.append(0)
        for token in tokens_a:
          tokens.append(token)
          segment_ids.append(0)

        tokens.append("[SEP]")
        segment_ids.append(0)

        for token in tokens_b:
          tokens.append(token)
          segment_ids.append(1)
        tokens.append("[SEP]")
        segment_ids.append(1)

        # Example of the resulting tokens after masking:
        # [CLS] 各 位 作 者 都 是 不 同 地 位 [MASK] 人 , 怎 么 会 空 有 虚 名 呢 ? [SEP] 这 两 句 [MASK] 虽 [MASK] 以 议 论 入 诗 , 但 对 仗 工 整 , [MASK] 言 [MASK] 度 概 括 , 而 且 [MASK] [MASK] 肯 [MASK] , 含 蕴 丰 富 , 很 有 哲 理 性 。 [SEP]
        # masked_lm_positions: indices in `tokens` of the masked tokens
        # masked_lm_labels: the original tokens at those positions
        (tokens, masked_lm_positions,
         masked_lm_labels) = create_masked_lm_predictions(
             tokens, masked_lm_prob, max_predictions_per_seq, vocab_words, rng)
        instance = TrainingInstance(
            tokens=tokens,
            segment_ids=segment_ids,
            is_random_next=is_random_next,
            masked_lm_positions=masked_lm_positions,
            masked_lm_labels=masked_lm_labels)
        instances.append(instance)
      current_chunk = []
      current_length = 0
    i += 1

  return instances
```

3.5 Creating masked LM predictions

```python
# Defined at module level in the script:
MaskedLmInstance = collections.namedtuple("MaskedLmInstance",
                                          ["index", "label"])


def create_masked_lm_predictions(tokens, masked_lm_prob,
                                 max_predictions_per_seq, vocab_words, rng):
  """Creates the predictions for the masked LM objective."""

  cand_indexes = []
  for (i, token) in enumerate(tokens):
    if token == "[CLS]" or token == "[SEP]":
      continue
    cand_indexes.append(i)

  rng.shuffle(cand_indexes)

  output_tokens = list(tokens)

  num_to_predict = min(max_predictions_per_seq,
                       max(1, int(round(len(tokens) * masked_lm_prob))))

  masked_lms = []
  covered_indexes = set()
  for index in cand_indexes:
    if len(masked_lms) >= num_to_predict:
      break
    if index in covered_indexes:
      continue
    covered_indexes.add(index)

    masked_token = None
    # 80% of the time, replace with [MASK]
    if rng.random() < 0.8:
      masked_token = "[MASK]"
    else:
      # 10% of the time, keep original
      if rng.random() < 0.5:
        masked_token = tokens[index]
      # 10% of the time, replace with random word
      else:
        masked_token = vocab_words[rng.randint(0, len(vocab_words) - 1)]

    output_tokens[index] = masked_token

    masked_lms.append(MaskedLmInstance(index=index, label=tokens[index]))

  masked_lms = sorted(masked_lms, key=lambda x: x.index)

  masked_lm_positions = []
  masked_lm_labels = []
  for p in masked_lms:
    masked_lm_positions.append(p.index)
    masked_lm_labels.append(p.label)

  # output_tokens: the sequence after masking
  # masked_lm_positions: the masked positions
  # masked_lm_labels: the original tokens at those positions
  return (output_tokens, masked_lm_positions, masked_lm_labels)
```

The function picks the tokens to predict and replaces them: it shuffles the positions of all tokens except [CLS] and [SEP], takes the first num_to_predict of them (about masked_lm_prob of the sequence, at least 1 and at most max_predictions_per_seq), replaces each with [MASK] 80% of the time, keeps it unchanged 10% of the time and swaps in a random vocabulary word 10% of the time, and finally returns the predictions sorted by position.
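The nested draws explain the 80/10/10 split: the first `rng.random() < 0.8` masks 80% of the chosen tokens, and the remaining 20% are split in half by the second draw, giving 0.2 × 0.5 = 10% kept and 10% random. A quick empirical check, assuming a hypothetical `choose_replacement` helper that copies only the replacement branch and a made-up three-word vocabulary (in the script the random word can occasionally be the original token itself; the vocabulary here excludes it so the three cases can be counted separately):

```python
import random
from collections import Counter


def choose_replacement(token, vocab_words, rng):
    """Replacement branch of create_masked_lm_predictions (sketch)."""
    if rng.random() < 0.8:  # 80%: [MASK]
        return "[MASK]"
    if rng.random() < 0.5:  # 0.2 * 0.5 = 10%: keep the original token
        return token
    # remaining 10%: random vocabulary word
    return vocab_words[rng.randint(0, len(vocab_words) - 1)]


rng = random.Random(12345)
vocab_words = ["a", "b", "c"]  # made-up vocabulary that excludes "x"
n = 100000
counts = Counter()
for _ in range(n):
    out = choose_replacement("x", vocab_words, rng)
    if out == "[MASK]":
        counts["mask"] += 1
    elif out == "x":
        counts["keep"] += 1
    else:
        counts["random"] += 1
print({k: round(v / n, 3) for k, v in counts.items()})  # roughly 0.8 / 0.1 / 0.1
```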

3.6 Truncating the sequence pair
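The function below always shortens whichever sequence is currently longer (B on a tie), one token at a time, from a randomly chosen end. The sketch adds a trace of each step so the behavior can be inspected; `truncate_trace` is a hypothetical name and `random.Random` stands in for the script's rng:

```python
import random


def truncate_trace(tokens_a, tokens_b, max_num_tokens, rng):
    """Same rule as truncate_seq_pair, but records every step (sketch).

    Modifies tokens_a / tokens_b in place, like the original function.
    """
    steps = []
    while len(tokens_a) + len(tokens_b) > max_num_tokens:
        # Trim the longer sequence; on a tie, tokens_b is trimmed
        side = "A" if len(tokens_a) > len(tokens_b) else "B"
        trunc_tokens = tokens_a if side == "A" else tokens_b
        if rng.random() < 0.5:
            steps.append((side, "front", trunc_tokens.pop(0)))
        else:
            steps.append((side, "back", trunc_tokens.pop()))
    return steps


tokens_a, tokens_b = list("abcdef"), list("xy")
steps = truncate_trace(tokens_a, tokens_b, 5, random.Random(12345))
print(len(tokens_a), len(tokens_b))  # 3 2
print(steps)  # three ("A", end, token) steps; which ends depends on the rng
```

Because only the longer side is trimmed, a short B is left intact while a long A absorbs all the cuts.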

```python
# Truncate a pair of sequences to a maximum total length
def truncate_seq_pair(tokens_a, tokens_b, max_num_tokens, rng):
  """Truncates a pair of sequences to a maximum sequence length."""
  while True:
    total_length = len(tokens_a) + len(tokens_b)
    if total_length <= max_num_tokens:
      break

    trunc_tokens = tokens_a if len(tokens_a) > len(tokens_b) else tokens_b
    assert len(trunc_tokens) >= 1

    # We want to sometimes truncate from the front and sometimes from the
    # back to add more randomness and avoid biases.
    if rng.random() < 0.5:
      del trunc_tokens[0]
    else:
      trunc_tokens.pop()
```

4 The main() method

```python
def main(_):
  tf.logging.set_verbosity(tf.logging.INFO)

  tokenizer = tokenization.FullTokenizer(
      vocab_file=FLAGS.vocab_file, do_lower_case=FLAGS.do_lower_case)

  input_files = []  # list of input files
  for input_pattern in FLAGS.input_file.split(","):
    input_files.extend(tf.gfile.Glob(input_pattern))

  tf.logging.info("*** Reading from input files ***")
  for input_file in input_files:
    tf.logging.info("  %s", input_file)

  rng = random.Random(FLAGS.random_seed)
  instances = create_training_instances(
      input_files, tokenizer, FLAGS.max_seq_length, FLAGS.dupe_factor,
      FLAGS.short_seq_prob, FLAGS.masked_lm_prob, FLAGS.max_predictions_per_seq,
      rng)

  output_files = FLAGS.output_file.split(",")
  tf.logging.info("*** Writing to output files ***")
  for output_file in output_files:
    tf.logging.info("  %s", output_file)

  write_instance_to_example_files(instances, tokenizer, FLAGS.max_seq_length,
                                  FLAGS.max_predictions_per_seq, output_files)
```

main() loads the input data, turns it into training instances in the required format and writes them to the binary output file(s).
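main() accepts comma-separated lists of paths or glob patterns in --input_file. A sketch of that expansion, assuming Python's `glob.glob` as a stand-in for `tf.gfile.Glob` so it runs without TensorFlow; `expand_input_files` is a hypothetical helper:

```python
import glob
import os
import tempfile


def expand_input_files(input_file_flag):
    """Expand a comma-separated list of paths or glob patterns (sketch).

    Same loop as main(), with glob.glob standing in for tf.gfile.Glob.
    """
    input_files = []
    for input_pattern in input_file_flag.split(","):
        input_files.extend(glob.glob(input_pattern))
    return input_files


with tempfile.TemporaryDirectory() as tmp:
    for name in ("text.txt", "more1.txt", "more2.txt"):
        open(os.path.join(tmp, name), "w").close()  # empty placeholder files
    flag = os.path.join(tmp, "text.txt") + "," + os.path.join(tmp, "more*.txt")
    print(sorted(os.path.basename(f) for f in expand_input_files(flag)))
    # ['more1.txt', 'more2.txt', 'text.txt']
```

With this sketch, a path that matches nothing silently contributes no files, so a typo in the flag yields an empty list rather than an error.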

Script entry point:

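```python
if __name__ == "__main__":
  flags.mark_flag_as_required("input_file")
  flags.mark_flag_as_required("output_file")
  flags.mark_flag_as_required("vocab_file")
  tf.app.run()
```

The three required flags are marked as mandatory, then tf.app.run() parses the flags and calls main().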