Hadoop2.7高可用集群搭建步骤

集群节点分配

Park01

Zookeeper

NameNode (active)

Resourcemanager (active)

Park02

Zookeeper

NameNode (standby)

Park03

Zookeeper

ResourceManager (standby)

Park04

DataNode

NodeManager

JournalNode

Park05

DataNode

NodeManager

JournalNode

Park06

DataNode

NodeManager

JournalNode

安装步骤

0.永久关闭每台机器的防火墙

执行:service iptables stop

再次执行:chkconfig iptables off

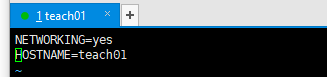

1.为每台机器配置主机名以及hosts文件

配置主机名=》执行: vim /etc/sysconfig/network =》然后执行 hostname 主机名=》达到不重启生效目的

配置hosts文件=》执行:vim /etc/hosts

示例:

127.0.0.1 localhost

::1 localhost

192.168.234.21 teach01

192.168.234.22 teach02

192.168.234.23 teach03

192.168.234.24 teach04

192.168.234.25 teach05

192.168.234.26 teach06

2.通过远程命令将配置好的hosts文件 scp到其他5台节点上

执行:scp /etc/hosts teach02: /etc

3.为每天机器配置ssh免秘钥登录

执行:ssh-keygen

ssh-copy-id root@teach01 (分别发送到6台节点上)

4.前三台机器安装和配置zookeeper

配置conf目录下的zoo.cfg以及创建myid文件

(zookeeper集群安装具体略)

5.为每台机器安装jdk和配置jdk环境

6.为每台机器配置主机名,然后每台机器重启,(如果不重启,也可以配合:hostname teach01生效)

执行: vim /etc/sysconfig/network 进行编辑

7.安装和配置01节点的hadoop

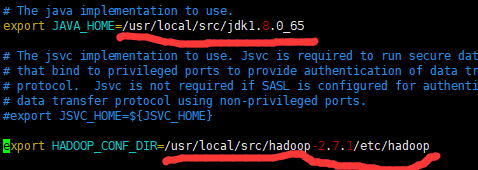

配置hadoop-env.sh

配置jdk安装所在目录

配置hadoop配置文件所在目录8.配置core-site.xml

fs.defaultFS

hdfs://ns

hadoop.tmp.dir

/usr/local/src/hadoop-2.7.1/hadoop-${user.name}

ha.zookeeper.quorum

teach01:2181,teach02:2181,teach03:2181

9.配置01节点的hdfs-site.xml

dfs.nameservices

ns

dfs.ha.namenodes.ns

nn1,nn2

dfs.namenode.rpc-address.ns.nn1

teach01:9000

dfs.namenode.http-address.ns.nn1

teach01:50070

dfs.namenode.rpc-address.ns.nn2

teach02:9000

dfs.namenode.http-address.ns.nn2

teach02:50070

dfs.namenode.shared.edits.dir

qjournal://teach04:8485;teach05:8485;teach06:8485/ns

dfs.journalnode.edits.dir

/usr/local/src/hadoop-2.7.1/journal

dfs.ha.automatic-failover.enabled

true

dfs.client.failover.proxy.provider.ns

org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider

dfs.ha.fencing.methods

sshfence

dfs.ha.fencing.ssh.private-key-files

/root/.ssh/id_rsa

dfs.namenode.name.dir

file:///usr/local/src/hadoop-2.7.1/hadoop-${user.name}/namenode

dfs.datanode.data.dir

file:///usr/local/src/hadoop-2.7.1/hadoop-${user.name}/datanode

dfs.replication

3

dfs.permissions

false

10.配置mapred-site.xml

mapreduce.framework.name

yarn

11.配置yarn-site.xml

yarn.resourcemanager.ha.enabled

true

yarn.resourcemanager.ha.rm-ids

rm1,rm2

yarn.resourcemanager.hostname.rm1

teach01

yarn.resourcemanager.hostname.rm2

teach03

yarn.resourcemanager.recovery.enabled

true

yarn.resourcemanager.store.class

org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore

yarn.resourcemanager.zk-address

teach01:2181,teach02:2181,teach03:2181

For multiple zk services, separate them with comma

yarn.resourcemanager.cluster-id

yarn-ha

yarn.resourcemanager.hostname

teach03

yarn.nodemanager.aux-services

mapreduce_shuffle

关闭内存检测,可以不配。

12.配置slaves文件

配置代码:

teach04

teach05

teach06

13.配置hadoop的环境变量(可不配)

JAVA_HOME=/usr/local/src/jdk1.8.0_65

HADOOP_HOME=/usr/local/src /hadoop-2.7.1

CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

PATH=$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH

export JAVA_HOME PATH CLASSPATH HADOOP_HOME

14.根据配置文件,创建相关的文件夹,用来存放对应数据

在hadoop-2.7.1目录下创建:

①journal目录

②创建tmp目录

③在tmp目录下,分别创建namenode目录和datanode目录

15.通过scp命令,将hadoop安装目录远程copy到其他5台机器上

比如向hadoop02节点传输:

scp -r hadoop-2.7.1 hadoop02:/usr/local/src

Hadoop集群启动

16.启动zookeeper集群

在Zookeeper安装目录的bin目录下执行:sh zkServer.sh start

17.格式化zookeeper

在zk的leader节点上执行:

hdfs zkfc -formatZK,这个指令的作用是在zookeeper集群上生成ha节点(ns节点)

注:18--24步可以用一步来替代:进入hadoop安装目录的sbin目录,执行:start-dfs.sh 。但建议还是按部就班来执行,比较可靠。

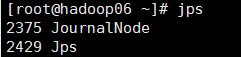

18.启动journalnode集群

在04、05、06节点上执行:

切换到hadoop安装目录的bin目录下,执行:

sh hadoop-daemons.sh start journalnode

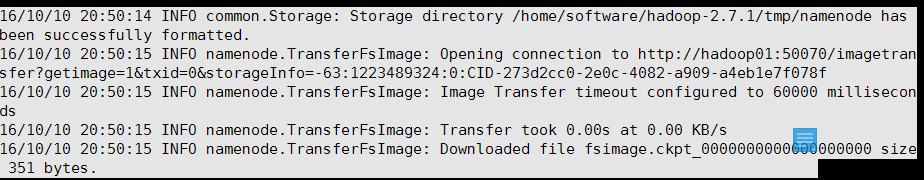

然后执行jps命令查看:19.格式化01节点的namenode

在01节点上执行:(第一次启动hadoop才执行)

hadoop namenode -format

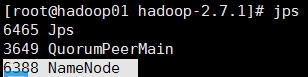

20.启动01节点的namenode

在01节点上执行:

hadoop-daemon.sh start namenode

21.把02节点的 namenode节点变为standby namenode节点

在02节点上执行:

hdfs namenode -bootstrapStandby

22.启动02节点的namenode节点

在02节点上执行:

hadoop-daemon.sh start namenode

23.在04,05,06节点上启动datanode节点

在04,05,06节点上执行: hadoop-daemon.shstart datanode

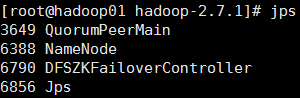

24.启动zkfc(启动FalioverControllerActive)

在01,02节点上执行:

hadoop-daemon.sh start zkfc

25.在01节点上启动主Resourcemanager

在01节点上执行:start-yarn.sh

启动成功后,04,05,06节点上应该有nodemanager的进程

26.在03节点上启动副 Resoucemanager

在03节点上执行:yarn-daemon.shstart resourcemanager

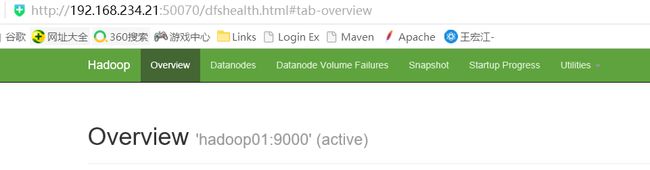

27.测试

输入地址:http://192.168.234.21:50070,查看namenode的信息,是active状态的

然后停掉01节点的namenode,此时返现standby的namenode变为active。

28.查看yarn的管理地址

http://teach01:8088(节点01的8088端口)