最近都没怎么写代码,忙着上班,每天上到晚上九点半,回来看视频,学习scrapy,写了个小项目,无法传回下一页链接继续爬取。。。。还在调试。

期间又写了个爬取贴吧小爬虫练练xpath提取规则,用的不是很熟。还是有错误。

# -*- coding: utf-8 -*-

import requests

from lxml import etree

def gethtml(url):

header = {'User-Agent' :'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/62.0.3202.94 Safari/537.36'}

r = requests.get(url, headers=header)

return r.content

def parsehtml(html):

selector = etree.HTML(html)

name = selector.xpath('//*[@id="j_core_title_wrap"]/h3/text()')[0]

author = selector.xpath('//*[@id="j_p_postlist"]/div[1]/div[1]/ul/li[3]/a/text()')[0]

time = selector.xpath('//*[@id="j_p_postlist"]/div[1]/div[2]/div[4]/div[1]/div/span[3]/text()')[0]

infos = selector.xpath('//div[@class="d_post_content_main "]/div[1]')

for info in infos:

paper = info.xpath('string(.)').strip().encode('utf-8')

f = open('001.text', 'a', encoding='utf-8')

print(paper)

f.write(str(paper))

f.close()

def main(infourl):

html = gethtml(infourl)

parsehtml(html)

main("https://tieba.baidu.com/p/5366583054?see_lz=1")

打开content.txt是这个鬼样子:

使用encode大法修改代码:

def parsehtml(html):

selector = etree.HTML(html)

name = selector.xpath('//*[@id="j_core_title_wrap"]/h3/text()')[0].encode('utf-8')

author = selector.xpath('//*[@id="j_p_postlist"]/div[1]/div[1]/ul/li[3]/a/text()')[0].encode('utf-8')

time = selector.xpath('//*[@id="j_p_postlist"]/div[1]/div[2]/div[4]/div[1]/div/span[3]/text()')[0].encode('utf-8')

infos = selector.xpath('//div[@class="d_post_content_main "]/div[1]')

for info in infos:

paper = info.xpath('string(.)').strip().encode('utf-8')

return paper

得到的文件时这个鬼样:

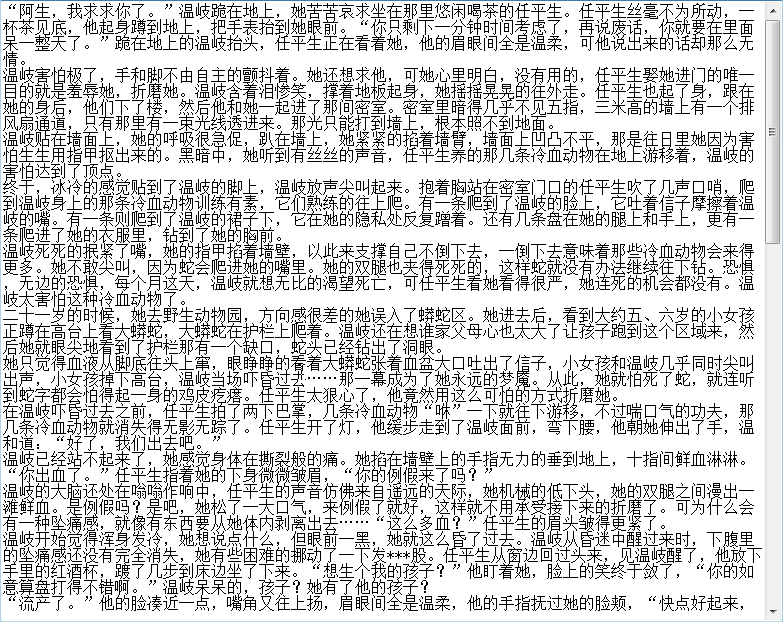

可是我要写成这样子的话:

# -*- coding: utf-8 -*-

import requests

from lxml import etree

url = "https://tieba.baidu.com/p/5366583054?see_lz=1"

header = {'User-Agent' :'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/62.0.3202.94 Safari/537.36'}

r = requests.get(url, headers=header)

selector = etree.HTML(r.text)

name = selector.xpath('//*[@id="j_core_title_wrap"]/h3/text()')[0]

author = selector.xpath('//*[@id="j_p_postlist"]/div[1]/div[1]/ul/li[3]/a/text()')[0]

time = selector.xpath('//*[@id="j_p_postlist"]/div[1]/div[2]/div[4]/div[1]/div/span[3]/text()')[0]

infos = selector.xpath('//div[@class="d_post_content_main "]/div[1]')

for info in infos:

paper = info.xpath('string(.)').strip()

print(paper + '\n')

f = open('content.text', 'a', encoding='utf-8')

f.write(str(paper) + '\n')

f.close()

content文件又能正常显示出中文,

后来我索性新建一个文件,把第二个程序代码改装一下:

# -*- coding: utf-8 -*-

import requests

from lxml import etree

def gethtml(url):

# url = "https://tieba.baidu.com/p/5366583054?see_lz=1"

header = {'User-Agent' :'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/62.0.3202.94 Safari/537.36'}

r = requests.get(url, headers=header)

html = r.text

return html

def parsehtml(html):

selector = etree.HTML(html)

name = selector.xpath('//*[@id="j_core_title_wrap"]/h3/text()')[0]

author = selector.xpath('//*[@id="j_p_postlist"]/div[1]/div[1]/ul/li[3]/a/text()')[0]

time = selector.xpath('//*[@id="j_p_postlist"]/div[1]/div[2]/div[4]/div[1]/div/span[3]/text()')[0]

infos = selector.xpath('//div[@class="d_post_content_main "]/div[1]')

for info in infos:

paper = info.xpath('string(.)').strip()

print(paper + '\n')

f = open('content.text', 'a', encoding='utf-8')

f.write(str(paper) + '\n')

f.close()

url1 = "https://tieba.baidu.com/p/5366583054?see_lz=1"

parsehtml(gethtml(url1))

#

# if len(etree.HTML(gethtml(url1)).xpath('//*[@id="thread_theme_7"]/div[1]/ul/li[1]/a[6]')):

# furtherurl = etree.HTML(gethtml(url1)).xpath('//*[@id="thread_theme_7"]/div[1]/ul/li[1]/a[6]/@href/text()')

# url = "https://tieba.baidu.com" + str(furtherurl)

# parsehtml(gethtml(url))

继续可以正常运行并且可以显示中文。

但是我不能只获取这一页的小说呀,于是提取下一页的链接并且拼接url:

if len(etree.HTML(gethtml(url1)).xpath('//*[@id="thread_theme_7"]/div[1]/ul/li[1]/a[6]')):

furtherurl = etree.HTML(gethtml(url1)).xpath('//*[@id="thread_theme_7"]/div[1]/ul/li[1]/a[6]/@href/text()')

url = "https://tieba.baidu.com" + str(furtherurl)

parsehtml(gethtml(url))

然而跑的时候报错。。。。。

image.png

image.png

先这样吧,12点了好困,明天再说。。。

早起更新,早上意识到,提取链接是不需要/text()的,我们只需要提取出链接的属性,另外,由于xpath返回的是一个列表类型,我们需要用索引,取第0个,代码修改如下:

if len(etree.HTML(gethtml(url1)).xpath('//*[@id="thread_theme_7"]/div[1]/ul/li[1]/a[6]')):

furtherurl = etree.HTML(gethtml(url1)).xpath('//*[@id="thread_theme_7"]/div[1]/ul/li[1]/a[6]/@href')[0]

url = "https://tieba.baidu.com" + str(furtherurl)

parsehtml(gethtml(url))

这样跑起来还是有个问题,显示获取的time内容list index out of range, 先注释掉这行,看看能不能翻页获取内容。试了一下可以翻页,但是只能翻到第三页获取数据,这又是啥问题。。。。

第二天晚上下班,回来更新。上班期间偷偷查了些资料,嘿嘿,貌似是被网站限制的原因。后来还是通过构造url完成了代码。。。。。。表示本来就是因为想通过找下一页链接然后迭代的方式去抓取的,结果还是回到了老路子,郁闷。用scrapy也是有这个问题。再慢慢查资料吧~完整代码如下:

# -*- coding: utf-8 -*-

import requests

from lxml import etree

def gethtml(url):

# url = "https://tieba.baidu.com/p/5366583054?see_lz=1"

header = {'User-Agent' :'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/62.0.3202.94 Safari/537.36'}

r = requests.get(url, headers=header)

html = r.text

return html

def parsehtml(html):

selector = etree.HTML(html)

name = selector.xpath('//*[@id="j_core_title_wrap"]/h3/text()')[0]

author = selector.xpath('//*[@id="j_p_postlist"]/div[1]/div[1]/ul/li[3]/a/text()')[0]

# time = selector.xpath('//*[@id="j_p_postlist"]/div[1]/div[2]/div[4]/div[1]/div/span[3]/text()')[0]

infos = selector.xpath('//div[@class="d_post_content_main "]/div[1]')

for info in infos:

paper = info.xpath('string(.)').strip()

print(paper + '\n')

f = open('content.text', 'a', encoding='utf-8')

f.write(str(paper) + '\n')

f.close()

url1 = "https://tieba.baidu.com/p/5366583054?see_lz=1"

parsehtml(gethtml(url1))

for i in range(2, 11):

url2 = "https://tieba.baidu.com/p/5366583054?pn=" + str(i)

parsehtml(gethtml(url2))

以后再填坑