Deploying HDFS on a single Hadoop node

Step 1: Create the hadoop user and upload the Hadoop tarball
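
Step 1 lists no commands, so here is a minimal sketch of creating the account idempotently. The user name hadoop comes from this article; the helper names user_exists and ensure_user and the choice of useradd -m are assumptions for illustration. It must be run as root.

```shell
# Succeed when the named account exists; id exits non-zero for unknown users.
user_exists() {
    id -u "$1" >/dev/null 2>&1
}

# Create the account only when it is missing (assumed helper, run as root).
ensure_user() {
    if user_exists "$1"; then
        echo "user $1 already exists"
    else
        useradd -m "$1"   # -m also creates the home directory
    fi
}

# Usage (as root):
#   ensure_user hadoop
```

Checking first keeps the step safe to repeat when the machine is rebuilt or the notes are followed a second time.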

Step 2: Deploy the JDK

1. JDK install path: /usr/java

2. After uploading the archive, extract it:

[root@hadoop-01 java]# tar -xzvf jdk-8u45-linux-x64.gz

[root@hadoop-01 java]# ll

total 319156

drwxr-xr-x 8 uucp 143 4096 Apr 11 2015 jdk1.8.0_45

-rw-r--r-- 1 root root 153530841 Jul 8 2015 jdk-7u80-linux-x64.tar.gz

-rw-r--r-- 1 root root 173271626 Sep 19 11:49 jdk-8u45-linux-x64.gz

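Note: after extraction, check the owner and group of the JDK directory. Here they are uucp and 143 rather than root, because tar run as root keeps the IDs stored in the archive.

3. Fix the ownership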

[root@hadoop-01 java]# chown -R root:root jdk1.8.0_45

[root@hadoop-01 java]# ll

total 319156

drwxr-xr-x 8 root root 4096 Apr 11 2015 jdk1.8.0_45

-rw-r--r-- 1 root root 153530841 Jul 8 2015 jdk-7u80-linux-x64.tar.gz

-rw-r--r-- 1 root root 173271626 Sep 19 11:49 jdk-8u45-linux-x64.gz
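
The ownership check above can also be scripted instead of read off the ll output. This is a sketch that assumes GNU coreutils stat (Linux); the helper names owner_of and is_root_owned are made up for illustration. It compares numeric IDs, which sidesteps cases like the unnamed group 143 in the listing.

```shell
# Print "uid:gid" for a path. Assumes GNU stat, as found on Linux.
owner_of() {
    stat -c '%u:%g' "$1"
}

# Succeed only when the path belongs to uid 0 and gid 0 (root:root).
is_root_owned() {
    [ "$(owner_of "$1")" = "0:0" ]
}

# Usage (as root), with the JDK path from this article:
#   is_root_owned /usr/java/jdk1.8.0_45 || chown -R root:root /usr/java/jdk1.8.0_45
```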

4. Configure the JDK environment variables by adding the export lines below to /etc/profile

[root@hadoop-01 java]# vi /etc/profile

export JAVA_HOME=/usr/java/jdk1.8.0_45

export JRE_HOME=$JAVA_HOME/jre

export CLASSPATH=.:$JAVA_HOME/lib:$JRE_HOME/lib:$CLASSPATH

export PATH=$JAVA_HOME/bin:$JRE_HOME/bin:$PATH

[root@hadoop-01 java]# source /etc/profile

[root@hadoop-01 java]# which java

/usr/java/jdk1.8.0_45/bin/java
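
Besides which java, it can help to confirm that JAVA_HOME itself points at a usable JDK, since the Hadoop scripts rely on that variable. The function name java_home_ok below is a hypothetical helper, not part of the JDK or Hadoop.

```shell
# Succeed when the given directory is non-empty and contains an executable bin/java.
java_home_ok() {
    [ -n "$1" ] && [ -x "$1/bin/java" ]
}

# Usage after `source /etc/profile`:
#   java_home_ok "$JAVA_HOME" && "$JAVA_HOME/bin/java" -version
```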

Step 3: Extract Hadoop

[hadoop@hadoop-01 app]$ tar -xzvf hadoop-2.6.0-cdh5.7.0.tar.gz

[hadoop@hadoop-01 app]$ cd hadoop-2.6.0-cdh5.7.0

[hadoop@hadoop-01 hadoop-2.6.0-cdh5.7.0]$ ll

total 76

drwxr-xr-x 2 hadoop hadoop 4096 Mar 24 2016 bin # executable scripts

drwxr-xr-x 2 hadoop hadoop 4096 Mar 24 2016 bin-mapreduce1

drwxr-xr-x 3 hadoop hadoop 4096 Mar 24 2016 cloudera

drwxr-xr-x 6 hadoop hadoop 4096 Mar 24 2016 etc # configuration directory (conf)

drwxr-xr-x 5 hadoop hadoop 4096 Mar 24 2016 examples

drwxr-xr-x 3 hadoop hadoop 4096 Mar 24 2016 examples-mapreduce1

drwxr-xr-x 2 hadoop hadoop 4096 Mar 24 2016 include

drwxr-xr-x 3 hadoop hadoop 4096 Mar 24 2016 lib # jar directory

drwxr-xr-x 2 hadoop hadoop 4096 Mar 24 2016 libexec

drwxr-xr-x 3 hadoop hadoop 4096 Mar 24 2016 sbin # start/stop scripts for the Hadoop components

drwxr-xr-x 4 hadoop hadoop 4096 Mar 24 2016 share

drwxr-xr-x 17 hadoop hadoop 4096 Mar 24 2016 src

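Step 4: Edit the Hadoop configuration files

1. Edit etc/hadoop/core-site.xml and set the default file system:

fs.defaultFS = hdfs://localhost:9000

2. Edit etc/hadoop/hdfs-site.xml and set the replication factor to 1, since a single node runs only one DataNode:

dfs.replication = 1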
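
In the files themselves, each setting is a property element inside configuration. The sketch below writes both files with exactly the two values from this step; the function name write_hdfs_conf is an assumption, and it overwrites any existing core-site.xml and hdfs-site.xml in the target directory, so back those up first if they hold other settings.

```shell
# Write minimal single-node configs into the directory given as $1.
# Overwrites core-site.xml and hdfs-site.xml in that directory.
write_hdfs_conf() {
    conf_dir=$1

    cat > "$conf_dir/core-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
EOF

    cat > "$conf_dir/hdfs-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>
EOF
}

# Usage, from inside the extracted hadoop-2.6.0-cdh5.7.0 directory:
#   write_hdfs_conf etc/hadoop
```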

Step 5: Configure passwordless SSH login

[hadoop@hadoop002 ~]$ ssh-keygen

Generating public/private rsa key pair.

Enter file in which to save the key (/home/hadoop/.ssh/id_rsa):

Created directory '/home/hadoop/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/hadoop/.ssh/id_rsa.

Your public key has been saved in /home/hadoop/.ssh/id_rsa.pub.

The key fingerprint is:

ba:48:3d:ff:af:4d:da:74:67:31:d6:98:ad:a0:b3:76 hadoop@hadoop002

The key's randomart image is:

+--[ RSA 2048]----+

| |

| |

| |

| +.|

| S . o+o|

| . . . ...o|

| . + o o o o|

| . . + .OE. o |

| . . .o=++ |

+-----------------+

[hadoop@hadoop002 ~]$ cd .ssh

[hadoop@hadoop002 .ssh]$ ll

total 8

-rw------- 1 hadoop hadoop 1675 Feb 13 22:36 id_rsa # private key

-rw-r--r-- 1 hadoop hadoop 398 Feb 13 22:36 id_rsa.pub # public key

[hadoop@hadoop002 .ssh]$ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

[hadoop@hadoop002 .ssh]$

[hadoop@hadoop002 .ssh]$ ll

total 12

-rw-rw-r-- 1 hadoop hadoop 398 Feb 13 22:37 authorized_keys

-rw------- 1 hadoop hadoop 1675 Feb 13 22:36 id_rsa

-rw-r--r-- 1 hadoop hadoop 398 Feb 13 22:36 id_rsa.pub

-rw-r--r-- 1 hadoop hadoop 0 Feb 13 22:39 known_hosts

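At this point, ssh localhost date still prompts for a password, even though the hadoop user has no password set.

So how do we establish passwordless trust when there is no password to type?

Fix the permissions. authorized_keys was created group-writable (-rw-rw-r--), and sshd by default ignores the file in that state. Restrict it to 600: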

[hadoop@hadoop002 .ssh]$ chmod 600 authorized_keys

[hadoop@hadoop002 .ssh]$ ssh localhost date

The authenticity of host 'localhost (127.0.0.1)' can't be established.

RSA key fingerprint is b1:94:33:ec:95:89:bf:06:3b:ef:30:2f:d7:8e:d2:4c.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'localhost' (RSA) to the list of known hosts.

Wed Feb 13 22:41:17 CST 2019

[hadoop@hadoop002 .ssh]$

[hadoop@hadoop002 .ssh]$

[hadoop@hadoop002 .ssh]$ ssh localhost date

Wed Feb 13 22:41:22 CST 2019

[hadoop@hadoop002 .ssh]$
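
The permission fix is the part of this step that is easiest to forget, so it may be worth wrapping in a small helper. This is a sketch only: fix_ssh_perms and mode_of are hypothetical names, mode_of assumes GNU stat, and 700 for the directory is the conventional value rather than something shown in the transcript above.

```shell
# Tighten permissions on an SSH directory so that sshd accepts the key files.
fix_ssh_perms() {
    ssh_dir=$1
    chmod 700 "$ssh_dir"
    if [ -f "$ssh_dir/authorized_keys" ]; then
        chmod 600 "$ssh_dir/authorized_keys"
    fi
}

# Print the octal permission bits of a path (GNU stat assumed).
mode_of() {
    stat -c '%a' "$1"
}

# Usage (as the hadoop user):
#   fix_ssh_perms ~/.ssh && ssh localhost date
```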

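Step 6: Format the NameNode

To bring up a full Hadoop cluster you start both HDFS and YARN.

Note: HDFS must be formatted before its first start. Formatting is essentially cleanup and preparation work, because at this point the file system does not yet physically exist.

bin/hdfs namenode -format

or the older form, which is deprecated in Hadoop 2.x:

bin/hadoop namenode -format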

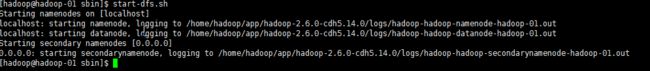
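
Formatting is meant for the first start only, so a guard against running it twice can be useful. The sketch below treats a NameNode directory as formatted when it contains current/VERSION. The helper names are hypothetical, and the directory in the usage line is the Hadoop default for a user named hadoop, which assumes this setup does not override hadoop.tmp.dir or dfs.namenode.name.dir.

```shell
# Succeed when the NameNode metadata directory already holds a formatted image.
namenode_is_formatted() {
    [ -f "$1/current/VERSION" ]
}

# Format only when no existing metadata is found.
# $1 = NameNode metadata directory, $2 = Hadoop install directory.
format_if_needed() {
    if namenode_is_formatted "$1"; then
        echo "already formatted, skipping"
    else
        "$2/bin/hdfs" namenode -format
    fi
}

# Usage (as the hadoop user, from inside the Hadoop directory; default path assumed):
#   format_if_needed /tmp/hadoop-hadoop/dfs/name .
```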

Step 7: Start HDFS

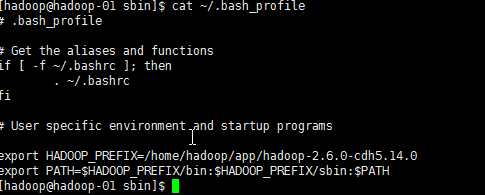
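
The start command appears only in the screenshot; assuming it is sbin/start-dfs.sh, the usual way to confirm the result is jps, which should list NameNode, DataNode and SecondaryNameNode. The hypothetical helper below reads jps output on standard input and prints whichever of those three is absent.

```shell
# Read `jps` output on stdin and print each expected HDFS daemon that is absent.
missing_hdfs_daemons() {
    jps_output=$(cat)
    for daemon in NameNode DataNode SecondaryNameNode; do
        # jps prints "<pid> <main class>"; compare the class name exactly
        if ! printf '%s\n' "$jps_output" |
            awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
            echo "$daemon"
        fi
    done
}

# Usage (as the hadoop user, after starting HDFS):
#   jps | missing_hdfs_daemons
```

Empty output means all three daemons are up. Matching the second field exactly keeps NameNode from being satisfied by the SecondaryNameNode line.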

Step 8: Configure the hadoop user's personal environment variables
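
This step gives no details, so the following is only one plausible shape for it: export HADOOP_HOME and put bin and sbin on PATH in the hadoop user's own profile. The helper name hadoop_env_lines, the use of ~/.bash_profile, and the install path in the usage lines are all assumptions.

```shell
# Print the export lines for the Hadoop install directory given as $1.
hadoop_env_lines() {
    printf 'export HADOOP_HOME=%s\n' "$1"
    # Single quotes keep the variables unexpanded so the profile expands them at login
    printf 'export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH\n'
}

# Usage (as the hadoop user; the install path is an assumed example):
#   hadoop_env_lines /home/hadoop/app/hadoop-2.6.0-cdh5.7.0 >> ~/.bash_profile
#   source ~/.bash_profile && which hdfs
```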

This completes the single-node HDFS deployment.