Object Detection Beginner's Takeoff Route, Line 1: Running the YOLOv3 Code Step by Step


I'm a beginner, so my first goal is simply to get the YOLOv3 code running.

References: https://blog.csdn.net/m0_37857151/article/details/81330699

https://my.oschina.net/u/876354/blog/1927881

Code: https://github.com/qqwweee/keras-yolo3

Part 1: Dataset, organized like the VOC2007 dataset

1.1 Collecting the dataset

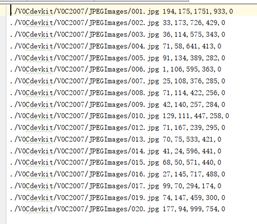

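Search Baidu for cars, download 20 images in .jpg format, and put them in the JPEGImages folder (see the figure). This is only a test run, so the dataset is small.

Annotations: holds the XML files produced by annotation. Each XML file corresponds to one image and records the position and class of every labeled object.

ImageSets/Main: holds the file lists for the training, test, and validation sets.

JPEGImages: holds the original images, named with a uniform convention.

Annotations_txt: can be ignored.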
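
As a minimal sketch, the folder layout described above could be created with a few lines of Python. The root `VOCdevkit/VOC2007` is borrowed from the paths used later in this post, and the helper name `make_voc_dirs` is hypothetical.

```python
import os

# Sub-folders described above (the optional Annotations_txt is left out).
VOC_SUBDIRS = ["Annotations", os.path.join("ImageSets", "Main"), "JPEGImages"]


def make_voc_dirs(root):
    """Create the VOC2007-style folders under `root` and return their paths."""
    created = []
    for sub in VOC_SUBDIRS:
        path = os.path.join(root, sub)
        os.makedirs(path, exist_ok=True)  # no error if the folder already exists
        created.append(path)
    return created
```

Calling `make_voc_dirs(os.path.join("VOCdevkit", "VOC2007"))` from the project root would give the structure used in the rest of this post.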

1.2 数据集标注

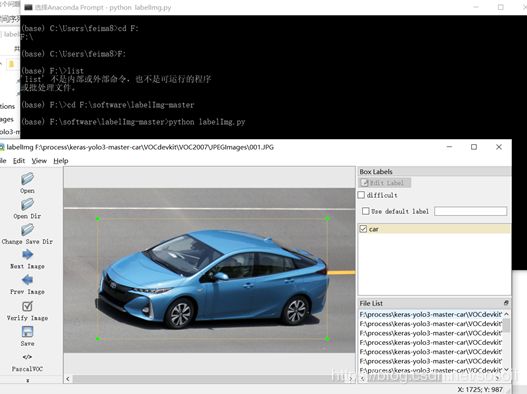

labelImg标注工具是一个目标检测标注目标的便捷的工具。

代码地址:https://github.com/tzutalin/labelImg

安装之前,在Anaconda Prompt安装

pip install PyQt5

pip install pyqt5-tools

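Download the labelImg archive, unzip it, then cd into the extracted folder and run:

pyrcc5 -o resources.py resources.qrc

When that finishes, stay in the same folder and run:

python labelImg.py

If the window shown below appears, the installation succeeded. (labelImg shortcuts: A: previous image; D: next image; W: create rect box; Ctrl+S: save XML.) When you finish annotating, save the files to the Annotations folder.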

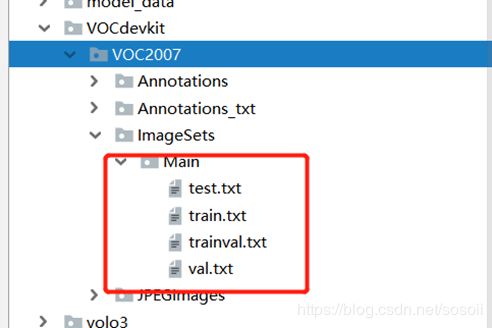
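
To check what labelImg wrote, a saved XML file can be read back with the standard library. This is only a sketch: it assumes the usual Pascal VOC layout (`object/name` and `object/bndbox/xmin|ymin|xmax|ymax`), and both the sample XML and the helper `read_boxes` are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical annotation in the Pascal VOC layout.
SAMPLE_XML = """
<annotation>
  <filename>car_001.jpg</filename>
  <object>
    <name>car</name>
    <bndbox><xmin>48</xmin><ymin>240</ymin><xmax>195</xmax><ymax>371</ymax></bndbox>
  </object>
</annotation>
"""


def read_boxes(xml_text):
    """Return a list of (class_name, xmin, ymin, xmax, ymax) tuples."""
    root = ET.fromstring(xml_text.strip())
    boxes = []
    for obj in root.iter("object"):
        name = obj.find("name").text
        bb = obj.find("bndbox")
        coords = [int(float(bb.find(tag).text)) for tag in ("xmin", "ymin", "xmax", "ymax")]
        boxes.append((name, *coords))
    return boxes


print(read_boxes(SAMPLE_XML))
```

For a file on disk, `ET.parse(path).getroot()` can replace `ET.fromstring`.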

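1.3 Splitting into training, test, and validation sets

After annotating all the car photos, split the dataset into training, test, and validation sets. The script convert_to_txt.py does the split automatically: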

import os
import random

# Note: the names are misleading. "trainval" is the held-out 10% of the data
# (test + val); every other sample is written to train.txt.
trainval_percent = 0.1  # fraction of all samples held out
train_percent = 0.9     # fraction of the held-out samples written to test.txt

xmlfilepath = 'F:/process/ssd_kerasV2-master/videos/照片_samll/照片'
txtsavepath = 'VOCdevkit/VOC2007/ImageSets/Main'

total_xml = os.listdir(xmlfilepath)
num = len(total_xml)
indices = range(num)  # renamed from `list`, which shadowed the built-in

tv = int(num * trainval_percent)
tr = int(tv * train_percent)
trainval = random.sample(indices, tv)
train = random.sample(trainval, tr)

ftrainval = open(txtsavepath + '/trainval.txt', 'w')
ftest = open(txtsavepath + '/test.txt', 'w')
ftrain = open(txtsavepath + '/train.txt', 'w')
fval = open(txtsavepath + '/val.txt', 'w')

for i in indices:
    name = total_xml[i][:-4] + '\n'  # drop the 4-character extension
    if i in trainval:
        ftrainval.write(name)
        if i in train:
            ftest.write(name)
        else:
            fval.write(name)
    else:
        ftrain.write(name)

ftrainval.close()
ftrain.close()
fval.close()
ftest.close()

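After the script runs, the Main folder contains trainval.txt, test.txt, train.txt, and val.txt.

Each line of the training list train.txt holds the name of one image. With 20 images and the settings above, train.txt has 20 - int(20 * trainval_percent) = 18 names.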
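
The split sizes follow directly from the two percentages. The sketch below repeats the script's arithmetic without touching any files; the function name `split_sizes` is hypothetical.

```python
def split_sizes(num, trainval_percent=0.1, train_percent=0.9):
    """Mirror the arithmetic of the split script; return line counts per file."""
    tv = int(num * trainval_percent)  # held-out samples, written to trainval.txt
    tr = int(tv * train_percent)      # of those, the ones written to test.txt
    return {
        "trainval.txt": tv,
        "test.txt": tr,
        "val.txt": tv - tr,
        "train.txt": num - tv,
    }


print(split_sizes(20))
```

With only 20 images the held-out part is tiny (2 images, split 1 and 1), which is acceptable for a smoke test but too small to say anything about accuracy.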

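1.4 Converting the annotation files

The annotation file used by this YOLO implementation has one line per image: the image path, followed by each box's position (top-left and bottom-right corners) and class ID. The format is: image_file_path x_min,y_min,x_max,y_max,class_id

This format differs from the VOC2007 annotation files created earlier. keras-yolo3 ships a script that converts VOC format to YOLO format, voc_annotation.py (I have modified the code; adjust it to your own situation). Before converting, open voc_annotation.py and change the value of classes. In this example the cars were labeled car in the VOC2007 annotations, so voc_annotation.py becomes: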

import xml.etree.ElementTree as ET
from os import getcwd

sets = [('2007', 'train'), ('2007', 'val'), ('2007', 'test')]
classes = ["car"]  # must match the label name used during annotation

After it runs, the file 2007_train.txt is generated automatically.
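
A quick way to sanity-check the generated file is to parse a line back into a path and boxes. This is a sketch under the line format described above; the example line and the helper `parse_annotation_line` are hypothetical.

```python
def parse_annotation_line(line):
    """Split 'path x1,y1,x2,y2,cls ...' into (path, [(x1, y1, x2, y2, cls), ...])."""
    parts = line.split()
    path = parts[0]
    boxes = [tuple(int(v) for v in box.split(",")) for box in parts[1:]]
    return path, boxes


# Hypothetical line with two boxes of class 0 (car).
example = "VOCdevkit/VOC2007/JPEGImages/car_001.jpg 48,240,195,371,0 8,12,352,498,0"
path, boxes = parse_annotation_line(example)
print(path, boxes)
```

Because fields are separated by whitespace, an image path that itself contains spaces would be split incorrectly by this sketch.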

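2.1 Editing the config file yolov3.cfg

Note that this file is only needed to convert the .weights file downloaded from the official site.

If you train your own network from scratch, you can skip this step.

Open the cfg file in an IDE and press Ctrl+F to search for yolo. There are 3 [yolo] sections in total, and every one of them needs the changes below (I tripped over this several times):

[convolutional]

filters: 3*(5+len(classes)), which is 18 for a single class. This is the [convolutional] block directly above each [yolo] block.

[yolo]

classes: len(classes) = 1. There is only one class here (car), so write 1.

random: originally 1; change it to 0 if your GPU has little memory.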
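
The filters value can be computed rather than worked out by hand. In the sketch below, the 5 stands for the 4 box coordinates plus 1 objectness score predicted per anchor, and 3 is the number of anchors per detection scale; the function name `yolo_filters` is hypothetical.

```python
def yolo_filters(num_classes, anchors_per_scale=3):
    """Filters for the [convolutional] block directly before each [yolo] block."""
    return anchors_per_scale * (5 + num_classes)


for n in (1, 20, 80):
    print(n, "classes ->", yolo_filters(n), "filters")
```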

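2.2 Downloading and converting the pre-trained weights

The official YOLO site provides trained weights for the YOLOv3 model. Download the file and save it locally.

If your data differs too much from the classes YOLOv3 was pre-trained on and you do not plan to use transfer learning, you can train weights for the new classes from scratch and skip this download.

Download: https://pjreddie.com/media/files/yolov3.weights

To continue training from the pre-trained weights, run the following command to convert them into a format Keras can load: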

python convert.py yolov3.cfg yolov3.weights model_data/yolo.h5

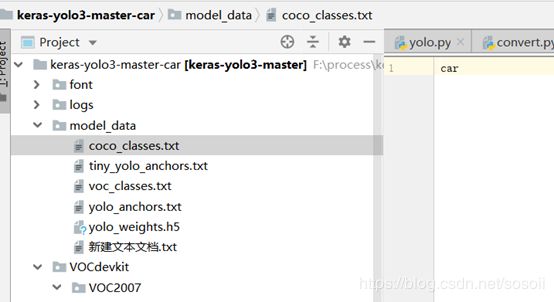

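2.3 Creating the class file

Replace the classes in coco_classes.txt and voc_classes.txt with your own classes, one name per line. The model trained here has a single class, car.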
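
The class file is plain text with one name per line, so it can be written and checked with a short script. This is a sketch: the helper names are hypothetical and it writes to a temporary folder, whereas in the project the target would be model_data/coco_classes.txt.

```python
import os
import tempfile


def write_classes(path, class_names):
    """Write one class name per line."""
    with open(path, "w") as f:
        f.write("\n".join(class_names) + "\n")


def read_classes(path):
    """Read the names back, skipping blank lines."""
    with open(path) as f:
        return [line.strip() for line in f if line.strip()]


with tempfile.TemporaryDirectory() as tmp:
    classes_file = os.path.join(tmp, "my_classes.txt")
    write_classes(classes_file, ["car"])
    class_names = read_classes(classes_file)
    print(class_names, len(class_names))
```

Unlike this sketch, the get_classes function shown later in this post does not skip blank lines, so a stray empty line at the end of the file would be counted as an extra class.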

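2.4 Training the model

Now comes the key step: training. First, update the path settings in train.py, mainly annotation_path, classes_path, and weights_path. Note that the default weights_path in create_model is model_data/yolo_weights.h5, while the convert.py command above writes model_data/yolo.h5, so make the two agree if you load the converted weights.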

def _main():
    annotation_path = '2007_train.txt'
    log_dir = 'logs/000/'
    classes_path = 'model_data/coco_classes.txt'
    anchors_path = 'model_data/yolo_anchors.txt'
    class_names = get_classes(classes_path)
    num_classes = len(class_names)
    anchors = get_anchors(anchors_path)

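The original train.py loads the pre-trained weights, freezes part of the layers, and trains on top of that. Run train.py to start training. By default the trained model is saved to logs/000/trained_weights_final.h5.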
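
Before starting a long run, it can save time to confirm that the input files train.py expects are actually there. The check below is only a sketch; the helper `missing_files` is hypothetical and the paths are the ones from the `_main` excerpt above, relative to the project root.

```python
import os


def missing_files(paths):
    """Return the subset of `paths` that does not exist on disk."""
    return [p for p in paths if not os.path.isfile(p)]


required = [
    "2007_train.txt",
    "model_data/coco_classes.txt",
    "model_data/yolo_anchors.txt",
]
missing = missing_files(required)
if missing:
    print("Missing before training:", missing)
else:
    print("All training inputs found.")
```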

2.5 Testing the model

Once training is finished, yolo.py can run the trained model. First, change the model path and the class file path in yolo.py as follows:

class YOLO(object):
    _defaults = {
        "model_path": 'logs/000/trained_weights_final.h5',
        # "model_path": 'model_data/yolo_weights.h5',
        "anchors_path": 'model_data/yolo_anchors.txt',
        "classes_path": 'model_data/coco_classes.txt',
        "score" : 0.3,
        "iou" : 0.45,
        "model_image_size" : (416, 416),
        "gpu_num" : 1,
    }
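
The `score` and `iou` entries are thresholds. As far as I understand the repo, detections scoring below `score` are discarded, and `iou` is the overlap limit used by non-maximum suppression to drop duplicate boxes. The sketch below only illustrates what an IoU value is; it is not the repo's code, and the function name `iou` is hypothetical.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)  # zero when the boxes do not overlap
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# Two 10x10 boxes shifted by half a width overlap by one third.
print(iou((0, 0, 10, 10), (5, 0, 15, 10)))
```

With `"iou": 0.45`, two boxes overlapping like the pair above (about 0.33) would both survive suppression, while near-identical boxes would be merged into one detection.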

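The YOLO class is all you need to use the model. To make testing easier, append the following code to the end of yolo.py; after changing the image path you can run your own YOLO model.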

if __name__ == '__main__':
    yolo = YOLO()
    path = 'F:/process/keras-yolo3-master/VOCdevkit/VOC2007/JPEGImages/DJI_0003.JPG'
    # path = 'test1.JPG'
    try:
        image = Image.open(path)  # Image comes from PIL, which yolo.py already imports
    except IOError:
        print('Open Error! Try again!')
    else:
        r_image = yolo.detect_image(image)
        r_image.show()

In keras-yolo3, if the line r_image, _ = yolo.detect_image(image) fails with the error 'JpegImageFile' object is not iterable, change it to r_image = yolo.detect_image(image).
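
The error comes from tuple unpacking: `r_image, _ = ...` expects the call to return something iterable with two items, but here it returns a single image object. The sketch below reproduces the situation with stand-ins; `FakeImage` and this `detect_image` are hypothetical and not part of the repo.

```python
class FakeImage:
    """Stand-in for a PIL image: a single object that is not iterable."""


def detect_image(image):
    return image  # returns one object, like the detect_image call described above


try:
    r_image, _ = detect_image(FakeImage())  # tries to unpack one object into two names
except TypeError as err:
    message = str(err)
    print("TypeError:", message)

r_image = detect_image(FakeImage())  # the fix: bind the single return value
print(type(r_image).__name__)
```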

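2.6 Supplement: training brand-new weights

Besides the car dataset above, I also have images from a specialized domain. They differ so much from the original YOLOv3 training set that the first stage of training (transfer learning with frozen layers) contributes little to what follows. In that case, start directly from the second stage, or train again starting from darknet53.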

"""

Retrain the YOLO model for your own dataset.
"""

import numpy as np
import keras.backend as K
from keras.layers import Input, Lambda
from keras.models import Model
from keras.callbacks import TensorBoard, ModelCheckpoint, EarlyStopping

from yolo3.model import preprocess_true_boxes, yolo_body, tiny_yolo_body, yolo_loss
from yolo3.utils import get_random_data


def _main():
    annotation_path = '2007_train.txt'
    log_dir = 'logs/000/'
    classes_path = 'model_data/coco_classes.txt'
    anchors_path = 'model_data/yolo_anchors.txt'
    class_names = get_classes(classes_path)
    anchors = get_anchors(anchors_path)
    input_shape = (416, 416)  # multiple of 32, hw
    # create_model defaults to load_pretrained=False, so training starts from scratch
    model = create_model(input_shape, anchors, len(class_names))
    train(model, annotation_path, input_shape, anchors, len(class_names), log_dir=log_dir)


def train(model, annotation_path, input_shape, anchors, num_classes, log_dir='logs/'):
    # The loss is computed inside the model by the 'yolo_loss' Lambda layer,
    # so the Keras loss function only passes the prediction through.
    model.compile(optimizer='adam', loss={
        'yolo_loss': lambda y_true, y_pred: y_pred})
    logging = TensorBoard(log_dir=log_dir)
    checkpoint = ModelCheckpoint(log_dir + "ep{epoch:03d}-loss{loss:.3f}-val_loss{val_loss:.3f}.h5",
                                 monitor='val_loss', save_weights_only=True, save_best_only=True, period=1)
    batch_size = 10
    val_split = 0.1
    with open(annotation_path) as f:
        lines = f.readlines()
    np.random.shuffle(lines)
    num_val = int(len(lines) * val_split)
    num_train = len(lines) - num_val
    print('Train on {} samples, val on {} samples, with batch size {}.'.format(num_train, num_val, batch_size))

    # With initial_epoch=1 and epochs=2 only a single epoch runs; raise epochs for real training.
    # The callbacks were created above but never passed in; they are passed here so they take effect.
    model.fit_generator(data_generator_wrap(lines[:num_train], batch_size, input_shape, anchors, num_classes),
                        steps_per_epoch=max(1, num_train // batch_size),
                        validation_data=data_generator_wrap(lines[num_train:], batch_size, input_shape, anchors,
                                                            num_classes),
                        validation_steps=max(1, num_val // batch_size),
                        epochs=2,
                        initial_epoch=1,
                        callbacks=[logging, checkpoint])
    model.save_weights(log_dir + 'trained_weights.h5')


def get_classes(classes_path):
    """Read one class name per line."""
    with open(classes_path) as f:
        class_names = f.readlines()
    class_names = [c.strip() for c in class_names]
    return class_names


def get_anchors(anchors_path):
    """Read comma-separated anchor sizes from the first line as (width, height) pairs."""
    with open(anchors_path) as f:
        anchors = f.readline()
    anchors = [float(x) for x in anchors.split(',')]
    return np.array(anchors).reshape(-1, 2)


def create_model(input_shape, anchors, num_classes, load_pretrained=False, freeze_body=False,
                 weights_path='model_data/yolo_weights.h5'):
    K.clear_session()  # get a new session
    image_input = Input(shape=(None, None, 3))
    h, w = input_shape
    num_anchors = len(anchors)
    # One ground-truth input per detection scale (strides 32, 16, 8).
    y_true = [Input(shape=(h // {0: 32, 1: 16, 2: 8}[l], w // {0: 32, 1: 16, 2: 8}[l],
                           num_anchors // 3, num_classes + 5)) for l in range(3)]

    model_body = yolo_body(image_input, num_anchors // 3, num_classes)
    print('Create YOLOv3 model with {} anchors and {} classes.'.format(num_anchors, num_classes))

    if load_pretrained:
        model_body.load_weights(weights_path, by_name=True, skip_mismatch=True)
        print('Load weights {}.'.format(weights_path))
        if freeze_body:
            # Do not freeze 3 output layers.
            num = len(model_body.layers) - 3
            for i in range(num):
                model_body.layers[i].trainable = False
            print('Freeze the first {} layers of total {} layers.'.format(num, len(model_body.layers)))

    model_loss = Lambda(yolo_loss, output_shape=(1,), name='yolo_loss',
                        arguments={'anchors': anchors, 'num_classes': num_classes, 'ignore_thresh': 0.5})(
        [*model_body.output, *y_true])
    model = Model([model_body.input, *y_true], model_loss)
    return model


def data_generator(annotation_lines, batch_size, input_shape, anchors, num_classes):
    """Yield batches of augmented images and ground-truth tensors forever."""
    n = len(annotation_lines)
    np.random.shuffle(annotation_lines)
    i = 0
    while True:
        image_data = []
        box_data = []
        for b in range(batch_size):
            i %= n  # wrap around at the end of the list
            image, box = get_random_data(annotation_lines[i], input_shape, random=True)
            image_data.append(image)
            box_data.append(box)
            i += 1
        image_data = np.array(image_data)
        box_data = np.array(box_data)
        y_true = preprocess_true_boxes(box_data, input_shape, anchors, num_classes)
        # The target is a dummy array because the loss is computed inside the model.
        yield [image_data, *y_true], np.zeros(batch_size)


def data_generator_wrap(annotation_lines, batch_size, input_shape, anchors, num_classes):
    n = len(annotation_lines)
    if n == 0 or batch_size <= 0:
        return None
    return data_generator(annotation_lines, batch_size, input_shape, anchors, num_classes)


if __name__ == '__main__':
    _main()
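
The script derives a few sizes from its settings, and it can help to see them as plain numbers. The sketch below repeats that arithmetic without Keras. It assumes the 9 anchors of the stock yolo_anchors.txt and the 18 training lines from the split earlier; the function names are hypothetical.

```python
def y_true_shapes(input_shape, num_anchors, num_classes):
    """Per-scale ground-truth shapes, as built in create_model (strides 32, 16, 8)."""
    h, w = input_shape
    return [(h // s, w // s, num_anchors // 3, num_classes + 5) for s in (32, 16, 8)]


def steps(num_lines, batch_size=10, val_split=0.1):
    """(steps_per_epoch, validation_steps) as computed in train()."""
    num_val = int(num_lines * val_split)
    num_train = num_lines - num_val
    return max(1, num_train // batch_size), max(1, num_val // batch_size)


print(y_true_shapes((416, 416), num_anchors=9, num_classes=1))
print(steps(18))
```

For a 416x416 input this gives grids of 13, 26, and 52 cells per side, each with 3 anchors and 6 values per anchor for a single class. With only 18 lines and a batch size of 10, each epoch is a single step, which shows how small this test dataset is.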