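Andrew Ng Assignment 5: Regularization and Dropout

A three-layer neural network is built to verify how regularization and dropout help prevent overfitting.

First, the dataset. reg_utils.py contains the dataset-generation functions, forward propagation, cost computation, and so on. The code is as follows: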

import numpy as np
import matplotlib.pyplot as plt
import h5py
import sklearn
import sklearn.datasets
import sklearn.linear_model
import scipy.io

def sigmoid(x):
    """
    Compute the sigmoid of x

    Arguments:
    x -- A scalar or numpy array of any size.

    Return:
    s -- sigmoid(x)
    """
    s = 1/(1+np.exp(-x))
    return s

def relu(x):
    """
    Compute the relu of x

    Arguments:
    x -- A scalar or numpy array of any size.

    Return:
    s -- relu(x)
    """
    s = np.maximum(0,x)
    return s

def load_planar_dataset(seed):
    np.random.seed(seed)
    m = 400 # number of examples
    N = int(m/2) # number of points per class
    D = 2 # dimensionality
    X = np.zeros((m,D)) # data matrix where each row is a single example
    Y = np.zeros((m,1), dtype='uint8') # labels vector (0 for red, 1 for blue)
    a = 4 # maximum ray of the flower
    for j in range(2):
        ix = range(N*j,N*(j+1))
        t = np.linspace(j*3.12,(j+1)*3.12,N) + np.random.randn(N)*0.2 # theta
        r = a*np.sin(4*t) + np.random.randn(N)*0.2 # radius
        X[ix] = np.c_[r*np.sin(t), r*np.cos(t)]
        Y[ix] = j
    X = X.T
    Y = Y.T
    return X, Y

def initialize_parameters(layer_dims):
    """
    Arguments:
    layer_dims -- python array (list) containing the dimensions of each layer in our network

    Returns:
    parameters -- python dictionary containing your parameters "W1", "b1", ..., "WL", "bL":
                    Wl -- weight matrix of shape (layer_dims[l], layer_dims[l-1])
                    bl -- bias vector of shape (layer_dims[l], 1)

    Tips:
    - For example: the layer_dims for the "Planar Data classification model" would have been [2,2,1].
      With the shapes used below, W1's shape is (2,2), b1 is (2,1), W2 is (1,2) and b2 is (1,1).
    - In the for loop, use parameters['W' + str(l)] to access Wl, where l is the iterative integer.
    """
    np.random.seed(3)
    parameters = {}
    L = len(layer_dims) # number of layers in the network
    for l in range(1, L):
        parameters['W' + str(l)] = np.random.randn(layer_dims[l], layer_dims[l-1]) / np.sqrt(layer_dims[l-1])
        parameters['b' + str(l)] = np.zeros((layer_dims[l], 1))
        # Compare against a shape tuple; assert(a == b, c) asserts a non-empty tuple and never fails
        assert parameters['W' + str(l)].shape == (layer_dims[l], layer_dims[l-1])
        assert parameters['b' + str(l)].shape == (layer_dims[l], 1)
    return parameters

def forward_propagation(X, parameters):
    """
    Implements the forward propagation (and computes the loss) presented in Figure 2.

    Arguments:
    X -- input dataset, of shape (input size, number of examples)
    parameters -- python dictionary containing your parameters "W1", "b1", "W2", "b2", "W3", "b3":
                    W1 -- weight matrix of shape ()
                    b1 -- bias vector of shape ()
                    W2 -- weight matrix of shape ()
                    b2 -- bias vector of shape ()
                    W3 -- weight matrix of shape ()
                    b3 -- bias vector of shape ()

    Returns:
    loss -- the loss function (vanilla logistic loss)
    """
    # retrieve parameters
    W1 = parameters["W1"]
    b1 = parameters["b1"]
    W2 = parameters["W2"]
    b2 = parameters["b2"]
    W3 = parameters["W3"]
    b3 = parameters["b3"]
    # LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SIGMOID
    Z1 = np.dot(W1, X) + b1
    A1 = relu(Z1)
    Z2 = np.dot(W2, A1) + b2
    A2 = relu(Z2)
    Z3 = np.dot(W3, A2) + b3
    A3 = sigmoid(Z3)
    cache = (Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3)
    return A3, cache

def backward_propagation(X, Y, cache):
    """
    Implement the backward propagation presented in figure 2.

    Arguments:
    X -- input dataset, of shape (input size, number of examples)
    Y -- true "label" vector (containing 0 if cat, 1 if non-cat)
    cache -- cache output from forward_propagation()

    Returns:
    gradients -- A dictionary with the gradients with respect to each parameter, activation and pre-activation variables
    """
    m = X.shape[1]
    (Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3) = cache
    dZ3 = A3 - Y
    dW3 = 1./m * np.dot(dZ3, A2.T)
    db3 = 1./m * np.sum(dZ3, axis=1, keepdims = True)
    dA2 = np.dot(W3.T, dZ3)
    dZ2 = np.multiply(dA2, np.int64(A2 > 0))
    dW2 = 1./m * np.dot(dZ2, A1.T)
    db2 = 1./m * np.sum(dZ2, axis=1, keepdims = True)
    dA1 = np.dot(W2.T, dZ2)
    dZ1 = np.multiply(dA1, np.int64(A1 > 0))
    dW1 = 1./m * np.dot(dZ1, X.T)
    db1 = 1./m * np.sum(dZ1, axis=1, keepdims = True)
    gradients = {"dZ3": dZ3, "dW3": dW3, "db3": db3,
                 "dA2": dA2, "dZ2": dZ2, "dW2": dW2, "db2": db2,
                 "dA1": dA1, "dZ1": dZ1, "dW1": dW1, "db1": db1}
    return gradients

def update_parameters(parameters, grads, learning_rate):
    """
    Update parameters using gradient descent

    Arguments:
    parameters -- python dictionary containing your parameters:
                    parameters['W' + str(i)] = Wi
                    parameters['b' + str(i)] = bi
    grads -- python dictionary containing your gradients for each parameters:
                    grads['dW' + str(i)] = dWi
                    grads['db' + str(i)] = dbi
    learning_rate -- the learning rate, scalar.

    Returns:
    parameters -- python dictionary containing your updated parameters
    """
    n = len(parameters) // 2 # number of layers in the neural networks
    # Update rule for each parameter
    for k in range(n):
        parameters["W" + str(k+1)] = parameters["W" + str(k+1)] - learning_rate * grads["dW" + str(k+1)]
        parameters["b" + str(k+1)] = parameters["b" + str(k+1)] - learning_rate * grads["db" + str(k+1)]
    return parameters

def predict(X, y, parameters):
    """
    This function is used to predict the results of a n-layer neural network.

    Arguments:
    X -- data set of examples you would like to label
    y -- true labels for X, used only to print the accuracy
    parameters -- parameters of the trained model

    Returns:
    p -- predictions for the given dataset X
    """
    m = X.shape[1]
    # The np.int alias was removed in NumPy 1.24; the builtin int is equivalent here
    p = np.zeros((1,m), dtype = int)
    # Forward propagation
    a3, caches = forward_propagation(X, parameters)
    # convert probas to 0/1 predictions
    for i in range(0, a3.shape[1]):
        if a3[0,i] > 0.5:
            p[0,i] = 1
        else:
            p[0,i] = 0
    # print results
    #print ("predictions: " + str(p[0,:]))
    #print ("true labels: " + str(y[0,:]))
    print("Accuracy: " + str(np.mean((p[0,:] == y[0,:]))))
    return p

def compute_cost(a3, Y):
    """
    Implement the cost function

    Arguments:
    a3 -- post-activation, output of forward propagation
    Y -- "true" labels vector, same shape as a3

    Returns:
    cost - value of the cost function
    """
    m = Y.shape[1]
    logprobs = np.multiply(-np.log(a3),Y) + np.multiply(-np.log(1 - a3), 1 - Y)
    cost = 1./m * np.nansum(logprobs)
    return cost

def load_dataset():
    train_dataset = h5py.File('datasets/train_catvnoncat.h5', "r")
    train_set_x_orig = np.array(train_dataset["train_set_x"][:]) # your train set features
    train_set_y_orig = np.array(train_dataset["train_set_y"][:]) # your train set labels
    test_dataset = h5py.File('datasets/test_catvnoncat.h5', "r")
    test_set_x_orig = np.array(test_dataset["test_set_x"][:]) # your test set features
    test_set_y_orig = np.array(test_dataset["test_set_y"][:]) # your test set labels
    classes = np.array(test_dataset["list_classes"][:]) # the list of classes
    train_set_y = train_set_y_orig.reshape((1, train_set_y_orig.shape[0]))
    test_set_y = test_set_y_orig.reshape((1, test_set_y_orig.shape[0]))
    train_set_x_orig = train_set_x_orig.reshape(train_set_x_orig.shape[0], -1).T
    test_set_x_orig = test_set_x_orig.reshape(test_set_x_orig.shape[0], -1).T
    train_set_x = train_set_x_orig/255
    test_set_x = test_set_x_orig/255
    return train_set_x, train_set_y, test_set_x, test_set_y, classes

def predict_dec(parameters, X):
    """
    Used for plotting decision boundary.

    Arguments:
    parameters -- python dictionary containing your parameters
    X -- input data of size (m, K)

    Returns
    predictions -- vector of predictions of our model (red: 0 / blue: 1)
    """
    # Predict using forward propagation and a classification threshold of 0.5
    a3, cache = forward_propagation(X, parameters)
    predictions = (a3>0.5)
    return predictions

def load_planar_dataset(randomness, seed):
    np.random.seed(seed)
    m = 50
    N = int(m/2) # number of points per class
    D = 2 # dimensionality
    X = np.zeros((m,D)) # data matrix where each row is a single example
    Y = np.zeros((m,1), dtype='uint8') # labels vector (0 for red, 1 for blue)
    a = 2 # maximum ray of the flower
    for j in range(2):
        ix = range(N*j,N*(j+1))
        if j == 0:
            t = np.linspace(j, 4*3.1415*(j+1),N) #+ np.random.randn(N)*randomness # theta
            r = 0.3*np.square(t) + np.random.randn(N)*randomness # radius
        if j == 1:
            t = np.linspace(j, 2*3.1415*(j+1),N) #+ np.random.randn(N)*randomness # theta
            r = 0.2*np.square(t) + np.random.randn(N)*randomness # radius
        X[ix] = np.c_[r*np.cos(t), r*np.sin(t)]
        Y[ix] = j
    X = X.T
    Y = Y.T
    return X, Y

def plot_decision_boundary(model, X, y):
    # Set min and max values and give it some padding
    x_min, x_max = X[0, :].min() - 1, X[0, :].max() + 1
    y_min, y_max = X[1, :].min() - 1, X[1, :].max() + 1
    h = 0.01
    # Generate a grid of points with distance h between them
    xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))
    # Predict the function value for the whole grid
    Z = model(np.c_[xx.ravel(), yy.ravel()])
    Z = Z.reshape(xx.shape)
    # Plot the contour and training examples
    plt.contourf(xx, yy, Z, cmap=plt.cm.Spectral)
    plt.ylabel('x2')
    plt.xlabel('x1')
    plt.scatter(X[0, :], X[1, :], c=y, cmap=plt.cm.Spectral)
    plt.show()

def load_2D_dataset():
    data = scipy.io.loadmat('datasets/data.mat')
    train_X = data['X'].T
    train_Y = data['y'].T
    test_X = data['Xval'].T
    test_Y = data['yval'].T
    #plt.scatter(train_X[0, :], train_X[1, :], c=np.squeeze(train_Y), s=40, cmap=plt.cm.Spectral);
    return train_X, train_Y, test_X, test_Y

The dataset is loaded as follows:

import numpy as np
import reg_utils
import matplotlib.pyplot as plt
import testCases
import sklearn
import sklearn.datasets

train_X, train_Y, test_X, test_Y=reg_utils.load_2D_dataset()
print('training samples={}'.format(train_X.shape))
print('training labels={}'.format(train_Y.shape))
print('test samples={}'.format(test_X.shape))
plt.show()

Printed output:

The first approach uses neither regularization nor dropout, i.e. lambda=0 and keep_prob=1. The code is as follows:

import numpy as np
import reg_utils
import matplotlib.pyplot as plt
import testCases
import sklearn
import sklearn.datasets

train_X, train_Y, test_X, test_Y=reg_utils.load_2D_dataset()
print('training samples={}'.format(train_X.shape))
print('training labels={}'.format(train_Y.shape))
print('test samples={}'.format(test_X.shape))
# plt.show()

"""

Initialize the weights with variance 2/n (He initialization)
"""
def initialize_parameters_he(layers_dims):
    L=len(layers_dims)
    parameters={}
    for i in range(1,L):
        parameters['W'+str(i)]=np.random.randn(layers_dims[i],layers_dims[i-1])\
                               *np.sqrt(2.0/layers_dims[i-1])
        parameters['b' + str(i)]=np.zeros((layers_dims[i],1))
    return parameters

'''

Compute the cost, including the L2 regularization term
'''
def compute_cost_with_regularization(A3,Y,parameters,lambd):
    m=Y.shape[1]
    W1 = parameters['W1']
    W2 = parameters['W2']
    W3 = parameters['W3']
    cost_entropy=reg_utils.compute_cost(A3, Y)
    # Use the builtin sum over the generator; passing a generator to np.sum is deprecated
    cost_regularize=sum(np.sum(np.square(Wl)) for Wl in [W1,W2,W3])* lambd / (2 * m)
    cost=cost_entropy+cost_regularize
    return cost

"""

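Forward propagation with dropout
"""
def forward_propagation_with_dropout(X,parameters,keep_prob):
    W1 = parameters['W1']
    b1 = parameters['b1']
    W2 = parameters['W2']
    b2 = parameters['b2']
    W3 = parameters['W3']
    b3 = parameters['b3']
    Z1=np.dot(W1,X)+b1
    A1=reg_utils.relu(Z1)
    D1=np.random.rand(A1.shape[0],A1.shape[1])# np.random.rand returns values in [0, 1)
    D1=(D1<keep_prob)
    # NOTE: the source text is garbled from the comparison above down to the dZ2 line
    # of the backward pass. The lines in between are reconstructed (an assumption)
    # from the standard inverted-dropout steps and the variables the surviving code uses.
    A1=np.multiply(A1,D1)
    A1=A1/keep_prob
    Z2=np.dot(W2,A1)+b2
    A2=reg_utils.relu(Z2)
    D2=np.random.rand(A2.shape[0],A2.shape[1])
    D2=(D2<keep_prob)
    A2=np.multiply(A2,D2)
    A2=A2/keep_prob
    Z3=np.dot(W3,A2)+b3
    A3=reg_utils.sigmoid(Z3)
    cache=(Z1, D1, A1, W1, b1, Z2, D2, A2, W2, b2, Z3, A3, W3, b3)
    return A3, cache

"""
Backward propagation with dropout
"""
def back_propagation_with_dropout(X,Y,cache,keep_prob):
    m=X.shape[1]
    (Z1, D1, A1, W1, b1, Z2, D2, A2, W2, b2, Z3, A3, W3, b3) = cache
    dZ3=1./m *(A3-Y)
    dW3=np.dot(dZ3,A2.T)
    db3=np.sum(dZ3, axis=1, keepdims=True)
    dA2=np.dot(W3.T,dZ3)
    dA2=np.multiply(dA2,D2)
    dA2=dA2/keep_prob
    dZ2 = np.multiply(dA2, np.int64(A2 > 0))
    # End of the reconstructed span; the remaining lines are from the source.
    dW2 = np.dot(dZ2, A1.T)
    db2 = np.sum(dZ2, axis=1, keepdims=True)
    dA1 = np.dot(W2.T, dZ2)
    dA1 = np.multiply(dA1, D1)
    dA1 = dA1 / keep_prob
    dZ1 = np.multiply(dA1, np.int64(A1 > 0))
    dW1 = np.dot(dZ1, X.T)
    db1 = np.sum(dZ1, axis=1, keepdims=True)
    gradients={'dZ3':dZ3,'dW3':dW3,'db3':db3,'dA2':dA2,'dZ2':dZ2,
               'dW2':dW2,'db2':db2,'dA1':dA1,'dZ1':dZ1,'dW1':dW1,'db1':db1}
    return gradients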

"""

Backward propagation with the L2 regularization term
"""
def back_propagation_with_regularization(X,Y,lambd,cache):
    m=X.shape[1]
    (Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3) = cache
    #X,W1,A1,W2,A2,W3,A3=cache
    dZ3=1./m *(A3-Y)
    dW3=np.dot(dZ3,A2.T)+W3*(lambd/m)
    db3=np.sum(dZ3, axis=1, keepdims = True)
    dA2=np.dot(W3.T,dZ3)
    dZ2=np.multiply(dA2,np.int64(A2 > 0))
    dW2=np.dot(dZ2,A1.T)+W2*(lambd/m)# the larger lambda is, the more heavily W is penalized
    db2 = np.sum(dZ2, axis=1, keepdims=True)
    dA1=np.dot(W2.T,dZ2)
    dZ1 = np.multiply(dA1, np.int64(A1 > 0))
    dW1 = np.dot(dZ1, X.T) + W1 * (lambd / m)
    db1 = np.sum(dZ1, axis=1, keepdims=True)
    gradients = {"dZ3": dZ3, "dW3": dW3, "db3": db3, "dA2": dA2,
                 "dZ2": dZ2, "dW2": dW2, "db2": db2, "dA1": dA1,
                 "dZ1": dZ1, "dW1": dW1, "db1": db1}
    return gradients

"""

构建模型

"""

def model(X,Y,num_iterations,learning_rate,lambd=0,keep_prob=1):

layers_dims=[X.shape[0], 20, 3, 1]

parameters=initialize_parameters_he(layers_dims)

costs=[]

for i in range(num_iterations):

if keep_prob==1:

A3, cache=reg_utils.forward_propagation(X, parameters)

elif keep_prob<1:

A3, cache=forward_propagation_with_dropout(X, parameters, keep_prob)

if lambd==0:

cost = reg_utils.compute_cost(A3, Y)

else:

cost=compute_cost_with_regularization(A3,Y,parameters,lambd)

if lambd==0 and keep_prob==1:

gradients=reg_utils.backward_propagation(X, Y, cache)

elif lambd!=0:

gradients=back_propagation_with_regularization(X, Y, lambd, cache)

elif keep_prob<1:

gradients=back_propagation_with_dropout(X,Y,cache,keep_prob)

parameters=reg_utils.update_parameters(parameters, gradients, learning_rate)

if i%1000==0:

print('after {} iterations cost is {}'.format(i,cost))

costs.append(cost)

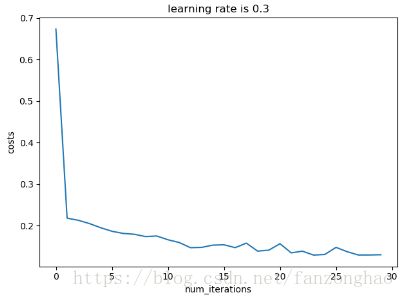

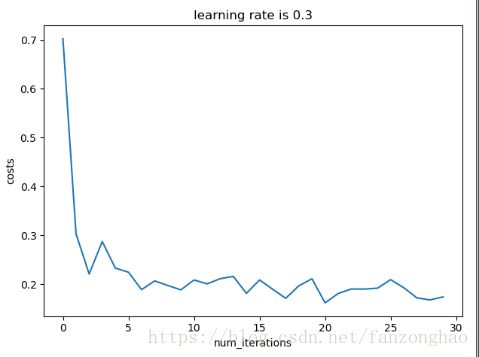

plt.plot(costs)

plt.xlabel('num_iterations')

plt.ylabel('costs')

plt.title('learning rate is {}'.format(str(learning_rate)))

plt.show()

return parameters

def test():
    #######test compute_cost_with_regularization
    # a3, Y_assess, parameters=testCases.compute_cost_with_regularization_test_case()
    # cost=compute_cost_with_regularization(a3, Y_assess, parameters,0.1)
    # print(cost)
    ########################
    #######back_propagation_with_regularization
    # X_assess, Y_assess, cache=testCases.backward_propagation_with_regularization_test_case()
    # gradients=back_propagation_with_regularization(X_assess, Y_assess,0.7,cache)
    # print('dw1={} dw2={} dw3={}'.format(gradients['dW1'],gradients['dW2'],gradients['dW3']))
    ###################test forward_propagation_with_dropout
    # X_assess, parameters=testCases.forward_propagation_with_dropout_test_case()
    # A3, cache=forward_propagation_with_dropout(X_assess, parameters,keep_prob=0.7)
    # print('A3={}'.format(A3))
    ###################test backward_propagation_with_dropout
    X_assess, Y_assess, cache=testCases.backward_propagation_with_dropout_test_case()
    gradients=back_propagation_with_dropout(X_assess, Y_assess, cache,keep_prob=0.8)
    print('dA1={}'.format(gradients['dA1']))
    print('dA2={}'.format(gradients['dA2']))

"""

测试模型

"""

def test_model():

parameters = model(train_X, train_Y, num_iterations=30000, learning_rate=0.3, lambd=0,keep_prob=1)

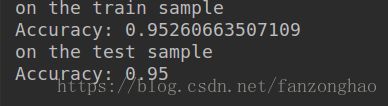

print('on the train sample')

train_prediction=reg_utils.predict(train_X, train_Y,parameters)

print('on the test sample')

test_prediction = reg_utils.predict(test_X, test_Y, parameters)

if __name__=='__main__':

#test()

test_model()

#pass 打印结果:可看出过拟合了

With lambda=0.7 and keep_prob=1, the printed output shows that overfitting is reduced.
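To make the effect of lambda concrete, here is a minimal sketch of the L2 penalty on its own. It checks numerically that the gradient of the penalty lambd / (2 * m) * sum(W^2) is lambd / m * W, which is the extra term added to each dW in back_propagation_with_regularization. The helper names l2_penalty and l2_penalty_grad, the random weight matrix, and m = 200 are illustrative assumptions and do not come from the assignment.

```python
import numpy as np

def l2_penalty(weights, lambd, m):
    """L2 penalty: lambd / (2 * m) times the sum of all squared weights."""
    return lambd / (2 * m) * sum(np.sum(np.square(W)) for W in weights)

def l2_penalty_grad(W, lambd, m):
    """Gradient of the penalty with respect to a single weight matrix."""
    return lambd / m * W

rng = np.random.default_rng(0)
W = rng.standard_normal((3, 2))   # hypothetical weight matrix
lambd, m = 0.7, 200               # m is an arbitrary illustrative sample count

# Central finite difference on one entry of W
eps = 1e-6
W_plus, W_minus = W.copy(), W.copy()
W_plus[0, 0] += eps
W_minus[0, 0] -= eps
numeric = (l2_penalty([W_plus], lambd, m) - l2_penalty([W_minus], lambd, m)) / (2 * eps)
analytic = l2_penalty_grad(W, lambd, m)[0, 0]
print(numeric, analytic)
```

Because this gradient is proportional to W, each gradient-descent step shrinks W by a factor of (1 - learning_rate * lambd / m) before the data gradient is applied, which is why a larger lambda pushes the weights toward smaller values.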

With lambda=0 and keep_prob=0.86, the printed output shows that dropout also reduces overfitting.
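The dropout step can also be looked at in isolation. The sketch below applies inverted dropout to a matrix of ones and shows that dividing by keep_prob keeps the mean activation roughly unchanged, so no rescaling is needed at prediction time. The helper name inverted_dropout, the array shape, and the seed are assumptions made for illustration, and the sketch uses NumPy's Generator API rather than the np.random.rand call in the code above.

```python
import numpy as np

def inverted_dropout(A, keep_prob, rng):
    """Zero each unit with probability 1 - keep_prob, then rescale the survivors."""
    mask = rng.random(A.shape) < keep_prob   # True where the unit is kept
    return A * mask / keep_prob, mask

rng = np.random.default_rng(1)
A = np.ones((20, 5000))                      # hypothetical activations
A_drop, mask = inverted_dropout(A, keep_prob=0.86, rng=rng)

print(mask.mean())     # fraction of units kept, close to keep_prob
print(A_drop.mean())   # mean activation after dropout, close to the original 1.0
```

The same mask has to be reused in the backward pass, which is why the forward pass stores D1 and D2 in the cache.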