WordCount Hands-On Example

1. Requirements

Count and output the total number of occurrences of each word in a given text file.

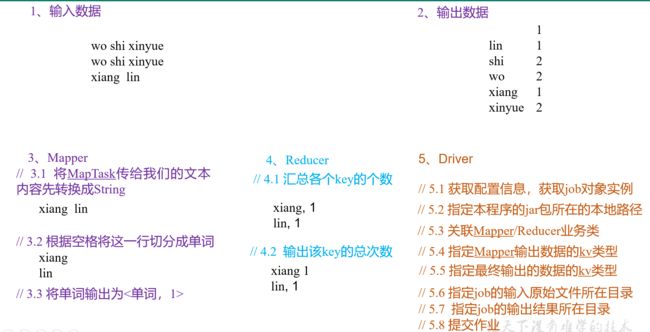

(1) Input data


(2) Expected output data

1

lin 1

shi 2

wo 2

xiang 1

xinyue 2

2. Requirements analysis

Following the MapReduce programming conventions, write a Mapper, a Reducer, and a Driver, as shown in the figures.

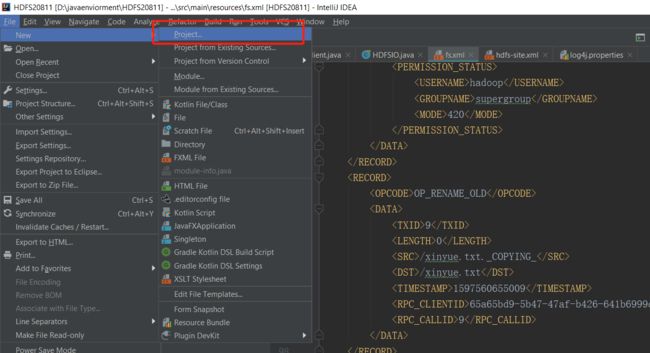

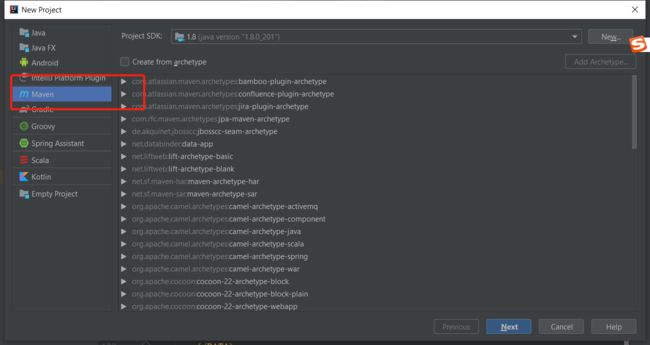

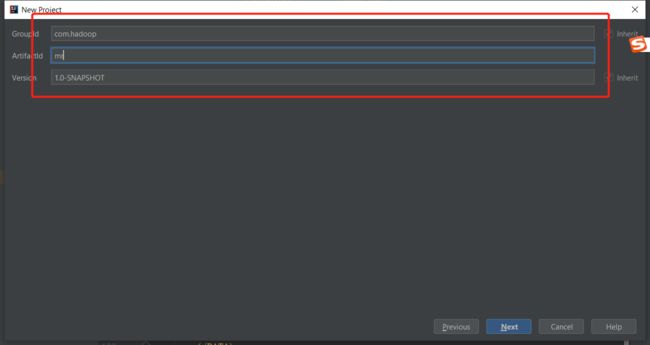

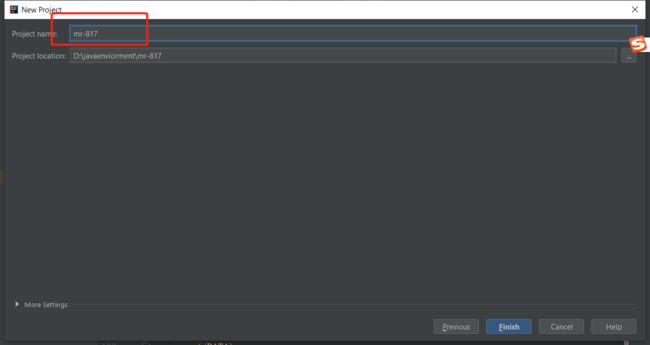

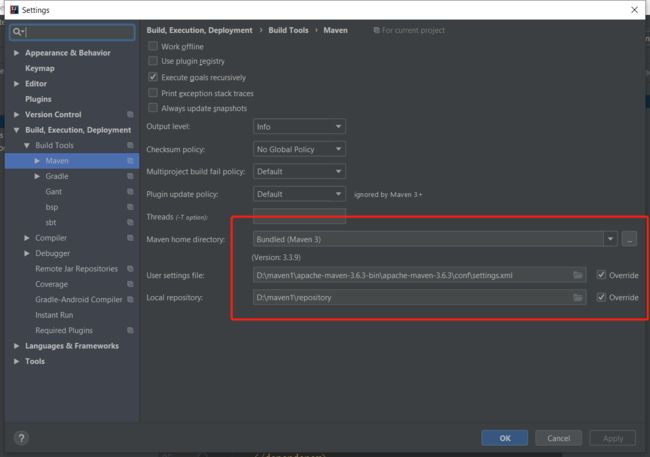
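The division of work between Mapper and Reducer can be tried out without a cluster. The following is a minimal sketch in plain Java (no Hadoop dependency) that imitates the three phases: map each line to <word, 1> pairs, group the pairs by key, then sum each group. The class name WordCountSketch, its method names, and the sample lines are all made up for illustration; the real framework also partitions, sorts, and moves data between machines, none of which is modeled here.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class WordCountSketch {

    // Map phase: one input line becomes a list of <word, 1> pairs.
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String word : line.split(" ")) {
            pairs.add(new AbstractMap.SimpleEntry<>(word, 1));
        }
        return pairs;
    }

    // Shuffle phase (simplified): group the values by key, keys in sorted order.
    static TreeMap<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        TreeMap<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // Reduce phase: sum all counts that belong to one key.
    static int reduce(List<Integer> values) {
        int sum = 0;
        for (int value : values) {
            sum += value;
        }
        return sum;
    }

    // Runs the three phases in order over all input lines.
    static TreeMap<String, Integer> run(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            pairs.addAll(map(line));
        }
        TreeMap<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> group : shuffle(pairs).entrySet()) {
            counts.put(group.getKey(), reduce(group.getValue()));
        }
        return counts;
    }

    public static void main(String[] args) {
        // Hypothetical sample lines, not the input file used in this post.
        List<String> lines = Arrays.asList("wo shi xinyue", "wo shi xiang lin");
        for (Map.Entry<String, Integer> e : run(lines).entrySet()) {
            System.out.println(e.getKey() + "\t" + e.getValue());
        }
    }
}
```

The TreeMap stands in for the sorted, grouped view of keys that a Reducer receives. For ASCII words its ordering matches what Hadoop produces for Text keys, which is why the output of a real job is also listed alphabetically.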

3. Environment setup

(1) Create a Maven project

(2) Add the following dependencies to the pom.xml file

<dependencies>
    <dependency>
        <groupId>junit</groupId>
        <artifactId>junit</artifactId>
        <version>RELEASE</version>
    </dependency>
    <dependency>
        <groupId>org.apache.logging.log4j</groupId>
        <artifactId>log4j-core</artifactId>
        <version>2.8.2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-common</artifactId>
        <version>2.7.2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-client</artifactId>
        <version>2.7.2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-hdfs</artifactId>
        <version>2.7.2</version>
    </dependency>
</dependencies>

(3) In the project's src/main/resources directory, create a new file named "log4j.properties" and fill it with the following content.

log4j.rootLogger=INFO, stdout
log4j.appender.stdout=org.apache.log4j.ConsoleAppender
log4j.appender.stdout.layout=org.apache.log4j.PatternLayout
log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n
log4j.appender.logfile=org.apache.log4j.FileAppender
log4j.appender.logfile.File=target/spring.log
log4j.appender.logfile.layout=org.apache.log4j.PatternLayout
log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n

4. Writing the program

(1) Write the Mapper class

package com.hadoop.mapreduce;

/*
 Author  : XiangLin
 Created : 17/08/2020 23:39
 File    : WordcountMapper.java
 IDE     : IntelliJ IDEA
*/

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

// Input: <byte offset of the line, line of text>; output: <word, 1>
public class WordcountMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

    // Reused for every record instead of allocating new objects in map()
    Text k = new Text();
    IntWritable v = new IntWritable(1);

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // 1. Get one line
        String line = value.toString();

        // 2. Split the line on single spaces
        String[] words = line.split(" ");

        // 3. Emit <word, 1> for each word
        for (String word : words) {
            k.set(word);
            context.write(k, v);
        }
    }
}
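One detail of the Mapper is worth checking in isolation: String.split(" ") returns an empty token for an empty line and for every extra space between words, and the Mapper would emit each of those as a word. The sketch below, in plain Java with no Hadoop dependency, compares that behavior with a whitespace-tolerant variant. The names SplitSketch, naiveTokens, and cleanTokens are hypothetical and not part of the original program; whether empty tokens should be dropped depends on what you want counted.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

final class SplitSketch {

    // Same splitting rule as the Mapper: one token per single space.
    static List<String> naiveTokens(String line) {
        return Arrays.asList(line.split(" "));
    }

    // Alternative rule: split on runs of whitespace and drop empty tokens.
    static List<String> cleanTokens(String line) {
        List<String> tokens = new ArrayList<>();
        for (String token : line.trim().split("\\s+")) {
            if (!token.isEmpty()) {
                tokens.add(token);
            }
        }
        return tokens;
    }

    public static void main(String[] args) {
        // Hypothetical sample lines: double space, empty line, leading space.
        String[] samples = {"wo  shi", "", " xinyue"};
        for (String line : samples) {
            System.out.println("[" + line + "] naive=" + naiveTokens(line).size()
                    + " clean=" + cleanTokens(line).size());
        }
    }
}
```

If the cleaner rule is wanted in the job itself, the same loop body could replace the split in map(), at the cost of changing which keys appear in the output.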

(2) Write the Reducer class

package com.hadoop.mapreduce;

/*
 Author  : XiangLin
 Created : 17/08/2020 23:49
 File    : WordcountReducer.java
 IDE     : IntelliJ IDEA
*/

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

// Input: <word, all the 1s emitted for that word>; output: <word, total count>
public class WordcountReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

    int sum;
    IntWritable v = new IntWritable();

    @Override
    protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
        // 1. Sum the counts for this key
        sum = 0;
        for (IntWritable count : values) {
            sum += count.get();
        }

        // 2. Emit <word, total>
        v.set(sum);
        context.write(key, v);
    }
}

(3) Write the Driver class

package com.hadoop.mapreduce;

/*
 Author  : XiangLin
 Created : 17/08/2020 23:53
 File    : WordcountDriver.java
 IDE     : IntelliJ IDEA
*/

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class WordcountDriver {

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        Configuration conf = new Configuration();

        // 1. Get the Job object
        Job job = Job.getInstance(conf);

        // 2. Set the jar location (found through the driver class)
        job.setJarByClass(WordcountDriver.class);

        // 3. Associate the Mapper and Reducer classes
        job.setMapperClass(WordcountMapper.class);
        job.setReducerClass(WordcountReducer.class);

        // 4. Set the key and value types of the Mapper output
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(IntWritable.class);

        // 5. Set the key and value types of the final output
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        // 6. Set the input path and the output path
        FileInputFormat.setInputPaths(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        // 7. Submit the job and wait for it to finish
        boolean result = job.waitForCompletion(true);
        System.exit(result ? 0 : 1);
    }
}

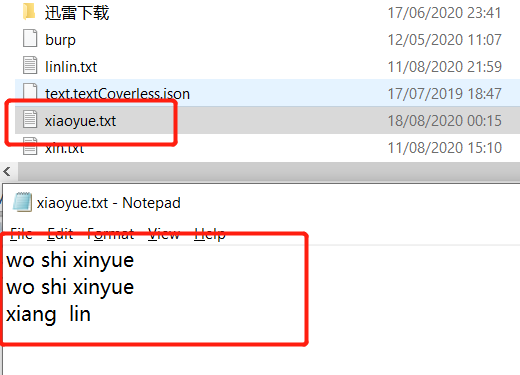

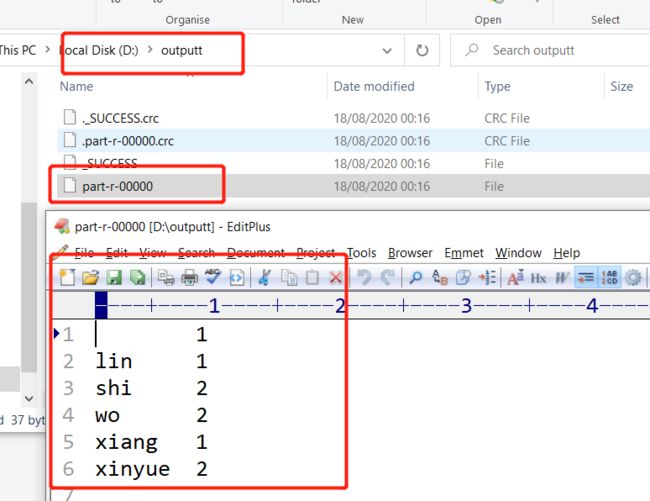

5. Local test

Run the Driver with the input path and the output path as program arguments:

d:/xiaoyue.txt d:/outputt

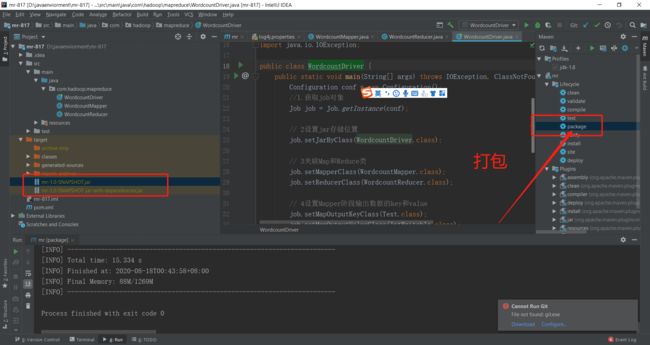
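The Driver reads args[0] and args[1] without checking them, and Hadoop's FileOutputFormat refuses to start a job whose output directory already exists, so a second local run with the same arguments fails until that directory is deleted. A minimal pre-check for local runs might look like the sketch below. It assumes local file system paths like the ones above and uses only java.nio; LocalArgsCheck and problem are hypothetical names, and the check does not apply to HDFS paths.

```java
import java.nio.file.Files;
import java.nio.file.Paths;

final class LocalArgsCheck {

    // Returns null when the arguments look usable, otherwise a short description of the problem.
    static String problem(String[] args) {
        if (args.length != 2) {
            return "expected 2 arguments: <input path> <output path>";
        }
        if (!Files.exists(Paths.get(args[0]))) {
            return "input path does not exist: " + args[0];
        }
        if (Files.exists(Paths.get(args[1]))) {
            return "output path already exists: " + args[1];
        }
        return null;
    }

    public static void main(String[] args) {
        String problem = problem(args);
        if (problem != null) {
            System.err.println(problem);
            System.exit(2);
        }
        System.out.println("arguments look fine");
    }
}
```

Calling such a check at the top of the Driver's main would turn an ArrayIndexOutOfBoundsException or a late job failure into a one-line message.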

6. Testing on the cluster

(0) Build the jar with Maven. The following packaging plugins need to be added to pom.xml.

Note: the mainClass value must be the fully qualified name of your own driver class.

<build>
    <plugins>
        <plugin>
            <artifactId>maven-compiler-plugin</artifactId>
            <version>2.3.2</version>
            <configuration>
                <source>1.8</source>
                <target>1.8</target>
            </configuration>
        </plugin>
        <plugin>
            <artifactId>maven-assembly-plugin</artifactId>
            <configuration>
                <descriptorRefs>
                    <descriptorRef>jar-with-dependencies</descriptorRef>
                </descriptorRefs>
                <archive>
                    <manifest>
                        <mainClass>com.hadoop.mapreduce.WordcountDriver</mainClass>
                    </manifest>
                </archive>
            </configuration>
            <executions>
                <execution>
                    <id>make-assembly</id>
                    <phase>package</phase>
                    <goals>
                        <goal>single</goal>
                    </goals>
                </execution>
            </executions>
        </plugin>
    </plugins>
</build>

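Note: if the project shows a red cross, right-click the project and choose Maven -> Update Project.

(1) Rename the jar without dependencies to wc.jar and copy it to the Hadoop cluster.

(2) Start the Hadoop cluster

(3) Run the WordCount program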

[hadoop@hadoop102 hadoop-2.7.2]$ hadoop jar wc.jar com.hadoop.mapreduce.WordcountDriver /user/hadoop/input /user/hadoop/output

[hadoop@hadoop102 hadoop-2.7.2]$ hadoop fs -cat /user/hadoop/output/part-r-00000

gu 1

lu 1

mao 1

san 1

shi 2

wo 2

woshibossxiang 2

xinyue 2

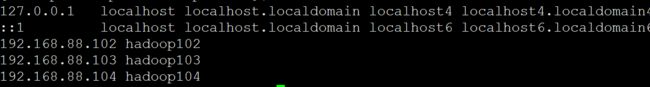
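To compare a result file with the expected counts programmatically, the lines of part-r-00000 can be read back into a map. By default Hadoop's text output separates key and value with a tab character. The sketch below is plain Java and works on lines already loaded into memory; OutputParseSketch and parse are hypothetical names, and the sample lines in main are copied from the output above with the tab written explicitly.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

final class OutputParseSketch {

    // Parses "key<TAB>count" lines into a map that keeps the file order.
    // Lines without a tab are skipped.
    static Map<String, Integer> parse(List<String> lines) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (String line : lines) {
            int tab = line.lastIndexOf('\t');
            if (tab < 0) {
                continue;
            }
            String word = line.substring(0, tab);
            int count = Integer.parseInt(line.substring(tab + 1).trim());
            counts.put(word, count);
        }
        return counts;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("shi\t2", "wo\t2", "xinyue\t2");
        for (Map.Entry<String, Integer> e : parse(lines).entrySet()) {
            System.out.println(e.getKey() + " occurs " + e.getValue() + " time(s)");
        }
    }
}
```

Using lastIndexOf keeps a line with an empty key parseable, which matters if the Mapper emitted empty tokens.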

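Note: while running the job on the cluster, I ran into a problem: the job hung and never finished.

After a long search on Baidu, I found the cause.

Solution: shut down the Hadoop cluster and edit the hosts file with the command sudo vi /etc/hosts. The file must be changed on all three machines.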
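Since the fix involves the hosts file, one quick check is whether each node's host name resolves at all from a given machine. The sketch below uses only the JDK. The host name hadoop102 comes from the shell prompt in this post, while hadoop103 and hadoop104 are assumed names for the other two machines; pass your own host names as arguments. A name that resolves does not prove the mapping is correct, so this only rules out the simplest failure.

```java
import java.net.InetAddress;
import java.net.UnknownHostException;

final class HostsCheck {

    // Returns true when the JVM can resolve the host name to an IP address.
    static boolean resolves(String host) {
        try {
            InetAddress.getByName(host);
            return true;
        } catch (UnknownHostException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        // hadoop102 appears in this post; hadoop103 and hadoop104 are assumed names.
        String[] hosts = args.length > 0
                ? args
                : new String[] {"hadoop102", "hadoop103", "hadoop104"};
        for (String host : hosts) {
            System.out.println(host + " -> " + (resolves(host) ? "resolves" : "NOT resolvable"));
        }
    }
}
```

Running it on each of the three machines shows whether every node can find the others by name.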
