Image Feature Vocabulary (Visual Dictionary): Principles and Implementation


Principles

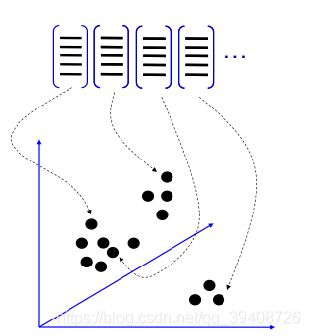

I. Bag of features: basic pipeline

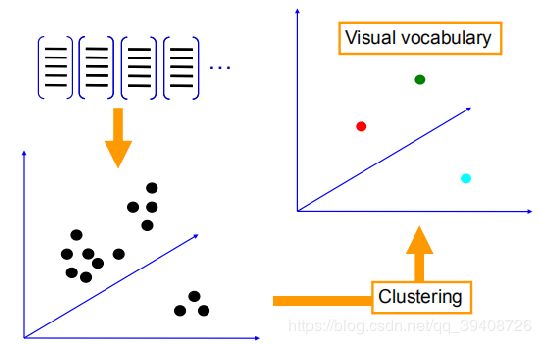

Clustering is the key to building the visual vocabulary / codebook:

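• It is an unsupervised learning strategy

• The cluster centers found by k-means serve as the codevectors

• A codebook can be trained jointly on several different training sets

• Once the training set is sufficiently rich, the trained codebook generalizes and can be applied to other images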
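The clustering idea above can be sketched as a minimal k-means (Lloyd's algorithm) in plain Python, where the final centroids are the codevectors. This is a toy illustration on tiny 2-D vectors, not PCV's code; the names `kmeans_codebook` and `squared_distance` are hypothetical, and real SIFT descriptors are 128-dimensional and far more numerous.

```python
import random

def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans_codebook(features, k, iterations=20, seed=0):
    """Lloyd's k-means; the returned k centroids play the role of codevectors."""
    rng = random.Random(seed)
    centers = [list(f) for f in rng.sample(features, k)]  # start from k randomly chosen features
    for _ in range(iterations):
        # Assignment step: put every feature in the cluster of its nearest center
        clusters = [[] for _ in range(k)]
        for f in features:
            nearest = min(range(k), key=lambda j: squared_distance(f, centers[j]))
            clusters[nearest].append(f)
        # Update step: move each center to the mean of its members
        for j, members in enumerate(clusters):
            if members:  # an empty cluster keeps its previous center
                centers[j] = [sum(dim) / len(members) for dim in zip(*members)]
    return centers

# Usage: two well-separated groups of toy "descriptors"
features = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
codebook = kmeans_codebook(features, k=2)
```

With k = 2 the two centroids settle on the means of the two groups, so every later feature can be described by which of the two codevectors it is closest to.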

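The codebook (dictionary) is used to quantize the feature set of an input image:

• For each input feature, quantization maps it to its nearest codevector and increments that codevector's count

• Codebook = visual vocabulary

• Codevector = visual word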
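Quantization can be sketched as a brute-force nearest-neighbour search over the codebook, followed by a count per visual word. The helper name `quantize` is hypothetical, and the linear search assumes a small codebook.

```python
from collections import Counter

def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def quantize(features, codebook):
    """Return, for each feature, the index of its nearest codevector (its visual word)."""
    return [min(range(len(codebook)), key=lambda j: squared_distance(f, codebook[j]))
            for f in features]

# Usage: two codevectors, three input features
codebook = [(0, 0), (10, 10)]
words = quantize([(1, 1), (9, 8), (0, 2)], codebook)
counts = Counter(words)  # how many features fell on each visual word
```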

4. Convert the input image into a frequency histogram of visual words
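Turning the visual word indices of one image into a histogram is a count over a vocabulary of fixed size. The sketch below also divides by the number of features so that images with many and few keypoints are comparable; that normalization is an assumption here, and `word_histogram` is a hypothetical name.

```python
def word_histogram(words, vocab_size):
    """Normalized frequency histogram of visual word indices (entries sum to 1)."""
    hist = [0.0] * vocab_size
    for w in words:
        hist[w] += 1
    if not words:
        return hist  # an image with no features gets an all-zero histogram
    return [h / len(words) for h in hist]

# Usage: four features quantized to words 0, 2, 2, 1 in a 4-word vocabulary
hist = word_histogram([0, 2, 2, 1], vocab_size=4)
```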

5. Build an inverted index from features (visual words) to images, and use it to quickly look up relevant images
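An inverted index maps each visual word to the images that contain it, so a query only touches images that share at least one word with it. A minimal in-memory sketch with hypothetical names and toy data:

```python
from collections import defaultdict

def build_inverted_index(image_words):
    """Map each visual word id to the set of images that contain it.

    image_words maps an image name to its list of visual word ids.
    """
    index = defaultdict(set)
    for image, words in image_words.items():
        for w in words:
            index[w].add(image)
    return index

def lookup(index, query_words):
    """Images sharing at least one word with the query, most shared words first."""
    shared = defaultdict(int)
    for w in set(query_words):
        for image in index.get(w, ()):
            shared[image] += 1
    return sorted(shared, key=lambda image: (-shared[image], image))

index = build_inverted_index({'a.jpg': [0, 1, 1], 'b.jpg': [2], 'c.jpg': [1, 2]})
candidates = lookup(index, [1, 2])
```

Only the images in `candidates` then need a full histogram comparison, which is what makes the index fast on a large database.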

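Given an image's bag-of-features histogram, how do we classify it or retrieve similar images?

Given the BOW histogram of the input image, find its k nearest-neighbor images in the database.

For image classification, the class label can then be decided by a vote among the labels of these k neighbors.

When the training data is rich enough to represent all the images, retrieval and classification work well.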
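The k-nearest-neighbour retrieval and label voting described above can be sketched as follows, assuming plain Euclidean distance between histograms and a database of `(histogram, label)` pairs; the function names and toy data are hypothetical.

```python
import math
from collections import Counter

def euclidean(h1, h2):
    """Euclidean distance between two histograms of equal length."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(h1, h2)))

def knn_classify(query_hist, database, k):
    """database is a list of (histogram, label) pairs.

    Returns the majority label among the k nearest histograms, plus those neighbours.
    Ties are broken in favour of the label that occurs first in distance order.
    """
    neighbours = sorted(database, key=lambda item: euclidean(query_hist, item[0]))[:k]
    votes = Counter(label for _, label in neighbours)
    return votes.most_common(1)[0][0], neighbours

database = [([1.0, 0.0, 0.0], 'cat'), ([0.0, 1.0, 0.0], 'dog'), ([0.9, 0.1, 0.0], 'cat'),
            ([0.0, 0.9, 0.1], 'dog'), ([0.8, 0.2, 0.0], 'cat')]
label, nearest = knn_classify([1.0, 0.0, 0.0], database, k=3)
```

For pure retrieval, `nearest` is the answer; for classification, only `label` is kept.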

6. Match histograms against the candidate images returned by the index
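For the matching step, one common choice (assumed here, not necessarily what PCV does internally) is to down-weight words that appear in many images using inverse document frequency, idf_w = log(N / n_w), where N is the number of images and n_w the number of images containing word w, and then rank candidates by the idf-weighted Euclidean distance. Function names are hypothetical.

```python
import math

def idf_weights(histograms):
    """idf for each word: log(N / n_w); words that appear in no image get weight 0."""
    n_images = len(histograms)
    weights = []
    for w in range(len(histograms[0])):
        n_w = sum(1 for h in histograms if h[w] > 0)
        weights.append(math.log(n_images / n_w) if n_w else 0.0)
    return weights

def rank_by_distance(query, histograms, idf):
    """Indices of the database histograms, closest (idf-weighted Euclidean) first."""
    def dist(h):
        return math.sqrt(sum(wt * (a - b) ** 2 for wt, a, b in zip(idf, query, h)))
    return sorted(range(len(histograms)), key=lambda i: dist(histograms[i]))

histograms = [[0.5, 0.5, 0.0], [0.5, 0.0, 0.5], [0.5, 0.25, 0.25]]
idf = idf_weights(histograms)
ranking = rank_by_distance([0.5, 0.5, 0.0], histograms, idf)
```

Word 0 occurs in every image, so its idf is 0 and it contributes nothing to the distance: a word that every image has does not help tell images apart.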

Code and Implementation

1. Generate the vocabulary

```python
# -*- coding: utf-8 -*-
import pickle

from PCV.imagesearch import vocabulary
from PCV.tools.imtools import get_imlist
from PCV.localdescriptors import sift

# Make sure the PCV package is on your Python path

# Get the list of images (change this to your own path)
imlist = get_imlist('D:/Visual_Studio_Code/data/first1000/')
nbr_images = len(imlist)

# Feature file names: replace the 3-letter image extension (e.g. "jpg") with "sift"
featlist = [imlist[i][:-3] + 'sift' for i in range(nbr_images)]

# Extract SIFT features for every image in the folder
for i in range(nbr_images):
    sift.process_image(imlist[i], featlist[i])

# Train the vocabulary: k = 1000 visual words, subsampling = 10
voc = vocabulary.Vocabulary('ukbenchtest')
voc.train(featlist, 1000, 10)

# Save the vocabulary
with open(r'D:\Visual_Studio_Code\data\first1000\vocabulary.pkl', 'wb') as f:
    pickle.dump(voc, f)

print('vocabulary is:', voc.name, voc.nbr_words)
```

2. Add the images to the database

```python
# -*- coding: utf-8 -*-
import pickle
from sqlite3 import dbapi2 as sqlite

from PCV.imagesearch import imagesearch
from PCV.localdescriptors import sift
from PCV.tools.imtools import get_imlist

# Make sure the PCV package is on your Python path

# Get the list of images (change this to your own path)
imlist = get_imlist('D:/Visual_Studio_Code/data/first1000/')
nbr_images = len(imlist)

# Feature file names produced in step 1
featlist = [imlist[i][:-3] + 'sift' for i in range(nbr_images)]

# Load the vocabulary
with open(r'D:\Visual_Studio_Code\data\first1000\vocabulary.pkl', 'rb') as f:
    voc = pickle.load(f)

# Create the index database and its tables
indx = imagesearch.Indexer('testImaAdd.db', voc)
indx.create_tables()

# Go through all images (at most 1000), project their features onto the vocabulary and insert them
for i in range(nbr_images)[:1000]:
    locs, descr = sift.read_features_from_file(featlist[i])
    indx.add_to_index(imlist[i], descr)

# Commit to the database
indx.db_commit()

# Check the result: number of indexed images, then the first row
con = sqlite.connect('testImaAdd.db')
print(con.execute('select count(filename) from imlist').fetchone())
print(con.execute('select * from imlist').fetchone())
```
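To see how a word-to-image inverted index can live in SQLite, here is a self-contained sketch on an in-memory database. The table and column names (`imwords`, `imid`, `wordid`) and both functions are hypothetical, chosen for illustration; PCV's `Indexer` creates its own tables, whose exact layout may differ.

```python
import sqlite3

def build_word_index(image_words):
    """Store (image id, word id) pairs in an in-memory SQLite table.

    image_words maps an integer image id to its list of visual word ids.
    The table layout is hypothetical, for illustration only.
    """
    con = sqlite3.connect(':memory:')
    con.execute('create table imwords (imid integer, wordid integer)')
    con.execute('create index idx_wordid on imwords (wordid)')  # fast lookup by word
    rows = [(imid, w) for imid, words in image_words.items() for w in set(words)]
    con.executemany('insert into imwords (imid, wordid) values (?, ?)', rows)
    con.commit()
    return con

def candidates_for_words(con, words):
    """Image ids sharing at least one word with the query, most shared words first."""
    words = sorted(set(words))
    if not words:
        return []
    placeholders = ','.join('?' * len(words))  # e.g. "?,?" for two words
    rows = con.execute(
        f'select imid, count(*) as shared from imwords where wordid in ({placeholders}) '
        'group by imid order by shared desc, imid',
        words,
    ).fetchall()
    return [imid for imid, _ in rows]

con = build_word_index({1: [0, 1], 2: [2], 3: [1, 2, 2]})
result = candidates_for_words(con, [1, 2])
```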

3. Query and re-rank the results

```python
# -*- coding: utf-8 -*-
import pickle

from PCV.localdescriptors import sift
from PCV.imagesearch import imagesearch
from PCV.geometry import homography
from PCV.tools.imtools import get_imlist

# Load the image list. Use the same path as when building the index:
# the query image is looked up in the database by its file name.
imlist = get_imlist('D:/Visual_Studio_Code/data/first1000/')
nbr_images = len(imlist)

# Load the feature file list
featlist = [imlist[i][:-3] + 'sift' for i in range(nbr_images)]

# Load the vocabulary
with open(r'D:\Visual_Studio_Code\data\first1000\vocabulary.pkl', 'rb') as f:
    voc = pickle.load(f)

src = imagesearch.Searcher('testImaAdd.db', voc)

# Index of the query image and number of results to return
q_ind = 0
nbr_results = 20

# Regular query (results sorted by Euclidean distance between histograms);
# each result is a database rowid, which starts at 1
res_reg = [w[1] for w in src.query(imlist[q_ind])[:nbr_results]]
print('top matches (regular):', res_reg)

# Load the features of the query image
q_locs, q_descr = sift.read_features_from_file(featlist[q_ind])
fp = homography.make_homog(q_locs[:, :2].T)

# RANSAC model for homography fitting
model = homography.RansacModel()
rank = {}

# Load the features of each candidate (res_reg[0] is normally the query itself)
for ndx in res_reg[1:]:
    # 'ndx' is a database rowid starting at 1, so subtract 1 to index featlist
    locs, descr = sift.read_features_from_file(featlist[ndx - 1])

    # Get matches
    matches = sift.match(q_descr, descr)
    ind = matches.nonzero()[0]
    ind2 = matches[ind]
    tp = homography.make_homog(locs[:, :2].T)

    # Compute the homography and count inliers; if there are not enough matches, use an empty list
    # (sic: PCV spells the keyword argument 'match_theshold')
    try:
        H, inliers = homography.H_from_ransac(fp[:, ind], tp[:, ind2], model, match_theshold=4)
    except Exception:
        inliers = []

    # Store the inlier count
    rank[ndx] = len(inliers)

# Sort so that candidates with the most inliers come first
sorted_rank = sorted(rank.items(), key=lambda t: t[1], reverse=True)
res_geom = [res_reg[0]] + [s[0] for s in sorted_rank]
print('top matches (homography):', res_geom)

# Show the results
imagesearch.plot_results(src, res_reg[:8])   # regular query
imagesearch.plot_results(src, res_geom[:8])  # re-ranked results
```
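The re-ranking counts how many SIFT matches agree with the homography estimated by RANSAC. The sketch below shows only the inlier test for an already known homography H, assuming a reprojection-error threshold in pixels that plays a role similar to `match_theshold`; it is not PCV's implementation (which also fits H inside the RANSAC loop), and both function names are hypothetical.

```python
def apply_homography(H, point):
    """Map (x, y) through a 3x3 homography given as nested lists."""
    x, y = point
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return u / w, v / w  # back from homogeneous to Cartesian coordinates

def count_inliers(H, src_points, dst_points, threshold):
    """Number of correspondences whose reprojection error under H is below threshold."""
    count = 0
    for p, q in zip(src_points, dst_points):
        px, py = apply_homography(H, p)
        if ((px - q[0]) ** 2 + (py - q[1]) ** 2) ** 0.5 < threshold:
            count += 1
    return count

# Usage: a pure translation by (5, -2); the third correspondence is a false match
H = [[1, 0, 5], [0, 1, -2], [0, 0, 1]]
src_points = [(0, 0), (10, 10), (3, 4)]
dst_points = [(5, -2), (15, 8), (30, 40)]
n_inliers = count_inliers(H, src_points, dst_points, threshold=4)
```

A candidate image that really shows the same object yields many geometrically consistent matches, so sorting by this count pushes true matches ahead of images that merely share visual words.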