This article uses two JS files; CDN resources for them are provided here.

Features:

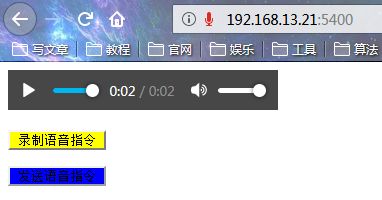

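1. Click "Record voice command" on the page, then start speaking. Keep the recording under 60 seconds.

2. When you finish, click "Send voice command". The system repeats what you said (in a synthesized voice, not your original voice).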
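The two steps above amount to a single round trip: the recording is recognized as text, a reply is produced, and the reply is synthesized into audio for the page to play. A minimal sketch of that flow follows. The three stand-in functions are hypothetical and exist only so the sketch runs without external services; with `echo` as the reply step the system repeats the input, and the back end's `to_tuling` could be passed in its place.

```python
from typing import Callable


def handle_voice_command(
    wav_path: str,
    audio2text: Callable[[str], str],
    reply: Callable[[str], str],
    text2audio: Callable[[str], str],
) -> str:
    """Run one recording through recognition, reply, and synthesis.

    Returns the name of the synthesized audio file for the page to play.
    """
    question = audio2text(wav_path)  # speech -> text
    answer = reply(question)         # text -> reply text
    return text2audio(answer)        # reply text -> audio file name


# Hypothetical stand-ins so the sketch runs without any external service.
def fake_audio2text(wav_path: str) -> str:
    return "hello"


def echo(text: str) -> str:
    return text


def fake_text2audio(text: str) -> str:
    return f"{text}.mp3"


result = handle_voice_command("command.wav", fake_audio2text, echo, fake_text2audio)
print(result)
```

Passing the steps in as arguments keeps the flow testable without a microphone or network access.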

Front-end code:

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

</head>

<body>

<audio controls autoplay id="player"></audio>

<p><button onclick="start_reco()" style="background-color: yellow">Record voice command</button></p>

<p><button onclick="stop_reco_audio()" style="background-color: blue">Send voice command</button></p>

</body>

<script type="text/javascript" src="{{ url_for('static', filename='js/jquery.js') }}"></script>

<script type="text/javascript" src="{{ url_for('static', filename='js/recorder.js') }}"></script>

<script type="text/javascript">

var reco = null;

var audio_context = new AudioContext();

var base_url = 'http://192.168.13.21:5400';

navigator.getUserMedia = (navigator.getUserMedia ||

navigator.webkitGetUserMedia ||

navigator.mozGetUserMedia ||

navigator.msGetUserMedia);

navigator.getUserMedia({audio: true}, create_stream, function (err) {

console.log(err)

});

function create_stream(user_media) {

var stream_input = audio_context.createMediaStreamSource(user_media);

reco = new Recorder(stream_input);

}

// Start recording the voice command

function start_reco() {

reco.record();

}

// Stop recording and send the voice command

function stop_reco_audio() {

reco.stop();

send_audio();

reco.clear();

}

function send_audio() {

reco.exportWAV(function (wav_file) {

var formdata = new FormData();

formdata.append("record", wav_file);

console.log(formdata);

$.ajax({

url: base_url+"/ai",

type: 'post',

processData: false,

contentType: false,

data: formdata,

dataType: 'json',

// Play the returned audio automatically

success: function (data) {

document.getElementById("player").src = base_url + "/get_audio/" + data.filename;

}

});

})

}

</script>

</html>

Back-end code:

app.py

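from flask import Flask, render_template, request, jsonify, send_file
from uuid import uuid4

import s4_5

app = Flask(__name__, static_folder='static')


@app.route("/")
def index():
    return render_template("index.html")


@app.route("/ai", methods=["POST"])
def ai():
    # 1. Save the uploaded recording
    audio = request.files.get("record")
    filename = f"{uuid4()}.wav"
    audio.save(filename)
    # 2. Convert the recording to PCM and send it to Baidu for speech recognition
    q_text = s4_5.audio2text(filename)
    # 3. Pass the recognized question to Tuling (or your own handler) to get an answer
    a_text = s4_5.to_tuling(q_text)
    # 4. Send the answer to Baidu speech synthesis to produce an audio file
    a_file = s4_5.text2audio(a_text)
    # 5. Return the audio file name so the front end can play it
    return jsonify({"filename": a_file})


# The <filename> converter supplies the argument that get_audio() expects
@app.route("/get_audio/<filename>")
def get_audio(filename):
    return send_file(filename)


if __name__ == '__main__':
    # Note: the host and port must match base_url in the front end
    app.run("0.0.0.0", 5400, debug=True)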
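Because `get_audio` hands the requested name to `send_file`, a request for a name such as `app.py` could expose files other than the synthesized audio. One possible guard, sketched under the assumption that every playable file is named by `text2audio` as `f"{time.time()}.mp3"`, is to accept only names of that shape. The helper `safe_audio_name` and its pattern are hypothetical and not part of the original code.

```python
import re
from typing import Optional

# Assumption: playable files look like "1546410012.3456.mp3",
# i.e. the string form of time.time() followed by ".mp3".
_AUDIO_NAME = re.compile(r"[0-9]+(\.[0-9]+)?\.mp3")


def safe_audio_name(filename: str) -> Optional[str]:
    """Return the name unchanged if it has the expected shape, else None."""
    return filename if _AUDIO_NAME.fullmatch(filename) else None


print(safe_audio_name("1546410012.3456.mp3"))
print(safe_audio_name("app.py"))
```

Inside the route, a `None` result could be answered with Flask's `abort(404)` instead of calling `send_file`.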

s4_5.py

from aip import AipSpeech, AipNlp
import time, os

APP_ID = '15422825'
APP_KEY = 'DhXGtWHYMujMVZZGRI3a7rzb'
SECRET_KEY = 'PbyUvTL31fImGthOOIP5ZbbtEOGwGOoT'

# Authenticate with Baidu to verify that this is a legitimate user and application.
# AipSpeech is the Baidu speech client; once authentication succeeds, `client` is ready to use.
client = AipSpeech(APP_ID, APP_KEY, SECRET_KEY)
# Natural language processing client
nlp = AipNlp(APP_ID, APP_KEY, SECRET_KEY)


# 1. Convert the recorded audio file to PCM format
def get_file_content(filePath):
    # Run ffmpeg as a shell command via os.system()
    os.system(f"ffmpeg -y -i {filePath} -acodec pcm_s16le -f s16le -ac 1 -ar 16000 {filePath}.pcm")
    with open(f"{filePath}.pcm", 'rb') as fp:
        return fp.read()


# 2. Convert audio to text
def audio2text(filepath):
    # Recognize a local file
    res = client.asr(get_file_content(filepath), 'pcm', 16000, {
        # dev_pid takes effect when lan is not set; if neither is set, the default
        # is 1537 (Mandarin, input-method model). See the table at the start of
        # this section for the dev_pid values.
        'dev_pid': 1536,
    })
    # Return the first recognition result as text
    return res.get("result")[0]


# 3. Convert text to audio
def text2audio(text):
    # Name the synthesized audio file
    filename = f"{time.time()}.mp3"
    # Synthesize the speech
    result = client.synthesis(text, 'zh', 1, {
        'vol': 5,
        "spd": 3,
        "pit": 7,
        "per": 4
    })
    # On success the result is the audio as bytes; on failure it is a dict with an error code
    if not isinstance(result, dict):
        with open(filename, 'wb') as f:
            f.write(result)
    return filename


# 4. Tuling chatbot
def to_tuling(text):
    # Import requests to send the POST request
    import requests
    args = {
        "reqType": 0,
        "perception": {
            "inputText": {
                "text": text
            }
        },
        "userInfo": {
            "apiKey": "eaf3daedeb374564bfe9db10044bc20b",
            "userId": "6789"
        }
    }
    # Tuling chatbot API endpoint
    url = "http://openapi.tuling123.com/openapi/api/v2"
    # Send the request to Tuling
    res = requests.post(url, json=args)
    # Take the reply text from the first result
    text = res.json().get("results")[0].get("values").get("text")
    # Return the reply text
    return text
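Two spots in `s4_5.py` are fragile: the ffmpeg command is built as a shell string, and `res.get("result")[0]` raises an exception when recognition fails. The sketch below shows one way to harden both. It assumes that a failed recognition returns a dict with no usable `result` list, and the helper names `build_ffmpeg_args`, `convert_to_pcm`, and `first_result` are hypothetical. Only standard-library calls are used.

```python
import subprocess
from typing import List, Optional


def build_ffmpeg_args(src: str) -> List[str]:
    """Build the same ffmpeg conversion as an argument list (no shell parsing)."""
    return [
        "ffmpeg", "-y", "-i", src,
        "-acodec", "pcm_s16le", "-f", "s16le",
        "-ac", "1", "-ar", "16000",
        f"{src}.pcm",
    ]


def convert_to_pcm(src: str) -> str:
    """Run ffmpeg and return the PCM file path; raises CalledProcessError on failure."""
    subprocess.run(build_ffmpeg_args(src), check=True)
    return f"{src}.pcm"


def first_result(res: dict) -> Optional[str]:
    """Return the first recognized sentence, or None if there is none."""
    results = res.get("result") or []
    return results[0] if results else None


print(build_ffmpeg_args("demo.wav")[-1])
print(first_result({"err_no": 3301}))
```

With an argument list, a file name containing spaces or shell metacharacters reaches ffmpeg as a single argument, and `audio2text` could return a fallback message when `first_result` gives `None`.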