Calling Caffe source code from MFC/Qt (Part 1): building the Caffe source into a dynamic link library (DLL)

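I'm a first-year master's student. I recently wanted to build an MFC interface around a model I trained with Caffe. You load an image and click "Predict". The program then calls the prediction/classification function to run the test and shows the final class in a MessageBox.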

After some research I found two approaches. First: call the compiled Caffe source directly (the source used here is classification.cpp);

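Second: build the Caffe source into a DLL and call it from other projects. Caffe's source has so many dependencies that the slightest mistake breaks the build, so this time I went with the DLL approach.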

1. Win7 + VS2013: create a new Win32 console application project, as shown below:

2. First set the project configuration to Release and the platform to x64. Then add two .h files and one .cpp file:

(1) I export the class with dllexport, using conditional compilation. Add the DLL_EXPORTS macro to the preprocessor definitions, together with _SCL_SECURE_NO_WARNINGS.

Tip: the .h file:

```cpp
#ifndef CAFFE_CLASSIFY_H_
#define CAFFE_CLASSIFY_H_

#define CPU_ONLY 1

#include <caffe/caffe.hpp>
#include <opencv2/core/core.hpp>
#include <opencv2/highgui/highgui.hpp>
#include <opencv2/imgproc/imgproc.hpp>
#include <algorithm>
#include <iosfwd>
#include <memory>
#include <string>
#include <utility>
#include <vector>

//#pragma once

using namespace caffe;
using std::string;
using namespace std;
using boost::shared_ptr;

// Building the DLL (DLL_EXPORTS defined): export the class.
// Using the DLL from another project: import it.
#ifdef DLL_EXPORTS
#define DLL_EXPORTS_API __declspec(dllexport)
#else
#define DLL_EXPORTS_API __declspec(dllimport)
#endif

/* Pair (label, confidence) representing a prediction. */
typedef std::pair<string, float> Prediction;

class DLL_EXPORTS_API Classifier {
 public:
  Classifier(const string& model_file,
             const string& trained_file,
             const string& mean_file,
             const string& label_file);

  std::vector<Prediction> Classify(const cv::Mat& img, int N = 5);

  ~Classifier();

 private:
  void SetMean(const string& mean_file);

  std::vector<float> Predict(const cv::Mat& img);

  void WrapInputLayer(std::vector<cv::Mat>* input_channels);

  void Preprocess(const cv::Mat& img,
                  std::vector<cv::Mat>* input_channels);

 private:
  boost::shared_ptr<Net<float> > net_;
  cv::Size input_geometry_;
  int num_channels_;
  cv::Mat mean_;
  std::vector<string> labels_;
};

#endif
```

(2) This file deals with the layers: add whichever ones are missing. I first copied it from a blog post, and at runtime got an "Unknown Layer" error. Then I remembered that my network uses a Concat layer, so I added it myself. Pay special attention: for Concat a plain `extern` is enough, and `REGISTER_LAYER_CLASS(Concat);` is not needed.

Tip: the layer .h file:

```cpp
#ifndef LAYER_H
#define LAYER_H

#include "caffe/common.hpp"
#include "caffe/layers/input_layer.hpp"
#include "caffe/layers/inner_product_layer.hpp"
#include "caffe/layers/dropout_layer.hpp"
#include "caffe/layers/conv_layer.hpp"
#include "caffe/layers/relu_layer.hpp"
#include "caffe/layers/pooling_layer.hpp"
#include "caffe/layers/lrn_layer.hpp"
#include "caffe/layers/softmax_layer.hpp"
#include "caffe/layers/concat_layer.hpp"

namespace caffe
{
// One entry per layer type used by the network's prototxt;
// add any layer your own network needs.
extern INSTANTIATE_CLASS(InputLayer);
extern INSTANTIATE_CLASS(InnerProductLayer);
extern INSTANTIATE_CLASS(DropoutLayer);
extern INSTANTIATE_CLASS(ConcatLayer);  // Concat: extern only, no REGISTER_LAYER_CLASS(Concat)
extern INSTANTIATE_CLASS(ConvolutionLayer);
REGISTER_LAYER_CLASS(Convolution);
extern INSTANTIATE_CLASS(ReLULayer);
REGISTER_LAYER_CLASS(ReLU);
extern INSTANTIATE_CLASS(PoolingLayer);
REGISTER_LAYER_CLASS(Pooling);
extern INSTANTIATE_CLASS(LRNLayer);
REGISTER_LAYER_CLASS(LRN);
extern INSTANTIATE_CLASS(SoftmaxLayer);
REGISTER_LAYER_CLASS(Softmax);
//extern INSTANTIATE_CLASS(ConcatLayer);
//REGISTER_LAYER_CLASS(Concat);
}

#endif
```
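Why does a missing layer show up as "Unknown Layer" at runtime rather than as a link error? In Caffe, `REGISTER_LAYER_CLASS` / `REGISTER_LAYER_CREATOR` define static registrar objects that insert a creator into a name-keyed registry when the module loads, and `Net` builds each layer by looking its type string up in that registry. If a layer's registrar never runs, the lookup fails. This is commonly reported when libcaffe is linked as a static library and the linker drops object files that nothing refers to. The toy model below imitates that mechanism with hypothetical names (`Layer`, `Registerer`, `CreateLayer`, `MakeConvolution`); it is a sketch for understanding, not Caffe's actual code.

```cpp
#include <functional>
#include <map>
#include <memory>
#include <stdexcept>
#include <string>
#include <utility>

// Hypothetical stand-in for a layer base class (not caffe::Layer).
struct Layer {
  virtual ~Layer() {}
  virtual std::string type() const = 0;
};

typedef std::function<std::unique_ptr<Layer>()> Creator;

// Name -> creator map. A function-local static is constructed on first use,
// so registrars can safely call it during static initialization.
std::map<std::string, Creator>& Registry() {
  static std::map<std::string, Creator> registry;
  return registry;
}

// Registrar object: its constructor runs during static initialization and
// inserts the creator, similar in spirit to a REGISTER_LAYER_CLASS expansion.
struct Registerer {
  Registerer(const std::string& type, Creator creator) {
    Registry()[type] = std::move(creator);
  }
};

// Look a layer type up by name; an unregistered type fails at runtime.
std::unique_ptr<Layer> CreateLayer(const std::string& type) {
  std::map<std::string, Creator>::iterator it = Registry().find(type);
  if (it == Registry().end())
    throw std::runtime_error("Unknown layer type: " + type);
  return it->second();
}

struct ConvolutionLayer : Layer {
  std::string type() const override { return "Convolution"; }
};

std::unique_ptr<Layer> MakeConvolution() {
  return std::unique_ptr<Layer>(new ConvolutionLayer);
}

// This registrar exists, so "Convolution" can be created by name.
static Registerer g_convolution_registerer("Convolution", MakeConvolution);

// There is deliberately no registrar for "Concat": this mimics a registrar
// that never ran, e.g. because its object file was not linked in.
```

`CreateLayer("Convolution")` succeeds, while `CreateLayer("Concat")` throws "Unknown layer type: Concat". This is the same symptom as a prototxt that uses a layer whose registration never made it into the DLL.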

(3) Copy the source of classification.cpp into the .cpp file, and remember to add the header includes;

The .cpp file:

```cpp
#include "Header.h"
#include "SingleDll.h"

Classifier::Classifier(const string& model_file,
                       const string& trained_file,
                       const string& mean_file,
                       const string& label_file) {
#ifdef CPU_ONLY
  Caffe::set_mode(Caffe::CPU);
#else
  Caffe::set_mode(Caffe::GPU);
#endif
  //Caffe::set_mode(Caffe::GPU);

  /* Load the network. */
  net_.reset(new Net<float>(model_file, TEST));
  net_->CopyTrainedLayersFrom(trained_file);

  CHECK_EQ(net_->num_inputs(), 1) << "Network should have exactly one input.";
  CHECK_EQ(net_->num_outputs(), 1) << "Network should have exactly one output.";

  Blob<float>* input_layer = net_->input_blobs()[0];
  num_channels_ = input_layer->channels();
  CHECK(num_channels_ == 3 || num_channels_ == 1)
    << "Input layer should have 1 or 3 channels.";
  input_geometry_ = cv::Size(input_layer->width(), input_layer->height());

  /* Load the binaryproto mean file. */
  SetMean(mean_file);

  /* Load labels. */
  std::ifstream labels(label_file.c_str());
  CHECK(labels) << "Unable to open labels file " << label_file;
  string line;
  while (std::getline(labels, line))
    labels_.push_back(string(line));

  Blob<float>* output_layer = net_->output_blobs()[0];
  CHECK_EQ(labels_.size(), output_layer->channels())
    << "Number of labels is different from the output layer dimension.";
}

static bool PairCompare(const std::pair<float, int>& lhs,
                        const std::pair<float, int>& rhs) {
  return lhs.first > rhs.first;
}

/* Return the indices of the top N values of vector v. */
static std::vector<int> Argmax(const std::vector<float>& v, int N) {
  std::vector<std::pair<float, int> > pairs;
  for (size_t i = 0; i < v.size(); ++i)
    pairs.push_back(std::make_pair(v[i], static_cast<int>(i)));
  std::partial_sort(pairs.begin(), pairs.begin() + N, pairs.end(), PairCompare);

  std::vector<int> result;
  for (int i = 0; i < N; ++i)
    result.push_back(pairs[i].second);
  return result;
}

/* Return the top N predictions. */
std::vector<Prediction> Classifier::Classify(const cv::Mat& img, int N) {
  std::vector<float> output = Predict(img);

  // labels_.size() is size_t and N is int, so the type is given explicitly.
  N = std::min<int>(labels_.size(), N);
  std::vector<int> maxN = Argmax(output, N);
  std::vector<Prediction> predictions;
  for (int i = 0; i < N; ++i) {
    int idx = maxN[i];
    predictions.push_back(std::make_pair(labels_[idx], output[idx]));
  }

  return predictions;
}

/* Load the mean file in binaryproto format. */
void Classifier::SetMean(const string& mean_file) {
  BlobProto blob_proto;
  ReadProtoFromBinaryFileOrDie(mean_file.c_str(), &blob_proto);

  /* Convert from BlobProto to Blob<float> */
  Blob<float> mean_blob;
  mean_blob.FromProto(blob_proto);
  CHECK_EQ(mean_blob.channels(), num_channels_)
    << "Number of channels of mean file doesn't match input layer.";

  /* The format of the mean file is planar 32-bit float BGR or grayscale. */
  std::vector<cv::Mat> channels;
  float* data = mean_blob.mutable_cpu_data();
  for (int i = 0; i < num_channels_; ++i) {
    /* Extract an individual channel. */
    cv::Mat channel(mean_blob.height(), mean_blob.width(), CV_32FC1, data);
    channels.push_back(channel);
    data += mean_blob.height() * mean_blob.width();
  }

  /* Merge the separate channels into a single image. */
  cv::Mat mean;
  cv::merge(channels, mean);

  /* Compute the global mean pixel value and create a mean image
   * filled with this value. */
  cv::Scalar channel_mean = cv::mean(mean);
  mean_ = cv::Mat(input_geometry_, mean.type(), channel_mean);
}

std::vector<float> Classifier::Predict(const cv::Mat& img) {
  Blob<float>* input_layer = net_->input_blobs()[0];
  input_layer->Reshape(1, num_channels_,
                       input_geometry_.height, input_geometry_.width);
  /* Forward dimension change to all layers. */
  net_->Reshape();

  std::vector<cv::Mat> input_channels;
  WrapInputLayer(&input_channels);

  Preprocess(img, &input_channels);

  net_->Forward();

  /* Copy the output layer to a std::vector */
  Blob<float>* output_layer = net_->output_blobs()[0];
  const float* begin = output_layer->cpu_data();
  const float* end = begin + output_layer->channels();
  return std::vector<float>(begin, end);
}

/* Wrap the input layer of the network in separate cv::Mat objects
 * (one per channel). This way we save one memcpy operation and we
 * don't need to rely on cudaMemcpy2D. The last preprocessing
 * operation will write the separate channels directly to the input
 * layer. */
void Classifier::WrapInputLayer(std::vector<cv::Mat>* input_channels) {
  Blob<float>* input_layer = net_->input_blobs()[0];

  int width = input_layer->width();
  int height = input_layer->height();
  float* input_data = input_layer->mutable_cpu_data();
  for (int i = 0; i < input_layer->channels(); ++i) {
    cv::Mat channel(height, width, CV_32FC1, input_data);
    input_channels->push_back(channel);
    input_data += width * height;
  }
}

void Classifier::Preprocess(const cv::Mat& img,
                            std::vector<cv::Mat>* input_channels) {
  /* Convert the input image to the input image format of the network. */
  cv::Mat sample;
  if (img.channels() == 3 && num_channels_ == 1)
    cv::cvtColor(img, sample, cv::COLOR_BGR2GRAY);
  else if (img.channels() == 4 && num_channels_ == 1)
    cv::cvtColor(img, sample, cv::COLOR_BGRA2GRAY);
  else if (img.channels() == 4 && num_channels_ == 3)
    cv::cvtColor(img, sample, cv::COLOR_BGRA2BGR);
  else if (img.channels() == 1 && num_channels_ == 3)
    cv::cvtColor(img, sample, cv::COLOR_GRAY2BGR);
  else
    sample = img;

  cv::Mat sample_resized;
  if (sample.size() != input_geometry_)
    cv::resize(sample, sample_resized, input_geometry_);
  else
    sample_resized = sample;

  cv::Mat sample_float;
  if (num_channels_ == 3)
    sample_resized.convertTo(sample_float, CV_32FC3);
  else
    sample_resized.convertTo(sample_float, CV_32FC1);

  cv::Mat sample_normalized;
  cv::subtract(sample_float, mean_, sample_normalized);

  /* This operation will write the separate BGR planes directly to the
   * input layer of the network because it is wrapped by the cv::Mat
   * objects in input_channels. */
  cv::split(sample_normalized, *input_channels);

  CHECK(reinterpret_cast<float*>(input_channels->at(0).data)
        == net_->input_blobs()[0]->cpu_data())
    << "Input channels are not wrapping the input layer of the network.";
}

Classifier::~Classifier()
{}
```
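The top-N logic in `Classify` is easy to break when porting. `Classify` clamps `N` to the number of labels before calling `Argmax`, because `pairs.begin() + N` past `end()` would make `std::partial_sort` undefined behaviour (for example, the default `N = 5` with a two-class model). The explicit `std::min<int>` is needed because `labels_.size()` is `size_t` while `N` is `int`, so plain `std::min` cannot deduce a single type. The sketch below copies `Argmax` so it can be tried without Caffe or OpenCV; `TopN` is a hypothetical wrapper standing in for the clamp in `Classify`.

```cpp
#include <algorithm>
#include <cstddef>
#include <utility>
#include <vector>

// Larger score first, as in PairCompare from classification.cpp.
static bool PairCompare(const std::pair<float, int>& lhs,
                        const std::pair<float, int>& rhs) {
  return lhs.first > rhs.first;
}

// Indices of the top N values of v. Requires 0 <= N <= v.size().
static std::vector<int> Argmax(const std::vector<float>& v, int N) {
  std::vector<std::pair<float, int> > pairs;
  for (std::size_t i = 0; i < v.size(); ++i)
    pairs.push_back(std::make_pair(v[i], static_cast<int>(i)));
  // Only the first N elements end up sorted; the rest stay unordered.
  std::partial_sort(pairs.begin(), pairs.begin() + N, pairs.end(), PairCompare);

  std::vector<int> result;
  for (int i = 0; i < N; ++i)
    result.push_back(pairs[i].second);
  return result;
}

// Hypothetical helper mirroring Classify: clamp N, then take the top N.
static std::vector<int> TopN(const std::vector<float>& scores, int N) {
  N = std::min<int>(static_cast<int>(scores.size()), N);
  return Argmax(scores, N);
}
```

With scores `{0.1f, 0.7f, 0.05f, 0.15f}`, `TopN(scores, 2)` gives indices `1` and `3`, and `TopN(scores, 5)` safely returns all four indices instead of reading past the end.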

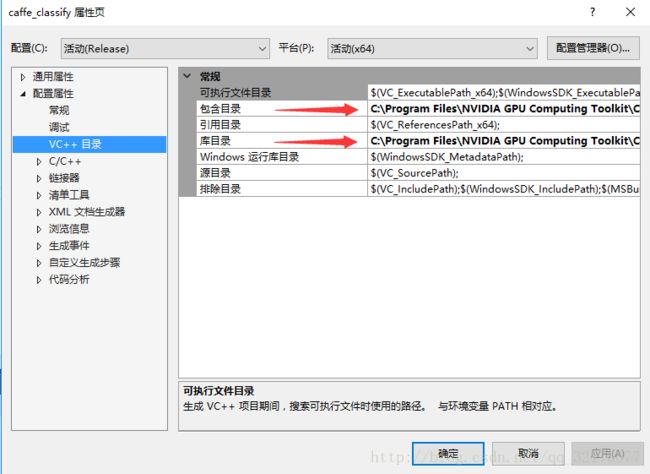

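3. Open the Property Manager and add the Caffe dependencies. You may then get various "cannot open XXX.lib" errors. Don't panic: they all come from carelessly wrong paths, so pay attention to the details.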

Include directories:

D:\caffe\NugetPackages\gflags.2.1.2.1\build\native\include

D:\caffe\NugetPackages\glog.0.3.3.0\build\native\include

D:\caffe\NugetPackages\protobuf-v120.2.6.1\build\native\include

D:\caffe\NugetPackages\OpenCV.2.4.10\build\native\include

D:\caffe\NugetPackages\OpenBLAS.0.2.14.1\lib\native\include

D:\caffe\NugetPackages\boost.1.59.0.0\lib\native\include

You also need to add the include path generated by the Caffe build. It is best to copy it into this project's folder,

e.g. D:\caffe-class\include

Library directories:

E:\caffe\NugetPackages\OpenCV.2.4.10\build\native\lib\x64\v120\Release

E:\caffe\NugetPackages\gflags.2.1.2.1\build\native\x64\v120\dynamic\Lib

E:\caffe\NugetPackages\glog.0.3.3.0\build\native\lib\x64\v120\Release\dynamic

E:\caffe\NugetPackages\OpenBLAS.0.2.14.1\lib\native\lib\x64

E:\caffe\NugetPackages\protobuf-v120.2.6.1\build\native\lib\x64\v120\Release

E:\caffe\NugetPackages\LevelDB-vc120.1.2.0.0\build\native\lib\x64\v120\Release

E:\caffe\NugetPackages\hdf5-v120-complete.1.8.15.2\lib\native\lib\x64

E:\caffe\NugetPackages\boost_date_time-vc120.1.59.0.0\lib\native\address-model-64\lib

E:\caffe\NugetPackages\boost_filesystem-vc120.1.59.0.0\lib\native\address-model-64\lib

E:\caffe\NugetPackages\boost_system-vc120.1.59.0.0\lib\native\address-model-64\lib

E:\caffe\NugetPackages\boost_thread-vc120.1.59.0.0\lib\native\address-model-64\lib

E:\caffe\NugetPackages\boost_chrono-vc120.1.59.0.0\lib\native\address-model-64\lib

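Note: you also need to add the Release folder generated by the Caffe build. Tip: lmdb is missing from the library directories above; don't forget to add it yourself.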

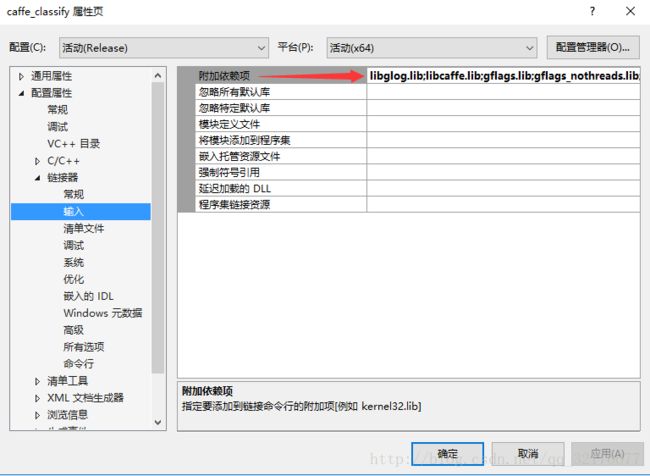
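Most "cannot open XXX.lib" errors come down to a directory that does not exist as typed. For instance, the include directories above are on D:\ while the library directories are on E:\, so make sure that matches where your NuGet packages actually live. One quick way to find bad entries is to paste the directory list into a small checker. The helper below (`MissingDirectories`) is hypothetical and not part of the project. It needs a C++17 compiler for `std::filesystem`, so it would be built as a separate tool with a newer Visual Studio or GCC/Clang rather than inside the VS2013 project.

```cpp
#include <filesystem>
#include <string>
#include <system_error>
#include <vector>

namespace fs = std::filesystem;

// Return the entries of `dirs` that do not name an existing directory.
// Uses the non-throwing overload, so one unreadable path does not abort the scan.
std::vector<std::string> MissingDirectories(const std::vector<std::string>& dirs) {
  std::vector<std::string> missing;
  for (const std::string& dir : dirs) {
    std::error_code ec;
    if (!fs::is_directory(fs::path(dir), ec))
      missing.push_back(dir);
  }
  return missing;
}
```

Feed it the include and library directories exactly as entered in the property pages. Any path it returns is a likely cause of the "cannot open" error.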

Additional dependencies:

libglog.lib

libcaffe.lib

gflags.lib

gflags_nothreads.lib

hdf5.lib

hdf5_hl.lib

libprotobuf.lib

libopenblas.dll.a

Shlwapi.lib

LevelDb.lib

lmdb.lib

opencv_core2410.lib

opencv_highgui2410.lib

opencv_imgproc2410.lib

opencv_video2410.lib

opencv_objdetect2410.lib

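Note: I built in CPU-only mode (with the CPU_ONLY macro defined). If you use the GPU, you must add the corresponding CUDA/cuDNN paths, as well as these dependencies!!

cublas.lib

cuda.lib

curand.lib

cudart.lib

cudnn.lib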
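You may prefer to keep the dependency list in source rather than in the property pages. MSVC's `#pragma comment(lib, "...")` directive asks the linker to pull in a library, much like an entry under Linker > Input > Additional Dependencies. The sketch below assumes it sits in the DLL's .cpp after the header includes, so that `CPU_ONLY` is already defined, and that the library directories above are still configured. I have not checked that every entry, in particular `libopenblas.dll.a`, is accepted this way.

```cpp
// MSVC only: link the same dependencies listed above from source.
// Library directories must still be set in the project properties.
#pragma comment(lib, "libglog.lib")
#pragma comment(lib, "libcaffe.lib")
#pragma comment(lib, "gflags.lib")
#pragma comment(lib, "gflags_nothreads.lib")
#pragma comment(lib, "hdf5.lib")
#pragma comment(lib, "hdf5_hl.lib")
#pragma comment(lib, "libprotobuf.lib")
#pragma comment(lib, "libopenblas.dll.a")
#pragma comment(lib, "Shlwapi.lib")
#pragma comment(lib, "LevelDb.lib")
#pragma comment(lib, "lmdb.lib")
#pragma comment(lib, "opencv_core2410.lib")
#pragma comment(lib, "opencv_highgui2410.lib")
#pragma comment(lib, "opencv_imgproc2410.lib")
#pragma comment(lib, "opencv_video2410.lib")
#pragma comment(lib, "opencv_objdetect2410.lib")

#ifndef CPU_ONLY
// GPU build: CUDA and cuDNN libraries.
#pragma comment(lib, "cublas.lib")
#pragma comment(lib, "cuda.lib")
#pragma comment(lib, "curand.lib")
#pragma comment(lib, "cudart.lib")
#pragma comment(lib, "cudnn.lib")
#endif
```

The upside is that the CPU/GPU switch follows the same `CPU_ONLY` macro the code already uses. The downside is that the library names are now spread across source and project settings.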

4. Build the project. The dll, lib and other files are generated, but no ink file; I don't know why, but it doesn't matter.

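That wraps up this first part on generating the DLL. Once it is built, you can test whether it works. I tested it myself first, and only then created an MFC project to call it.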

Next time I'll cover calling it from MFC, i.e. the concrete steps for using the caffemodel from an MFC application.

If you need help, my QQ is 1443563995. Corrections are very welcome!