AlexNet

- Introduction

- Overfitting and Dropout

- Network Architecture

- Flower Dataset

- Code

  - split_data.py

  - model.py

  - tran.py

  - predict.py

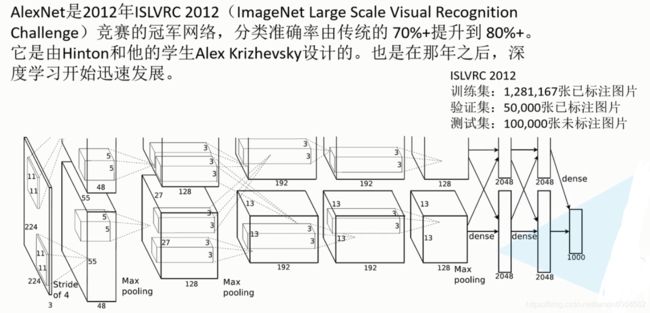

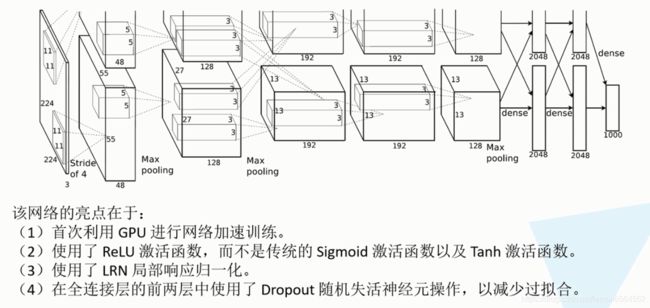

Introduction

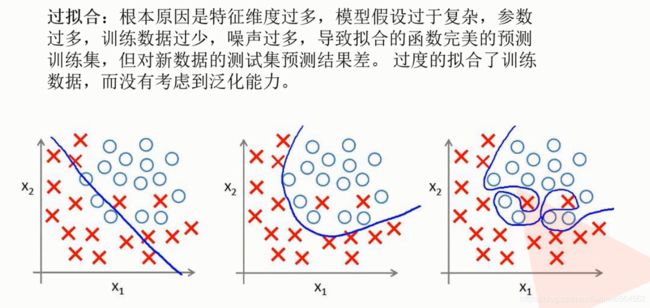

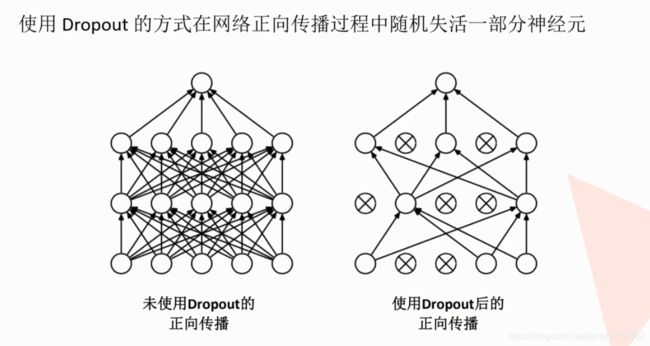

Overfitting and Dropout

Network Architecture

Flower Dataset

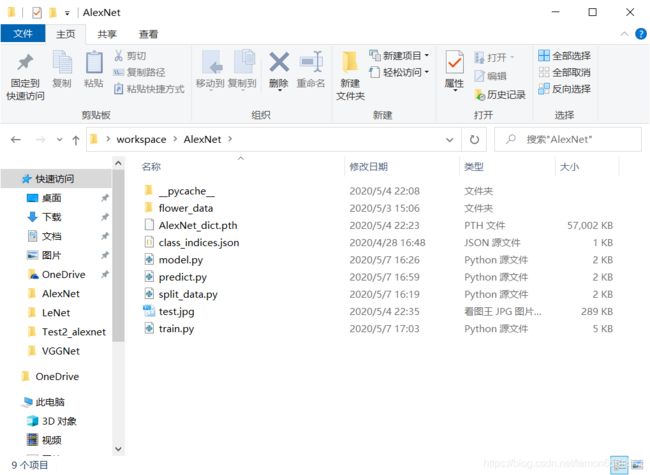

Code

split_data.py

Split the dataset into a training set (train) and a validation set (val) at a 9:1 ratio.

```python
import os
import random
from shutil import copy


def mkfile(file):
    """Create the directory if it does not already exist."""
    if not os.path.exists(file):
        os.makedirs(file)


# 'flower_data/flower_photos' sits in the same folder as this script:
# |-- split_data.py
# |-- flower_data
#     |-- flower_photos
file = 'flower_data/flower_photos'
# Every entry is treated as one class; names containing ".txt" are skipped.
flower_class = [cla for cla in os.listdir(file) if ".txt" not in cla]

mkfile('flower_data/train')
for cla in flower_class:
    mkfile('flower_data/train/' + cla)

mkfile('flower_data/val')
for cla in flower_class:
    mkfile('flower_data/val/' + cla)

split_rate = 0.1  # fraction of each class copied to val

for cla in flower_class:
    cla_path = file + '/' + cla + '/'
    images = os.listdir(cla_path)
    num = len(images)
    # Randomly pick the validation images for this class.
    eval_index = random.sample(images, k=int(num * split_rate))
    for index, image in enumerate(images):
        if image in eval_index:
            image_path = cla_path + image
            new_path = 'flower_data/val/' + cla
            copy(image_path, new_path)
        else:
            image_path = cla_path + image
            new_path = 'flower_data/train/' + cla
            copy(image_path, new_path)
        print("\r[{}] processing [{}/{}]".format(cla, index + 1, num),
              end="")  # processing bar
    print()

print("processing done!")
```

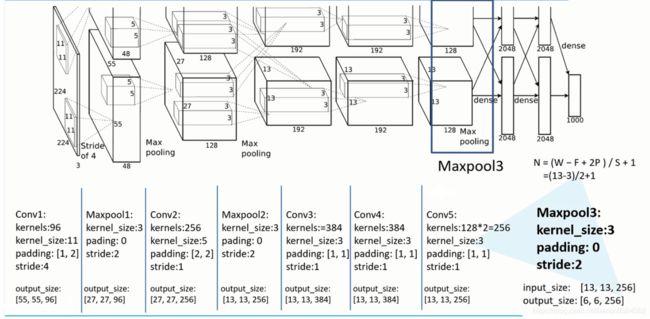
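The 9:1 split can be checked on a plain list of file names before any files are copied. The sketch below is only an illustration and not part of the original script: `split_names` and its `seed` parameter are hypothetical additions, and the file names are made up. It uses the same `random.sample` call with `k=int(num * split_rate)` as `split_data.py`.

```python
import random


def split_names(names, split_rate=0.1, seed=0):
    """Return (train, val) lists; val holds int(len(names) * split_rate) items.

    Hypothetical helper for illustration; the seed makes the split repeatable.
    """
    rng = random.Random(seed)
    val = set(rng.sample(names, k=int(len(names) * split_rate)))
    train = [name for name in names if name not in val]
    return train, sorted(val)


if __name__ == "__main__":
    # Made-up file names standing in for one class directory.
    names = ["img_{:03d}.jpg".format(i) for i in range(633)]
    train, val = split_names(names)
    print(len(train), len(val))  # 570 63
```

Because `int()` truncates, a class with 633 images sends 63 of them to val rather than 63.3 rounded in either direction, so the ratio is only approximately 9:1.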

model.py

```python
import torch
import torch.nn as nn


class AlexNet(nn.Module):
    def __init__(self, num_classes):
        super(AlexNet, self).__init__()
        self.features = nn.Sequential(
            nn.Conv2d(3, 48, kernel_size=11, stride=4, padding=2),  # input(3, 224, 224) output(48, 55, 55)
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),                  # (48, 27, 27)
            nn.Conv2d(48, 128, kernel_size=5, padding=2),           # (128, 27, 27)
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),                  # (128, 13, 13)
            nn.Conv2d(128, 192, kernel_size=3, padding=1),          # (192, 13, 13)
            nn.ReLU(inplace=True),
            nn.Conv2d(192, 192, kernel_size=3, padding=1),          # (192, 13, 13)
            nn.ReLU(inplace=True),
            nn.Conv2d(192, 128, kernel_size=3, padding=1),          # (128, 13, 13)
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),                  # (128, 6, 6)
        )
        self.classifier = nn.Sequential(
            nn.Dropout(p=0.5),
            nn.Linear(128 * 6 * 6, 2048),
            nn.ReLU(inplace=True),
            nn.Dropout(p=0.5),
            nn.Linear(2048, 2048),
            nn.ReLU(inplace=True),
            nn.Linear(2048, num_classes),
        )

    def forward(self, x):
        x = self.features(x)
        x = torch.flatten(x, start_dim=1)
        x = self.classifier(x)
        return x
```
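The shape comments in `model.py` follow from the usual output-size formula `floor((size + 2 * padding - kernel) / stride) + 1`, which PyTorch applies to both `Conv2d` and `MaxPool2d` by default. As a minimal sketch for checking those comments without installing PyTorch, the code below traces only the spatial size through the layers. The names `LAYERS`, `out_size`, and `trace_sizes` are hypothetical and introduced here for illustration.

```python
# (name, kernel, stride, padding) for each conv/pool layer in `features`
LAYERS = [
    ("conv1", 11, 4, 2),
    ("pool1", 3, 2, 0),
    ("conv2", 5, 1, 2),
    ("pool2", 3, 2, 0),
    ("conv3", 3, 1, 1),
    ("conv4", 3, 1, 1),
    ("conv5", 3, 1, 1),
    ("pool3", 3, 2, 0),
]


def out_size(size, kernel, stride, padding):
    """Spatial output size of a conv or pool layer (floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_sizes(size=224):
    """Return the spatial size after each layer in LAYERS."""
    sizes = []
    for _name, kernel, stride, padding in LAYERS:
        size = out_size(size, kernel, stride, padding)
        sizes.append(size)
    return sizes


if __name__ == "__main__":
    sizes = trace_sizes(224)
    print(sizes)                 # [55, 27, 27, 13, 13, 13, 13, 6]
    print(128 * sizes[-1] ** 2)  # 4608, matching nn.Linear(128 * 6 * 6, 2048)
```

The final 6 x 6 map with 128 channels is what fixes the input width of the first `Linear` layer, so a different input resolution would require changing that layer too.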

tran.py

```python
import json
import time

import torch
import torchvision as tv
import torchvision.transforms as transforms

from model import AlexNet

data_transform = {
    "train":
    transforms.Compose([
        transforms.RandomResizedCrop(224),  # random crop, resized to 224 x 224
        # Random horizontal flip: augmentation makes training harder
        # and helps the network generalize.
        transforms.RandomHorizontalFlip(),
        # Converts a PIL Image or numpy.ndarray (H x W x C) in the range
        # [0, 255] to a torch.FloatTensor of shape (C x H x W) in the range [0.0, 1.0].
        transforms.ToTensor(),
        # Given mean (M1,...,Mn) and std (S1,...,Sn) for n channels,
        # output[channel] = (input[channel] - mean[channel]) / std[channel]
        transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))
    ]),
    "val":
    transforms.Compose([
        # Resize to exactly 224 x 224. The size must be a tuple:
        # Resize(224) would only scale the shorter edge to 224.
        transforms.Resize((224, 224)),
        transforms.ToTensor(),
        transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))
    ])
}

# Load a custom dataset with torchvision.datasets.ImageFolder()
train_set = tv.datasets.ImageFolder(
    root='C:/Users/14251/Desktop/workspace/AlexNet/flower_data/train',
    transform=data_transform["train"])
val_set = tv.datasets.ImageFolder(
    root='C:/Users/14251/Desktop/workspace/AlexNet/flower_data/val',
    transform=data_transform["val"])

train_loader = torch.utils.data.DataLoader(train_set,
                                           batch_size=32,
                                           shuffle=True,
                                           num_workers=0)
val_loader = torch.utils.data.DataLoader(val_set,
                                         batch_size=4,
                                         shuffle=False,  # order does not matter for evaluation
                                         num_workers=0)

# {'daisy':0, 'dandelion':1, 'roses':2, 'sunflower':3, 'tulips':4}
# convert to {0:'daisy', 1:'dandelion', 2:'roses', 3:'sunflower', 4:'tulips'}
train_list = train_set.class_to_idx
data_dict = dict((val, key) for key, val in train_list.items())
# write dict into json file
json_str = json.dumps(data_dict, indent=4)
with open('class_indices.json', 'w') as json_file:
    json_file.write(json_str)

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
net = AlexNet(num_classes=5)
net.to(device)

loss_fun = torch.nn.CrossEntropyLoss()
optimizer = torch.optim.Adam(net.parameters(), lr=0.0002)

best_accurate = 0.0
for epoch in range(20):
    net.train()  # enable Dropout
    running_loss = 0.0
    t1 = time.perf_counter()
    for step, data in enumerate(train_loader, start=0):
        images, labels = data
        optimizer.zero_grad()
        outputs = net(images.to(device))
        loss = loss_fun(outputs, labels.to(device))
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        # print train process
        rate = (step + 1) / len(train_loader)
        a = "*" * int(rate * 50)
        b = "." * int((1 - rate) * 50)
        print("\rtrain loss: {:^3.0f}%[{}->{}]{:.3f}".format(
            int(rate * 100), a, b, loss.item()),
              end="")
    print()
    print(time.perf_counter() - t1)  # seconds spent on this epoch

    accurate = 0.0
    net.eval()  # disable Dropout
    with torch.no_grad():
        for val_data in val_loader:
            val_images, val_labels = val_data
            outputs = net(val_images.to(device))  # [batch, 5]
            # Max over dim 1 (the 5 class scores); [1] selects the indices.
            prediction = torch.max(outputs, dim=1)[1]
            accurate += (prediction == val_labels.to(device)).sum().item()
        val_accurate = accurate / len(val_set)
        # Keep only the weights with the best validation accuracy so far.
        if val_accurate > best_accurate:
            best_accurate = val_accurate
            torch.save(
                net.state_dict(),
                'C:/Users/14251/Desktop/workspace/AlexNet/AlexNet_dict.pth')
        # Average over the number of batches (the original divided by the
        # last step index, which is one too small).
        print('[epoch %d] train_loss: %.3f val_accuracy: %.3f' %
              (epoch + 1, running_loss / len(train_loader), val_accurate))

print('Finished Training')
```
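The `Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))` step applies `(input - mean) / std` per channel, so values that `ToTensor()` put in [0.0, 1.0] end up in [-1.0, 1.0]. The sketch below works that arithmetic on single values in plain Python. `normalize` and `denormalize` are hypothetical helper names used for illustration, not torchvision functions.

```python
def normalize(value, mean=0.5, std=0.5):
    """Same per-channel formula as transforms.Normalize, on one value."""
    return (value - mean) / std


def denormalize(value, mean=0.5, std=0.5):
    """Inverse mapping, e.g. before displaying a normalized image."""
    return value * std + mean


if __name__ == "__main__":
    for pixel in (0.0, 0.25, 0.5, 1.0):
        print(pixel, "->", normalize(pixel))
    # 0.0 -> -1.0
    # 0.25 -> -0.5
    # 0.5 -> 0.0
    # 1.0 -> 1.0
```

The same mean and std must be used in `predict.py`, since the network only ever saw inputs in this range during training.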

predict.py

```python
import json
import sys

import torch
import torchvision.transforms as transforms
from PIL import Image

from model import AlexNet
# import matplotlib.pyplot as plt

transform = transforms.Compose([
    transforms.Resize((224, 224)),
    transforms.ToTensor(),
    transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))
])

# Load a single image with PIL.Image.open(); convert to RGB so that
# Normalize always receives 3 channels.
img = Image.open('C:/Users/14251/Desktop/workspace/AlexNet/test.jpg').convert('RGB')
# plt.imshow(img)
img = transform(img)  # (H, W, C) -> (C, H, W)
img = img.unsqueeze(dim=0)  # (C, H, W) -> (N, C, H, W)

# Read the index-to-class mapping written by the training script.
try:
    with open('./class_indices.json', 'r') as json_file:
        class_indict = json.load(json_file)
except Exception as e:
    print(e)
    sys.exit(-1)

net = AlexNet(num_classes=5)
# map_location lets weights saved on a GPU load on a CPU-only machine.
net.load_state_dict(
    torch.load('C:/Users/14251/Desktop/workspace/AlexNet/AlexNet_dict.pth',
               map_location='cpu'))
net.eval()  # disable Dropout

# Alternative that prints only the class name:
# with torch.no_grad():
#     outputs = net(img)
#     prediction = torch.max(outputs, dim=1)[1].item()
#     print(class_indict[str(prediction)])

with torch.no_grad():
    # predict class
    output = torch.squeeze(net(img))  # [1, 5] -> [5]
    predict = torch.softmax(output, dim=0)
    predict_cla = torch.argmax(predict).item()  # plain Python int
print(class_indict[str(predict_cla)], predict[predict_cla].item())
```
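Two details of `predict.py` are easy to miss: the softmax turns raw scores into probabilities that sum to 1, and the class lookup uses `str(predict_cla)` because JSON stores dictionary keys as strings. The sketch below reproduces both in plain Python under stated assumptions: `softmax` and `top_class` are hypothetical helpers, and the scores are made up rather than produced by the trained network.

```python
import json
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_class(logits, class_indict):
    """Return (class name, probability) for the highest score."""
    probs = softmax(logits)
    index = max(range(len(probs)), key=probs.__getitem__)
    # Keys are strings after the JSON round trip, hence str(index).
    return class_indict[str(index)], probs[index]


if __name__ == "__main__":
    mapping = {0: "daisy", 1: "dandelion", 2: "roses", 3: "sunflower", 4: "tulips"}
    # Integer keys become strings once written to and read from JSON.
    class_indict = json.loads(json.dumps(mapping))
    logits = [0.1, 2.0, -1.0, 0.5, 0.3]  # made-up scores
    name, prob = top_class(logits, class_indict)
    print(name, round(prob, 3))
```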