scikit-learn KNN实现糖尿病预测

随书代码,阅读笔记。

KNN是一种有监督的机器学习算法,可以解决分类问题,也可以解决回归问题。

算法优点:准确性高,对异常值和噪声有较高的容忍度;

算法缺点:计算量大,内存消耗也比较大。

针对算法计算量大,有一些改进的数据结构,避免重复计算K-D Tree, Ball Tree。

算法变种:根据邻居的距离,分配不同权重。另外一个变种是指定半径。

- KNN进行分类

%matplotlib inline

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

from sklearn.datasets.samples_generator import make_blobs

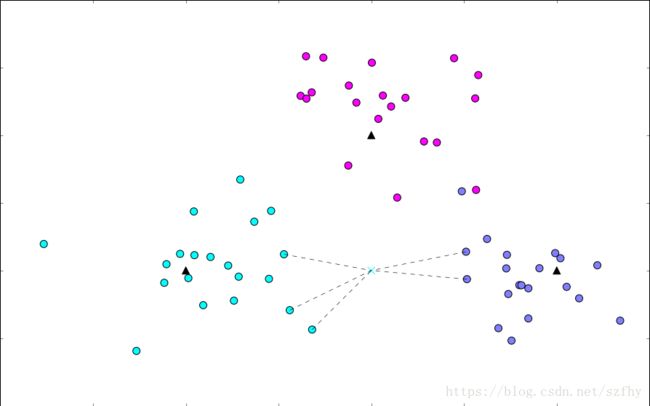

# 生成数据

centers = [[-2, 2], [2, 2], [0, 4]]

X, y = make_blobs(n_samples=60, centers=centers, random_state=0, cluster_std=0.60)

# 画出数据

plt.figure(figsize=(16, 10), dpi=144)

c = np.array(centers)

plt.scatter(X[:, 0], X[:, 1], c=y, s=100, cmap='cool'); # 画出样本

plt.scatter(c[:, 0], c[:, 1], s=100, marker='^', c='orange'); # 画出中心点

from sklearn.neighbors import KNeighborsClassifier

# 模型训练

k = 5

clf = KNeighborsClassifier(n_neighbors=k)

clf.fit(X, y);

# 进行预测

X_sample = [0, 2]

y_sample = clf.predict(X_sample);

neighbors = clf.kneighbors(X_sample, return_distance=False);

# 画出示意图

plt.figure(figsize=(16, 10), dpi=144)

plt.scatter(X[:, 0], X[:, 1], c=y, s=100, cmap='cool'); # 样本

plt.scatter(c[:, 0], c[:, 1], s=100, marker='^', c='k'); # 中心点

plt.scatter(X_sample[0], X_sample[1], marker="x",

c=y_sample, s=100, cmap='cool') # 待预测的点

for i in neighbors[0]:

plt.plot([X[i][0], X_sample[0]], [X[i][1], X_sample[1]],

'k--', linewidth=0.6); # 预测点与距离最近的 5 个样本的连线- KNN进行回归拟合

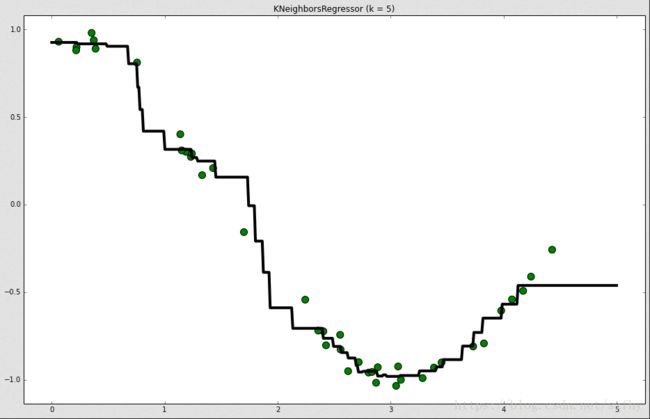

%matplotlib inline

import matplotlib.pyplot as plt

import numpy as np

# 生成训练样本

n_dots = 40

X = 5 * np.random.rand(n_dots, 1)

y = np.cos(X).ravel()

# 添加一些噪声

y += 0.2 * np.random.rand(n_dots) - 0.1

# 训练模型

from sklearn.neighbors import KNeighborsRegressor

k = 5

knn = KNeighborsRegressor(k)

knn.fit(X, y);

# 生成足够密集的点并进行预测

T = np.linspace(0, 5, 500)[:, np.newaxis]

y_pred = knn.predict(T)

knn.score(X, y)

#output:0.98579189493611052

# 画出拟合曲线

plt.figure(figsize=(16, 10), dpi=144)

plt.scatter(X, y, c='g', label='data', s=100) # 画出训练样本

plt.plot(T, y_pred, c='k', label='prediction', lw=4) # 画出拟合曲线

plt.axis('tight')

plt.title("KNeighborsRegressor (k = %i)" % k)

plt.show()- KNN 实现糖尿病预测

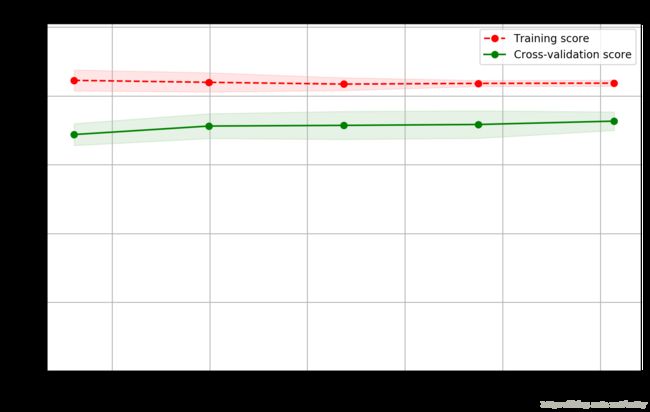

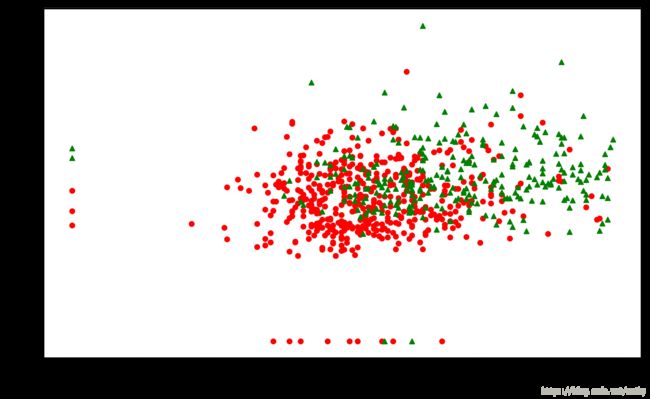

%matplotlib inline import matplotlib.pyplot as plt import numpy as np import pandas as pd # 加载数据 data = pd.read_csv('datasets/pima-indians-diabetes/diabetes.csv') print('dataset shape {}'.format(data.shape)) data.head() data.groupby("Outcome").size() #Outcome #0 500 无糖尿病 #1 268 有糖尿病 #dtype: int64 X = data.iloc[:, 0:8] Y = data.iloc[:, 8] print('shape of X {}; shape of Y {}'.format(X.shape, Y.shape)) from sklearn.model_selection import train_test_split X_train, X_test, Y_train, Y_test = train_test_split(X, Y, test_size=0.2); from sklearn.neighbors import KNeighborsClassifier, RadiusNeighborsClassifier models = [] models.append(("KNN", KNeighborsClassifier(n_neighbors=2))) models.append(("KNN with weights", KNeighborsClassifier( n_neighbors=2, weights="distance"))) models.append(("Radius Neighbors", RadiusNeighborsClassifier( n_neighbors=2, radius=500.0))) results = [] for name, model in models: model.fit(X_train, Y_train) results.append((name, model.score(X_test, Y_test))) for i in range(len(results)): print("name: {}; score: {}".format(results[i][0],results[i][1])) #name: KNN; score: 0.681818181818 #name: KNN with weights; score: 0.636363636364 #name: Radius Neighbors; score: 0.62987012987 from sklearn.model_selection import KFold from sklearn.model_selection import cross_val_score #kfold 训练10次,计算10次的平均准确率 results = [] for name, model in models: kfold = KFold(n_splits=10) cv_result = cross_val_score(model, X, Y, cv=kfold) results.append((name, cv_result)) for i in range(len(results)): print("name: {}; cross val score: {}".format( results[i][0],results[i][1].mean())) #name: KNN; cross val score: 0.714764183185 #name: KNN with weights; cross val score: 0.677050580998 #name: Radius Neighbors; cross val score: 0.6497265892 #模型训练 knn = KNeighborsClassifier(n_neighbors=2) knn.fit(X_train, Y_train) train_score = knn.score(X_train, Y_train) test_score = knn.score(X_test, Y_test) print("train score: {}; test score: {}".format(train_score, test_score)) #画出学习曲线 from sklearn.model_selection import ShuffleSplit from common.utils import plot_learning_curve knn = KNeighborsClassifier(n_neighbors=2) cv = ShuffleSplit(n_splits=10, test_size=0.2, random_state=0) plt.figure(figsize=(10, 6), dpi=200) plot_learning_curve(plt, knn, "Learn Curve for KNN Diabetes", X, Y, ylim=(0.0, 1.01), cv=cv); #数据可视化 # 从8个特征中选择2个最重要的特征进行可视化 from sklearn.feature_selection import SelectKBest selector = SelectKBest(k=2) X_new = selector.fit_transform(X, Y) X_new[0:5] results = [] for name, model in models: kfold = KFold(n_splits=10) cv_result = cross_val_score(model, X_new, Y, cv=kfold) results.append((name, cv_result)) for i in range(len(results)): print("name: {}; cross val score: {}".format( results[i][0],results[i][1].mean())) # 画出数据 plt.figure(figsize=(10, 6), dpi=200) plt.ylabel("BMI") plt.xlabel("Glucose") plt.scatter(X_new[Y==0][:, 0], X_new[Y==0][:, 1], c='r', s=20, marker='o'); # 画出样本 plt.scatter(X_new[Y==1][:, 0], X_new[Y==1][:, 1], c='g', s=20, marker='^'); # 画出样本 #2个特征和8个特征得到的结果差不多。分类效果达到了瓶颈

KNN对糖尿病进行测试,无法得到比较高的预测准确性

扩展阅读