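Face Verification and Recognition: From Model Training to Project Deployment

Preface

1. Face verification is a special case of face recognition: a 1:1 comparison. The algorithm compares the current face against one other face and returns a similarity score, and that score decides whether the two faces match. The most common uses are face unlock and identity checks at high-speed rail station ticket gates.

2. Face recognition is a 1:N comparison. The captured face is searched against the faces previously enrolled in a database to find the entry that matches the current user, which tells the system who you are. Typical uses are residential access control and face-based attendance check-in at companies.

3. A complete face verification or recognition pipeline has three basic steps: face detection (is there a face in the frame?), silent liveness detection (is the detected face a spoof?), and face recognition (does the detected face match the target face?).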
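To make the difference between 1:1 and 1:N concrete, here is a minimal sketch that works on similarity scores that have already been computed. The function names `verify` and `identify` and the threshold are my own assumptions for illustration; they are not part of the project code.

```cpp
#include <cstddef>
#include <vector>

// 1:1 verification: a single score against a single threshold.
bool verify(float score, float threshold)
{
    return score >= threshold;
}

// 1:N identification: `scores[i]` is the similarity between the probe face
// and the i-th enrolled face. Returns the index of the best match whose
// score reaches the threshold, or -1 if nobody in the database matches.
int identify(const std::vector<float>& scores, float threshold)
{
    int best = -1;
    for (std::size_t i = 0; i < scores.size(); ++i) {
        if (scores[i] >= threshold && (best < 0 || scores[i] > scores[best])) {
            best = static_cast<int>(i);
        }
    }
    return best;
}
```

Verification answers "is this the same person?", while identification answers "which enrolled person is this, if any?". The threshold has to be tuned on your own data in both cases.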

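I. Environment

1. Training environment: Windows 10, Anaconda 3.5, Python 3.7, PyTorch 1.6, RTX 3080 GPU, CUDA 10.2, cuDNN 7.1.

2. Deployment environment (PC): Visual Studio 2019 and OpenCV 4.5, with ncnn (release 20220216) as the inference acceleration library.

II. Face Detection

1. Before any recognition can happen, the first step is to determine whether the current image contains a face, which is the job of face detection. Many open-source face detection algorithms and models exist, and OpenCV ships its own face detector. Face verification and recognition also need face alignment, though, so the detector must output facial landmarks in addition to the face box.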

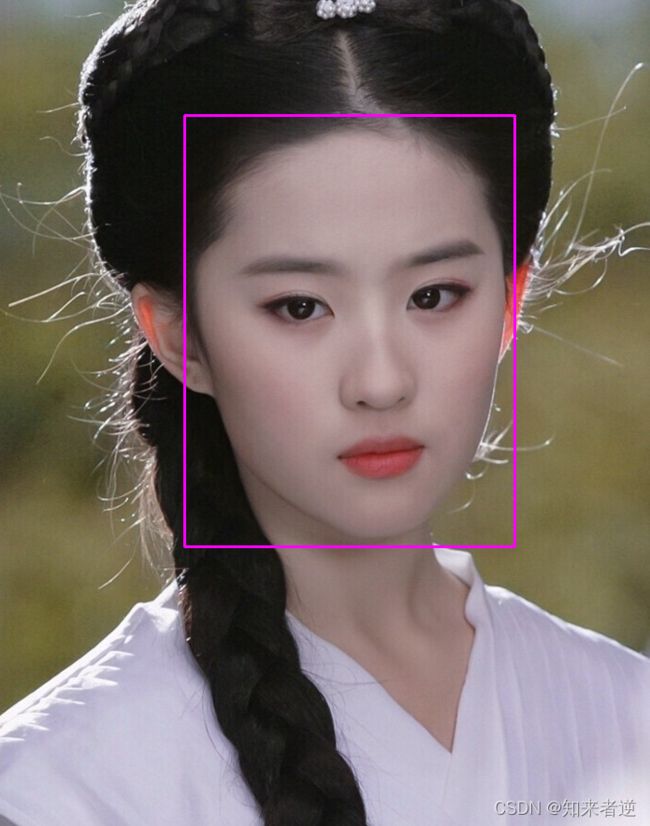

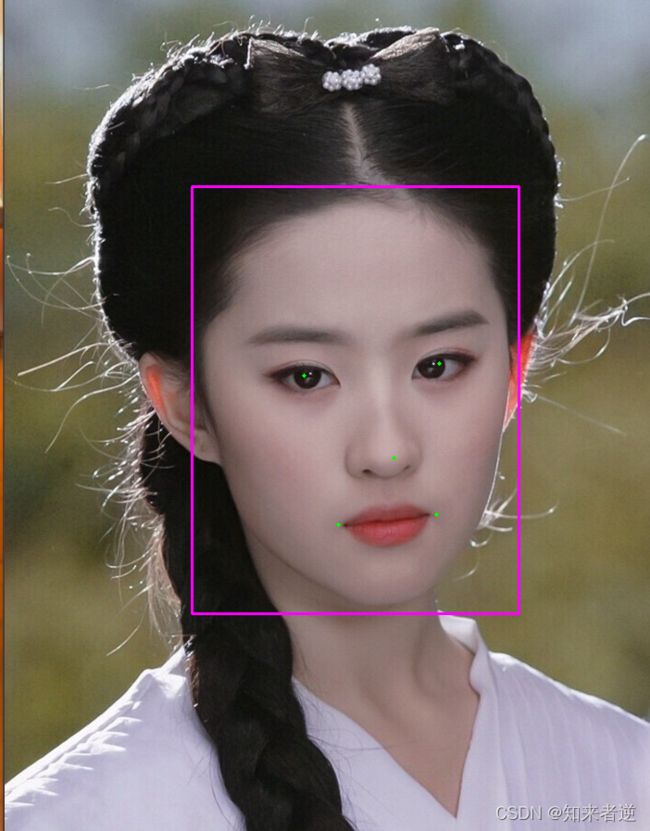

Detection without facial landmarks:

Detection with facial landmarks:

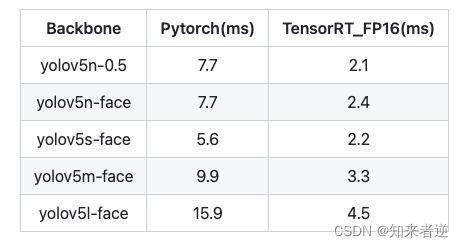

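2. Because the target is mobile or edge devices, I use yolov5-face here (GitHub: https://github.com/deepcam-cn/yolov5-face). It is trained on the WIDERFace dataset and the label files are provided; to tune it for your own scenario, add your own data in the same format. Its detection results and some parameters:

3. Training saves a .pt model. Convert it to ONNX first; the ONNX model can already be run from C++ with onnxruntime or OpenCV's dnn module. Here I convert the ONNX model further to ncnn. For the conversion steps, see my earlier blog posts or the official ncnn documentation.

4. ncnn model inference code: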

#include "yoloface.h"

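5. Detection results and speed

Inference result and speed with an input size of 1280:

If the face fills a large part of the frame, the input size can be reduced to 640 or smaller, which cuts inference time considerably. This is usually the case for face verification and recognition, where the face takes up most of the image.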
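Changing the input size also changes how detections map back to the original image. The sketch below assumes the frame is resized with its aspect ratio preserved and padded symmetrically to a square input (a letterbox), as is common for YOLOv5-style preprocessing. The actual preprocessing inside `yoloface.h` is not shown in this post, so `Letterbox`, `computeLetterbox`, and `toSource` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Scale factor and padding of a centered square letterbox (assumed layout).
struct Letterbox {
    float scale;  // source pixels are multiplied by this factor
    int padX;     // padding added on the left, in network-input pixels
    int padY;     // padding added on the top, in network-input pixels
};

Letterbox computeLetterbox(int srcW, int srcH, int target)
{
    assert(srcW > 0 && srcH > 0 && target > 0);
    const float scale = std::min(static_cast<float>(target) / srcW,
                                 static_cast<float>(target) / srcH);
    const int newW = static_cast<int>(std::round(srcW * scale));
    const int newH = static_cast<int>(std::round(srcH * scale));
    return Letterbox{scale, (target - newW) / 2, (target - newH) / 2};
}

// Maps a point from network-input coordinates back to the source image.
void toSource(const Letterbox& lb, float x, float y, float& srcX, float& srcY)
{
    srcX = (x - lb.padX) / lb.scale;
    srcY = (y - lb.padY) / lb.scale;
}
```

For a 1920 x 1080 frame, going from 1280 to 640 halves the scale factor, so a face that is smaller than a few dozen pixels in the source may become too small to detect reliably. That is the trade-off behind picking the smaller size only when faces are large in the frame.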

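III. Face Verification

1. Once the face and its landmarks have been detected, the face can be spatially normalized. This operation is called face alignment. It lets the downstream model extract features that do not depend on where the facial features sit in the image, only on their shape and texture, which greatly improves the accuracy and stability of face recognition, face attribute analysis, expression classification, and similar algorithms.

2. Face detection has already produced the landmarks, so they are used to align the face: an image transform moves the eyes, nose, and mouth onto preset, fixed positions.

3. C++ source for face alignment: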

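#include "FaceWarp.h"

#include <cmath>

// Angle in degrees between the vertical axis and the line from the midpoint
// of the two eyes (landmarks 0 and 1) to the nose tip (landmark 2).
inline double count_angle(float landmark[5][2])
{
    double a = landmark[2][1] - (landmark[0][1] + landmark[1][1]) / 2;
    double b = landmark[2][0] - (landmark[0][0] + landmark[1][0]) / 2;
    // std::fabs avoids picking the integer abs() overload; CV_PI avoids
    // relying on M_PI, which MSVC only defines with _USE_MATH_DEFINES.
    double angle = std::atan(std::fabs(b) / a) * 180.0 / CV_PI;
    return angle;
}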

FaceWarp::FaceWarp()
{
}

FaceWarp::~FaceWarp()
{
}

// Column-wise mean of an N x dim CV_32F matrix, returned as a 1 x dim row.
cv::Mat FaceWarp::MeanAxis0(const cv::Mat &src)
{
    int num = src.rows;
    int dim = src.cols;
    // Each row of src is one point: (x1 y1), (x2 y2), ...
    cv::Mat output(1, dim, CV_32F);
    for (int i = 0; i < dim; i++) {
        float sum = 0;
        for (int j = 0; j < num; j++) {
            sum += src.at<float>(j, i);
        }
        output.at<float>(0, i) = sum / num;
    }
    return output;
}

// Subtracts the 1 x dim row vector B from every row of A (both CV_32F).
cv::Mat FaceWarp::ElementwiseMinus(const cv::Mat &A, const cv::Mat &B)
{
    cv::Mat output(A.rows, A.cols, A.type());
    assert(B.cols == A.cols);
    if (B.cols == A.cols)
    {
        for (int i = 0; i < A.rows; i++)
        {
            for (int j = 0; j < B.cols; j++)
            {
                output.at<float>(i, j) = A.at<float>(i, j) - B.at<float>(0, j);
            }
        }
    }
    return output;
}

// Numerical rank: the number of singular values above a small tolerance.
int FaceWarp::MatrixRank(cv::Mat M)
{
    cv::Mat w, u, vt;
    cv::SVD::compute(M, w, u, vt);
    cv::Mat1b nonZeroSingularValues = w > 0.0001;
    int rank = cv::countNonZero(nonZeroSingularValues);
    return rank;
}

// Column-wise (population) variance of an N x dim CV_32F matrix.
cv::Mat FaceWarp::VarAxis0(const cv::Mat &src)
{
    cv::Mat temp_ = ElementwiseMinus(src, MeanAxis0(src));
    cv::multiply(temp_, temp_, temp_);
    return MeanAxis0(temp_);
}

// Least-squares similarity transform (rotation, uniform scale, translation)
// that maps the src points onto the dst points, computed from the SVD of the
// covariance of the centered point sets. Returns a (dim+1) x (dim+1) matrix.
cv::Mat FaceWarp::SimilarTransform(cv::Mat src, cv::Mat dst)
{
    int num = src.rows;
    int dim = src.cols;
    cv::Mat src_mean = MeanAxis0(src);
    cv::Mat dst_mean = MeanAxis0(dst);
    cv::Mat src_demean = ElementwiseMinus(src, src_mean);
    cv::Mat dst_demean = ElementwiseMinus(dst, dst_mean);
    cv::Mat A = (dst_demean.t() * src_demean) / static_cast<float>(num);
    cv::Mat d(dim, 1, CV_32F);
    d.setTo(1.0f);
    if (cv::determinant(A) < 0) {
        d.at<float>(dim - 1, 0) = -1;
    }
    cv::Mat T = cv::Mat::eye(dim + 1, dim + 1, CV_32F);
    cv::Mat U, S, V;
    cv::SVD::compute(A, S, U, V);
    int rank = MatrixRank(A);
    if (rank == 0) {
        // Degenerate input: no rotation can be estimated, so the rotation
        // block of T is left as identity.
        assert(rank == 0);
    } else if (rank == dim - 1) {
        if (cv::determinant(U) * cv::determinant(V) > 0) {
            T.rowRange(0, dim).colRange(0, dim) = U * V;
        } else {
            // Save d[dim - 1], flip it for this product, then restore it.
            // The original read `int s = d.at<float>(dim - 1, 0) = -1;`,
            // which overwrote the value before saving it.
            float s = d.at<float>(dim - 1, 0);
            d.at<float>(dim - 1, 0) = -1;
            cv::Mat diag_ = cv::Mat::diag(d);
            cv::Mat twp = diag_ * V; // np.dot(np.diag(d), V)
            T.rowRange(0, dim).colRange(0, dim) = U * twp;
            d.at<float>(dim - 1, 0) = s;
        }
    } else {
        cv::Mat diag_ = cv::Mat::diag(d);
        cv::Mat twp = diag_ * V.t(); // np.dot(np.diag(d), V.T)
        T.rowRange(0, dim).colRange(0, dim) = -U.t() * twp;
    }
    cv::Mat var_ = VarAxis0(src_demean);
    float val = cv::sum(var_).val[0];
    cv::Mat res;
    cv::multiply(d, S, res);
    float scale = 1.0 / val * cv::sum(res).val[0];
    T.rowRange(0, dim).colRange(0, dim) = -T.rowRange(0, dim).colRange(0, dim).t();
    cv::Mat temp1 = T.rowRange(0, dim).colRange(0, dim); // T[:dim, :dim]
    cv::Mat temp2 = src_mean.t();                        // src_mean.T
    cv::Mat temp3 = temp1 * temp2;                       // np.dot(T[:dim, :dim], src_mean.T)
    cv::Mat temp4 = scale * temp3;
    T.rowRange(0, dim).colRange(dim, dim + 1) = -(temp4 - dst_mean.t());
    T.rowRange(0, dim).colRange(0, dim) *= scale;
    return T;
}

// Warps the face described by Obj to the reference landmark layout and
// returns a 112 x 112 aligned crop.
cv::Mat FaceWarp::ProcessFace(cv::Mat& SmallFrame, Object& Obj)
{
    // Reference landmark positions for a 96 x 112 aligned face:
    // the two eyes, the nose tip, and the two mouth corners.
    float v1[5][2] = {
        {30.2946f, 51.6963f},
        {65.5318f, 51.5014f},
        {48.0252f, 71.7366f},
        {33.5493f, 92.3655f},
        {62.7299f, 92.2041f}
    };
    // Not static: a static cv::Mat would keep pointing at the stack array
    // of the first call. The Mat wraps v1 directly, so no memcpy is needed.
    cv::Mat src(5, 2, CV_32FC1, v1);
    float v2[5][2] = {
        {Obj.pts[0].x, Obj.pts[0].y},
        {Obj.pts[1].x, Obj.pts[1].y},
        {Obj.pts[2].x, Obj.pts[2].y},
        {Obj.pts[3].x, Obj.pts[3].y},
        {Obj.pts[4].x, Obj.pts[4].y},
    };
    cv::Mat dst(5, 2, CV_32FC1, v2);
    Angle = count_angle(v2);
    cv::Mat aligned;
    // 3 x 3 transform that maps the detected landmarks onto the reference ones.
    cv::Mat m = SimilarTransform(dst, src);
    cv::warpPerspective(SmallFrame, aligned, m, cv::Size(96, 112), cv::INTER_LINEAR);
    cv::resize(aligned, aligned, cv::Size(112, 112), 0, 0, cv::INTER_LINEAR);
    return aligned;
}

4. Only five landmarks are used here. For higher accuracy, consider a detection model with more landmarks, such as 68 or 106 points.
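For two-dimensional points, a similarity transform without reflection can also be fitted in closed form, with no SVD. The sketch below shows that simpler least-squares formula; it is not the `SimilarTransform` routine above, and `Pt`, `Similarity2D`, `Landmarks`, `estimateSimilarity`, and `apply` are names made up for this example.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

struct Pt { float x; float y; };

// Encodes x' = a*x - b*y + tx and y' = b*x + a*y + ty,
// i.e. rotation plus uniform scale (a, b) and translation (tx, ty).
struct Similarity2D { float a; float b; float tx; float ty; };

using Landmarks = std::array<Pt, 5>;

// Least-squares fit of the similarity transform that maps src onto dst.
Similarity2D estimateSimilarity(const Landmarks& src, const Landmarks& dst)
{
    const double n = static_cast<double>(src.size());
    double sx = 0, sy = 0, dx = 0, dy = 0;
    for (std::size_t i = 0; i < src.size(); ++i) {
        sx += src[i].x; sy += src[i].y;
        dx += dst[i].x; dy += dst[i].y;
    }
    sx /= n; sy /= n; dx /= n; dy /= n;  // centroids of both point sets

    double numA = 0, numB = 0, den = 0;
    for (std::size_t i = 0; i < src.size(); ++i) {
        const double px = src[i].x - sx, py = src[i].y - sy;
        const double qx = dst[i].x - dx, qy = dst[i].y - dy;
        numA += px * qx + py * qy;  // real part of conj(p) * q
        numB += px * qy - py * qx;  // imaginary part of conj(p) * q
        den  += px * px + py * py;
    }
    assert(den > 0.0);  // the source points must not all coincide
    const double a = numA / den;
    const double b = numB / den;
    return Similarity2D{static_cast<float>(a), static_cast<float>(b),
                        static_cast<float>(dx - (a * sx - b * sy)),
                        static_cast<float>(dy - (b * sx + a * sy))};
}

// Applies the transform to one point.
Pt apply(const Similarity2D& t, const Pt& p)
{
    return Pt{t.a * p.x - t.b * p.y + t.tx, t.b * p.x + t.a * p.y + t.ty};
}
```

The result corresponds to the 2 x 3 matrix `[a, -b, tx; b, a, ty]`, which is the shape of matrix that `cv::warpAffine` expects. Because it cannot represent a reflection, it is only a substitute when the landmarks are known to be in a consistent left/right order.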

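IV. Face Comparison Method

1. After alignment, the similarity of two faces can be compared. The training code comes from https://github.com/deepinsight/insightface and https://github.com/ronghuaiyang/arcface-pytorch.

2. The trained model is converted from ONNX to ncnn so that all models are called the same way. For the conversion, see the official ncnn documentation or my later blog posts.

3. Model inference: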

#include "Arcface.h"

ArcFace::ArcFace(void)
{
}

ArcFace::~ArcFace()
{
    this->net.clear();
}

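// Loads <model>.param and <model>.bin. Returns 0 on success, -1 on failure.
int ArcFace::loadModel(std::string model, bool use_gpu)
{
    bool has_gpu = false;
    net.clear();
    net.opt = ncnn::Option();
#if NCNN_VULKAN
    ncnn::create_gpu_instance();
    has_gpu = ncnn::get_gpu_count() > 0;
#endif
    // Use Vulkan only when it was requested and a GPU is actually present.
    bool to_use_gpu = has_gpu && use_gpu;
    net.opt.use_vulkan_compute = to_use_gpu;
    // ncnn's load functions return 0 on success.
    if (net.load_param((model + ".param").c_str()) != 0)
    {
        return -1;
    }
    if (net.load_model((model + ".bin").c_str()) != 0)
    {
        return -1;
    }
    return 0;
}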

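// Standardizes the feature vector to zero mean and unit standard deviation.
cv::Mat ArcFace::zscore(const cv::Mat &fc)
{
    // Named stddev rather than std so the variable does not hide namespace std.
    cv::Scalar mean, stddev;
    cv::meanStdDev(fc, mean, stddev);
    return (fc - cv::Scalar(mean[0])) / stddev[0];
}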

// Runs the network on an aligned face and returns the standardized feature
// vector as a feature_dim x 1 CV_32F matrix.
cv::Mat ArcFace::getFeature(cv::Mat img)
{
    std::vector<float> feature;
    // Convert the OpenCV image to an ncnn::Mat.
    ncnn::Mat in = ncnn::Mat::from_pixels(img.data, ncnn::Mat::PIXEL_BGR, img.cols, img.rows);
    ncnn::Extractor ex = net.create_extractor();
    ex.set_light_mode(true);
    // "data" and "fc1" are the input and output blob names of this model.
    ex.input("data", in);
    ncnn::Mat out;
    ex.extract("fc1", out);
    feature.resize(this->feature_dim);
    for (int i = 0; i < this->feature_dim; i++) feature[i] = out[i];
    //normalize(feature);
    cv::Mat feature__ = cv::Mat(feature, true);
    return zscore(feature__);
}
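`zscore` standardizes the embedding to zero mean and unit variance. Here is a plain C++ sketch of the same operation on a `std::vector<float>`, assuming the population standard deviation (dividing by N, which is what `cv::meanStdDev` computes). The name `zscoreVector` and the handling of a constant vector are my own choices for the example.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Returns (v - mean) / stddev element-wise, using the population stddev.
// A constant input has zero spread, so it is mapped to all zeros here.
std::vector<float> zscoreVector(const std::vector<float>& v)
{
    if (v.empty()) return {};

    double mean = 0.0;
    for (float x : v) mean += x;
    mean /= static_cast<double>(v.size());

    double var = 0.0;
    for (float x : v) var += (x - mean) * (x - mean);
    var /= static_cast<double>(v.size());
    const double stddev = std::sqrt(var);

    std::vector<float> out(v.size(), 0.0f);
    if (stddev == 0.0) return out;
    for (std::size_t i = 0; i < v.size(); ++i) {
        out[i] = static_cast<float>((v[i] - mean) / stddev);
    }
    return out;
}
```

One consequence worth knowing: the cosine similarity of two z-scored vectors equals the Pearson correlation of the original vectors, so the final score does not depend on the overall offset or scale of the raw embedding.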

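V. Face Comparison and Verification

1. First read two images and check whether each contains a face; the current code only handles the case of exactly one face per image. Then align the two detected faces and compare the two resulting face features.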

// Detects one face in each image, aligns both, compares their features,
// and displays the images with the score drawn on them.
void detectImage(cv::Mat& cv_src_1, cv::Mat& cv_src_2, YoloFace& yolo_face, FaceWarp& face_warp, ArcFace& arc_face)
{
    std::vector<Object> objects_1, objects_2;
    yolo_face.detection(cv_src_2, objects_2);
    yolo_face.detection(cv_src_1, objects_1);
    // Only the case of exactly one face per image is handled.
    if (objects_1.size() == 1 && objects_2.size() == 1)
    {
        cv::Mat cv_aligned_1 = face_warp.ProcessFace(cv_src_1, objects_1[0]);
        cv::Mat cv_aligned_2 = face_warp.ProcessFace(cv_src_2, objects_2[0]);
        cv::Mat fc1 = arc_face.getFeature(cv_aligned_1);
        cv::Mat fc2 = arc_face.getFeature(cv_aligned_2);
        double score = CosineDistance(fc1, fc2);
        // Draw the face boxes, the score, and the landmarks.
        cv::rectangle(cv_src_1, objects_1[0].rect, cv::Scalar(180, 180, 0), 2);
        cv::rectangle(cv_src_2, objects_2[0].rect, cv::Scalar(255, 0, 255), 2);
        cv::putText(cv_src_1, cv::format("score : %0.1f", score), cv::Point(10, 40), cv::FONT_HERSHEY_SIMPLEX, 0.6, cv::Scalar(0, 0, 255));
        cv::putText(cv_src_2, cv::format("score: %0.1f", score), cv::Point(10, 40), cv::FONT_HERSHEY_SIMPLEX, 0.6, cv::Scalar(0, 0, 255));
        for (auto o : objects_2[0].pts)
        {
            cv::circle(cv_src_2, o, 2, cv::Scalar(0, 255, 0), -1);
        }
        for (auto o : objects_1[0].pts)
        {
            cv::circle(cv_src_1, o, 2, cv::Scalar(0, 255, 0), -1);
        }
        cv::imshow("1", cv_src_1);
        cv::imshow("2", cv_src_2);
        cv::waitKey();
    }
}
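`CosineDistance` is called above but its definition is not shown. A minimal sketch of what such a function might compute, written on `std::vector<float>` so that it stands alone. I am assuming it returns a cosine similarity (higher means more alike), so the sketch is named `cosineSimilarity`; the zero-vector handling is also an assumption.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Cosine of the angle between two feature vectors, in [-1, 1].
// Returns 0 if either vector has zero length.
double cosineSimilarity(const std::vector<float>& a, const std::vector<float>& b)
{
    assert(a.size() == b.size());
    double dot = 0.0, normA = 0.0, normB = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        dot   += static_cast<double>(a[i]) * b[i];
        normA += static_cast<double>(a[i]) * a[i];
        normB += static_cast<double>(b[i]) * b[i];
    }
    if (normA == 0.0 || normB == 0.0) return 0.0;
    return dot / (std::sqrt(normA) * std::sqrt(normB));
}
```

With `cv::Mat` features, the same value could be obtained from `fc1.dot(fc2)` divided by the product of `cv::norm(fc1)` and `cv::norm(fc2)`.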

2. Calling the code:

int main(void)
{
    YoloFace yolo_face;
    ArcFace face_arc;
    FaceWarp face_warp;
    face_arc.loadModel("models/arcface/arcface");
    yolo_face.loadModel("models/face/face_lite");
    cv::Mat cv_src_1 = cv::imread("images/61.jpg");
    cv::Mat cv_src_2 = cv::imread("images/31.jpg");
    // cv::imread returns an empty Mat when a file cannot be read.
    if (cv_src_1.empty() || cv_src_2.empty())
    {
        return -1;
    }
    detectImage(cv_src_1, cv_src_2, yolo_face, face_warp, face_arc);
    return 0;
}

3. Results:

3.1 Two faces at different angles.

3.4 A face compared after maximum-strength beautification: the difference is still easy to pick out.