Object Detection: A Walkthrough of the YOLOv1 Source Code

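A few days ago I introduced the principles behind YOLOv1 object detection. Since then I have read through a TensorFlow implementation carefully, so this post walks through its source code. For the principles of YOLO detection, see my earlier article: Object Detection: Understanding YOLOv1 in Depth.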

I. Preparation

- Download the source code. The YOLO code used in this article comes from: https://github.com/hizhangp/yolo_tensorflow

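- Download the training dataset. As before, we use the VOC 2012 dataset as the example. Download link: http://host.robots.ox.ac.uk/pascal/VOC/voc2012/VOCtrainval_11-May-2012.tar. Note that utils/pascal_voc.py reads its data from VOCdevkit/VOC2007. With VOC 2012 you must therefore either rename the extracted VOC2012 folder or change data_path in that file.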

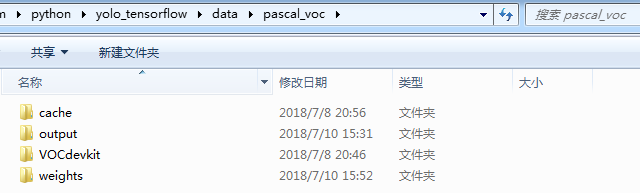

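- In the directory containing the YOLO source, create a data directory, and inside it a pascal_voc directory to hold everything related to the VOC 2012 dataset. Extract the downloaded dataset into pascal_voc, as shown in the figure. VOCdevkit is the folder produced by extracting the dataset. Ignore the other three folders for now; they are explained later.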

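- Download the pre-trained model, i.e. the YOLO_small file, and extract it into the weights folder (data/pascal_voc/weights, which is WEIGHTS_DIR in config.py). Download link: https://drive.google.com/file/d/0B5aC8pI-akZUNVFZMmhmcVRpbTA/view?usp=sharing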

- Adjust the configuration file yolo/config.py to your needs.

- Run train.py to start training.

- Run test.py to start testing.
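Before running train.py, it can save time to confirm that the files sit where the code expects them. The helper below is hypothetical and not part of the repository. It only rebuilds the paths used by config.py and utils/pascal_voc.py, assuming the default data directory and the VOC2007 folder name that the loader reads.

```python
import os


def expected_paths(data_dir="data"):
    """Paths read by config.py / utils/pascal_voc.py (VOC2007 folder name assumed)."""
    pascal = os.path.join(data_dir, "pascal_voc")
    voc = os.path.join(pascal, "VOCdevkit", "VOC2007")
    return [
        os.path.join(voc, "JPEGImages"),                        # source images
        os.path.join(voc, "Annotations"),                       # one XML file per image
        os.path.join(voc, "ImageSets", "Main", "trainval.txt"),  # training image list
        os.path.join(pascal, "weights"),                        # WEIGHTS_DIR
    ]


def missing_paths(data_dir="data"):
    """Return the expected paths that do not exist yet."""
    return [p for p in expected_paths(data_dir) if not os.path.exists(p)]


if __name__ == "__main__":
    for p in missing_paths():
        print("missing:", p)
```

An empty output means the layout matches what the loader will look for.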

II. YOLOv1 Code Structure

If you set up the files as described above, the source tree contains the following:

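A brief description of what each part does:

- data folder: stores the dataset, plus the models and cache files generated during training.

- test folder: stores the images used for testing.

- utils folder: contains two files. pascal_voc.py collects the training images and generates the corresponding label files to prepare for training the YOLO network. timer.py is used for timing.

- yolo folder: also contains two files. config.py holds the configuration parameters of the YOLO network, and yolo_net.py defines the network structure.

- train.py trains the YOLO network.

- test.py tests the YOLO network.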

III. Code Walkthrough

1. Parsing config.py

We start with the configuration file. The code and comments are as follows:

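import os

DATA_PATH = 'data'  # root directory for all data
PASCAL_PATH = os.path.join(DATA_PATH, 'pascal_voc')  # directory of the VOC dataset
CACHE_PATH = os.path.join(PASCAL_PATH, 'cache')  # folder for the cached label files built from the dataset
OUTPUT_DIR = os.path.join(PASCAL_PATH, 'output')  # folder for saved models and logs
WEIGHTS_DIR = os.path.join(PASCAL_PATH, 'weights')  # directory holding checkpoint files
WEIGHTS_FILE = None
# WEIGHTS_FILE = os.path.join(DATA_PATH, 'weights', 'YOLO_small.ckpt')

CLASSES = ['aeroplane', 'bicycle', 'bird', 'boat', 'bottle', 'bus',
           'car', 'cat', 'chair', 'cow', 'diningtable', 'dog', 'horse',
           'motorbike', 'person', 'pottedplant', 'sheep', 'sofa',
           'train', 'tvmonitor']  # the 20 VOC class names

FLIPPED = True  # add horizontally flipped copies to enlarge the dataset

#
# model parameters
#

IMAGE_SIZE = 448  # input image size
CELL_SIZE = 7  # grid size: each image is divided into 7*7 cells
BOXES_PER_CELL = 2  # each cell predicts 2 bounding boxes
ALPHA = 0.1  # slope (alpha) of the leaky ReLU activation
DISP_CONSOLE = False  # whether to print information to the console

OBJECT_SCALE = 1.0  # confidence-loss weight when an object is present
NOOBJECT_SCALE = 1.0  # confidence-loss weight when no object is present
CLASS_SCALE = 2.0  # classification-loss weight
COORD_SCALE = 5.0  # bounding-box coordinate-loss weight

#
# solver parameters
#

GPU = '0'
LEARNING_RATE = 0.0001  # initial learning rate
DECAY_STEPS = 30000  # number of steps between learning-rate decays
DECAY_RATE = 0.1  # decay factor
STAIRCASE = True  # decay in discrete steps rather than continuously
BATCH_SIZE = 2  # training batch size
MAX_ITER = 15000  # maximum number of training iterations
SUMMARY_ITER = 10  # interval (in steps) between log writes
SAVE_ITER = 1000  # interval (in steps) between model saves

#
# test parameters
#

THRESHOLD = 0.2  # score threshold
IOU_THRESHOLD = 0.5  # IoU threshold used by NMS

2. Parsing yolo_net.py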

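The YOLO network is built by yolo_net.py in the yolo folder. This file defines the YOLONet class, whose methods cover network initialization (__init__()), building the network (build_network()), computing IoU (calc_iou()) and the loss function (loss_layer()).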
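Much of the class depends on how the config values fix the layout of the flat 1470-dimensional output. The sketch below is illustrative and not from the repository. `split_output` is a hypothetical helper that slices one output vector at boundary1 and boundary2, the same way loss_layer() and the test code do.

```python
import numpy as np

# Values from yolo/config.py
CELL_SIZE, BOXES_PER_CELL, NUM_CLASS = 7, 2, 20

cells = CELL_SIZE * CELL_SIZE                            # 49 grid cells
output_size = cells * (NUM_CLASS + BOXES_PER_CELL * 5)   # 49 * 30
boundary1 = cells * NUM_CLASS                            # end of the class scores
boundary2 = boundary1 + cells * BOXES_PER_CELL           # end of the confidences


def split_output(vec):
    """Split one flat output vector into (class scores, confidences, boxes)."""
    classes = vec[:boundary1].reshape(CELL_SIZE, CELL_SIZE, NUM_CLASS)
    scales = vec[boundary1:boundary2].reshape(CELL_SIZE, CELL_SIZE, BOXES_PER_CELL)
    boxes = vec[boundary2:].reshape(CELL_SIZE, CELL_SIZE, BOXES_PER_CELL, 4)
    return classes, scales, boxes


dummy = np.arange(output_size, dtype=np.float32)  # stand-in for one row of logits
c, s, b = split_output(dummy)
print(output_size, boundary1, boundary2)  # 1470 980 1078
print(c.shape, s.shape, b.shape)          # (7, 7, 20) (7, 7, 2) (7, 7, 2, 4)
```

Because the ranges are contiguous, the confidences start at index 980 and the box values fill the last 392 = 49 * 2 * 4 entries. The annotated yolo_net.py listing: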

import numpy as np
import tensorflow as tf
import yolo.config as cfg

slim = tf.contrib.slim


class YOLONet(object):

    def __init__(self, is_training=True):
        """
        Constructor.
        Initializes the network parameters from the config file and defines
        the sizes of the network input and output.
        offset stores the column index of every cell; it is used to convert
        between cell-relative and image-relative box coordinates.
        boundary1 and boundary2 mark where each kind of information (classes,
        confidences, boxes) sits in the flat output vector:
        boundary1 is the length of the class predictions for all cells,
        i.e. self.cell_size * self.cell_size * self.num_class;
        boundary2 is where the box confidences that follow the classes end,
        i.e. boundary1 + self.cell_size * self.cell_size * self.boxes_per_cell.
        """
        self.classes = cfg.CLASSES  # VOC class names
        self.num_class = len(self.classes)  # number of classes: 20
        self.image_size = cfg.IMAGE_SIZE  # training image size: 448*448
        self.cell_size = cfg.CELL_SIZE  # the image is divided into cell_size * cell_size cells
        self.boxes_per_cell = cfg.BOXES_PER_CELL  # boxes per cell
        self.output_size = (self.cell_size * self.cell_size) *\
            (self.num_class + self.boxes_per_cell * 5)  # size of the last layer: 1470 = 7*7*(2*5+20)
        self.scale = 1.0 * self.image_size / self.cell_size  # size of one grid cell in pixels (448/7 = 64)
        self.boundary1 = self.cell_size * self.cell_size * self.num_class  # end of the class part
        self.boundary2 = self.boundary1 +\
            self.cell_size * self.cell_size * self.boxes_per_cell  # end of the confidence part

        # loss weights
        self.object_scale = cfg.OBJECT_SCALE  # weight when an object is present
        self.noobject_scale = cfg.NOOBJECT_SCALE  # weight when no object is present
        self.class_scale = cfg.CLASS_SCALE  # weight of the classification loss
        self.coord_scale = cfg.COORD_SCALE  # weight of the box (x, y, w, h) loss

        self.learning_rate = cfg.LEARNING_RATE  # initial learning rate
        self.batch_size = cfg.BATCH_SIZE  # training batch size
        self.alpha = cfg.ALPHA  # leaky ReLU slope

        """
        1. Build self.cell_size * self.boxes_per_cell copies of np.arange(self.cell_size):
           np.array([np.arange(self.cell_size)] * self.cell_size * self.boxes_per_cell)
        2. Reshape the array to 2*7*7, i.e. two 7*7 matrices
        3. Transpose it
        """
        # shape = [7, 7, 2], offset[i, j, b] = j
        self.offset = np.transpose(np.reshape(np.array(
            [np.arange(self.cell_size)] * self.cell_size * self.boxes_per_cell),
            (self.boxes_per_cell, self.cell_size, self.cell_size)), (1, 2, 0))

        # placeholder for the input images, shape = [None, 448, 448, 3]
        self.images = tf.placeholder(
            tf.float32, [None, self.image_size, self.image_size, 3],
            name='images')
        # build the network and get its raw output (before any activation), shape = [None, 1470]
        self.logits = self.build_network(
            self.images, num_outputs=self.output_size, alpha=self.alpha,
            is_training=is_training)

        # labels and loss are only needed in training mode
        if is_training:
            # label placeholder, shape = [None, 7, 7, 25]
            self.labels = tf.placeholder(
                tf.float32,
                [None, self.cell_size, self.cell_size, 5 + self.num_class])
            # build the loss
            self.loss_layer(self.logits, self.labels)
            self.total_loss = tf.losses.get_total_loss()  # total loss including the L2 weight regularization
            tf.summary.scalar('total_loss', self.total_loss)  # log the loss as a scalar named total_loss

    # YOLOv1 network architecture
    def build_network(self,
                      images,
                      num_outputs,
                      alpha,
                      keep_prob=0.5,
                      is_training=True,
                      scope='yolo'):
        """
        images: input image placeholder, shape = [None, 448, 448, 3]
        num_outputs: scalar, number of output nodes (1470)
        alpha: leaky ReLU slope
        keep_prob: dropout keep probability
        is_training: whether the network is in training mode
        scope: variable scope name
        return: the last layer, before any activation, shape = [None, 1470]
        """
        # variable scope
        with tf.variable_scope(scope):
            with slim.arg_scope(  # shared arguments, including L2 regularization
                [slim.conv2d, slim.fully_connected],
                activation_fn=leaky_relu(alpha),
                weights_regularizer=slim.l2_regularizer(0.0005),
                weights_initializer=tf.truncated_normal_initializer(0.0, 0.01)
            ):
                net = tf.pad(
                    images, np.array([[0, 0], [3, 3], [3, 3], [0, 0]]),
                    name='pad_1')  # pad the image: 448*448 -> 454*454
                net = slim.conv2d(
                    net, 64, 7, 2, padding='VALID', scope='conv_2')  # [454,454,3] -> [224,224,64]
                net = slim.max_pool2d(net, 2, padding='SAME', scope='pool_3')  # [224,224,64] -> [112,112,64]
                net = slim.conv2d(net, 192, 3, scope='conv_4')  # [112,112,64] -> [112,112,192]
                net = slim.max_pool2d(net, 2, padding='SAME', scope='pool_5')  # [112,112,192] -> [56,56,192]
                net = slim.conv2d(net, 128, 1, scope='conv_6')  # 1*1 conv reduces channels, spatial size unchanged: [56,56,192] -> [56,56,128]
                net = slim.conv2d(net, 256, 3, scope='conv_7')  # [56,56,128] -> [56,56,256]
                net = slim.conv2d(net, 256, 1, scope='conv_8')  # [56,56,256] -> [56,56,256]
                net = slim.conv2d(net, 512, 3, scope='conv_9')  # [56,56,256] -> [56,56,512]
                net = slim.max_pool2d(net, 2, padding='SAME', scope='pool_10')  # [56,56,512] -> [28,28,512]
                net = slim.conv2d(net, 256, 1, scope='conv_11')  # [28,28,512] -> [28,28,256]
                net = slim.conv2d(net, 512, 3, scope='conv_12')  # [28,28,256] -> [28,28,512]
                net = slim.conv2d(net, 256, 1, scope='conv_13')  # [28,28,512] -> [28,28,256]
                net = slim.conv2d(net, 512, 3, scope='conv_14')  # [28,28,256] -> [28,28,512]
                net = slim.conv2d(net, 256, 1, scope='conv_15')  # [28,28,512] -> [28,28,256]
                net = slim.conv2d(net, 512, 3, scope='conv_16')  # [28,28,256] -> [28,28,512]
                net = slim.conv2d(net, 256, 1, scope='conv_17')  # [28,28,512] -> [28,28,256]
                net = slim.conv2d(net, 512, 3, scope='conv_18')  # [28,28,256] -> [28,28,512]
                net = slim.conv2d(net, 512, 1, scope='conv_19')  # [28,28,512] -> [28,28,512]
                net = slim.conv2d(net, 1024, 3, scope='conv_20')  # [28,28,512] -> [28,28,1024]
                net = slim.max_pool2d(net, 2, padding='SAME', scope='pool_21')  # [28,28,1024] -> [14,14,1024]
                net = slim.conv2d(net, 512, 1, scope='conv_22')  # [14,14,1024] -> [14,14,512]
                net = slim.conv2d(net, 1024, 3, scope='conv_23')  # [14,14,512] -> [14,14,1024]
                net = slim.conv2d(net, 512, 1, scope='conv_24')  # [14,14,1024] -> [14,14,512]
                net = slim.conv2d(net, 1024, 3, scope='conv_25')  # [14,14,512] -> [14,14,1024]
                net = slim.conv2d(net, 1024, 3, scope='conv_26')  # [14,14,1024] -> [14,14,1024]
                net = tf.pad(
                    net, np.array([[0, 0], [1, 1], [1, 1], [0, 0]]),
                    name='pad_27')  # pad the previous feature map: [14,14,1024] -> [16,16,1024]
                net = slim.conv2d(
                    net, 1024, 3, 2, padding='VALID', scope='conv_28')  # [16,16,1024] -> [7,7,1024]
                net = slim.conv2d(net, 1024, 3, scope='conv_29')  # [7,7,1024] -> [7,7,1024]
                net = slim.conv2d(net, 1024, 3, scope='conv_30')  # [7,7,1024] -> [7,7,1024]
                net = tf.transpose(net, [0, 3, 1, 2], name='trans_31')  # [7,7,1024] -> [1024,7,7] (channels first)
                net = slim.flatten(net, scope='flat_32')  # flatten to 7*7*1024 = 50176
                net = slim.fully_connected(net, 512, scope='fc_33')  # not in the original paper; added to reduce the parameter count
                net = slim.fully_connected(net, 4096, scope='fc_34')  # fully connected layer
                net = slim.dropout(
                    net, keep_prob=keep_prob, is_training=is_training,
                    scope='dropout_35')  # dropout: randomly drops units during training
                net = slim.fully_connected(
                    net, num_outputs, activation_fn=None, scope='fc_36')  # output layer, no activation
        return net

    # compute IoU
    def calc_iou(self, boxes1, boxes2, scope='iou'):
        """
        Computes the IoU between two sets of bounding boxes. The inputs are two
        5-D box tensors; the output is the IoU of each pair of boxes.
        Args:
          boxes1: 5-D tensor [BATCH_SIZE, CELL_SIZE, CELL_SIZE, BOXES_PER_CELL, 4] ====> (x_center, y_center, w, h)
          boxes2: 5-D tensor [BATCH_SIZE, CELL_SIZE, CELL_SIZE, BOXES_PER_CELL, 4] ===> (x_center, y_center, w, h)
          Note: (x_center, y_center, w, h) are all normalized to [0, 1]; they are
          the box center coordinates and the width and height relative to the whole image.
        Return:
          iou: 4-D tensor [BATCH_SIZE, CELL_SIZE, CELL_SIZE, BOXES_PER_CELL]
        """
        with tf.variable_scope(scope):
            # transform (x_center, y_center, w, h) to (x1, y1, x2, y2),
            # i.e. the top-left and bottom-right corners
            boxes1_t = tf.stack([boxes1[..., 0] - boxes1[..., 2] / 2.0,  # top-left x
                                 boxes1[..., 1] - boxes1[..., 3] / 2.0,  # top-left y
                                 boxes1[..., 0] + boxes1[..., 2] / 2.0,  # bottom-right x
                                 boxes1[..., 1] + boxes1[..., 3] / 2.0],  # bottom-right y
                                axis=-1)
            boxes2_t = tf.stack([boxes2[..., 0] - boxes2[..., 2] / 2.0,
                                 boxes2[..., 1] - boxes2[..., 3] / 2.0,
                                 boxes2[..., 0] + boxes2[..., 2] / 2.0,
                                 boxes2[..., 1] + boxes2[..., 3] / 2.0],
                                axis=-1)

            # calculate the left up point & right down point of the intersection:
            # for the top-left corner take the larger x and y,
            # for the bottom-right corner take the smaller x and y
            lu = tf.maximum(boxes1_t[..., :2], boxes2_t[..., :2])  # top-left (x1, y1) of the intersection
            rd = tf.minimum(boxes1_t[..., 2:], boxes2_t[..., 2:])  # bottom-right (x2, y2) of the intersection

            # intersection: width and height of the overlap, rd - lu, i.e. x2 - x1 and y2 - y1.
            # The outer tf.maximum clamps negative sizes to 0 when the boxes do not overlap
            intersection = tf.maximum(0.0, rd - lu)
            inter_square = intersection[..., 0] * intersection[..., 1]  # intersection area

            # calculate the boxs1 square and boxs2 square
            square1 = boxes1[..., 2] * boxes1[..., 3]  # area of boxes1
            square2 = boxes2[..., 2] * boxes2[..., 3]  # area of boxes2
            union_square = tf.maximum(square1 + square2 - inter_square, 1e-10)  # union area, floored to avoid division by zero

        # tf.clip_by_value keeps the result within [0, 1], the valid range of IoU
        return tf.clip_by_value(inter_square / union_square, 0.0, 1.0)

    def loss_layer(self, predicts, labels, scope='loss_layer'):
        # predicts: network output, shape [None, 1470], 1470 = 7 * 7 * (2 * 5 + 20)
        #   0 : 7*7*20                predicted classes
        #   7*7*20 : 7*7*20 + 7*7*2   predicted confidences, i.e. IoU between predicted and ground-truth boxes
        #   7*7*20 + 7*7*2 : 1470     predicted boxes [x, y, w, h] * 2
        #   The box centers are relative to the current cell; the square roots of the
        #   width and height are relative to the whole image (normalized)
        # labels: ground truth, shape [None, 7, 7, 25]
        #   0:1   confidence, i.e. whether this cell contains an object
        #   1:5   ground-truth box: center, width and height (in pixels, not normalized)
        #   5:25  object class
        with tf.variable_scope(scope):
            # predicted class of each cell, shape = [batch_size, 7, 7, 20]
            predict_classes = tf.reshape(
                predicts[:, :self.boundary1],
                [self.batch_size, self.cell_size, self.cell_size, self.num_class])
            # predicted confidence of the two boxes in each cell, shape = [batch_size, 7, 7, 2]
            predict_scales = tf.reshape(
                predicts[:, self.boundary1:self.boundary2],
                [self.batch_size, self.cell_size, self.cell_size, self.boxes_per_cell])
            # predicted boxes of each cell, shape = [batch_size, 7, 7, 2, 4]:
            # (x, y) is the box center relative to its cell, (w, h) are square roots relative to the whole image
            predict_boxes = tf.reshape(
                predicts[:, self.boundary2:],
                [self.batch_size, self.cell_size, self.cell_size, self.boxes_per_cell, 4])

            # ground truth
            # response is 1 for a cell that contains an object and 0 otherwise, shape = [batch_size, 7, 7, 1].
            # "Contains an object" means contains the object's center, not just part of the object,
            # so only the cell holding the center is 1 and all other cells are 0
            response = tf.reshape(
                labels[..., 0],
                [self.batch_size, self.cell_size, self.cell_size, 1])
            # ground-truth boxes, (x, y) is the center relative to the whole image, shape = [batch_size, 7, 7, 1, 4]
            boxes = tf.reshape(
                labels[..., 1:5],
                [self.batch_size, self.cell_size, self.cell_size, 1, 4])
            # tf.tile repeats a tensor along a dimension: the boxes are copied twice along axis 3
            # and normalized by the image size, giving shape [batch_size, 7, 7, 2, 4] with values in 0~1
            boxes = tf.tile(
                boxes, [1, 1, 1, self.boxes_per_cell, 1]) / self.image_size
            classes = labels[..., 5:]  # ground-truth classes

            # self.offset has shape [7, 7, 2]; each row is the [7, 2] matrix
            #   [[0,0],[1,1],[2,2],[3,3],[4,4],[5,5],[6,6]]
            # i.e. the column index of each cell. Adding it to a cell-relative x shifts the
            # prediction by the number of cells to its left, which aligns every cell's
            # coordinates with the whole grid: a center in column k gets k added.
            # offset shape [1, 7, 7, 2]
            offset = tf.reshape(
                tf.constant(self.offset, dtype=tf.float32),
                [1, self.cell_size, self.cell_size, self.boxes_per_cell])
            # offset shape (1, 7, 7, 2) -> (batch_size, 7, 7, 2)
            offset = tf.tile(offset, [self.batch_size, 1, 1, 1])
            # offset_tran shape (batch_size, 7, 7, 2); ignoring axis 0, row i is
            #   [[i,i],[i,i],[i,i],[i,i],[i,i],[i,i],[i,i]], i.e. the row index of each cell
            offset_tran = tf.transpose(offset, (0, 2, 1, 3))

            # shape (batch_size, 7, 7, 2, 4): convert the predicted (x, y) from cell-relative
            # to image-relative coordinates and square (w, h).
            # e.g. for cell (3, 3) with prediction (x0, y0): (x, y) = ((x0, y0) + (3, 3)) / 7
            predict_boxes_tran = tf.stack(
                [(predict_boxes[..., 0] + offset) / self.cell_size,
                 (predict_boxes[..., 1] + offset_tran) / self.cell_size,
                 tf.square(predict_boxes[..., 2]),
                 tf.square(predict_boxes[..., 3])], axis=-1)

            # IoU between predicted and ground-truth boxes, shape [batch_size, 7, 7, 2]
            iou_predict_truth = self.calc_iou(predict_boxes_tran, boxes)

            # calculate I tensor [BATCH_SIZE, CELL_SIZE, CELL_SIZE, BOXES_PER_CELL]
            # This is the indicator 1_ij^obj from the paper, shape [batch_size, 7, 7, 2]:
            # it marks that the j-th box predictor of cell i is responsible for the object.
            # Each cell predicts two boxes but only one class, and during training only one
            # predictor should be responsible for each object: the one whose prediction has
            # the highest IoU with the ground truth.
            # object_mask therefore marks which box in each cell is responsible (value 1).
            # In a cell that contains an object it is [1,0] or [0,1] ([1,1] only if the two IoUs tie);
            # e.g. [1,0] means the first box is responsible for that cell's object.
            # In a cell without an object it is [0,0].
            object_mask = tf.reduce_max(iou_predict_truth, 3, keep_dims=True)
            object_mask = tf.cast(
                (iou_predict_truth >= object_mask), tf.float32) * response

            # calculate no_I tensor [BATCH_SIZE, CELL_SIZE, CELL_SIZE, BOXES_PER_CELL]
            # noobject_mask marks the boxes that are NOT responsible for an object:
            # start from all ones (tf.ones_like) and subtract the responsible boxes
            noobject_mask = tf.ones_like(
                object_mask, dtype=tf.float32) - object_mask

            # boxes_tran converts the ground-truth boxes back (whole image -> current cell),
            # shape (batch_size, 7, 7, 2, 4): x, y relative to the cell's top-left corner,
            # w, h replaced by their square roots, all in 0~1
            boxes_tran = tf.stack(
                [boxes[..., 0] * self.cell_size - offset,
                 boxes[..., 1] * self.cell_size - offset_tran,
                 tf.sqrt(boxes[..., 2]),
                 tf.sqrt(boxes[..., 3])], axis=-1)

            # class_loss: classification loss, term 5 of the loss in the paper. response is 1
            # when an object's center lies in the cell and 0 otherwise, so only cells with
            # objects count; the closer the predicted class is to the true class, the smaller the loss.
            # class_delta shape [batch_size, 7, 7, 20]
            class_delta = response * (predict_classes - classes)
            class_loss = tf.reduce_mean(
                tf.reduce_sum(tf.square(class_delta), axis=[1, 2, 3]),
                name='class_loss') * self.class_scale

            # object_loss: confidence loss for boxes responsible for an object, term 3 in the paper.
            # It is smallest when the responsible box's confidence equals the IoU between the
            # predicted and ground-truth boxes: confidence = IoU * P(object), with P(object) = 1 here
            object_delta = object_mask * (predict_scales - iou_predict_truth)
            object_loss = tf.reduce_mean(
                tf.reduce_sum(tf.square(object_delta), axis=[1, 2, 3]),
                name='object_loss') * self.object_scale

            # noobject_loss: confidence loss for boxes without an object, term 4 in the paper;
            # here P(object) = 0, so the target confidence is 0
            noobject_delta = noobject_mask * predict_scales
            noobject_loss = tf.reduce_mean(
                tf.reduce_sum(tf.square(noobject_delta), axis=[1, 2, 3]),
                name='noobject_loss') * self.noobject_scale

            # coord_loss: box coordinate loss (terms 1 and 2 in the paper); coord_mask shape = [batch_size, 7, 7, 2, 1]
            coord_mask = tf.expand_dims(object_mask, 4)
            boxes_delta = coord_mask * (predict_boxes - boxes_tran)
            coord_loss = tf.reduce_mean(
                tf.reduce_sum(tf.square(boxes_delta), axis=[1, 2, 3, 4]),
                name='coord_loss') * self.coord_scale

            # register all losses so that tf.losses.get_total_loss() sums them
            tf.losses.add_loss(class_loss)
            tf.losses.add_loss(object_loss)
            tf.losses.add_loss(noobject_loss)
            tf.losses.add_loss(coord_loss)

            # add each loss to the summaries
            tf.summary.scalar('class_loss', class_loss)
            tf.summary.scalar('object_loss', object_loss)
            tf.summary.scalar('noobject_loss', noobject_loss)
            tf.summary.scalar('coord_loss', coord_loss)

            tf.summary.histogram('boxes_delta_x', boxes_delta[..., 0])
            tf.summary.histogram('boxes_delta_y', boxes_delta[..., 1])
            tf.summary.histogram('boxes_delta_w', boxes_delta[..., 2])
            tf.summary.histogram('boxes_delta_h', boxes_delta[..., 3])
            tf.summary.histogram('iou', iou_predict_truth)


# activation function
def leaky_relu(alpha):
    def op(inputs):
        return tf.nn.leaky_relu(inputs, alpha=alpha, name='leaky_relu')
    return op
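The two least obvious steps in loss_layer() are the IoU computation and the choice of the "responsible" predictor. Both can be checked without TensorFlow. The NumPy sketch below is ours, not the repository's; `calc_iou_np` and `responsible_mask` are hypothetical names. It mirrors calc_iou() and the object_mask lines for a single cell with two predicted boxes.

```python
import numpy as np


def calc_iou_np(boxes1, boxes2):
    """IoU of (x_center, y_center, w, h) boxes; broadcasts over leading axes."""
    def corners(b):
        # (x_center, y_center, w, h) -> (x1, y1, x2, y2)
        return np.stack([b[..., 0] - b[..., 2] / 2.0, b[..., 1] - b[..., 3] / 2.0,
                         b[..., 0] + b[..., 2] / 2.0, b[..., 1] + b[..., 3] / 2.0], axis=-1)

    b1, b2 = corners(boxes1), corners(boxes2)
    lu = np.maximum(b1[..., :2], b2[..., :2])   # top-left of the intersection
    rd = np.minimum(b1[..., 2:], b2[..., 2:])   # bottom-right of the intersection
    inter_wh = np.maximum(0.0, rd - lu)         # 0 when the boxes do not overlap
    inter = inter_wh[..., 0] * inter_wh[..., 1]
    union = np.maximum(boxes1[..., 2] * boxes1[..., 3]
                       + boxes2[..., 2] * boxes2[..., 3] - inter, 1e-10)
    return np.clip(inter / union, 0.0, 1.0)


def responsible_mask(iou, response):
    """1 for the highest-IoU predictor of a cell that holds an object (like object_mask)."""
    best = iou.max(axis=-1, keepdims=True)
    return (iou >= best).astype(np.float32) * response


truth = np.array([0.5, 0.5, 0.4, 0.4])      # one normalized ground-truth box
preds = np.array([[0.5, 0.5, 0.4, 0.4],     # identical to the ground truth
                  [0.6, 0.5, 0.4, 0.4]])    # shifted right by 0.1
iou = calc_iou_np(preds, truth)
print(iou)                                  # approximately [1.0, 0.6]
print(responsible_mask(iou, response=1.0))  # first predictor is responsible
```

The shifted box overlaps on 0.3 x 0.4 = 0.12, and the union is 0.16 + 0.16 - 0.12 = 0.2, so its IoU is 0.6. Only the first predictor then receives the object and coordinate losses.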

3. Parsing the data reader pascal_voc.py

import os
import xml.etree.ElementTree as ET
import numpy as np
import cv2
import pickle
import copy
import yolo.config as cfg


class pascal_voc(object):
    def __init__(self, phase, rebuild=False):
        """
        Prepares the training or test data.
        phase: the string "train" for training, "test" for testing
        rebuild: whether to rebuild the dataset label file saved in the cache folder
        """
        self.devkil_path = os.path.join(cfg.PASCAL_PATH, 'VOCdevkit')  # path of the VOCdevkit folder
        # path of the VOC2007 folder; an extracted VOC 2012 archive lives in VOCdevkit/VOC2012,
        # so rename that folder or change this path
        self.data_path = os.path.join(self.devkil_path, 'VOC2007')
        self.cache_path = cfg.CACHE_PATH  # cache path
        self.batch_size = cfg.BATCH_SIZE  # batch size
        self.image_size = cfg.IMAGE_SIZE  # training image size
        self.cell_size = cfg.CELL_SIZE  # grid size
        self.classes = cfg.CLASSES  # class names
        self.class_to_ind = dict(zip(self.classes, range(len(self.classes))))  # class name -> index
        self.flipped = cfg.FLIPPED  # whether to augment the training set with horizontal flips
        self.phase = phase  # 'train' or 'test'
        self.rebuild = rebuild  # whether to rebuild the label file
        self.cursor = 0  # position of the next sample to read from gt_labels
        self.epoch = 1  # current training epoch
        # gt_labels holds the dataset labels: a list with one dict per image.
        # If FLIPPED=True in the config, the dataset is doubled: every original
        # image gets a horizontally mirrored copy.
        #   imname: image path
        #   label: label
        #   flipped: whether the image is horizontally flipped
        self.gt_labels = None
        self.prepare()  # load the labels and initialize gt_labels

    def get(self):
        """
        Loads one batch: batch_size images and their labels.
        return:
          images: image data [batch, 448, 448, 3]
          labels: corresponding labels [batch, 7, 7, 25]
        """
        images = np.zeros(
            (self.batch_size, self.image_size, self.image_size, 3))  # input images [batch,448,448,3]
        labels = np.zeros(
            (self.batch_size, self.cell_size, self.cell_size, 25))  # labels [batch,7,7,25]
        count = 0
        while count < self.batch_size:  # collect batch_size images and labels
            imname = self.gt_labels[self.cursor]['imname']  # image path
            flipped = self.gt_labels[self.cursor]['flipped']  # horizontally flipped?
            images[count, :, :, :] = self.image_read(imname, flipped)  # read the image data
            labels[count, :, :, :] = self.gt_labels[self.cursor]['label']  # read the matching label
            count += 1
            self.cursor += 1
            # after a full pass over the data, reset cursor to 0 and increment epoch
            if self.cursor >= len(self.gt_labels):
                np.random.shuffle(self.gt_labels)  # shuffle
                self.cursor = 0
                self.epoch += 1
        return images, labels

    # read an image
    def image_read(self, imname, flipped=False):
        image = cv2.imread(imname)  # read the image (OpenCV returns BGR)
        image = cv2.resize(image, (self.image_size, self.image_size))  # resize
        image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB).astype(np.float32)  # BGR -> RGB
        image = (image / 255.0) * 2.0 - 1.0  # normalize to [-1.0, 1.0]
        if flipped:
            image = image[:, ::-1, :]  # horizontal flip
        return image

    def prepare(self):
        """
        Initializes the dataset labels and stores them in gt_labels.
        return:
          gt_labels: the dataset labels, a list with one dict per image
            imname: image path
            label: label of the image, a [7,7,25] matrix
            flipped: whether the image is horizontally flipped (False for the originals)
        """
        gt_labels = self.load_labels()  # load the dataset labels
        if self.flipped:  # with horizontal flipping, append a mirrored copy of the training set
            print('Appending horizontally-flipped training examples ...')
            gt_labels_cp = copy.deepcopy(gt_labels)  # deep copy
            for idx in range(len(gt_labels_cp)):  # iterate over every image label
                gt_labels_cp[idx]['flipped'] = True  # mark as flipped
                gt_labels_cp[idx]['label'] =\
                    gt_labels_cp[idx]['label'][:, ::-1, :]  # mirror the grid columns as well [7,7,25]
                for i in range(self.cell_size):
                    for j in range(self.cell_size):
                        if gt_labels_cp[idx]['label'][i, j, 0] == 1:  # confidence 1: this cell holds an object
                            gt_labels_cp[idx]['label'][i, j, 1] = \
                                self.image_size - 1 -\
                                gt_labels_cp[idx]['label'][i, j, 1]  # mirror the center x coordinate
            # append the labels; the added ones are the mirrored copies of the originals
            gt_labels += gt_labels_cp
        np.random.shuffle(gt_labels)  # shuffle
        self.gt_labels = gt_labels
        return gt_labels

    def load_labels(self):  # load the labels
        # cache_file: data/pascal_voc/cache/pascal_train_gt_labels.pkl
        cache_file = os.path.join(  # cache file that stores the dataset labels
            self.cache_path, 'pascal_' + self.phase + '_gt_labels.pkl')

        # if the cache file exists and no rebuild is requested, read it directly
        # (after changing the dataset, delete the cache or pass rebuild=True)
        if os.path.isfile(cache_file) and not self.rebuild:
            print('Loading gt_labels from: ' + cache_file)
            with open(cache_file, 'rb') as f:  # open the pkl file
                gt_labels = pickle.load(f)  # load its contents
            return gt_labels

        print('Processing gt_labels from: ' + self.data_path)

        # create the cache folder if it does not exist
        if not os.path.exists(self.cache_path):
            os.makedirs(self.cache_path)

        # list of training image names
        if self.phase == 'train':
            txtname = os.path.join(
                self.data_path, 'ImageSets', 'Main', 'trainval.txt')
        else:  # list of test image names
            txtname = os.path.join(
                self.data_path, 'ImageSets', 'Main', 'test.txt')
        with open(txtname, 'r') as f:
            self.image_index = [x.strip() for x in f.readlines()]

        gt_labels = []  # per-image label, image path and flip flag
        for index in self.image_index:  # iterate over every image
            label, num = self.load_pascal_annotation(index)  # label of this image [7,7,25]
            if num == 0:  # skip images without annotated objects
                continue
            imname = os.path.join(self.data_path, 'JPEGImages', index + '.jpg')  # image file name
            gt_labels.append({'imname': imname,
                              'label': label,
                              'flipped': False})  # store this image's information
        print('Saving gt_labels to: ' + cache_file)
        with open(cache_file, 'wb') as f:
            pickle.dump(gt_labels, f)
        return gt_labels

    def load_pascal_annotation(self, index):
        """
        Load image and bounding boxes info from XML file in the PASCAL VOC
        format.
        index: index (file name without extension) of the image
        return:
          label: label [7,7,25]
            0:1   confidence: whether this cell contains an object
            1:5   object box: center, width, height (actual pixel values, not normalized)
            5:25  object class
          len(objs): number of objects in the annotation
        """
        # data/pascal_voc/VOCdevkit/VOC2007/JPEGImages holds the source images;
        # imname is the path of this training sample
        imname = os.path.join(self.data_path, 'JPEGImages', index + '.jpg')
        im = cv2.imread(imname)  # read the image
        h_ratio = 1.0 * self.image_size / im.shape[0]  # height scale factor
        w_ratio = 1.0 * self.image_size / im.shape[1]  # width scale factor
        label = np.zeros((self.cell_size, self.cell_size, 25))  # label of this image

        # data/pascal_voc/VOCdevkit/VOC2007/Annotations holds the XML files with the boxes
        # and other information, one per image, matching the images in JPEGImages one to one
        filename = os.path.join(self.data_path, 'Annotations', index + '.xml')  # annotation XML of the image
        tree = ET.parse(filename)  # parse the XML document into a tree
        objs = tree.findall('object')  # all annotated objects (box info) of the image

        # iterate over all objects in the XML
        for obj in objs:
            bbox = obj.find('bndbox')  # the annotated bounding box
            # Make pixel indexes 0-based, and scale (x1, y1, x2, y2)
            # because the image is resized at input time
            x1 = max(min((float(bbox.find('xmin').text) - 1) * w_ratio, self.image_size - 1), 0)
            y1 = max(min((float(bbox.find('ymin').text) - 1) * h_ratio, self.image_size - 1), 0)
            x2 = max(min((float(bbox.find('xmax').text) - 1) * w_ratio, self.image_size - 1), 0)
            y2 = max(min((float(bbox.find('ymax').text) - 1) * h_ratio, self.image_size - 1), 0)
            # class index
            cls_ind = self.class_to_ind[obj.find('name').text.lower().strip()]
            # convert (x1, y1, x2, y2) -> (x, y, w, h), not normalized
            boxes = [(x2 + x1) / 2.0, (y2 + y1) / 2.0, x2 - x1, y2 - y1]
            # which cell contains the object's center
            x_ind = int(boxes[0] * self.cell_size / self.image_size)
            y_ind = int(boxes[1] * self.cell_size / self.image_size)
            if label[y_ind, x_ind, 0] == 1:  # this cell already holds an object; later objects centered here are skipped
                continue
            # confidence: this cell contains an object
            label[y_ind, x_ind, 0] = 1
            # the object's bounding box
            label[y_ind, x_ind, 1:5] = boxes
            # p(class): the object's class
            label[y_ind, x_ind, 5 + cls_ind] = 1

        return label, len(objs)
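To see what one entry of the [7,7,25] label holds, and how loss_layer() later turns it into a regression target, here is a small NumPy sketch. It is illustrative only: `encode_box` and `regression_target` are hypothetical helpers. The first follows the cell assignment in load_pascal_annotation(); the second follows the boxes_tran computation in loss_layer(), both for one box that is already scaled to 448x448.

```python
import numpy as np

IMAGE_SIZE, CELL_SIZE, NUM_CLASS = 448, 7, 20


def encode_box(label, x1, y1, x2, y2, cls_ind):
    """Write one box (corners already scaled to 448x448) into a [7, 7, 25] label."""
    box = [(x1 + x2) / 2.0, (y1 + y2) / 2.0, x2 - x1, y2 - y1]  # (x, y, w, h) in pixels
    x_ind = int(box[0] * CELL_SIZE / IMAGE_SIZE)                # column holding the center
    y_ind = int(box[1] * CELL_SIZE / IMAGE_SIZE)                # row holding the center
    if label[y_ind, x_ind, 0] == 1:                             # cell already taken: skip
        return False
    label[y_ind, x_ind, 0] = 1
    label[y_ind, x_ind, 1:5] = box
    label[y_ind, x_ind, 5 + cls_ind] = 1
    return True


def regression_target(label, row, col):
    """Target used in the loss: (x, y) relative to the cell, sqrt of normalized (w, h)."""
    x, y, w, h = label[row, col, 1:5] / IMAGE_SIZE
    return np.array([x * CELL_SIZE - col, y * CELL_SIZE - row, np.sqrt(w), np.sqrt(h)])


label = np.zeros((CELL_SIZE, CELL_SIZE, 5 + NUM_CLASS))
encode_box(label, 100, 200, 300, 300, cls_ind=14)  # a 'person' box centered at (200, 250)
print(np.argwhere(label[..., 0] == 1))             # [[3 3]]
print(regression_target(label, 3, 3))              # x, y offsets 0.125 and 0.90625
```

The center (200, 250) maps to column int(3.125) = 3 and row int(3.906) = 3. A second box centered in the same cell would be dropped, which is the one-object-per-cell limit of YOLOv1.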

4. Parsing the training script train.py

import os
import argparse
import datetime
import tensorflow as tf
import yolo.config as cfg
from yolo.yolo_net import YOLONet
from utils.timer import Timer
from utils.pascal_voc import pascal_voc

slim = tf.contrib.slim


# trains the YOLOv1 model
class Solver(object):
    # solver class used to train the YOLO network

    def __init__(self, net, data):
        self.net = net  # YOLO network
        self.data = data  # VOC data
        self.weights_file = cfg.WEIGHTS_FILE  # weights file
        self.max_iter = cfg.MAX_ITER  # maximum number of iterations
        self.initial_learning_rate = cfg.LEARNING_RATE  # initial learning rate
        self.decay_steps = cfg.DECAY_STEPS  # number of steps between learning-rate decays
        self.decay_rate = cfg.DECAY_RATE  # learning-rate decay factor
        self.staircase = cfg.STAIRCASE
        self.summary_iter = cfg.SUMMARY_ITER  # interval (in steps) between log writes
        self.save_iter = cfg.SAVE_ITER  # interval between model saves
        self.output_dir = os.path.join(
            cfg.OUTPUT_DIR, datetime.datetime.now().strftime('%Y_%m_%d_%H_%M'))  # output folder
        if not os.path.exists(self.output_dir):  # create the output folder if it does not exist
            os.makedirs(self.output_dir)
        self.save_cfg()  # save the contents of cfg

        # variables to save/restore: all global variables that exist at this point
        # (global_step is created below, so it is not part of this list)
        self.variable_to_restore = tf.global_variables()
        self.saver = tf.train.Saver(self.variable_to_restore, max_to_keep=None)
        self.ckpt_file = os.path.join(self.output_dir, 'yolo')  # checkpoint file name
        self.summary_op = tf.summary.merge_all()  # merge all summaries
        self.writer = tf.summary.FileWriter(self.output_dir, flush_secs=60)  # write the summaries to the output folder

        self.global_step = tf.train.create_global_step()  # variable holding the current iteration count
        self.learning_rate = tf.train.exponential_decay(
            self.initial_learning_rate, self.global_step, self.decay_steps,
            self.decay_rate, self.staircase, name='learning_rate')  # exponentially decayed learning rate
        self.optimizer = tf.train.GradientDescentOptimizer(
            learning_rate=self.learning_rate)  # optimizer
        self.train_op = slim.learning.create_train_op(
            self.net.total_loss, self.optimizer, global_step=self.global_step)  # training op

        # with newer TensorFlow versions the following is needed, otherwise an error is raised
        # (allow_growth lets TensorFlow allocate GPU memory on demand)
        config = tf.ConfigProto()
        config.gpu_options.allow_growth = True
        # gpu_options = tf.GPUOptions()
        # config = tf.ConfigProto(gpu_options=gpu_options)
        self.sess = tf.Session(config=config)
        self.sess.run(tf.global_variables_initializer())  # initialize all global variables

        # continue from previously trained weights if a weights file is given
        if self.weights_file is not None:
            print('Restoring weights from: ' + self.weights_file)
            self.saver.restore(self.sess, self.weights_file)

        self.writer.add_graph(self.sess.graph)  # add the graph to the log file

    def train(self):  # training loop
        train_timer = Timer()  # times the training steps
        load_timer = Timer()  # times data loading

        # iterate
        for step in range(1, self.max_iter + 1):
            load_timer.tic()  # start timing data loading for this iteration
            images, labels = self.data.get()  # load one batch of images and labels
            load_timer.toc()  # stop timing data loading
            feed_dict = {self.net.images: images,
                         self.net.labels: labels}  # data to feed

            if step % self.summary_iter == 0:  # write a log entry every summary_iter steps
                if step % (self.summary_iter * 10) == 0:  # print progress every summary_iter * 10 steps
                    train_timer.tic()  # start timing this step
                    summary_str, loss, _ = self.sess.run(
                        [self.summary_op, self.net.total_loss, self.train_op],
                        feed_dict=feed_dict)  # train; global_step increases by 1 after each step
                    train_timer.toc()

                    # the parentheses join the three literals into one string before .format();
                    # without them only the last literal would be formatted and log_str would
                    # stay an unformatted template
                    log_str = ('{} Epoch: {}, Step: {}, Learning rate: {},'
                               ' Loss: {:5.3f}\nSpeed: {:.3f}s/iter,'
                               ' Load: {:.3f}s/iter, Remain: {}').format(
                        datetime.datetime.now().strftime('%m-%d %H:%M:%S'),
                        self.data.epoch,
                        int(step),
                        round(self.learning_rate.eval(session=self.sess), 6),
                        loss,
                        train_timer.average_time,
                        load_timer.average_time,
                        train_timer.remain(step, self.max_iter))
                    print(log_str)
                else:
                    train_timer.tic()  # start timing this step
                    # train; global_step increases by 1 after each step
                    summary_str, _ = self.sess.run(
                        [self.summary_op, self.train_op],
                        feed_dict=feed_dict)
                    train_timer.toc()  # stop timing this step

                self.writer.add_summary(summary_str, step)  # write the summary to file
            else:
                train_timer.tic()
                self.sess.run(self.train_op, feed_dict=feed_dict)
                train_timer.toc()

            if step % self.save_iter == 0:
                print('{} Saving checkpoint file to: {}'.format(
                    datetime.datetime.now().strftime('%m-%d %H:%M:%S'),
                    self.output_dir))
                self.saver.save(
                    self.sess, self.ckpt_file, global_step=self.global_step)

    # save the contents of cfg to a txt file
    def save_cfg(self):
        with open(os.path.join(self.output_dir, 'config.txt'), 'w') as f:
            cfg_dict = cfg.__dict__
            for key in sorted(cfg_dict.keys()):
                if key[0].isupper():
                    cfg_str = '{}: {}\n'.format(key, cfg_dict[key])
                    f.write(cfg_str)


# update the paths in cfg
def update_config_paths(data_dir, weights_file):
    cfg.DATA_PATH = data_dir  # folder holding the datasets
    cfg.PASCAL_PATH = os.path.join(data_dir, 'pascal_voc')  # folder holding the VOC data
    cfg.CACHE_PATH = os.path.join(cfg.PASCAL_PATH, 'cache')  # folder for the generated cache files
    cfg.OUTPUT_DIR = os.path.join(cfg.PASCAL_PATH, 'output')  # folder for the saved models and logs
    cfg.WEIGHTS_DIR = os.path.join(cfg.PASCAL_PATH, 'weights')  # directory holding checkpoint files
    cfg.WEIGHTS_FILE = os.path.join(cfg.WEIGHTS_DIR, weights_file)


def main():
    # command-line arguments
    parser = argparse.ArgumentParser()
    parser.add_argument('--weights', default="YOLO_small.ckpt", type=str)
    parser.add_argument('--data_dir', default="data", type=str)
    parser.add_argument('--threshold', default=0.2, type=float)
    parser.add_argument('--iou_threshold', default=0.5, type=float)
    parser.add_argument('--gpu', default='0', type=str)
    args = parser.parse_args()

    # GPU selection
    if args.gpu is not None:
        cfg.GPU = args.gpu

    # update the paths in cfg only if data_dir differs from the config.
    # Note: with the default --data_dir ('data') this branch is skipped, so
    # cfg.WEIGHTS_FILE stays None and training starts from randomly initialized weights
    if args.data_dir != cfg.DATA_PATH:
        update_config_paths(args.data_dir, args.weights)

    os.environ['CUDA_VISIBLE_DEVICES'] = cfg.GPU  # select the GPU used for training

    yolo = YOLONet()  # YOLOv1 model
    pascal = pascal_voc('train')  # prepare the training set
    solver = Solver(yolo, pascal)  # solver

    print('Start training ...')
    solver.train()  # start training
    print('Done training.')


if __name__ == '__main__':
    main()
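The Solver decays the learning rate with tf.train.exponential_decay. That function's documented rule is lr * decay_rate ^ (global_step / decay_steps), where the division becomes integer division when staircase=True. The pure-Python sketch below re-implements that rule so the schedule can be inspected offline. It is not the TensorFlow code itself, and the values are the ones from config.py.

```python
def exponential_decay(initial_lr, step, decay_steps, decay_rate, staircase=True):
    """initial_lr * decay_rate ** (step / decay_steps); integer exponent when staircase."""
    exponent = step // decay_steps if staircase else step / decay_steps
    return initial_lr * decay_rate ** exponent


# Values from yolo/config.py
LEARNING_RATE, DECAY_STEPS, DECAY_RATE, MAX_ITER = 0.0001, 30000, 0.1, 15000

for step in (0, 10000, MAX_ITER, 30000, 60000):
    print(step, exponential_decay(LEARNING_RATE, step, DECAY_STEPS, DECAY_RATE))
```

With these settings MAX_ITER (15000) is smaller than DECAY_STEPS (30000). The staircase exponent therefore stays 0, and the learning rate remains 0.0001 for the whole run. To see any decay, lower DECAY_STEPS or raise MAX_ITER.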

5. Parsing the test script test.py
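test.py must turn the raw network output into a short list of boxes. It computes class-specific scores (confidence times class probability), keeps those above THRESHOLD, and then removes overlapping duplicates with non-maximum suppression using IOU_THRESHOLD. The NumPy sketch below shows that chain under our own assumptions and is not the repository's code. `filter_and_nms` and `iou_xywh` are hypothetical names. The boxes are assumed to be already decoded to pixels, as interpret_output() does, and the suppression is a plain greedy, class-agnostic NMS.

```python
import numpy as np


def iou_xywh(a, b):
    """IoU of two boxes given as (x_center, y_center, w, h)."""
    ax1, ay1, ax2, ay2 = a[0] - a[2] / 2, a[1] - a[3] / 2, a[0] + a[2] / 2, a[1] + a[3] / 2
    bx1, by1, bx2, by2 = b[0] - b[2] / 2, b[1] - b[3] / 2, b[0] + b[2] / 2, b[1] + b[3] / 2
    iw = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    ih = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = iw * ih
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0


def filter_and_nms(class_probs, scales, boxes, class_names,
                   threshold=0.2, iou_threshold=0.5):
    """class_probs [S,S,C], scales [S,S,B], boxes [S,S,B,4] in pixels.

    Returns (class_name, x, y, w, h, score) tuples, highest score first.
    """
    # class-specific score for every (cell, box, class): scales[..., i] * class_probs[..., j]
    probs = scales[..., :, None] * class_probs[..., None, :]   # [S,S,B,C]
    keep = probs >= threshold
    rows, cols, box_ids, cls_ids = np.nonzero(keep)
    cand_boxes = boxes[rows, cols, box_ids]                    # [N,4]
    cand_scores = probs[keep]                                  # [N], same order as np.nonzero
    order = np.argsort(cand_scores)[::-1]                      # descending score

    suppressed = np.zeros(len(order), dtype=bool)
    results = []
    for a in range(len(order)):
        if suppressed[a]:
            continue
        i = order[a]
        results.append((class_names[cls_ids[i]], *cand_boxes[i].tolist(), float(cand_scores[i])))
        # greedily drop lower-scoring boxes that overlap the kept one too much
        for b in range(a + 1, len(order)):
            if not suppressed[b] and iou_xywh(cand_boxes[i], cand_boxes[order[b]]) > iou_threshold:
                suppressed[b] = True
    return results


# Toy input: S=7, B=2 and three hypothetical classes
S, B = 7, 2
names = ["a", "b", "c"]
class_probs = np.zeros((S, S, 3))
scales = np.zeros((S, S, B))
boxes = np.zeros((S, S, B, 4))
class_probs[3, 3] = [0.9, 0.05, 0.05]
scales[3, 3] = [0.8, 0.6]
boxes[3, 3, 0] = [224, 224, 100, 100]
boxes[3, 3, 1] = [230, 224, 100, 100]   # heavy overlap with box 0
class_probs[0, 0] = [0.0, 1.0, 0.0]
scales[0, 0] = [0.5, 0.1]
boxes[0, 0, 0] = [32, 32, 40, 40]

detections = filter_and_nms(class_probs, scales, boxes, names)
for d in detections:
    print(d)
```

In the toy input, cell (3, 3) yields two class-"a" candidates with scores 0.72 and 0.54. Their IoU is 9400 / 10600, about 0.89, so the weaker one is suppressed. The class-"b" box in cell (0, 0) survives with score 0.5.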

import os

import cv2

import argparse

import numpy as np

import tensorflow as tf

import yolo.config as cfg

from yolo.yolo_net import YOLONet

from utils.timer import Timer

# 用于网络测试

class Detector(object):

def __init__(self, net, weight_file):

self.net = net # yolov1网络

self.weights_file = weight_file # 检查点文件路径

self.classes = cfg.CLASSES # voc数据集的类别名

self.num_class = len(self.classes) # voc类别数

self.image_size = cfg.IMAGE_SIZE # 输入图像大小

self.cell_size = cfg.CELL_SIZE # 分成多个网格

self.boxes_per_cell = cfg.BOXES_PER_CELL # 每个网格里面有多少个边界框 B=2

self.threshold = cfg.THRESHOLD # 阈值参数

self.iou_threshold = cfg.IOU_THRESHOLD # IOU阈值参数

# # 将网络输出分离为类别和置信度以及边界框大小,输出维度为7*7*20 + 7*7*2+7*7*2*4=1470

self.boundary1 = self.cell_size * self.cell_size * self.num_class # 7*7*20

self.boundary2 = self.boundary1 +\

self.cell_size * self.cell_size * self.boxes_per_cell # 7*7*20 + 7*7*2

self.sess = tf.Session() # 开启会话

self.sess.run(tf.global_variables_initializer()) # 初始化全局变量

print('Restoring weights from: ' + self.weights_file)

self.saver = tf.train.Saver()

self.saver.restore(self.sess, self.weights_file) # 恢复训练得到的模型

# 将检测结果画到对应的图片上

def draw_result(self, img, result):

for i in range(len(result)): # 遍历所有的检测结果

x = int(result[i][1]) # x_center

y = int(result[i][2]) # y_center

w = int(result[i][3] / 2) # w/2

h = int(result[i][4] / 2) # h/2

# 绘制矩形框(目标边界框)矩形左上角,矩形右下角

cv2.rectangle(img, (x - w, y - h), (x + w, y + h), (0, 255, 0), 2)

# 绘制矩形框,用于存放类别名称,使用灰度填充

cv2.rectangle(img, (x - w, y - h - 20),

(x + w, y - h), (125, 125, 125), -1)

lineType = cv2.LINE_AA if cv2.__version__ > '3' else cv2.CV_AA # 线型

cv2.putText(

img, result[i][0] + ' : %.2f' % result[i][5],

(x - w + 5, y - h - 7), cv2.FONT_HERSHEY_SIMPLEX, 0.5,

(0, 0, 0), 1, lineType) # 绘制文本信息,写上类别名和置信度

def detect(self, img):

img_h, img_w, _ = img.shape # 获取图片的宽和高

inputs = cv2.resize(img, (self.image_size, self.image_size)) # 图片缩放 [448,448,3]

inputs = cv2.cvtColor(inputs, cv2.COLOR_BGR2RGB).astype(np.float32) # 颜色转换 BGR -> RGB

inputs = (inputs / 255.0) * 2.0 - 1.0 # 归一化处理,[-1.0,1.0]

inputs = np.reshape(inputs, (1, self.image_size, self.image_size, 3)) # reshape [1,448,448,3]

result = self.detect_from_cvmat(inputs)[0] # 获取网络输出第一项(即第一张图片) [1,1470]

# 对检测结果的边界框进行缩放处理,一张图片可以有多个边界框

for i in range(len(result)):

# x_center,y_center,w,h都是真实值,分别表示预测边界框的中心坐标,宽,高

result[i][1] *= (1.0 * img_w / self.image_size) # x_center

result[i][2] *= (1.0 * img_h / self.image_size) # y_center

result[i][3] *= (1.0 * img_w / self.image_size) # w

result[i][4] *= (1.0 * img_h / self.image_size) # h

return result

```python
    # Run the YOLO network to perform detection
    def detect_from_cvmat(self, inputs):
        """
        inputs: input batch, shape [None,448,448,3]
        return: detection results; one element per input image, each holding that image's bounding boxes
        """
        # Raw output of the last layer, before any activation, shape = [None,1470]
        net_output = self.sess.run(self.net.logits,
                                   feed_dict={self.net.images: inputs})
        results = []
        # Decode the network output row by row (one row per image)
        for i in range(net_output.shape[0]):
            results.append(self.interpret_output(net_output[i]))
        return results  # decoded results
```

```python
    def interpret_output(self, output):
        """
        Decode the output of the YOLOv1 network.
        args:
            output: one row of the network output, shape [1470,]
                0 : 7*7*20                  predicted class probabilities
                7*7*20 : 7*7*20 + 7*7*2     predicted confidences, i.e. the IOU between the predicted box and the ground-truth box
                7*7*20 + 7*7*2 : 1470       predicted boxes: the center is relative to its grid cell, and the square roots
                                            of width and height are relative to the whole image (normalized)
        return:
            result: list of detected boxes, one element per box, each
                (class name, x_center, y_center, w, h, score), where the score is the product of the
                confidence output by the network and the probability of the predicted class
        """
        probs = np.zeros((self.cell_size, self.cell_size,
                          self.boxes_per_cell, self.num_class))  # shape [7,7,2,20]
        class_probs = np.reshape(
            output[0:self.boundary1],
            (self.cell_size, self.cell_size, self.num_class))  # class probabilities [7,7,20]
        scales = np.reshape(
            output[self.boundary1:self.boundary2],
            (self.cell_size, self.cell_size, self.boxes_per_cell))  # confidences [7,7,2]
        boxes = np.reshape(
            output[self.boundary2:],
            (self.cell_size, self.cell_size, self.boxes_per_cell, 4))  # boxes [7,7,2,4]
```
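interpret_output relies on the 1470 outputs being laid out as three consecutive, row-major blocks. The sketch below, using the config.py values S=7, B=2, C=20, fills a dummy output with its own flat indices so the split points are easy to see; split_output is a hypothetical helper, not part of the repository.

```python
import numpy as np

S, B, C = 7, 2, 20                 # cell_size, boxes_per_cell, num_class (config.py values)
boundary1 = S * S * C              # 980: end of the class probabilities
boundary2 = boundary1 + S * S * B  # 1078: end of the confidences


def split_output(output):
    """Split one 1470-long output row the same way interpret_output() does."""
    class_probs = output[:boundary1].reshape(S, S, C)      # [7, 7, 20]
    scales = output[boundary1:boundary2].reshape(S, S, B)  # [7, 7, 2]
    boxes = output[boundary2:].reshape(S, S, B, 4)         # [7, 7, 2, 4]
    return class_probs, scales, boxes


# Use each element's flat index as its value so the layout is easy to inspect
output = np.arange(S * S * (C + 5 * B), dtype=np.float32)  # 7*7*30 = 1470 values
class_probs, scales, boxes = split_output(output)
print(class_probs[0, 1, 0], scales[0, 0, 0], boxes[6, 6, 1, 3])  # 20.0 980.0 1469.0
```

The last box block holds 7*7*2*4 = 392 values, which is why 980 + 98 + 392 adds up to exactly 1470.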

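```python
        offset = np.array(  # [14,7], every row is [0,1,2,3,4,5,6]
            [np.arange(self.cell_size)] * self.cell_size * self.boxes_per_cell)
        offset = np.transpose(  # [7,7,2], offset[row, col, b] == col; every offset[row] is [[0,0],[1,1],...,[6,6]]
            np.reshape(
                offset,
                [self.boxes_per_cell, self.cell_size, self.cell_size]),
            (1, 2, 0))

        # The predicted center is relative to its grid cell: add the cell's column index (x)
        # and row index (y), then divide by cell_size so it is relative to the whole image
        boxes[:, :, :, 0] += offset
        boxes[:, :, :, 1] += np.transpose(offset, (1, 0, 2))
        boxes[:, :, :, :2] = 1.0 * boxes[:, :, :, 0:2] / self.cell_size
        # The network predicts sqrt(w) and sqrt(h) relative to the whole image; square them
        boxes[:, :, :, 2:] = np.square(boxes[:, :, :, 2:])
        boxes *= self.image_size  # convert to pixel coordinates of the 448x448 input (no longer normalized)
```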
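To see what the offset array does, here is a minimal standalone sketch of the same decoding, assuming each box is stored as (x offset in cell, y offset in cell, sqrt(w), sqrt(h)) as the docstring describes. decode_boxes is a hypothetical name; the test box sits exactly in the middle of the cell at row 3, column 5.

```python
import numpy as np

S, B, IMAGE_SIZE = 7, 2, 448  # cell_size, boxes_per_cell, image_size (config.py values)

# Same construction as interpret_output(): offset[row, col, b] == col
offset = np.transpose(
    np.reshape(np.array([np.arange(S)] * S * B), (B, S, S)), (1, 2, 0))


def decode_boxes(raw):
    """raw: [S, S, B, 4] with (x in cell, y in cell, sqrt(w), sqrt(h)).

    Returns [S, S, B, 4] with (x_center, y_center, w, h) in pixels of the 448x448 input.
    """
    boxes = raw.astype(np.float64)                    # astype copies, so raw is left untouched
    boxes[..., 0] += offset                           # + column index
    boxes[..., 1] += np.transpose(offset, (1, 0, 2))  # + row index
    boxes[..., :2] /= S                               # now relative to the whole image
    boxes[..., 2:] = np.square(boxes[..., 2:])        # undo the square root on w and h
    return boxes * IMAGE_SIZE


raw = np.zeros((S, S, B, 4))
raw[3, 5, 0] = [0.5, 0.5, 0.5, 0.25]  # middle of the cell in row 3, column 5
print(decode_boxes(raw)[3, 5, 0])      # approximately (352, 224, 112, 28)
```

With S=7 each cell is 448/7 = 64 pixels wide, so the middle of the cell at row 3, column 5 lands at (5.5*64, 3.5*64) = (352, 224), and the square roots 0.5 and 0.25 become widths of 0.25*448 = 112 and 0.0625*448 = 28 pixels.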

```python
        # Loop over the boxes of each cell
        for i in range(self.boxes_per_cell):
            # Loop over the classes
            for j in range(self.num_class):
                # At test time, multiply the conditional class probability by the box confidence; the result
                # encodes both the probability that class j appears in box i and how well the box fits the object
                probs[:, :, i, j] = np.multiply(
                    class_probs[:, :, j], scales[:, :, i])

        # [7,7,2,20] boolean mask: [:,:,i,j] is True if box i's score for class j is >= threshold
        filter_mat_probs = np.array(probs >= self.threshold, dtype='bool')
        # Indices of the True entries: a tuple of 4 arrays (row, col, box, class), each of length n,
        # the number of (box, class) pairs that passed the threshold
        filter_mat_boxes = np.nonzero(filter_mat_probs)
        # Boxes of the detections [n,4]
        boxes_filtered = boxes[filter_mat_boxes[0],
                               filter_mat_boxes[1], filter_mat_boxes[2]]
        # Scores of the detections [n,], in the same order as np.nonzero
        probs_filtered = probs[filter_mat_probs]
        # Class index of each detection [n,]: the 4th index array returned by np.nonzero,
        # so every score stays paired with its own class even when one box passes for several classes
        classes_num_filtered = filter_mat_boxes[3]

        # Sort by score in descending order
        argsort = np.array(np.argsort(probs_filtered))[::-1]
        boxes_filtered = boxes_filtered[argsort]
        probs_filtered = probs_filtered[argsort]
        classes_num_filtered = classes_num_filtered[argsort]
```
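The double loop that computes the class-specific scores is an outer product per cell, so it can also be written with NumPy broadcasting. In this sketch the threshold of 0.2 is only an illustrative value (the code above reads self.threshold from its config, which is not shown in this section), and class_specific_scores and threshold_and_sort are hypothetical helper names.

```python
import numpy as np


def class_specific_scores(class_probs, scales):
    """Vectorized form of the double loop in interpret_output().

    class_probs: [S, S, C], scales: [S, S, B] -> probs: [S, S, B, C],
    with probs[r, c, b, k] = class_probs[r, c, k] * scales[r, c, b].
    """
    return class_probs[:, :, np.newaxis, :] * scales[:, :, :, np.newaxis]


def threshold_and_sort(probs, threshold=0.2):
    """Keep (cell, box, class) entries with score >= threshold, sorted by descending score."""
    mask = probs >= threshold
    rows, cols, box_ids, class_ids = np.nonzero(mask)
    scores = probs[mask]                  # boolean indexing uses the same C order as np.nonzero
    order = np.argsort(scores)[::-1]
    return rows[order], cols[order], box_ids[order], class_ids[order], scores[order]


probs = np.zeros((7, 7, 2, 20))
probs[4, 4, 1, 7] = 0.5
probs[1, 2, 0, 3] = 0.9
probs[0, 0, 0, 0] = 0.1                   # below the threshold, dropped
rows, cols, box_ids, class_ids, scores = threshold_and_sort(probs)
print(class_ids.tolist(), scores.tolist())  # [3, 7] [0.9, 0.5]
```

Because boolean indexing and np.nonzero walk the array in the same order, each score lines up with its row, column, box and class indices without any extra bookkeeping.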

```python
        # Non-maximum suppression over all n boxes (boxes are already sorted by descending score)
        for i in range(len(boxes_filtered)):
            if probs_filtered[i] == 0:
                continue
            for j in range(i + 1, len(boxes_filtered)):
                # If the IOU of boxes i and j exceeds the threshold, suppress the lower-scoring box j
                if self.iou(boxes_filtered[i], boxes_filtered[j]) > self.iou_threshold:
                    probs_filtered[j] = 0.0

        # Keep only the boxes that survived non-maximum suppression
        filter_iou = np.array(probs_filtered > 0.0, dtype='bool')
        boxes_filtered = boxes_filtered[filter_iou]
        probs_filtered = probs_filtered[filter_iou]
        classes_num_filtered = classes_num_filtered[filter_iou]
```
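The NMS step can be isolated into a small function. The sketch below is a plain greedy, class-agnostic NMS like the loop above (boxes of different classes can suppress each other); iou_center and greedy_nms are hypothetical names, and iou_threshold=0.5 is an assumed default rather than a value taken from this section.

```python
import numpy as np


def iou_center(a, b):
    """IoU of two boxes given as (x_center, y_center, w, h), same formula as Detector.iou."""
    overlap_w = min(a[0] + a[2] / 2, b[0] + b[2] / 2) - max(a[0] - a[2] / 2, b[0] - b[2] / 2)
    overlap_h = min(a[1] + a[3] / 2, b[1] + b[3] / 2) - max(a[1] - a[3] / 2, b[1] - b[3] / 2)
    inter = 0.0 if overlap_w < 0 or overlap_h < 0 else overlap_w * overlap_h
    return inter / (a[2] * a[3] + b[2] * b[3] - inter)


def greedy_nms(boxes, scores, iou_threshold=0.5):
    """Class-agnostic greedy NMS. Returns the indices of the kept boxes, highest score first."""
    order = np.argsort(scores)[::-1]          # indices sorted by descending score
    suppressed = np.zeros(len(boxes), dtype=bool)
    keep = []
    for pos, i in enumerate(order):
        if suppressed[i]:
            continue
        keep.append(int(i))
        for j in order[pos + 1:]:             # only lower-scoring boxes can be suppressed
            if not suppressed[j] and iou_center(boxes[i], boxes[j]) > iou_threshold:
                suppressed[j] = True
    return keep


boxes = [(50, 50, 20, 20), (52, 50, 20, 20), (150, 150, 20, 20)]
scores = np.array([0.9, 0.8, 0.7])
print(greedy_nms(boxes, scores))  # [0, 2]
```

A per-class variant would only compare boxes that share the same class index; the loop in this section does not make that distinction.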

```python
        result = []
        # Build one entry per remaining box
        for i in range(len(boxes_filtered)):
            result.append(
                [self.classes[classes_num_filtered[i]],  # class name
                 boxes_filtered[i][0],  # x_center
                 boxes_filtered[i][1],  # y_center
                 boxes_filtered[i][2],  # w
                 boxes_filtered[i][3],  # h
                 probs_filtered[i]])  # score
        return result
```

```python
    def iou(self, box1, box2):  # IOU of two boxes given as (x_center, y_center, w, h)
        tb = min(box1[0] + 0.5 * box1[2], box2[0] + 0.5 * box2[2]) - \
            max(box1[0] - 0.5 * box1[2], box2[0] - 0.5 * box2[2])  # width of the overlap (x direction)
        lr = min(box1[1] + 0.5 * box1[3], box2[1] + 0.5 * box2[3]) - \
            max(box1[1] - 0.5 * box1[3], box2[1] - 0.5 * box2[3])  # height of the overlap (y direction)
        inter = 0 if tb < 0 or lr < 0 else tb * lr  # no overlap -> intersection is 0
        return inter / (box1[2] * box1[3] + box2[2] * box2[3] - inter)  # intersection / union
```
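Written out as a formula, the iou method computes the standard intersection over union for two boxes in center format $(x_k, y_k, w_k, h_k)$, $k = 1, 2$:

$$
\begin{aligned}
w_{\cap} &= \min\left(x_1 + \tfrac{w_1}{2},\ x_2 + \tfrac{w_2}{2}\right) - \max\left(x_1 - \tfrac{w_1}{2},\ x_2 - \tfrac{w_2}{2}\right)\\
h_{\cap} &= \min\left(y_1 + \tfrac{h_1}{2},\ y_2 + \tfrac{h_2}{2}\right) - \max\left(y_1 - \tfrac{h_1}{2},\ y_2 - \tfrac{h_2}{2}\right)\\
I &= \begin{cases} w_{\cap}\, h_{\cap} & \text{if } w_{\cap} \ge 0 \text{ and } h_{\cap} \ge 0\\ 0 & \text{otherwise}\end{cases}\\
\mathrm{IoU} &= \frac{I}{w_1 h_1 + w_2 h_2 - I}
\end{aligned}
$$

As a worked check with made-up boxes $(50, 50, 20, 20)$ and $(60, 60, 20, 20)$: $w_{\cap} = \min(60, 70) - \max(40, 50) = 10$, likewise $h_{\cap} = 10$, so $I = 100$ and $\mathrm{IoU} = 100 / (400 + 400 - 100) = 1/7 \approx 0.143$.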

```python
    def camera_detector(self, cap, wait=10):
        """Open the camera and run real-time detection"""
        detect_timer = Timer()  # detection timer
        ret, frame = cap.read()  # read the first frame
        while ret:
            detect_timer.tic()  # start timing
            result = self.detect(frame)
            detect_timer.toc()  # stop timing
            print('Average detecting time: {:.3f}s'.format(
                detect_timer.average_time))
            self.draw_result(frame, result)  # draw the boxes and their labels
            # Display
            cv2.imshow('Camera', frame)
            cv2.waitKey(wait)
            ret, frame = cap.read()  # read the next frame; the loop ends when no frame is returned
```

```python
    def image_detector(self, imname, wait=0):
        """Run detection on an image file"""
        detect_timer = Timer()  # timer
        image = cv2.imread(imname)  # read the image (BGR)
        detect_timer.tic()  # start timing
        result = self.detect(image)  # run detection and get the results
        detect_timer.toc()  # stop timing
        print('Average detecting time: {:.3f}s'.format(
            detect_timer.average_time))
        self.draw_result(image, result)
        cv2.imshow('Image', image)
        cv2.waitKey(wait)  # wait=0 keeps the window open until a key is pressed
```

```python
def main():
    # Command-line arguments
    parser = argparse.ArgumentParser()
    parser.add_argument('--weights', default="YOLO_small.ckpt", type=str)  # checkpoint file of the trained model
    parser.add_argument('--weight_dir', default='weights', type=str)
    parser.add_argument('--data_dir', default="data", type=str)
    parser.add_argument('--gpu', default='0', type=str)
    args = parser.parse_args()

    os.environ['CUDA_VISIBLE_DEVICES'] = args.gpu  # select the GPU used for testing

    yolo = YOLONet(False)  # build the YOLOv1 network for inference (not training)
    weight_file = os.path.join(args.data_dir, args.weight_dir, args.weights)  # weights path, data/weights/YOLO_small.ckpt by default
    detector = Detector(yolo, weight_file)

    # detect from camera
    # cap = cv2.VideoCapture(-1)
    # detector.camera_detector(cap)

    # detect from image file
    imname = 'test/person.jpg'
    detector.image_detector(imname)


if __name__ == '__main__':
    main()
```

That covers my understanding of the YOLOv1 code. If any of the comments are inaccurate, please point them out!