[Natural Language Processing] [Text Generation] Different Decoding Methods for Language Generation in Transformers

Original article: https://huggingface.co/blog/how-to-generate

1. Introduction

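In recent years, with the rise of large pretrained language models, interest in open-ended language generation has grown. Besides improvements to the Transformer architecture and massive amounts of unsupervised training data, better decoding methods have also played an important role in the impressive results of open-ended generation. This article gives a brief overview of different decoding methods and shows how to use them with the transformers library.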

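Everything below applies to autoregressive language generation. In short, autoregressive language generation rests on the assumption that the probability distribution of a word sequence can be decomposed into a product of conditional next-word distributions: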

$$P(w_{1:T} \mid W_0) = \prod_{t=1}^{T} P(w_t \mid w_{1:t-1}, W_0), \quad \text{with } w_{1:0} = \emptyset \tag{1}$$

where $W_0$ is the initial context. The length $T$ of the word sequence is usually determined on the fly: it corresponds to the time step $t=T$ at which the EOS token is generated from $P(w_t \mid w_{1:t-1}, W_0)$.
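As a rough illustration of the factorization in equation (1), the sketch below multiplies next-word probabilities taken from a small hand-written table. The names `COND_PROBS` and `sequence_probability` are hypothetical and introduced only for this example. The values 0.5, 0.4, and 0.9 match numbers quoted in the greedy search and beam search examples later in the article; all other values are made up so that each row sums to 1.

```python
# Toy conditional distributions P(next word | history).
# Assumption: only 0.5 (nice), 0.4 (dog), 0.4 (woman) and 0.9 (has) follow
# the article's example; the remaining numbers are invented for illustration.
COND_PROBS = {
    ("The",): {"nice": 0.5, "dog": 0.4, "car": 0.1},
    ("The", "nice"): {"woman": 0.4, "house": 0.3, "guy": 0.3},
    ("The", "dog"): {"has": 0.9, "runs": 0.05, "and": 0.05},
}


def sequence_probability(context, words):
    """Return P(words | context) as a product of next-word probabilities."""
    prob = 1.0
    history = tuple(context)
    for word in words:
        # One factor P(w_t | w_{1:t-1}, W_0) of the product in equation (1).
        prob *= COND_PROBS[history][word]
        history += (word,)
    return prob


print(sequence_probability(["The"], ["nice", "woman"]))  # 0.5 * 0.4
print(sequence_probability(["The"], ["dog", "has"]))     # 0.4 * 0.9
```

A real language model computes each factor with a forward pass instead of a lookup table, and implementations usually sum log-probabilities rather than multiplying raw probabilities to avoid numerical underflow.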

This article covers the most prominent decoding methods in use today: greedy search, beam search, Top-K sampling, and Top-p sampling.

2. Loading the Model

import torch

from transformers import GPT2LMHeadModel, GPT2Tokenizer

model = GPT2LMHeadModel.from_pretrained("gpt2")

tokenizer = GPT2Tokenizer.from_pretrained("gpt2")

model = model.to("cuda")

3. Greedy Search

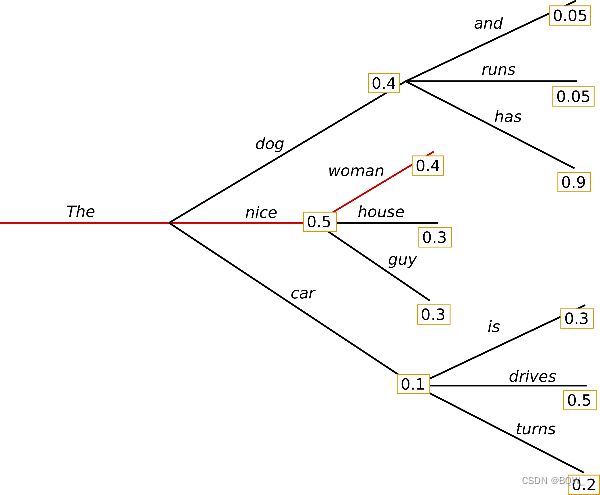

Greedy search simply selects the word with the highest probability as the next word: $w_t = \text{argmax}_w P(w \mid w_{1:t-1})$. The figure above sketches greedy search.

Starting from the word The, the algorithm greedily picks the most probable next word, nice, and so on. The resulting word sequence (The, nice, woman) has an overall probability of $0.5 \times 0.4 = 0.2$.
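The procedure described above can be sketched on a toy next-word table. This is a minimal illustration, not how transformers implements it: `NEXT_WORD_PROBS` and `greedy_search` are hypothetical names, and apart from 0.5, 0.4, and 0.9 the probabilities are invented for the example.

```python
# Toy next-word table keyed by the words generated so far.
# Assumption: values other than nice=0.5, dog=0.4, woman=0.4 and has=0.9
# are made up so that every row sums to 1.
NEXT_WORD_PROBS = {
    ("The",): {"nice": 0.5, "dog": 0.4, "car": 0.1},
    ("The", "nice"): {"woman": 0.4, "house": 0.3, "guy": 0.3},
    ("The", "dog"): {"has": 0.9, "runs": 0.05, "and": 0.05},
    ("The", "car"): {"drives": 0.5, "is": 0.3, "turns": 0.2},
}


def greedy_search(context, steps):
    """Pick the single most probable next word at every step."""
    sequence = tuple(context)
    total_prob = 1.0
    for _ in range(steps):
        candidates = NEXT_WORD_PROBS[sequence]
        # argmax over the next-word distribution
        word = max(candidates, key=candidates.get)
        total_prob *= candidates[word]
        sequence += (word,)
    return sequence, total_prob


sequence, prob = greedy_search(["The"], steps=2)
print(sequence, prob)  # ('The', 'nice', 'woman') with probability 0.5 * 0.4
```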

input_ids = tokenizer.encode('I enjoy walking with my cute dog', return_tensors='pt').to("cuda")

greedy_output = model.generate(input_ids, max_length=50)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(greedy_output[0], skip_special_tokens=True))

This produces:

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog, but I'm not sure if I'll ever be able to walk with my dog. I'm not sure if I'll ever be able to walk with my dog.

I'm not sure if I'll

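The words generated from the context are reasonable, but the model quickly starts repeating itself! This is a very common problem in language generation, and even more so with greedy search and beam search.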

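The main drawback of greedy search is that it misses high-probability words hidden behind a low-probability word. In the figure above, the word has has the highest conditional probability, 0.9, but it sits behind dog, which has only the second-highest probability, so greedy search misses the word sequence (The, dog, has). Beam search alleviates this problem to some extent.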

4. Beam Search

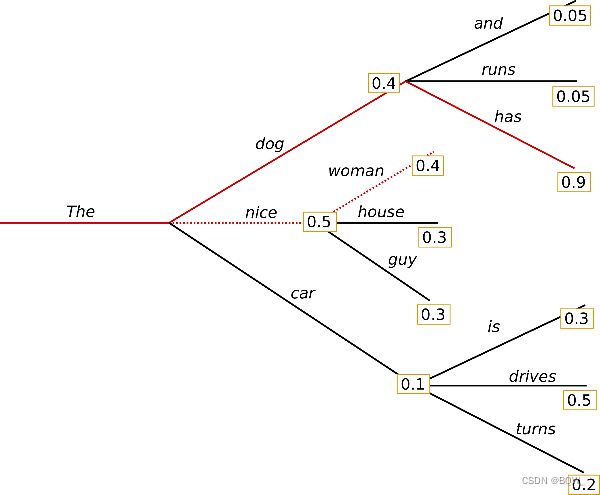

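Beam search reduces the risk of missing hidden high-probability word sequences by keeping the num_beams most likely hypotheses at each time step and finally choosing the hypothesis with the highest overall probability. The figure above illustrates this with num_beams=2: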

At time step 1, besides the most likely hypothesis (The, nice), beam search also keeps track of the second most likely one, (The, dog). At time step 2, beam search finds that the word sequence (The, dog, has), with probability $0.4 \times 0.9 = 0.36$, is more likely than (The, nice, woman), with probability 0.2. Beam search typically finds an output sequence with a higher probability than greedy search does, but it is not guaranteed to find the most likely sequence.
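The two time steps just described can be reproduced with a small sketch. It assumes a hypothetical toy table `NEXT_WORD_PROBS` (only 0.5, 0.4, and 0.9 follow the article's example; the other values are made up) and a hypothetical helper `beam_search`. It works on raw probabilities for readability, whereas practical implementations score hypotheses with log-probabilities.

```python
# Toy next-word table keyed by the words generated so far.
# Assumption: values other than nice=0.5, dog=0.4, woman=0.4 and has=0.9
# are invented for illustration.
NEXT_WORD_PROBS = {
    ("The",): {"nice": 0.5, "dog": 0.4, "car": 0.1},
    ("The", "nice"): {"woman": 0.4, "house": 0.3, "guy": 0.3},
    ("The", "dog"): {"has": 0.9, "runs": 0.05, "and": 0.05},
    ("The", "car"): {"drives": 0.5, "is": 0.3, "turns": 0.2},
}


def beam_search(context, steps, num_beams):
    """Keep the num_beams most probable hypotheses after every step."""
    beams = [(tuple(context), 1.0)]
    for _ in range(steps):
        expanded = []
        for sequence, prob in beams:
            # Extend each surviving hypothesis by every possible next word.
            for word, p in NEXT_WORD_PROBS[sequence].items():
                expanded.append((sequence + (word,), prob * p))
        # Prune: keep only the highest-scoring hypotheses.
        expanded.sort(key=lambda item: item[1], reverse=True)
        beams = expanded[:num_beams]
    return beams


for sequence, prob in beam_search(["The"], steps=2, num_beams=2):
    print(sequence, prob)
# The best hypothesis is ('The', 'dog', 'has') with probability 0.4 * 0.9,
# followed by ('The', 'nice', 'woman') with probability 0.5 * 0.4.
```

With `num_beams=1` the same function reduces to greedy search, which makes the relationship between the two methods easy to check.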

Here is beam search in transformers. Set num_beams > 1 and early_stopping=True so that generation finishes once all beam hypotheses have reached the EOS token.

beam_output = model.generate(

input_ids,

max_length=50,

num_beams=5,

early_stopping=True

)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(beam_output[0], skip_special_tokens=True))

This produces:

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog, but I'm not sure if I'll ever be able to walk with him again.

I'm not sure if I'll ever be able to walk with him again. I'm not sure if I'll

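The result is more fluent, but the output still contains repeated word sequences. A simple remedy is to introduce an n-gram penalty. The most common n-gram penalty ensures that no n-gram appears twice by setting to 0 the probability of any next word that would complete an n-gram that has already been seen. In transformers, set no_repeat_ngram_size=2: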

beam_output = model.generate(

input_ids,

max_length=50,

num_beams=5,

no_repeat_ngram_size=2,

early_stopping=True

)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(beam_output[0], skip_special_tokens=True))

This produces:

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog, but I'm not sure if I'll ever be able to walk with him again.

I've been thinking about this for a while now, and I think it's time for me to take a break

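That looks much better: the repetition is gone. Still, n-gram penalties must be used with care. An article about the city New York should not use a 2-gram penalty, because otherwise the name of the city would appear only once in the whole text.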
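The idea behind the n-gram penalty, and the New York caveat, can be sketched in a few lines. The helper `banned_next_tokens` is a hypothetical name introduced for this example and works on plain word lists; it is an assumption about how such a penalty can be computed, not the transformers implementation.

```python
def banned_next_tokens(tokens, ngram_size):
    """Return the words that would complete an n-gram already present in tokens."""
    if ngram_size < 1 or len(tokens) < ngram_size:
        return set()
    prefix_len = ngram_size - 1
    # The last (n-1) generated words form the prefix of the next n-gram.
    current_prefix = tuple(tokens[len(tokens) - prefix_len:])
    banned = set()
    for start in range(len(tokens) - ngram_size + 1):
        ngram = tuple(tokens[start:start + ngram_size])
        if ngram[:prefix_len] == current_prefix:
            # Generating ngram[-1] now would repeat this n-gram.
            banned.add(ngram[-1])
    return banned


history = "I visited New York and then left New".split()
print(banned_next_tokens(history, ngram_size=2))  # {'York'}
print(banned_next_tokens(history, ngram_size=3))  # set()
```

With a 2-gram penalty the word York can no longer follow New, which is exactly why the city name would appear only once; a 3-gram penalty leaves it available in this context.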

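Another important feature of beam search is that the top beams can be compared after generation, so that the one that best fits the purpose can be chosen. In transformers, simply set the parameter num_return_sequences to the number of highest-scoring beams to return. Make sure that num_return_sequences <= num_beams.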

beam_outputs = model.generate(

input_ids,

max_length=50,

num_beams=5,

no_repeat_ngram_size=2,

num_return_sequences=5,

early_stopping=True

)

print("Output:\n" + 100 * '-')

for i, beam_output in enumerate(beam_outputs):

    print("{}: {}".format(i, tokenizer.decode(beam_output, skip_special_tokens=True)))

This produces:

Output:

----------------------------------------------------------------------------------------------------

0: I enjoy walking with my cute dog, but I'm not sure if I'll ever be able to walk with him again.

I've been thinking about this for a while now, and I think it's time for me to take a break

1: I enjoy walking with my cute dog, but I'm not sure if I'll ever be able to walk with him again.

I've been thinking about this for a while now, and I think it's time for me to get back to

2: I enjoy walking with my cute dog, but I'm not sure if I'll ever be able to walk with her again.

I've been thinking about this for a while now, and I think it's time for me to take a break

3: I enjoy walking with my cute dog, but I'm not sure if I'll ever be able to walk with her again.

I've been thinking about this for a while now, and I think it's time for me to get back to

4: I enjoy walking with my cute dog, but I'm not sure if I'll ever be able to walk with him again.

I've been thinking about this for a while now, and I think it's time for me to take a step

As can be seen, the five beam hypotheses differ only marginally from one another.

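Several reasons have recently been put forward for why beam search may not be the best choice for open-ended generation:

- Beam search works well in tasks such as machine translation or summarization, where the length of the desired output is more or less predictable. In open-ended generation, such as dialogue and story generation, the desired output length can vary greatly.

- Beam search suffers heavily from repetitive generation. This is especially hard to control with n-gram or other penalties in story generation, because finding a good balance between forcing "no repetition" and allowing specific n-grams to repeat requires a lot of fine-tuning.

- High-quality human language does not follow a distribution of high-probability next words. In other words, as humans we want generated text to be interesting rather than boring and predictable.

(Figure: comparison of how interesting beam search output is versus human-written text)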

5. Sampling

In its most basic form, sampling means randomly picking the next word $w_t$ according to its conditional probability distribution:

$$w_t \sim P(w \mid w_{1:t-1})$$

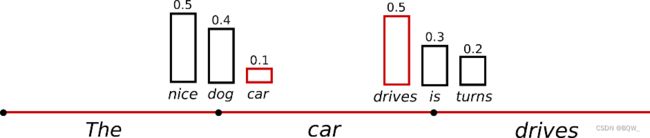

Continuing the earlier example, the figure above visualizes language generation with sampling.

Clearly, language generation with sampling is no longer deterministic. The word car is sampled from the conditional distribution $P(w \mid \text{The})$, and drives is then sampled from $P(w \mid \text{The}, \text{car})$.
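A minimal sketch of this sampling step, using only the Python standard library, is shown below. The table `NEXT_WORD_PROBS` and the function `sample_sequence` are hypothetical, and most of the probabilities are invented for illustration. Because the result depends on the random number generator, no particular output is claimed.

```python
import random

# Toy next-word table keyed by the words generated so far.
# Assumption: values other than nice=0.5, dog=0.4, woman=0.4 and has=0.9
# are made up for this example.
NEXT_WORD_PROBS = {
    ("The",): {"nice": 0.5, "dog": 0.4, "car": 0.1},
    ("The", "nice"): {"woman": 0.4, "house": 0.3, "guy": 0.3},
    ("The", "dog"): {"has": 0.9, "runs": 0.05, "and": 0.05},
    ("The", "car"): {"drives": 0.5, "is": 0.3, "turns": 0.2},
}


def sample_sequence(context, steps, rng):
    """Draw each next word at random according to its conditional probability."""
    sequence = tuple(context)
    for _ in range(steps):
        candidates = NEXT_WORD_PROBS[sequence]
        words = list(candidates)
        weights = [candidates[w] for w in words]
        # random.choices draws proportionally to the given weights.
        word = rng.choices(words, weights=weights, k=1)[0]
        sequence += (word,)
    return sequence


rng = random.Random(0)  # fixed seed so that repeated runs give the same draws
for _ in range(5):
    print(sample_sequence(["The"], steps=2, rng=rng))
```

Low-probability continuations such as (The, car, drives) can now appear, which greedy search and beam search with a small beam would never produce on this table.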

In transformers, set do_sample=True and disable Top-K sampling with top_k=0:

torch.manual_seed(0)

sample_output = model.generate(

input_ids,

do_sample=True,

max_length=50,

top_k=0

)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(sample_output[0], skip_special_tokens=True))

This produces:

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog. He just gave me a whole new hand sense."

But it seems that the dogs have learned a lot from teasing at the local batte harness once they take on the outside.

"I take

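The text seems fine at first glance, but on closer inspection it is not very coherent. Phrases such as new hand sense and local batte harness are very odd and do not sound like they were written by a human. This is the main problem with sampling word sequences: the model often produces incoherent gibberish.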

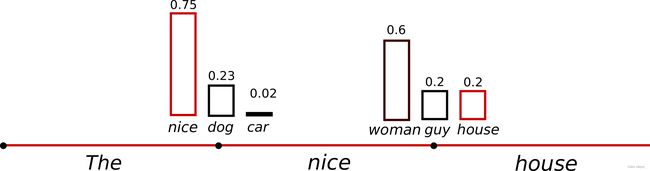

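One remedy is to make the distribution $P(w \mid w_{1:t-1})$ sharper by lowering the softmax temperature, which raises the likelihood of high-probability words and lowers that of low-probability words. The figure above shows temperature applied to the example.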

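The conditional next-word distribution at time step $t=1$ becomes much sharper, leaving the word car almost no chance of being selected. The following code sharpens the distribution by setting temperature=0.7: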

torch.manual_seed(0)

sample_output = model.generate(

input_ids,

do_sample=True,

max_length=50,

top_k=0,

temperature=0.7

)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(sample_output[0], skip_special_tokens=True))

This produces:

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog, but I don't like to be at home too much. I also find it a bit weird when I'm out shopping. I am always away from my house a lot, but I do have a few friends
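To see what the temperature does to a distribution, the sketch below rescales a toy next-word distribution. The function `apply_temperature` is a hypothetical helper written for this example; it assumes all probabilities are strictly positive and treats their logarithms as logits.

```python
import math


def apply_temperature(probs, temperature):
    """Rescale a distribution by dividing its log-probabilities by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {word: math.log(p) / temperature for word, p in probs.items()}
    # Subtract the maximum before exponentiating for numerical stability.
    max_logit = max(scaled.values())
    exps = {word: math.exp(v - max_logit) for word, v in scaled.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}


probs = {"nice": 0.5, "dog": 0.4, "car": 0.1}  # toy distribution for P(w | The)
sharper = apply_temperature(probs, temperature=0.7)
print(sharper)  # "nice" gains probability mass, "car" drops below 0.1
```

A temperature of 1 leaves the distribution unchanged, values below 1 sharpen it, and values above 1 flatten it. As the temperature approaches 0, sampling approaches greedy decoding.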

6. Top-K Sampling

Top-K sampling is a very effective sampling scheme. In Top-K sampling, the $K$ most likely next words are selected, and the probability mass is redistributed among only those $K$ words. GPT2 adopted this sampling scheme, which is one of the reasons for its success in story generation.

To better illustrate Top-K sampling, the range of words for both sampling steps in the example above is extended from 3 words to 10.

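With $K=6$, the sampling pool in both steps is limited to 6 words. The 6 most likely words, denoted $V_{\text{top-K}}$, cover only about two thirds of the probability mass in the first step but almost all of it in the second step. Even so, Top-K successfully eliminates the rather odd candidates not, the, small, and told in the second step.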
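The filtering step can be sketched as follows. The helper `top_k_filter` is a hypothetical name, and the ten probabilities are invented for illustration (they do not reproduce the article's figure); only the word list is taken from the example.

```python
def top_k_filter(probs, k):
    """Keep the k most likely words and renormalise their probabilities."""
    if k <= 0:
        raise ValueError("k must be positive")
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept = ranked[:k]
    total = sum(p for _, p in kept)
    # Redistribute the probability mass among the surviving words only.
    return {word: p / total for word, p in kept}


# Assumption: made-up values for P(w | The) over ten candidate words.
first_step = {
    "nice": 0.25, "dog": 0.20, "car": 0.15, "woman": 0.10, "guy": 0.08,
    "man": 0.07, "people": 0.06, "big": 0.04, "house": 0.03, "cat": 0.02,
}

filtered = top_k_filter(first_step, k=6)
print(sorted(filtered))  # ['car', 'dog', 'guy', 'man', 'nice', 'woman']
```

After filtering, the next word is sampled from `filtered` exactly as in plain sampling; people, big, house, and cat can no longer be drawn.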

The following code uses Top-K sampling by setting top_k=50:

torch.manual_seed(0)

sample_output = model.generate(

input_ids,

do_sample=True,

max_length=50,

top_k=50

)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(sample_output[0], skip_special_tokens=True))

This produces:

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog. It's so good to have an environment where your dog is available to share with you and we'll be taking care of you.

We hope you'll find this story interesting!

I am from

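Not bad. This text is arguably the most human-sounding so far. However, Top-K sampling does not dynamically adapt the number of words that are filtered from the next-word distribution. This can be problematic, because some words are sampled from a very sharp distribution while others are sampled from a much flatter one.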

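At step $t=1$, Top-K eliminates the possibility of sampling people, big, house, and cat, which seem like reasonable candidates. At step $t=2$, on the other hand, the method includes arguably ill-fitting words such as down and a in the sampling pool. Limiting the sampling pool to a fixed size $K$ can therefore lead the model to produce gibberish for sharp distributions and limit its creativity for flat distributions.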

7. Top-p (Nucleus) Sampling

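Instead of sampling only from the $K$ most likely words, Top-p sampling chooses from the smallest possible set of words whose cumulative probability exceeds the probability $p$. The probability mass is then redistributed among this set of words. This way, the size of the word set grows and shrinks dynamically according to the next-word distribution.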

With $p=0.92$, Top-p sampling picks the smallest number of words whose cumulative probability exceeds $p=92\%$, denoted $V_{\text{top-p}}$. In the first example this set contains the 9 most likely words, whereas in the second example the top 3 words are already enough to exceed $92\%$. In other words, the method keeps a wide range of words when the next word is hard to predict, as in $P(w \mid \text{The})$, and only a few words when the next word is more certain, as in $P(w \mid \text{The}, \text{car})$.
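The dynamic pool size can be demonstrated with a short sketch. The helper `top_p_filter` and both toy distributions are hypothetical; the numbers are invented, so the pool sizes they produce differ from those in the article's figure.

```python
def top_p_filter(probs, p):
    """Keep the smallest set of most likely words whose cumulative probability reaches p."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in (0, 1]")
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept = []
    cumulative = 0.0
    for word, prob in ranked:
        kept.append((word, prob))
        cumulative += prob
        if cumulative >= p:
            break  # the nucleus is complete
    total = sum(prob for _, prob in kept)
    # Redistribute the probability mass within the nucleus.
    return {word: prob / total for word, prob in kept}


# Assumption: made-up flat distribution for P(w | The).
flat = {
    "nice": 0.25, "dog": 0.20, "car": 0.15, "woman": 0.10, "guy": 0.08,
    "man": 0.07, "people": 0.06, "big": 0.04, "house": 0.03, "cat": 0.02,
}
# Assumption: made-up sharp distribution for P(w | The, car).
sharp = {
    "drives": 0.60, "is": 0.25, "turns": 0.10,
    "stops": 0.03, "down": 0.01, "a": 0.01,
}

print(len(top_p_filter(flat, p=0.92)))   # 8 words survive
print(len(top_p_filter(sharp, p=0.92)))  # 3 words survive
```

The same threshold keeps many candidates for the flat distribution and only a handful for the sharp one, which is the behaviour a fixed $K$ cannot provide.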

In transformers, Top-p sampling is enabled by setting 0 < top_p < 1:

torch.manual_seed(0)

sample_output = model.generate(

input_ids,

do_sample=True,

max_length=50,

top_p=0.92,

top_k=0

)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(sample_output[0], skip_special_tokens=True))

This produces:

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog. He will never be the same. I watch him play.

Guys, my dog needs a name. Especially if he is found with wings.

What was that? I had a lot o

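The result reads as if a human could have written it, though it is not quite there yet. In theory, Top-p is more elegant than Top-K, but both methods work well in practice. Top-p can also be combined with Top-K, which avoids very low-ranked words while still allowing some dynamic selection.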

Finally, to get multiple independently sampled outputs, set num_return_sequences > 1:

torch.manual_seed(0)

sample_outputs = model.generate(

input_ids,

do_sample=True,

max_length=50,

top_k=50,

top_p=0.95,

num_return_sequences=3

)

print("Output:\n" + 100 * '-')

for i, sample_output in enumerate(sample_outputs):

    print("{}: {}".format(i, tokenizer.decode(sample_output, skip_special_tokens=True)))

This produces:

Output:

----------------------------------------------------------------------------------------------------

0: I enjoy walking with my cute dog. It's so good to have the chance to walk with a dog. But I have this problem with the dog and how he's always looking at us and always trying to make me see that I can do something

1: I enjoy walking with my cute dog, she loves taking trips to different places on the planet, even in the desert! The world isn't big enough for us to travel by the bus with our beloved pup, but that's where I find my love

2: I enjoy walking with my cute dog and playing with our kids," said David J. Smith, director of the Humane Society of the US.

"So as a result, I've got more work in my time," he said.

8. Conclusion

As decoding methods, Top-p and Top-K sampling seem to produce more fluent text than traditional greedy search and beam search in open-ended language generation. Recent research suggests, however, that the apparent flaw of greedy search and beam search, namely generating repetitive word sequences, is caused by the model rather than by the decoding method. As a consequence, Top-p and Top-K sampling also suffer from generating repetitive word sequences.