自然语言处理:预训练

14.8 来自trans的双向编码器表示(Bert)

- Bidirectional Encoder Representation from Transformers

14.8.1 从上下文无关 到 上下文敏感

-

NLP中 丰富的多义现象 和 复杂的语义 → \rightarrow →同一词可根据上下文赋予不同的表示

-

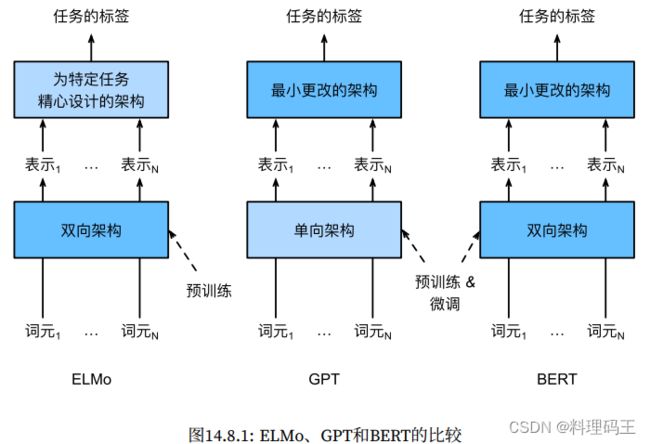

流行的上下文敏感表示:TagLM(language-model augmented sequence tagger)、CoVe(Context Vectors)、ELMo(Embeddings from Language Models)

-

ELMo简洁介绍:

- 整个序列作为输入,ELMo为 序列中每个词 分配 一个表示函数。

- 具体而言,ELMo将 来自预训练的BiLSTM的所有中间层表示 组合为 输出表示;

- 然后,ELMo的表示 将作为 附加特征 添加到 下游任务的现有监督模型 中,例如将 ELMo的表示 和现有模型中词元的原始表示(如GloVe)连结起来;

-

ELMo优点:

- 假如ELMo表示后,冻结了 预训练的BiLSTM中的所有权重;

- 现有的监督模型 是专门为 给定的任务定制的。

- 利用当时不同任务的不同最佳模型,添加ELMo改进了六种NLP任务的技术水平:情感分析、自然语言推断、SRL、共指消解、NER和Q&A。

14.8.2 从 特定任务 到 不可知任务

- 即便有ELMo的改进,但 每个解决方案 仍依赖于 一个特定任务的架构。

- GPT介绍

- GPT(Generative Pre Training)模型 为 上下文的敏感表示 设计了 通用的任务无关模型。

- GPT建立在trans解码器的基础上,预训练一个用于表示 文本序列 的LM;

- GPT运用于 下游任务 时,LM的输出 被送到一个 附加的线性输出层,以预测 任务的标签。

- GPT在 下游任务的监督学习过程中 对 预训练trans解码器中的所有参数 进行微调;

- GPT在 自然语言推断、问答、句子相似性、分类等12任务上进行评估,其中9项任务在最小更改的情况下提升了水平。

- GPT只能向前看(从左到右),即拥有AR特性,无法辨别多义词。

14.8.3 BERT:把两个最好的结合起来

-

ELMo(双向编码特性) + GPT(任务无关,但是 AR特性)

-

Bert使用trans编码器

-

Bert与GPT两点类似:

- Bert表示 将被输入到一个 附加的线性输出层;

- 根据任务的性质对模型架构进行最小的更改,例如预测 每个词元 与 预测 整个序列。

- 对预训练trans编码器的所有参数进行微调,而额外的输出层将从头开始训练

-

Bert改进了11种NLP任务的水平,有以下4个大类:

- 单一文本分类(情感分析)

- 文本对分类(如自然语言推断)

- Q&A

- 文本标记(如NER)

-

2018年:ELMo → \rightarrow → GPT、Bert

-

概念上简单经验上强大的 自然语言深度表示 已经统治了 各种NLP任务的解决方案。

-

本章:深入了解Bert的训练前准备

-

下章:针对下游应用的Bert微调

import torch

from torch import nn

from d2l import torch as d2l

D:\ana3\envs\nlp_prac\lib\site-packages\numpy\_distributor_init.py:32: UserWarning: loaded more than 1 DLL from .libs:

D:\ana3\envs\nlp_prac\lib\site-packages\numpy\.libs\libopenblas.IPBC74C7KURV7CB2PKT5Z5FNR3SIBV4J.gfortran-win_amd64.dll

D:\ana3\envs\nlp_prac\lib\site-packages\numpy\.libs\libopenblas.XWYDX2IKJW2NMTWSFYNGFUWKQU3LYTCZ.gfortran-win_amd64.dll

stacklevel=1)

14.8.4 输入表示

- Bert输入序列 明确地表示 单个文本 和 文本对,即一个Bert输入序列可以包括 一个文本序列 或 两个文本序列;

- Bert输入序列格式:特殊类别词元

- 如输入文本对时,Bert输入序列格式为:

# 输入句子(对),返回 Bert输入序列的标记 及其 相应的片段索引

def get_tokens_and_segments(tokens_a, tokens_b=None):

"""获取输入序列的词元 及其 片段索引"""

tokens = ['' ] + tokens_a + ['' ]

# 0 和 1 分别标记片段 A 和 B

segments = [0] * (len(tokens_a) + 2)

if tokens_b is not None:

tokens += tokens_b + ['' ]

segments += [1] * (len(tokens_b) + 1)

return tokens, segments

# 与原始trans编码器不同,Bert使用 片段嵌入 和 可学习的位置嵌入。

class BERTEncoder(nn.Module):

"""Bert编码器"""

def __init__(self, vocab_size, num_hiddens, norm_shape, ffn_num_input,

ffn_num_hiddens, num_heads, num_layers, dropout,

max_len=1000, key_size=768, query_size=768, value_size=768,

**kwargs):

super(BERTEncoder, self).__init__(**kwargs)

self.token_embedding = nn.Embedding(vocab_size, num_hiddens)

self.segment_embedding = nn.Embedding(2, num_hiddens)

self.blks = nn.Sequential()

# 构造多个块

for i in range(num_layers):

self.blks.add_module(f'{i}', d2l.EncoderBlock(

key_size, query_size, value_size, num_hiddens, norm_shape,

ffn_num_input, ffn_num_hiddens, num_heads, dropout, True))

# Bert中,位置嵌入是 可学习的,因此我们创建一个 足够长的位置嵌入参数

self.pos_embedding = nn.Parameter(torch.randn(1, max_len, num_hiddens))

def forward(self, tokens, segments, valid_lens):

# 在下面代码中,x形状不变:(批量大小,最大序列长度,num_hiddens)

x = self.token_embedding(tokens) + self.segment_embedding(segments)

x = x + self.pos_embedding.data[:, :x.shape[1], :]

for blk in self.blks:

x = blk(x, valid_lens)

return x

# 演示BertEncoder的前向推断,创建一个实例并初始化 其参数

vocab_size, num_hiddens, ffn_num_hiddens, num_heads = 1000, 768, 1024, 4

norm_shape, ffn_num_input, num_layers, dropout = [768], 768, 2, 0.2

encoder = BERTEncoder(vocab_size, num_hiddens, norm_shape, ffn_num_input,

ffn_num_hiddens, num_heads, num_layers, dropout)

# 定义tokens: 长度为8的2个输入序列,每个词元是 词表的索引

# 使用输入tokens的BERTEncoder的前向推断 返回 编码结果

# 每个词元 由 向量表示,

# 向量长度由超参数 num_hiddens(trans编码器的隐藏大小,即隐藏单元数) 定义

tokens = torch.randint(0, vocab_size, (2, 8))

segments = torch.tensor([[0, 0, 0, 0, 1, 1, 1, 1],[0, 0, 0, 1, 1, 1, 1, 1]])

encoded_x = encoder(tokens, segments, None)

encoded_x.shape

# torch.Size([2, 8, 768])

# 输出解释:2句话、每句8个词,每个词维度是768

torch.Size([2, 8, 768])

14.8.5 预训练任务

- BertEncoder的前向推断:给出了 输入文本的 每个词元和插入的特殊标记

- 之后,将使用这些表示来计算 预训练Bert的损失函数,它包括两个任务:

- 掩蔽LM(Masked Language Modeling)

- NSP(Next Sentence Prediction)

掩蔽LM(Masked Language Modeling)

- 对比

- LM:使用 左侧的上下文 预测 词元;

- Bert:随机掩蔽词元(15%) 并使用 来自双向上下文的词元,以 自监督的方式 预测 掩蔽词元。

- 由于掩蔽词元

- 80%时间 为 特殊的

- 10%时间 为 随机词元(这种偶然的噪声 鼓励 Bert在其双向上下文编码中不那么偏向于掩蔽词元(尤其是当标签词元保持不变时))

- 10%时间 不做改变

- 80%时间 为 特殊的

- 下面的MaskLM类 来预测 掩蔽标记。预测使用 单隐藏层的 多层感知机

- 前向推断中,输入为:1. BertEncoder的编码结果 2.用于预测的词元位置;输出:这些位置的预测结果

class MaskLM(nn.Module):

"""Bert的maskedLM"""

def __init__(self, vocab_size, num_hiddens, num_inputs=768, **kwargs):

super(MaskLM, self).__init__(**kwargs)

self.mlp = nn.Sequential(nn.Linear(num_inputs, num_hiddens),

nn.ReLU(),

nn.LayerNorm(num_hiddens),

nn.Linear(num_hiddens, vocab_size))

def forward(self, x, pred_positions):

num_pred_positions = pred_positions.shape[1]

pred_positions = pred_positions.reshape(-1)

batch_size = x.shape[0]

batch_idx = torch.arange(0, batch_size)

# 假设 batch_size = 2, num_pred_positions=3

# 那么 batch_idx 是 np.array([0, 0, 0, 1, 1])

batch_idx = torch.repeat_interleave(batch_idx, num_pred_positions)

masked_x = x[batch_idx, pred_positions]

masked_x = masked_x.reshape((batch_size, num_pred_positions, -1))

mlm_y_hat = self.mlp(masked_x)

return mlm_y_hat

mlm = MaskLM(vocab_size, num_hiddens) # 初始化该类

mlm_positions = torch.tensor([[1, 5, 2], [6, 1, 5]]) # 序列中需进行预测的三个索引

mlm_y_hat = mlm(encoded_x, mlm_positions) # 两个bert输入序列,预测索引

mlm_y_hat.shape

torch.Size([2, 3, 1000])

# 计算交叉熵损失

mlm_y = torch.tensor([[7,8,9],[10,20,30]]) # 真实标签

loss = nn.CrossEntropyLoss(reduction='none')

mlm_l= loss(mlm_y_hat.reshape((-1, vocab_size)), mlm_y.reshape(-1))

mlm_l.shape

torch.Size([6])

mlm_l

tensor([7.3066, 6.4002, 6.6777, 7.2173, 7.4744, 6.8937],

grad_fn=)

NSP(Next Sentence Prediction)

- 尽管masked LM能够编码 双向上下文 来表示单词,但无法显示地建模 文本对之间的逻辑关系。故有 NSP任务:

- 50%时间 句子对 为“真”的连续句子

- 50%时间 第二个句子从语料库中随机抽取,标记为“假”

- 下面的NextSentencePred类功能:使用 单隐藏层的MLP 来预测 第二个句子是否是Bert输入序列中第一个句子的下一个句子。

- 由于 trans编码器中的self-att,特殊词元

- 故,MLP分类器的 输出层(self.output)以x作为输入,x 是 MLP隐藏层的输出,而MLP隐藏层的输入 是 编码后的

class NextSentencePred(nn.Module):

"""Bert的下一句预测任务(NSP)"""

def __init__(self, num_inputs, **kwargs):

super(NextSentencePred, self).__init__(**kwargs)

self.output = nn.Linear(num_inputs, 2)

def forward(self, x):

# x: (batch_size, num_hiddens)

return self.output(x)

# 测试

encoded_x = torch.flatten(encoded_x, start_dim=1)

# print(encoded_x, encoded_x.shape)

# NSP的输入形状:(batch_size, num_hiddens)

nsp = NextSentencePred(encoded_x.shape[-1])

nsp_y_hat = nsp(encoded_x)

nsp_y_hat, nsp_y_hat.shape

(tensor([[-0.3838, -0.2278],

[ 0.2239, 0.2473]], grad_fn=),

torch.Size([2, 2]))

# 计算 两个二元分类 的交叉熵损失

nsp_y = torch.tensor([0, 1])

nsp_l = loss(nsp_y_hat, nsp_y)

nsp_l, nsp_l.shape

(tensor([0.7742, 0.6815], grad_fn=), torch.Size([2]))

- bert预训练语料库(无需人工标注语料):

- 图书语料库——8亿个单词

- 英文维基百科——25亿个单词

14.8.6 整合代码

- 最终的损失函数:masked-LM损失函数 和 NSP损失函数 的线性组合

- 实例化 BERTEncoder、MaskedLM和NextSentencePred三个类 来 定义BERTModel类

- 前向推断返回:

- 编码后的 BERT表示——encoded_x

- masked-LM模型预测结果——mlm_y_hat

- NSP预测结果——nsp_y_hat

class BERTModel(nn.Module):

"""BERT模型"""

def __init__(self, vocab_size, num_hiddens, norm_shape, ffn_num_input,

ffn_num_hiddens, num_heads, num_layers, dropout,

max_len=1000, key_size=768, query_size=768, value_size=768,

hid_in_features=768, mlm_in_features=768,

nsp_in_features=768):

super(BERTModel, self).__init__()

self.encoder = BERTEncoder(vocab_size, num_hiddens, norm_shape,

ffn_num_input, ffn_num_hiddens, num_heads, num_layers,

dropout, max_len=max_len, key_size=key_size,

query_size=query_size, value_size=value_size)

self.hidden = nn.Sequential(nn.Linear(hid_in_features, num_hiddens),

nn.Tanh())

self.mlm = MaskLM(vocab_size, num_hiddens, mlm_in_features) # 任务1

self.nsp = NextSentencePred(nsp_in_features) # 任务2

def forward(self, tokens, segments, valid_lens=None):

encoded_x = self.encoder(tokens, segments, valid_lens)

if pred_positions is not None:

mlm_y_hat = self.mlm(encoded_x, pred_positions) # 任务1

else:

mlm_y_hat = None

# 用于NSP的MLP分类器的隐藏层, 0是“”标记的索引

nsp_y_hat = self.nsp(self.hidden(encoded_x[:, 0, :])) # 任务2

return encoded_x, mlm_y_hat, nsp_y_hat

- 小结

- word2vec和GloVe等词嵌入模型:与上下文无关、每个词仅有一个向量、难处理一词多义或复杂语义;

- ELMo和GPT:词的表示依赖于 它们的上下文。

- ELMo:对上下文双向编码、但使用特定任务的架构

- GPT:任务无关、但是AR特性

- Bert结合上述两者优点——上下文双向编码、只需对大量NLP任务进行最小架构更改

- Bert输入序列的嵌入:词元嵌入、片段嵌入 和 位置嵌入的和

- 预训练包括:masked-LM 和 NSP

14.9 用于预训练BERT的数据集

- 为了训练上述实现的BERT模型,需以合适的格式生成数据集,以适应这两个任务

- 两个棘手的问题

- 最初的bert数据集:图书语料库 和 英语维基百科(两个庞大的语料库的合集)。

- 现成的bert预训练模型 不适合 医学特定领域的应用。

- 因此,需要有定制的数据集;故使用 较小数据集WikiText-2 进行演示

- 与预训练word2vec的PTB数据集相比,WikiText-2优点:

- 保留了原来的标点符号,适用于下一句的预测

- 保留了原来的大小写和数字

- 大了一倍以上

import os, random, torch

from d2l import torch as d2l

- WikiText-2数据集中:

- 每行一个段落,标点符号和词元之间插入空格;

- 保留至少两句话的段落

- 简单起见,本节用句号作为分隔符来拆分句子

- 后续讨论更复杂的句子拆分技术。

# 下载数据

d2l.DATA_HUB['wikitext-2'] = (

'https://s3.amazonaws.com/research.metamind.io/wikitext/'

'wikitext-2-v1.zip', '3c914d17d80b1459be871a5039ac23e752a53cbe')

def _read_wiki(data_dir):

file_name = os.path.join(data_dir, 'wiki.train.tokens')

with open(file_name, 'r', encoding='utf-8') as f:

lines = f.readlines()

# 大写字母转换为小写字母

paragraphs = [line.strip().lower().split(' . ')

for line in lines if len(line.split(' . ')) >= 2]

random.shuffle(paragraphs)

return paragraphs

14.9.1 为预训练任务定义辅助函数

- 定义辅助函数,用以 稍后将 原始文本语料库 转换为 理想格式的数据集,以预训练BERT

NSP任务的数据

- 生成二分类任务的训练样本

def _get_next_sentence(sentence, next_sentence, paragraphs):

# 50% 的概率是下一句话

if random.random() < 0.5:

is_next = True

else:

# paragraphs 是三重列表的嵌套

next_sentence = random.choice(random.choice(paragraphs)) # 随机选择下一句话

is_next = False

return sentence, next_sentence, is_next

- 下面的函数作用:从输入paragraph生成用于下一句预测的训练样本

# 句子列表,每个句子是词元列表 bert输入序列的最大长度

def _get_nsp_data_from_paragraph(paragraph, paragraphs, vocab, max_len):

nsp_data_from_paragraph = []

for i in range(len(paragraph) - 1):

tokens_a, tokens_b, is_next = _get_next_sentence( # 确定这一对是不是正样本

paragraph[i], paragraph[i + 1], paragraphs)

# 考虑一个``词元和两个``词元

if len(tokens_a) + len(tokens_b) + 3 > max_len: # 不使用超过最大长度的样本

continue

tokens, segments = d2l.get_tokens_and_segments(tokens_a, tokens_b)

nsp_data_from_paragraph.append((tokens, segments, is_next)) # 加入最终的数据集中

return nsp_data_from_paragraph

生成masked-LM任务的样本

- 参数说明

- tokens:bert输入序列的 词元的列表

- candidate_pred_positions: 不包括特殊字符的bert输入序列的 词元索引的列表(特殊词元在该任务中不被预测)

- num_mlm_preds: 预测的数量(选择15%要预测的随机词元)

- 每个预测位置的三种情况:

- 特殊的“掩码”词元

- 随机词元替换

- 保持不变

- 该函数的返回值:

- 可能替换后的 输入词元

- 发生预测的 词元索引

- 这些预测的 标签

def _replace_mlm_tokens(tokens, candidate_pred_positions, num_mlm_preds, vocab):

# 为 masked-LM 的输入创建 新的词元副本,其中输入可能包含 替换的``或随机词元

mlm_input_tokens = [token for token in tokens]

pred_positions_and_labels = []

# 打乱后用于在 masked-LM 任务中获取 15%的随机词元 进行预测

random.shuffle(candidate_pred_positions)

for mlm_pred_position in candidate_pred_positions:

if len(pred_positions_and_labels) >= num_mlm_preds:

break

masked_token = None

# 80%时间:将词替换为``词元

if random.random() < 0.8:

masked_token = ""

else:

# 10%的时间:保持词不变

if random.random() < 0.5:

masked_token = tokens[mlm_pred_position]

# 10%的时间:用 随机词 替换该词

else:

masked_token = random.choice(vocab.idx_to_token)

mlm_input_tokens[mlm_pred_position] = masked_token

pred_positions_and_labels.append(

(mlm_pred_position, tokens[mlm_pred_position]))

return mlm_input_tokens, pred_positions_and_labels

# 带掩码的输入词元、 (发生预测的词元索引、要预测的词)

- 以下函数 调用 前述函数,输入为:

- BERT输入序列(tokens)

- 返回:

- 输入词元的索引

- 发生预测的词元索引

- 这些预测的标签索引

def _get_mlm_data_from_tokens(tokens, vocab):

candidate_pred_positions = []

# tokens 是 一个字符串列表

for i, token in enumerate(tokens):

# 在 masked-LM任务 中不会预测特殊词元

if token in ['' , '' ]:

continue

candidate_pred_positions.append(i)

# masked-LM 任务中预测 15%的随机词元

num_mlm_preds = max(1, round(len(tokens) * 0.15))

mlm_input_tokens, pred_position_and_labels = _replace_mlm_tokens(

tokens, candidate_pred_positions, num_mlm_preds, vocab)

pred_position_and_labels = sorted(pred_position_and_labels,

key=lambda x: x[0])

pred_positions = [v[0] for v in pred_position_and_labels]

mlm_pred_labels = [v[1] for v in pred_position_and_labels]

return vocab[mlm_input_tokens], pred_positions, vocab[mlm_pred_labels]

14.9.2 将 文本 转换为 预训练数据集

- 在为 bert预训练 定制 一个Dataset类之前,需定义辅助函数将

- 参数examples:_get_nsp_data_from_paragraph 和 _get_mlm_data_from_tokens的输出

def _pad_bert_inputs(examples, max_len, vocab):

max_num_mlm_preds = round(max_len * 0.15) # 最大预测词的数量

all_token_ids, all_segments, valid_lens = [], [], []

all_pre_positions, all_mlm_weights, all_mlm_labels = [], [], []

nsp_labels = []

for (token_ids, pred_positions, mlm_pred_label_ids, segments, is_next) in examples:

all_token_ids.append(torch.tensor(token_ids + [vocab['' ]] * (

max_len - len(token_ids)), dtype=torch.long))

all_segments.append(torch.tensor(segments + [0] *(

max_len - len(segments)), dtype=torch.long))

# valid_lens 不包括 的计数

valid_lens.append(torch.tensor(len(token_ids), dtype=torch.float32))

all_pre_positions.append(torch.tensor(pred_positions + [0] * (

max_num_mlm_preds - len(pred_positions)), dtype=torch.long))

# 填充 词元的预测 将通过乘以 0 权重

all_mlm_weights.append(

torch.tensor([1.0] * len(mlm_pred_label_ids) + [0.0] * (

max_num_mlm_preds - len(pred_positions)),

dtype=torch.float32))

all_mlm_labels.append(torch.tensor(mlm_pred_label_ids + [0] * (

max_num_mlm_preds - len(mlm_pred_label_ids)), dtype=torch.long))

nsp_labels.append(torch.tensor(is_next, dtype=torch.long))

return (all_token_ids, all_segments, valid_lens, all_pre_positions,

all_mlm_weights, all_mlm_labels, nsp_labels)

- 以下的_WikiTextDataset类包括:

- 两个训练任务的训练样本 的辅助函数

- 用于 填充输入 的辅助函数

__getitem__函数:任意访问 WikiText-2语料库的一对句子生成的预训练样本(masked-LM和NSP)- 最初的bert模型使用词表为3w的wordpiece嵌入,此处简单使用d2l.tokenize函数进行词元化。过滤掉少于5词的低频词元。

class _WikiTextDataset(torch.utils.data.Dataset):

def __init__(self, paragraphs, max_len):

# 输入paragraphs[i] 是代表段落的 句子字符串列表

# 而输出paragraphs[i] 是代表 段落的句子列表,其中每个句子都是 词元列表

paragraphs = [d2l.tokenize(

paragraph, token='word') for paragraph in paragraphs]

sentences = [sentence for paragraph in paragraphs

for sentence in paragraph]

self.vocab = d2l.Vocab(sentences, min_freq=5, reserved_tokens=[

'' , '' , '' , ''

])

# 获取 NSP的数据

examples = []

for paragraph in paragraphs:

examples.extend(_get_nsp_data_from_paragraph(

paragraph, paragraphs, self.vocab, max_len))

# 获取 masked_LM 的数据

examples = [(_get_mlm_data_from_tokens(tokens, self.vocab)

+ (segments, is_next))

for tokens, segments, is_next in examples]

# 填充输入

(self.all_token_ids, self.all_segments, self.valid_lens,

self.all_pred_positions, self.all_mlm_weights,

self.all_mlm_labels, self.nsp_labels) = _pad_bert_inputs(

examples, max_len, self.vocab)

def __getitem__(self, idx):

return (self.all_token_ids[idx], self.all_segments[idx],

self.valid_lens[idx], self.all_pred_positions[idx],

self.all_mlm_weights[idx], self.all_mlm_labels[idx],

self.nsp_labels[idx])

def __len__(self):

return len(self.all_token_ids)

- load_data_wiki函数:

- _read_wiki函数:下载并生成WikiText-2数据集

- _WikiTextDataset类:生成预训练样本

def load_data_wiki(batch_size, max_len):

"""加载WikiText-2数据集"""

num_workers = d2l.get_dataloader_workers() # 返回4

num_workers = 0

data_dir = d2l.download_extract('wikitext-2', 'wikitext-2')

paragraphs = _read_wiki(data_dir)

train_set = _WikiTextDataset(paragraphs, max_len)

train_iter = torch.utils.data.DataLoader(train_set, batch_size,

shuffle=True, num_workers=num_workers)

return train_iter, train_set.vocab

# 打印出小批量的 bert预训练样本 的形状

# 批量大小:512,;bert输入序列的最大长度为:64

batch_size, max_len = 512, 64

train_iter, vocab = load_data_wiki(batch_size, max_len)

for (tokens_x, segments_x, valid_lens_x, pred_positions_x, mlm_weights_x,

mlm_y, nsp_y) in train_iter:

print(tokens_x.shape, segments_x.shape, valid_lens_x.shape, pred_positions_x.shape,

mlm_weights_x.shape, mlm_y.shape, nsp_y.shape)

break

torch.Size([512, 64]) torch.Size([512, 64]) torch.Size([512]) torch.Size([512, 10]) torch.Size([512, 10]) torch.Size([512, 10]) torch.Size([512])

len(vocab), vocab.idx_to_token[10045]

(20256, 'hospitallers')

- 小结:

- Spacy 和 NLTK 可以拆分句子

- 数据集WikiText-2比PTB大两倍多

14.10 预训练Bert

- 14.8节:实现Bert模型

- 14.9节:从WikiText-2数据集生成 预训练样本

- 本节:在WikiText-2数据集上对 BERT 进行 预训练

import torch, os, random

from torch import nn

from d2l import torch as d2l

- 首先,加载 WikiText-2数据集 作为 小批量的预训练样本,用于 两个任务

- 批量大小为:512;

- bert输入序列的最大长度:64(原始bert中为512)

batch_size, max_len = 512, 64

train_iter, vocab = load_data_wiki(batch_size, max_len)

14.10.1 预训练 BERT

- 各种版本的bert:

- 原始基本模型:12层trans编码器块、768个隐藏层单元(隐藏大小)和12个自注意头

- 大模型:12层trans编码器块、1024个隐藏层单元(隐藏大小)和16个自注意头

- 本模型:2层trans编码器块、128个隐藏层单元(隐藏大小)和2个自注意头

net = d2l.BERTModel(len(vocab), num_hiddens=128, norm_shape=[128],

ffn_num_input=128, ffn_num_hiddens=256, num_heads=2,

num_layers=2, dropout=0.2, key_size=128, query_size=128,

value_size=128, hid_in_features=128, mlm_in_features=128,

nsp_in_features=128)

devices = d2l.try_all_gpus()

loss = nn.CrossEntropyLoss()

# 训练前,定义一个辅助函数计算(给定训练样本)两个预测任务的损失。

# 注意:BERT预训练的最终损失是 两个任务损失的和

def _get_batch_loss_bert(net, loss, vocab_size, tokens_x, segments_x, valid_lens_x,

pred_positions_x, mlm_weights_x, mlm_y, nsp_y):

# 前向传播

_, mlm_y_hat, nsp_y_hat = net(tokens_x, segments_x, valid_lens_x.reshape(-1),

pred_positions_x)

# 计算masked-LM的损失

mlm_l = loss(mlm_y_hat.reshape(-1, vocab_size), mlm_y.reshape(-1)) * \

mlm_weights_x.reshape(-1, 1)

mlm_l = mlm_l.sum() / (mlm_weights_x.sum() + 1e-8)

# 计算NSP的损失

nsp_l = loss(nsp_y_hat, nsp_y)

# 最终损失

l = mlm_l + nsp_l

return mlm_l, nsp_l, l

# 该函数定义了在WikiText-2数据集上预训练BERT(net)的过程

# 参数num_steps指定了 训练的迭代步数

def train_bert(train_iter, net, loss, vocab_size, devices, num_steps):

net = nn.DataParallel(net, device_ids=devices).to(devices[0])

trainer = torch.optim.Adam(net.parameters(), lr = 0.01)

step, timer = 0, d2l.Timer()

animator = d2l.Animator(xlabel='step', ylabel='loss',

xlim=[1, num_steps], legend=['mlm', 'nsp'])

# masked-LM损失的和,NSP损失的和,句子对的数量,计数

metric = d2l.Accumulator(4)

num_steps_reached = False

while step < num_steps and not num_steps_reached:

for tokens_x, segments_x, valid_lens_x, pred_positions_x, \

mlm_weights_x, mlm_y, nsp_y in train_iter:

tokens_x = tokens_x.to(devices[0])

segments_x = segments_x.to(devices[0])

valid_lens_x = valid_lens_x.to(devices[0])

pred_positions_x = pred_positions_x.to(devices[0])

mlm_weights_x = mlm_weights_x.to(devices[0])

mlm_y, nsp_y = mlm_y.to(devices[0]), nsp_y.to(devices[0])

trainer.zero_grad()

timer.start()

mlm_l, nsp_l, l = _get_batch_loss_bert(

net, loss, vocab_size, tokens_x, segments_x, valid_lens_x,

pred_positions_x, mlm_weights_x, mlm_y, nsp_y)

l.backward()

trainer.step()

metric.add(mlm_l, nsp_l, tokens_x.shape[0], 1)

timer.stop()

animator.add(step+1, (metric[0] / metric[3], metric[1] / metric[3]))

step += 1

if step == num_steps:

num_steps_reached = True

break

print(f'MLM loss {metric[0] / metric[3]:.3f},'

f'NSP loss {metric[1] / metric[3]:.3f}')

print(f'{metric[2] / timer.sum():.1f} sentence pairs/sec on '

f'{str(devices)}')

train_bert(train_iter, net, loss, len(vocab), devices, 80)

MLM loss 5.289,NSP loss 0.718

7336.8 sentence pairs/sec on [device(type='cuda', index=0)]

14.10.2 用BERT表示文本

- 预训练bert后,可以用它表示 单个文本、文本对 或 其中的任何词元

- 下面的函数返回 tokens_a和tokens_b中所有词元的Bert(net)表示

def get_bert_encoding(net, tokens_a, tokens_b=None):

tokens, segments = d2l.get_tokens_and_segments(tokens_a, tokens_b)

tokens_ids = torch.tensor(vocab[tokens], device=devices[0]).unsqueeze(0)

segments = torch.tensor(segments, device=devices[0]).unsqueeze(0)

valid_len = torch.tensor(len(tokens), device=devices[0]).unsqueeze(0)

encoded_x, _, _ = net(tokens_ids, segments, valid_len)

return encoded_x

# 对于句子“a crane is flying”,打印出该词元的bert表示的前三个元素

tokens_a = ['a', 'crane', 'is', 'flying']

encoded_text = get_bert_encoding(net, tokens_a) # torch.Size([1, 6, 128])

# 词元:'', 'a', 'crane', 'is', 'flying', ''

encoded_text_cls = encoded_text[:, 0, :]

encoded_text_crane = encoded_text[:, 2, :]

encoded_text.shape, encoded_text_cls.shape, encoded_text_crane[0][:3]

(torch.Size([1, 6, 128]),

torch.Size([1, 128]),

tensor([-0.6029, 1.5879, -2.2356], device='cuda:0', grad_fn=))

# 对于句子“a crane driver came”和“he just left”.

# encoded_pair[:,0,:]是来自预训练bert的整个句子对的编码结果

tokens_a, tokens_b = ['a', 'crane', 'driver', 'came'], ['he', 'just', 'left']

encoded_pair = get_bert_encoding(net, tokens_a, tokens_b)

# 词元::'', 'a', 'crane', 'driver', 'came', '', 'he', 'just', 'left', ''

encoded_pair_cls = encoded_pair[:, 0, :]

encoded_pair_crane = encoded_pair[:, 2, :]

encoded_pair.shape, encoded_pair_cls.shape, encoded_pair_crane[0][:3]

(torch.Size([1, 10, 128]),

torch.Size([1, 128]),

tensor([-0.7168, 1.8172, -0.0902], device='cuda:0', grad_fn=))

- 小结

- 下一章为 下游NLP应用 微调 预训练的BERT模型

- 原来的BERT有两个版本(1.1亿参数 和 3.4亿参数)

- 预训练BERT之后,可以用它来表示 单个文本、文本对 或 其中的任何词元

- 同一个词在不同的上下文中有不同的bert表示:bert是上下文敏感的