Exclusive | A Beginner's Guide to Recurrent Neural Networks (with Links)

Author: Victor Zhou

Translator: 王雨桐

Proofreader: 吴金迪

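This article gives an overview of the most basic recurrent neural networks (vanilla RNNs), explains how they work, and shows how to implement one in Python.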

https://victorzhou.com/blog/intro-to-neural-networks/

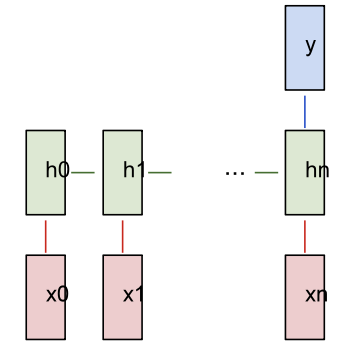

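Inputs are shown in red, the RNN itself in green, and outputs in blue. Source: Andrej Karpathy

Machine translation (for example, Google Translate) uses a "many-to-many" RNN. The source text sequence is fed into the RNN, which then outputs the translated text.

Sentiment analysis (for example, is this a positive or a negative review?) usually uses a "many-to-one" RNN. The text to analyze is fed into the RNN, which then produces a single output classification (for example, this is a positive review).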


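Given the previous hidden state and the next input, we can compute the next hidden state.

From that hidden state, we can then compute the next output.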
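The two steps above can be written out as equations. This is a reconstruction from the `forward` method shown later in this article, not a quote from the original text; the symbol names follow the code's weight names (`Wxh`, `Whh`, `Why`, `bh`, `by`), and the subscript t for the time step is an assumption of notation.

```latex
h_t = \tanh\left( W_{xh}\, x_t + W_{hh}\, h_{t-1} + b_h \right)

y_t = W_{hy}\, h_t + b_y
```

In a many-to-one setup, only the output computed from the final hidden state is used.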

Many-to-many RNN

https://github.com/vzhou842/rnn-from-scratch/blob/master/data.py

| Text | Positive? |
| --- | --- |
| i am good | ✓ |
| i am bad | ❌ |
| this is very good | ✓ |
| this is not bad | ✓ |
| i am bad not good | ❌ |
| i am not at all happy | ❌ |
| this was good earlier | ✓ |
| i am not at all bad or sad right now | ✓ |

Many-to-one RNN

data.py

train_data = {
    'good': True,
    'bad': False,
    # ... more data
}

test_data = {
    'this is happy': True,
    'i am good': True,
    # ... more data
}

True = positive, False = negative

main.py

from data import train_data, test_data

# Create the vocabulary.
vocab = list(set([w for text in train_data.keys() for w in text.split(' ')]))
vocab_size = len(vocab)
print('%d unique words found' % vocab_size) # 18 unique words found

main.py

# Assign indices to each word.
word_to_idx = { w: i for i, w in enumerate(vocab) }
idx_to_word = { i: w for i, w in enumerate(vocab) }
print(word_to_idx['good']) # 16 (this may change)
print(idx_to_word[0]) # sad (this may change)
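The indices "may change" because a Python `set` has no fixed iteration order. If reproducible indices are wanted, one option is to sort the unique words first. The snippet below is a minimal sketch of that idea; `sample_data` is a hypothetical stand-in for `train_data`, not the article's dataset.

```python
# Hypothetical sample standing in for train_data from data.py.
sample_data = {'good': True, 'bad': False, 'not bad': True, 'not good': False}

# Sorting the unique words fixes the order, so indices are the same on every run.
vocab = sorted({w for text in sample_data for w in text.split(' ')})
word_to_idx = {w: i for i, w in enumerate(vocab)}
idx_to_word = {i: w for w, i in word_to_idx.items()}

print(vocab)                # ['bad', 'good', 'not']
print(word_to_idx['good'])  # 1
```

The rest of the pipeline does not depend on which index a word gets, only on the mapping staying fixed between training and testing.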

main.py

import numpy as np

def createInputs(text):
    '''
    Returns an array of one-hot vectors representing the words
    in the input text string.
    - text is a string
    - Each one-hot vector has shape (vocab_size, 1)
    '''
    inputs = []
    for w in text.split(' '):
        v = np.zeros((vocab_size, 1))
        v[word_to_idx[w]] = 1
        inputs.append(v)
    return inputs
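To make the one-hot encoding concrete, here is a small worked example. It is a sketch under assumptions: the three-word vocabulary and the function name `one_hot_sequence` are hypothetical and chosen only so the output is easy to read; the logic mirrors `createInputs`.

```python
import numpy as np

# Hypothetical three-word vocabulary, for illustration only.
vocab = ['bad', 'good', 'not']
word_to_idx = {w: i for i, w in enumerate(vocab)}

def one_hot_sequence(text):
    # One column vector of shape (len(vocab), 1) per word, as in createInputs.
    vectors = []
    for w in text.split(' '):
        v = np.zeros((len(vocab), 1))
        v[word_to_idx[w]] = 1
        vectors.append(v)
    return vectors

seq = one_hot_sequence('not bad')
print(len(seq), seq[0].shape)  # 2 (3, 1)
print(seq[0].ravel())          # [0. 0. 1.]
```

A word that is not in the vocabulary raises a `KeyError` in this sketch, so the test sentences need to use only words seen when the vocabulary was built.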

rnn.py

import numpy as np
from numpy.random import randn

class RNN:
    # A Vanilla Recurrent Neural Network.

    def __init__(self, input_size, output_size, hidden_size=64):
        # Weights
        self.Whh = randn(hidden_size, hidden_size) / 1000
        self.Wxh = randn(hidden_size, input_size) / 1000
        self.Why = randn(output_size, hidden_size) / 1000

        # Biases
        self.bh = np.zeros((hidden_size, 1))
        self.by = np.zeros((output_size, 1))

rnn.py

class RNN:
    # ...

    def forward(self, inputs):
        '''
        Perform a forward pass of the RNN using the given inputs.
        Returns the final output and hidden state.
        - inputs is a list of one-hot vectors, each with shape (input_size, 1).
        '''
        h = np.zeros((self.Whh.shape[0], 1))

        # Perform each step of the RNN
        for i, x in enumerate(inputs):
            h = np.tanh(self.Wxh @ x + self.Whh @ h + self.bh)

        # Compute the output
        y = self.Why @ h + self.by

        return y, h

main.py

# ...

def softmax(xs):
    # Applies the Softmax Function to the input array.
    return np.exp(xs) / sum(np.exp(xs))

# Initialize our RNN!
rnn = RNN(vocab_size, 2)

inputs = createInputs('i am very good')
out, h = rnn.forward(inputs)
probs = softmax(out)
print(probs) # [[0.50000095], [0.49999905]]
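The `softmax` above works for the small output values this network produces, but `np.exp` can overflow when the inputs are large. A common variant subtracts the maximum before exponentiating, which does not change the result. The sketch below shows that variant; the name `stable_softmax` is hypothetical and it is not part of the article's code.

```python
import numpy as np

def stable_softmax(xs):
    # Subtracting the max leaves the result unchanged but keeps exp() from overflowing.
    shifted = xs - np.max(xs)
    exps = np.exp(shifted)
    return exps / np.sum(exps)

# Inputs this large would overflow a plain exp()-based softmax.
scores = np.array([[1000.0], [1001.0]])
probs = stable_softmax(scores)
print(probs.shape)  # (2, 1)
```

It takes and returns a column vector of the same shape, so it could be swapped in without changing the surrounding code.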

Link:

https://victorzhou.com/blog/softmax/


https://github.com/vzhou842/rnn-from-scratch

rnn.py
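The `backprop` method in this article reads `self.last_inputs` and `self.last_hs`, which the `forward` method shown earlier does not set. Below is a minimal sketch of a forward pass that caches them. The layout of `last_hs` (a dict keyed by step, with key 0 holding the initial zero state) is inferred from how `backprop` indexes it, and the class name `CachingRNN` is hypothetical.

```python
import numpy as np
from numpy.random import randn

class CachingRNN:
    # Hypothetical name; mirrors the RNN class above with a caching forward pass.
    def __init__(self, input_size, output_size, hidden_size=64):
        self.Whh = randn(hidden_size, hidden_size) / 1000
        self.Wxh = randn(hidden_size, input_size) / 1000
        self.Why = randn(output_size, hidden_size) / 1000
        self.bh = np.zeros((hidden_size, 1))
        self.by = np.zeros((output_size, 1))

    def forward(self, inputs):
        h = np.zeros((self.Whh.shape[0], 1))
        # Cache the inputs and every hidden state for the backward pass.
        self.last_inputs = inputs
        self.last_hs = {0: h}
        for i, x in enumerate(inputs):
            h = np.tanh(self.Wxh @ x + self.Whh @ h + self.bh)
            self.last_hs[i + 1] = h
        y = self.Why @ h + self.by
        return y, h

rnn = CachingRNN(input_size=3, output_size=2, hidden_size=4)
inputs = [np.eye(3)[:, [k]] for k in (0, 2, 1)]  # three one-hot column vectors
y, h = rnn.forward(inputs)
print(len(rnn.last_hs), y.shape, h.shape)  # 4 (2, 1) (4, 1)
```

With n inputs the cache holds n + 1 hidden states, so `last_hs[n]` is the final state and `last_hs[t]` is the state that fed into step t.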

main.py

# Loop over each training example

for x, y in train_data.items():

inputs = createInputs(x)

target = int(y)

# Forward

out, _ = rnn.forward(inputs)

probs = softmax(out)

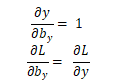

# Build dL/dy

d_L_d_y = probs d_L_d_y[target] -= 1

# Backward

rnn.backprop(d_L_d_y)
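The "Build dL/dy" step subtracts 1 from the probability of the correct class. That follows from combining softmax with a cross-entropy loss, which is the loss the training code later in this article computes as `-np.log(probs[target])`. A short derivation, with c denoting the correct class (the symbols p, y, and c are notation assumed here, not taken from the article):

```latex
L = -\ln p_c, \qquad p_i = \frac{e^{y_i}}{\sum_j e^{y_j}}

L = -y_c + \ln \sum_j e^{y_j}
\quad\Longrightarrow\quad
\frac{\partial L}{\partial y_i} = p_i - \mathbb{1}[i = c]
```

So the gradient is the probability vector itself, with 1 subtracted at the index of the correct class.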

rnn.py

class RNN:
    # ...

    def backprop(self, d_y, learn_rate=2e-2):
        '''
        Perform a backward pass of the RNN.
        - d_y (dL/dy) has shape (output_size, 1).
        - learn_rate is a float.
        '''
        n = len(self.last_inputs)

        # Calculate dL/dWhy and dL/dby.
        d_Why = d_y @ self.last_hs[n].T
        d_by = d_y
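These two lines follow from the output equation used in `forward`, where the output is `Why @ h + by` evaluated at the final hidden state. Written out, with h_n standing for that final hidden state (a notation assumed here):

```latex
y = W_{hy}\, h_n + b_y
\quad\Longrightarrow\quad
\frac{\partial L}{\partial W_{hy}} = \frac{\partial L}{\partial y}\, h_n^{\top},
\qquad
\frac{\partial L}{\partial b_y} = \frac{\partial L}{\partial y}
```

The outer product gives a matrix of shape (output_size, hidden_size), matching `Why`.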


rnn.py

class RNN:
    # ...

    def backprop(self, d_y, learn_rate=2e-2):
        '''
        Perform a backward pass of the RNN.
        - d_y (dL/dy) has shape (output_size, 1).
        - learn_rate is a float.
        '''
        n = len(self.last_inputs)

        # Calculate dL/dWhy and dL/dby.
        d_Why = d_y @ self.last_hs[n].T
        d_by = d_y

        # Initialize dL/dWhh, dL/dWxh, and dL/dbh to zero.
        d_Whh = np.zeros(self.Whh.shape)
        d_Wxh = np.zeros(self.Wxh.shape)
        d_bh = np.zeros(self.bh.shape)

        # Calculate dL/dh for the last h.
        d_h = self.Why.T @ d_y

        # Backpropagate through time.
        for t in reversed(range(n)):
            # An intermediate value: dL/dh * (1 - h^2)
            temp = ((1 - self.last_hs[t + 1] ** 2) * d_h)

            # dL/db = dL/dh * (1 - h^2)
            d_bh += temp

            # dL/dWhh = dL/dh * (1 - h^2) * h_{t-1}
            d_Whh += temp @ self.last_hs[t].T

            # dL/dWxh = dL/dh * (1 - h^2) * x
            d_Wxh += temp @ self.last_inputs[t].T

            # Next dL/dh = Whh^T @ (dL/dh * (1 - h^2))
            d_h = self.Whh.T @ temp

        # Clip to prevent exploding gradients.
        for d in [d_Wxh, d_Whh, d_Why, d_bh, d_by]:
            np.clip(d, -1, 1, out=d)

        # Update weights and biases using gradient descent.
        self.Whh -= learn_rate * d_Whh
        self.Wxh -= learn_rate * d_Wxh
        self.Why -= learn_rate * d_Why
        self.bh -= learn_rate * d_bh
        self.by -= learn_rate * d_by
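One way to sanity-check a backward pass like this is to compare it against finite-difference gradients. The sketch below is standalone and makes several assumptions: the sizes, the random seed, the random one-hot inputs, and all function names are hypothetical, and clipping and the weight update are left out so the raw gradients can be compared. It passes dL/dh back one step with the transpose of `Whh`, which is what the chain rule gives for h_t = tanh(Wxh x_t + Whh h_{t-1} + bh).

```python
import numpy as np

rng = np.random.default_rng(0)
H, D, O, T = 4, 3, 2, 5  # hidden size, input size, output size, sequence length
params = {
    'Wxh': rng.normal(size=(H, D)) * 0.5,
    'Whh': rng.normal(size=(H, H)) * 0.5,
    'Why': rng.normal(size=(O, H)) * 0.5,
    'bh': np.zeros((H, 1)),
    'by': np.zeros((O, 1)),
}
xs = [np.eye(D)[:, [rng.integers(D)]] for _ in range(T)]  # random one-hot inputs
target = 1

def loss_and_cache(p):
    # Forward pass: hidden states, softmax probabilities, cross-entropy loss.
    hs = {0: np.zeros((H, 1))}
    for t, x in enumerate(xs):
        hs[t + 1] = np.tanh(p['Wxh'] @ x + p['Whh'] @ hs[t] + p['bh'])
    y = p['Why'] @ hs[T] + p['by']
    exps = np.exp(y - y.max())
    probs = exps / np.sum(exps)
    return -np.log(probs[target, 0]), hs, probs

def analytic_grads(p):
    # Backpropagation through time, without clipping or weight updates.
    _, hs, probs = loss_and_cache(p)
    d_y = probs.copy()
    d_y[target] -= 1
    grads = {k: np.zeros_like(v) for k, v in p.items()}
    grads['Why'] = d_y @ hs[T].T
    grads['by'] = d_y
    d_h = p['Why'].T @ d_y
    for t in reversed(range(T)):
        temp = (1 - hs[t + 1] ** 2) * d_h
        grads['bh'] += temp
        grads['Whh'] += temp @ hs[t].T
        grads['Wxh'] += temp @ xs[t].T
        d_h = p['Whh'].T @ temp  # transpose carries the gradient back one step
    return grads

def numeric_grad(p, name, eps=1e-5):
    # Central finite differences, one parameter entry at a time.
    g = np.zeros_like(p[name])
    for idx in np.ndindex(*p[name].shape):
        old = p[name][idx]
        p[name][idx] = old + eps
        plus = loss_and_cache(p)[0]
        p[name][idx] = old - eps
        minus = loss_and_cache(p)[0]
        p[name][idx] = old
        g[idx] = (plus - minus) / (2 * eps)
    return g

grads = analytic_grads(params)
max_err = max(np.abs(grads[k] - numeric_grad(params, k)).max() for k in params)
print('largest gradient mismatch:', max_err)
```

A mismatch many orders of magnitude below the gradient values themselves suggests the analytic gradients are right; a large mismatch on one parameter usually points to the line that computes it.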

main.py

import random

def processData(data, backprop=True):
    '''
    Returns the RNN's loss and accuracy for the given data.
    - data is a dictionary mapping text to True or False.
    - backprop determines if the backward phase should be run.
    '''
    items = list(data.items())
    random.shuffle(items)

    loss = 0
    num_correct = 0

    for x, y in items:
        inputs = createInputs(x)
        target = int(y)

        # Forward
        out, _ = rnn.forward(inputs)
        probs = softmax(out)

        # Calculate loss / accuracy
        # probs has shape (2, 1); index the scalar so loss stays a plain number.
        loss -= np.log(probs[target, 0])
        num_correct += int(np.argmax(probs) == target)

        if backprop:
            # Build dL/dy
            d_L_d_y = probs
            d_L_d_y[target] -= 1

            # Backward
            rnn.backprop(d_L_d_y)

    return loss / len(data), num_correct / len(data)

main.py

# Training loop
for epoch in range(1000):
    train_loss, train_acc = processData(train_data)

    if epoch % 100 == 99:
        print('--- Epoch %d' % (epoch + 1))
        print('Train:\tLoss %.3f | Accuracy: %.3f' % (train_loss, train_acc))

        test_loss, test_acc = processData(test_data, backprop=False)
        print('Test:\tLoss %.3f | Accuracy: %.3f' % (test_loss, test_acc))

--- Epoch 100

Train: Loss 0.688 | Accuracy: 0.517

Test: Loss 0.700 | Accuracy: 0.500

--- Epoch 200

Train: Loss 0.680 | Accuracy: 0.552

Test: Loss 0.717 | Accuracy: 0.450

--- Epoch 300

Train: Loss 0.593 | Accuracy: 0.655

Test: Loss 0.657 | Accuracy: 0.650

--- Epoch 400

Train: Loss 0.401 | Accuracy: 0.810

Test: Loss 0.689 | Accuracy: 0.650

--- Epoch 500

Train: Loss 0.312 | Accuracy: 0.862

Test: Loss 0.693 | Accuracy: 0.550

--- Epoch 600

Train: Loss 0.148 | Accuracy: 0.914

Test: Loss 0.404 | Accuracy: 0.800

--- Epoch 700

Train: Loss 0.008 | Accuracy: 1.000

Test: Loss 0.016 | Accuracy: 1.000

--- Epoch 800

Train: Loss 0.004 | Accuracy: 1.000

Test: Loss 0.007 | Accuracy: 1.000

--- Epoch 900

Train: Loss 0.002 | Accuracy: 1.000

Test: Loss 0.004 | Accuracy: 1.000

--- Epoch 1000

Train: Loss 0.002 | Accuracy: 1.000

Test: Loss 0.003 | Accuracy: 1.000

https://github.com/vzhou842/rnn-from-scratch

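Learn about Long Short-Term Memory (LSTM) networks, a more powerful and more popular RNN architecture, or about Gated Recurrent Units (GRUs), a well-known variant of the LSTM.

With a proper ML library (such as Tensorflow, Keras, or PyTorch), you can experiment with bigger and better RNNs.

Read about bidirectional RNNs, which process sequences both forwards and backwards so that more information is available to the output layer.

Try word embeddings such as GloVe or Word2Vec, which can be used to turn words into more useful vector representations.

Check out the Natural Language Toolkit (NLTK), a Python library for working with human language data.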

Editor: 于腾凯

Proofreader: 杨学俊
