机器学习-朴素贝叶斯

01贝叶斯方法-背景

- 01 贝叶斯分类:贝叶斯分类算法的总称,这类算法均以贝叶斯定理为基础

- 02 先验概率:根据以往的经验分析得到的概率,我们用P(Y)来代表在没有训练数据前假设Y拥有的初始概率

- 03 后验概率:根据已经发生的事件来分析得到的概率。以P(Y|X)代表假设X成立的情况下观察到Y数据的概率,因为它反映了在看到训练数据X后Y成立的置信度。

- 04 联合概率是指在多元的概率分布中多个随机变量分别满足各自条件的概率。X和Y的联合概率表示为P(X,Y)、P(XY)或者P(X交Y),假设X和Y都服从正态分布,那么P(X<5,Y<0)就是一个联合概率,表示X<5,Y<0两个条件同时成立的概率,表示两个事件同时发生的概率。

01贝叶斯方法

贝叶斯公式(模型)

P ( Y ∣ X ) = P ( X , Y ) P ( X ) = P ( X ∣ Y ) ∗ P ( Y ) P ( X ) P(Y|X)=\frac{P(X,Y)}{P(X)}=\frac{P(X|Y)*P(Y)}{P(X)} P(Y∣X)=P(X)P(X,Y)=P(X)P(X∣Y)∗P(Y)

朴素贝叶斯方法是生成学习方法

02朴素贝叶斯原理

- 01生成模型和判别模型

监 督 学 习 = { 生 成 方 法 , 生 成 模 型 , 由数据直接学习决策函数 Y=f(X)或者条件概率分布P(Y|X)作为预测模即判别模型,基本思想是有限样本条件下建立判别函数,不考虑样本的产生模型,直接研究预测模型 判 别 方 法 , 判 别 模 型 , 由训练数据学习联合概率分布P(X,Y),然后求得后验概率分布P(Y|X)。具体来说,利用训练数据学习P(X|Y)和P(Y)的估计,得到联合概率分布:P(X,Y)=P(Y)P(X|Y),再利用它进行分类。 监督学习= \begin{cases} 生成方法,\text { $生成模型$} ,\text{由数据直接学习决策函数 Y=f(X)或者条件概率分布P(Y|X)作为预测模即判别模型,基本思想是有限样本条件下建立判别函数,不考虑样本的产生模型,直接研究预测模型}\\判别方法,\text{$判别模型$},\text{由训练数据学习联合概率分布P(X,Y),然后求得后验概率分布P(Y|X)。具体来说,利用训练数据学习P(X|Y)和P(Y)的估计,得到联合概率分布:P(X,Y)=P(Y)P(X|Y),再利用它进行分类。} \end{cases} 监督学习={生成方法, 生成模型,由数据直接学习决策函数 Y=f(X)或者条件概率分布P(Y|X)作为预测模即判别模型,基本思想是有限样本条件下建立判别函数,不考虑样本的产生模型,直接研究预测模型判别方法,判别模型,由训练数据学习联合概率分布P(X,Y),然后求得后验概率分布P(Y|X)。具体来说,利用训练数据学习P(X|Y)和P(Y)的估计,得到联合概率分布:P(X,Y)=P(Y)P(X|Y),再利用它进行分类。 - 常见的生成模型有线性回归,逻辑回归,感知机,决策树,支持向量机等等

- 常见的判别模型有朴素贝叶斯,HMM,深度信念网络(DBN)等等

- 02朴素贝叶斯是生成方法

具体来说就是利用训练数据学习P(X|Y)和P(X)的估计,得到联合概率分布: P ( X , Y ) = P ( Y ) ∗ P ( X ∣ Y ) P(X,Y)=P(Y)*P(X|Y) P(X,Y)=P(Y)∗P(X∣Y),概率估计方法可以是极大似然法或者贝叶斯估计。 - 03朴素贝叶斯法的基本假设条件是独立的

P ( X = x ∣ Y = c k ) = P ( x ( 1 ) , . . . , x ( n ) ∣ y k ) = ∑ j = 1 n P ( x ( j ) ∣ Y = c k ) P(X=x|Y=c_{k})=P(x^{(1)},...,x^{(n)}|y^{k})=\sum_{j=1}^nP(x^{(j)}|Y=c_{k}) P(X=x∣Y=ck)=P(x(1),...,x(n)∣yk)=j=1∑nP(x(j)∣Y=ck) - ck代表类别,k代表类别个数。这是一个较强的假设。由于这一假设,模型包含的条件概率的数量大为减少,朴素贝叶斯法的学习与预测大为简化。因而朴素贝叶斯法高效,且易于实现。其缺点是分类的性能不一定很高。

- 04 朴素贝叶斯法利用贝叶斯定理与学到的联合概率模型进行分类预测

我们要求的是P(Y|X),根据生成式模型的定义我们可以求P(X,Y)和P(Y)假设中的特征 是条件独立的,这个假设我们成为朴素贝叶斯假设。(如果给定Z的情况下,X和Y条件独立):

P ( X ∣ Z ) = P ( X ∣ Y , Z ) P(X|Z)=P(X|Y,Z) P(X∣Z)=P(X∣Y,Z)也可以表示为:

P ( X , Y ∣ Z ) = P ( X ∣ Z ) P ( Y ∣ Z ) P(X,Y|Z)=P(X|Z)P(Y|Z) P(X,Y∣Z)=P(X∣Z)P(Y∣Z)

独立性

将输入x分到后验概率最大的类y.

y = a r g m a x c k P ( Y = c k ) ∏ j = 1 n P ( X j = x ( j ) ∣ Y = c k ) y=\underset{c_{k}}{argmax}P(Y=c_{k})\prod_{j=1}^{n}P(X_{j}=x^{(j)}|Y=c_{k}) y=ckargmaxP(Y=ck)j=1∏nP(Xj=x(j)∣Y=ck),后验概率最大值介于0-1之间时,损失函数的期望风险最小,其中 X = x 1 , x 2 , . . . . . x n , 为n维向量的集合 X={x_{1},x_{2},.....x_{n}},\text{为n维向量的集合} X=x1,x2,.....xn,为n维向量的集合 Y = c 1 , c 2 , . . . . . c k , K为类别数 Y={c_{1},c_{2},.....c_{k}},\text{K为类别数} Y=c1,c2,.....ck,K为类别数,训练数据集 T = ( x 1 , y 1 ) , ( x 2 , y 2 ) , . . . . . ( x n , y n ) 由P(X,Y)独立同分布产生 T={(x_{1},y_{1}),(x_{2},y_{2}),.....(x_{n},y_{n})}\text{由P(X,Y)独立同分布产生} T=(x1,y1),(x2,y2),.....(xn,yn)由P(X,Y)独立同分布产生

- 05 朴素贝叶斯法对条件概率分布作了条件独立性假设,由于这是一个较强的假设,朴素贝叶斯法也由此得名,具体的假设是:

P ( X = x ∣ Y = c k ) = P ( X ( 1 ) = x ( 1 ) , X ( 2 ) = x ( 2 ) , . . . . . X ( n ) = x ( n ) ∣ Y = c k ) = ∏ j = 1 n P ( X ( j ) = x ( j ) ∣ Y = c k ) ..........................(1) P(X=x|Y=c_{k})=P(X^{(1)}=x^{(1)},X^{(2)}=x^{(2)},.....X^{(n)}=x^{(n)}|Y=c_{k})=\\[2ex]\prod_{j=1}^{n}P(X^{(j)}=x^{(j)}|Y=c_{k})\text{..........................(1)} P(X=x∣Y=ck)=P(X(1)=x(1),X(2)=x(2),.....X(n)=x(n)∣Y=ck)=j=1∏nP(X(j)=x(j)∣Y=ck)..........................(1) - 06 朴素贝叶斯法分类时,对于给定的输入x,通过学习得到的模型计算后验概率分布P(Y=ck|X=x),将后验概率最大的类作为x的类输出,根据贝叶斯定理:

P ( Y ∣ X ) = P ( X ∣ Y ) P ( Y ) P ( X ) P(Y|X)=\frac{P(X|Y)P(Y)}{P(X)} P(Y∣X)=P(X)P(X∣Y)P(Y)可以计算后验概率为 P ( Y = c k ∣ X = x ) = P ( X = x ∣ Y = c k ) P ( Y = c k ) ∑ k = 1 k P ( X = x ∣ Y = c k ) P ( Y = c k ) ..........................(2) P(Y=c_{k}|X=x)=\\[2ex]\frac{P(X=x|Y=c_{k})P(Y=c_{k})}{\sum_{k=1}^{k}P(X=x|Y=c_{k})P(Y=c_{k})}\text{..........................(2)} P(Y=ck∣X=x)=∑k=1kP(X=x∣Y=ck)P(Y=ck)P(X=x∣Y=ck)P(Y=ck)..........................(2)

即贝叶斯模型 y = f ( x ) = a r g m a x c k ∏ j = 1 n P ( X ( j ) = x ( j ) ∣ Y = c k ) P ( Y = c k ) y=f(x)=\underset{c_{k}}{argmax}\prod_{j=1}^{n}P(X^{(j)}=x^{(j)}|Y=c_{k})P(Y=c_{k}) y=f(x)=ckargmax∏j=1nP(X(j)=x(j)∣Y=ck)P(Y=ck)

03朴素贝叶斯案例

04 朴素贝叶斯代码实现

# -*- coding: utf-8 -*-

"""

=========================

@Time : 2021/12/26 16:30

@Author : yhz

@File : GaussianNB.py

=========================

"""

# 导包

import numpy as np

import pandas as pd

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

from collections import Counter

import math

# 加载数据

def create_data():

iris = load_iris()

df = pd.DataFrame(iris.data, columns=iris.feature_names)

df["label"] = iris.target

df.columns = ['sepal length', 'sepal width', 'petal length', 'petal width', 'label']

data = np.array(df.iloc[:100, :])

# data[:, :-1] 不取最后一列,#data[:, -1]只取最后一列

# print(data[:, -1])

return data[:, :-1], data[:, -1]

class NaiveBayes:

def __init__(self):

self.model = None

# 计算期望

@staticmethod

def mean(X):

return sum(X) / float(len(X))

# 计算标准差

def stdev(self, X):

avg = self.mean(X)

return math.sqrt(sum([pow(x - avg, 2) for x in X]) / float(len(X)))

# 概率密度函数

def gaussian_probability(self, x, mean, stdev):

exponent = math.exp(-(math.pow(x - mean, 2) / (2 * math.pow(stdev, 2))))

return 1 / (math.sqrt((2 * math.pi) * math.pow(stdev, 2))) * exponent

# 处理X_train

def summarize(self, train_data):

summaries = []

for i in zip(*train_data):

summaries = [(self.mean(i), self.stdev(i))]

return summaries

# 分别求出数学期望和标准差

def fit(self, X, y):

labels = list(set(y))

data = {label: [] for label in labels}

for f, label in zip(X, y):

data[label].append(f)

# for label, value in data.items():

# print(value)

self.model = {

label: self.summarize(value)

for label, value in data.items()

}

return 'gaussianNB train done!'

# 计算概率

def calculate_probabilities(self, input_data):

# summaries:{0.0: [(5.0, 0.37),(3.42, 0.40)], 1.0: [(5.8, 0.449),(2.7, 0.27)]}

# input_data:[1.1, 2.2]

probabilities = {}

for label, value in self.model.items():

print(label, value)

probabilities[label] = 1

for i in range(len(value)):

mean, stdev = value[i]

probabilities[label] *= self.gaussian_probability(

input_data[i], mean, stdev)

return probabilities

# 预测

def predict(self, X_test):

# {0.0: 2.9680340789325763e-27, 1.0: 3.5749783019849535e-26}

label = sorted(self.calculate_probabilities(X_test).items(), key=lambda x: x[-1])[-1][0]

return label

# 计算精度

def score(self, X_test, y_test):

right = 0

for X, y in zip(X_test, y_test):

label = self.predict(X)

if label == y:

right += 1

return right / float(len(X_test))

if __name__ == '__main__':

X, y = create_data()

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3)

# print(X_test[0], y_test[0])

model = NaiveBayes()

model.fit(X_train, y_train)

# print(model.predict([4.4, 3.2, 1.3, 0.2]))

# print(model.score(X_test, y_test))

#使用scikit-learn实例

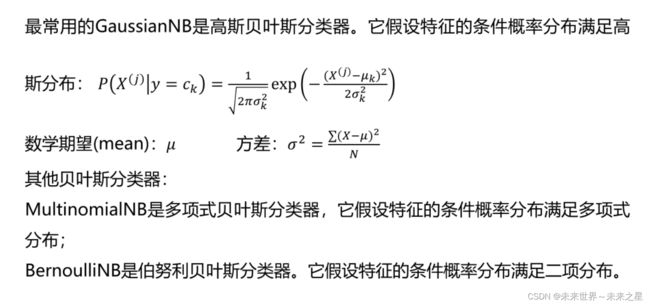

from sklearn.naive_bayes import GaussianNB, BernoulliNB, MultinomialNB

# 高斯模型、伯努利模型和多项式模型

clf = GaussianNB()

clf.fit(X_train, y_train)

print(clf.score(X_test, y_test))

print(clf.predict([[4.4, 3.2, 1.3, 0.2]]))