深度学习训练营第6周好莱坞明星人脸识别

365天深度学习训练营-第6周:好莱坞明星识别

- 本文为365天深度学习训练营 中的学习记录博客

- 参考文章:365天深度学习训练营-第6周:好莱坞明星识别(训练营内部成员可读)

- 原作者:K同学啊|接辅导、项目定制

评论

本项目包含:

1.实验代码、相关说明及结果

2.实验过程记录及总结

主要知识点和目标包括

要求:

使用categorical_crossentropy(多分类的对数损失函数)完成本次选题(完成)

探究不同损失函数的使用场景与代码实现(上一个项目对两种损失函数的异同做了说明,其余的后续有空再做探讨)

拔高(可选):

自己搭建VGG-16网络框架 (完成)

调用官方的VGG-16网络框架(完成)

使用VGG-16算法训练该模型(完成)

探索(难度有点大)

准确率达到60%(完成)

from tensorflow import keras

from tensorflow.keras import models,layers

import os,PIL,pathlib

import numpy as np

import matplotlib.pyplot as plt

import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:

gpu0 = gpus[0] #如果有多个GPU,仅使用第0个GPU

tf.config.experimental.set_memory_growth(gpu0, True) #设置GPU显存用量按需使用

tf.config.set_visible_devices([gpu0],"GPU")

一、查看数据

data_dir = "/home/mw/input/hlw6967/48-data/48-data";

data_dir = pathlib.Path(data_dir)

data_dir

PosixPath(‘/home/mw/input/hlw6967/48-data/48-data’)

image_count = len(list(data_dir.glob('*/*.jpg')))

print("图片总数为",image_count)

图片总数为 1800

roses = list(data_dir.glob('Jennifer Lawrence/*.jpg'))

pic = PIL.Image.open(roses[10])

plt.imshow(pic)

二、 数据处理

1、加载数据

batch_size = 32

image_height = 256

image_weight = 256

train_ds = keras.preprocessing.image_dataset_from_directory(

data_dir,

batch_size=batch_size,

image_size=(image_height,image_weight),

shuffle=True,

seed=123,

subset='training',

validation_split=0.1,

label_mode = 'categorical') #categorical' means that the labels are encoded as a categorical vecto (e.g. for `categorical_crossentropy` loss).

valid_ds = keras.preprocessing.image_dataset_from_directory(

data_dir,

batch_size=batch_size,

image_size=(image_height,image_weight),

shuffle=True,

subset = 'validation',

validation_split=0.1,

seed=123,

label_mode='categorical')

class_name = train_ds.class_names

class_name

[‘Angelina Jolie’,

‘Brad Pitt’,

‘Denzel Washington’,

‘Hugh Jackman’,

‘Jennifer Lawrence’,

‘Johnny Depp’,

‘Kate Winslet’,

‘Leonardo DiCaprio’,

‘Megan Fox’,

‘Natalie Portman’,

‘Nicole Kidman’,

‘Robert Downey Jr’,

‘Sandra Bullock’,

‘Scarlett Johansson’,

‘Tom Cruise’,

‘Tom Hanks’,

‘Will Smith’]

2、数据可视化

plt.figure(figsize=(20,10))

for images,labels in train_ds.take(1):

for i in range(16):

plt.subplot(5,8,i+1)

plt.imshow(images[i].numpy().astype('uint8'))

plt.axis('off')

plt.title(class_name[np.argmax(labels[i])])

3、再次检查数据

for image_batch,label_batch in train_ds:

print(image_batch.shape)

print(label_batch.shape)

break

(32, 256, 256, 3)

(32, 17)

4、配置数据

AUTOTUNE = tf.data.experimental.AUTOTUNE

train_ds = train_ds.cache().shuffle(2000).prefetch(buffer_size=AUTOTUNE)

valid_ds = valid_ds.cache().prefetch(buffer_size=AUTOTUNE)

三、模型搭建

模型搭建VGG16

直接调用

tf.keras.applications.vgg16.VGG16(

include_top=True, #是否使用顶层

weights=‘imagenet’,#是否加载imgenet预训练参数

input_tensor=None,

input_shape=None,#输入向量形状

pooling=None,#max或avg,最大或平均池化层

classes=1000,#如果使用顶层且使用预训练参数,分类数必须为1000,否则可以自己改动

classifier_activation=‘softmax’#分类激活函数

)

from tensorflow.keras.applications.vgg16 import VGG16

model = VGG16(

include_top=True,

weights=None,

input_tensor=None,

pooling=max,

input_shape=(image_height,image_weight,3),

classes = len(class_name),

classifier_activation='softmax'

)

model.summary()

自己搭建

VGG16_model = models.Sequential([

layers.experimental.preprocessing.Rescaling(1./255, input_shape=(image_height,image_weight,3)),

layers.Conv2D(64, (3,3), padding='same',activation='relu',input_shape=(image_height,image_weight,3)),

layers.Conv2D(64, (3,3), padding='same',activation='relu'),

layers.AveragePooling2D(pool_size=(2,2),strides=(2,2)),#128*128*64

layers.Conv2D(128, (3,3), padding='same', activation='relu'),

layers.Conv2D(128, (3,3), padding='same', activation='relu'),

layers.AveragePooling2D(pool_size=(2,2),strides=(2,2)),#64*64*128

layers.Conv2D(256, (3,3), padding='same', activation='relu'),

layers.Conv2D(256, (3,3), padding='same', activation='relu'),

layers.Conv2D(256, (3,3), padding='same', activation='relu'),

layers.MaxPool2D(pool_size=(2,2),strides=(2,2)),

layers.Conv2D(512, (3,3), padding='same', activation='relu'),

layers.Conv2D(512, (3,3), padding='same', activation='relu'),

layers.Conv2D(512, (3,3), padding='same', activation='relu'),

layers.MaxPool2D(pool_size=(2,2),strides=(2,2)),

layers.Conv2D(512, (3,3), padding='same', activation='relu'),

layers.Conv2D(512, (3,3), padding='same', activation='relu'),

layers.Conv2D(512, (3,3), padding='same', activation='relu'),

layers.MaxPool2D(pool_size=(2,2),strides=(2,2)),

layers.Flatten(),

layers.Dense(4096,activation='relu'),

layers.Dropout(0.4),

layers.Dense(1024,activation='relu'),

layers.Dropout(0.4),

layers.Dense(len(class_name),activation='softmax')

])

VGG16_model.summary()

设置动态学习率

initial_learning_rate = 0.0001

lr_schedule = tf.keras.optimizers.schedules.ExponentialDecay(

initial_learning_rate=initial_learning_rate,

decay_steps=51,

decay_rate=0.95,

staircase=True)

optimizer = tf.keras.optimizers.Adam(learning_rate = lr_schedule)

VGG16_model.compile(optimizer = optimizer,

loss=tf.keras.losses.CategoricalCrossentropy(),

metrics=['accuracy'])

设置早停

from tensorflow.keras.callbacks import ModelCheckpoint,EarlyStopping

epochs = 100

checkpointer = ModelCheckpoint('best_model_week6.h5',

monitor = 'val_accuracy',

verbose = 1,

save_best_only = True,

save_weights_only = True)

earlystopper = EarlyStopping(monitor='val_accuracy',

min_delta = 0.001,

patience=20,

verbose=1)

history = VGG16_model.fit(train_ds,

validation_data=valid_ds,

epochs=epochs,

callbacks=[checkpointer,earlystopper])

Epoch 00058: val_accuracy did not improve from 0.47778

51/51 [==============================] - 28s 547ms/step - loss: 7.3273e-04 - accuracy: 1.0000 - val_loss: 6.4886 - val_accuracy: 0.4389

Epoch 00058: early stopping

#对每个卷积层添加正则化项

kernel_regularizer=keras.regularizers.l2(0.005)

#在每个卷积层之间添加BN层

layers.BatchNormalization(),

#将第一、二个全连接层参数分别修改为1024,512

Epoch 00035: val_accuracy did not improve from 0.54444

51/51 [==============================] - 31s 598ms/step - loss: 17.1592 - accuracy: 0.9932 - val_loss: 18.7538 - val_accuracy: 0.5222

Epoch 00035: early stopping

参考同学文章中的方法

https://www.heywhale.com/home/user/profile/619af63785f0af00178b484b

作者爱挠静香下巴

使用预训练的VGG16模型权重

通过github下载权重文件

自己搭建的VGG16模型仅保留Flatten之前的部分,

同时由于官方文件不包含BN层,因此删掉BN层

VGG16_model.load_weights('/home/mw/project/weight/vgg16_weights_tf_dim_ordering_tf_kernels_notop (1).h5')

#冻结前13层网格参数,保证预训练参数不被改变

for layer in VGG16_model.layers[:13]:

layer.trainable=False

model = models.Sequential([

VGG16_model,

layers.Flatten(),

layers.Dense(1024,activation='relu'),

layers.Dropout(0.4),

layers.Dense(512,activation='relu'),

layers.Dropout(0.4),

layers.Dense(len(class_name),activation='softmax')

])

model.summary()

Model: “sequential_1”

Layer (type) Output Shape Param

sequential (Sequential) (None, 8, 8, 512) 14714688

flatten (Flatten) (None, 32768) 0

dense (Dense) (None, 1024) 33555456

dropout (Dropout) (None, 1024) 0

dense_1 (Dense) (None, 512) 524800

dropout_1 (Dropout) (None, 512) 0

dense_2 (Dense) (None, 17) 8721

Total params: 48,803,665

Trainable params: 43,528,209

Non-trainable params: 5,275,456

Epoch 00039: val_accuracy did not improve from 0.60556

51/51 [==============================] - 12s 229ms/step - loss: 4.7013 - accuracy: 0.9969 - val_loss: 6.4816 - val_accuracy: 0.6056

Epoch 00039: early stopping

模型 性能出现显著提高

四、模型检验

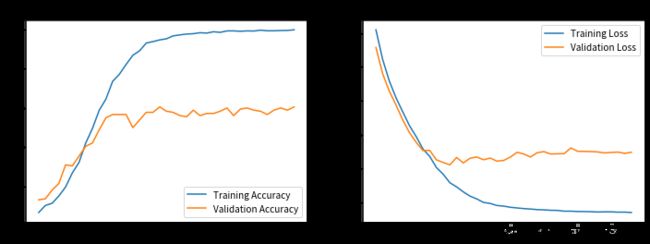

acc = history.history['accuracy']

val_acc = history.history['val_accuracy']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs_range = range(len(loss))

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, acc, label='Training Accuracy')

plt.plot(epochs_range, val_acc, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, loss, label='Training Loss')

plt.plot(epochs_range, val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

指定图片预测

img = PIL.Image.open('/home/mw/input/hlw6967/48-data/48-data/Jennifer Lawrence/001_21a7d5e6.jpg')

plt.imshow(img)

img_array = np.array(img)

model.load_weights('/home/mw/project/best_model_week6a.h5')

img_array = tf.image.resize(img_array,size=(256,256))

img_array = tf.expand_dims(img_array,0) #(1,256,256,3)

prediction = model.predict(img_array)

print('预测结果为',class_name[np.argmax(prediction)])

五、总结

该数据集直接使用VGG16模型进行分类训练效果很差,存在严重的过拟合现象。初始测试集准确率仅0.43。

通过添加BN层,正则化(作用明显),减少全连接层输出的方法可以适当提高测试集准确率,但也仅有0.5做优。

通过使用官方预训练的权重数据能够有效提高模型准确率,可使测试集准确率超过0.6但过拟合的情况仍比较严重。

后续可以试着在全连接层添加BN层可能有所改善