[BasicNet Series: 6] MobileNet v1 and v2: Paper Notes and PyTorch Code Analysis

1. MobileNet V1

MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications

Reference:

https://zhuanlan.zhihu.com/p/33075914

1.1 Prior Work

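- What problem does it solve?

Deep learning has shown great advantages in tasks such as image classification, object detection, and image segmentation. However, its heavy cost in computation, storage, and energy makes it hard to deploy in some real-world settings such as mobile or embedded devices.

Existing work falls into two directions:

- Compressing pretrained models.

One way to obtain a small network is to shrink, factorize, or compress a pretrained network, for example with product quantization, hashing, pruning, vector quantization, and Huffman coding. Various factorizations have also been proposed to speed up pretrained networks. Another way to train a small network is distillation, where a large network guides a small one; this is complementary to the paper's method and is discussed further later.

- Designing small models directly.

For example, Flattened networks build a model from fully factorized convolutions and show the potential of fully factorized networks; Factorized Networks introduce a similar factorized convolution together with topological connections; the Xception network shows how to scale up depthwise separable convolutions to outperform Inception V3 networks; SqueezeNet uses a bottleneck design to build a small network.

Common techniques for making networks smaller:

(1) Kernel factorization: replace an N×N kernel with a 1×N kernel followed by an N×1 kernel

(2) Bottleneck structures, with SqueezeNet as the representative example

(3) Storing weights at low precision, for example Deep Compression

(4) Pruning redundant kernels and Huffman coding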
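To make item (1) concrete, here is a minimal weight-count sketch. The helper names and the 64-channel example are illustrative assumptions, and the intermediate width between the 1×N and N×1 layers is assumed to equal the output width.

```python
def conv_weights(kernel_h, kernel_w, in_ch, out_ch):
    """Number of weights in a convolution layer (bias ignored)."""
    return kernel_h * kernel_w * in_ch * out_ch


def factorized_weights(n, in_ch, out_ch):
    """1xN conv followed by an Nx1 conv.

    Assumption: the intermediate feature map has out_ch channels.
    """
    return conv_weights(1, n, in_ch, out_ch) + conv_weights(n, 1, out_ch, out_ch)


full = conv_weights(3, 3, 64, 64)       # one 3x3 layer
split = factorized_weights(3, 64, 64)   # 1x3 layer + 3x1 layer
print(full, split, split / full)
```

For N=3 the saving is modest (2N weights per channel pair instead of N²); it grows with larger kernels.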

- Goal of MobileNet

Reduce model size (number of parameters) and improve speed (low latency) while maintaining accuracy.

1.2 Network

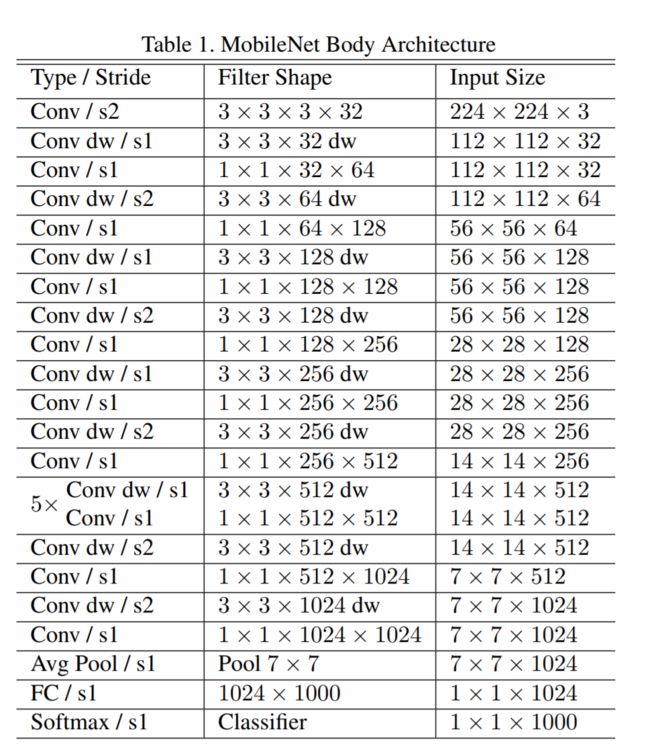

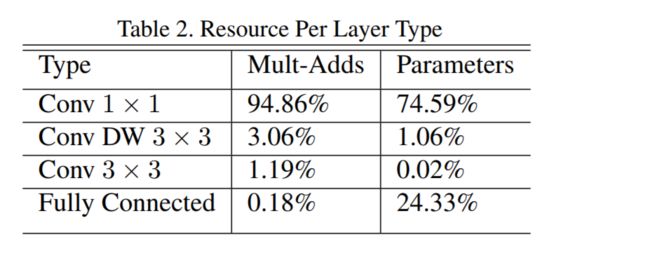

In MobileNet, about 95% of the computation and 75% of the parameters are in 1x1 convolutions.
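These shares can be checked with a rough count. The sketch below reuses the channel and stride plan from the `cfg` list in the PyTorch code of section 1.5, but assumes the ImageNet setting from the paper (224×224 input, stride-2 first convolution, 1000 classes) rather than the CIFAR-sized model printed later. BatchNorm parameters and biases are ignored, and the function name is hypothetical.

```python
# (out_channels, stride) of the 13 depthwise separable blocks
CFG = [(64, 1), (128, 2), (128, 1), (256, 2), (256, 1), (512, 2),
       (512, 1), (512, 1), (512, 1), (512, 1), (512, 1), (1024, 2), (1024, 1)]


def mobilenet_v1_cost(input_size=224, num_classes=1000):
    """Return (mult-adds, weights) per layer type; BN and biases ignored."""
    madds = {"conv3x3": 0, "depthwise": 0, "pointwise": 0, "fc": 0}
    params = dict.fromkeys(madds, 0)

    size = input_size // 2              # assumed stride-2 first convolution
    in_ch = 32
    madds["conv3x3"] += 3 * 3 * 3 * in_ch * size * size
    params["conv3x3"] += 3 * 3 * 3 * in_ch

    for out_ch, stride in CFG:
        size //= stride                 # the depthwise conv does the downsampling
        madds["depthwise"] += 3 * 3 * in_ch * size * size
        params["depthwise"] += 3 * 3 * in_ch
        madds["pointwise"] += in_ch * out_ch * size * size
        params["pointwise"] += in_ch * out_ch
        in_ch = out_ch

    madds["fc"] += in_ch * num_classes
    params["fc"] += in_ch * num_classes
    return madds, params


madds, params = mobilenet_v1_cost()
pointwise_madd_share = madds["pointwise"] / sum(madds.values())
pointwise_param_share = params["pointwise"] / sum(params.values())
print(f"{pointwise_madd_share:.2%} of mult-adds, {pointwise_param_share:.2%} of weights")
```

Under these assumptions the two shares should land close to the 95% and 75% quoted above.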

1.3 ⭐Depthwise Separable Convolution

The basic building block of MobileNet is the depthwise separable convolution, a variant of the structure used in Xception.

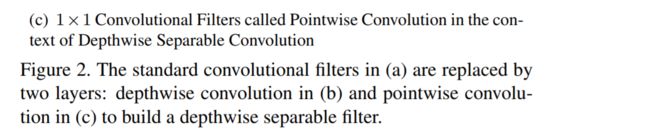

It can be factorized into two smaller operations: a depthwise convolution and a pointwise convolution.

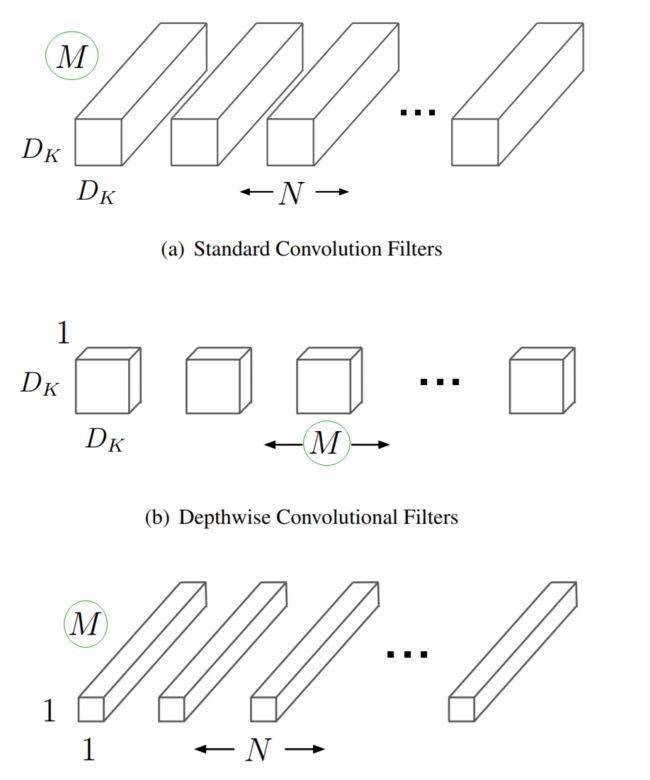

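The kernels in figure (a) are the usual 3D convolution. The depthwise separable version replaces them with a 2D convolution applied channel by channel (figure b), followed by a 3D 1×1 convolution (figure c).

- Depthwise convolution differs from standard convolution. In a standard convolution each kernel is applied across all input channels, whereas a depthwise convolution uses a separate kernel for each input channel. One kernel corresponds to one input channel, so depthwise convolution operates at the depth (channel) level.

- Pointwise convolution is just an ordinary convolution with a 1x1 kernel.

The depthwise convolution filters each input channel separately, and the pointwise convolution then combines those outputs. The overall effect is close to that of a standard convolution, but the computation and the number of parameters drop substantially.

- Computational cost

M is the number of input channels

D_F is the width and height of the input feature map

D_K is the width and height of the kernel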

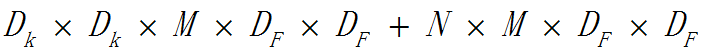

- If a standard convolution layer uses N kernels (N output channels), its computational cost is:

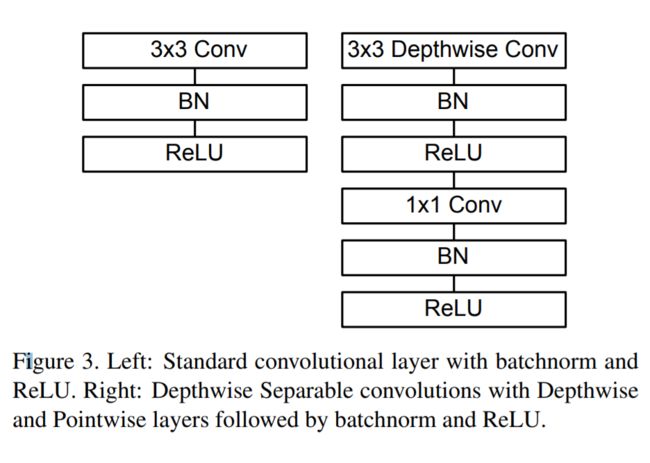
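As a quick check of how the two costs compare, here is a minimal sketch using the symbols defined above (D_K, M, N, D_F). It assumes the output feature map has the same spatial size as the input, and the 512-channel, 14×14 layer is only an illustrative choice.

```python
def standard_conv_cost(dk, m, n, df):
    """Mult-adds of a standard convolution: D_K * D_K * M * N * D_F * D_F."""
    return dk * dk * m * n * df * df


def separable_conv_cost(dk, m, n, df):
    """Mult-adds of a depthwise conv followed by a 1x1 pointwise conv."""
    depthwise = dk * dk * m * df * df
    pointwise = m * n * df * df
    return depthwise + pointwise


dk, m, n, df = 3, 512, 512, 14
ratio = separable_conv_cost(dk, m, n, df) / standard_conv_cost(dk, m, n, df)
print(ratio, 1 / n + 1 / dk ** 2)   # the ratio simplifies to 1/N + 1/D_K^2
```

With 3×3 kernels the ratio is dominated by the 1/9 term, so the separable layer needs roughly 8 to 9 times fewer mult-adds.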

Architecture

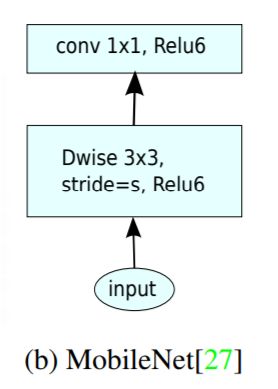

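The left side of the figure below shows how a conventional 3D convolution is typically used; the right side shows the depthwise version.

The depthwise convolution and the 1x1 convolution that follows it are treated as two separate modules, and each is followed by Batch Normalization and a nonlinear activation.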

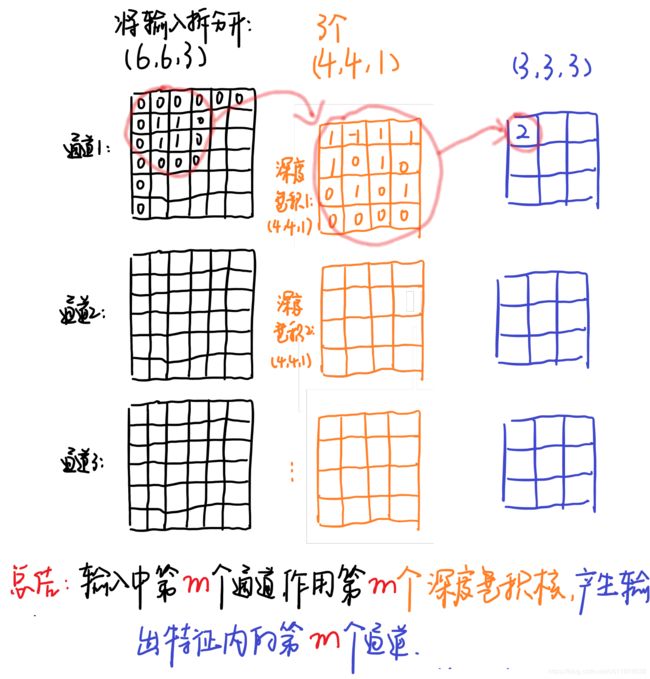

Example

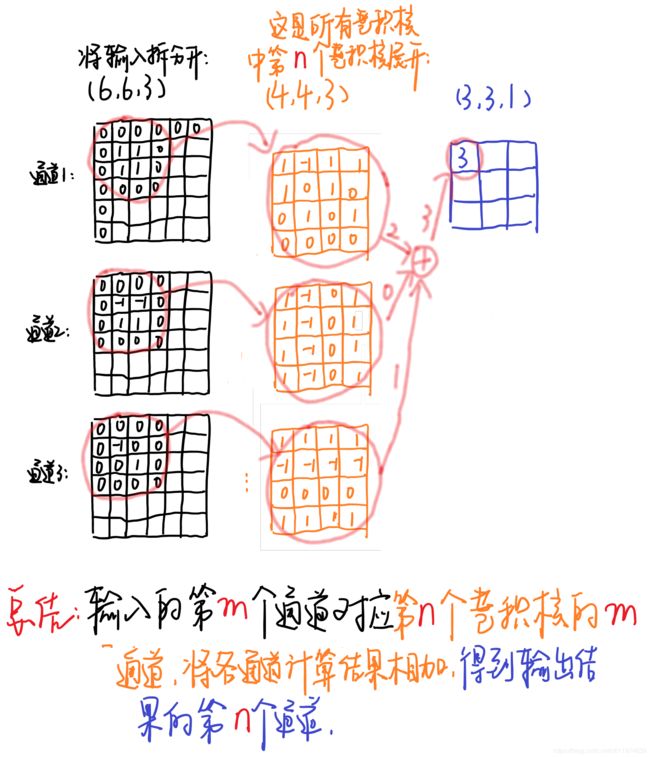

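The input image has size (6,6,3). The original convolution uses a (4,4,3,5) kernel tensor (4×4 kernel size, 3 kernel channels, 5 kernels) with stride=1 and no padding. The output spatial size is (6-4)/1+1=3, so the output feature map is (3,3,5).

Take the n-th kernel of the standard convolution, of size (4,4,3). The standard convolution proceeds as sketched below (the bias is omitted):

The black input of size (6,6,3) is matched with the n-th kernel. Each input channel is convolved with the corresponding kernel channel, and the results are summed to give the final output 2+0+1=3. (This is the usual convolution; note that the kernel must have the same number of channels as the input, which is 3 here.)

For the depthwise separable convolution, the standard (4,4,3,5) convolution is factorized into:

Depthwise part: size (4,4,1,3), applied to each input channel, giving an output feature map of (3,3,3)

Pointwise part: size (1,1,3,5), applied to the depthwise output feature map, giving the final output (3,3,5)

The depthwise convolution in this example proceeds as sketched below:

The input has 3 channels, so there are 3 depthwise kernels of size (4,4,1). The convolution yields 3 results of size (3,3,1), which are stacked in channel order to give the output (3,3,3).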
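The numbers in this example can be reproduced with a few lines of arithmetic. This is only a counting sketch (no actual convolution is performed), and the variable names are illustrative.

```python
def out_size(in_size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution."""
    return (in_size + 2 * padding - kernel) // stride + 1


h = out_size(6, 4)                      # (6 - 4) / 1 + 1

# Weights
standard_weights = 4 * 4 * 3 * 5        # (4,4,3,5)
depthwise_weights = 4 * 4 * 1 * 3       # (4,4,1,3)
pointwise_weights = 1 * 1 * 3 * 5       # (1,1,3,5)

# Multiplications: one per weight per output position
standard_mults = h * h * standard_weights
separable_mults = h * h * (depthwise_weights + pointwise_weights)

print(h, standard_weights, depthwise_weights + pointwise_weights)
print(standard_mults, separable_mults, separable_mults / standard_mults)
```

The ratio matches 1/N + 1/D_K² with N=5 and D_K=4, that is 1/5 + 1/16.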

1.4 Width and Resolution Multiplier

Two multipliers are introduced to obtain smaller and faster models.

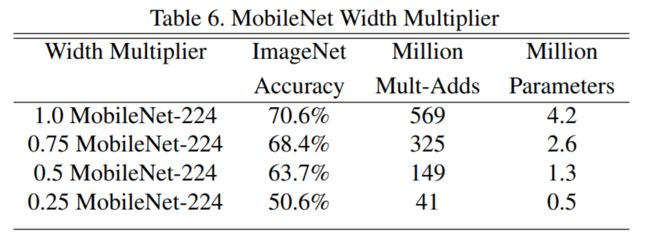

- Width Multiplier: Thinner Models

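The width multiplier α is a number in (0,1] that acts on the channel counts of the network. It is the ratio between the number of kernels each module uses in the new network and the number used in the baseline MobileNet. For a depthwise plus 1x1 layer, the computational cost becomes $D_K \cdot D_K \cdot \alpha M \cdot D_F \cdot D_F + \alpha M \cdot \alpha N \cdot D_F \cdot D_F$.

Common settings for α are 1, 0.75, 0.5, and 0.25; α = 1 is the baseline MobileNet. The width multiplier reduces the computation and the number of parameters by roughly a factor of α².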

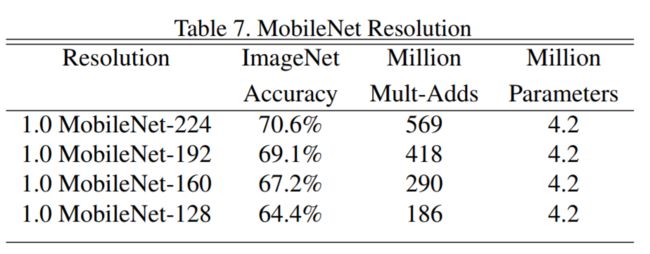

- Resolution Multiplier: Reduced Representation

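The resolution multiplier β (written ρ in the paper) also **lies in (0,1]**. It is a reduction factor applied to the input size of every module; put simply, it shrinks the input image and therefore every feature map produced from it. Combined with the width multiplier α, the cost of a depthwise plus 1x1 layer becomes $D_K \cdot D_K \cdot \alpha M \cdot \beta D_F \cdot \beta D_F + \alpha M \cdot \alpha N \cdot \beta D_F \cdot \beta D_F$.

Effect of different β values on the accuracy and computation of the baseline MobileNet (α fixed)

After the network structure is changed with the width or resolution multiplier, the new network has to be trained from random initialization.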
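The effect of the two multipliers on a single layer can be sketched as follows. The function name and the 512-channel, 14×14 layer are illustrative assumptions; channel counts and sizes are truncated to integers.

```python
def separable_cost(dk, m, n, df, alpha=1.0, beta=1.0):
    """Mult-adds of a depthwise + pointwise layer under width multiplier
    alpha and resolution multiplier beta."""
    m_a, n_a = int(alpha * m), int(alpha * n)   # thinner layer
    df_b = int(beta * df)                       # smaller feature map
    return dk * dk * m_a * df_b * df_b + m_a * n_a * df_b * df_b


base = separable_cost(3, 512, 512, 14)
half_width = separable_cost(3, 512, 512, 14, alpha=0.5)
half_res = separable_cost(3, 512, 512, 14, beta=0.5)

print(half_width / base)   # close to alpha^2; the depthwise term only scales by alpha
print(half_res / base)     # beta^2
```

The width multiplier gives slightly less than a full α² reduction because the depthwise term scales linearly with α, while the resolution multiplier scales both terms by β².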

1.5 Module

Training

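The authors train MobileNet in TensorFlow with the RMSprop optimizer. Because smaller networks are less prone to severe overfitting, they use little data augmentation. They also drop some techniques that are common when training large networks, such as auxiliary loss heads and heavy random cropping of the input images.

Depthwise kernels have few parameters, and the authors found it best to apply little or no weight decay to them.

PyTorch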

    import torch.nn as nn
    import torch.nn.functional as F


    class Block(nn.Module):
        '''Depthwise conv + Pointwise conv'''

        def __init__(self, in_planes, out_planes, stride=1):
            super(Block, self).__init__()
            # Depthwise 3x3: groups=in_planes gives one filter per input channel
            self.conv1 = nn.Conv2d(in_planes, in_planes, kernel_size=3, stride=stride, padding=1, groups=in_planes, bias=False)
            self.bn1 = nn.BatchNorm2d(in_planes)
            # Pointwise 1x1: mixes channels and sets the output width
            self.conv2 = nn.Conv2d(in_planes, out_planes, kernel_size=1, stride=1, padding=0, bias=False)
            self.bn2 = nn.BatchNorm2d(out_planes)

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)))
            out = F.relu(self.bn2(self.conv2(out)))
            return out


    class MobileNet(nn.Module):
        # (128,2) means conv planes=128, conv stride=2, by default conv stride=1
        cfg = [64, (128,2), 128, (256,2), 256, (512,2), 512, 512, 512, 512, 512, (1024,2), 1024]

        def __init__(self, num_classes=10):
            super(MobileNet, self).__init__()
            # Stride 1 in the first conv: this variant takes 32x32 inputs (see the size trace below)
            self.conv1 = nn.Conv2d(3, 32, kernel_size=3, stride=1, padding=1, bias=False)
            self.bn1 = nn.BatchNorm2d(32)
            self.layers = self._make_layers(in_planes=32)
            self.linear = nn.Linear(1024, num_classes)

        def _make_layers(self, in_planes):
            layers = []
            for x in self.cfg:
                # An int entry is out_planes with stride 1; a tuple is (out_planes, stride)
                out_planes = x if isinstance(x, int) else x[0]
                stride = 1 if isinstance(x, int) else x[1]
                layers.append(Block(in_planes, out_planes, stride))
                in_planes = out_planes
            return nn.Sequential(*layers)

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)))
            out = self.layers(out)
            out = F.avg_pool2d(out, 2)          # 2x2 feature map -> 1x1 for 32x32 inputs
            out = out.view(out.size(0), -1)     # flatten to [batch_size, 1024]
            out = self.linear(out)
            return out


    net = MobileNet()
    print(net)

==> Building model..

net=MobileNet(

(conv1): Conv2d(3, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(layers): Sequential(

(0): Block(

(conv1): Conv2d(32, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=32, bias=False)

(bn1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(32, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(1): Block(

(conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), groups=64, bias=False)

(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(64, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(2): Block(

(conv1): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=128, bias=False)

(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(3): Block(

(conv1): Conv2d(128, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), groups=128, bias=False)

(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(128, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(4): Block(

(conv1): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=256, bias=False)

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(5): Block(

(conv1): Conv2d(256, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), groups=256, bias=False)

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(256, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(6): Block(

(conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=512, bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(512, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(7): Block(

(conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=512, bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(512, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(8): Block(

(conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=512, bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(512, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(9): Block(

(conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=512, bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(512, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(10): Block(

(conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=512, bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(512, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(11): Block(

(conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), groups=512, bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(512, 1024, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(12): Block(

(conv1): Conv2d(1024, 1024, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=1024, bias=False)

(bn1): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(1024, 1024, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(linear): Linear(in_features=1024, out_features=10, bias=True)

)

size(): tensor sizes at each stage of one forward pass

Epoch: 0

[ batch_size , out_channels , h , w ]

in.x=torch.Size([128, 3, 32, 32])

conv1.out=torch.Size([128, 32, 32, 32])

x=torch.Size([128, 32, 32, 32])

conv1.out=torch.Size([128, 32, 32, 32])

conv2.out=torch.Size([128, 64, 32, 32])

x=torch.Size([128, 64, 32, 32])

conv1.out=torch.Size([128, 64, 16, 16])

conv2.out=torch.Size([128, 128, 16, 16])

x=torch.Size([128, 128, 16, 16])

conv1.out=torch.Size([128, 128, 16, 16])

conv2.out=torch.Size([128, 128, 16, 16])

x=torch.Size([128, 128, 16, 16])

conv1.out=torch.Size([128, 128, 8, 8])

conv2.out=torch.Size([128, 256, 8, 8])

x=torch.Size([128, 256, 8, 8])

conv1.out=torch.Size([128, 256, 8, 8])

conv2.out=torch.Size([128, 256, 8, 8])

x=torch.Size([128, 256, 8, 8])

conv1.out=torch.Size([128, 256, 4, 4])

conv2.out=torch.Size([128, 512, 4, 4])

x=torch.Size([128, 512, 4, 4])

conv1.out=torch.Size([128, 512, 4, 4])

conv2.out=torch.Size([128, 512, 4, 4])

x=torch.Size([128, 512, 4, 4])

conv1.out=torch.Size([128, 512, 4, 4])

conv2.out=torch.Size([128, 512, 4, 4])

x=torch.Size([128, 512, 4, 4])

conv1.out=torch.Size([128, 512, 4, 4])

conv2.out=torch.Size([128, 512, 4, 4])

x=torch.Size([128, 512, 4, 4])

conv1.out=torch.Size([128, 512, 4, 4])

conv2.out=torch.Size([128, 512, 4, 4])

x=torch.Size([128, 512, 4, 4])

conv1.out=torch.Size([128, 512, 4, 4])

conv2.out=torch.Size([128, 512, 4, 4])

x=torch.Size([128, 512, 4, 4])

conv1.out=torch.Size([128, 512, 2, 2])

conv2.out=torch.Size([128, 1024, 2, 2])

x=torch.Size([128, 1024, 2, 2])

conv1.out=torch.Size([128, 1024, 2, 2])

conv2.out=torch.Size([128, 1024, 2, 2])

layers.out=torch.Size([128, 1024, 2, 2])

avg_pool2d.out=torch.Size([128, 1024, 1, 1])

out=torch.Size([128, 1024])

linear=torch.Size([128, 10])

2. MobileNet V2

Inverted Residuals and Linear Bottlenecks: Mobile Networks for Classification, Detection and Segmentation

2.1 V1 Disadvantage

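- Structure: V1 is a very simple, old-fashioned straight-pipe design similar to VGG, with no Concat or Eltwise+ operations to fuse features.

- Depthwise: each depthwise kernel has far fewer dimensions than a regular conv kernel. With such a small kernel dimension plus the effect of ReLU, neuron outputs easily become 0, so those kernels are effectively dead. The gradient of ReLU is 0 wherever its output is 0, so a unit that gets stuck at zero output cannot recover. This problem has also been reported to get worse under fixed-point, low-precision training.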

2.2 V2 innovation

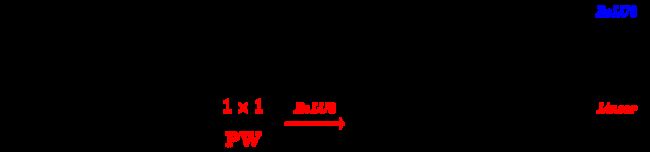

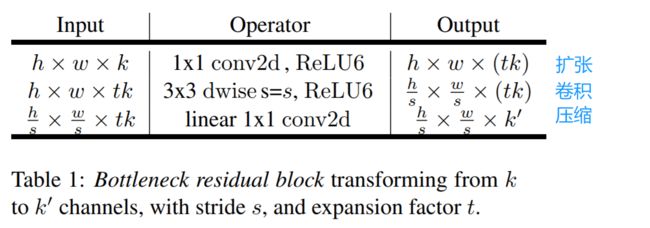

- Inverted residuals

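A standard residual block first applies a 1×1 conv layer to "squeeze" the number of feature-map channels, then a 3×3 conv layer, and finally a 1×1 conv layer to "expand" the channels back. That is, squeeze first and expand last.

An inverted residual block does the opposite: expand first and squeeze last.

- Linear bottlenecks

To keep ReLU from destroying features, the 1×1 conv just before the element-wise sum of the residual block no longer uses ReLU.

- Difference between V1 and V2

ReLU6: an ordinary ReLU whose output is capped at 6. The cap keeps good numerical resolution at the low precision (float16/int8) used on mobile devices. If the ReLU range is left unbounded, outputs range from 0 to positive infinity; when activations are very large and spread over a wide range, low-precision float16/int8 cannot represent them accurately, which costs accuracy.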
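The resolution argument can be illustrated with a toy example. `relu6` follows the definition above; `quantize_uint8` is a hypothetical, deliberately simplified uniform 8-bit quantizer and not how any particular framework quantizes activations.

```python
def relu6(x):
    """ReLU capped at 6."""
    return min(max(x, 0.0), 6.0)


def quantize_uint8(x, max_value):
    """Toy uniform quantizer: 256 levels spread over [0, max_value]."""
    step = max_value / 255
    return round(x / step) * step


x = 1.3
bounded = quantize_uint8(relu6(x), max_value=6.0)      # range fixed by ReLU6
unbounded = quantize_uint8(max(x, 0.0), max_value=600.0)  # assumed wide ReLU range

print(relu6(-2.0), relu6(3.5), relu6(100.0))
print(abs(bounded - x), abs(unbounded - x))
```

With the range capped at 6 the quantization step is 6/255, so small activations survive; with an assumed range of 600 the step is 100 times coarser and the same value is badly distorted.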

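- What V1 and V2 share

Both use a depthwise (DW) convolution paired with a pointwise (PW) convolution to extract features.

In theory this reduces the time and space complexity of a convolution layer several-fold.

- How V1 and V2 differ

- V2 adds a PW convolution before the DW convolution.

Reason: a DW convolution cannot change the number of channels, and extracting features in a low-dimensional space does not work well, so V2 expands the channels first.

- V2 removes the activation function after the second PW convolution.

Reason: an activation function adds useful nonlinearity in a high-dimensional space but destroys features in a low-dimensional one, and the main job of the second PW convolution is to reduce dimensionality.

- Difference between ResNet and V2

- What MobileNet V2 and ResNet share

- Following ResNet, MobileNet V2 uses the 1 × 1 → 3 × 3 → 1 × 1 pattern.

- Following ResNet, MobileNet V2 also uses a shortcut that adds the input to the output.

- How MobileNet V2 and ResNet differ

- ResNet extracts features with standard convolutions; MobileNet always uses DW convolutions.

- ResNet first reduces dimensionality (0.25×), convolves, then expands; MobileNet V2 first expands (6×), convolves, then reduces.

The ResNet block is hourglass-shaped, whereas the MobileNet V2 block is spindle-shaped.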

2.3 bottleneck

2.4 Network

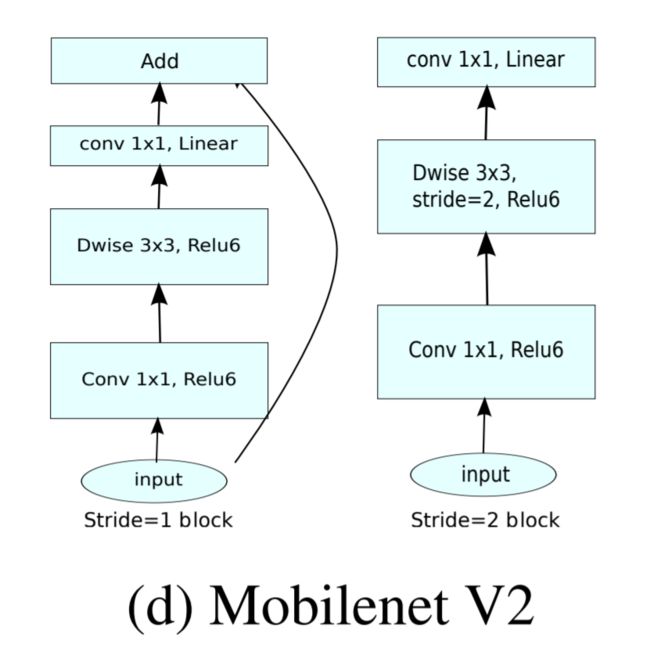

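The block differs slightly between stride=1 and stride=2, mainly so that the dimensions match for the shortcut; for that reason no shortcut is used when stride=2. See the figure below:

❤ Question: downsampling

Apart from the final avgpool, the network does not use pooling for downsampling; it downsamples with stride=2 instead.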

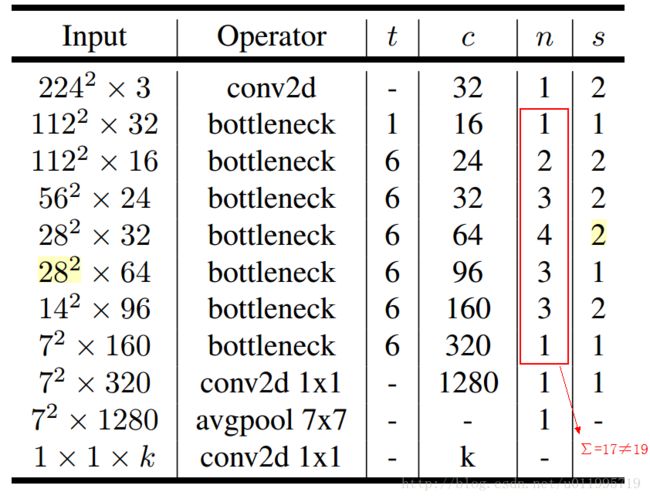

structure

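where:

t is the expansion factor of the input channels (how many times wider the middle part is than the input)

n is the number of times the module is repeated

c is the number of output channels

s is the stride of the first repetition of the module (later repetitions use stride 1)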
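To see how (t, c, n, s) rows turn into individual bottleneck blocks, here is a minimal sketch. The `CFG` values are read off the printed model in section 2.5, which is a CIFAR-sized variant (its 24-channel stage uses stride 1); they are not copied from the paper's table. The function name and the shortcut labels are illustrative.

```python
# (expansion t, out_channels c, repeats n, stride s)
CFG = [(1, 16, 1, 1), (6, 24, 2, 1), (6, 32, 3, 2), (6, 64, 4, 2),
       (6, 96, 3, 1), (6, 160, 3, 2), (6, 320, 1, 1)]


def expand_cfg(cfg, in_ch=32):
    """Expand (t, c, n, s) rows into one dict per bottleneck block."""
    blocks = []
    for t, c, n, s in cfg:
        for i in range(n):
            stride = s if i == 0 else 1     # only the first repeat downsamples
            if stride != 1:
                shortcut = "none"           # stride 2: no shortcut
            elif in_ch == c:
                shortcut = "identity"
            else:
                shortcut = "1x1 conv"       # projection, as in the printed model
            blocks.append({"in": in_ch, "mid": t * in_ch, "out": c,
                           "stride": stride, "shortcut": shortcut})
            in_ch = c
    return blocks


blocks = expand_cfg(CFG)
for i, b in enumerate(blocks):
    print(i, b)
```

The 17 entries line up with blocks (0) to (16) in the printed model: `mid` is the width of the depthwise convolution and `out` is the width after the linear 1×1 projection.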

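Two things in the table look wrong:

- Row 5, that is bottlenecks 7 to 10, has stride=2, so the resolution should drop from 28 to 14; if the resolution is not the error, then the stride should be 1;

- The paper says 19 bottlenecks are used in total, but the table only lists 17.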

Network structure diagram

❤ Question: shortcut

I think this diagram is wrong, because it shows no shortcut when stride=1 and the input and output channel counts differ.

2.5 Module

net=MobileNetV2(

(conv1): Conv2d(3, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(layers): Sequential(

(0): Block(

(conv1): Conv2d(32, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(32, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=32, bias=False)

(bn2): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(32, 16, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(16, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential(

(0): Conv2d(32, 16, kernel_size=(1, 1), stride=(1, 1), bias=False)

(1): BatchNorm2d(16, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(1): Block(

(conv1): Conv2d(16, 96, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(96, 96, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=96, bias=False)

(bn2): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(96, 24, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential(

(0): Conv2d(16, 24, kernel_size=(1, 1), stride=(1, 1), bias=False)

(1): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(2): Block(

(conv1): Conv2d(24, 144, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(144, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(144, 144, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=144, bias=False)

(bn2): BatchNorm2d(144, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(144, 24, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(3): Block(

(conv1): Conv2d(24, 144, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(144, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(144, 144, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), groups=144, bias=False)

(bn2): BatchNorm2d(144, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(144, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(4): Block(

(conv1): Conv2d(32, 192, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(192, 192, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=192, bias=False)

(bn2): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(192, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(5): Block(

(conv1): Conv2d(32, 192, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(192, 192, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=192, bias=False)

(bn2): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(192, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(6): Block(

(conv1): Conv2d(32, 192, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(192, 192, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), groups=192, bias=False)

(bn2): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(192, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(7): Block(

(conv1): Conv2d(64, 384, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(384, 384, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=384, bias=False)

(bn2): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(384, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(8): Block(

(conv1): Conv2d(64, 384, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(384, 384, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=384, bias=False)

(bn2): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(384, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(9): Block(

(conv1): Conv2d(64, 384, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(384, 384, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=384, bias=False)

(bn2): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(384, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(10): Block(

(conv1): Conv2d(64, 384, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(384, 384, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=384, bias=False)

(bn2): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(384, 96, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential(

(0): Conv2d(64, 96, kernel_size=(1, 1), stride=(1, 1), bias=False)

(1): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(11): Block(

(conv1): Conv2d(96, 576, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(576, 576, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=576, bias=False)

(bn2): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(576, 96, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(12): Block(

(conv1): Conv2d(96, 576, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(576, 576, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=576, bias=False)

(bn2): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(576, 96, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(13): Block(

(conv1): Conv2d(96, 576, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(576, 576, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), groups=576, bias=False)

(bn2): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(576, 160, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(14): Block(

(conv1): Conv2d(160, 960, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(960, 960, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=960, bias=False)

(bn2): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(960, 160, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(15): Block(

(conv1): Conv2d(160, 960, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(960, 960, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=960, bias=False)

(bn2): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(960, 160, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential()

)

(16): Block(

(conv1): Conv2d(160, 960, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(960, 960, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=960, bias=False)

(bn2): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(960, 320, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(320, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(shortcut): Sequential(

(0): Conv2d(160, 320, kernel_size=(1, 1), stride=(1, 1), bias=False)

(1): BatchNorm2d(320, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

)

(conv2): Conv2d(320, 1280, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn2): BatchNorm2d(1280, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(linear): Linear(in_features=1280, out_features=10, bias=True)

)