激光雷达和相机联合标定之-but_velodyne_camera

1. 概述

1.该方法适用于相机和激光雷达朝向方向相同或者近似相同的状态,相机和激光雷达之间的R矩阵较小,主要标定误差为T矩阵。 /ps:可以先将激光雷达旋转到与相机安装坐标系相似的世界坐标系下,再使用该方法进行标定。/

2.该方法分为两个主要步骤,T矩阵标定;R|T矩阵联合标定

3.其中T矩阵标定基于相机的小孔成像原理中,世界坐标系下物体与像素坐标系之间的相似三角形关系获得。

4.R|T矩阵联合标定通过在小范围内迭代求解获得。

2.T矩阵标定

2.1 图像特征圆提取

2.1.1 边缘检测

源代码中提供了3种不同的边缘检测算法:

- sobel边缘检测

cv::Mat ImageEdge::computeEdgeImage()

{

cv::Mat gray_img;

if(this->m_img.channels()>1)

{

gray_img = cv::Mat(this->m_img.size(), CV_8UC1);

cv::cvtColor(this->m_img, gray_img, CV_BGR2GRAY);

}

else

{

this->m_img.copyTo(gray_img);

}

cv::Mat edges;

cv::Mat grad_x, grad_y;

cv::Mat abs_grad_x, abs_grad_y;

cv::Sobel(gray_img, grad_x, CV_16S, 1, 0, 3);

cv::convertScaleAbs(grad_x, abs_grad_x);

cv::Sobel(gray_img, grad_y, CV_16S, 0, 1, 3);

cv::convertScaleAbs(grad_y, abs_grad_y);

cv::addWeighted(abs_grad_x, 0.5, abs_grad_y, 0.5, 0, edges);

if(m_write_img)

{

cv::imwrite(m_imgsave_path+"/edge_img.jpg",edges);

}

return edges;

}

- 自适应阈值分割检测

cv::Mat ImageEdge::segmetation()

{

cv::Mat src_gray;

if(this->m_img.channels() > 1)

{

cv::cvtColor(m_img, src_gray, CV_BGR2GRAY);

}

else

{

src_gray = m_img;

}

cv::resize(src_gray, src_gray, cv::Size(src_gray.rows, src_gray.cols));

cv::Mat seg;

cv::adaptiveThreshold(src_gray, seg, 1, CV_ADAPTIVE_THRESH_GAUSSIAN_C,

CV_THRESH_BINARY, 7 , 5);

if(m_write_img)

{

cv::imwrite(m_imgsave_path+"/segment.jpg",seg*255);

}

return seg;

}

- IDT边缘检测–未完全理解

cv::Mat ImageEdge::computeIDTEdgeImage(cv::Mat& edge_img)

{

cv::Mat edges;

edge_img.convertTo(edges, CV_32FC1);

cv::Mat default_edges = edges;

cv::Mat weights = cv::Mat::ones(edges.size(), CV_32FC1);

cv::Mat new_weights = cv::Mat::ones(edges.size(), CV_32FC1);

cv::Mat new_edges = cv::Mat::zeros(edges.size(), CV_32FC1);

int weights_middle = m_distance_weights.cols/2;

for(int rows_cols = 0; rows_cols <2; rows_cols++)

{

int width = edges.cols;

for(int row =0; row <edges.rows; row++)

{

for(int x=0;x<edges.cols;x++)

{

cv::Mat sub_weights;

cv::min(m_distance_weights(cv::Rect(weights_middle - x, 0, width, 1)),

weights.row(row), sub_weights);

cv::Mat edges_row = edges.row(row);

cv::Mat sub_res = sub_weights.mul(edges_row);

double max;

cv::Point max_loc;

cv::minMaxLoc(sub_res, nullptr, &max, nullptr, &max_loc);

new_edges.at<float>(row, x) = max;

new_weights.at<float>(row, x) = sub_weights.at<float>(max_loc.x);

}

}

new_edges = new_edges.t();

new_weights = new_weights.t();

edges = new_edges;

weights = new_weights;

}

cv::Mat idt_edge_img = new_edges.mul(new_weights);

cv::addWeighted(default_edges, m_alpha, idt_edge_img, (1.0 - m_alpha), 0.0, idt_edge_img);

cv::normalize(idt_edge_img, idt_edge_img, 0.0, 1.0, cv::NORM_MINMAX);

cv::threshold(idt_edge_img, idt_edge_img, 0.5,1.0, 0);

cv::Mat element = cv::getStructuringElement(cv::MORPH_RECT, cv::Size(5,5));

cv::dilate(idt_edge_img, idt_edge_img, element);

if(m_write_img)

{

cv::imwrite(m_imgsave_path+"/idt_edge_img.jpg", idt_edge_img.mul(cv::Scalar::all(255.0)));

}

return idt_edge_img;

}

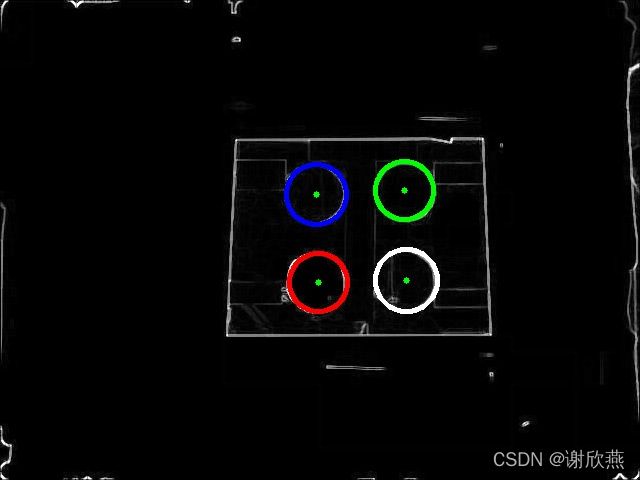

基于三种不同的边缘检测算法,获得的边缘检测图如下:

row_image

sobel边缘检测

sobel边缘检测

idt边缘检测

idt边缘检测

自适应阈值分割边缘检测

自适应阈值分割边缘检测

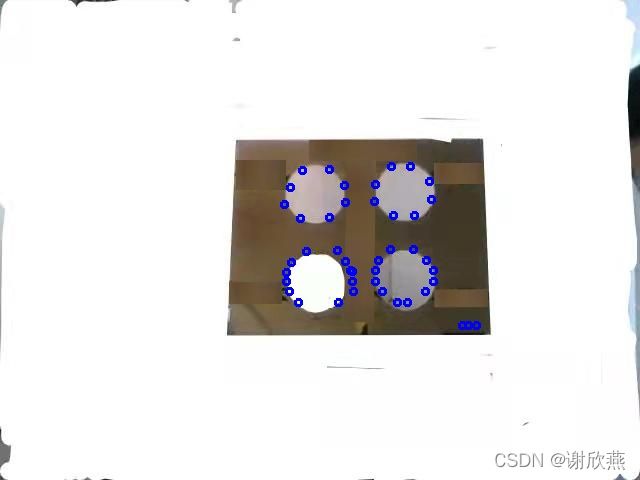

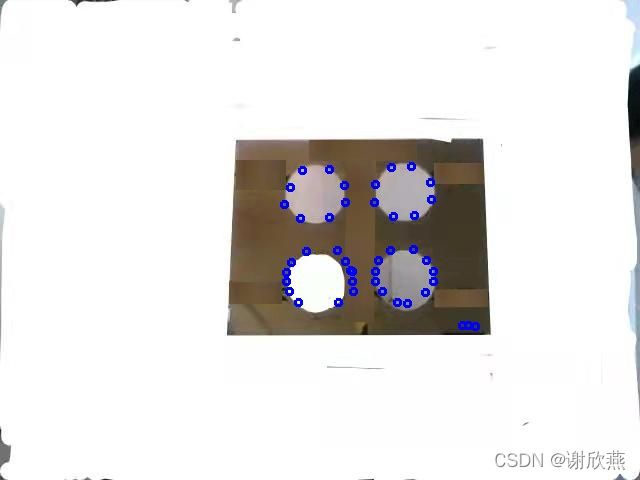

2.1.2 边缘检测图中4个特征圆检测-houghcircle检测

bool ImageEdge::detect4Circles(float canny_thresh, float center_thresh,

std::vector<cv::Point2f> ¢ers,

std::vector<float> &radiuses)

{

std::vector<cv::Vec3f> circles;

radiuses.clear();

centers.clear();

cv::Mat src_gray;

src_gray = this->m_img.clone();

for(int thresh = canny_thresh; circles.size()<4 && thresh>10; thresh -= 5)

{

cv::HoughCircles(src_gray, circles, CV_HOUGH_GRADIENT, 1, src_gray.rows/8, canny_thresh, thresh, 0, 0);

// std::cout<

}

if(circles.size() < 4)

{

return false;

}

std::sort(circles.begin(), circles.end(), order_Y);

std::sort(circles.begin(), circles.begin() + 2, order_X);

std::sort(circles.begin()+2, circles.begin() +4, order_X);

for(size_t i =0; i< circles.size(); i++)

{

centers.push_back(cv::Point2f(circles[i][0], circles[i][1]));

radiuses.push_back(cvRound(circles[i][2]));

}

if(m_write_img)

{

cv::Mat src_rgb;

cv::cvtColor(src_gray, src_rgb, cv::COLOR_GRAY2BGR);

std::vector<cv::Scalar> colors;

colors.push_back(cv::Scalar(255,0,0));

colors.push_back(cv::Scalar(0,255,0));

colors.push_back(cv::Scalar(0,0,255));

colors.push_back(cv::Scalar(255,255,255));

for (size_t i = 0; i < circles.size(); i++)

{

cv::Point center(cvRound(circles[i][0]), cvRound(circles[i][1]));

int radius = cvRound(circles[i][2]);

cv::circle(src_rgb, center, 3, cv::Scalar(0, 255, 0), -1, 8, 0);

cv::circle(src_rgb, center, radius, colors[i], 3, 8, 0);

// std::cout << i+1 << ". circle S("<}

cv::imwrite(m_imgsave_path+"/4_circles_img.jpg", src_rgb);

}

return true;

}

2.2 基于ransac的激光点云圆特征检测

2.2.1 方案重点

- 本方案中需要由于算法中需要按圈计算点云的边界点,因此首先计算激光点云获取的每个点的激光线束,比如本例中使用的是速腾的太阳神32线激光雷达,首先需要通过激光雷达的线束计算公式,计算出每个点的线束。

int getRow32(const float ver_ang)

{

int row=-1;

if(ver_ang <-9.0)

{

row = std::round((ver_ang+1.0)/3.0+18.0);

}

else if(ver_ang >6.2)

{

row = std::round((ver_ang+1.0)/2.0+23.0);

}

else if(ver_ang<=-7.5)

{

row = std::round(ver_ang/2.0 + 20.0);

}

else if(ver_ang>4.8 && ver_ang <=6.2)

{

row=26;

}

else {

row = std::round(ver_ang/1.33 + 22);

}

if(row<0 || row>32-1)

{

row = -1;

}

return row;

}

其中pcl定义新数据类型方法如下:

struct Point

{

PCL_ADD_POINT4D;

float intensity;

uint16_t ring;

float range;

EIGEN_MAKE_ALIGNED_OPERATOR_NEW // ensure proper alignment

// PCL_MAKE_ALIGNED_OPERATOR_NEW

}EIGEN_ALIGN16;

POINT_CLOUD_REGISTER_POINT_STRUCT(Point,

(float, x, x)

(float, y, y)

(float, z, z)

(float, intensity, intensity)

(uint16_t, ring, ring))

- 点云的坐标系为,垂直于激光雷达发射面,沿着行人行走的方向为Z轴,垂直于Z轴的水平方向为x轴,高度方向为y轴。

- 基于相机的内参,判断存在于点云内部的点

pcl::PointCloud<Point> visible_cloud;

this->project(projection_matrix, cv::Rect(0, 0, image_width, image_height), &visible_cloud);

PointCloudEdge visible_scan(visible_cloud);

2.2.2 检测步骤

- 边缘检测

基于单圈内相邻点的距离差,计算该点是否为突变点,在同一平面上的点,相差为0,两个平面的边缘线处,相邻点距离差较大。然后对计算的差值进行归一化。

void PointCloudEdge::intensityByDiff(Processing processing)

{

std::vector<std::vector<Point*>> rings = getRings();

for(std::vector<std::vector<Point*>>::iterator ring = rings.begin();

ring< rings.end(); ring++)

{

Point* prev, *next;

if(ring->empty())

{

continue;

}

(*ring->begin())->intensity = 0;

(*(ring->end()-1))->intensity = 0;

for(std::vector<Point*>::iterator pt=ring->begin()+1; pt<ring->end()-1; pt++)

{

prev = *(pt-1);

next = *(pt+1);

switch(processing)

{

case Processing::DISTORTIONS:

(*pt)->intensity = std::max(std::max(prev->range-(*pt)->range,

next->range-(*pt)->range),float(0));

break;

case Processing::INTENSITY_EDGES:

(*pt)->intensity = std::max(std::max(prev->intensity-(*pt)->intensity,

next->intensity-(*pt)->intensity),float(0));

break;

default:

std::cout<<"Velodyne processing unknown."<<std::endl;

exit(1);

}

}

}

normalizeIntensity(0.0, 1.0);

}

/ps:基于此,本例中标定板需要在一个较大的固定的墙面前面,从而减少其他建筑物的边缘影像,或者选择指定区域检测标定板的边缘/

- 基于ransac的平面拟合

pcl::SampleConsensusModelPlane<pcl::PointXYZI>::Ptr model_p(

new pcl::SampleConsensusModelPlane<pcl::PointXYZI>(xyz_cloud));

pcl::RandomSampleConsensus<pcl::PointXYZI> ransac(model_p);

ransac.setDistanceThreshold(0.1);

ransac.computeModel();

std::vector<int> inliers_indicies;

ransac.getInliers(inliers_indicies);

pcl::copyPointCloud<pcl::PointXYZI>(*xyz_cloud, inliers_indicies, m_plane);

- 基于ransac的标定板边缘线删除-消除边缘线对圆环检测的影响,或者制作边缘线与特征圆距离较远的标定板

// for(int i=0;i<2;i++)

// {

// pcl::PointCloud::Ptr plane_ptr(

// new pcl::PointCloud(m_plane));

// pcl::SampleConsensusModelLine::Ptr model_l(

// new pcl::SampleConsensusModelLine(plane_ptr));

// pcl::RandomSampleConsensus ransac_l(model_l);

// ransac_l.setDistanceThreshold(0.02);

// ransac_l.computeModel();

// std::vector line_inliers;

// ransac_l.getInliers(line_inliers);

// if(line_inliers.empty())

// {

// continue;

// }

// pcl::PointCloud plane_no_line;

// removeLines(*plane_ptr, line_inliers, plane_no_line);

// m_plane=plane_no_line;

// }

- 基于ransac的特征圆检测

radiuses.clear();

std::vector<pcl::PointXYZ> centers;

std::vector<int> inliers_indicies;

pcl::PointCloud<pcl::PointXYZ> *four_spheres=new pcl::PointCloud<pcl::PointXYZ>();

float tolerance = 0.08;

for(int i=0;i<4;i++)

{

pcl::SampleConsensusModelSphere<pcl::PointXYZI>::Ptr model_s(

new pcl::SampleConsensusModelSphere<pcl::PointXYZI>(plane));

// std::cout<<0.85*m_circle_radius<<" "<<1.15*m_circle_radius<

model_s->setRadiusLimits(0.85*m_circle_radius, 1.15*m_circle_radius);

pcl::RandomSampleConsensus<pcl::PointXYZI> ransac_sphere(model_s);

ransac_sphere.setDistanceThreshold(tolerance);

ransac_sphere.computeModel();

inliers_indicies.clear();

ransac_sphere.getInliers(inliers_indicies);

if(inliers_indicies.size() == 0)

{

continue;

}

Eigen::VectorXf coeficients;

ransac_sphere.getModelCoefficients(coeficients);

// std::cout<

pcl::PointCloud<pcl::PointXYZI>::Ptr outliers(new pcl::PointCloud<pcl::PointXYZI>);

pcl::PointCloud<pcl::PointXYZI>::Ptr inliers(new pcl::PointCloud<pcl::PointXYZI>);

removeLines<pcl::PointXYZI>(*plane, inliers_indicies, *outliers);

pcl::copyPointCloud<pcl::PointXYZI>(*plane, inliers_indicies, *inliers);

plane = outliers;

// std::cout<points.size();

for(int i=0;i<inliers->points.size();i++)

{

pcl::PointXYZ p;

p.x = inliers->points[i].x;

p.y = inliers->points[i].y;

p.z = inliers->points[i].z;

four_spheres->points.push_back(p);

}

pcl::PointXYZ middle(coeficients(0), coeficients(1), coeficients(2));

four_spheres->push_back(middle);

centers.push_back(middle);

float radius = coeficients(3);

radiuses.push_back(radius);

}

// PointCloud::Ptr four_spheres_ptr(four_spheres);

return centers;

- 特征圆验证

bool PointCloudEdge::verify4Spheres(const std::vector<pcl::PointXYZ>& spheres_centers, float straight_distance, float delta)

{

std::vector<std::pair<int, int>> near_indexes;

near_indexes.push_back(std::pair<int, int>(0,1));

near_indexes.push_back(std::pair<int, int>(1,3));

near_indexes.push_back(std::pair<int, int>(3,2));

near_indexes.push_back(std::pair<int, int>(2,0));

bool res = true;

for(std::vector<std::pair<int, int>>::iterator ne=near_indexes.begin();ne<near_indexes.end();ne++)

{

float error = std::abs(

euclidDist(spheres_centers[ne->first], spheres_centers[ne->second]) - straight_distance);

// std::cout<

if(error>delta)

res=false;

}

return res;

}

2.3 基于相机特征圆与特征圆半径和点云特征圆与特征圆半径的T矩阵计算

整体思路为

1.z/3d_r = f/3d_r – 计算translation_z

2.x/f=x3/z --计算translation_x

3.y/f=y3/z --计算translation_y

Calibration6DoF Calibration::findTranslation(

std::vector<cv::Point2f> image, std::vector<cv::Point3f> velodyne,

cv::Mat projection, float radius2D, float radius3D)

{

std::vector<float> translation(3, 0);

enum INDEX

{

X=0, Y=1, Z=2

};

float focal_len = projection.at<float>(0,0);

// std::cout<

translation[INDEX::Z] = radius3D*focal_len/radius2D-velodyne.front().z;

float principal_x = projection.at<float>(0,2);

float principal_y = projection.at<float>(1,2);

for(size_t i=0; i<image.size(); i++)

{

translation[INDEX::X] += (image[i].x - principal_x)

* (velodyne[i].z + translation[INDEX::Z]) / focal_len - velodyne[i].x;

translation[INDEX::Y] += (image[i].y - principal_y)

* (velodyne[i].z + translation[INDEX::Z]) / focal_len - velodyne[i].y;

}

translation[INDEX::X] /= image.size();

translation[INDEX::Y] /= image.size();

return Calibration6DoF(translation[INDEX::X], translation[INDEX::Y], translation[INDEX::Z], 0, 0, 0, 0);

}

3.R-T矩阵联合标定

通过小范围内像素级匹配,获得匹配后的相似度,不断跌倒计算最优值

void Calibration::calibrationRefinement(

ImageEdge img, PointCloudEdge scan, cv::Mat P,

float x_rough, float y_rough, float z_rough,

float max_translation, float max_rotation, unsigned steps,

Calibration6DoF &best_calibration, Calibration6DoF &average)

{

img = ImageEdge(img.computeIDTEdgeImage());

float x_min = x_rough - max_translation;

float y_min = y_rough - max_translation;

float z_min = z_rough - max_translation;

float x_rot_min = - max_rotation;

float y_rot_min = - max_rotation;

float z_rot_min = - max_rotation;

float step_transl = max_translation*2/(steps -1);

float step_rot = max_rotation*2/(steps-1);

PointCloudEdge transformed = scan.transform(x_rough, y_rough, z_rough, 0, 0, 0);

float rough_val = Similarity::edgeSimilarity(img, transformed, P);

std::cout<<rough_val<<std::endl;

best_calibration.set(x_rough, y_rough, z_rough, 0, 0, 0, rough_val);

int counter = 0;

int cnt = 0;

int counter_best = 0;

float x=x_min;

for(size_t xi=0;xi<steps;xi++)

{

float y=y_min;

for(size_t yi=0; yi<steps; yi++)

{

float z=z_min;

for(size_t zi=0; zi<steps; zi++)

{

float x_r = x_rot_min;

for(size_t x_ri=0; x_ri<steps; x_ri++)

{

float y_r = y_rot_min;

for(size_t y_ri=0; y_ri<steps; y_ri++)

{

float z_r = z_rot_min;

for(size_t z_ri=0; z_ri<steps; z_ri++)

{

PointCloudEdge transformed = scan.transform(x,y,z,x_r,y_r,z_r);

float value = Similarity::edgeSimilarity(img, transformed, P);

Calibration6DoF calibration(x,y,z,x_r,y_r,z_r,value);

if(value>best_calibration.value)

{

best_calibration.set(x,y,z,x_r,y_r,z_r,value);

counter_best++;

cv::Mat final_img = img.getImage().clone();

for(pcl::PointCloud<Point>::iterator pt = transformed.begin(); pt<transformed.end(); pt++)

{

cv::Point xy=PointCloudEdge::project(*pt, P);

cv::circle(final_img, cv::Point2d(xy.x,xy.y), 3,cv::Scalar(255,0,0),2,8);

}

std::string filename = "./img_refo/"+std::to_string(counter_best++)+"_"+std::to_string(value)+"_mid.jpg";

cv::imwrite(filename, final_img);

}

// cv::Mat final_img = img.getImage().clone();

// for (::pcl::PointCloud::iterator pt = transformed.begin(); pt < transformed.end(); pt++)

// {

// cv::Point xy = Velodyne::Velodyne::project(*pt, P);

// cv::circle(final_img, cv::Point2d(xy.x,xy.y), 3,cv::Scalar(255,0,0),2,8);

// }

// std::string filename = "/home/xxy/xxy/202202/ros_test/img/"+std::to_string(cnt++)+"_"+std::to_string(value)+"_mid.jpg";

// cv::imwrite(filename, final_img);

if (value > rough_val)

{

average += calibration;

counter++;

cout << counter << ".\t";

calibration.print();

}

z_r += step_rot;

}

y_r += step_rot;

}

x_r += step_rot;

}

z+= step_transl;

}

y+= step_transl;

}

x+= step_transl;

}

if(counter==0)

average=best_calibration;

else {

average /= counter;

}

}

相似性度量

float static edgeSimilarity(ImageEdge &img, PointCloudEdge &scan, cv::Mat &P)

{

cv::Point2i m_point_topleft= cv::Point2i(250,120);

cv::Point2i m_point_bottomright= cv::Point2i(500,360);

cv::Rect frame(cv::Point(0,0), img.size());

float CC=0;

for(pcl::PointCloud<Point>::iterator pt = scan.begin(); pt < scan.end(); pt++)

{

cv::Point xy = PointCloudEdge::project(*pt, P);

// std::cout<

if(xy.inside(frame)

&& xy.x > m_point_topleft.x && xy.x < m_point_bottomright.x

&& xy.y > m_point_topleft.y && xy.y < m_point_bottomright.y)

{

// std::cout<intensity<<" ";

assert(xy.x < 640);

CC+= img.at(cv::Point2d(xy.x, xy.y))*pt->intensity;

}

}

return CC;

}

4. 标定结果

4.1 最佳结果

4.2 平均结果

5. 利用4个circle的中心点使用opencvSolvePnp求解

cv::Mat rotM, rvec, tvec;

double camD[9] = {569.1916852916451, 0, 389.2369196593608,

0, 562.7606749808537, 205.39889751999,

0, 0, 1};

double distCoeffD[5] = {0.1220090645915436, -0.07107613516559942, -0.009396424500619524, 0.05404222930344704, 0.07256047312087824};

// cv::Rodrigues(rotM, rvec);

cv::Mat distortion_coefficients = cv::Mat(5, 1, CV_64FC1, distCoeffD);

cv::Mat camera_matrix = cv::Mat(3,3,CV_64FC1,camD);

cv::solvePnP(centers3d,centers2d,camera_matrix, distortion_coefficients,rvec, tvec);

cv::Rodrigues(rvec, rotM);

std::cout<<"rotation matrix: "<<std::endl<<rotM<<std::endl<<rvec<<std::endl;

std::cout<<"translation matrix: "<<std::endl<<tvec<<std::endl;

cv::Mat final_img3=g_img.clone();

// std::cout<

// std::cout<

std::cout<<tvec.at<double>(0,0)<<" "<<tvec.at<double>(1,0)<<" "<<tvec.at<double>(2,0)

<<" "<<rvec.at<double>(0,0)<<" "<<rvec.at<double>(1,0)<<" "<<rvec.at<double>(2,0)<<std::endl;

PointCloudEdge transformed3 = pointcloud_tmp.transform(tvec.at<double>(0,0),tvec.at<double>(1,0),tvec.at<double>(2,0),

rvec.at<double>(0,0), rvec.at<double>(1,0), rvec.at<double>(2,0));

for (pcl::PointCloud<Point>::iterator pt = transformed3.begin(); pt < transformed3.end(); pt++)

{

cv::Point xy = PointCloudEdge::project(*pt, projection_matrix);

if (xy.inside(frame))

{

cv::circle(final_img3, cv::Point2d(xy.x,xy.y), 3,cv::Scalar(255,0,0),2,8);

}

}

cv::imwrite("./final_img_pnp.jpg",final_img3);

5.1 solvePnp 标定结果

6. but_veldyne 和solvepnp标定参数对比

T_0,T_1,T_2,R_0,R_1,R_2

- but_velodyne_avg

-0.537792,0.339281,0.625459,0,0,-0.0075 - but_velodyne_best

-0.537792,0.339281,0.625459,0,0,-0.01 - solvepnp

-1.02459,0.486016,0.571471,0.065575,0.224808,-0.0162254

但从结果看,差异还是挺大的,但是投影结果差异比较小