YoloV3 Study Notes (4): Reading the cfg File

1. The cfg file

[net]

# Testing  # The batch settings come in two groups, one for testing and one for training; uncomment the group that matches the mode in use

#batch=1

#subdivisions=1

# Training

batch=64

# Number of samples in one batch; the weights are updated once per batch

subdivisions=8

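# batch/subdivisions samples are sent through the network at a time: if GPU memory is too small for a whole batch, the batch is split into subdivisions mini-batches

# (subdivisions is the number of groups; batch divided by subdivisions is the number of samples per forward pass, here 64/8 = 8)

# Note: if GPU memory is limited, reduce batch or increase subdivisions. A larger batch generally gives a more stable gradient estimate, and a larger subdivisions value lowers the memory load on the GPU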
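
The relationship between batch and subdivisions can be checked with a few lines of Python. This is only a sketch of the arithmetic described in the comments above; the function name is made up for illustration and is not part of Darknet.

```python
def samples_per_forward_pass(batch: int, subdivisions: int) -> int:
    """Number of images sent through the network in one forward pass.

    Hypothetical helper: it only reproduces the batch/subdivisions
    arithmetic from the cfg comments.
    """
    if batch % subdivisions != 0:
        raise ValueError("batch should be divisible by subdivisions")
    return batch // subdivisions


mini_batch = samples_per_forward_pass(batch=64, subdivisions=8)
print(mini_batch)  # 8 images per forward pass, 8 passes per weight update
```

With the testing values (batch=1, subdivisions=1) the same function returns 1, so a single image is processed per pass.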

width=416

height=416

channels=3

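# The three parameters above describe the input image. width and height set the resolution at which the network sees the input and therefore affect precision; both must be multiples of 32

# (Why 32? The network downsamples by a total factor of 32, so the candidate sizes are also multiples of 32: {320, 352, ..., 608}

# The smallest is 320*320 and the largest 608*608; with random=1 the network switches between these sizes automatically while training continues.)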
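
To make the multiple-of-32 rule concrete, the sketch below lists the candidate input sizes and the detection grid each one produces. The strides 32, 16 and 8 for the three yolo layers are the usual YOLOv3 values and are stated here as an assumption; the function names are illustrative only.

```python
DOWNSAMPLE = 32  # total downsampling factor of the network


def valid_sizes(lo: int = 320, hi: int = 608, step: int = DOWNSAMPLE) -> list:
    """All input sizes between lo and hi that are multiples of step."""
    return list(range(lo, hi + 1, step))


def grid_sizes(size: int, strides=(32, 16, 8)) -> list:
    """Grid width/height seen by each detection scale (assumed strides)."""
    if size % DOWNSAMPLE != 0:
        raise ValueError("input size must be a multiple of 32")
    return [size // s for s in strides]


print(valid_sizes())    # [320, 352, 384, 416, 448, 480, 512, 544, 576, 608]
print(grid_sizes(416))  # [13, 26, 52]
```

For the 416*416 input used in this cfg, the coarsest grid is 13*13 and the next one 26*26, which are the two scales mentioned later in the ignore_thresh comments.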

momentum=0.9

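# The momentum parameter of the optimization method used in deep learning; it affects how fast gradient descent approaches the optimum

# (Note: one drawback of plain SGD is that the update direction depends entirely on the gradient of the current batch, which makes it quite unstable.

# Momentum borrows the concept from physics and simulates the inertia of a moving object: each update keeps part of the previous update direction and uses the current batch gradient to adjust the final direction.

# This adds some stability, so training converges faster, and it also gives some ability to escape local optima)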
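
A minimal sketch of the momentum update described above, written for a single scalar weight. It uses the textbook formulation (velocity = momentum * velocity - lr * grad) and is not copied from Darknet; the toy objective f(w) = w^2 and the learning rate 0.1 are assumptions chosen only to make the behaviour visible.

```python
def sgd_momentum_step(w, grad, velocity, lr=0.001, momentum=0.9):
    """One SGD-with-momentum update (textbook form, illustrative only).

    The new velocity keeps `momentum` of the previous direction and
    adds the current gradient step, as described in the comments.
    """
    velocity = momentum * velocity - lr * grad
    return w + velocity, velocity


# Toy problem: minimize f(w) = w**2, whose gradient is 2*w.
w, v = 5.0, 0.0
for _ in range(200):
    w, v = sgd_momentum_step(w, grad=2.0 * w, velocity=v, lr=0.1, momentum=0.9)

print(abs(w) < 1e-2)  # True: w has moved close to the minimum at 0
```

Setting momentum=0 in the same function reduces it to plain SGD, where each step depends only on the current gradient.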

decay=0.0005

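# Weight decay regularization term, used to prevent overfitting

# The parameters below augment the data: they alter the original samples to enlarge the effective training set, which reduces overfitting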

angle=0

# Generates more training samples by rotating the image

saturation = 1.5

# Generates more training samples by adjusting saturation

exposure = 1.5

# Generates more training samples by adjusting exposure

hue=.1

# Generates more training samples by adjusting hue

learning_rate=0.001

# The learning rate determines how fast the weights are updated

burn_in=1000

# While the iteration count is below burn_in, the learning rate follows a separate warm-up rule; only after burn_in does the policy below apply

max_batches = 500200

# Training stops once max_batches batches have been processed

policy=steps

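# The learning rate schedule. Available policies: constant, steps, exp, poly, step, sig, random

# constant: keeps the learning rate fixed

# steps: changes the learning rate at the iteration counts listed in steps

# steps and scales correspond one to one

# The steps and scales parameters below set how the learning rate changes: at iteration 400000 the learning rate is divided by ten,

# and at iteration 450000 it is divided by ten again, relative to the previous value. The rate is adjusted according to the batch number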

steps=400000,450000

scales=.1,.1 # Learning rate scale factors; they multiply cumulatively
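
The interaction of learning_rate, burn_in, steps and scales can be sketched as a small function. The step logic follows the comments above. The warm-up rule (a power curve with exponent 4) is an assumption based on how Darknet is commonly described, so treat `power` as a hypothetical parameter rather than a confirmed default.

```python
def learning_rate(batch_num, base_lr=0.001, burn_in=1000, power=4,
                  steps=(400000, 450000), scales=(0.1, 0.1)):
    """Learning rate at a given batch number for policy=steps (sketch).

    `power` is an assumed warm-up exponent, not taken from this cfg.
    """
    if batch_num < burn_in:
        # Warm-up: ramp from 0 up to base_lr over the first burn_in batches.
        return base_lr * (batch_num / burn_in) ** power
    lr = base_lr
    for step, scale in zip(steps, scales):
        if batch_num < step:
            break
        lr *= scale  # scales multiply cumulatively
    return lr


for n in (500, 1000, 399999, 400000, 450000):
    print(n, learning_rate(n))
```

With the values from this cfg the rate is 0.001 from batch 1000 onward, drops to about 0.0001 at batch 400000, and to about 0.00001 at batch 450000.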

[convolutional]

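batch_normalize=1 # Whether to apply batch normalization

filters=32 # Number of output feature maps

size=3 # Kernel size

stride=1 # Convolution stride

pad=1 # If pad is 0, the padding is given by the padding parameter.

# If pad is 1, the padding is size/2 (integer division); padding is the number of pixels added at each edge of the input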

activation=leaky

# Activation types: logistic, loggy, relu, elu, relie, plse, hardtan, lhtan, linear, ramp, leaky, tanh, stair
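
The effect of size, stride and pad on the feature map size can be sketched with the standard convolution output formula. Two assumptions are made here: when pad=0 the explicit padding parameter is taken to be 0, and the leaky activation uses a negative slope of 0.1. Neither value is stated in this cfg.

```python
def conv_output_size(in_size: int, size: int, stride: int, pad: int) -> int:
    """Spatial output size of a convolutional layer (standard formula).

    pad=1 means padding = size // 2; pad=0 is assumed to mean no padding.
    """
    padding = size // 2 if pad else 0
    return (in_size + 2 * padding - size) // stride + 1


def leaky(x: float, slope: float = 0.1) -> float:
    """Leaky ReLU; the slope 0.1 is an assumed value."""
    return x if x > 0 else slope * x


print(conv_output_size(416, size=3, stride=1, pad=1))  # 416, size preserved
print(conv_output_size(416, size=3, stride=2, pad=1))  # 208, downsampled by 2
print(conv_output_size(208, size=1, stride=1, pad=1))  # 208, 1x1 conv
```

Five stride-2 layers in a row therefore take 416 down to 13, which is the factor of 32 mentioned in the [net] comments.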

# Downsample  # The network structure follows

[convolutional]

batch_normalize=1

filters=64

size=3

stride=2

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=32

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=64

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

# Downsample

[convolutional]

batch_normalize=1

filters=128

size=3

stride=2

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=64

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=128

size=3

stride=1

pad=1

activation=leaky

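# A shortcut layer is a skip connection across layers, like those used in ResNet. from=-3 means the shortcut output is the element-wise sum of the previous layer's output and the output of the third layer back from the shortcut layer.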

[shortcut]

from=-3

activation=linear

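# To address vanishing or exploding gradients, the deep network is split into a number of short segments that are trained stage by stage rather than layer by layer.

# Each segment contains only a few layers, and the shortcut connection makes each segment learn a residual, so that each segment accounts for part of the total error (the total loss).

# Together the segments reach a small overall loss while keeping gradient propagation well controlled, avoiding the vanishing or exploding gradients that would hurt training.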
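
The from=-3 reference can be sketched as follows. Layer outputs are modelled as flat Python lists and the layer indices are 0-based counts of the sections after [net]; both are simplifications for illustration, and the helper names are hypothetical.

```python
def resolve_index(current: int, ref: int) -> int:
    """Turn a relative (negative) layer reference into an absolute index."""
    return current + ref if ref < 0 else ref


def shortcut(outputs: list, current: int, from_ref: int = -3) -> list:
    """Element-wise sum of the previous layer and the referenced layer."""
    prev = outputs[current - 1]
    skip = outputs[resolve_index(current, from_ref)]
    return [a + b for a, b in zip(prev, skip)]


# The first shortcut in this cfg is layer 4 (layers 0..3 are convolutions).
print(resolve_index(4, -3))  # 1, the stride-2 convolution with 64 filters

outputs = [[0.0, 0.0], [1.0, 2.0], [9.0, 9.0], [10.0, 20.0]]
print(shortcut(outputs, current=4))  # [11.0, 22.0]
```

Both inputs to the sum must have the same shape, which is why the 3x3 convolution before each shortcut restores the filter count of the layer being referenced.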

[convolutional]

batch_normalize=1

filters=64

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=128

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

# Downsample

[convolutional]

batch_normalize=1

filters=256

size=3

stride=2

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

# Downsample

[convolutional]

batch_normalize=1

filters=512

size=3

stride=2

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

# Downsample

[convolutional]

batch_normalize=1

filters=1024

size=3

stride=2

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=1024

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=1024

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=1024

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=1024

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

######################

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=1024

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=1024

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=1024

activation=leaky

# The key part

[convolutional]

size=1

stride=1

pad=1

filters=18

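# In the last convolutional layer before each [region]/[yolo] layer, filters = (number of anchors in that layer's mask, i.e. num / number of yolo layers) * (classes + 5), here 3 * (1 + 5) = 18. The 5 stands for the 4 box coordinates tx, ty, tw, th plus 1 objectness confidence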

activation=linear
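
The filters value of this layer has to be recomputed whenever classes changes, so it helps to write the rule down as code. The function name is made up for illustration.

```python
def yolo_filters(mask, classes: int) -> int:
    """filters for the convolution right before a [yolo] layer.

    Each anchor in the mask predicts 4 box values, 1 objectness score
    and one score per class.
    """
    return len(mask) * (classes + 5)


print(yolo_filters(mask=(6, 7, 8), classes=1))   # 18, as in this cfg
print(yolo_filters(mask=(6, 7, 8), classes=80))  # 255 for an 80-class dataset
```

All three convolutions that feed a [yolo] layer in this file use filters=18, which matches classes=1 with three anchors per layer.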

[yolo]

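mask = 6,7,8 # Anchors used by this layer

# mask lists the indices of the anchors this layer uses: 6,7,8 selects the seventh, eighth and ninth anchors (the three largest). A mask of 0,1,2 would select the first, second and third anchors.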

anchors = 10,13, 16,30, 33,23, 30,61, 62,45, 59,119, 116,90, 156,198, 373,326

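# The anchors can be computed in advance from the command line; they are prior boxes that depend on the number of images, width, height and the cluster count (presumably the num value below, i.e. the number of anchors to use).

# They can be picked by hand or learned from the training samples with k-means

# They are the initial widths and heights of the predicted boxes: in each pair the first value is w and the second is h, for num*2 values in total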
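
How mask picks anchors out of the anchors line can be sketched by parsing the string into (w, h) pairs. The parsing helper is hypothetical and only mirrors the layout described above (first value w, second value h).

```python
def parse_anchors(text: str) -> list:
    """Split an anchors line into a list of (w, h) pairs."""
    values = [int(v) for v in text.split(",")]
    if len(values) % 2 != 0:
        raise ValueError("anchors must contain an even number of values")
    return list(zip(values[0::2], values[1::2]))


ANCHORS = "10,13, 16,30, 33,23, 30,61, 62,45, 59,119, 116,90, 156,198, 373,326"
anchors = parse_anchors(ANCHORS)

print(len(anchors))                      # 9, which matches num=9
print([anchors[i] for i in (6, 7, 8)])   # [(116, 90), (156, 198), (373, 326)]
print([anchors[i] for i in (0, 1, 2)])   # [(10, 13), (16, 30), (33, 23)]
```

The largest anchors go to the first [yolo] layer, which works on the coarsest grid, and the smallest anchors go to the last one.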

classes=1

num=9

# Each grid cell predicts num / (number of yolo layers) bounding boxes, here 9/3 = 3

jitter=.3

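# Generates more data through random jitter. YOLOv2 uses crop and flip, plus the angle setting in the [net] section; flip is applied at random,

# and jitter is the crop parameter: tiny-yolo-voc.cfg has jitter=.2, meaning crops are taken within a range of 0 to 0.2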

ignore_thresh = .7

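# ignore_thresh is the IoU threshold that decides which predicted boxes take part in the loss.

# When the IoU between a predicted box and the ground truth exceeds ignore_thresh, the box is left out of the loss; otherwise it is included.

# The aim is to control how many boxes take part in the loss. By the rule above, when ignore_thresh is very large (close to 1) almost no box exceeds it, so nearly all boxes stay in the loss, which may lead to overfitting;

# when ignore_thresh is very small, many boxes exceed it and are dropped from the loss, which may lead to underfitting in box regression.

# A value between 0.5 and 0.7 is typical; earlier setups used 0.7 for the coarse scale (13*13) and 0.5 for (26*26).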
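
The ignore rule can be sketched with a plain IoU function. Boxes are assumed to be given as corner coordinates (x1, y1, x2, y2), which is a convention chosen for this example, and the function names are hypothetical.

```python
def iou(a, b) -> float:
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def is_ignored(pred, truths, ignore_thresh: float = 0.7) -> bool:
    """True if the best IoU with any ground-truth box exceeds the threshold."""
    best = max((iou(pred, t) for t in truths), default=0.0)
    return best > ignore_thresh


pred = (0, 0, 10, 10)
print(is_ignored(pred, [(0, 0, 10, 9)]))   # True: IoU is 0.9
print(is_ignored(pred, [(5, 0, 15, 10)]))  # False: IoU is about 0.33
```

Raising ignore_thresh toward 1 makes `is_ignored` return False for almost every box, so fewer boxes are excluded from the loss.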

truth_thresh = 1

random=1

# Setting random to 1 can improve detection precision

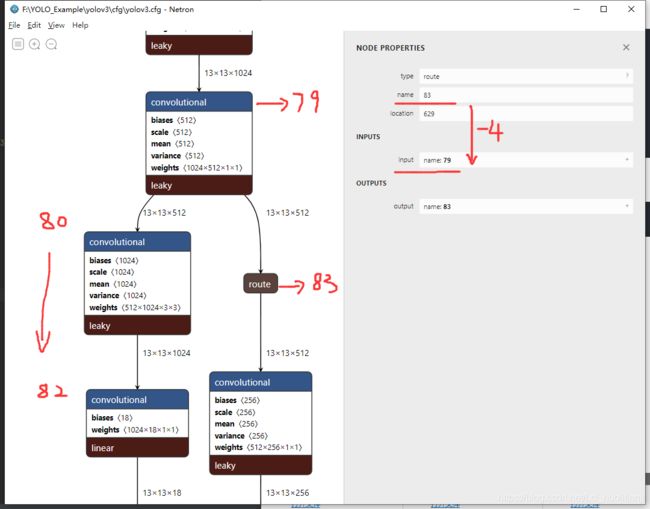

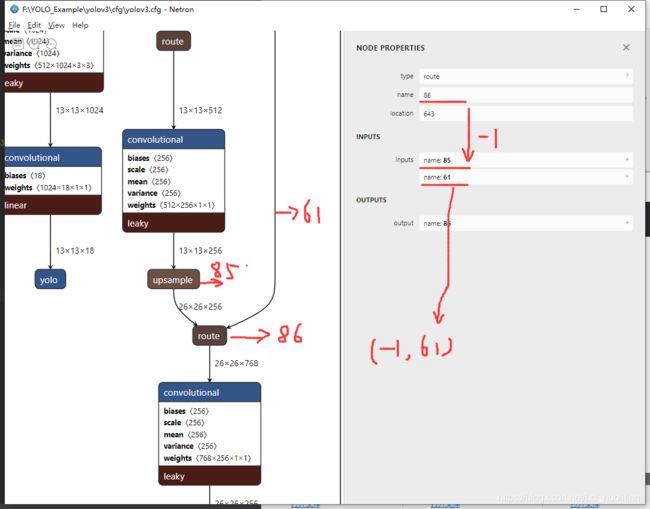

# Route layer, used to combine features across scales

[route]

layers = -4

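# When layers has a single value, the route layer outputs the feature map of the layer indexed by that value. Here it is -4, so it outputs the feature map of the fourth layer before the route layer.

# When layers has two values, it outputs the feature maps of both indexed layers concatenated along the depth (channel) dimension. For example, with -1, 61 it outputs the feature maps of the previous layer (-1) and of layer 61, concatenated along depth.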
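
The two forms of the route layer can be sketched as an index lookup followed by a channel sum. The absolute layer numbers (83 and 86 for the two route layers, 79 and 85 for the layers they reference) come from counting the sections of this cfg from 0, and the channel counts are read off the filters values. Treat them as a reading of this file rather than as fixed constants.

```python
def route(current: int, layers, channels: dict):
    """Resolve a route layer's references and its output channel count.

    Negative values are relative to the route layer itself; non-negative
    values are absolute layer indices.
    """
    resolved = [current + ref if ref < 0 else ref for ref in layers]
    return resolved, sum(channels[i] for i in resolved)


# Channel counts of the referenced layers, taken from their filters values.
channels = {79: 512, 85: 256, 61: 512}

print(route(83, [-4], channels))      # ([79], 512)
print(route(86, [-1, 61], channels))  # ([85, 61], 768)
```

The concatenation only works because the upsample layer brings the feature map back to the spatial size of layer 61; only the channel counts differ and they simply add up.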

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[upsample]

stride=2

[route]

layers = -1, 61

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=512

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=512

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=512

activation=leaky

[convolutional]

size=1

stride=1

pad=1

filters=18

activation=linear

[yolo]

mask = 3,4,5

anchors = 10,13, 16,30, 33,23, 30,61, 62,45, 59,119, 116,90, 156,198, 373,326

classes=1

num=9

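# The total number of anchors; it must match the number of pairs in anchors (each yolo layer uses the ones selected by its mask). Increase num to use more anchors; if Obj then tends toward 0 during training, try increasing object_scale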

jitter=.3

ignore_thresh = .7

truth_thresh = 1

random=1

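# Multi-scale training: if 1, the input size is picked at random from 320 to 608 in steps of 32 as training proceeds; if 0, training always uses the configured input size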

[route]

layers = -4

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[upsample]

stride=2

[route]

layers = -1, 36

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=256

activation=leaky

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=256

activation=leaky

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=256

activation=leaky

[convolutional]

size=1

stride=1

pad=1

filters=18

activation=linear

[yolo]

mask = 0,1,2

anchors = 10,13, 16,30, 33,23, 30,61, 62,45, 59,119, 116,90, 156,198, 373,326

classes=1

num=9

jitter=.3

ignore_thresh = .7

truth_thresh = 1

random=1

2. The hardest part to understand

[route]

layers = -4

[route]

layers = -1,61

3. Summary

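Some readers may wonder: the preceding network has only 52 layers, so where do indices such as 61 and 86 come from? The cfg also contains shortcut layers, and each of them occupies a layer index in the network. Leaving the shortcut layers out gives exactly 52 layers (1+(1+2+8+8+4)*2+5). See the next part for the network architecture.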
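
The count in the formula can be reproduced in a few lines. The interpretation of each term (one initial convolution, two convolutions per residual block, five downsampling convolutions) is a reading of the cfg above and is labelled as such in the comments.

```python
# Residual blocks per stage, as they appear after each "# Downsample" comment.
blocks_per_stage = [1, 2, 8, 8, 4]

first_conv = 1                               # the filters=32 layer
block_convs = sum(blocks_per_stage) * 2      # 1x1 + 3x3 convolution per block
downsample_convs = len(blocks_per_stage)     # one stride-2 convolution per stage

conv_layers = first_conv + block_convs + downsample_convs
shortcut_layers = sum(blocks_per_stage)      # one shortcut per block

print(conv_layers)                    # 52
print(shortcut_layers)                # 23
print(conv_layers + shortcut_layers)  # 75, i.e. layer indices 0 to 74
```

Counting the shortcut layers as well gives 75 entries before the detection head, which is why references such as 61 point inside the backbone even though it has only 52 convolutions.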