CIFAR10数据集训练及测试

一、数据集介绍

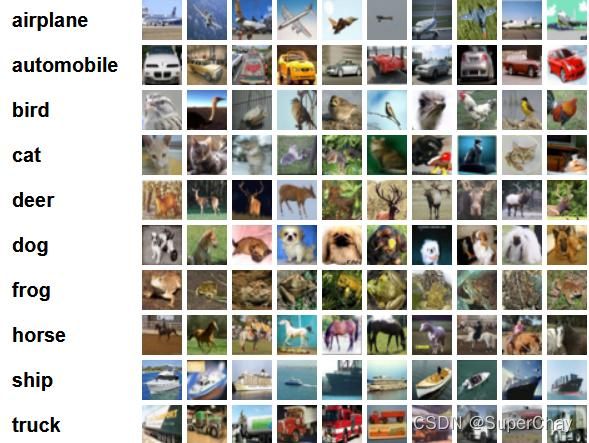

该数据集共有60000张彩色图像,这些图像是32*32,分为10个类,每类6000张图。这里面有50000张用于训练,构成了5个训练批,每一批10000张图;另外10000用于测试,单独构成一批。测试批的数据里,取自10类中的每一类,每一类随机取1000张。抽剩下的就随机排列组成了训练批。注意一个训练批中的各类图像并不一定数量相同,总的来看训练批,每一类都有5000张图。

下面这幅图就是列举了10各类,每一类展示了随机的10张图片:

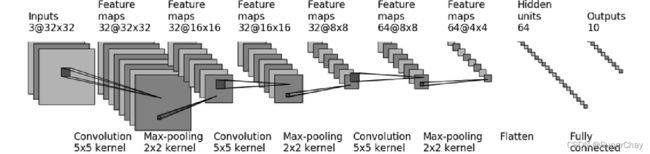

二、搭建神经网络模型

使用CIFAR10网路模型,基于pytorch搭建网络模型

import torch

from torch import nn

class Test(nn.Module):

def __init__(self):

super(Test, self).__init__()

self.model = nn.Sequential(

nn.Conv2d(3, 32, 5, padding=2),

nn.MaxPool2d(2),

nn.Conv2d(32, 32, 5, padding=2),

nn.MaxPool2d(2),

nn.Conv2d(32, 64, 5, padding=2),

nn.MaxPool2d(2),

nn.Flatten(),

nn.Linear(1024, 64),

nn.Linear(64, 10)

)

def forward(self,x):

x = self.model(x)

return x三、数据集的准备及加载

使用torchvision.datasets.CIFAR10()加载数据集,train=True表示数据集为训练数据集,train=False表示数据集为测试集,dowwnload=True表示下载数据集,本地存在数据集不会再次下载。

import torchvision

from torch.utils.data import DataLoader

from torch.utils.tensorboard import SummaryWriter

import time

from model import *

# 定义训练设备

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# 准备数据集

train_data = torchvision.datasets.CIFAR10("dataset", train=True, transform=torchvision.transforms.ToTensor(),

download=True)

test_data = torchvision.datasets.CIFAR10("dataset", train=False, transform=torchvision.transforms.ToTensor(),

download=True)

train_data_size = len(train_data)

test_data_size = len(test_data)

# print("训练数据集的长度为{}".format(train_data_size))

# print("测试数据集的长度为{}".format(test_data_size))

# 利用DataLoader来加载数据集

train_dataloader = DataLoader(train_data,batch_size=64)

test_dataloader = DataLoader(test_data,batch_size=64)四、神经网络、损失函数、优化器等加载

test = Test()

test = test.to(device)

# 损失函数

loss_fn = nn.CrossEntropyLoss()

loss_fn = loss_fn.to(device)

# 优化器

learning_rate = 0.01

optimizer = torch.optim.SGD(test.parameters(), lr=learning_rate)

# 设置训练网络的一些参数

# 记录训练的次数

total_train_step = 0

# 记录测试的次数

total_test_step = 0

epoch = 30

# 添加Tensorboard

writer = SummaryWriter("logs_train")五、训练、测试、模型保存

start_time = time.time()

for i in range(epoch):

print("-----第{}轮训练开始------".format(i+1))

# 训练步骤开始

test.train()

for data in train_dataloader:

imgs,targets = data

imgs = imgs.to(device)

targets = targets.to(device)

output = test(imgs)

loss = loss_fn(output, targets)

# 优化器优化模型

optimizer.zero_grad()

loss.backward()

optimizer.step()

total_train_step += 1

if total_train_step % 100 == 0:

end_time = time.time()

print(end_time - start_time)

print("训练次数{}, Loss:{}".format(total_train_step, loss.item()))

writer.add_scalar("train_loss", loss.item(), total_train_step)

# 测试步骤开始

test.eval()

total_test_loss = 0

total_accuracy = 0

with torch.no_grad():

for data in test_dataloader:

imgs, targets = data

imgs = imgs.to(device)

targets = targets.to(device)

outputs = test(imgs)

loss = loss_fn(outputs, targets)

total_test_loss += loss.item()

accuracy = (outputs.argmax(1) == targets).sum()

total_accuracy += accuracy

print("整体测试集上的Loss: {}".format(total_test_loss))

print("整体测试集上的正确率: {}".format(total_accuracy/test_data_size))

writer.add_scalar("test_loss", total_test_loss, total_test_step)

writer.add_scalar("test_accuracy", total_accuracy/test_data_size, total_test_step)

total_test_step += 1

torch.save(test, "test_{}.pth".format(i))

print("模型已保存")

writer.close()六、模型的加载及测试

import torch

import torchvision

from PIL import Image

from model import *

CLASS = ["airplane", "automobile", "bird", "cat", "deer", "dog", "frog", "horse", "ship", "truck"]

image_path = "./imgs/dog.png"

image = Image.open(image_path)

# print(image)

transform = torchvision.transforms.Compose([torchvision.transforms.Resize((32, 32)),

torchvision.transforms.ToTensor()])

image = transform(image)

# print(image.shape)

model = torch.load("test_99.pth", map_location=torch.device('cpu'))

# print(model)

image = torch.reshape(image, (1, 3, 32, 32))

model.eval()

with torch.no_grad():

output = model(image)

ret = output.argmax(1)

ret = ret.numpy()

print("预测结果为:{}".format(CLASS[ret[0]]))