Feature Extraction Networks: DenseNet

Contents

- Preface

- 1. Network structure

- 2. Structure analysis

- 3. Advantages

Preface

Some notes on DenseNet.

Paper: https://arxiv.org/pdf/1608.06993.pdf

1. Network structure

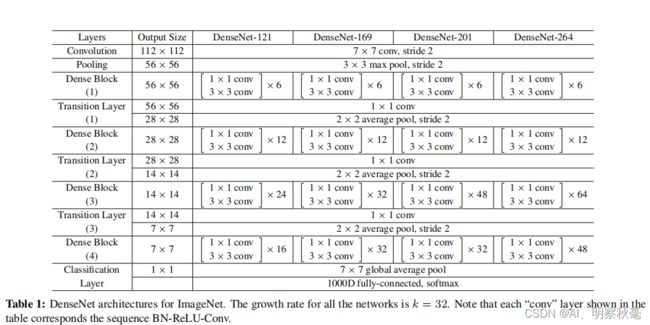

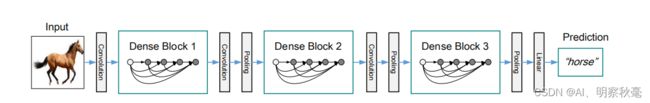

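The layout is similar to ResNet. The output size column gives the output resolution. In DenseNet-121, the channel counts of the first few stages match those of ResNet-50, while the last stage still outputs 1024 channels. The network starts with a 7x7 convolution (with BN + ReLU) followed by max pooling, and then produces the outputs C2, C3, C4, and C5. In the layers column, the number in parentheses under each layer is the stride of that layer's convolution.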
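
To make the output size column concrete, here is a minimal sketch of how the resolution shrinks from stage to stage. It assumes a 224x224 input and that the 7x7 convolution, the max pooling, and every transition layer each halve the resolution (stride 2). The input size and the helper name `stage_resolutions` are assumptions for illustration, and plain integer halving stands in for the exact padding arithmetic.

```python
def stage_resolutions(input_size=224, num_stages=4):
    """Return the spatial size of C2..C5 for a square input (illustrative helper)."""
    size = input_size // 2   # 7x7 conv, assumed stride 2
    size = size // 2         # max pool, assumed stride 2
    sizes = [size]           # C2 is the output of the first dense block
    for _ in range(num_stages - 1):
        size = size // 2     # each transition layer's average pool, stride 2
        sizes.append(size)
    return sizes


print(stage_resolutions())  # [56, 28, 14, 7]
```

The dense blocks themselves keep the resolution unchanged, so only the stem and the transition layers appear in this trace.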

2. Structure analysis

Now for the channel counts of the outputs C2, C3, C4, and C5. The input has 3 channels; after conv1 + pool it becomes:

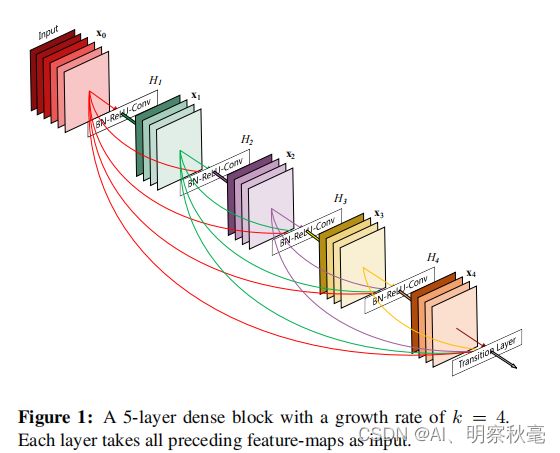

Dense block (the counterpart of a residual block, except that features are concatenated rather than added)

bn_size = 4, growth_rate = 32

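    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    class _DenseBlock(nn.Sequential):
        def __init__(self, num_layers, num_input_features, bn_size, growth_rate, drop_rate):
            super(_DenseBlock, self).__init__()
            for i in range(num_layers):
                # Layer i receives the block input plus the output of the i layers before it.
                layer = _DenseLayer(num_input_features + i * growth_rate, growth_rate, bn_size, drop_rate)
                self.add_module('denselayer%d' % (i + 1), layer)  # register the layer as a submodule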

For the i-th layer in a block, num_input_features = block input channels + i * growth_rate.
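
The formula can be checked with a few lines of plain Python. This is only a sketch of the channel arithmetic: the helper name `dense_block_channels` is hypothetical, and the example values (6 layers, 64 input channels) are assumed to correspond to the first dense block of DenseNet-121.

```python
def dense_block_channels(num_layers, num_input_features, growth_rate=32):
    """Input channels seen by each layer, plus the channel count leaving the block."""
    per_layer_inputs = [num_input_features + i * growth_rate for i in range(num_layers)]
    block_output = num_input_features + num_layers * growth_rate
    return per_layer_inputs, block_output


inputs, out_channels = dense_block_channels(num_layers=6, num_input_features=64)
print(inputs)        # [64, 96, 128, 160, 192, 224]
print(out_channels)  # 256
```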

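Composition of a dense layer: as the code shows, a BN + ReLU + 1x1 convolution followed by a BN + ReLU + 3x3 convolution. Both convolutions use stride 1 in the code; the downsampling shown in the structure diagram above is done outside the dense layers, by the pooling in the transition layers.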

    class _DenseLayer(nn.Sequential):
        def __init__(self, num_input_features, growth_rate, bn_size, drop_rate):
            super(_DenseLayer, self).__init__()
            self.add_module('norm1', nn.BatchNorm2d(num_input_features))
            self.add_module('relu1', nn.ReLU(inplace=True))
            # 1x1 bottleneck: num_input_features -> bn_size * growth_rate (4 * 32 = 128)
            self.add_module('conv1', nn.Conv2d(num_input_features, bn_size * growth_rate,
                                               kernel_size=1, stride=1, bias=False))
            self.add_module('norm2', nn.BatchNorm2d(bn_size * growth_rate))
            self.add_module('relu2', nn.ReLU(inplace=True))
            # 3x3 conv: bn_size * growth_rate -> growth_rate (128 -> 32)
            self.add_module('conv2', nn.Conv2d(bn_size * growth_rate, growth_rate,
                                               kernel_size=3, stride=1, padding=1, bias=False))
            self.drop_rate = drop_rate

        def forward(self, x):
            new_features = super(_DenseLayer, self).forward(x)
            if self.drop_rate > 0:
                new_features = F.dropout(new_features, p=self.drop_rate, training=self.training)
            # Concatenate along the channel axis, e.g. 64 + 32 -> 96, then 96 + 32 -> 128
            return torch.cat([x, new_features], 1)
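
As a rough illustration of what the two convolutions cost, the sketch below counts their weights for a given input width. It follows the shapes in the code above (both convolutions use bias=False) and ignores the BatchNorm parameters; the helper name `dense_layer_conv_weights` is hypothetical.

```python
def dense_layer_conv_weights(num_input_features, growth_rate=32, bn_size=4):
    """Count the conv weights in one dense layer (BatchNorm parameters are ignored)."""
    bottleneck = bn_size * growth_rate                # 4 * 32 = 128 channels
    conv1 = num_input_features * bottleneck * 1 * 1   # 1x1 conv, no bias
    conv2 = bottleneck * growth_rate * 3 * 3          # 3x3 conv, no bias
    return conv1, conv2


conv1, conv2 = dense_layer_conv_weights(64)
print(conv1, conv2)  # 8192 36864
```

Only the 1x1 convolution grows with the number of accumulated input channels; the 3x3 convolution always maps 128 channels to 32, so its cost stays fixed however deep the layer sits in the block.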

Transition layer: a BN + ReLU + 1x1 convolution (compresses the channels, halving them) followed by an AvgPool2d with kernel_size=2 (downsampling, which halves the resolution).

    class _Transition(nn.Sequential):
        def __init__(self, num_input_features, num_output_features, filter_size=1):
            super(_Transition, self).__init__()
            self.add_module('norm', nn.BatchNorm2d(num_input_features))
            self.add_module('relu', nn.ReLU(inplace=True))
            # 1x1 conv compresses the channels to num_output_features
            self.add_module('conv', nn.Conv2d(num_input_features, num_output_features,
                                              kernel_size=1, stride=1, bias=False))
            # 2x2 average pooling with stride 2 halves the resolution
            self.add_module('pool', nn.AvgPool2d(kernel_size=2, stride=2))
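
Putting dense blocks and transition layers together gives the channel counts of C2 to C5. The sketch below assumes the DenseNet-121 configuration of (6, 12, 24, 16) layers per block and 64 channels after conv1 + pool. Neither value is spelled out in the text above, and the function name `densenet_stage_channels` is hypothetical.

```python
def densenet_stage_channels(block_config=(6, 12, 24, 16), num_init_features=64, growth_rate=32):
    """Channel count after each dense block (C2..C5); transitions halve it in between."""
    channels = num_init_features
    outputs = []
    for i, num_layers in enumerate(block_config):
        channels += num_layers * growth_rate   # every dense layer adds growth_rate channels
        outputs.append(channels)
        if i != len(block_config) - 1:
            channels //= 2                     # transition layer: 1x1 conv halves the channels
    return outputs


print(densenet_stage_channels())  # [256, 512, 1024, 1024]
```

Under these assumptions the first three values match the ResNet-50 stage widths, and the last stage stays at 1024 because no transition layer follows the final block.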

3. Advantages

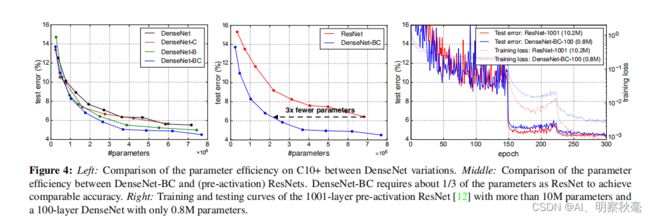

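(1) Fewer parameters than ResNet.

(2) The bypass connections strengthen feature reuse.

(3) The network is easier to train and has a certain regularizing effect.

(4) It alleviates the vanishing-gradient and model-degradation problems.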