Handwritten Digit Recognition on MNIST with a Residual Network

1. Residual networks

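This post uses a CNN with residual blocks to recognize handwritten digits from the MNIST dataset.

Compared with simple networks such as LeNet, a residual network differs in one respect: it adds a shortcut (skip) connection that carries a block's input around its convolution layers and adds it to their output.

In the figure, the left side shows a basic CNN structure and the right side shows the structure with a residual connection.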

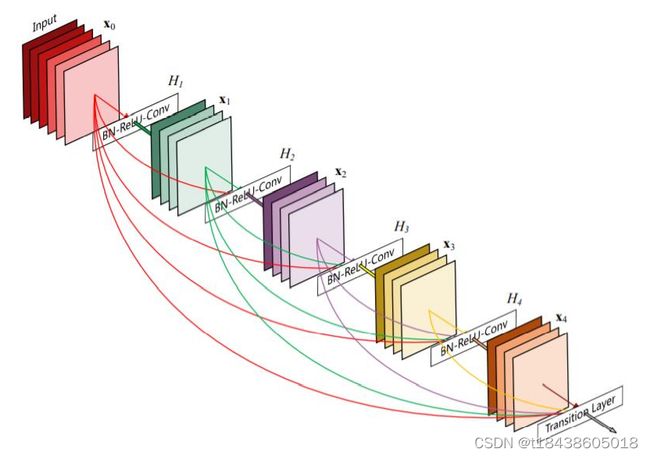

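The residual block is a classic, fundamental building block in current network models. DenseNet, for example, is a newer architecture that was proposed on the basis of the residual block.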
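As a rough formalization of the shortcut connection described above, a block with two convolutions and an identity shortcut can be written as follows. The symbols $\mathcal{F}$, $W_1$ and $W_2$ are notation introduced here for illustration (biases are omitted, and $*$ denotes convolution); they do not come from the original post.

```latex
\mathcal{F}(x) = W_2 * \mathrm{ReLU}(W_1 * x), \qquad
y = \mathrm{ReLU}\bigl(x + \mathcal{F}(x)\bigr)
```

If the convolution branch outputs $\mathcal{F}(x) = 0$, the block reduces to $\mathrm{ReLU}(x)$, so the layers only need to learn a correction on top of the identity mapping.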

For background, see the author's previous post, "CNN实现MNIST数据集手写数字识别" (handwritten digit recognition on MNIST with a CNN).

4. Code implementation (PyTorch)

import torch
from torchvision import transforms
from torchvision import datasets
from torch.utils.data import DataLoader
import torch.optim as optim
import torch.nn.functional as F
import matplotlib.pyplot as plt

batch_size = 64

# Convert images to tensors in [0, 1], then normalize them.
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,)),  # two arguments: mean and standard deviation
])

train_dataset = datasets.MNIST(
    root="../dataset/mnist/",
    train=True,
    download=True,
    transform=transform,
)
train_loader = DataLoader(train_dataset,
                          shuffle=True,
                          batch_size=batch_size)

test_dataset = datasets.MNIST(
    root="../dataset/mnist/",
    train=False,
    download=True,
    transform=transform,
)
# Sample order does not affect evaluation, so the test data is not shuffled.
test_loder = DataLoader(test_dataset,
                        shuffle=False,
                        batch_size=batch_size)
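To make the preprocessing above concrete, the following pure-Python sketch reproduces the arithmetic that `ToTensor` and `Normalize` apply to a single pixel, and counts the batches each loader yields. The helper names `normalize_pixel` and `batches_per_epoch` are made up for this illustration; the split sizes (60000 training and 10000 test images) are those of the standard MNIST dataset.

```python
import math

MEAN, STD = 0.1307, 0.3081  # the values passed to transforms.Normalize in this post


def normalize_pixel(raw):
    """Map a raw 0..255 pixel value the way ToTensor followed by Normalize does."""
    scaled = raw / 255.0          # ToTensor: uint8 -> float in [0, 1]
    return (scaled - MEAN) / STD  # Normalize: subtract the mean, divide by the std


def batches_per_epoch(num_samples, batch_size=64):
    """Number of batches a DataLoader yields when drop_last is False (the default)."""
    return math.ceil(num_samples / batch_size)


black = normalize_pixel(0)      # darkest possible pixel
white = normalize_pixel(255)    # brightest possible pixel
train_batches = batches_per_epoch(60000)  # MNIST training split
test_batches = batches_per_epoch(10000)   # MNIST test split

print(round(black, 4), round(white, 4), train_batches, test_batches)
```

With a batch size of 64, one training epoch has 938 batches, which is why the training log later in the post reports at batches 300, 600 and 900 and then finishes the epoch.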

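class ResidualBlock(torch.nn.Module):
    """Two 3x3 convolutions with an identity shortcut; the output shape equals the input shape."""

    def __init__(self, channels):
        super(ResidualBlock, self).__init__()
        self.channels = channels
        # kernel_size=3 with padding=1 keeps the spatial size, so x and y can be added.
        self.conv1 = torch.nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.conv2 = torch.nn.Conv2d(channels, channels, kernel_size=3, padding=1)

    def forward(self, x):
        y = F.relu(self.conv1(x))
        y = self.conv2(y)
        # Add the input back before the final activation.
        return F.relu(x + y)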
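A quick way to check the two properties the block relies on (shape preservation and the identity shortcut) is sketched below. The class is repeated so the snippet runs on its own; the input size `(2, 16, 12, 12)` is an arbitrary choice for illustration, and zeroing `conv2` is only a trick to switch off the convolution branch, not something the post's training code does.

```python
import torch
import torch.nn.functional as F


class ResidualBlock(torch.nn.Module):
    def __init__(self, channels):
        super().__init__()
        self.conv1 = torch.nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.conv2 = torch.nn.Conv2d(channels, channels, kernel_size=3, padding=1)

    def forward(self, x):
        y = F.relu(self.conv1(x))
        y = self.conv2(y)
        return F.relu(x + y)


block = ResidualBlock(16)
x = torch.randn(2, 16, 12, 12)  # hypothetical batch: 2 samples, 16 channels, 12x12

with torch.no_grad():
    out = block(x)

    # Zero the second convolution so the residual branch contributes nothing.
    torch.nn.init.zeros_(block.conv2.weight)
    torch.nn.init.zeros_(block.conv2.bias)
    identity_out = block(x)

print(out.shape)  # same shape as x
```

With the branch switched off, the block output equals `F.relu(x)`: the input passes through the shortcut unchanged apart from the final activation.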

'''
CLASS torch.nn.Conv2d(in_channels, out_channels, kernel_size, stride=1, padding=0,
                      dilation=1, groups=1, bias=True, padding_mode='zeros', device=None, dtype=None)
'''
'''
CLASS torch.nn.MaxPool2d(kernel_size, stride=None, padding=0,
                         dilation=1, return_indices=False, ceil_mode=False)
'''

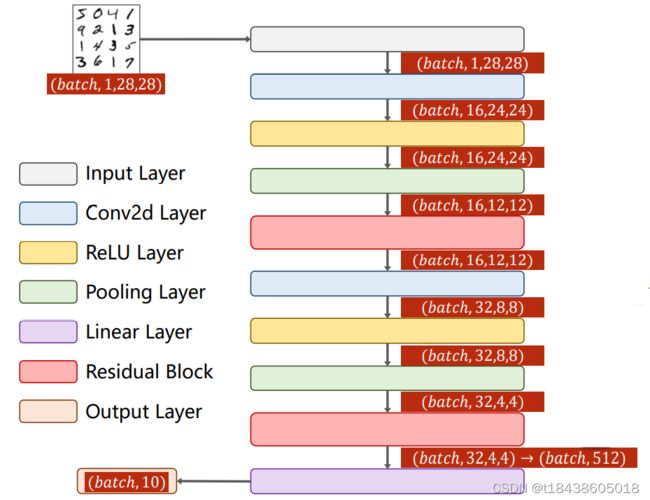

class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Conv2d(in_channels=1, out_channels=16, kernel_size=5)
        self.conv2 = torch.nn.Conv2d(in_channels=16, out_channels=32, kernel_size=5)
        self.rblock1 = ResidualBlock(16)
        self.rblock2 = ResidualBlock(32)
        self.maxpooling = torch.nn.MaxPool2d(2)
        # Readers may want to add a few more fully connected layers; see the author's post:
        # https://blog.csdn.net/t18438605018/article/details/122137737?spm=1001.2014.3001.5501
        # After the second pooling step the feature map holds 32 x 4 x 4 = 512 values.
        self.linear1 = torch.nn.Linear(512, 10)

    def forward(self, x):
        in_size = x.size(0)  # batch size
        x = self.maxpooling(F.relu(self.conv1(x)))  # (N, 1, 28, 28) -> (N, 16, 12, 12)
        x = self.rblock1(x)                         # shape unchanged
        x = self.maxpooling(F.relu(self.conv2(x)))  # (N, 16, 12, 12) -> (N, 32, 4, 4)
        x = self.rblock2(x)                         # shape unchanged
        x = x.view(in_size, -1)  # flatten to (N, 512)
        x = self.linear1(x)      # raw class scores for the 10 digits
        return x
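The input size of the final linear layer, 512, follows from the layer sizes in `Net`. The sketch below traces the spatial size through the network using the standard output-size formulas for convolution and pooling; the helper names `conv_out` and `pool_out` are made up for this illustration.

```python
def conv_out(size, kernel_size, padding=0, stride=1):
    """Spatial output size of a Conv2d layer (dilation 1)."""
    return (size + 2 * padding - kernel_size) // stride + 1


def pool_out(size, kernel_size):
    """Spatial output size of MaxPool2d(kernel_size), whose stride defaults to kernel_size."""
    return (size - kernel_size) // kernel_size + 1


size = 28                                  # MNIST images are 28x28
size = pool_out(conv_out(size, 5), 2)      # conv1 (5x5): 28 -> 24, pooling: 24 -> 12
size = conv_out(size, 3, padding=1)        # rblock1 (3x3, padding 1): 12 -> 12
size = pool_out(conv_out(size, 5), 2)      # conv2 (5x5): 12 -> 8, pooling: 8 -> 4
size = conv_out(size, 3, padding=1)        # rblock2 (3x3, padding 1): 4 -> 4

flat_features = 32 * size * size           # 32 channels after conv2
print(size, flat_features)
```

If the kernel sizes, channel counts or input resolution change, rerunning this kind of trace gives the new value for the first argument of `torch.nn.Linear`.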

model = Net()

# Use the GPU if one is available, otherwise fall back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model.to(device)

criterion = torch.nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)
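`CrossEntropyLoss` takes the raw scores produced by the network, which is why `Net.forward` ends without a softmax. As a minimal sketch of what the loss computes for a single sample (pure Python, not PyTorch's actual implementation; the function name `cross_entropy` and the example scores are made up here):

```python
import math


def cross_entropy(logits, target):
    """-log(softmax(logits)[target]) for one sample, using the log-sum-exp trick."""
    m = max(logits)  # subtract the maximum for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


# All 10 classes scored equally: the loss is log(10), the value for a pure guess.
uniform = cross_entropy([0.0] * 10, target=3)

# The correct class scored far above the rest: the loss is close to 0.
confident = cross_entropy([10.0 if i == 3 else 0.0 for i in range(10)], target=3)

# A wrong class scored far above the rest: the loss is large.
wrong = cross_entropy([10.0 if i == 7 else 0.0 for i in range(10)], target=3)

print(uniform, confident, wrong)
```

An untrained 10-class classifier typically starts near log(10), roughly 2.3, which gives a reference point for reading the per-batch losses in the training log.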

def train(epoch):
    total = 0
    running_loss = 0.0
    train_loss = 0.0  # loss value reported for this epoch
    accuracy = 0      # number of correct predictions in this epoch
    for batch_id, data in enumerate(train_loader, 0):
        inputs, target = data
        inputs, target = inputs.to(device), target.to(device)
        optimizer.zero_grad()

        # forward + backward + update
        outputs = model(inputs)
        loss = criterion(outputs, target)

        _, predicted = torch.max(outputs.data, dim=1)
        accuracy += (predicted == target).sum().item()
        total += target.size(0)

        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        # running_loss is reset every 300 batches, so at the end of the epoch
        # train_loss holds the summed loss of the batches since the last reset.
        train_loss = running_loss

        # Every 300 iterations, print the mean loss over those 300 iterations.
        if batch_id % 300 == 299:
            print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_id + 1, running_loss / 300))
            running_loss = 0.0

    print('第 %d epoch的 Accuracy on train set: %d %%, Loss on train set: %f' % (epoch + 1, 100 * accuracy / total, train_loss))

    # Return the accuracy and the loss.
    return 1.0 * accuracy / total, train_loss
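One detail of `train` is worth spelling out: `train_loss` is copied from `running_loss`, which is reset every 300 batches, so the value returned for an epoch covers only the batches after the last reset. The pure-Python sketch below replays that bookkeeping on made-up data (a constant loss of 0.1 per batch over 938 batches); `simulate_epoch` is a hypothetical helper, not part of the post's code.

```python
def simulate_epoch(batch_losses, report_every=300):
    """Replay the running_loss / train_loss bookkeeping used in train()."""
    running_loss = 0.0
    train_loss = 0.0
    for batch_id, loss in enumerate(batch_losses):
        running_loss += loss
        train_loss = running_loss
        if batch_id % report_every == report_every - 1:
            running_loss = 0.0  # reset after each report
    return train_loss


losses = [0.1] * 938               # hypothetical: same loss for every batch
reported = simulate_epoch(losses)  # covers only the last 38 batches
full_sum = sum(losses)             # covers the whole epoch

print(reported, full_sum)
```

Here the reported value is about 3.8 (38 batches) while the full-epoch sum is about 93.8. To track the whole epoch instead, one option would be to accumulate `loss.item()` into a separate variable that is never reset.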

def validation(epoch):
    correct = 0
    total = 0
    val_loss = 0.0  # sum of the batch losses over the whole validation set
    # No gradients are needed for evaluation.
    with torch.no_grad():
        for data in test_loder:
            images, target = data
            images, target = images.to(device), target.to(device)
            outputs = model(images)
            loss = criterion(outputs, target)
            val_loss += loss.item()
            # The index of the largest score is the predicted digit.
            _, predicted = torch.max(outputs.data, dim=1)
            total += target.size(0)
            correct += (predicted == target).sum().item()

    print('第 %d epoch的 Accuracy on validation set: %d %%, Loss on validation set: %f' % (epoch + 1, 100 * correct / total, val_loss))

    # Return the accuracy and the loss.
    return 1.0 * correct / total, val_loss
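Both `train` and `validation` count correct predictions with `torch.max(..., dim=1)`, which returns a pair of tensors: the largest value in each row and its index. A small sketch with made-up scores for four samples and three classes shows the mechanics:

```python
import torch

# Hypothetical scores: 4 samples (rows), 3 classes (columns).
outputs = torch.tensor([[0.1, 2.0, -1.0],
                        [1.5, 0.2, 0.3],
                        [0.0, 0.1, 3.0],
                        [0.9, 0.8, 0.7]])
target = torch.tensor([1, 0, 2, 1])  # hypothetical true labels

# values: largest score per row; predicted: column index of that score.
values, predicted = torch.max(outputs, dim=1)

correct = (predicted == target).sum().item()  # number of matching predictions
accuracy = correct / target.size(0)

print(predicted.tolist(), correct, accuracy)
```

The last sample is misclassified (predicted class 0, true class 1), so three of four predictions match. `outputs.argmax(dim=1)` would give the same indices when the values themselves are not needed.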

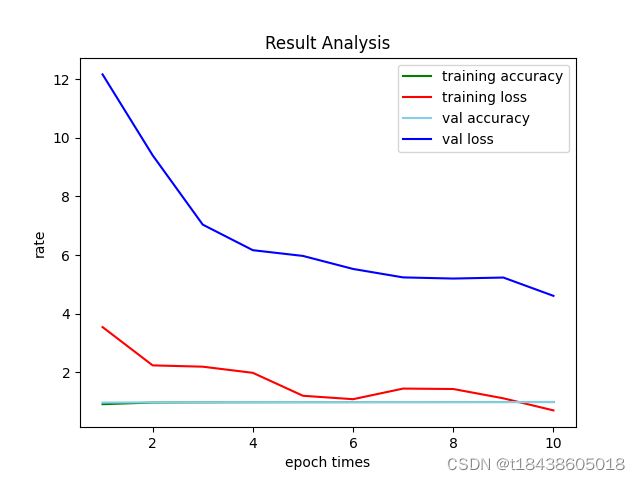

def draw_in_one(curves, epoch):
    # Plot several curves on one line chart.
    # curves holds four lists of equal length:
    # train accuracy, train loss, validation accuracy, validation loss.
    x_axix = [x for x in range(1, epoch + 1)]  # turn the range into a list
    train_acc = curves[0]
    train_loss = curves[1]
    val_acc = curves[2]
    val_loss = curves[3]

    #sub_axix = filter(lambda x: x % 200 == 0, x_axix)
    plt.title('Result Analysis')
    plt.plot(x_axix, train_acc, color='green', label='training accuracy')
    plt.plot(x_axix, train_loss, color='red', label='training loss')
    plt.plot(x_axix, val_acc, color='skyblue', label='val accuracy')
    plt.plot(x_axix, val_loss, color='blue', label='val loss')
    plt.legend()  # show the legend

    plt.xlabel('epoch times')
    plt.ylabel('rate')
    plt.show()

if __name__ == '__main__':
    train_loss = []
    train_acc = []
    val_loss = []
    val_acc = []
    epoches = 10
    curves = []  # avoids shadowing the built-in name list

    for epoch in range(epoches):
        acc1, loss1 = train(epoch)
        train_loss.append(loss1)
        train_acc.append(acc1)

        acc2, loss2 = validation(epoch)
        val_loss.append(loss2)
        val_acc.append(acc2)

    # Draw all four curves in one figure.
    curves.append(train_acc)
    curves.append(train_loss)
    curves.append(val_acc)
    curves.append(val_loss)
    draw_in_one(curves, epoches)
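`draw_in_one` plots accuracy (between 0 and 1) and summed loss (up to about 12 in the run logged below) against the same y-axis, which flattens the accuracy curves. One possible alternative, sketched here and not part of the original code, is to give the loss its own axis with matplotlib's `twinx`. The numbers are the validation figures copied from the console output in this post; the `Agg` backend and the in-memory buffer are choices made only so the sketch runs without a display.

```python
import io

import matplotlib
matplotlib.use("Agg")  # non-interactive backend, so no window is needed
import matplotlib.pyplot as plt

# Validation figures copied from the console output in this post.
val_acc_percent = [97, 98, 98, 98, 98, 98, 98, 98, 98, 99]
val_loss_sum = [12.158820, 9.401881, 7.038621, 6.167475, 5.971746,
                5.528260, 5.239810, 5.200812, 5.235084, 4.611128]
epochs = list(range(1, len(val_loss_sum) + 1))

fig, ax_acc = plt.subplots()
ax_loss = ax_acc.twinx()  # second y-axis sharing the same x-axis

ax_acc.plot(epochs, val_acc_percent, color='skyblue', label='val accuracy (%)')
ax_loss.plot(epochs, val_loss_sum, color='blue', label='val loss (sum over batches)')

ax_acc.set_xlabel('epoch')
ax_acc.set_ylabel('accuracy (%)')
ax_loss.set_ylabel('loss')
ax_acc.set_title('Result Analysis')

# Merge the line handles of both axes into a single legend.
lines = ax_acc.get_lines() + ax_loss.get_lines()
ax_acc.legend(lines, [line.get_label() for line in lines], loc='center right')

buf = io.BytesIO()
fig.savefig(buf, format='png')  # render to memory; pass a file name to write a file instead
```

In an interactive session, dropping the `matplotlib.use("Agg")` line and calling `plt.show()` displays the figure as the original function does.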

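The only difference between this code and the code in the CNN post is the network model; everything else is unchanged.

On the validation set (the MNIST test split), recognition accuracy reaches 99% by the 10th epoch.

Console output. The per-epoch loss values are sums of batch losses rather than per-batch means, so the train and validation losses are not directly comparable: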

E:\anaconda3\envs\pytorch\python.exe D:/PycharmProjects/pytorchProject/ReNet实现手写数字识别.py

[1, 300] loss: 0.593

[1, 600] loss: 0.153

[1, 900] loss: 0.118

第 1 epoch的 Accuracy on train set: 91 %, Loss on train set: 3.547480

第 1 epoch的 Accuracy on validation set: 97 %, Loss on validation set: 12.158820

[2, 300] loss: 0.087

[2, 600] loss: 0.083

[2, 900] loss: 0.081

第 2 epoch的 Accuracy on train set: 97 %, Loss on train set: 2.241397

第 2 epoch的 Accuracy on validation set: 98 %, Loss on validation set: 9.401881

[3, 300] loss: 0.059

[3, 600] loss: 0.060

[3, 900] loss: 0.062

第 3 epoch的 Accuracy on train set: 98 %, Loss on train set: 2.196551

第 3 epoch的 Accuracy on validation set: 98 %, Loss on validation set: 7.038621

[4, 300] loss: 0.051

[4, 600] loss: 0.050

[4, 900] loss: 0.043

第 4 epoch的 Accuracy on train set: 98 %, Loss on train set: 1.987330

第 4 epoch的 Accuracy on validation set: 98 %, Loss on validation set: 6.167475

[5, 300] loss: 0.046

[5, 600] loss: 0.039

[5, 900] loss: 0.038

第 5 epoch的 Accuracy on train set: 98 %, Loss on train set: 1.205675

第 5 epoch的 Accuracy on validation set: 98 %, Loss on validation set: 5.971746

[6, 300] loss: 0.035

[6, 600] loss: 0.039

[6, 900] loss: 0.035

第 6 epoch的 Accuracy on train set: 98 %, Loss on train set: 1.088960

第 6 epoch的 Accuracy on validation set: 98 %, Loss on validation set: 5.528260

[7, 300] loss: 0.029

[7, 600] loss: 0.034

[7, 900] loss: 0.030

第 7 epoch的 Accuracy on train set: 99 %, Loss on train set: 1.450512

第 7 epoch的 Accuracy on validation set: 98 %, Loss on validation set: 5.239810

[8, 300] loss: 0.027

[8, 600] loss: 0.026

[8, 900] loss: 0.026

第 8 epoch的 Accuracy on train set: 99 %, Loss on train set: 1.436349

第 8 epoch的 Accuracy on validation set: 98 %, Loss on validation set: 5.200812

[9, 300] loss: 0.025

[9, 600] loss: 0.025

[9, 900] loss: 0.023

第 9 epoch的 Accuracy on train set: 99 %, Loss on train set: 1.118738

第 9 epoch的 Accuracy on validation set: 98 %, Loss on validation set: 5.235084

[10, 300] loss: 0.025

[10, 600] loss: 0.022

[10, 900] loss: 0.022

第 10 epoch的 Accuracy on train set: 99 %, Loss on train set: 0.706974

第 10 epoch的 Accuracy on validation set: 99 %, Loss on validation set: 4.611128

Process finished with exit code 0
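The validation loss printed for each epoch is the sum of the batch losses over the whole test set, which is why it looks large next to the per-batch training losses. A minimal sketch of the conversion to a per-batch mean, using two values copied from the log above and assuming the loader yields ceil(10000 / 64) batches (the standard MNIST test split with a batch size of 64):

```python
import math

# Summed validation losses copied from the console output (epochs 1 and 10).
val_loss_sums = {1: 12.158820, 10: 4.611128}

num_batches = math.ceil(10000 / 64)  # batches in one pass over the test set

# Divide each sum by the number of batches to get the mean loss per batch.
mean_losses = {epoch: total / num_batches for epoch, total in val_loss_sums.items()}

for epoch, mean_loss in mean_losses.items():
    print(epoch, round(mean_loss, 4))
```

On this scale the validation loss falls from roughly 0.077 per batch after the first epoch to roughly 0.029 after the tenth, the same order of magnitude as the 300-batch training averages in the log.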
