[PyTorch] Implementing LayerNorm yourself in PyTorch

There are two ways to use LayerNorm in PyTorch: nn.LayerNorm and nn.functional.layer_norm.

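1. How it is computed

According to the official documentation, LayerNorm is computed as follows:

$$y = \frac{x - \mathrm{E}[x]}{\sqrt{\mathrm{Var}[x] + \epsilon}} \cdot \gamma + \beta$$

Here $\gamma$ and $\beta$ are the learnable weight and bias (present when elementwise_affine=True), and $\epsilon$ is a small constant for numerical stability.

The formula is the same as BatchNorm's; only the dimensions over which the mean and variance are computed differ.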

Let's walk through the formula with a concrete example.

Suppose we have the following data:

x = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]]  # shape (2, 3)

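First compute the mean and the variance.

Mean:

# computed over the last dimension

mean = [(0.1+0.2+0.3)/3, (0.4+0.5+0.6)/3] = [0.2, 0.5]

Variance (eps = 1e-5 = 0.00001 is added for numerical stability):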

var = [mean((0.1-0.2)^2, (0.2-0.2)^2, (0.3-0.2)^2) + 0.00001,

mean((0.4-0.5)^2, (0.5-0.5)^2, (0.6-0.5)^2) + 0.00001]

= [mean(0.01, 0, 0.01) + 0.00001, mean(0.01, 0, 0.01) + 0.00001]

= [0.00667 + 0.00001, 0.00667 + 0.00001]

sqrt(var) = [0.0817, 0.0817]

Then compute (x-mean)/sqrt(var):

(x-mean)/sqrt(var) = [[(0.1-0.2)/0.0817, (0.2-0.2)/0.0817, (0.3-0.2)/0.0817],

[(0.4-0.5)/0.0817, (0.5-0.5)/0.0817, (0.6-0.5)/0.0817]]

= [[-1.2238, 0.0000, 1.2238],

[-1.2238, 0.0000, 1.2238]]
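The hand calculation above can be replayed in a few lines of plain Python. This is only a minimal sketch: the helper name `layer_norm_rows` is made up for illustration, and it leaves out the learnable weight and bias.

```python
import math

x = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]]
eps = 1e-5

def layer_norm_rows(rows, eps=1e-5):
    """Normalize each row over its last dimension (no weight or bias)."""
    out = []
    for row in rows:
        mean = sum(row) / len(row)
        # Biased variance: divide by N, not N - 1.
        var = sum((v - mean) ** 2 for v in row) / len(row)
        std = math.sqrt(var + eps)
        out.append([(v - mean) / std for v in row])
    return out

result = layer_norm_rows(x, eps)
for row in result:
    print([round(v, 4) for v in row])
```

Both rows come out with the same normalized values because they have the same spread around their own mean.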

2. Implementation

The code below uses both of these approaches, plus a hand-written implementation.

import torch

import torch.nn.functional as F

x = torch.tensor([[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]])  # shape is (2, 3)

# Note: normalized_shape in both LayerNorm and layer_norm holds dimension sizes, not axis indices;
# PyTorch matches these sizes against the trailing dimensions of the input.
nn_layer_norm = torch.nn.LayerNorm(normalized_shape=[3], eps=1e-5, elementwise_affine=True)

print("LayerNorm=", nn_layer_norm(x))

layer_norm = F.layer_norm(x, normalized_shape=[3], weight=None, bias=None, eps=1e-5)

print("F.layer_norm=", layer_norm)

# dim is the axis index
mean = torch.mean(x, dim=[1], keepdim=True)

# Note the torch.mean here (not torch.sum): LayerNorm uses the biased variance, dividing by N.
# torch.var divides by N - 1 by default, so it only matches when called with unbiased=False.
var = torch.mean((x - mean) ** 2, dim=[1], keepdim=True) + 1e-5

print("my LayerNorm=", var, (x - mean) / torch.sqrt(var))

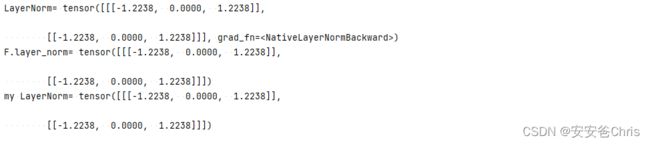

结果如下,
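As a side note on the variance step, here is a small sketch comparing the biased and unbiased variance. It assumes a PyTorch version where `torch.var` still accepts the `unbiased` keyword; the variable names are illustrative only.

```python
import torch
import torch.nn.functional as F

x = torch.tensor([[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]])
eps = 1e-5

mean = x.mean(dim=-1, keepdim=True)
# unbiased=False divides by N, which is what LayerNorm uses.
var_biased = torch.var(x, dim=-1, unbiased=False, keepdim=True)
# The default divides by N - 1 (Bessel's correction).
var_unbiased = torch.var(x, dim=-1, keepdim=True)

manual = (x - mean) / torch.sqrt(var_biased + eps)
reference = F.layer_norm(x, normalized_shape=[3], eps=eps)

print(torch.allclose(manual, reference, atol=1e-5))
print(var_biased.flatten(), var_unbiased.flatten())
```

With N = 3 elements per row, the unbiased variance is 3/2 times the biased one, which is why the default `torch.var` call does not reproduce the LayerNorm output.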

Multi-dimensional case

How should it be used if the tensor x is 3-dimensional?

Sample code:

import torch

import torch.nn.functional as F

x = torch.tensor([[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]]).view(2, 1, 3)  # shape (2, 1, 3)

# Note: normalized_shape must be the last few consecutive dimensions of the tensor;
# here [1, 3] is the last two dimensions of (2, 1, 3).
nn_layer_norm = torch.nn.LayerNorm(normalized_shape=[1, 3], eps=1e-5, elementwise_affine=True)

print("LayerNorm=", nn_layer_norm(x))

layer_norm = F.layer_norm(x, normalized_shape=[1, 3], weight=None, bias=None, eps=1e-5)

print("F.layer_norm=", layer_norm)

# dim lists the indices of the last two axes
mean = torch.mean(x, dim=[1, 2], keepdim=True)

var = torch.mean((x - mean) ** 2, dim=[1, 2], keepdim=True) + 1e-5

print("my LayerNorm=", (x - mean) / torch.sqrt(var))

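With multi-dimensional tensors, keep in mind that normalized_shape must be the last few consecutive dimensions of the tensor; otherwise an error similar to the following is raised: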

RuntimeError: Given normalized_shape=[2, 3], expected input with shape [*, 2, 3], but got input of size[2, 1, 3]
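The trailing-dimension rule can be checked with a short sketch. `manual_layer_norm` is a hypothetical helper written for this illustration, not a PyTorch API; it assumes the axes to reduce are simply the last `len(normalized_shape)` axes.

```python
import torch
import torch.nn.functional as F

def manual_layer_norm(x, normalized_shape, eps=1e-5):
    # Hypothetical helper (not a PyTorch API): reduce over the last
    # len(normalized_shape) axes, using the biased variance.
    dims = tuple(range(-len(normalized_shape), 0))
    mean = x.mean(dim=dims, keepdim=True)
    var = x.var(dim=dims, unbiased=False, keepdim=True)
    return (x - mean) / torch.sqrt(var + eps)

torch.manual_seed(0)
x = torch.randn(2, 1, 3)

# Valid choices: each one equals the trailing dimensions of (2, 1, 3).
matches = []
for shape in ([3], [1, 3], [2, 1, 3]):
    reference = F.layer_norm(x, normalized_shape=shape, eps=1e-5)
    matches.append(torch.allclose(manual_layer_norm(x, shape), reference, atol=1e-5))
print(matches)

# Invalid choice: [2, 3] is not a trailing shape of (2, 1, 3).
try:
    F.layer_norm(x, normalized_shape=[2, 3])
    raised = False
except RuntimeError as err:
    raised = True
    print("RuntimeError:", err)
```

The size list is only ever compared against the end of the input shape, so [2, 3] fails even though both numbers appear somewhere in (2, 1, 3).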

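3. Thoughts

From the above we can see that the normalization is actually performed over the trailing dimensions.

Consider training an NLP model, where tensors usually have the shape (batch size, sequence length, embedding size). Applying LayerNorm here means that, within a mini-batch, every token is normalized over its own embedding dimension.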
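A quick sketch of that per-token behaviour, with made-up sizes for the batch, sequence, and embedding dimensions:

```python
import torch

torch.manual_seed(0)
batch_size, seq_len, embed_dim = 4, 5, 8  # illustrative sizes only
x = torch.randn(batch_size, seq_len, embed_dim)

layer_norm = torch.nn.LayerNorm(embed_dim)

with torch.no_grad():
    y = layer_norm(x)
    # One mean and one variance per token: shape (batch, seq).
    token_mean = y.mean(dim=-1)
    token_var = y.var(dim=-1, unbiased=False)
    # Normalizing the first sample alone gives the same values as inside the batch.
    y_single = layer_norm(x[:1])
    same = torch.allclose(y[:1], y_single, atol=1e-6)

print(token_mean.shape, same)
```

Each token ends up with roughly zero mean and unit variance across its embedding, and the result for one sample does not depend on which other samples share the batch.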

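So why is LayerNorm generally used for NLP tasks?

In NLP tasks, the sequence lengths within each batch can differ, so if the statistics were computed across the batch and sequence dimensions, padding values might be included in them as well.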
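The padding argument can be illustrated with a rough sketch. It assumes zero vectors as padding and uses a hypothetical `pad_to` helper; real pipelines may pad and mask differently.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
embed_dim = 4
tokens = torch.randn(1, 3, embed_dim)  # one sequence with 3 real tokens

def pad_to(seq, length):
    # Hypothetical helper: append zero vectors along the sequence axis.
    return F.pad(seq, (0, 0, 0, length - seq.shape[1]))

short = pad_to(tokens, 3)  # no padding
long = pad_to(tokens, 6)   # 3 padding positions

# LayerNorm: per-token statistics, so the real tokens are unaffected by padding.
ln_short = F.layer_norm(short, [embed_dim])
ln_long = F.layer_norm(long, [embed_dim])
ln_unchanged = torch.allclose(ln_short[:, :3], ln_long[:, :3], atol=1e-6)

# Per-feature statistics over (batch, sequence) shift once padding is added.
feat_mean_short = short.mean(dim=(0, 1))
feat_mean_long = long.mean(dim=(0, 1))

print(ln_unchanged)
print(feat_mean_short, feat_mean_long)
```

In this toy setup the per-feature mean is halved when three zero rows are appended, while the LayerNorm output for the real tokens stays the same.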