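Cat vs. Dog Recognition with Convolutional Neural Networks

TensorFlow and Keras

Data source: the cats-vs-dogs dataset that Kaggle released in 2013. It contains 25,000 images in total, 12,500 of cats and 12,500 of dogs.

Download link: https://www.kaggle.com/c/dogs-vs-cats/data

Code: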

import os, shutil

# Directory where the original dataset was unpacked
original_dataset_diar = '/home/u/notebook_workspase/datas/dogs-vs-cats/train'
# Directory for the smaller dataset we keep
base_dir = '/home/u/notebook_workspase/datas/dogs-cats-small-dataset'
os.mkdir(base_dir)

# train, validation, and test directories for the split
train_dir = os.path.join(base_dir, 'train')  # os.path.join combines path components
os.mkdir(train_dir)
validation_dir = os.path.join(base_dir, 'validation')
os.mkdir(validation_dir)
test_dir = os.path.join(base_dir, 'test')
os.mkdir(test_dir)

# Cat and dog image directories inside train, validation, and test
train_cats_dir = os.path.join(train_dir, 'cats')
os.mkdir(train_cats_dir)
train_dogs_dir = os.path.join(train_dir, 'dogs')
os.mkdir(train_dogs_dir)
validation_cats_dir = os.path.join(validation_dir, 'cats')
os.mkdir(validation_cats_dir)
validation_dogs_dir = os.path.join(validation_dir, 'dogs')
os.mkdir(validation_dogs_dir)
test_cats_dir = os.path.join(test_dir, 'cats')
os.mkdir(test_cats_dir)
test_dogs_dir = os.path.join(test_dir, 'dogs')
os.mkdir(test_dogs_dir)

# Copy the first 1000 cat images into the training directory
fnames = ['cat.{}.jpg'.format(i) for i in range(1000)]
for fname in fnames:
    src = os.path.join(original_dataset_diar, fname)
    dst = os.path.join(train_cats_dir, fname)
    shutil.copyfile(src, dst)

# The next 500 cat images go to validation; the remaining splits follow the same pattern
fnames = ['cat.{}.jpg'.format(i) for i in range(1000, 1500)]
for fname in fnames:
    src = os.path.join(original_dataset_diar, fname)
    dst = os.path.join(validation_cats_dir, fname)
    shutil.copyfile(src, dst)

# 500 cat images for testing
fnames = ['cat.{}.jpg'.format(i) for i in range(1500, 2000)]
for fname in fnames:
    src = os.path.join(original_dataset_diar, fname)
    dst = os.path.join(test_cats_dir, fname)
    shutil.copyfile(src, dst)

# 1000 dog images for training
fnames = ['dog.{}.jpg'.format(i) for i in range(1000)]
for fname in fnames:
    src = os.path.join(original_dataset_diar, fname)
    dst = os.path.join(train_dogs_dir, fname)
    shutil.copyfile(src, dst)

# 500 dog images for validation
fnames = ['dog.{}.jpg'.format(i) for i in range(1000, 1500)]
for fname in fnames:
    src = os.path.join(original_dataset_diar, fname)
    dst = os.path.join(validation_dogs_dir, fname)
    shutil.copyfile(src, dst)

# 500 dog images for testing
fnames = ['dog.{}.jpg'.format(i) for i in range(1500, 2000)]
for fname in fnames:
    src = os.path.join(original_dataset_diar, fname)
    dst = os.path.join(test_dogs_dir, fname)
    shutil.copyfile(src, dst)

# Sanity check: number of images in each directory
print(len(os.listdir(train_cats_dir)))
print(len(os.listdir(train_dogs_dir)))
print(len(os.listdir(validation_cats_dir)))
print(len(os.listdir(validation_dogs_dir)))
print(len(os.listdir(test_cats_dir)))
print(len(os.listdir(test_dogs_dir)))
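The six copy loops above differ only in the file prefix, the index range, and the destination, so they can be folded into one helper. The following is a minimal sketch, not the author's code: `copy_range`, `build_small_dataset`, and the tiny `SPLITS` table are hypothetical names, and the demo runs on empty placeholder files in a temporary directory rather than on the real Kaggle images. It uses `os.makedirs(..., exist_ok=True)`, so re-running it does not fail the way a second `os.mkdir` call would.

```python
import os
import shutil
import tempfile


def copy_range(src_dir, dst_dir, prefix, start, stop):
    """Copy '<prefix>.<i>.jpg' for i in [start, stop) from src_dir to dst_dir."""
    os.makedirs(dst_dir, exist_ok=True)  # safe to call when the directory exists
    for i in range(start, stop):
        fname = '{}.{}.jpg'.format(prefix, i)
        shutil.copyfile(os.path.join(src_dir, fname),
                        os.path.join(dst_dir, fname))


def build_small_dataset(src_dir, base_dir, splits):
    """splits maps a split name to the (start, stop) index range used for both classes."""
    for split, (start, stop) in splits.items():
        for prefix, class_dir in (('cat', 'cats'), ('dog', 'dogs')):
            dst = os.path.join(base_dir, split, class_dir)
            copy_range(src_dir, dst, prefix, start, stop)


# Demo with 8 placeholder files per class (hypothetical sizes, for illustration only).
SPLITS = {'train': (0, 4), 'validation': (4, 6), 'test': (6, 8)}
with tempfile.TemporaryDirectory() as tmp:
    src = os.path.join(tmp, 'src')
    os.makedirs(src)
    for prefix in ('cat', 'dog'):
        for i in range(8):
            open(os.path.join(src, '{}.{}.jpg'.format(prefix, i)), 'wb').close()
    base = os.path.join(tmp, 'small')
    build_small_dataset(src, base, SPLITS)
    counts = {(s, c): len(os.listdir(os.path.join(base, s, c)))
              for s in SPLITS for c in ('cats', 'dogs')}
print(counts)
```

For the split used in this post, the table would be `{'train': (0, 1000), 'validation': (1000, 1500), 'test': (1500, 2000)}`.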

from keras import layers

from keras import models

model = models.Sequential()

model.add(layers.Conv2D(32,(3,3),activation='relu',input_shape=(150,150,3)))

model.add(layers.MaxPool2D((2,2)))

model.add(layers.Conv2D(64,(3,3),activation='relu'))

model.add(layers.MaxPool2D((2,2)))

model.add(layers.Conv2D(128,(3,3),activation='relu',))

model.add(layers.MaxPool2D((2,2)))

model.add(layers.Conv2D(128,(3,3),activation='relu',))

model.add(layers.MaxPool2D((2,2)))

model.add(layers.Flatten())

model.add(layers.Dense(512,activation='relu'))

model.add(layers.Dense(1,activation='sigmoid'))

model.summary()
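What `model.summary()` reports can be checked by hand. The sketch below assumes the Keras defaults that the model above relies on (3x3 convolutions with `'valid'` padding and stride 1, 2x2 max pooling with stride 2) and recomputes the feature-map sizes and the parameter count in plain Python; the helper names are made up for this illustration.

```python
def conv_out(size, kernel=3):
    # 'valid' convolution, stride 1: the map shrinks by kernel - 1
    return size - kernel + 1


def pool_out(size, pool=2):
    # 2x2 max pooling with stride 2 and no padding: floor division
    return size // pool


size, channels, total_params = 150, 3, 0
shapes = []
for filters in (32, 64, 128, 128):
    size = conv_out(size)
    # each filter has 3*3*channels weights plus one bias
    total_params += (3 * 3 * channels + 1) * filters
    channels = filters
    shapes.append(('conv', size, size, channels))
    size = pool_out(size)
    shapes.append(('pool', size, size, channels))

flat = size * size * channels            # input width of the first Dense layer
total_params += (flat + 1) * 512         # Dense(512)
total_params += (512 + 1) * 1            # Dense(1)

for shape in shapes:
    print(shape)
print('flatten:', flat, 'total parameters:', total_params)
```

Most of the parameters sit in the first dense layer, which is one reason this small network overfits a 2000-image training set so quickly.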

# Compile the model
from keras import optimizers

model.compile(loss='binary_crossentropy',
              optimizer=optimizers.RMSprop(lr=1e-4),
              metrics=['acc'])

from keras.preprocessing.image import ImageDataGenerator

# Rescale pixel values from [0, 255] to [0, 1]
train_datagen = ImageDataGenerator(rescale=1./255)
test_datagen = ImageDataGenerator(rescale=1./255)

train_generator = train_datagen.flow_from_directory(
    train_dir,               # target directory
    target_size=(150, 150),  # resize all images to 150x150
    batch_size=20,
    class_mode='binary')     # binary labels, matching binary_crossentropy

validation_generator = test_datagen.flow_from_directory(
    validation_dir,
    target_size=(150, 150),
    batch_size=20,
    class_mode='binary')

history = model.fit_generator(
    train_generator,      # Python generator
    steps_per_epoch=100,  # 100 batches of 20 = 2000 training images per epoch
    epochs=30,
    validation_data=validation_generator,
    validation_steps=50)  # 50 batches of 20 = 1000 validation images

model.save('cat-dog-small-1.h5')  # save the model

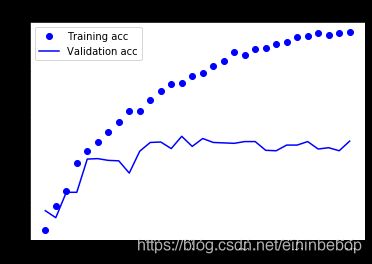

import matplotlib.pyplot as plt

%matplotlib inline

loss = history.history['loss']

val_loss = history.history['val_loss']

acc = history.history['acc']

val_acc = history.history['val_acc']

epochs = range(1,len(acc)+1)

plt.plot(epochs,acc,'bo',label = 'Training acc')

plt.plot(epochs,val_acc,'b',label = 'Validation acc')

plt.title('Training and validation accuracy')

plt.legend()  # show the legend

plt.figure()

plt.plot(epochs,loss,'bo',label = 'Training loss')

plt.plot(epochs,val_loss,'b',label = 'Validation loss')

plt.title("Training and validation loss")

plt.legend()

plt.show()

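datagen = ImageDataGenerator(
    rotation_range=40,       # range (in degrees) for random rotations
    width_shift_range=0.2,   # horizontal shift range, as a fraction of total width
    height_shift_range=0.2,  # vertical shift range, as a fraction of total height
    shear_range=0.2,         # angle range for random shear transformations
    zoom_range=0.2,          # range for random zoom
    horizontal_flip=True,    # randomly flip half of the images horizontally
    fill_mode='nearest'      # how to fill newly created pixels
)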

from keras.preprocessing import image  # image preprocessing utilities

fnames = [os.path.join(train_cats_dir, fname) for fname in os.listdir(train_cats_dir)]
img_path = fnames[3]  # pick one image to augment
img = image.load_img(img_path, target_size=(150, 150))  # read the image and resize it
x = image.img_to_array(img)    # NumPy array of shape (150, 150, 3)
x = x.reshape((1,) + x.shape)  # add a batch dimension: (1, 150, 150, 3)

i = 0
# datagen.flow yields randomly transformed batches forever, so the loop needs an explicit stop condition
for batch in datagen.flow(x, batch_size=1):
    plt.figure(i)
    imgplot = plt.imshow(image.array_to_img(batch[0]))
    i += 1
    if i % 4 == 0:
        break
plt.show()

model = models.Sequential()

model.add(layers.Conv2D(32,(3,3),activation='relu',input_shape=(150,150,3)))

model.add(layers.MaxPool2D((2,2)))

model.add(layers.Conv2D(64,(3,3),activation='relu'))

model.add(layers.MaxPool2D((2,2)))

model.add(layers.Conv2D(128,(3,3),activation='relu',))

model.add(layers.MaxPool2D((2,2)))

model.add(layers.Conv2D(128,(3,3),activation='relu',))

model.add(layers.MaxPool2D((2,2)))

model.add(layers.Flatten())

model.add(layers.Dropout(0.5))  # Dropout layer

model.add(layers.Dense(512,activation='relu'))

model.add(layers.Dense(1,activation='sigmoid'))

model.compile(loss='binary_crossentropy',

optimizer=optimizers.RMSprop(lr = 1e-4),

metrics=['acc'])

train_datagen = ImageDataGenerator(

rescale=1./255,

rotation_range=40,

width_shift_range=0.2,

height_shift_range=0.2,

shear_range=0.2,

zoom_range=0.2,

horizontal_flip=True

)

test_datagen = ImageDataGenerator(rescale=1./255)  # validation data must not be augmented

train_generator = train_datagen.flow_from_directory(

train_dir,

target_size=(150, 150),

batch_size=32,

class_mode='binary'

)

validation_generator = test_datagen.flow_from_directory(

validation_dir,

target_size=(150, 150),

batch_size=32,

class_mode='binary'

)

history = model.fit_generator(

train_generator,

steps_per_epoch=100,

epochs=100,

validation_data=validation_generator,

validation_steps=50

)
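In the augmented run the batch size changes to 32 while `steps_per_epoch=100` and `validation_steps=50` stay the same, so one "epoch" no longer corresponds to one pass over the 2000 training images. The sketch below only does the arithmetic; `steps_for_one_pass` is a hypothetical helper, and whether more than one pass per epoch is intended is not stated in the original.

```python
import math


def steps_for_one_pass(num_samples, batch_size):
    """Number of batches needed to see every sample once (last batch may be partial)."""
    return math.ceil(num_samples / batch_size)


train_steps = steps_for_one_pass(2000, 32)  # training images in the small dataset
val_steps = steps_for_one_pass(1000, 32)    # validation images in the small dataset
images_requested = 100 * 32                 # what steps_per_epoch=100 asks for per epoch

print(train_steps, val_steps, images_requested)
```

With a generator that loops indefinitely, as the older Keras generators used here do, 100 steps simply cover the training set about 1.6 times per epoch with fresh random augmentations. Some newer Keras versions stop or warn when the data runs out, in which case values close to `train_steps` and `val_steps` are the safer choice.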

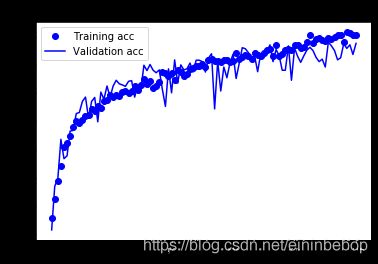

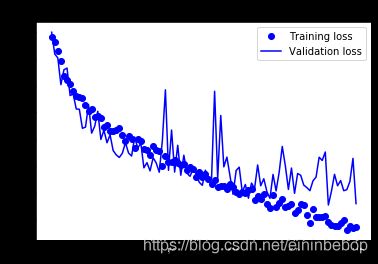

import matplotlib.pyplot as plt

%matplotlib inline

loss = history.history['loss']

val_loss = history.history['val_loss']

acc = history.history['acc']

val_acc = history.history['val_acc']

epochs = range(1,len(acc)+1)

plt.plot(epochs,acc,'bo',label = 'Training acc')

plt.plot(epochs,val_acc,'b',label = 'Validation acc')

plt.title('Training and validation accuracy')

plt.legend()  # show the legend

plt.figure()

plt.plot(epochs,loss,'bo',label = 'Training loss')

plt.plot(epochs,val_loss,'b',label = 'Validation loss')

plt.title("Training and validation loss")

plt.legend()

plt.show()

VGG19 network model

# Cat vs. dog recognition with transfer learning

import scipy.misc

import scipy.io as scio

import tensorflow as tf

import os

import numpy as np

import sys

def get_files(file_dir):
    cats = []
    label_cats = []
    dogs = []
    label_dogs = []
    for file in os.listdir(file_dir):
        name = file.split(sep='.')
        if 'cat' in name[0]:
            cats.append(os.path.join(file_dir, file))  # portable, unlike a hard-coded "\\"
            label_cats.append(0)
        elif 'dog' in name[0]:
            dogs.append(os.path.join(file_dir, file))
            label_dogs.append(1)
    image_list = np.hstack((cats, dogs))
    label_list = np.hstack((label_cats, label_dogs))
    # print('There are %d cats\nThere are %d dogs' %(len(cats), len(dogs)))
    # Stack the paths and labels into one array `temp` so that they are shuffled together,
    # then split them apart again
    temp = np.array([image_list, label_list])
    temp = temp.transpose()
    # Shuffle the rows
    np.random.shuffle(temp)
    # Column 0 holds the image paths, column 1 the labels
    image_list = list(temp[:, 0])
    label_list = list(temp[:, 1])
    # `temp` is a string array, so convert the labels back to int
    label_list = [int(i) for i in label_list]
    return image_list, label_list

# Test for get_files
# imgs , label = get_files('/Users/yangyibo/GitWork/pythonLean/AI/猫狗识别/testImg/')
# for i in imgs:
#     print("img:",i)
# for i in label:
#     print('label:',i)
# End of test for get_files
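The point of the `temp` array in `get_files` is that paths and labels must be shuffled with the same permutation. A minimal alternative sketch using only the standard library is shown here; `shuffle_pairs` and the file names are hypothetical, and this is not what the original code does. It keeps the labels as integers throughout, so no string-to-int conversion is needed afterwards.

```python
import random


def shuffle_pairs(paths, labels, seed=None):
    """Shuffle paths and labels with one shared permutation."""
    pairs = list(zip(paths, labels))
    random.Random(seed).shuffle(pairs)  # local RNG, leaves the global state alone
    shuffled_paths = [p for p, _ in pairs]
    shuffled_labels = [l for _, l in pairs]
    return shuffled_paths, shuffled_labels


paths = ['cat.0.jpg', 'cat.1.jpg', 'dog.0.jpg', 'dog.1.jpg']
labels = [0, 0, 1, 1]
new_paths, new_labels = shuffle_pairs(paths, labels, seed=0)
for p, l in zip(new_paths, new_labels):
    print(p, l)
```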

# image_W, image_H: target image size; batch_size: number of images read per batch;
# capacity: maximum number of elements held in the queue
def get_batch(image, label, image_W, image_H, batch_size, capacity):
    # Convert the inputs to types TensorFlow can work with
    image = tf.cast(image, tf.string)
    label = tf.cast(label, tf.int32)
    # Put the image paths and labels into an input queue
    input_queue = tf.train.slice_input_producer([image, label])
    label = input_queue[1]
    # Read the raw file contents
    image_contents = tf.read_file(input_queue[0])
    # Decode the JPEG; channels=3 gives an RGB color image (grayscale would be 1)
    image = tf.image.decode_jpeg(image_contents, channels=3)
    # Center-crop or pad the image to the target size (the function takes height first, then width)
    image = tf.image.resize_image_with_crop_or_pad(image, image_H, image_W)
    # Standardize each image: subtract its mean and divide by its standard deviation
    image = tf.image.per_image_standardization(image)
    # Build batches. num_threads: number of reader threads, set it according to your machine;
    # capacity: maximum number of images in the queue. tf.train.shuffle_batch would shuffle as well.
    image_batch, label_batch = tf.train.batch([image, label], batch_size=batch_size, num_threads=64, capacity=capacity)
    # Give label_batch an explicit shape
    label_batch = tf.reshape(label_batch, [batch_size])
    # Cast the images to float32
    image_batch = tf.cast(image_batch, tf.float32)
    return image_batch, label_batch
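To make the standardization step in `get_batch` concrete, here is a plain-Python sketch of what `tf.image.per_image_standardization` computes as documented by TensorFlow: `(x - mean) / adjusted_stddev`, where `adjusted_stddev = max(stddev, 1/sqrt(N))` guards against division by zero on uniform images. The function below works on a flat list of pixel values and is an illustration only, not the TensorFlow implementation.

```python
import math


def per_image_standardize(pixels):
    """Standardize a flat list of pixel values to zero mean and (about) unit variance."""
    n = len(pixels)
    mean = sum(pixels) / n
    variance = sum((p - mean) ** 2 for p in pixels) / n
    # Lower bound on the divisor so a constant image does not divide by zero
    adjusted_std = max(math.sqrt(variance), 1.0 / math.sqrt(n))
    return [(p - mean) / adjusted_std for p in pixels]


out = per_image_standardize([0.0, 50.0, 100.0, 150.0])
flat = per_image_standardize([7.0] * 4)  # constant image
print(out)
print(flat)
```

Note that the divisor is the standard deviation, not the variance.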

def _conv_layer(input, weights, bias):
    # The pretrained weights are wrapped in tf.constant, so they are not trained
    conv = tf.nn.conv2d(input, tf.constant(weights), strides=[1, 1, 1, 1], padding="SAME")
    return tf.nn.bias_add(conv, bias)

def _pool_layer(input):
    return tf.nn.max_pool(input, ksize=(1, 2, 2, 1), strides=(1, 2, 2, 1), padding="SAME")

def net(data_path, input_image):
    layers = ('conv1_1', 'relu1_1', 'conv1_2', 'relu1_2', 'pool1',
              'conv2_1', 'relu2_1', 'conv2_2', 'relu2_2', 'pool2',
              'conv3_1', 'relu3_1', 'conv3_2', 'relu3_2', 'conv3_3', 'relu3_3', 'conv3_4', 'relu3_4', 'pool3',
              'conv4_1', 'relu4_1', 'conv4_2', 'relu4_2', 'conv4_3', 'relu4_3', 'conv4_4', 'relu4_4', 'pool4',
              'conv5_1', 'relu5_1', 'conv5_2', 'relu5_2', 'conv5_3', 'relu5_3', 'conv5_4', 'relu5_4'
              )
    data = scio.loadmat(data_path)
    mean = data['normalization'][0][0][0]
    mean_pixel = np.mean(mean, axis=(0, 1))
    weights = data['layers'][0]
    net = {}
    current = input_image
    for i, name in enumerate(layers):
        kind = name[:4]
        if kind == 'conv':
            kernels, bias = weights[i][0][0][0][0]
            # Swap the first two kernel axes to match the layout tf.nn.conv2d expects
            kernels = np.transpose(kernels, [1, 0, 2, 3])
            bias = bias.reshape(-1)
            current = _conv_layer(current, kernels, bias)
        elif kind == 'relu':
            current = tf.nn.relu(current)
        elif kind == "pool":
            current = _pool_layer(current)
        net[name] = current
    assert len(net) == len(layers)
    return net, mean_pixel, layers

VGG_PATH = "D:\\imagenet-vgg-verydeep-19.mat"
train_dir = 'E:\\BaiduNetdiskDownload\\Dogs vs Cats Redux Kernels Edition\\aaa'  # My dir--20170727-csq

# Get the image paths and labels
train, train_label = get_files(train_dir)
# Build batches
train_batch, train_label_batch = get_batch(train, train_label, 224, 224, 32, 256)
# Run the batch through the pretrained VGG19 layers
nets, mean_pixel, all_layers = net(VGG_PATH, train_batch)

# New fully connected layers on top of relu5_4; only these are trained
with tf.variable_scope("dense1"):
    image = tf.reshape(nets["relu5_4"], [32, -1])
    weights = tf.Variable(tf.random_normal(shape=[14*14*512, 1024], stddev=0.1))
    bias = tf.Variable(tf.zeros(shape=[1024]) + 0.1)
    dense1 = tf.nn.tanh(tf.matmul(image, weights) + bias)

with tf.variable_scope("out"):
    weights = tf.Variable(tf.random_normal(shape=[1024, 2], stddev=0.1))
    bias = tf.Variable(tf.zeros(shape=[2]) + 0.1)
    out = tf.matmul(dense1, weights) + bias

loss = tf.reduce_mean(tf.nn.sparse_softmax_cross_entropy_with_logits(logits=out, labels=train_label_batch))
op = tf.train.AdamOptimizer(0.0001).minimize(loss)
correct = tf.nn.in_top_k(out, train_label_batch, 1)
correct = tf.cast(correct, tf.float16)
accuracy = tf.reduce_mean(correct)
init = tf.global_variables_initializer()

with tf.Session() as sess:
    sess.run(init)
    coord = tf.train.Coordinator()
    threads = tf.train.start_queue_runners(sess=sess, coord=coord)
    try:
        for step in np.arange(100):
            if coord.should_stop():
                print("Finished")
                sys.exit(0)
            _, tra_loss, tra_acc = sess.run([op, loss, accuracy])
            if step % 1 == 0:  # log every step
                print("step", step, "loss", tra_loss, "acc", tra_acc)
    except tf.errors.OutOfRangeError:
        print('Done training -- epoch limit reached')
    finally:
        coord.request_stop()
    coord.join(threads)
    # No explicit sess.close() needed: the with block closes the session
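The `[14*14*512, 1024]` weight shape of the `dense1` layer follows from the network geometry: the convolutions use `"SAME"` padding with stride 1 and keep the spatial size, while each of the four `"SAME"`-padded 2x2 poolings before `relu5_4` halves it (rounding up). The sketch below just redoes that arithmetic; `same_pool_out` is a name introduced for the illustration.

```python
import math


def same_pool_out(size, stride=2):
    # With "SAME" padding the output size is ceil(input / stride)
    return math.ceil(size / stride)


size = 224
for _ in range(4):           # pool1 .. pool4 come before relu5_4
    size = same_pool_out(size)

flat_features = size * size * 512        # relu5_4 has 512 channels
dense1_weights = flat_features * 1024    # weight matrix of the first dense layer

print(size, flat_features, dense1_weights)
```

The single dense matrix therefore holds roughly 100 million values, which is worth keeping in mind for memory use; feeding a different input size would require changing the hard-coded `14*14*512`.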

PyTorch

Data preprocessing:

import numpy as np # linear algebra

import pandas as pd # data processing, CSV file I/O (e.g. pd.read_csv)

import os

import torch

import torch.nn as nn

import cv2

import matplotlib.pyplot as plt

import torchvision

from torch.utils.data import Dataset, DataLoader, ConcatDataset

from torchvision import transforms,models

from torch.optim.lr_scheduler import *

import copy

import random

import tqdm

from PIL import Image

import torch.nn.functional as F

%matplotlib inline

BATCH_SIZE = 20

EPOCHS = 10

DEVICE = torch.device("cuda" if torch.cuda.is_available() else "cpu")

cPath = os.getcwd()

train_dir = cPath + '/data/train'

test_dir = cPath + '/data/test'

train_files = os.listdir(train_dir)

test_files = os.listdir(test_dir)

class CatDogDataset(Dataset):
    def __init__(self, file_list, dir, mode='train', transform = None):
        self.file_list = file_list
        self.dir = dir
        self.mode= mode
        self.transform = transform
        if self.mode == 'train':
            # One label for the whole dataset, taken from the first file name,
            # so cats and dogs must be wrapped in separate CatDogDataset objects
            if 'dog' in self.file_list[0]:
                self.label = 1
            else:
                self.label = 0

    def __len__(self):
        return len(self.file_list)

    def __getitem__(self, idx):
        img = Image.open(os.path.join(self.dir, self.file_list[idx]))
        if self.transform:
            img = self.transform(img)
        if self.mode == 'train':
            img = img.numpy()
            return img.astype('float32'), self.label
        else:
            img = img.numpy()
            return img.astype('float32'), self.file_list[idx]

train_transform = transforms.Compose([
    transforms.Resize((256, 256)),      # first resize the image to 256x256
    transforms.RandomCrop((224, 224)),  # then take a random 224x224 crop
    transforms.RandomHorizontalFlip(),  # random horizontal flip, i.e. mirror the image left to right
    transforms.ToTensor(),
    transforms.Normalize((0.485, 0.456, 0.406), (0.229, 0.224, 0.225))  # normalize with the ImageNet channel means and standard deviations
])

cat_files = [tf for tf in train_files if 'cat' in tf]

dog_files = [tf for tf in train_files if 'dog' in tf]

cats = CatDogDataset(cat_files, train_dir, transform = train_transform)

dogs = CatDogDataset(dog_files, train_dir, transform = train_transform)

train_set = ConcatDataset([cats, dogs])

train_loader = DataLoader(train_set, batch_size = BATCH_SIZE, shuffle=True, num_workers=0)

test_transform = transforms.Compose([

transforms.Resize((224, 224)),

transforms.ToTensor(),

transforms.Normalize((0.485, 0.456, 0.406), (0.229, 0.224, 0.225))

])

test_set = CatDogDataset(test_files, test_dir, mode='test', transform = test_transform)

test_loader = DataLoader(test_set, batch_size = BATCH_SIZE, shuffle=False, num_workers=0)

samples, labels = next(iter(train_loader))
plt.figure(figsize=(16,24))
grid_imgs = torchvision.utils.make_grid(samples[:BATCH_SIZE])
np_grid_imgs = grid_imgs.numpy()
# make_grid returns a (channels, height, width) tensor; matplotlib expects (height, width, channels), so transpose before showing it.
plt.imshow(np.transpose(np_grid_imgs, (1,2,0)))
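Because the batch has already been passed through `transforms.Normalize`, its values are no longer in [0, 1], and `plt.imshow` clips whatever falls outside that range, so the preview can look washed out or too dark. The sketch below shows the per-channel arithmetic on a single RGB pixel, `(x - mean) / std` and its inverse; the `normalize` and `denormalize` helpers are hypothetical and operate on plain tuples rather than tensors.

```python
MEAN = (0.485, 0.456, 0.406)  # ImageNet channel means used above
STD = (0.229, 0.224, 0.225)   # ImageNet channel standard deviations used above


def normalize(pixel):
    """Apply (x - mean) / std to each channel of one RGB pixel in [0, 1]."""
    return tuple((v - m) / s for v, m, s in zip(pixel, MEAN, STD))


def denormalize(pixel):
    """Invert normalize(): x * std + mean per channel."""
    return tuple(v * s + m for v, m, s in zip(pixel, MEAN, STD))


white = normalize((1.0, 1.0, 1.0))
black = normalize((0.0, 0.0, 0.0))
restored = denormalize(white)
print(white, black, restored)
```

Applying the same inverse to the grid array before `plt.imshow` would restore the original colors for display.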

Network configuration:

class MineNet(nn.Module):
    def __init__(self,num_classes=2):
        super().__init__()
        self.features=nn.Sequential(
            nn.Conv2d(3,64,kernel_size=11,stride=4,padding=2),    # (224+2*2-11)/4+1=55
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3,stride=2),                 # (55-3)/2+1=27
            nn.Conv2d(64,128,kernel_size=5,stride=1,padding=2),   # (27+2*2-5)/1+1=27
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3,stride=2),                 # (27-3)/2+1=13
            nn.Conv2d(128,256,kernel_size=3,stride=1,padding=1),  # (13+1*2-3)/1+1=13
            nn.ReLU(inplace=True),
            nn.Conv2d(256,128,kernel_size=3,stride=1,padding=1),  # (13+1*2-3)/1+1=13
            nn.ReLU(inplace=True),
            nn.Conv2d(128,128,kernel_size=3,stride=1,padding=1),  # (13+1*2-3)/1+1=13
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3,stride=2),                 # (13-3)/2+1=6
        )  # 6*6*128=4608
        self.avgpool=nn.AdaptiveAvgPool2d((6,6))
        self.classifier=nn.Sequential(
            nn.Dropout(),
            nn.Linear(128*6*6,2048),
            nn.ReLU(inplace=True),
            nn.Dropout(),
            nn.Linear(2048,512),
            nn.ReLU(inplace=True),
            nn.Linear(512,num_classes),
        )
        # log-softmax over the class dimension
        self.logsoftmax = nn.LogSoftmax(dim=1)

    def forward(self,x):
        x=self.features(x)
        x=self.avgpool(x)
        x=x.view(x.size(0),-1)
        x=self.classifier(x)
        x=self.logsoftmax(x)
        return x
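The size comments in `MineNet` all come from one formula, `out = floor((in + 2*padding - kernel) / stride) + 1`. As a quick check, the sketch below walks a 224x224 input through the same sequence of convolution and pooling settings; `out_size` and the `LAYERS` table are written for this illustration and simply mirror the hyperparameters in `self.features`.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


# (kind, kernel, stride, padding) for each conv/pool layer in MineNet.features
LAYERS = [
    ('conv', 11, 4, 2),
    ('pool', 3, 2, 0),
    ('conv', 5, 1, 2),
    ('pool', 3, 2, 0),
    ('conv', 3, 1, 1),
    ('conv', 3, 1, 1),
    ('conv', 3, 1, 1),
    ('pool', 3, 2, 0),
]

size = 224
sizes = []
for kind, kernel, stride, padding in LAYERS:
    size = out_size(size, kernel, stride, padding)
    sizes.append(size)

flat = 128 * size * size  # 128 channels after the last convolution
print(sizes, flat)
```

The result, 4608, matches the `128*6*6` input width of the first `nn.Linear` layer.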

model = MineNet().to(DEVICE)  # keep the model on the same device as the batches
# model = MyConvNet().to(DEVICE)
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.9, weight_decay=5e-4)  # training settings
scheduler = StepLR(optimizer, step_size=5)
criterion = nn.CrossEntropyLoss()
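`MineNet.forward` already ends in `LogSoftmax`, and `nn.CrossEntropyLoss` applies log-softmax again internally. This is redundant but harmless, because log-softmax is idempotent: its output already has a log-sum-exp of zero, so a second application changes nothing. The following plain-Python sketch illustrates this with hand-written `log_softmax` and `cross_entropy` helpers (stand-ins written for this example, not the PyTorch functions).

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax of a list of scores."""
    m = max(logits)
    lse = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - lse for v in logits]


def cross_entropy(logits, target):
    """Cross-entropy for one sample: negative log-probability of the target class."""
    return -log_softmax(logits)[target]


logits = [2.0, -1.0]
loss_raw = cross_entropy(logits, 0)                  # loss on raw scores
loss_double = cross_entropy(log_softmax(logits), 0)  # loss on log-softmax output
print(loss_raw, loss_double)
```

Returning raw scores from `forward`, or pairing the log-softmax output with `nn.NLLLoss`, would express the same computation without the duplicate step.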

def refreshdataloader():
    cat_files = [tf for tf in train_files if 'cat' in tf]
    dog_files = [tf for tf in train_files if 'dog' in tf]
    val_cat_files = []
    val_dog_files = []
    # Move 1250 randomly chosen cats and 1250 dogs into the validation set.
    # Note: the split is redrawn on every call, so an image used for validation
    # in one epoch may have been used for training in an earlier epoch.
    for i in range(0,1250):
        r = random.randint(0,len(cat_files)-1)
        val_cat_files.append(cat_files[r])
        val_dog_files.append(dog_files[r])
        cat_files.remove(cat_files[r])
        dog_files.remove(dog_files[r])

    cats = CatDogDataset(cat_files, train_dir, transform = train_transform)
    dogs = CatDogDataset(dog_files, train_dir, transform = train_transform)
    train_set = ConcatDataset([cats, dogs])
    train_loader = DataLoader(train_set, batch_size = BATCH_SIZE, shuffle=True, num_workers=1)

    val_cats = CatDogDataset(val_cat_files, train_dir, transform = test_transform)
    val_dogs = CatDogDataset(val_dog_files, train_dir, transform = test_transform)
    val_set = ConcatDataset([val_cats, val_dogs])
    val_loader = DataLoader(val_set, batch_size = BATCH_SIZE, shuffle=True, num_workers=1)
    return train_loader,val_loader
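Since `refreshdataloader` redraws the validation images each time it is called, the reported validation accuracy can include images the model has already trained on. If a clean hold-out set is wanted, one option is to draw the split once with a fixed seed and reuse it for every epoch. The sketch below is one such approach under that assumption, not the author's method; `split_once` and the file names are hypothetical.

```python
import random


def split_once(files, val_count, seed=0):
    """Return (train_files, val_files) with no overlap, reproducible for a given seed."""
    rng = random.Random(seed)
    val = set(rng.sample(files, val_count))
    train = [f for f in files if f not in val]  # keeps the original order
    return train, sorted(val)


cat_files = ['cat.{}.jpg'.format(i) for i in range(10)]
train_cats, val_cats = split_once(cat_files, val_count=3, seed=42)
again_train, again_val = split_once(cat_files, val_count=3, seed=42)
print(train_cats, val_cats)
```

The resulting lists could be passed to `CatDogDataset` exactly as in `refreshdataloader`, with the loaders built once before the epoch loop.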

def train(model, device, train_loader, optimizer, epoch):
    model.train()
    train_loss = 0.0
    train_acc = 0.0
    percent = 10  # print progress every 10 batches
    for batch_idx, (sample, target) in enumerate(train_loader):
        sample, target = sample.to(device), target.to(device)
        optimizer.zero_grad()
        output = model(sample)
        loss = criterion(output, target)
        loss.backward()
        optimizer.step()
        loss = loss.item()
        train_loss += loss
        pred = output.max(1, keepdim = True)[1]  # index of the highest score = predicted class
        train_acc += pred.eq(target.view_as(pred)).sum().item()
        if (batch_idx+1)%percent == 0:
            print('train epoch: {} [{}/{} ({:.0f}%)]\tloss: {:.6f}\t'.format(
                epoch, (batch_idx+1) * len(sample), len(train_loader.dataset),
                100. * batch_idx / len(train_loader), loss))
    # Approximate per-sample loss: sum of batch means times batch size, divided by dataset size
    train_loss *= BATCH_SIZE
    train_loss /= len(train_loader.dataset)
    train_acc = train_acc/len(train_loader.dataset)
    print('\ntrain epoch: {}\tloss: {:.6f}\taccuracy:{:.4f}% '.format(epoch,train_loss,100.*train_acc))
    scheduler.step()  # advance the learning-rate schedule once per epoch
    return train_loss,train_acc

def val(model, device, val_loader,epoch):
    model.eval()
    val_loss =0.0
    correct = 0
    for sample, target in val_loader:
        with torch.no_grad():
            sample,target = sample.to(device),target.to(device)
            output = model(sample)
            val_loss += criterion(output, target).item()
            pred = output.max(1, keepdim = True)[1]
            correct += pred.eq(target.view_as(pred)).sum().item()
    val_loss *= BATCH_SIZE
    val_loss /= len(val_loader.dataset)
    val_acc= correct / len(val_loader.dataset)
    print("\nval set: epoch{} average loss: {:.4f}, accuracy: {}/{} ({:.4f}%) \n"
          .format(epoch, val_loss, correct, len(val_loader.dataset),100.* val_acc))
    # Note: accuracy is returned as a percentage here, while train() returns a fraction
    return val_loss,100.*val_acc

def test(model, device, test_loader,epoch):
    model.eval()
    filename_list = []
    pred_list = []
    for sample, filename in test_loader:
        with torch.no_grad():
            sample = sample.to(device)
            output = model(sample)
            pred = torch.argmax(output, dim=1)
            filename_list += [n[:-4] for n in filename]  # strip the '.jpg' extension
            pred_list += [p.item() for p in pred]
    print("\ntest epoch: {}\n".format(epoch))
    submission = pd.DataFrame({"id":filename_list, "label":pred_list})
    submission.to_csv('preds_' + str(epoch) + '.csv', index=False)

train_losses = []
train_acces = []
val_losses = []
val_acces = []

for epoch in range(1, EPOCHS + 1):
    train_loader,val_loader = refreshdataloader()
    tr_loss,tr_acc = train(model, DEVICE, train_loader, optimizer, epoch)
    train_losses.append(tr_loss)
    train_acces.append(tr_acc)
    vl,va = val(model, DEVICE, val_loader,epoch)
    val_losses.append(vl)
    val_acces.append(va)
    filename_pth = 'catdog_mineresnet_' + str(epoch) + '.pth'
    torch.save(model.state_dict(), filename_pth)

# test() requires an epoch argument (used in the output file name); pass the last epoch
test(model,DEVICE,test_loader,epoch)

ResNet18:

class Net(nn.Module):
    def __init__(self, model):
        super(Net, self).__init__()
        # Keep every ResNet layer except the final fully connected layer
        self.resnet_layer = nn.Sequential(*list(model.children())[:-1])
        # New 2-class output layer; ResNet18 produces 512 features after pooling
        self.Linear_layer = nn.Linear(512, 2)

    def forward(self, x):
        x = self.resnet_layer(x)
        x = x.view(x.size(0), -1)
        x = self.Linear_layer(x)
        return x

from torchvision.models.resnet import resnet18

resnet = resnet18(pretrained=True)

model = Net(resnet)

model = model.to(DEVICE)

optimizer = torch.optim.SGD(model.parameters(), lr=0.001, momentum=0.9, weight_decay=5e-4) # training settings

scheduler = StepLR(optimizer, step_size=3)

criterion = nn.CrossEntropyLoss()
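`StepLR(optimizer, step_size=3)` is called without `gamma`, so PyTorch's default of 0.1 applies: the learning rate is multiplied by 0.1 after every 3 calls to `scheduler.step()`. The sketch below reproduces that schedule in plain Python for the 10 epochs used here; `step_lr` is a stand-in written for this example, with the epoch counted from 0.

```python
def step_lr(base_lr, epoch, step_size, gamma=0.1):
    """Learning rate in effect during `epoch` (0-based) under a step schedule."""
    return base_lr * gamma ** (epoch // step_size)


schedule = [step_lr(0.001, epoch, step_size=3) for epoch in range(10)]
for epoch, lr in enumerate(schedule):
    print(epoch, lr)
```

By the last epoch the rate has dropped by three orders of magnitude, so most of the fine-tuning effectively happens in the first few epochs.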

Replace the classifier of PyTorch's VGG16 model with one that outputs 2 classes. The training and validation procedures stay the same.

from torchvision.models.vgg import vgg16

model = vgg16(pretrained=True)
# Freeze all pretrained parameters
for param in model.parameters():
    param.requires_grad = False

# Replace the classifier. The new layers are created with requires_grad=True,
# so the whole classifier is trainable while the convolutional features stay frozen.
model.classifier = nn.Sequential(nn.Linear(25088, 4096),
                                 nn.ReLU(),
                                 nn.Dropout(p=0.5),
                                 nn.Linear(4096, 4096),
                                 nn.ReLU(),
                                 nn.Dropout(p=0.5),
                                 nn.Linear(4096, 2))

# The original loop re-enabled gradients only for classifier parameter index 6, but the
# classifier has just six parameter tensors (indices 0-5), so it never matched; it is omitted here.

model = model.to(DEVICE)
optimizer = torch.optim.SGD(model.parameters(), lr=0.001, momentum=0.9, weight_decay=5e-4) # training settings
scheduler = StepLR(optimizer, step_size=3)
criterion = nn.CrossEntropyLoss()
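The `25088` in the first `nn.Linear` is the flattened VGG16 feature map, 512 channels of 7x7, and the replacement classifier is where all trainable parameters live once the convolutional layers are frozen. The sketch below counts them in plain Python; `linear_params` is a helper introduced for the illustration.

```python
def linear_params(in_features, out_features):
    """Weights plus biases of one fully connected layer."""
    return in_features * out_features + out_features


in_features = 512 * 7 * 7  # channels * height * width of the VGG16 feature map
layer_sizes = [(in_features, 4096), (4096, 4096), (4096, 2)]
trainable = sum(linear_params(i, o) for i, o in layer_sizes)

print(in_features, trainable)
```

Even with the convolutional layers frozen, the classifier alone has well over 100 million trainable parameters, far more than the whole ResNet18 variant above, which is worth considering when choosing between the two.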