PyTorch in Practice 3: Classifying the MNIST Dataset with GoogLeNet

Contents

1. The GoogLeNet Network

2. Hands-on: Classifying the MNIST Dataset

1. The GoogLeNet Network

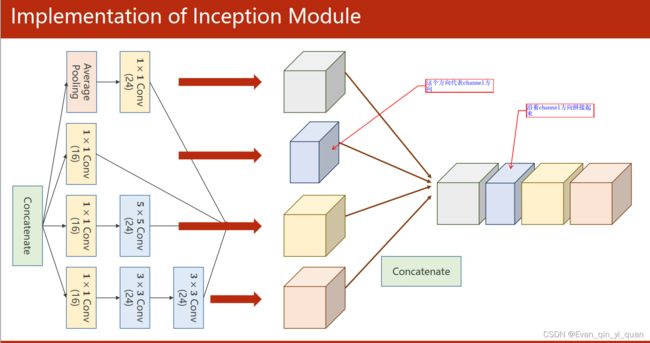

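The input is sent through four parallel branches. Each branch applies a different operation, and the branch outputs are then concatenated along the channel dimension.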
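To make the concatenation step concrete, here is a minimal sketch in plain Python (no PyTorch required) of how shapes combine when feature maps are joined along the channel dimension. The helper name `concat_channels_shape` is hypothetical. The channel counts 16, 24, 24, 24 are the ones used by the `InceptionA` module later in this post, and the 12x12 spatial size is just an example.

```python
def concat_channels_shape(shapes):
    """Return the (C, H, W) shape after concatenating feature maps along channels.

    Hypothetical helper for illustration: channel counts add up, while the
    height and width must be identical across all branches.
    """
    _, height, width = shapes[0]
    for _, h, w in shapes:
        if (h, w) != (height, width):
            raise ValueError("all branches must share the same height and width")
    total_channels = sum(c for c, _, _ in shapes)
    return (total_channels, height, width)


# Four branch outputs, each given as (channels, height, width)
branch_shapes = [(16, 12, 12), (24, 12, 12), (24, 12, 12), (24, 12, 12)]
print(concat_channels_shape(branch_shapes))  # (88, 12, 12)
```

Only the channel count changes. This is why each branch has to preserve the spatial size of its input.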

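2. Hands-on: Classifying the MNIST Dataset

The code builds on the convolutional neural network from the previous post. Only the model definition needs to be replaced with the GoogLeNet-style network; the rest of the code stays the same. The replacement model is as follows.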

# GoogLeNet卷积神经网络模型

class InceptionA(nn.Module): #import torch.nn 就可以直接写(nn.Module)

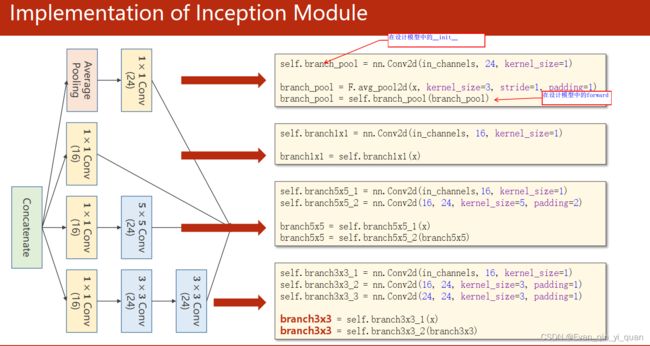

def __init__(self, in_channels):

super(InceptionA, self).__init__()

self.branch1x1 = nn.Conv2d(in_channels, 16, kernel_size=1)

self.branch5x5_1 = nn.Conv2d(in_channels,16, kernel_size=1)

self.branch5x5_2 = nn.Conv2d(16, 24, kernel_size=5, padding=2)

self.branch3x3_1 = nn.Conv2d(in_channels, 16, kernel_size=1)

self.branch3x3_2 = nn.Conv2d(16, 24, kernel_size=3, padding=1)

self.branch3x3_3 = nn.Conv2d(24, 24, kernel_size=3, padding=1)

self.branch_pool = nn.Conv2d(in_channels, 24, kernel_size=1)

def forward(self, x):

branch1x1 = self.branch1x1(x)

branch5x5 = self.branch5x5_1(x)

branch5x5 = self.branch5x5_2(branch5x5)

branch3x3 = self.branch3x3_1(x)

branch3x3 = self.branch3x3_2(branch3x3)

branch3x3 = self.branch3x3_3(branch3x3)

branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)

branch_pool = self.branch_pool(branch_pool)

outputs = [branch1x1, branch5x5, branch3x3, branch_pool] #

return torch.cat(outputs, dim=1) #输出维度 = 24+24+24+16=88,按channel方向拼接起来
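Concatenating along the channel dimension only works if every branch keeps the same height and width as its input. One way to check this is the standard output-size formula for convolution and pooling layers, out = floor((in + 2 * padding - kernel) / stride) + 1. The sketch below applies it to the kernel and padding settings used in `InceptionA`. `conv_out_size` is a hypothetical helper written for this illustration, not a PyTorch function, and it assumes a dilation of 1.

```python
def conv_out_size(size, kernel_size, stride=1, padding=0):
    """Output height/width of a convolution or pooling layer (dilation 1)."""
    return (size + 2 * padding - kernel_size) // stride + 1


size = 12  # example input height/width
layers = {
    "1x1 conv": dict(kernel_size=1),
    "5x5 conv, padding=2": dict(kernel_size=5, padding=2),
    "3x3 conv, padding=1": dict(kernel_size=3, padding=1),
    "3x3 avg pool, stride=1, padding=1": dict(kernel_size=3, stride=1, padding=1),
}
results = {name: conv_out_size(size, **kwargs) for name, kwargs in layers.items()}
for name, out in results.items():
    print(name, "->", out)  # every layer keeps the size at 12
```

With these settings each branch preserves the spatial size, so the four outputs differ only in channel count and can be concatenated.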

    class Net(nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.conv1 = nn.Conv2d(1, 10, kernel_size=5)
            self.conv2 = nn.Conv2d(88, 20, kernel_size=5)  # 88 input channels: the output of incep1
            self.incep1 = InceptionA(in_channels=10)
            self.incep2 = InceptionA(in_channels=20)
            self.mp = nn.MaxPool2d(2)
            self.fc = nn.Linear(1408, 10)  # 1408 = 88 * 4 * 4

        def forward(self, x):  # x: (batch_size, channels, height, width)
            in_size = x.size(0)  # x.size(0) is the batch size
            x = F.relu(self.mp(self.conv1(x)))  # 1*28*28 -> 10*24*24 -> 10*12*12
            x = self.incep1(x)  # output: 88*12*12
            x = F.relu(self.mp(self.conv2(x)))  # 88*12*12 -> 20*8*8 -> 20*4*4
            x = self.incep2(x)  # output: 88*4*4 = 1408 values per sample
            # Flatten (channels, h, w) into one vector per sample: (batch_size, channels*h*w)
            x = x.view(in_size, -1)
            x = self.fc(x)
            return x
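The input size of 1408 for the final `nn.Linear` layer can be derived by tracing the tensor shape through `Net.forward`. The following is a minimal sketch in plain Python that repeats this arithmetic for a 1x28x28 MNIST image. The helper names `conv_out_size` and `trace_net_shapes` are hypothetical, and the code only tracks shapes; it does not run the network.

```python
def conv_out_size(size, kernel_size, stride=1, padding=0):
    """Output height/width of a convolution or pooling layer (dilation 1)."""
    return (size + 2 * padding - kernel_size) // stride + 1


# Channels produced by InceptionA: 1x1 branch + 5x5 branch + 3x3 branch + pool branch
INCEPTION_OUT_CHANNELS = 16 + 24 + 24 + 24


def trace_net_shapes(size=28):
    """Return a list of (stage, channels, height_or_width) for a square input."""
    stages = [("input", 1, size)]
    size = conv_out_size(size, 5)            # conv1: 5x5, no padding
    stages.append(("conv1", 10, size))
    size = conv_out_size(size, 2, stride=2)  # MaxPool2d(2): kernel 2, stride 2
    stages.append(("pool1", 10, size))
    stages.append(("incep1", INCEPTION_OUT_CHANNELS, size))  # spatial size unchanged
    size = conv_out_size(size, 5)            # conv2: 5x5, no padding
    stages.append(("conv2", 20, size))
    size = conv_out_size(size, 2, stride=2)
    stages.append(("pool2", 20, size))
    stages.append(("incep2", INCEPTION_OUT_CHANNELS, size))
    return stages


stages = trace_net_shapes()
for name, channels, side in stages:
    print(f"{name}: {channels} x {side} x {side}")

_, channels, side = stages[-1]
flat_features = channels * side * side
print("flattened features:", flat_features)  # 1408
```

If the input resolution or a kernel size changes, the same trace gives the new value to pass to `nn.Linear`.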

    model = Net()

Results:

[1, 300] loss: 0.842

[1, 600] loss: 0.214

[1, 900] loss: 0.157

Accuracy on test set: 96 %

[2, 300] loss: 0.128

[2, 600] loss: 0.106

[2, 900] loss: 0.089

Accuracy on test set: 97 %

I trained for only 2 epochs, so the accuracy gain here is limited. With more epochs of training, the accuracy rises to 98%.