深度学习(五)——ZFNet+Pytorch实现

简介

AlexNet的提出使得大型卷积网络开始变得流行起来,但是人们对于CNN网络究竟为什么能表现这么好,以及怎么样能变得更好尚不清楚,因此为了解决上述两个问题,ZFNet提出了一种可视化技术,用于理解网络中间的特征层和最后的分类器层,并且找到改进神经网络的结构的方法。ZFNet是Matthew D.Zeiler 和 Rob Fergus 在2013年撰写的论文Visualizing and Understanding Convolutional Networks中提出的,是当年ILSVRC的冠军。ZFNet使用反卷积(deconv)和可视化特征图来达到可视化AlexNet的目的,并指出不足,最后修改网络结构,提升分类结果。

原理

反卷积网络结构

论文使用反卷积网络(deconvnet)进行可视化。反卷积网络可以看成是卷积网络的逆过程,但它不具有学习的能力,只是用于探测卷积网络。

反卷积网络依附于网络中的每一层,不断地将特征图映射回输入图并可视化。过程中将需要检测的激活图送入反卷积网络,而其余激活图都设置为0。进入反卷积网络后,经过1.unpool;2.rectify;3.filter;不断生成新的激活图,直到映射回原图。大致流程如下:

- unpool

首先说明,AlexNet中使用的max pooling是不可逆的,因为最大池化丢失了一部分图像信息。但是,如果我们记录了最大池化过程中最大值所在的位置,就可以近似地反池化。

为了记录池化过程中最大值的位置,论文中使用了一种开关(switch)结构,如上图所示。需要说明的一点是,当输入图像确定时,最大池化过程中最大值的位置时固定的,所以开关设置是特定的。 - rectification

AlexNet使用非线性的ReLU作为激活函数,从而修正特征图,使其始终为正。“反激活”的过程,仍使用ReLU进行映射。 - filtering

反卷积的过程使用了一个转置卷积,顾名思义即卷积网络中卷积矩阵的转置(具体查阅一文搞懂反卷积,转置卷积),直接作用在修正后的反池化图上。

卷积网络可视化

在训练结束后,我们在测试集上运用反卷积网络可视化激活特征图。

- 第二层主要相应图像的角点、边缘和颜色。

- 第三层具有更复杂的不变形,主要捕获相似的纹理。

- 第四层提取具有类别性的内容,例如狗脸、鸟腿等。

- 第五层提取具有重要意义的整个对象,例如键盘、狗等。

- 训练时的特征演变(evolution)

图中每一行代表同一张图片在不同epoch时反卷积的结果(论文中选取1,2,5,10,20,30,40,64epoch)。结果表明:较低层的特征收敛更快,在几个epoch之后就会收敛、固定;较高层特征收敛更慢,在40-50epochs之后在完全收敛。 - 特征不变性

论文分别对5张图像进行了三种处理:(从上到下分别是)水平平移、尺寸缩放、旋转图像。第二列的图像表示卷积网络第一层中原始图和特征图向量间的欧式距离。第三列是第七层的欧氏距离。第四列则代表处理后归属正确标签的概率。

实验表明,在前期(layer1),微小的转变(transformation)会导致一个明显的变化。而后期(layer7),水平平移和尺寸缩放带来的改变逐渐稳定,近似呈线性。旋转处理仍有较大变化。这说明CNN具有平移、缩放不变性,而不具有旋转不变性。 - AlexNet存在的问题

AlexNet中第一层使用11*11,步长为4的卷积核。然而在可视化时发现,第一层提取的信息多为高、低频,而中频的信息很少提取出。同时在可视化第二层是会发现由于步长过大引起的混叠伪像(aliasing artifact,参考这篇文章)。所以论文采用更小的卷积核(7*7)和更短的步长(2)。

下面是ZFNet的网络结构。

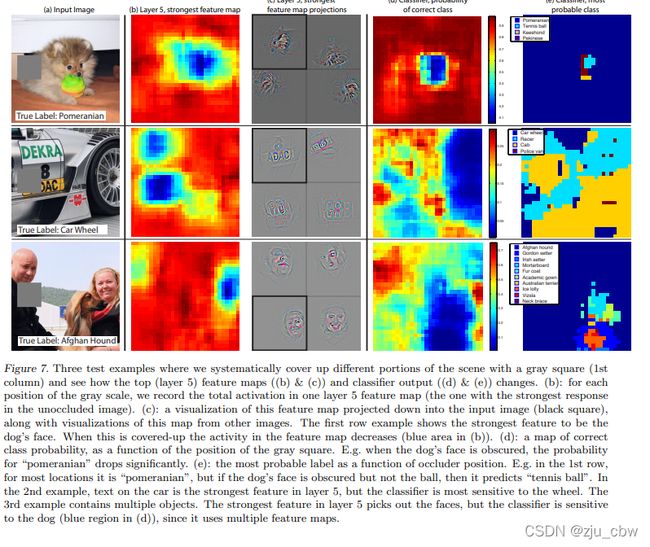

遮挡实验

可以发现,遮挡到目标物体就很难识别出来,遮挡背景并不会有太大影响,可见网络确实是根据物体判断的。

可以看出,狗的眼睛和鼻子对于狗的识别有很强的相关性。

消融实验

通过移除不同层,或者调整每层特征图个数,来观察对识别准确率产生的影响。

可以发现只移除最后两层全连接层或者只移除最后的两层卷积层,并不会对结果产生特别大的影响,但是同时移除掉,就会使误差产生巨大的上升,可见总体的深度对于获得好的效果是重要的。还发现,增加中间卷积层的大小确实可以降低错误,但是导致的扩大的全连接层会导致过拟合。

泛化实验

通过在ImageNet数据集上预训练,再在Caltech与PASCAL VOC 2012数据集上训练最后的softmax层。

从上面可以看出ZFNet在其它数据集上效果也是不错的,即网络确实是学到了一般的特征,在Caltech-256只需要不到十张图片即可超过之前最强的算法。在PASCAL 2012效果不是最好的,可能的原因是,PASCAL数据集多是多物体图片,而ImageNet数据集多是单物体图片,所以本质上是不同的。如果使用的损失函数是多标签的,可能可以改善这个结果。

Pytorch实现

从http://download.tensorflow.org/example_images/flower_photos.tgz下载数据集

执行下面代码,将数据集划分为训练集与验证集。

split_data.py

import os

from shutil import copy

import random

def mkfile(file):

if not os.path.exists(file):

os.makedirs(file)

file = 'flower_data/flower_photos'

flower_class = [cla for cla in os.listdir(file) if ".txt" not in cla]

mkfile('flower_data/train')

for cla in flower_class:

mkfile('flower_data/train/'+cla)

mkfile('flower_data/val')

for cla in flower_class:

mkfile('flower_data/val/'+cla)

split_rate = 0.1

for cla in flower_class:

cla_path = file + '/' + cla + '/'

images = os.listdir(cla_path)

num = len(images)

eval_index = random.sample(images, k=int(num*split_rate))

for index, image in enumerate(images):

if image in eval_index:

image_path = cla_path + image

new_path = 'flower_data/val/' + cla

copy(image_path, new_path)

else:

image_path = cla_path + image

new_path = 'flower_data/train/' + cla

copy(image_path, new_path)

print("\r[{}] processing [{}/{}]".format(cla, index+1, num), end="") # processing bar

print()

print("processing done!")

model.py

import torch.nn as nn

import torch

class ZFNet(nn.Module):

def __init__(self, num_classes=1000, init_weights=False):

super(ZFNet, self).__init__()

self.features = nn.Sequential( # 打包

nn.Conv2d(3, 48, kernel_size=7, stride=2, padding=1), # input[3, 224, 224] output[48, 110, 110] 自动舍去小数点后

nn.ReLU(inplace=True), # inplace 可以载入更大模型

nn.MaxPool2d(kernel_size=3, stride=2, padding=1), # output[48, 55, 55] kernel_num为原论文一半

nn.Conv2d(48, 128, kernel_size=5, stride=2), # output[128, 26, 26]

nn.ReLU(inplace=True),

nn.MaxPool2d(kernel_size=3, stride=2, padding=1), # output[128, 13, 13]

nn.Conv2d(128, 192, kernel_size=3, padding=1), # output[192, 13, 13]

nn.ReLU(inplace=True),

nn.Conv2d(192, 192, kernel_size=3, padding=1), # output[192, 13, 13]

nn.ReLU(inplace=True),

nn.Conv2d(192, 128, kernel_size=3, padding=1), # output[128, 13, 13]

nn.ReLU(inplace=True),

nn.MaxPool2d(kernel_size=3, stride=2), # output[128, 6, 6]

)

self.classifier = nn.Sequential(

nn.Dropout(p=0.5),

# 全连接

nn.Linear(128 * 6 * 6, 2048),

nn.ReLU(inplace=True),

nn.Dropout(p=0.5),

nn.Linear(2048, 2048),

nn.ReLU(inplace=True),

nn.Linear(2048, num_classes),

)

if init_weights:

self._initialize_weights()

def forward(self, x):

x = self.features(x)

x = torch.flatten(x, start_dim=1) # 展平 或者view()

x = self.classifier(x)

return x

def _initialize_weights(self):

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu') # 何教授方法

if m.bias is not None:

nn.init.constant_(m.bias, 0)

elif isinstance(m, nn.Linear):

nn.init.normal_(m.weight, 0, 0.01) # 正态分布赋值

nn.init.constant_(m.bias, 0)

train.py

import torch

import torch.nn as nn

from torchvision import transforms, datasets, utils

import matplotlib.pyplot as plt

import numpy as np

import torch.optim as optim

from model import ZFNet

import os

import json

import time

# device : GPU or CPU

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

print(device)

# 数据转换

data_transform = {

"train": transforms.Compose([transforms.RandomResizedCrop(224),

transforms.RandomHorizontalFlip(),

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))]),

"val": transforms.Compose([transforms.Resize((224, 224)), # cannot 224, must (224, 224)

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])}

# data_root = os.path.abspath(os.path.join(os.getcwd(), "../..")) # get data root path

data_root = os.getcwd()

image_path = data_root + "/flower_data/" # flower data set path

train_dataset = datasets.ImageFolder(root=image_path + "/train",

transform=data_transform["train"])

train_num = len(train_dataset)

# {'daisy':0, 'dandelion':1, 'roses':2, 'sunflower':3, 'tulips':4}

flower_list = train_dataset.class_to_idx

cla_dict = dict((val, key) for key, val in flower_list.items())

# write dict into json file

json_str = json.dumps(cla_dict, indent=4)

with open('class_indices.json', 'w') as json_file:

json_file.write(json_str)

batch_size = 32

train_loader = torch.utils.data.DataLoader(train_dataset,

batch_size=batch_size, shuffle=True,

num_workers=0)

validate_dataset = datasets.ImageFolder(root=image_path + "/val",

transform=data_transform["val"])

val_num = len(validate_dataset)

validate_loader = torch.utils.data.DataLoader(validate_dataset,

batch_size=batch_size, shuffle=True,

num_workers=0)

test_data_iter = iter(validate_loader)

test_image, test_label = test_data_iter.next()

# print(test_image[0].size(),type(test_image[0]))

# print(test_label[0],test_label[0].item(),type(test_label[0]))

# 显示图像,之前需把validate_loader中batch_size改为4

# def imshow(img):

# img = img / 2 + 0.5 # unnormalize

# npimg = img.numpy()

# plt.imshow(np.transpose(npimg, (1, 2, 0)))

# plt.show()

#

# print(' '.join('%5s' % cla_dict[test_label[j].item()] for j in range(4)))

# imshow(utils.make_grid(test_image))

net = ZFNet(num_classes=5, init_weights=True)

net.to(device)

# 损失函数:这里用交叉熵

loss_function = nn.CrossEntropyLoss()

# 优化器 这里用Adam

optimizer = optim.Adam(net.parameters(), lr=0.0002)

# 训练参数保存路径

save_path = './AlexNet.pth'

# 训练过程中最高准确率

best_acc = 0.0

# 开始进行训练和测试,训练一轮,测试一轮

for epoch in range(10):

# train

net.train() # 训练过程中,使用之前定义网络中的dropout

running_loss = 0.0

t1 = time.perf_counter()

for step, data in enumerate(train_loader, start=0):

images, labels = data

optimizer.zero_grad()

outputs = net(images.to(device))

loss = loss_function(outputs, labels.to(device))

loss.backward()

optimizer.step()

# print statistics

running_loss += loss.item()

# print train process

rate = (step + 1) / len(train_loader)

a = "*" * int(rate * 50)

b = "." * int((1 - rate) * 50)

print("\rtrain loss: {:^3.0f}%[{}->{}]{:.3f}".format(int(rate * 100), a, b, loss), end="")

print()

print(time.perf_counter()-t1)

# validate

net.eval() # 测试过程中不需要dropout,使用所有的神经元

acc = 0.0 # accumulate accurate number / epoch

with torch.no_grad():

for val_data in validate_loader:

val_images, val_labels = val_data

outputs = net(val_images.to(device))

predict_y = torch.max(outputs, dim=1)[1]

acc += (predict_y == val_labels.to(device)).sum().item()

val_accurate = acc / val_num

if val_accurate > best_acc:

best_acc = val_accurate

torch.save(net.state_dict(), save_path)

print('[epoch %d] train_loss: %.3f test_accuracy: %.3f' %

(epoch + 1, running_loss / step, val_accurate))

print('Finished Training')

import torch

from model import ZFNet

from PIL import Image

from torchvision import transforms

import matplotlib.pyplot as plt

import json

import os

os.environ["KMP_DUPLICATE_LIB_OK"]="TRUE"

data_transform = transforms.Compose(

[transforms.Resize((224, 224)),

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

# load image

img = Image.open("./sunflower.jpg") # 验证太阳花

# img = Image.open("./roses.jpg") # 验证玫瑰花

plt.imshow(img)

# [N, C, H, W]

img = data_transform(img)

# expand batch dimension

img = torch.unsqueeze(img, dim=0)

# read class_indict

try:

json_file = open('./class_indices.json', 'r')

class_indict = json.load(json_file)

except Exception as e:

print(e)

exit(-1)

# create model

model = ZFNet(num_classes=5)

# load model weights

model_weight_path = "./AlexNet.pth"

model.load_state_dict(torch.load(model_weight_path))

model.eval()

with torch.no_grad():

# predict class

output = torch.squeeze(model(img))

predict = torch.softmax(output, dim=0)

predict_cla = torch.argmax(predict).numpy()

print(class_indict[str(predict_cla)], predict[predict_cla].item())

plt.show()