(6) Deep Learning Notes | PyTorch Official Demo (LeNet-5), Part 1

1. Preface

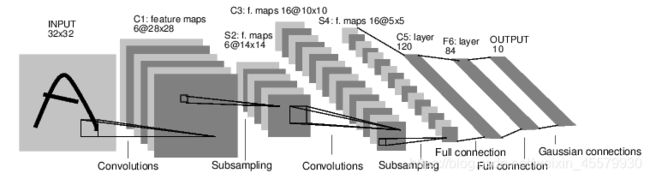

I wrote an earlier post about LeNet-5. If you are not familiar with LeNet-5, see https://blog.csdn.net/weixin_45579930/article/details/112277024

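2. Defining the Neural Network

- First we define a class that inherits from the parent class nn.Module

- The class defines two methods:

- The initializer (__init__), which declares the layers that make up the network

- The forward function, which defines the forward pass; calling the model on an input runs forward

The network definition: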

import torch
import torch.nn as nn
import torch.nn.functional as F


class LeNet(nn.Module):
    def __init__(self):
        super(LeNet, self).__init__()
        self.conv1 = nn.Conv2d(3, 16, 5)
        self.pool1 = nn.MaxPool2d(2, 2)
        self.conv2 = nn.Conv2d(16, 32, 5)
        self.pool2 = nn.MaxPool2d(2, 2)
        self.fc1 = nn.Linear(32 * 5 * 5, 120)
        self.fc2 = nn.Linear(120, 84)
        self.fc3 = nn.Linear(84, 10)

    def forward(self, x):
        x = F.relu(self.conv1(x))    # input(3, 32, 32) output(16, 28, 28)
        x = self.pool1(x)            # output(16, 14, 14)
        x = F.relu(self.conv2(x))    # output(32, 10, 10)
        x = self.pool2(x)            # output(32, 5, 5)
        x = x.view(-1, 32 * 5 * 5)   # output(32*5*5)
        x = F.relu(self.fc1(x))      # output(120)
        x = F.relu(self.fc2(x))      # output(84)
        x = self.fc3(x)              # output(10)
        return x


input1 = torch.rand([32, 3, 32, 32])
model = LeNet()
print(model)
output = model(input1)

Output:

LeNet(
  (conv1): Conv2d(3, 16, kernel_size=(5, 5), stride=(1, 1))
  (pool1): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  (conv2): Conv2d(16, 32, kernel_size=(5, 5), stride=(1, 1))
  (pool2): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  (fc1): Linear(in_features=800, out_features=120, bias=True)
  (fc2): Linear(in_features=120, out_features=84, bias=True)
  (fc3): Linear(in_features=84, out_features=10, bias=True)
)
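To see how the shape annotations in forward come about, the following is a minimal sketch that pushes a random batch through the same sequence of layers and records the shape after each one. Note the assumption: it uses an nn.Sequential stand-in with nn.ReLU and nn.Flatten modules instead of the LeNet class, purely so the layers can be iterated; it is an illustration, not the demo's own code.

```python
import torch
import torch.nn as nn

# Assumption: a Sequential stand-in for the LeNet class, used only so that
# each layer's output shape can be recorded in order.
layers = nn.Sequential(
    nn.Conv2d(3, 16, 5), nn.ReLU(),
    nn.MaxPool2d(2, 2),
    nn.Conv2d(16, 32, 5), nn.ReLU(),
    nn.MaxPool2d(2, 2),
    nn.Flatten(),                      # plays the role of x.view(-1, 32 * 5 * 5)
    nn.Linear(32 * 5 * 5, 120), nn.ReLU(),
    nn.Linear(120, 84), nn.ReLU(),
    nn.Linear(84, 10),
)

x = torch.rand(32, 3, 32, 32)          # batch of 32 RGB images, 32x32 each
shapes = []
with torch.no_grad():                  # no gradients needed for a shape check
    for layer in layers:
        x = layer(x)
        shapes.append((layer.__class__.__name__, tuple(x.shape)))

for name, shape in shapes:
    print(f"{name:<10} {shape}")
```

The first dimension stays 32 throughout because it is the batch size; only the per-sample shape changes from layer to layer.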

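Let's go through the code above in detail:

2.1 Defining the convolutional layer nn.Conv2d

- 1. First, Command+click on nn.Conv2d in the IDE to jump to its definition:

torch.nn.Conv2d(in_channels, out_channels, kernel_size, stride=1, padding=0, dilation=1, groups=1, bias=True, padding_mode='zeros')

- 2. The parameters mean the following:

- in_channels: the depth (number of channels) of the input feature map. A color image normally has three channels, RGB (red, green, and blue). The inputs here are RGB color images, so in_channels is 3.

- out_channels: the number of convolution kernels. Using n kernels produces an output feature map of depth n.

- kernel_size: the size of the convolution kernel. It can be an int, e.g. 3 means height = width = 3, or a tuple, e.g. (3, 5) means height = 3 and width = 5.

- stride: the step size, 1 by default. With a stride of (1, 1) the kernel moves one pixel at a time both vertically and horizontally.

- padding: zero padding added around the borders of the input. The default is 0, meaning no padding.

- bias: whether to add a learnable bias term (an offset, like the intercept of a line) to the output. The default is True.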
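The effect of these parameters is easiest to see from output shapes. The sketch below builds a few nn.Conv2d layers on a dummy 32×32 RGB input; the variable names and the padding=2 and stride=2 variants are chosen for illustration and do not appear in the demo.

```python
import torch
import torch.nn as nn

x = torch.rand(1, 3, 32, 32)  # one RGB image: (batch, channels, height, width)

# int kernel_size: 5x5 kernel, default stride=1 and padding=0 (same as conv1)
conv_int = nn.Conv2d(in_channels=3, out_channels=16, kernel_size=5)
# tuple kernel_size: kernel height 3, width 5
conv_tuple = nn.Conv2d(3, 16, kernel_size=(3, 5))
# padding=2 keeps a 32x32 input at 32x32 for a 5x5 kernel with stride 1
conv_padded = nn.Conv2d(3, 16, kernel_size=5, padding=2)
# stride=2 moves the kernel two pixels at a time, shrinking the output
conv_strided = nn.Conv2d(3, 16, kernel_size=5, stride=2)

print(conv_int(x).shape)      # torch.Size([1, 16, 28, 28])
print(conv_tuple(x).shape)    # torch.Size([1, 16, 30, 28])
print(conv_padded(x).shape)   # torch.Size([1, 16, 32, 32])
print(conv_strided(x).shape)  # torch.Size([1, 16, 14, 14])

# One 3x5x5 kernel per output channel, plus one bias value per output channel
print(conv_int.weight.shape)  # torch.Size([16, 3, 5, 5])
print(conv_int.bias.shape)    # torch.Size([16])
```

In every case the output depth equals out_channels, while kernel_size, stride, and padding only affect the height and width.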

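2.1.1 The nn.Conv2d code in detail

# 1. 3 is the depth (number of channels) of the input feature map
# 2. 16 is the number of convolution kernels
# 3. 5 means the kernel size is 5×5
self.conv1 = nn.Conv2d(3, 16, 5)

# 1. Apply the ReLU activation function
# 2. input(3, 32, 32) means an input of size 3×32×32
# 3. output(16, 28, 28): 16 kernels give an output depth (channels) of 16;
#    the 28 comes from N = (W - F + 2P) / S + 1 = (32 - 5 + 2×0) / 1 + 1 = 28
x = F.relu(self.conv1(x))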
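The formula N = (W - F + 2P) / S + 1 can be applied layer by layer to reproduce every spatial size in the network. A small pure-Python sketch follows; the helper name conv_output_size is hypothetical, and the floor division is an assumption that matches the default (non-ceil) rounding when the division is not exact.

```python
def conv_output_size(w: int, f: int, p: int = 0, s: int = 1) -> int:
    """Output width/height for input size w, kernel size f, padding p, stride s."""
    return (w - f + 2 * p) // s + 1


trace = [32]                                             # input height/width
trace.append(conv_output_size(trace[-1], f=5))           # conv1: 5x5, stride 1
trace.append(conv_output_size(trace[-1], f=2, s=2))      # pool1: 2x2, stride 2
trace.append(conv_output_size(trace[-1], f=5))           # conv2: 5x5, stride 1
trace.append(conv_output_size(trace[-1], f=2, s=2))      # pool2: 2x2, stride 2

print(trace)  # [32, 28, 14, 10, 5]
```

The same formula covers the pooling layers, since a 2×2 pooling window with stride 2 is just F = 2, S = 2, P = 0.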

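2.2 Defining the downsampling layer nn.MaxPool2d

- 1. First, Command+click on nn.MaxPool2d to view its definition

- 2. Because MaxPool2d inherits from _MaxPoolNd, Command+click again to view the parent class

- 3. The parameters mean the following:

- kernel_size: the size of the pooling window

- stride: the step size. The source sets self.stride = stride if (stride is not None) else kernel_size, so if no stride is given, it defaults to the pooling window size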
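The stride default described above can be checked directly. This is a minimal sketch with illustrative variable names; the 4×4 tensor is made-up data chosen so the maxima are easy to read off.

```python
import torch
import torch.nn as nn

pool_default = nn.MaxPool2d(kernel_size=2)             # stride omitted
pool_explicit = nn.MaxPool2d(kernel_size=2, stride=2)  # as written in the demo

print(pool_default.stride)  # 2, taken from kernel_size

x = torch.rand(1, 16, 28, 28)
same = torch.equal(pool_default(x), pool_explicit(x))
print(same)                   # True
print(pool_default(x).shape)  # torch.Size([1, 16, 14, 14])

# Each non-overlapping 2x2 window is replaced by its maximum value
small = torch.tensor([[[[1., 2., 5., 6.],
                        [3., 4., 7., 8.],
                        [9., 10., 13., 14.],
                        [11., 12., 15., 16.]]]])
pooled = pool_default(small)
print(pooled)  # values [[4., 8.], [12., 16.]] with shape (1, 1, 2, 2)
```

So nn.MaxPool2d(2, 2) in the demo and nn.MaxPool2d(2) behave the same; pooling halves the height and width and leaves the number of channels unchanged.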

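2.3 Defining the fully connected layer nn.Linear

Linear(in_features, out_features, bias=True)

The code in detail:

# A fully connected layer takes a one-dimensional vector as input,
# so the feature map must be flattened first (32*5*5 = 800 values)
# 120 output nodes
self.fc1 = nn.Linear(32*5*5, 120)

# 84 output nodes
self.fc2 = nn.Linear(120, 84)

# 10 outputs because the training set is CIFAR-10, which has ten classes
self.fc3 = nn.Linear(84, 10)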
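As a quick check of what in_features and out_features imply, the sketch below inspects a standalone layer with the same sizes as fc1. The variable names are illustrative.

```python
import torch
import torch.nn as nn

fc1 = nn.Linear(in_features=32 * 5 * 5, out_features=120)

# The weight matrix is stored as (out_features, in_features)
print(fc1.weight.shape)  # torch.Size([120, 800])
print(fc1.bias.shape)    # torch.Size([120])

# 800 * 120 weights + 120 biases
n_params = sum(p.numel() for p in fc1.parameters())
print(n_params)          # 96120

# A batch of 32 flattened feature vectors maps to 32 vectors of length 120
batch = torch.rand(32, 32 * 5 * 5)
out = fc1(batch)
print(out.shape)         # torch.Size([32, 120])
```

The layer only transforms the last dimension, which is why the feature map has to be flattened to length 800 before it reaches fc1.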

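2.4 Flattening the Tensor: view()

Note that after the second pooling layer, each sample is still a three-dimensional Tensor of shape (32, 5, 5). It must be flattened into a one-dimensional vector of length 32*5*5 = 800 before being passed to the fully connected layer: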

x = self.pool2(x) # output(32, 5, 5)

x = x.view(-1, 32*5*5) # output(32*5*5)

x = F.relu(self.fc1(x)) # output(120)
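The flattening step can be tried in isolation. In the sketch below a random tensor stands in for the output of pool2, and torch.flatten is shown as an alternative that is not used in the demo itself; the -1 in view tells PyTorch to infer that dimension (here the batch size) from the total number of elements.

```python
import torch

# Stand-in for the pool2 output: (batch, channels, height, width)
x = torch.rand(32, 32, 5, 5)

flat_view = x.view(-1, 32 * 5 * 5)            # -1 is inferred as the batch size
flat_alt = torch.flatten(x, start_dim=1)      # flatten everything except the batch

print(flat_view.shape)                        # torch.Size([32, 800])
identical = torch.equal(flat_view, flat_alt)
print(identical)                              # True
```

Both calls keep the batch dimension and merge the remaining three dimensions into one of length 800, which matches in_features of fc1.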