Convolutional Neural Networks

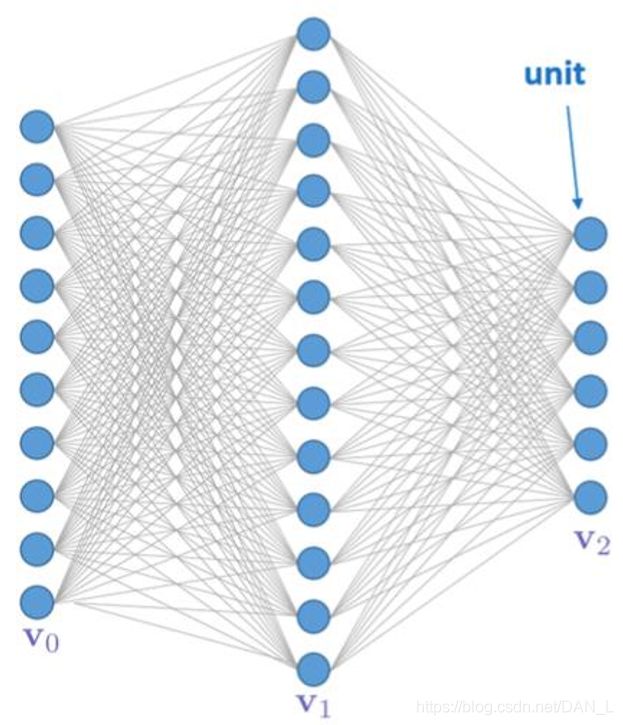

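I. Problems with Traditional Neural Networks

(1) Too many weights, which makes the computation too expensive

(2) Too many weights, which requires a large number of training samples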

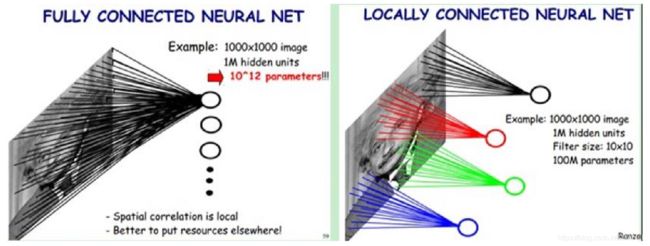

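II. Local Receptive Fields

In 1962, Hubel and Wiesel, neurophysiologists at Harvard Medical School, proposed the concept of the receptive field based on their study of cells in the cat visual cortex. In 1980, the Japanese researcher Fukushima proposed the neocognitron, which builds on the receptive field concept. It can be regarded as the first implementation of a convolutional neural network and the first application of the receptive field concept to artificial neural networks.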

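III. Convolutional Neural Networks (CNN)

A CNN uses local receptive fields and weight sharing to reduce the number of parameters the network has to train.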
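To make the saving concrete, here is a minimal sketch that counts trainable parameters for a 28×28 single-channel input. It compares a 5×5 convolution with 32 shared kernels against a fully connected layer producing the same number of outputs. The layer sizes and the helper names `conv_param_count` and `dense_param_count` are assumptions chosen for illustration.

```python
def conv_param_count(kernel_h, kernel_w, in_channels, out_channels):
    """Shared kernel weights plus one bias per kernel; independent of image size."""
    return kernel_h * kernel_w * in_channels * out_channels + out_channels


def dense_param_count(n_inputs, n_outputs):
    """One weight per input-output pair plus one bias per output."""
    return n_inputs * n_outputs + n_outputs


# Hypothetical layer: 28x28x1 input mapped to 32 feature maps of size 28x28
n_inputs = 28 * 28 * 1
n_outputs = 28 * 28 * 32

conv_params = conv_param_count(5, 5, 1, 32)
dense_params = dense_param_count(n_inputs, n_outputs)

print("convolution parameters:    ", conv_params)
print("fully connected parameters:", dense_params)
print("ratio:", dense_params // conv_params)
```

Because the same 5×5 kernel is reused at every position, the convolution's parameter count does not grow with the image, while the fully connected count grows with the product of input and output sizes.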

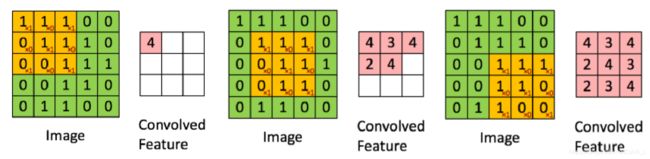

(1) Convolution
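As a rough illustration of the operation, the sketch below slides a small kernel over a 2D image and sums the element-wise products at each position. It does not flip the kernel, which is the convention deep learning libraries generally follow, and it never lets the window leave the image. The function name `conv2d_valid` and the sample values are made up for this example.

```python
def conv2d_valid(image, kernel):
    """Slide the kernel over the image with stride 1 and no padding."""
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum over the local receptive field at position (i, j)
            total = sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kernel_h)
                for dj in range(kernel_w)
            )
            row.append(total)
        output.append(row)
    return output


image = [
    [1, 2, 3, 0],
    [0, 1, 2, 3],
    [3, 0, 1, 2],
    [2, 3, 0, 1],
]
kernel = [
    [1, 0],
    [0, 1],
]

feature_map = conv2d_valid(image, kernel)
for row in feature_map:
    print(row)
```

The same four kernel weights are applied at all nine positions, which is what weight sharing means in practice.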

(2) Pooling

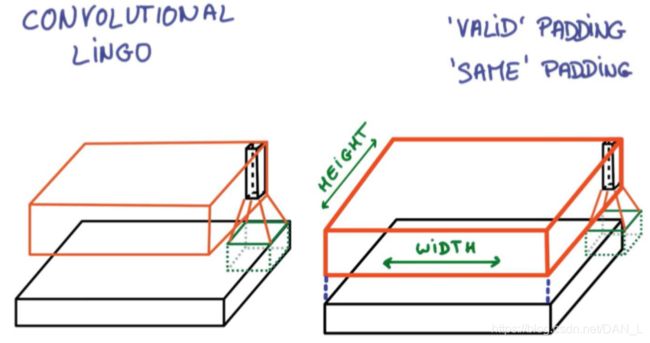
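A minimal sketch of max pooling with a 2×2 window and stride 2, assuming the input height and width are even so no padding is needed. The function name `max_pool_2x2_plain` and the sample values are hypothetical.

```python
def max_pool_2x2_plain(plane):
    """Keep the largest value in each non-overlapping 2x2 block."""
    pooled = []
    for i in range(0, len(plane), 2):
        row = []
        for j in range(0, len(plane[0]), 2):
            block = [
                plane[i][j], plane[i][j + 1],
                plane[i + 1][j], plane[i + 1][j + 1],
            ]
            row.append(max(block))
        pooled.append(row)
    return pooled


plane = [
    [1, 3, 2, 4],
    [5, 6, 7, 8],
    [3, 2, 1, 0],
    [1, 2, 3, 4],
]

pooled = max_pool_2x2_plain(plane)
for row in pooled:
    print(row)
```

Each output value summarizes four inputs, so the plane shrinks to half its height and half its width.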

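(3) Padding for convolution

1. SAME PADDING

Zeros are padded around the outside of the plane. With a stride of 1, the convolution window then produces a plane of the same size as the original.

2. VALID PADDING

The window never extends beyond the plane, so sampling produces a plane smaller than the original.

(4) Padding for pooling

1. SAME PADDING: zeros may be padded around the outside of the plane

2. VALID PADDING: the window never extends beyond the plane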
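The effect of the two modes on the output size can be sketched with a small helper. The formulas are the ones TensorFlow documents for its SAME and VALID modes, as far as I am aware. The function name `output_size` is an assumption for this example.

```python
import math


def output_size(in_size, window, stride, padding):
    """Output length along one dimension for a sliding window."""
    if padding == "SAME":
        # Padding is added as needed, so only the stride matters
        return math.ceil(in_size / stride)
    if padding == "VALID":
        # Only positions where the window fits entirely inside the plane
        return math.ceil((in_size - window + 1) / stride)
    raise ValueError("padding must be 'SAME' or 'VALID'")


# 5x5 convolution with stride 1 on a 28-wide plane
print("conv SAME: ", output_size(28, 5, 1, "SAME"))
print("conv VALID:", output_size(28, 5, 1, "VALID"))

# 2x2 pooling with stride 2 on a 7-wide plane (odd size, so the modes differ)
print("pool SAME: ", output_size(7, 2, 2, "SAME"))
print("pool VALID:", output_size(7, 2, 2, "VALID"))
```

For the 7-wide plane, SAME pads one extra column so the last window is complete, while VALID drops the column that does not fit. On an even-sized plane, 2×2 pooling with stride 2 gives the same result in both modes.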

IV. Code Implementation

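# This code targets TensorFlow 1.x (tf.placeholder, tf.Session, tensorflow.examples.tutorials)
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

# Load MNIST with one-hot labels
mnist = input_data.read_data_sets('MNIST_data', one_hot=True)

# Size of each batch
batch_size = 100

# Number of batches per epoch
n_batch = mnist.train.num_examples // batch_size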

# Initialize weights
def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)  # sample from a truncated normal distribution
    return tf.Variable(initial)

# Initialize biases
def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)

# Convolution layer: stride 1, SAME padding
def conv2d(x, w):
    return tf.nn.conv2d(x, w, strides=[1, 1, 1, 1], padding='SAME')

# Pooling layer: 2x2 window, stride 2, SAME padding
def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

# Define two placeholders
x = tf.placeholder(tf.float32, [None, 784])  # 28*28
y = tf.placeholder(tf.float32, [None, 10])

# Reshape x into a 4D tensor [batch, in_height, in_width, in_channels]
x_image = tf.reshape(x, [-1, 28, 28, 1])

# Initialize the weights and biases of the first convolution layer
w_conv1 = weight_variable([5, 5, 1, 32])  # 5*5 sampling window, 32 kernels extract features from 1 plane
b_conv1 = bias_variable([32])  # one bias per kernel

# Convolve x_image with the weights, add the bias, then apply the ReLU activation
h_conv1 = tf.nn.relu(conv2d(x_image, w_conv1) + b_conv1)
h_pool1 = max_pool_2x2(h_conv1)  # max-pooling

# Initialize the weights and biases of the second convolution layer
w_conv2 = weight_variable([5, 5, 32, 64])  # 5*5 sampling window, 64 kernels extract features from 32 planes
b_conv2 = bias_variable([64])  # one bias per kernel

# Convolve h_pool1 with the weights, add the bias, then apply the ReLU activation
h_conv2 = tf.nn.relu(conv2d(h_pool1, w_conv2) + b_conv2)
h_pool2 = max_pool_2x2(h_conv2)
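The number of values fed into the first fully connected layer follows from the shapes of the layers so far. The sketch below traces them in plain Python. It assumes SAME padding throughout, as in `conv2d` and `max_pool_2x2`, so each layer's output size is the input size divided by the stride, rounded up. The helper name `same_output_size` is hypothetical.

```python
def same_output_size(in_size, stride):
    """Output length under SAME padding: ceil(in_size / stride)."""
    return -(-in_size // stride)


size = 28      # height and width of the input image
channels = 1   # grayscale input

# (layer name, stride, output channels or None if unchanged)
layers = [
    ("conv1", 1, 32),
    ("pool1", 2, None),
    ("conv2", 1, 64),
    ("pool2", 2, None),
]

for name, stride, out_channels in layers:
    size = same_output_size(size, stride)
    if out_channels is not None:
        channels = out_channels
    print(name, (size, size, channels))

flat_size = size * size * channels
print("flattened size:", flat_size)
```

The two pooling layers halve 28 to 14 and then to 7, and the second convolution produces 64 planes, so the flattened vector has 7*7*64 = 3136 entries.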

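# Initialize the weights of the first fully connected layer
w_fcl = weight_variable([7 * 7 * 64, 1024])  # the previous layer has 7*7*64 neurons; this layer has 1024
b_fcl = bias_variable([1024])

# Flatten the output of pooling layer 2 to 1D
h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])

# Output of the first fully connected layer
h_fcl = tf.nn.relu(tf.matmul(h_pool2_flat, w_fcl) + b_fcl)

# keep_prob is the probability that a neuron's output is kept
keep_prob = tf.placeholder(tf.float32)
h_fcl_drop = tf.nn.dropout(h_fcl, keep_prob)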
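As a rough picture of what dropout does with `keep_prob`, the sketch below keeps each value with probability `keep_prob` and scales the survivors by `1 / keep_prob`, which is the behavior TensorFlow 1.x documents for `tf.nn.dropout`. The function name `dropout_plain`, the seed and the sample data are assumptions for this example.

```python
import random


def dropout_plain(values, keep_prob, rng):
    """Zero each value with probability 1 - keep_prob and rescale the rest."""
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]


rng = random.Random(0)        # fixed seed so the run is repeatable
activations = [1.0] * 10000   # hypothetical layer output

dropped = dropout_plain(activations, 0.7, rng)
kept_fraction = sum(1 for v in dropped if v != 0.0) / len(dropped)
mean_after = sum(dropped) / len(dropped)

print("fraction kept:", kept_fraction)
print("mean after dropout:", mean_after)

# With keep_prob = 1.0 nothing is dropped and nothing is rescaled
unchanged = dropout_plain(activations, 1.0, rng)
print("unchanged at keep_prob=1.0:", unchanged == activations)
```

The rescaling keeps the expected activation the same during training, which is why evaluation can simply feed `keep_prob` as 1.0.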

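# Initialize the second fully connected layer
w_fc2 = weight_variable([1024, 10])
b_fc2 = bias_variable([10])

# Compute the output: keep the raw logits for the loss and apply softmax for the prediction
logits = tf.matmul(h_fcl_drop, w_fc2) + b_fc2
prediction = tf.nn.softmax(logits)

# Cross-entropy cost function (the op applies softmax internally, so it takes the raw logits)
cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=logits))

# Optimize with AdamOptimizer
train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

# Store the per-example results in a boolean tensor
correct_prediction = tf.equal(tf.argmax(prediction, 1), tf.argmax(y, 1))

# Compute the accuracy
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))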
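`tf.nn.softmax_cross_entropy_with_logits` applies softmax internally, so its `logits` argument is meant to receive the raw scores of the last layer. The plain-Python sketch below shows what happens if probabilities that already went through softmax are passed instead. The function names and the sample scores are made up for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy_with_logits(labels, logits):
    """Cross-entropy between one-hot labels and softmax(logits)."""
    probs = softmax(logits)
    return -sum(t * math.log(p) for t, p in zip(labels, probs))


# Hypothetical 10-class example where the network is very sure of class 0
labels = [1.0] + [0.0] * 9
raw_scores = [20.0] + [0.0] * 9

loss_from_logits = cross_entropy_with_logits(labels, raw_scores)
loss_from_probs = cross_entropy_with_logits(labels, softmax(raw_scores))

print("loss when given raw logits:   ", loss_from_logits)
print("loss when given probabilities:", loss_from_probs)
```

Probabilities lie between 0 and 1, so a second softmax can never make one class dominate. With 10 classes the loss computed this way cannot fall below about 1.46, however confident the network is, which weakens the training signal.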

with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())
    for epoch in range(21):
        # Train on every batch with dropout enabled
        for batch in range(n_batch):
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)
            sess.run(train_step, feed_dict={x: batch_xs, y: batch_ys, keep_prob: 0.7})

        # Evaluate on the test set after each epoch with dropout disabled
        acc = sess.run(accuracy, feed_dict={x: mnist.test.images, y: mnist.test.labels, keep_prob: 1.0})
        print('Iter' + str(epoch) + ',Testing Accuracy =' + str(acc))

The output is:

Iter0,Testing Accuracy =0.8648

Iter1,Testing Accuracy =0.8768

Iter2,Testing Accuracy =0.9578

Iter3,Testing Accuracy =0.9766

Iter4,Testing Accuracy =0.9825

Iter5,Testing Accuracy =0.9848

Iter6,Testing Accuracy =0.9871

Iter7,Testing Accuracy =0.9874

Iter8,Testing Accuracy =0.9891

Iter9,Testing Accuracy =0.9881

Iter10,Testing Accuracy =0.9891

Iter11,Testing Accuracy =0.9896

Iter12,Testing Accuracy =0.9913

Iter13,Testing Accuracy =0.9906

Iter14,Testing Accuracy =0.9915

Iter15,Testing Accuracy =0.9898

Iter16,Testing Accuracy =0.9913

Iter17,Testing Accuracy =0.9898

Iter18,Testing Accuracy =0.9907

Iter19,Testing Accuracy =0.9924

Iter20,Testing Accuracy =0.9916