实践数据湖iceberg 第十五课 spark3安装与集成iceberg0.13 (jersey包冲突,安装完成)

系列文章目录

实践数据湖iceberg 第一课 入门

实践数据湖iceberg 第二课 iceberg基于hadoop的底层数据格式

实践数据湖iceberg 第三课 在sqlclient中,以sql方式从kafka读数据到iceberg

实践数据湖iceberg 第四课 在sqlclient中,以sql方式从kafka读数据到iceberg(升级版本到flink1.12.7)

实践数据湖iceberg 第五课 hive catalog特点

实践数据湖iceberg 第六课 从kafka写入到iceberg失败问题 解决

实践数据湖iceberg 第七课 实时写入到iceberg

实践数据湖iceberg 第八课 hive与iceberg集成

实践数据湖iceberg 第九课 合并小文件

实践数据湖iceberg 第十课 快照删除

实践数据湖iceberg 第十一课 测试分区表完整流程(造数、建表、合并、删快照)

实践数据湖iceberg 第十二课 catalog是什么

实践数据湖iceberg 第十三课 metadata比数据文件大很多倍的问题

实践数据湖iceberg 第十四课 元数据合并(解决元数据随时间增加而元数据膨胀的问题)

实践数据湖iceberg 第十五课 spark安装与集成iceberg(jersey包冲突)

实践数据湖iceberg 第十六课 通过spark3打开iceberg的认知之门

实践数据湖iceberg 第十七课 hadoop2.7,spark3 on yarn运行iceberg配置

实践数据湖iceberg 第十八课 多种客户端与iceberg交互启动命令(常用命令)

实践数据湖iceberg 第十九课 flink count iceberg,无结果问题

文章目录

- 系列文章目录

- 前言

- 1.准备安装包spark-3.2.1-bin-hadoop2.7.tgz ,解压

- 2.配置spark-defalult.conf

- 3. /etc/profile配置HADOOP_CONF_DIR

- 4.启动测试 报错

- 5. 解决方法

- 5.1 把版本改为一致

-

- 5.2 降低 spark版本

- 5.3 增加个参数

- 6. 集成iceberg

-

- 6.1 安装官网集成iceberg

- 6.2 测试spark iceberg

- 6.3 官网靠不住,来一个作者版(跑通)

- 总结

前言

根据iceberg官网提示,目前iceberg0.13版本,spark对iceberg的支持是最好的,了解iceberg的最好方法是,通过spark

虽然确定公司的架构是flink+iceberg。最快速的学习路径应该是flink+iceberg. 但有时通过曲折迂回的路径才是最快的。

有时为了快就要走一些弯路。

开始安装spark

1.准备安装包spark-3.2.1-bin-hadoop2.7.tgz ,解压

解压

配置spark-defalult.conf

2.配置spark-defalult.conf

spark.master yarn

spark.eventLog.enabled true

spark.eventLog.dir hdfs:///spark-history

3. /etc/profile配置HADOOP_CONF_DIR

vim /etc/profile

export HADOOP_CONF_DIR=/opt/module/hadoop/etc/hadoop

4.启动测试 报错

[root@hadoop101 spark]# bin/spark-shell

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

22/02/13 15:05:53 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

java.lang.NoClassDefFoundError: com/sun/jersey/api/client/config/ClientConfig

at org.apache.hadoop.yarn.client.api.TimelineClient.createTimelineClient(TimelineClient.java:55)

at org.apache.hadoop.yarn.client.api.impl.YarnClientImpl.createTimelineClient(YarnClientImpl.java:181)

at org.apache.hadoop.yarn.client.api.impl.YarnClientImpl.serviceInit(YarnClientImpl.java:168)

at org.apache.hadoop.service.AbstractService.init(AbstractService.java:163)

at org.apache.spark.deploy.yarn.Client.submitApplication(Client.scala:175)

at org.apache.spark.scheduler.cluster.YarnClientSchedulerBackend.start(YarnClientSchedulerBackend.scala:62)

at org.apache.spark.scheduler.TaskSchedulerImpl.start(TaskSchedulerImpl.scala:220)

at org.apache.spark.SparkContext.(SparkContext.scala:581)

at org.apache.spark.SparkContext$.getOrCreate(SparkContext.scala:2690)

at org.apache.spark.sql.SparkSession$Builder.$anonfun$getOrCreate$2(SparkSession.scala:949)

at scala.Option.getOrElse(Option.scala:189)

at org.apache.spark.sql.SparkSession$Builder.getOrCreate(SparkSession.scala:943)

at org.apache.spark.repl.Main$.createSparkSession(Main.scala:106)

... 55 elided

Caused by: java.lang.ClassNotFoundException: com.sun.jersey.api.client.config.ClientConfig

at java.net.URLClassLoader.findClass(URLClassLoader.java:382)

at java.lang.ClassLoader.loadClass(ClassLoader.java:424)

at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:349)

at java.lang.ClassLoader.loadClass(ClassLoader.java:357)

... 68 more

:14: error: not found: value spark

import spark.implicits._

^

:14: error: not found: value spark

import spark.sql

^

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 3.2.1

/_/

Using Scala version 2.12.15 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_212)

Type in expressions to have them evaluated.

Type :help for more information.

重新解压一个包测试local模式,没问题, 排除安装包有问题

5. 解决方法

5.1 把版本改为一致

参考 https://blog.csdn.net/zhanglong_4444/article/details/106097216

增加jersey的包,安装文档提示,无效

spark3.1 和Hadoop2.7 jersey的版本不一致

hadoop2.7

[root@hadoop101 jars]# ls /opt/module/hadoop/share/hadoop/common/lib/je

jersey-core-1.9.jar jersey-json-1.9.jar jersey-server-1.9.jar jets3t-0.9.0.jar jettison-1.1.jar jetty-6.1.26.jar jetty-util-6.1.26.jar

spark3.2

[root@hadoop101 jars]# ls jersey-*

jersey-client-2.34.jar jersey-common-2.34.jar jersey-container-servlet-2.34.jar jersey-container-servlet-core-2.34.jar jersey-core-1.19.1.jar jersey-guice-1.19.jar jersey-hk2-2.34.jar jersey-server-2.34.jar

头都大了!!!

问题很简单,解决很困难

查一下社区,还是要自己编译,又要一天。。。

https://github.com/apache/spark/commit/b6f46ca29742029efea2790af7fdefbc2fcf52de

另外找个文章

https://www.jianshu.com/p/d630582c8108

5.2 降低 spark版本

为了节省一天编译包时间,重新一下一个spark2.4的包,

下面作者用的spark.2.2也有包冲突问题,看来不用降级了。

https://www.jianshu.com/p/d630582c8108

5.3 增加个参数

[root@hadoop101 conf]# spark-shell --conf spark.hadoop.yarn.timeline-service.enabled=false

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

22/02/13 16:15:01 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

22/02/13 16:15:03 WARN yarn.Client: Neither spark.yarn.jars nor spark.yarn.archive is set, falling back to uploading libraries under SPARK_HOME.

Spark context Web UI available at http://hadoop101:4040

Spark context available as 'sc' (master = yarn, app id = application_1642579431487_0048).

Spark session available as 'spark'.

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 3.2.1

/_/

Using Scala version 2.12.15 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_212)

Type in expressions to have them evaluated.

Type :help for more information.

5.1的步骤也做了 spark_home/jars 下增加了1.9的jersey, 可能不需要。把它删了,发现报其他错误,又把1.9的jersey加回去

感谢 https://www.jianshu.com/p/d630582c8108 作者

6. 集成iceberg

6.1 安装官网集成iceberg

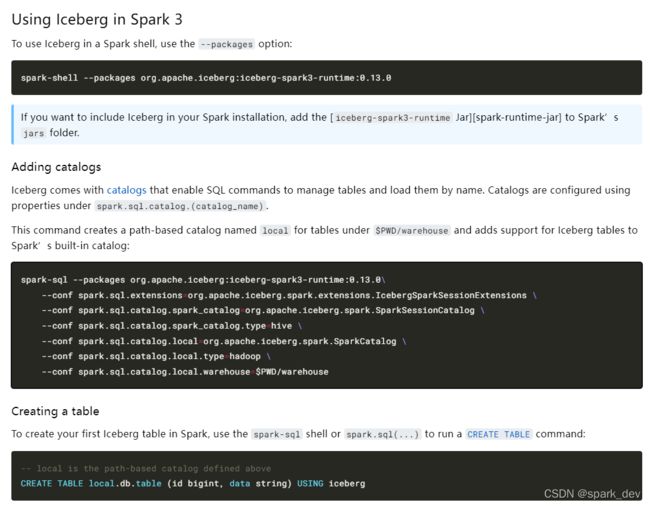

Using Iceberg in Spark 3 #

To use Iceberg in a Spark shell, use the --packages option:

spark-shell --packages org.apache.iceberg:iceberg-spark3-runtime:0.13.0

If you want to include Iceberg in your Spark installation, add the [iceberg-spark3-runtime Jar][spark-runtime-jar] to Spark’s jars folder.

上图来自https://iceberg.apache.org/docs/latest/getting-started/,spark模块。

下载包iceberg-spark-runtime-0.13.0.jar 地址 :https://repo.maven.apache.org/maven2/org/apache/iceberg/iceberg-spark-runtime/0.13.0/iceberg-spark-runtime-0.13.0.jar

放包:

[root@hadoop101 iceberg0.13]# ls

flink-sql-connector-hive-2.3.6_2.12-1.14.3.jar iceberg-flink-runtime-1.14-0.13.0.jar iceberg-hive-runtime-0.13.0.jar iceberg-mr-0.13.0.jar iceberg-spark-runtime-0.13.0.jar

iceberg-flink-runtime-1.13-0.13.0.jar iceberg-hive3-0.13.0.jar iceberg-mr-0.12.1.jar iceberg-spark3-runtime-0.13.0.jar iceberg-spark-runtime-3.2_2.12-0.13.0.jar

[root@hadoop101 iceberg0.13]# cp iceberg-spark3-runtime-0.13.0.jar /opt/module/spark/jars

6.2 测试spark iceberg

官网提供了一个local的方式,测试一下,

在另外一台机器解压一个spark包,看看

[root@hadoop102 spark-3.2.1-bin-hadoop2.7]# bin/spark-sql --conf spark.sql.extensions=org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions --conf spark.sql.catalog.spark_catalog=org.apache.iceberg.spark.SparkSessionCatalog --conf spark.sql.catalog.spark_catalog.type=hive --conf spark.sql.catalog.local=org.apache.iceberg.spark.SparkCatalog --conf spark.sql.catalog.local.type=hadoop --conf spark.sql.catalog.local.warehouse=$PWD/warehouse

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

22/02/13 16:41:34 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

22/02/13 16:41:38 WARN conf.HiveConf: HiveConf of name hive.stats.jdbc.timeout does not exist

22/02/13 16:41:38 WARN conf.HiveConf: HiveConf of name hive.stats.retries.wait does not exist

22/02/13 16:41:42 WARN metastore.ObjectStore: Version information not found in metastore. hive.metastore.schema.verification is not enabled so recording the schema version 2.3.0

22/02/13 16:41:42 WARN metastore.ObjectStore: setMetaStoreSchemaVersion called but recording version is disabled: version = 2.3.0, comment = Set by MetaStore root@10.233.65.29

22/02/13 16:41:42 WARN metastore.ObjectStore: Failed to get database default, returning NoSuchObjectException

Spark master: local[*], Application Id: local-1644741696332

spark-sql> CREATE TABLE local.db.table (id bigint, data string) USING iceberg;

22/02/13 16:41:49 ERROR thriftserver.SparkSQLDriver: Failed in [CREATE TABLE local.db.table (id bigint, data string) USING iceberg]

java.lang.IncompatibleClassChangeError: class org.apache.spark.sql.catalyst.plans.logical.DynamicFileFilterWithCardinalityCheck has interface org.apache.spark.sql.catalyst.plans.logical.BinaryNode as super class

at java.lang.ClassLoader.defineClass1(Native Method)

at java.lang.ClassLoader.defineClass(ClassLoader.java:763)

at java.security.SecureClassLoader.defineClass(SecureClassLoader.java:142)

at java.net.URLClassLoader.defineClass(URLClassLoader.java:468)

at java.net.URLClassLoader.access$100(URLClassLoader.java:74)

at java.net.URLClassLoader$1.run(URLClassLoader.java:369)

at java.net.URLClassLoader$1.run(URLClassLoader.java:363)

at java.security.AccessController.doPrivileged(Native Method)

at java.net.URLClassLoader.findClass(URLClassLoader.java:362)

at java.lang.ClassLoader.loadClass(ClassLoader.java:424)

at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:349)

at java.lang.ClassLoader.loadClass(ClassLoader.java:357)

at org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions.$anonfun$apply$8(IcebergSparkSessionExtensions.scala:50)

at org.apache.spark.sql.SparkSessionExtensions.$anonfun$buildOptimizerRules$1(SparkSessionExtensions.scala:201)

at scala.collection.TraversableLike.$anonfun$map$1(TraversableLike.scala:286)

at scala.collection.mutable.ResizableArray.foreach(ResizableArray.scala:62)

at scala.collection.mutable.ResizableArray.foreach$(ResizableArray.scala:55

show databases看看

spark-sql> show databases;

22/02/13 16:51:51 ERROR thriftserver.SparkSQLDriver: Failed in [show databases]

java.lang.IncompatibleClassChangeError: class org.apache.spark.sql.catalyst.plans.logical.DynamicFileFilterWithCardinalityCheck has interface org.apache.spark.sql.catalyst.plans.logical.BinaryNode as super class

at java.lang.ClassLoader.defineClass1(Native Method)

at java.lang.ClassLoader.defineClass(ClassLoader.java:763)

at java.security.SecureClassLoader.defineClass(SecureClassLoader.java:142)

at java.net.URLClassLoader.defineClass(URLClassLoader.java:468)

at java.net.URLClassLoader.access$100(URLClassLoader.java:74)

at java.net.URLClassLoader$1.run(URLClassLoader.java:369)

at java.net.URLClassLoader$1.run(URLClassLoader.java:363)

at java.security.AccessController.doPrivileged(Native Method)

at java.net.URLClassLoader.findClass(URLClassLoader.java:362)

at java.lang.ClassLoader.loadClass(ClassLoader.java:424)

at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:349)

at java.lang.ClassLoader.loadClass(ClassLoader.java:357)

at org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions.$anonfun$apply$8(IcebergSparkSessionExtensions.scala:50)

at org.apache.spark.sql.SparkSessionExtensions.$anonfun$buildOptimizerRules$1(SparkSessionExtensions.scala:201)

at scala.collection.TraversableLike.$anonfun$map$1(TraversableLike.scala:286)

at scala.collection.mutable.ResizableArray.foreach(ResizableArray.scala:62)

at scala.collection.mutable.ResizableArray.foreach$(ResizableArray.scala:55)

at scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:49)

at scala.collection.TraversableLike.map(TraversableLike.scala:286)

at scala.collection.TraversableLike.map$(TraversableLike.scala:279)

at scala.collection.AbstractTraversable.map(Traversable.scala:108)

at org.apache.spark.sql.SparkSessionExtensions.buildOptimizerRules(SparkSessionExtensions.scala:201)

at org.apache.spark.sql.internal.BaseSessionStateBuilder.customOperatorOptimizationRules(BaseSessionStateBuilder.scala:259)

at org.apache.spark.sql.internal.BaseSessionStateBuilder$$anon$2.extendedOperatorOptimizationRules(BaseSessionStateBuilder.scala:248)

at org.apache.spark.sql.catalyst.optimizer.Optimizer.defaultBatches(Optimizer.scala:130)

at org.apache.spark.sql.execution.SparkOptimizer.defaultBatches(SparkOptimizer.scala:42)

at org.apache.spark.sql.catalyst.optimizer.Optimizer.batches(Optimizer.scala:383)

at org.apache.spark.sql.catalyst.rules.RuleExecutor.execute(RuleExecutor.scala:200)

at org.apache.spark.sql.catalyst.rules.RuleExecutor.$anonfun$executeAndTrack$1(RuleExecutor.scala:179)

at org.apache.spark.sql.catalyst.QueryPlanningTracker$.withTracker(QueryPlanningTracker.scala:88)

at org.apache.spark.sql.catalyst.rules.RuleExecutor.executeAndTrack(RuleExecutor.scala:179)

at org.apache.spark.sql.execution.QueryExecution.$anonfun$optimizedPlan$1(QueryExecution.scala:138)

at org.apache.spark.sql.catalyst.QueryPlanningTracker.measurePhase(QueryPlanningTracker.scala:111)

at org.apache.spark.sql.execution.QueryExecution.$anonfun$executePhase$1(QueryExecution.scala:196)

at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)

at org.apache.spark.sql.execution.QueryExecution.executePhase(QueryExecution.scala:196)

at org.apache.spark.sql.execution.QueryExecution.optimizedPlan$lzycompute(QueryExecution.scala:134)

at org.apache.spark.sql.execution.QueryExecution.optimizedPlan(QueryExecution.scala:130)

at org.apache.spark.sql.execution.QueryExecution.assertOptimized(QueryExecution.scala:148)

at org.apache.spark.sql.execution.QueryExecution.$anonfun$executedPlan$1(QueryExecution.scala:166)

at org.apache.spark.sql.execution.QueryExecution.withCteMap(QueryExecution.scala:73)

at org.apache.spark.sql.execution.QueryExecution.executedPlan$lzycompute(QueryExecution.scala:163)

at org.apache.spark.sql.execution.QueryExecution.executedPlan(QueryExecution.scala:163)

at org.apache.spark.sql.execution.QueryExecution.simpleString(QueryExecution.scala:214)

at org.apache.spark.sql.execution.QueryExecution.org$apache$spark$sql$execution$QueryExecution$$explainString(QueryExecution.scala:259)

at org.apache.spark.sql.execution.QueryExecution.explainString(QueryExecution.scala:228)

at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$5(SQLExecution.scala:98)

at org.apache.spark.sql.execution.SQLExecution$.withSQLConfPropagated(SQLExecution.scala:163)

at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$1(SQLExecution.scala:90)

at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)

at org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:64)

at org.apache.spark.sql.execution.QueryExecution$$anonfun$eagerlyExecuteCommands$1.applyOrElse(QueryExecution.scala:110)

at org.apache.spark.sql.execution.QueryExecution$$anonfun$eagerlyExecuteCommands$1.applyOrElse(QueryExecution.scala:106)

at org.apache.spark.sql.catalyst.trees.TreeNode.$anonfun$transformDownWithPruning$1(TreeNode.scala:481)

at org.apache.spark.sql.catalyst.trees.CurrentOrigin$.withOrigin(TreeNode.scala:82)

at org.apache.spark.sql.catalyst.trees.TreeNode.transformDownWithPruning(TreeNode.scala:481)

at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.org$apache$spark$sql$catalyst$plans$logical$AnalysisHelper$$super$transformDownWithPruning(LogicalPlan.scala:30)

at org.apache.spark.sql.catalyst.plans.logical.AnalysisHelper.transformDownWithPruning(AnalysisHelper.scala:267)

at org.apache.spark.sql.catalyst.plans.logical.AnalysisHelper.transformDownWithPruning$(AnalysisHelper.scala:263)

at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.transformDownWithPruning(LogicalPlan.scala:30)

at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.transformDownWithPruning(LogicalPlan.scala:30)

at org.apache.spark.sql.catalyst.trees.TreeNode.transformDown(TreeNode.scala:457)

at org.apache.spark.sql.execution.QueryExecution.eagerlyExecuteCommands(QueryExecution.scala:106)

at org.apache.spark.sql.execution.QueryExecution.commandExecuted$lzycompute(QueryExecution.scala:93)

at org.apache.spark.sql.execution.QueryExecution.commandExecuted(QueryExecution.scala:91)

at org.apache.spark.sql.Dataset.(Dataset.scala:219)

at org.apache.spark.sql.Dataset$.$anonfun$ofRows$2(Dataset.scala:99)

at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)

at org.apache.spark.sql.Dataset$.ofRows(Dataset.scala:96)

at org.apache.spark.sql.SparkSession.$anonfun$sql$1(SparkSession.scala:618)

at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)

at org.apache.spark.sql.SparkSession.sql(SparkSession.scala:613)

at org.apache.spark.sql.SQLContext.sql(SQLContext.scala:651)

at org.apache.spark.sql.hive.thriftserver.SparkSQLDriver.run(SparkSQLDriver.scala:67)

at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.processCmd(SparkSQLCLIDriver.scala:384)

at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.$anonfun$processLine$1(SparkSQLCLIDriver.scala:504)

at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.$anonfun$processLine$1$adapted(SparkSQLCLIDriver.scala:498)

at scala.collection.Iterator.foreach(Iterator.scala:943)

at scala.collection.Iterator.foreach$(Iterator.scala:943)

at scala.collection.AbstractIterator.foreach(Iterator.scala:1431)

at scala.collection.IterableLike.foreach(IterableLike.scala:74)

at scala.collection.IterableLike.foreach$(IterableLike.scala:73)

at scala.collection.AbstractIterable.foreach(Iterable.scala:56)

at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.processLine(SparkSQLCLIDriver.scala:498)

at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver$.main(SparkSQLCLIDriver.scala:287)

at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.main(SparkSQLCLIDriver.scala)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)

at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:955)

at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:180)

at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:203)

at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:90)

at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1043)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:105

spark sql的包冲突,只引入了 iceberg-spark3-runtime-0.13.0.jar 包,应该是它与spark自身的包冲突

发现社区上,有很有同胞:

https://github.com/apache/iceberg/issues/3585

解决方法是,换一个spark的包,把对应的hadoop版本改为3.2。 反正是测试功能,更换为这个版本看看

6.3 官网靠不住,来一个作者版(跑通)

下载包,解压。不配置spark-default.conf, /etc/profilie配置HADOOP_HOME,HADOOP_CONF_DIR就ok

–package的参数,官网提供的不对。。。

参考社区https://github.com/apache/iceberg/issues/3585 的方法:

[root@hadoop103 spark-3.2.0-bin-hadoop3.2]# bin/spark-sql --packages org.apache.iceberg:iceberg-spark-runtime-3.2_2.12:0.13.0 --conf spark.sql.extensions=org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions --conf spark.sql.catalog.spark_catalog=org.apache.iceberg.spark.SparkSessionCatalog --conf spark.sql.catalog.spark_catalog.type=hive --conf spark.sql.catalog.local=org.apache.iceberg.spark.SparkCatalog --conf spark.sql.catalog.local.type=hadoop --conf spark.sql.catalog.local.warehouse=/tmp/iceberg/warehouse

:: loading settings :: url = jar:file:/opt/software/spark-3.2.0-bin-hadoop3.2/jars/ivy-2.5.0.jar!/org/apache/ivy/core/settings/ivysettings.xml

Ivy Default Cache set to: /root/.ivy2/cache

The jars for the packages stored in: /root/.ivy2/jars

org.apache.iceberg#iceberg-spark-runtime-3.2_2.12 added as a dependency

:: resolving dependencies :: org.apache.spark#spark-submit-parent-9b267f2d-0588-472e-a5c9-79d0280901ea;1.0

confs: [default]

found org.apache.iceberg#iceberg-spark-runtime-3.2_2.12;0.13.0 in central

downloading https://repo1.maven.org/maven2/org/apache/iceberg/iceberg-spark-runtime-3.2_2.12/0.13.0/iceberg-spark-runtime-3.2_2.12-0.13.0.jar ...

[SUCCESSFUL ] org.apache.iceberg#iceberg-spark-runtime-3.2_2.12;0.13.0!iceberg-spark-runtime-3.2_2.12.jar (3252ms)

:: resolution report :: resolve 1801ms :: artifacts dl 3254ms

:: modules in use:

org.apache.iceberg#iceberg-spark-runtime-3.2_2.12;0.13.0 from central in [default]

---------------------------------------------------------------------

| | modules || artifacts |

| conf | number| search|dwnlded|evicted|| number|dwnlded|

---------------------------------------------------------------------

| default | 1 | 1 | 1 | 0 || 1 | 1 |

---------------------------------------------------------------------

:: retrieving :: org.apache.spark#spark-submit-parent-9b267f2d-0588-472e-a5c9-79d0280901ea

confs: [default]

1 artifacts copied, 0 already retrieved (21817kB/27ms)

22/02/14 10:52:42 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

22/02/14 10:52:45 WARN conf.HiveConf: HiveConf of name hive.stats.jdbc.timeout does not exist

22/02/14 10:52:45 WARN conf.HiveConf: HiveConf of name hive.stats.retries.wait does not exist

22/02/14 10:52:48 WARN metastore.ObjectStore: Version information not found in metastore. hive.metastore.schema.verification is not enabled so recording the schema version 2.3.0

22/02/14 10:52:48 WARN metastore.ObjectStore: setMetaStoreSchemaVersion called but recording version is disabled: version = 2.3.0, comment = Set by MetaStore root@10.233.65.40

Spark master: local[*], Application Id: local-1644807164208

spark-sql> shwo tables;

Error in query:

mismatched input 'shwo' expecting {'(', 'ADD', 'ALTER', 'ANALYZE', 'CACHE', 'CLEAR', 'COMMENT', 'COMMIT', 'CREATE', 'DELETE', 'DESC', 'DESCRIBE', 'DFS', 'DROP', 'EXPLAIN', 'EXPORT', 'FROM', 'GRANT', 'IMPORT', 'INSERT', 'LIST', 'LOAD', 'LOCK', 'MAP', 'MERGE', 'MSCK', 'REDUCE', 'REFRESH', 'REPLACE', 'RESET', 'REVOKE', 'ROLLBACK', 'SELECT', 'SET', 'SHOW', 'START', 'TABLE', 'TRUNCATE', 'UNCACHE', 'UNLOCK', 'UPDATE', 'USE', 'VALUES', 'WITH'}(line 1, pos 0)

== SQL ==

shwo tables

^^^

spark-sql> show tables;

22/02/14 10:53:00 WARN metastore.ObjectStore: Failed to get database global_temp, returning NoSuchObjectException

Time taken: 1.977 seconds

spark-sql> CREATE TABLE local.db.table (id bigint, data string) USING iceberg;

Time taken: 0.76 seconds

spark-sql> INSERT INTO local.db.table VALUES (1, 'a'), (2, 'b'), (3, 'c');

Time taken: 2.534 seconds

spark-sql> select count(1) from local.db.table;

3

Time taken: 0.676 seconds, Fetched 1 row(s)

spark-sql> select count(1) as count,data from local.db.table group by data;

1 a

1 b

1 c

Time taken: 0.401 seconds, Fetched 3 row(s)

spark-sql> SELECT * FROM local.db.table.snapshots;

2022-02-14 10:54:45.113 4837332377008798145 NULL append /tmp/iceberg/warehouse/db/table/metadata/snap-4837332377008798145-1-5cd1f278-6cd8-424b-bdcc-374efd967dfe.avro {"added-data-files":"3","added-files-size":"1929","added-records":"3","changed-partition-count":"1","spark.app.id":"local-1644807164208","total-data-files":"3","total-delete-files":"0","total-equality-deletes":"0","total-files-size":"1929","total-position-deletes":"0","total-records":"3"}

Time taken: 0.164 seconds, Fetched 1 row(s)

发现数据写到了hdfs上。

总结

花了我个周日来升级cieberg,flink,spark,安装 spark,还没个好结果,心塞。。。

跑通的艰辛历程!