Graph Neural Networks (Node Classification): A Hands-On Implementation on the Cora Dataset

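About this article:

1) Reference: the official PyG (PyTorch Geometric) documentation (link).

2) I am not an expert, so corrections are welcome if you spot any mistakes.

3) I have uploaded the Jupyter notebook for this article to Baidu Netdisk (link). Extraction code: 8888.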

Table of contents

- Hands-on 1: a detailed GCN implementation

- Hands-on 2: a simple GCN implementation

- Hands-on 3: a simple GAT implementation

Hands-on 1: a detailed GCN implementation

Import the plotting libraries and define the plotting function.

from sklearn.manifold import TSNE
import matplotlib.pyplot as plt

def visualize(h, color):
    # Project the embeddings to 2-D with t-SNE, then scatter-plot them colored by class.
    z = TSNE(n_components=2).fit_transform(h.detach().cpu().numpy())
    plt.figure(figsize=(10, 10))
    plt.xticks([])
    plt.yticks([])
    plt.scatter(z[:, 0], z[:, 1], s=70, c=color, cmap="Set2")
    plt.show()

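I have not yet studied the theory behind t-SNE, so for now I simply treat it as a technique for dimensionality reduction and visualization.

Import the required libraries and load the dataset.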

from torch_geometric.transforms import NormalizeFeatures
from torch_geometric.datasets import Planetoid

dataset = Planetoid(root='/DATA/Planetoid', name='Cora', transform=NormalizeFeatures())
data = dataset[0]  # Cora contains a single graph; select it

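A brief note on the Cora dataset: the feature matrix is $N \times M$, where $N$ is the number of papers and $M$ is the feature dimension. Each dimension corresponds to one word: the value is 1 if the word appears in the paper and 0 otherwise. (The NormalizeFeatures transform used above then rescales each row to sum to 1, so the loaded values are no longer literally 0 and 1.) The adjacency matrix is $N \times N$: if one paper cites another, there is an edge between them.

Another note: with root='/DATA/Planetoid', this code creates a folder named DATA at the root of the drive (the C: drive in my case) and stores the dataset inside it. If you like to keep your drive tidy, change root to a path of your choice.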
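To make the description of Cora's feature and adjacency matrices concrete, here is a minimal sketch in plain Python on a made-up three-paper corpus. The vocabulary, papers, and citations are invented for illustration. The `normalize_rows` helper is a hypothetical stand-in that mimics what `NormalizeFeatures` does to nonnegative features (each row rescaled to sum to 1), and treating citations as undirected edges is an assumption.

```python
# Toy stand-in for Cora: 3 papers, a 4-word vocabulary (all values are made up).
vocab = ["graph", "neural", "network", "kernel"]
papers = [{"graph", "neural"}, {"neural", "network", "kernel"}, {"graph"}]
citations = [(0, 1), (1, 2)]  # (citing paper, cited paper)

# Feature matrix X (N x M): 1.0 if the word occurs in the paper, else 0.0.
X = [[1.0 if word in words else 0.0 for word in vocab] for words in papers]

# Adjacency matrix A (N x N): a citation in either direction gives an edge
# (assumption: the graph is treated as undirected).
N = len(papers)
A = [[0] * N for _ in range(N)]
for i, j in citations:
    A[i][j] = 1
    A[j][i] = 1

def normalize_rows(matrix):
    """Rescale each row to sum to 1; all-zero rows are left unchanged."""
    result = []
    for row in matrix:
        total = sum(row)
        result.append([v / total for v in row] if total > 0 else list(row))
    return result

X_norm = normalize_rows(X)
print(X_norm[0])  # [0.5, 0.5, 0.0, 0.0]
print(A)          # [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
```

In the real dataset the same construction yields a 2708 x 1433 feature matrix, which is why the first layer of the models in this article has 1433 input features.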

import torch.nn.functional as F
from torch.nn import Linear
import torch

Build a multi-layer perceptron (MLP) as a baseline, train it, and check the result.

class MLP(torch.nn.Module):
    def __init__(self, hidden_channels):
        super().__init__()
        self.lin1 = Linear(dataset.num_features, hidden_channels)
        self.lin2 = Linear(hidden_channels, dataset.num_classes)

    def forward(self, x):
        x = self.lin1(x)
        x = x.relu()
        x = F.dropout(x, p=0.5, training=self.training)  # active only in training mode
        x = self.lin2(x)
        return x

model = MLP(hidden_channels=16)
print(model)
# Output:
# MLP(
#   (lin1): Linear(in_features=1433, out_features=16, bias=True)
#   (lin2): Linear(in_features=16, out_features=7, bias=True)
# )

model = MLP(hidden_channels=16)  # re-create the model so training starts from fresh weights
criterion = torch.nn.CrossEntropyLoss()
optimizer = torch.optim.Adam(model.parameters(), lr=0.01, weight_decay=5e-4)

def train():
    model.train()
    optimizer.zero_grad()
    out = model(data.x)  # the MLP sees node features only, not the edges
    loss = criterion(out[data.train_mask], data.y[data.train_mask])  # loss on training nodes only
    loss.backward()
    optimizer.step()
    return loss

def test():
    model.eval()
    out = model(data.x)
    pred = out.argmax(dim=1)  # predicted class = index of the largest logit
    test_correct = pred[data.test_mask] == data.y[data.test_mask]
    test_acc = int(test_correct.sum()) / int(data.test_mask.sum())
    return test_acc

for epoch in range(1, 201):
    loss = train()
    print(f'Epoch: {epoch:03d}, Loss: {loss:.4f}')
# Per-epoch output omitted here

test_acc = test()
print(f'Test Accuracy: {test_acc:.4f}')
# Output: Test Accuracy: 0.5750
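The `test()` function above scores the model only on the nodes selected by `data.test_mask`. As a minimal sketch of that masked-accuracy computation, the following plain-Python version uses made-up logits, labels, and mask (none of these values come from Cora), and `masked_accuracy` is a hypothetical helper name.

```python
def masked_accuracy(logits, labels, mask):
    """Fraction of masked nodes whose arg-max class equals the label."""
    correct = 0
    total = 0
    for row, label, keep in zip(logits, labels, mask):
        if not keep:
            continue  # node is outside the evaluation split
        pred = max(range(len(row)), key=row.__getitem__)  # arg-max over classes
        correct += int(pred == label)
        total += 1
    return correct / total  # assumes the mask selects at least one node

# Made-up values: 4 nodes, 3 classes.
logits = [[2.0, 0.1, 0.3], [0.2, 1.5, 0.1], [0.9, 0.8, 0.1], [0.1, 0.2, 3.0]]
labels = [0, 1, 1, 2]
test_mask = [False, True, True, True]

acc = masked_accuracy(logits, labels, test_mask)
print(f"Test Accuracy: {acc:.4f}")
```

Node 0 is predicted correctly but does not count, because it lies outside the mask; of the three masked nodes, two are right.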

Import the required library and build the GCN graph neural network.

from torch_geometric.nn import GCNConv

class GCN(torch.nn.Module):
    def __init__(self, hidden_channels):
        super().__init__()
        self.conv1 = GCNConv(dataset.num_features, hidden_channels)
        self.conv2 = GCNConv(hidden_channels, dataset.num_classes)

    def forward(self, x, edge_index):
        # Unlike the MLP, each layer also receives the graph structure (edge_index).
        x = self.conv1(x, edge_index)
        x = x.relu()
        x = F.dropout(x, p=0.5, training=self.training)
        x = self.conv2(x, edge_index)
        return x

model = GCN(hidden_channels=16)
print(model)
# Output:
# GCN(
#   (conv1): GCNConv(1433, 16)
#   (conv2): GCNConv(16, 7)
# )

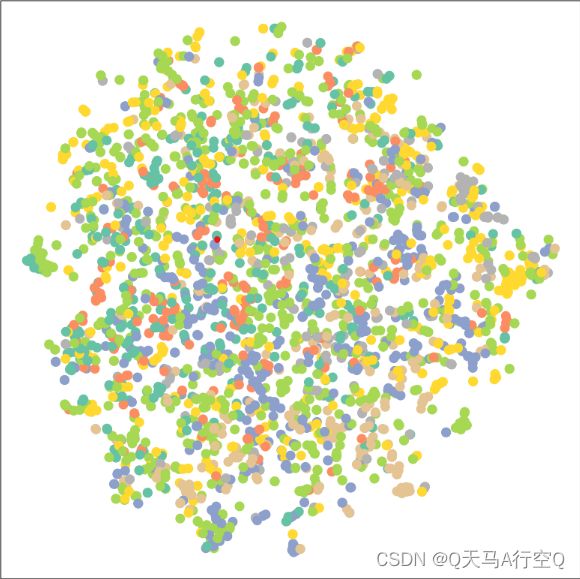
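As a rough illustration of what a GCNConv layer does before applying its weight matrix, the sketch below computes the symmetrically normalized adjacency with self-loops, $\hat{D}^{-1/2}(A+I)\hat{D}^{-1/2}$, on a made-up three-node path graph and uses it to mix a one-dimensional feature across each node's neighborhood. It is plain Python rather than PyG, it leaves out the learnable weights and bias, and the graph and feature values are invented.

```python
import math

# Made-up path graph 0 - 1 - 2 as an adjacency matrix.
A = [[0, 1, 0],
     [1, 0, 1],
     [0, 1, 0]]
n = len(A)

# Add self-loops: A_hat = A + I.
A_hat = [[A[i][j] + (1 if i == j else 0) for j in range(n)] for i in range(n)]

# Degrees of A_hat and the symmetric normalization D^{-1/2} A_hat D^{-1/2}.
deg = [sum(row) for row in A_hat]
A_norm = [[A_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]

# One-dimensional node features: only node 0 carries a signal.
X = [[1.0], [0.0], [0.0]]

# Propagation step H = A_norm @ X (no weight matrix, no nonlinearity).
H = [[sum(A_norm[i][k] * X[k][f] for k in range(n)) for f in range(len(X[0]))]
     for i in range(n)]

for i, row in enumerate(H):
    print(i, [round(v, 4) for v in row])
```

After one step, node 1 has picked up part of node 0's signal while node 2 has not. Stacking two layers, as the GCN class does, lets information travel two hops.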

Visualize the node embeddings (forward pass only; the model has not been trained yet).

model = GCN(hidden_channels=16)
model.eval()
out = model(data.x, data.edge_index)
visualize(out, color=data.y)

Train the model and check the result.

model = GCN(hidden_channels=16)
optimizer = torch.optim.Adam(model.parameters(), lr=0.01, weight_decay=5e-4)
criterion = torch.nn.CrossEntropyLoss()

def train():
    model.train()
    optimizer.zero_grad()
    out = model(data.x, data.edge_index)
    loss = criterion(out[data.train_mask], data.y[data.train_mask])  # loss on training nodes only
    loss.backward()
    optimizer.step()
    return loss

def test():
    model.eval()
    out = model(data.x, data.edge_index)
    pred = out.argmax(dim=1)  # predicted class = index of the largest logit
    test_correct = pred[data.test_mask] == data.y[data.test_mask]
    test_acc = int(test_correct.sum()) / int(data.test_mask.sum())
    return test_acc

for epoch in range(1, 101):
    loss = train()
    print(f'Epoch: {epoch:03d}, Loss: {loss:.4f}')
# Per-epoch output omitted here

test_acc = test()
print(f'Test Accuracy: {test_acc:.4f}')
# Output: Test Accuracy: 0.8010

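Hands-on 2: a simple GCN implementation

This is the code from the official PyG documentation. In terms of difficulty, it is the same thing minus the visualization. The GCN class is structured differently (forward takes the whole data object), and the loss is set up differently: the model returns log_softmax outputs and is trained with F.nll_loss, instead of returning raw logits for CrossEntropyLoss.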
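The two loss setups are numerically equivalent: cross-entropy on raw logits equals negative log-likelihood on log-softmax outputs. Here is a minimal plain-Python check on made-up logits. The functions `log_softmax`, `nll`, and `cross_entropy` are local helpers written for this sketch, not the PyTorch ones.

```python
import math

def log_softmax(logits):
    """Numerically stable log-softmax of one row of logits."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_sum for v in logits]

def nll(log_probs, target):
    """Negative log-likelihood of the target class."""
    return -log_probs[target]

def cross_entropy(logits, target):
    """Cross-entropy computed directly from softmax probabilities."""
    exps = [math.exp(v) for v in logits]
    return -math.log(exps[target] / sum(exps))

logits = [1.2, -0.3, 0.5]  # made-up scores for 3 classes
target = 2

loss_a = nll(log_softmax(logits), target)  # log_softmax + NLL, as in this section
loss_b = cross_entropy(logits, target)     # cross-entropy on raw logits, as in Hands-on 1
print(math.isclose(loss_a, loss_b))  # True
```

So the choice between the two is a matter of where the softmax lives (inside the model or inside the loss), not of what is being optimized.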

from torch_geometric.datasets import Planetoid
from torch_geometric.nn import GCNConv
import torch.nn.functional as F
import torch

class GCN(torch.nn.Module):
    def __init__(self):
        super().__init__()
        # `dataset` is the module-level variable defined below, before the model is instantiated.
        self.conv1 = GCNConv(dataset.num_node_features, 16)
        self.conv2 = GCNConv(16, dataset.num_classes)

    def forward(self, data):
        x, edge_index = data.x, data.edge_index
        x = self.conv1(x, edge_index)
        x = F.relu(x)
        x = F.dropout(x, training=self.training)
        x = self.conv2(x, edge_index)
        return F.log_softmax(x, dim=1)  # log-probabilities, paired with F.nll_loss below

dataset = Planetoid(root='/DATA/Cora', name='Cora')
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
model = GCN().to(device)
data = dataset[0].to(device)
optimizer = torch.optim.Adam(model.parameters(), lr=0.01, weight_decay=5e-4)

model.train()
for epoch in range(200):
    optimizer.zero_grad()
    out = model(data)
    loss = F.nll_loss(out[data.train_mask], data.y[data.train_mask])
    loss.backward()
    optimizer.step()

model.eval()
pred = model(data).argmax(dim=1)
correct = (pred[data.test_mask] == data.y[data.test_mask]).sum()
acc = int(correct) / int(data.test_mask.sum())
print(f'Accuracy: {acc:.4f}')
# Output: Accuracy: 0.8090

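Hands-on 3: a simple GAT implementation

The procedure is the same as above and the code differs only slightly: GATConv layers replace GCNConv, dropout (p=0.6) is also applied to the input features, the learning rate is 0.05, and the loss is CrossEntropyLoss on raw logits.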
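What sets a GAT layer apart is that each neighbor is weighted by a learned attention coefficient instead of a fixed degree-based one. Below is a hedged single-head sketch of that idea, following the GAT formulation (a LeakyReLU score over the pair of transformed features, then a softmax over the neighborhood), in plain Python with scalar features. The weight `w`, attention vector `a`, graph, and features are made-up numbers, not learned parameters.

```python
import math

def leaky_relu(v, slope=0.2):
    return v if v > 0 else slope * v

# Made-up graph: neighbors of each node, self-loop included.
neighbors = {0: [0, 1], 1: [0, 1, 2], 2: [1, 2]}
h = {0: 1.0, 1: 2.0, 2: -1.0}   # scalar node features (made up)
w = 0.5                          # stand-in for the weight matrix W
a = (1.0, -1.0)                  # stand-in for the attention vector, split into two halves

z = {i: w * h[i] for i in h}     # transformed features W h_i

def attention(i):
    """Softmax-normalized attention of node i over its neighbors."""
    # Score e_ij = LeakyReLU(a^T [z_i || z_j]); with scalars this is a[0]*z_i + a[1]*z_j.
    scores = {j: leaky_relu(a[0] * z[i] + a[1] * z[j]) for j in neighbors[i]}
    m = max(scores.values())
    exps = {j: math.exp(s - m) for j, s in scores.items()}
    total = sum(exps.values())
    return {j: e / total for j, e in exps.items()}

# New feature of each node: attention-weighted sum of transformed neighbor features.
out = {}
for i in neighbors:
    alpha = attention(i)
    out[i] = sum(alpha[j] * z[j] for j in neighbors[i])
    print(i, {j: round(v, 3) for j, v in alpha.items()}, round(out[i], 3))
```

Because the coefficients for each node sum to 1, the output is a weighted average of the neighborhood; training adjusts `w` and `a` so that the more useful neighbors get more weight.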

from torch_geometric.datasets import Planetoid
from torch_geometric.nn import GATConv
import torch.nn.functional as F
import torch

# The class is named GAT here because it is built from GATConv layers.
class GAT(torch.nn.Module):
    def __init__(self):
        super().__init__()
        # `dataset` is the module-level variable defined below, before the model is instantiated.
        self.conv1 = GATConv(dataset.num_node_features, 16)
        self.conv2 = GATConv(16, dataset.num_classes)

    def forward(self, data):
        x, edge_index = data.x, data.edge_index
        x = F.dropout(x, p=0.6, training=self.training)  # dropout on the input features
        x = self.conv1(x, edge_index)
        x = F.relu(x)
        x = F.dropout(x, p=0.6, training=self.training)
        x = self.conv2(x, edge_index)
        return x  # raw logits, paired with CrossEntropyLoss below

dataset = Planetoid(root='/DATA/Cora', name='Cora')
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
model = GAT().to(device)
data = dataset[0].to(device)
optimizer = torch.optim.Adam(model.parameters(), lr=0.05, weight_decay=5e-4)
criterion = torch.nn.CrossEntropyLoss()

model.train()
for epoch in range(200):
    optimizer.zero_grad()
    out = model(data)
    loss = criterion(out[data.train_mask], data.y[data.train_mask])
    loss.backward()
    optimizer.step()

model.eval()
pred = model(data).argmax(dim=1)
correct = (pred[data.test_mask] == data.y[data.test_mask]).sum()
acc = int(correct) / int(data.test_mask.sum())
print(f'Accuracy: {acc:.4f}')
# Output: Accuracy: 0.7980