大数据项目实战-招聘网站职位分析

目录

第一章:项目概述

1.1项目需求和目标

1.2预备知识

1.3项目架构设计及技术选取

1.4开发环境和开发工具

1.5项目开发流程

第二章:搭建大数据集群环境

2.1安装准备

2.2Hadoop集群搭建

2.3Hive安装

2.4Sqoop安装

第三章:数据采集

3.1知识概要

3.2分析与准备

3.3采集网页数据

第四章:数据预处理

4.1分析预处理数据

4.2设计数据预处理方案

4.3实现数据的预处理

第五章:数据分析

5.1数据分析概述

5.2Hive数据仓库

5.3分析数据

第六章:数据可视化

6.1平台概述

6.2数据迁移

6.3平台环境搭建

6.4实现图形化展示功能

第一章:项目概述

1.1项目需求和目标

项目需求:

本项目是以国内某互联网招聘网站全国范围内的大数据相关招聘信息作为基础信息,其招聘信息能较大程度地反映出市场对大数据相关职位的需求情况及能力要求,利用这些招聘信息数据通过大数据分析平台重点分析一下几点:

- 分析大数据职位的区域分布情况

- 分析大数据职位薪资区间分布情况

- 分析大数据职位相关公司的福利情况

- 分析大数据职位相关公司技能要求情况

项目目标:

- 掌握Linux操作系统的安装和基本操作

- 掌握Hadoop高可用完全分布式集群的安装部署

- 掌握HDFS Shell基本操作命令

- 掌握基于JAVA语言开发MapReduce程序

- 掌握数据仓库Hive的安装及Hive SQL的使用方法

- 掌握使用Eclipse开发Maven程序的方法

- 了解数据预处理的含义

- 了解HTTP相关概念

- 掌握Sqoop安装及数据迁移的使用方法

- 掌握关系型数据库MySQL的安装及使用

- 掌握基于SSM框架进行网站开发的方法

- 掌握利用Echarts进行数据可视化开发的方法

- 掌握数据分析系统的架构

- 掌握数据分析系统的业务流程

1.2预备知识

知识储备:

- 掌握JAVA面向对象编程思想

- 掌握Hadoop、Hive、Sqoop在Linux环境下的基本操作

- 掌握HDFS与MapReduce的Java API程序开发

- 熟悉大数据相关技术,如Hadoop、HIve、Sqoop的基本理论及原理

- 熟悉Linux操作系统Shell命令的使用

- 熟悉关系型数据库MySQL的原理,掌握SQL语句的编写

- 了解网站前端开发相关技术,如HTML、JSP、JQuery、CSS等

- 了解网站后端开发框架Spring+SpringMVC+MyBatis整合使用

- 熟悉Eclipse开发工具的应用

- 熟悉Maven项目管理工具的使用

1.3项目架构设计及技术选取

1.4开发环境和开发工具

系统环境主要分为开发环境(Windows)和集群环境(Linux)

开发工具:Eclipse、JDK、Maven、VMware Workstation

集群环境:Hadoop、Hive、Sqoop、MySQL

web环境:Tomcat、Spring、Spring MVC、MyBatis、Echarts

1.5项目开发流程

1.搭建大数据实验环境

(1)Linux 系统虛拟机的安装与克隆

(2)配置虛拟机网络与 SSH 服务

(3)搭建 Hadoop 集群

(4)安装 MySQL 数据库

(5)安装 Hive

(6)安装 Sqoop

2.编写网络爬虫程序进行数据采集

(1)准备爬虫环境

(2)编写爬虫程序

(3)将爬取数据存储到 HDFS

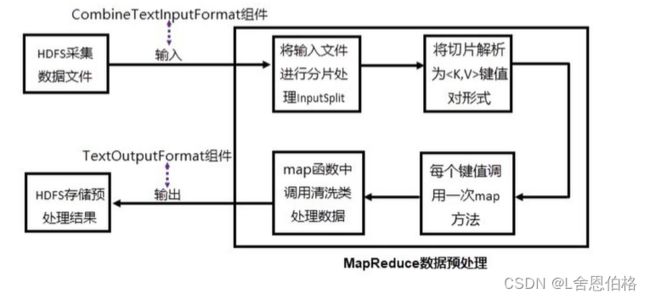

3.数据预处理

(1)分析预处理数据

(2)准备预处理环境

(3)实现 MapReduce 预处理程序进行数据集成和数据转换操作

(4)实现 MapReduce 预处理程序的两种运行模式

4.数据分析

(1)构建数据仓库

(2)通过 HSQL 进行职位区域分析

(3)通过 HSQL 进行职位薪资分析

(4)通过 HSQL 进行公司福利标签分析

(5)通过 HSQL 进行技能标签分析

5.数据可视化

(1)构建关系型数据库

(2)通过 Sqoop 实现数据迁移

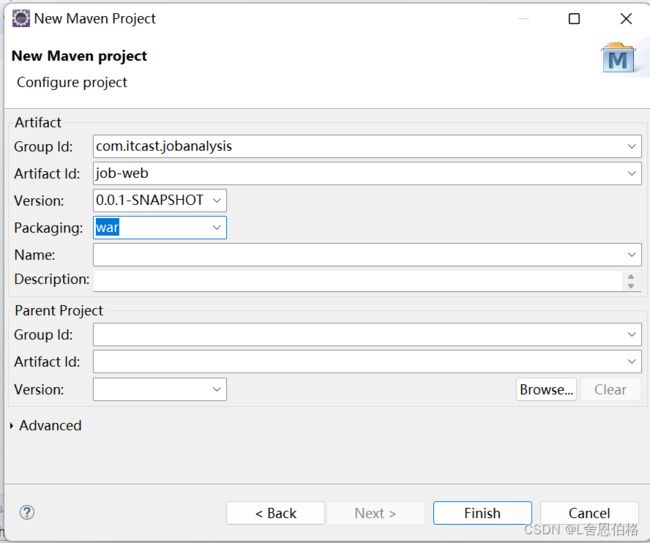

(3)创建 Maven 项目配置项目依赖的信息

(4)编辑配置文件整合 SSM 框架

(5)完善项目组织框架

(6)编写程序实现职位区域分布展示

(7)编写程序实现薪资分布展示

(8)编写程序实现福利标签词云图

(9)预览平台展示内容

(10)编写程序实现技能标签词云图

第二章:搭建大数据集群环境

2.1安装准备

虚拟机安装与克隆(克隆方法选择创建完整克隆)

虚拟机网络配置

#编辑网络

vi /etc/sysconfig/network-scripts/ifcfg-ens33

#重启

service network restart

#配置ip和主机名映射

vi /etc/hostsSSH服务配置

#查看SSH服务

rpm -qa | grep ssh

#SSH安装命令

yum -y install openssh openssh-server

#查看SSH进程

ps -ef | grep ssh

#生成密钥对

ssh-keygen -t rsa

#复制公钥文件

ssh-copy-id 主机名

2.2Hadoop集群搭建

步骤:

- 下载安装

- 配置环境变量(编辑环境变量文件——配置系统环境变量——初始化环境变量)

- 环境验证

- JDK安装

1.安装rz,通过rz命令上传安装包 yum install lrzsz 2.解压 tar -zxvf jdk-8u181-linux-x64.tar.gz -C /usr/local 3.修改名字 mv jdk1.8.0_181/ jdk 4.配置环境变量 vi /etc/profile #JAVA_HOME export JAVA_HOME=/usr/local/jdk export PATH=$PATH:$JAVA_HOME/bin 5.初始化环境变量 source /etc/profile 6.验证配置 java -version - Hadoop安装

1.通过rz命令上传安装包

2.解压

tar -zxvf hadoop2.7.1.tar.gz -C /usr/local

3.修改名字

mv hadoop2.7.1/ hadoop

4.配置环境变量

vi /etc/profile

#HADOOP_HOME

export HADOOP_HOME=/usr/local/hadoop

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

5.初始化环境变量

source /etc/profile

6.验证配置

hadoop version

- Hadoop集群配置

步骤:

- 配置文件

- 修改文件(hadoop-env.sh、yarn-env.sh、core-site.xml、hdfs-site.xml、mapred-site.xml、yarn-site.xml)

- 修改slaves文件并将集群主节点的配置文件分发到其他主节点

1.cd hadoop/etc/hadoop 2.vi hadoop-env.sh #配置JAVA_HOME export JAVA_HOME=/usr/local/jdk 3.vi yarn-env.sh #配置JAVA_HOME(记得去掉前面的#注释,注意别找错地方)4.vi core-site.xml #配置主进程NameNode运行地址和Hadoop运行时生成数据的临时存放目录fs.defaultFS hdfs://hadoop1:9000 hadoop.tmp.dir /usr/local/hadoop/tmp 5.vi hdfs-site.xml #配置Secondary NameNode节点运行地址和HDFS数据块的副本数量dfs.replication 3 dfs.namenode.secondary.http-address hadoop2:50090 6.cp mapred-site.xml.template mapred-site.xml vi mapred-site.xml #配置MapReduce程序在Yarns上运行mapreduce.framework.name yarn 7.vi yarn-site.xml #配置Yarn的主进程ResourceManager管理者及附属服务mapreduce_shuffleyarn.resourcemanager.hostname hadoop1 yarn.nodemanager.aux-services mapreduce_shuffle 8.vi slaves hadoop1 hadoop2 hadoop3 9.scp /etc/profile root@hadoop2:/etc/profile scp /etc/profile root@hadoop3:/etc/profile scp -r /usr/local/* root@hadoop2:/usr/local/ scp -r /usr/local/* root@hadoop3:/usr/local/ 10.记得在hadoop2、hadoop3初始化 source /etc/profile

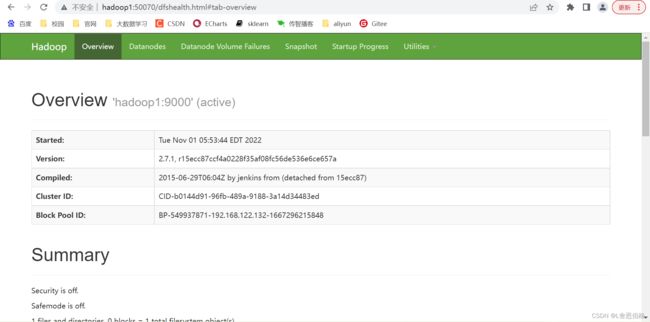

- Hadoop集群测试

- 格式化文件系统

- 启动hadoop集群

- 验证各服务器进程启动情况

#1.格式化文件系统 初次启动HDFS集群时,对主节点进行格式化处理 hdfs namenode -format 或者hadoop namenode -format #2.进入hadoop/sbin/ cd /usr/local/hadoop/sbin/ #3.主节点上启动HDFSNameNode进程 hadoop-daemon.sh start namenode #4.每个节点上启动HDFSDataNode进程 hadoop-daemon.sh start datanode #5.主节点上启动YARNResourceManager进程 yarn-daemon.sh start resourcemanager #6.每个节点上启动YARNodeManager进程 yarn-daemon.sh start nodemanager #7.规划节点上启动SecondaryNameNode进程 hadoop-daemon.sh start secondarynamenode #8.jps(5个进程) DataNode ResourceManager NameNode NodeManager jps

- 通过UI界面查看Hadoop运行状态

在Windows操作系统配置IP映射,文件路径C:\Windows\System32\drivers\etc,在etc文件添加如下配置内容

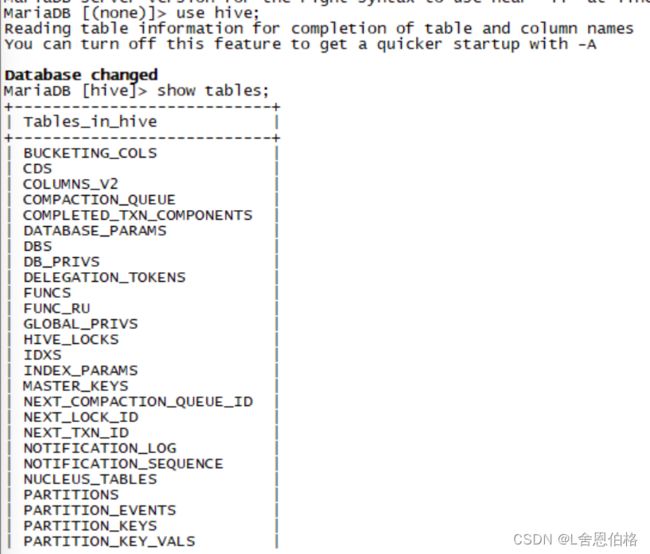

2.3Hive安装

- 安装MySQL服务

#安装mariadb

yum install mariadb-server mariadb

#启动服务

systemctl start mariadb

systemctl enable mariadb

#切换到mysql数据库

use mysql;

#修改root用户密码

update user set password=PASSWORD('123456') where user = 'root';

#设置允许远程登录

grant all privileges on *.* to 'root'@'%'

identified by '123456' with grant option;

#更新权限表

flush privileges;- 安装hive

#1.解压 tar -zxvf apache-hive-1.2.2-bin.tar.gz -C /usr/local #2.修改名字 mv apache-hive-1.2.2-bin/ hive #3.配置文件 cd /hive/conf cp hive-env.sh.template hive-env.sh vi hive-env.sh(修改 export HADOOP_HOME=/usr/local/hadoop)

#4.

vi hive-site.xml

javax.jdo.option.ConnectionURL

jdbc:mysql://localhost:3306/hive?createDatabaseIfNotExist=true

JDBC connect string for a JDBC metastore

javax.jdo.option.ConnectionDriverName

com.mysql.jdbc.Driver

Driver class name for a JDBC metastore

javax.jdo.option.ConnectionUserName

root

username to use against metastore database

javax.jdo.option.ConnectionPassword

123456

password to use against metastore database

#5.上传mysql驱动包

cd ../lib

rz(mysql-connector-java-5.1.40.jar)

#6.配置环境变量

vi /etc/profile

#添加HIVE_HOME

export HIVE_HOME=/usr/local/hive

export PATH=$PATH:$HIVE_HOME/bin

source /etc/profile

#7.启动hive

cd ../bin/

./hive

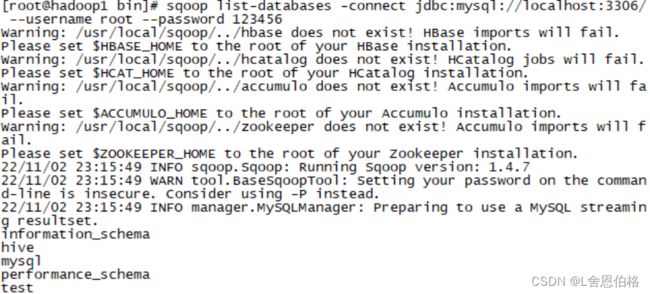

2.4Sqoop安装

#1.解压

tar -zxvf sqoop-1.4.7.bin__hadoop-2.6.0.tar.gz -C /usr/local

#2.修改名字

mv sqoop-1.4.7.bin__hadoop-2.6.0/ sqoop

#3.配置

cd sqoop/conf/

cp sqoop-env-template.sh sqoop-env.sh

vi sqoop-env.sh

修改

export HADOOP_COMMON_HOME=/usr/local/hadoop

export HADOOP_MAPRED_HOME=/usr/local/hadoop

export HIVE_HOME=/usr/local/hive

#4.配置环境变量

vi /etc/profile

#添加SQOOP_HOME

export SQOOP_HOME=/usr/local/sqoop

export PATH=$PATH:$SQOOP_HOME/bin

source /etc/profile

#5.效果测试

cd ../lib

rz(mysql-connector-java-5.1.40.jar)#上传jar包到lib目录下

cd ../bin/

sqoop list-database \

-connect jdbc:mysql://localhost:3306/ \

--username root --password 123456

#(sqoop list-database用于输出连接的本地MySQL数据库中的所有数据库,如果正确返回指定地址的MySQL数据库信息,说明Sqoop配置完毕)

第三章:数据采集

3.1知识概要

1.数据源分类(系统日志采集、网络数据采集、数据库采集)

2.HTTP请求过程

3.HttpClient

3.2分析与准备

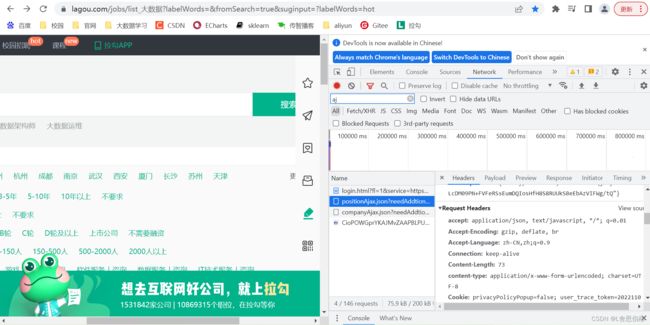

1.分析网页数据结构

使用Google浏览器进入到开发者模式,切换到Network这项,设置过滤规则,查看Ajax请求中的JSON文件;在JSON文件的“content-positionResult-result”下查看大数据职位相关的信息

2.数据采集环境准备

在pom文件中添加编写爬虫程序所需要的HttpClient和JDK1.8依赖

org.apache.httpcomponents

httpclient

4.5.4

jdk.tools

jdk.tools

1.8

system

${JAVA_HOME}/lib/tools.jar

3.3采集网页数据

1.创建相应结果JavaBean类

通过创建的HttpClient响应结果对象作为数据存储的载体,对响应结果中的状态码和数据内容进行封装

//HttpClientResp.java

package com.position.reptile;

import java.io.Serializable;

public class HttpClientResp implements Serializable {

private static final long serialVersionUID = 2963835334380947712L;

//响应状态码

private int code;

//响应内容

private String content;

//空参构造

public HttpClientResp() {

}

public HttpClientResp(int code) {

super();

this.code = code;

}

public HttpClientResp(String content) {

super();

this.content = content;

}

public HttpClientResp(int code, String content) {

super();

this.code = code;

this.content = content;

}

//getter和setter方法

public int getCode() {

return code;

}

public void setCode(int code) {

this.code = code;

}

public String getContent() {

return content;

}

public void setContent(String content) {

this.content = content;

}

//重写toString方法

@Override

public String toString() {

return "HttpClientResp [code=" + code + ", content=" + content + "]";

}

}

2.封装HTTP请求的工具类

在com.position.reptile包下,创建一个命名为HttpClientUtils.java文件的工具类,用于实现HTTP请求方法

(1)定义三个全局变量

//编码格式

private static final String ENCODING = "UTF-8";

//设置连接超时时间,单位毫秒

private static final int CONNECT_TIMEOUT = 6000;

//设置响应时间

private static final int SOCKET_TIMEOUT = 6000;

(2)编写packageHeader()方法,用于封装HTTP请求头

// 封装请求头

public static void packageHeader(Map params, HttpRequestBase httpMethod){

if (params != null) {

// set集合中得到的就是params里面封装的所有请求头的信息,保存在entrySet里面

Set> entrySet = params.entrySet();

// 遍历集合

for (Entry entry : entrySet) {

// 封装到httprequestbase对象里面

httpMethod.setHeader(entry.getKey(),entry.getValue());

}

}

} (3)编写packageParam()方法,用于封装HTTP请求参数

// 封装请求参数

public static void packageParam(Map params,HttpEntityEnclosingRequestBase httpMethod) throws UnsupportedEncodingException {

if (params != null) {

List nvps = new ArrayList();

Set> entrySet = params.entrySet();

for (Entry entry : entrySet) {

// 分别提取entry中的key和value放入nvps数组中

nvps.add(new BasicNameValuePair(entry.getKey(), entry.getValue()));

}

httpMethod.setEntity(new UrlEncodedFormEntity(nvps, ENCODING));

}

} (4)编写HttpClientResp()方法,用于获取HTTP响应内容

public static HttpClientResp getHttpClientResult(CloseableHttpResponse httpResponse,CloseableHttpClient httpClient,HttpRequestBase httpMethod) throws Exception{

httpResponse=httpClient.execute(httpMethod);

//获取HTTP的响应结果

if(httpResponse != null && httpResponse.getStatusLine() != null) {

String content = "";

if(httpResponse.getEntity() != null) {

content = EntityUtils.toString(httpResponse.getEntity(),ENCODING);

}

return new HttpClientResp(httpResponse.getStatusLine().getStatusCode(),content);

}

return new HttpClientResp(HttpStatus.SC_INTERNAL_SERVER_ERROR);

}(5)编写doPost()方法,提交请求头和请求参数

public static HttpClientResp doPost(String url,Mapheaders,Mapparams) throws Exception{

CloseableHttpClient httpclient = HttpClients.createDefault();

HttpPost httppost = new HttpPost(url);

//封装请求配置

RequestConfig requestConfig = RequestConfig.custom()

.setConnectTimeout(CONNECT_TIMEOUT)

.setSocketTimeout(SOCKET_TIMEOUT)

.build();

//设置post请求配置项

httppost.setConfig(requestConfig);

//设置请求头

packageHeader(headers,httppost);

//设置请求参数

packageParam(params,httppost);

//创建httpResponse对象获取响应内容

CloseableHttpResponse httpResponse = null;

try {

return getHttpClientResult(httpResponse,httpclient,httppost);

}finally {

//释放资源

release(httpResponse,httpclient);

}

} (6)编写release()方法,用于释放HTTP请求和HTTP响应对象资源

private static void release(CloseableHttpResponse httpResponse,CloseableHttpClient httpClient) throws IOException{

if(httpResponse != null) {

httpResponse.close();

}

if(httpClient != null) {

httpClient.close();

}

}3.封装存储在HDFS工具类

(1)在pom.xml文件中添加hadoop的依赖,用于调用HDFS API

org.apache.hadoop

hadoop-common

2.7.1

org.apache.hadoop

hadoop-client

2.7.1

(2)在com.position.reptile包下,创建名为HttpClientHdfsUtils.java文件的工具类,实现将数据写入HDFS的方法createFileBySysTime()

public class HttpClientHdfsUtils {

public static void createFileBySysTime(String url,String fileName,String data) {

System.setProperty("HADOOP_USER_NAME", "root");

Path path = null;

//读取系统时间

Calendar calendar = Calendar.getInstance();

Date time = calendar.getTime();

//格式化系统时间

SimpleDateFormat format = new SimpleDateFormat("yyyMMdd");

//获取系统当前时间,将其转换为String类型

String filepath = format.format(time);

//构造Configuration对象,配置hadoop参数

Configuration conf = new Configuration();

URI uri= URI.create(url);

FileSystem fileSystem;

try {

//获取文件系统对象

fileSystem = FileSystem.get(uri,conf);

//定义文件路径

path = new Path("/JobData/"+filepath);

if(!fileSystem.exists(path)) {

fileSystem.mkdirs(path);

}

//在指定目录下创建文件

FSDataOutputStream fsDataOutputStream = fileSystem.create(new Path(path.toString()+"/"+fileName));

//向文件中写入数据

IOUtils.copyBytes(new ByteArrayInputStream(data.getBytes()),fsDataOutputStream,conf,true);

fileSystem.close();

}catch(IOException e) {

e.printStackTrace();

}

}

}

4.实现网页数据采集

(1)通过Chrome浏览器查看请求头

(2)在com.position.reptile包下,创建名为HttpClientData.java文件的主类,用于数据采集功能

public class HttpClientData {

public static void main(String[] args) throws Exception {

//设置请求头

Mapheaders = new HashMap();

headers.put("Cookie","privacyPolicyPopup=false; user_trace_token=20221103113731-d2950fcd-eb36-486c-9032-feab09943d4d; LGUID=20221103113731-ef107f32-06e0-4453-a89c-683f5a558e86; _ga=GA1.2.11435994.1667446652; RECOMMEND_TIP=true; index_location_city=%E5%85%A8%E5%9B%BD; __lg_stoken__=a5abb0b1f9cda5e7a6da82dd7a4397075c675acce324397a86b9cbbd4fc31a58d921346f317ba5c8c92b5c4a9ebb0650576575b67ebae44f422aeb4b1a950643cd2854eece70; JSESSIONID=ABAAAECABIEACCAC2031D7A104C1E74CDC3FABFA00BCC7F; WEBTJ-ID=20221105161123-18446d82e00bcd-0f0b3aafbd8e8e-26021a51-921600-18446d82e018bf; _gid=GA1.2.1865104541.1667635884; Hm_lvt_4233e74dff0ae5bd0a3d81c6ccf756e6=1667446652,1667456559,1667635885; PRE_UTM=; PRE_HOST=; PRE_LAND=https%3A%2F%2Fwww.lagou.com%2Fjobs%2Flist%5F%25E5%25A4%25A7%25E6%2595%25B0%25E6%258D%25AE%3FlabelWords%3D%26fromSearch%3Dtrue%26suginput%3D%3FlabelWords%3Dhot; LGSID=20221105161124-df5ffe02-aefa-434b-b378-2d64367fddde; PRE_SITE=https%3A%2F%2Fwww.lagou.com%2Fcommon-sec%2Fsecurity-check.html%3Fseed%3D5E87A87B3DA4AFE2BC190FBB560FB9266A5615D5937A536A0FA5205B13CAC74F0D0C1CC5AF1D2DD0C0060C9AF3B36CA5%26ts%3D16676358793441%26name%3Da5abb0b1f9cd%26callbackUrl%3Dhttps%253A%252F%252Fwww.lagou.com%252Fjobs%252Flist%5F%2525E5%2525A4%2525A7%2525E6%252595%2525B0%2525E6%25258D%2525AE%253FlabelWords%253D%2526fromSearch%253Dtrue%2526suginput%253D%253FlabelWords%253Dhot%26srcReferer%3D; _gat=1; X_MIDDLE_TOKEN=668d4b4d5ba925cb7156e2d72086c745; privacyPolicyPopup=false; sensorsdata2015session=%7B%7D; TG-TRACK-CODE=index_search; sensorsdata2015jssdkcross=%7B%22distinct_id%22%3A%221843b917f5d1b4-025994c92cf438-26021a51-921600-1843b917f5e3e5%22%2C%22first_id%22%3A%22%22%2C%22props%22%3A%7B%22%24latest_traffic_source_type%22%3A%22%E7%9B%B4%E6%8E%A5%E6%B5%81%E9%87%8F%22%2C%22%24latest_search_keyword%22%3A%22%E6%9C%AA%E5%8F%96%E5%88%B0%E5%80%BC_%E7%9B%B4%E6%8E%A5%E6%89%93%E5%BC%80%22%2C%22%24latest_referrer%22%3A%22%22%2C%22%24os%22%3A%22Windows%22%2C%22%24browser%22%3A%22Chrome%22%2C%22%24browser_version%22%3A%22103.0.0.0%22%2C%22%24latest_referrer_host%22%3A%22%22%7D%2C%22%24device_id%22%3A%221843b917f5d1b4-025994c92cf438-26021a51-921600-1843b917f5e3e5%22%7D; Hm_lpvt_4233e74dff0ae5bd0a3d81c6ccf756e6=1667636243; LGRID=20221105161724-fad126be-48da-4684-aa52-1ff6cfb2dffd; SEARCH_ID=535076fc2a094fa2913263e0079a9038; X_HTTP_TOKEN=a18b9f65c1cbf1490626367661a3afc88e7340da5d");

headers.put("Connection","keep-alive");

headers.put("Accept","application/json, text/javascript, */*; q=0.01");

headers.put("Accept-Language","zh-CN,zh;q=0.9");

headers.put("User-Agent","Mozilla/5.0 (Windows NT 10.0; Win64; x64)"+"AppleWebKit/537.36 (KHTML, like Gecko)"+"Chrome/103.0.0.0 Safari/537.36");

headers.put("content-type","application/x-www-form-urlencoded; charset=UTF-8");

headers.put("Referer", "https://www.lagou.com/jobs/list_%E5%A4%A7%E6%95%B0%E6%8D%AE?labelWords=&fromSearch=true&suginput=?labelWords=hot");

headers.put("Origin", "https://www.lagou.com");

headers.put("x-requested-with","XMLHttpRequest");

headers.put("x-anit-forge-token","None");

headers.put("x-anit-forge-code","0");

headers.put("Host","www.lagou.com");

headers.put("Cache-Control","no-cache");

Mapparams = new HashMap();

params.put("kd","大数据");

params.put("city","全国");

for (int i=1;i<31;i++){

params.put("pn",String.valueOf(i));

}

for (int i=1;i<31;i++){

params.put("pn",String.valueOf(i));

HttpClientResp result = HttpClientUtils.doPost("https://www.lagou.com/jobs/positionAjax.json?"+"needAddtionalResult=false",headers,params);

HttpClientHdfsUtils.createFileBySysTime("hdfs://hadoop1:9000","page"+i,result.toString());

Thread.sleep(1 * 500);

}

}

}

最终采集数据的结果

第四章:数据预处理

4.1分析预处理数据

查看数据结构内容,格式化数据

本项目主要分析的内容是薪资、福利、技能要求、职位分布这四个方面。

- salary(薪资字段的数据内容为字符串形式)

- city(城市字段的数据内容为字符串形式)

- skillLabels(技能要求字段的数据内容为数组形式)

- companyLabelList(福利标签数据字段 数据形式为数组);positionAdvantage(数据形式为字符串)

4.2设计数据预处理方案

4.3实现数据的预处理

(1)数据预处理环境准备

在pom.xml文件中,添加hadoop相关依赖

org.apache.hadoop

hadoop-common

2.7.1

org.apache.hadoop

hadoop-client

2.7.1

(2)创建数据转换类

创建一个com.position.clean的Package,再创建CleanJob类,用于实现对职位信息数据进行转换操作

- deleteString()方法,用于对薪资字符串处理(去除薪资中的"k"字符)

//删除指定字符

public static String deleteString(String str,char delChar) {

StringBuffer stringBuffer = new StringBuffer("");

for(int i=0;i- mergeString()方法,用于将companyLabelList字段中的数据内容和positionAdvange字段中的数据内容进行合并处理,生成新字符串数据(以"-"为分隔符)

//处理合并福利标签

public static String mergeString(String position,JSONArray company) throws JSONException {

String result = "";

if(company.length()!=0) {

for(int i=0;i- killResult()方法,用于将技能数据以"-"为分隔符进行分隔,生成新的字符串数据

//处理技能标签

public static String killResult(JSONArray killData) throws JSONException {

String result = "";

if(killData.length() != 0) {

for(int i=0;i- resultToString()方法,将数据文件中的每一条职位信息数据进行处理并重新组合成新的字符串形式

//数据清洗结果

public static String resultToString(JSONArray jobdata) throws JSONException {

String jobResultData="";

for(int i=0;i(3)创建实现Map任务的Mapper类

在com.position.clean包下,创建一个名称为CleanMapper的类,用于实现MapReduce程序的Map方法

//CleanMapper类继承Mapper基类,并定义Map程序输入和输出的key和value

public class CleanMapper extends Mapper{

//map()方法对输入的键值对进行处理

protected void map(LongWritable key,Text value,Context context) throws IOException,InterruptedException {

String jobResultData="";

String reptileData = value.toString();

//通过截取字符串方式获取content中的数据

String jobData = reptileData.substring(reptileData.indexOf("=",reptileData.indexOf("=")+1)+1,

reptileData.length()-1

);

try {

//获取content中的数据内容

JSONObject contentJson = new JSONObject(jobData);

String contentData = contentJson.getString("content");

//获取content下positionResult中的数据内容

JSONObject positionResultJson = new JSONObject(contentData);

String positionResultData = positionResultJson.getString("positionResult");

//获取最终result中的数据内容

JSONObject resultJson = new JSONObject(positionResultData);

JSONArray resultData = resultJson.getJSONArray("result");

jobResultData = CleanJob.resultToString(resultData);

context.write(new Text(jobResultData), NullWritable.get());

} catch (JSONException e) {

e.printStackTrace();

}

}

} (4)创建并执行MapReduce程序

在com.position.clean包下,创建一个名称为CleanMain的类,用于实现MapReduce程序配置

public class CleanMain {

public static void main(String[] args) throws IOException,ClassNotFoundException,InterruptedException {

//控制台输出日志

BasicConfigurator.configure();

//初始化Hadoop配置

Configuration conf = new Configuration();

//定义一个新的Job,第一个参数是hadoop配置信息,第二个参数是Job的名字

Job job = new Job(conf,"job");

//设置主类

job.setJarByClass(CleanMain.class);

//设置Mapper类

job.setMapperClass(CleanMapper.class);

//设置job输出数据的key类

job.setOutputKeyClass(Text.class);

//设置job输出数据的value类

job.setOutputValueClass(NullWritable.class);

//数据输入路径

FileInputFormat.addInputPath(job, new Path("hdfs://hadoop1:9000/JobData/20221105"));

//数据输出路径

FileOutputFormat.setOutputPath(job,new Path("D:\\BigData\\out"));

System.exit(job.waitForCompletion(true)?0:1);

}

}(5)将程序打包提交到集群运行

修改MapReduce程序主类

package com.position.clean;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapred.lib.CombineTextInputFormat;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.util.GenericOptionsParser;

import org.apache.log4j.BasicConfigurator;

public class CleanMain {

public static void main(String[] args) throws IOException,ClassNotFoundException,InterruptedException {

//控制台输出日志

BasicConfigurator.configure();

//初始化Hadoop配置

Configuration conf = new Configuration();

//从hadoop命令行读取参数

String[] otherArgs = new GenericOptionsParser(conf,args).getRemainingArgs();

//判断读取的参数正常是两个,分别是输入文件和输出文件的目录

if(otherArgs.length != 2) {

System.err.println("Usage:wordcount");

System.exit(2);

}

//定义一个新的Job,第一个参数是hadoop配置信息,第二个参数是Job的名字

Job job = new Job(conf,"job");

//设置主类

job.setJarByClass(CleanMain.class);

//设置Mapper类

job.setMapperClass(CleanMapper.class);

//处理小文件

job.setInputFormatClass(CombineTextInputFormat.class);

//n个小文件之和不能大于2MB

CombineTextInputFormat.setMinInputSplitSize(job, 2097152);

//在n个小文件之和大于2MB的情况下,需满足n+1个小文件之和不能大于4MB

CombineTextInputFormat.setMaxInputSplitSize(job, 4194304);

//设置job输出数据的key类

job.setOutputKeyClass(Text.class);

//设置job输出数据的value类

job.setOutputValueClass(NullWritable.class);

//设置输入文件

FileInputFormat.addInputPath(job,new Path(otherArgs[0]));

//设置输出文件

FileOutputFormat.setOutputPath(job,new Path(otherArgs[1]));

System.exit(job.waitForCompletion(true)?0:1);

}

}

创建jar包

将jar包提交到集群运行

第五章:数据分析

5.1数据分析概述

本项目通过使用基于分布式文件系统的Hive对招聘网站的数据进行分析

5.2Hive数据仓库

Hive是建立在Hadoop分布式文件系统上的数据仓库,它提供了一系列工具,能够对存储在HDFS中的数据进行数据提取、转换和加载(ETL),是一种可以存储、查询和分析存储在Hadoop中的大规模的工具。Hive可以将HQL语句转为MapReduce程序进行处理。

本项目是将Hive数据仓库设计为星状模型,由一张事实表和多张维度表组成。

- 事实表(ods_jobdata_origin)主要用于存储MapReduce计算框架清洗后的数据

| 字段 | 数据类型 | 描述 |

| city | String | 城市 |

| salary | array |

薪资 |

| company | array |

福利标签 |

| kill | array |

技能标签 |

- 维度表(t_salary_detail)主要用于存储薪资分布分析的数据

| 字段 | 数据类型 | 描述 |

| salary | String | 薪资分布区间 |

| count | int | 区间内出现薪资的频次 |

- 维度表(t_company_detail)主要用于存储福利标签分析的数据

| 字段 | 数据类型 | 描述 |

| company | String | 每个福利标签 |

| count | int | 每个福利标签的频次 |

- 维度表(t_city_detail)主要用于存储城市分布分析的数据

| 字段 | 数据类型 | 描述 |

| city | String | 城市 |

| count | int | 城市频次 |

- 维度表(t_kill_detail)主要用于存储技能标签分析的数据

| 字段 | 数据类型 | 描述 |

| kill | String | 每个标签技能 |

| count | int | 每个标签技能的频次 |

实现数据仓库

- 启动Hadoop集群后,在主节点hadoop1启动Hive

- 将HDFS上的预处理数据导入到事实表ods_jobdata_origin中

--创建数据仓库 jobdata create database jobdata; use jobdata; --创建事实表 ods_jobdata_origin create table ods_jobdata_origin( city string comment '城市', salary arraycomment '薪资', company array comment '福利', kill array comment '技能') comment '原始职位数据表' row format delimited fields terminated by ',' collection items terminated by '-' stored as textfile; --加载数据 load data inpath '/JobData/output/part-r-00000' overwrite into table ods_jobdata_origin; --查询数据 select * from ods_jobdata_origin; - 创建明细表ods_jobdata_detail用于存储事实表细化薪资字段的数据

create table ods_jobdata_detail( city string comment '城市', salary arraycomment '薪资', company array comment '福利', kill array comment '技能', low_salary int comment '低薪资', high_salary int comment '高薪资', avg_salary double comment '平均薪资') comment '职位数据明细表' row format delimited fields terminated by ',' collection items terminated by '-' stored as textfile;

insert overwrite table ods_jobdata_detail

select city,salary,company,kill,salary[0],salary[1],(salary[0]+salary[1])/2

from ods_jobdata_origin;- 对薪资字段内容进行扁平化处理,将处理结果存储到临时中间表t_ods_tmp_salary

create table t_ods_tmp_salary as select explode(ojo.salary) from ods_jobdata_origin ojo; - 对t_ods_tmp_salary表的每一条数据进行泛化处理,将处理结果存储到中间表t_ods_tmp_salary_dist中

create table t_ods_tmp_salary_dist as select case when col>=0 and col<=5 then "0-5" when col>=6 and col<=10 then "6-10" when col>=11 and col<=15 then "11-15" when col>=16 and col<=20 then "16-20" when col>=21 and col<=25 then "21-25" when col>=26 and col<=30 then "26-30" when col>=31 and col<=35 then "31-35" when col>=36 and col<=40 then "36-40" when col>=41 and col<=45 then "41-45" when col>=46 and col<=50 then "46-50" when col>=51 and col<=55 then "51-55" when col>=56 and col<=60 then "56-60" when col>=61 and col<=65 then "61-65" when col>=66 and col<=70 then "66-70" when col>=71 and col<=75 then "71-75" when col>=76 and col<=80 then "76-80" when col>=81 and col<=85 then "81-85" when col>=86 and col<=90 then "86-90" when col>=91 and col<=95 then "91-95" when col>=96 and col<=100 then "96-100" when col>=101 then ">101" end from t_ods_tmp_salary; - 对福利标签字段内容进行扁平化处理,将处理结果存储到临时中间表t_ods_tmp_company

create table t_ods_tmp_company as select explode(ojo.company) from ods_jobdata_origin ojo; - 对技能标签字段内容进行扁平化处理,将处理结果存储到临时中间表t_ods_tmp_kill

create table t_ods_tmp_kill as select explode(ojo.kill) from ods_jobdata_origin ojo; - 创建维度表t_ods_kill,用于存储技能标签的统计结果

create table t_ods_kill( every_kill string comment '技能标签', count int comment '词频') comment '技能标签词频统计' row format delimited fields terminated by ',' stored as textfile; - 创建维度表t_ods_company,用于存储福利标签的统计结果

create table t_ods_company( every_company string comment '福利标签', count int comment '词频') comment '福利标签词频统计' row format delimited fields terminated by ',' stored as textfile; - 创建维度表t_ods_salary,用于存储薪资分布的统计结果

create table t_ods_salary( every_partition string comment '薪资分布', count int comment '聚合统计') comment '薪资分布聚合统计' row format delimited fields terminated by ',' stored as textfile; - 创建维度表t_ods_city,用于存储城市的统计结果

create table t_ods_city( every_city string comment '城市', count int comment '词频') comment '城市统计' row format delimited fields terminated by ',' stored as textfile;

5.3分析数据

- 职位区域分析

--职位区域分析

insert overwrite table t_ods_city

select city,count(1) from ods_jobdata_origin group by city;

--倒叙查询职位区域的信息

select * from t_ods_city sort by count desc;- 职位薪资分析

--职位薪资分析

insert overwrite table t_ods_salary

select '_c0',count(1) from t_ods_tmp_salary_dist group by '_c0';

--查看维度表t_ods_salary中的分析结果,使用sort by 参数对表中的count列进行倒序排序

select * from t_ods_salary sort by count desc;

--平均值

select avg(avg_salary) from ods_jobdata_detail;

--众数

select avg_salary,count(1) as cnt from ods_jobdata_detail group by avg_salary order by cnt desc limit 1;

--中位数

select percentile(cast(avg_salary as bigint),0.5) from ods_jobdata_detail;- 公司福利标签分析

--公司福利分析

insert overwrite table t_ods_company

select col,count(1) from t_ods_tmp_company group by col;

--查询维度表中的分析结果,倒序查询前10个

select every_company,count from t_ods_company sort by count desc limit 10;- 职位技能要求分析

--职位技能要求分析

insert overwrite table t_ods_kill

select col,count(1) from t_ods_tmp_kill group by col;

--查看技能维度表中的分析结果,倒叙查看前3个

select every_kill,count from t_ods_kill sort by count desc limit 3;第六章:数据可视化

6.1平台概述

招聘网站职位分析-数据可视化系统主要通过Web平台对分析结果进行图像化展示,旨在借助于图形化手段,清晰有效地传达信息,能够真实反映现阶段有关大数据职位的内容。本系统采用ECharts来辅助实现。

招聘网站职位分析可视化系统以JavaWeb为基础搭建,通过SSM(Spring+Springmvc+MyBatis)框架实现后端功能,前端在JSP中使用Echarts实现可视化展示,前后端的数据交互是通过SpringMVC与AJAX交互实现。

6.2数据迁移

- 创建关系型数据库(通过Navicat工具连接)

--创建数据库JobData

CREATE DATABASE JobData CHARACTER set utf8 COLLATE utf8_general_ci;

--创建城市分布表

create table t_city_count(

city VARCHAR(30) DEFAULT null,

count int(5) DEFAULT NULL

) ENGINE=INNODB DEFAULT CHARSET=utf8;

--创建薪资分布表

create table t_salary_count(

salary VARCHAR(30) DEFAULT null,

count int(5) DEFAULT NULL

) ENGINE=INNODB DEFAULT CHARSET=utf8;

--创建福利标签统计表

create table t_company_count(

company VARCHAR(30) DEFAULT null,

count int(5) DEFAULT NULL

) ENGINE=INNODB DEFAULT CHARSET=utf8;

--创建技能标签统计表

create table t_kill_count(

kills VARCHAR(30) DEFAULT null,

count int(5) DEFAULT NULL

) ENGINE=INNODB DEFAULT CHARSET=utf8;- 通过Sqoop实现数据迁移

Sqoop主要用于在Hadoop(Hive)与传统数据库(MySQL)间进行数据传递,可以将一个关系型数据库中的数据导入到Hadoop的HDFS中,也可以将HDFS的数据导入到关系型数据库中。

(启动的时候,有相关的警告信息,配置bin/configure-sqoop 文件,注释对应的相关语句)

--将职位所在的城市的分布统计结果数据迁移到t_city_count表中

bin/sqoop export \

--connect jdbc:mysql://hadoop1:3306/JobData?characterEncoding=UTF-8 \

--username root \

--password 123456 \

--table t_city_count \

--columns "city,count" \

--fields-terminated-by ',' \

--export-dir /user/hive/warehouse/jobdata.db/t_ods_city

--将职位薪资分布结果数据迁移到t_salary_count表中

bin/sqoop export \

--connect jdbc:mysql://hadoop1:3306/JobData?characterEncoding=UTF-8 \

--username root \

--password 123456 \

--table t_salary_dist \

--columns "salary,count" \

--fields-terminated-by ',' \

--export-dir /user/hive/warehouse/jobdata.db/t_ods_salary

--将职位福利统计结果数据迁移到t_company_count表中

bin/sqoop export \

--connect jdbc:mysql://hadoop1:3306/JobData?characterEncoding=UTF-8 \

--username root \

--password 123456 \

--table t_company_count \

--columns "company,count" \

--fields-terminated-by ',' \

--export-dir /user/hive/warehouse/jobdata.db/t_ods_company

--将职位技能标签统计结果迁移到t_kill_count表中

bin/sqoop export \

--connect jdbc:mysql://hadoop1:3306/JobData?characterEncoding=UTF-8 \

--username root \

--password 123456 \

--table t_kill_dist \

--columns "kills,count" \

--fields-terminated-by ',' \

--export-dir /user/hive/warehouse/jobdata.db/t_ods_kill6.3平台环境搭建

创建后会出现web.xml is missing and

- 配置pom.xml

4.0.0

com.itcast.jobanalysis

job-web

0.0.1-SNAPSHOT

war

org.codehaus.jettison

jettison

1.1

org.springframework

spring-context

4.2.4.RELEASE

org.springframework

spring-beans

4.2.4.RELEASE

org.springframework

spring-webmvc

4.2.4.RELEASE

org.springframework

spring-jdbc

4.2.4.RELEASE

org.springframework

spring-aspects

4.2.4.RELEASE

org.springframework

spring-jms

4.2.4.RELEASE

org.springframework

spring-context-support

4.2.4.RELEASE

org.mybatis

mybatis

3.2.8

org.mybatis

mybatis-spring

1.2.2

com.github.miemiedev

mybatis-paginator

1.2.15

mysql

mysql-connector-java

5.1.32

com.alibaba

druid

1.0.9

com.alibaba

jconsole

com.alibaba

tools

jstl

jstl

1.2

javax.servlet

servlet-api

2.5

provided

javax.servlet

jsp-api

2.0

provided

junit

junit

4.12

com.fasterxml.jackson.core

jackson-databind

2.4.2

org.aspectj

aspectjweaver

1.8.4

${project.artifactId}

src/main/java

**/*.properties

**/*.xml

false

src/main/resources

**/*.properties

**/*.xml

false

org.apache.maven.plugins

maven-compiler-plugin

3.2

1.8

1.8

UTF-8

org.apache.tomcat.maven

tomcat7-maven-plugin

2.2

/

8080

- 在src/main/resources-spring文件夹下的applicationContext.xml中,编写spring的配置内容

- 在src/main/resources-spring文件夹下的springmvc.xml中,编写SpringMVC的配置内容

- 编写web.xml文件,配置spring监听器、编码过滤器和SpringMVC前端控制器等信息

job-web

index.html

contextConfigLocation

classpath:spring/applicationContext.xml

org.springframework.web.context.ContextLoaderListener

CharacterEncodingFilter

org.springframework.web.filter.CharacterEncodingFilter

encoding

utf-8

CharacterEncodingFilter

/*

data-report

org.springframework.web.servlet.DispatcherServlet

contextConfigLocation

classpath:spring/springmvc.xml

1

data-report

/

404

/WEB-INF/jsp/404.jsp

- 编写数据库配置参数文件db.properties,用于项目解耦

jdbc.driver=com.mysql.jdbc.Driver

jdbc.url=jdbc:mysql://hadoop1:3306/JobData?characterEncoding=utf-8

jdbc.username=root

jdbc.password=123456- 编写Mybatis-Config.xml文件,用于配置Mybatis相关配置

6.4实现图形化展示功能

实现职位区域分布展示

实现薪资分布展示

实现福利标签词云图

实现技能标签词云图

平台可视化展示