ELK Installation, Deployment, and Debugging (6): Installing and Configuring Logstash

1. Introduction

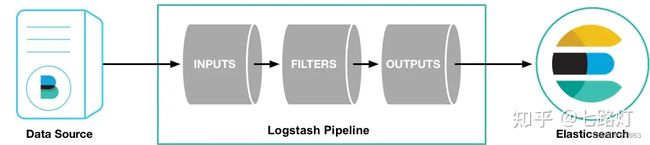

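Logstash is an open-source data collection engine with real-time pipelining capabilities. It can dynamically unify data from disparate sources and normalize that data into the destinations of your choice. It offers a large number of plugins that help parse, enrich, transform, and buffer any type of data.

A pipeline (Logstash Pipeline) is an independent unit of execution within Logstash. Each pipeline has two required elements, input and output, and one optional element, filter; the event processing pipeline coordinates their execution. Inputs and outputs support codecs, such as json and multiline, which let you encode or decode data as it enters or leaves the pipeline without using a separate filter.

inputs (input stage):

Generate events. Examples: file, kafka, beats.

filters (filter stage):

Process events; filters can be combined with conditionals. Examples: grok, mutate.

outputs (output stage):

Send event data to a particular destination. Once all outputs have processed an event, the event has finished its execution. Examples: elasticsearch, file.

codecs (encoders/decoders):

Essentially stream filters that operate as part of an input or output, making it easy to separate the transport of messages from the serialization process.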
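The three stages and the codecs described above can be sketched as a single pipeline file. This is a minimal illustration rather than a configuration from this series: the scratch directory, the file name pipeline-sketch.conf, and the added `stage` field are assumptions made for the example.

```shell
#!/bin/sh
# Sketch: write a minimal three-stage pipeline to a scratch directory.
# WORK_DIR and pipeline-sketch.conf are hypothetical names for this example.
WORK_DIR="$(mktemp -d "${TMPDIR:-/tmp}/logstash-sketch.XXXXXX")"
CONF_FILE="$WORK_DIR/pipeline-sketch.conf"

cat > "$CONF_FILE" <<'EOF'
input {
  # codec on the input: decode each incoming line as JSON
  stdin { codec => json }
}
filter {
  # optional stage: add a field so the filter's effect is visible
  mutate { add_field => { "stage" => "filtered" } }
}
output {
  # codec on the output: pretty-print the event
  stdout { codec => rubydebug }
}
EOF

echo "wrote $CONF_FILE"
cat "$CONF_FILE"
```

With Logstash installed, the file could be tried by piping a JSON line into it, for example `echo '{"user":"alice"}' | /usr/local/logstash/bin/logstash -f "$CONF_FILE"`.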

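Logstash is an open-source data processing pipeline: it accepts data from multiple sources, transforms it, and sends it to the specified destinations. Logstash implements its functionality through a plugin mechanism; the plugins can be downloaded from https://github.com/logstash-plugins

Logstash's work falls into three parts: receiving data, parsing/filtering/transforming it, and outputting it.

The corresponding plugins are input plugins, filter plugins, and output plugins.

Input plugins receive data and support many kinds of data sources:

file: reads a file line by line in real time, similar to the tail command.

syslog: listens on port 514 for syslog messages and parses them according to RFC 3164.

redis: reads data from a Redis server; here Redis acts as a message buffering component.

kafka: reads data from a Kafka cluster. The Kafka + Logstash architecture is generally used for large data volumes, with Kafka buffering and storing the data.

filebeat: Filebeat is a lightweight collector for text logs that uses few system resources. Logstash receives its data through the beats input.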
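As a sketch of the file input described above: by default the plugin behaves like tail and only picks up lines appended after startup, remembering how far it has read in a sincedb file. The two extra options below are existing options of the file input that make it read an existing file from the start on every run, which can be convenient while testing. The scratch directory and the file name file-input-sketch.conf are assumptions.

```shell
#!/bin/sh
# Sketch: a file input that re-reads /var/log/messages from the beginning.
# WORK_DIR and file-input-sketch.conf are hypothetical names for this example.
WORK_DIR="$(mktemp -d "${TMPDIR:-/tmp}/logstash-sketch.XXXXXX")"
CONF_FILE="$WORK_DIR/file-input-sketch.conf"

cat > "$CONF_FILE" <<'EOF'
input {
  file {
    path => "/var/log/messages"
    # default is "end" (tail-like); "beginning" applies to files not seen before
    start_position => "beginning"
    # do not persist the read position, so every run starts over (testing only)
    sincedb_path => "/dev/null"
  }
}
output {
  stdout { codec => rubydebug }
}
EOF

echo "wrote $CONF_FILE"
```

For a long-running pipeline the sincedb_path line would normally be dropped, so that a restart continues where the previous run stopped instead of re-sending the whole file.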

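Filter plugins filter, parse, and format data:

grok: the most important Logstash plugin. It can parse arbitrary text using regular expressions and ships with many built-in patterns and templates.

mutate: provides rich processing for basic data types, including type conversion, string handling, and field manipulation.

date: converts the time strings found in log records.

geoip: provides geographic information for an IP address, including country, province, and latitude/longitude. This is very useful for map visualizations and regional statistics.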
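To make the filter stage concrete, here is a hedged sketch that combines grok, date, and mutate on syslog-style lines such as those in /var/log/messages. The field names (syslog_timestamp, syslog_hostname, and so on) and the file name filter-sketch.conf are assumptions chosen for illustration; the grok patterns used (SYSLOGTIMESTAMP, SYSLOGHOST, DATA, POSINT, GREEDYDATA) are among the patterns shipped with Logstash.

```shell
#!/bin/sh
# Sketch: parse syslog-style lines with grok, then fix the timestamp and a type.
# WORK_DIR, filter-sketch.conf and the syslog_* field names are hypothetical.
WORK_DIR="$(mktemp -d "${TMPDIR:-/tmp}/logstash-sketch.XXXXXX")"
CONF_FILE="$WORK_DIR/filter-sketch.conf"

cat > "$CONF_FILE" <<'EOF'
input {
  stdin { }
}
filter {
  # split "Aug 29 10:37:13 localhost systemd-logind: Removed session 992." into fields
  grok {
    match => { "message" => "%{SYSLOGTIMESTAMP:syslog_timestamp} %{SYSLOGHOST:syslog_hostname} %{DATA:syslog_program}(?:\[%{POSINT:syslog_pid}\])?: %{GREEDYDATA:syslog_message}" }
  }
  # use the time from the log line as the event's @timestamp
  date {
    match => [ "syslog_timestamp", "MMM  d HH:mm:ss", "MMM dd HH:mm:ss" ]
  }
  # grok captures are strings; convert the PID to a number when present
  mutate {
    convert => { "syslog_pid" => "integer" }
  }
}
output {
  stdout { codec => rubydebug }
}
EOF

echo "wrote $CONF_FILE"
```

Lines that do not match the grok expression are still passed on, tagged with _grokparsefailure, so a quick look at the rubydebug output shows whether the pattern fits the logs.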

Output plugins send logs to their destination:

elasticsearch: sends data to Elasticsearch (ES).

file: writes data to a file.

redis: sends logs to Redis.

kafka: sends logs to Kafka.

2. Download

https://www.elastic.co/cn/downloads/logstash

A copy is also available in the elk folder on Baidu Cloud.

Link to the installation guide:

https://www.elastic.co/guide/en/logstash/7.9/installing-logstash.html#package-repositories

3. Installation

logstash

tar zxvf logstash-7.9.3.tar.gz -C /usr/local/

cd /usr/local/

mv logstash-7.9.3 logstash

logstash/bin/logstash is the startup executable.
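A quick way to confirm the unpacked installation is usable is to ask the launcher for its version. The sketch below assumes the install path used above; the LOGSTASH_HOME variable is introduced only for this example. It also checks for a java command on the PATH, since the startup logs later in this article show Logstash running on the system's OpenJDK.

```shell
#!/bin/sh
# Sketch: sanity-check the Logstash installation.
# LOGSTASH_HOME is a hypothetical variable; it defaults to the path used above.
LOGSTASH_HOME="${LOGSTASH_HOME:-/usr/local/logstash}"

if command -v java >/dev/null 2>&1; then
  echo "java found: $(command -v java)"
else
  echo "java not found on PATH" >&2
fi

if [ -x "$LOGSTASH_HOME/bin/logstash" ]; then
  # prints the Logstash version and exits
  "$LOGSTASH_HOME/bin/logstash" --version
  INSTALL_STATUS="found"
else
  echo "no executable at $LOGSTASH_HOME/bin/logstash" >&2
  INSTALL_STATUS="missing"
fi

echo "logstash: $INSTALL_STATUS"
```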

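4. Startup

cd /usr/local/logstash/bin

./logstash -e 'input{stdin{}} output{stdout{codec=>rubydebug}}'

Run in the background:

nohup ./logstash -e 'input{stdin{}} output{stdout{codec=>rubydebug}}' &

input: use an input plugin

stdin{}: read from standard input, such as keyboard input in the terminal

output: use an output plugin

stdout: write to standard output

codec: a codec plugin that defines the output format

rubydebug: prints events in a readable, JSON-like format; generally used for testing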
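For a long-running pipeline, one common pattern is to start Logstash from a configuration file with nohup, capture its console output in a file, and record the process ID so the process can be stopped later. The following is only a sketch under assumptions: the function names and the logstash.out and logstash.pid file names are invented for this example, and CONF_FILE must point at an existing pipeline file.

```shell
#!/bin/sh
# Sketch: start and stop Logstash in the background.
# LOGSTASH_HOME, OUT_FILE, PID_FILE and both function names are hypothetical.
LOGSTASH_HOME="${LOGSTASH_HOME:-/usr/local/logstash}"
OUT_FILE="${OUT_FILE:-$LOGSTASH_HOME/logstash.out}"
PID_FILE="${PID_FILE:-$LOGSTASH_HOME/logstash.pid}"

start_logstash_bg() {
  # $1: path to a pipeline configuration file
  nohup "$LOGSTASH_HOME/bin/logstash" -f "$1" > "$OUT_FILE" 2>&1 &
  echo "$!" > "$PID_FILE"
  echo "started logstash, pid $(cat "$PID_FILE")"
}

stop_logstash_bg() {
  # kill sends SIGTERM, which asks Logstash to shut down
  if [ -f "$PID_FILE" ]; then
    kill "$(cat "$PID_FILE")" && rm -f "$PID_FILE"
  fi
}

# Only start when a config file was given and the launcher exists.
if [ -n "${CONF_FILE:-}" ] && [ -f "$CONF_FILE" ] && [ -x "$LOGSTASH_HOME/bin/logstash" ]; then
  start_logstash_bg "$CONF_FILE"
else
  echo "set CONF_FILE to a pipeline file to start Logstash in the background"
fi
```

A pipeline that reads from stdin is a poor fit for background use because there is no terminal to type into; a file, beats, or kafka input is the more natural choice there.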

5. Testing

5.1 Test 1: standard input and standard output

[root@localhost bin]# ./logstash -e 'input{stdin{}} output{stdout{codec=>rubydebug}}'

Sending Logstash logs to /usr/local/logstash/logs which is now configured via log4j2.properties

[2023-08-29T10:22:35,442][INFO ][logstash.runner ] Starting Logstash {"logstash.version"=>"7.9.3",

"jruby.version"=>"jruby 9.2.13.0 (2.5.7) 2020-08-03 9a89c94bcc OpenJDK 64-Bit Server VM 25.242-b08 on 1.8.0_242

-b08 +indy +jit [linux-x86_64]"}

[2023-08-29T10:22:36,612][WARN ][logstash.config.source.multilocal] Ignoring the 'pipelines.yml' file because

modules or command line options are specified

[2023-08-29T10:22:40,097][INFO ][org.reflections.Reflections] Reflections took 77 ms to scan 1 urls, producing

22 keys and 45 values

[2023-08-29T10:22:42,937][INFO ][logstash.javapipeline ][main] Starting pipeline {:pipeline_id=>"main",

"pipeline.workers"=>4, "pipeline.batch.size"=>125, "pipeline.batch.delay"=>50, "pipeline.max_inflight"=>500,

"pipeline.sources"=>["config string"], :thread=>"#"}

[2023-08-29T10:22:44,380][INFO ][logstash.javapipeline ][main] Pipeline Java execution initialization time

{"seconds"=>1.41}

[2023-08-29T10:22:44,466][INFO ][logstash.javapipeline ][main] Pipeline started {"pipeline.id"=>"main"}

The stdin plugin is now waiting for input:

[2023-08-29T10:22:44,590][INFO ][logstash.agent ] Pipelines running {:count=>1, :running_pipelines=>

[:main], :non_running_pipelines=>[]}

[2023-08-29T10:22:45,030][INFO ][logstash.agent ] Successfully started Logstash API endpoint

{:port=>9600}

hello   # typed on the keyboard; the lines below are Logstash's output

{

"message" => "hello",

"@version" => "1",

"host" => "localhost.localdomain",

"@timestamp" => 2023-08-29T02:22:53.965Z

}

5.2 Test 2: configuration file + standard input/output

See logstash-1.conf.

Its contents are as follows:

[root@localhost logstash]# cat logstash-1.conf

input { stdin { }

}

output {

stdout { codec => rubydebug }

}

[root@localhost logstash]#
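Before starting a pipeline from a file, the configuration can be syntax-checked without processing any events by using the `-t` flag (short for `--config.test_and_exit`). A minimal sketch, assuming the install path and the logstash-1.conf file shown above; the check_config function and the RESULT variable are names made up for this example.

```shell
#!/bin/sh
# Sketch: validate a pipeline file and report the outcome.
# LOGSTASH_HOME, CONF_FILE, check_config and RESULT are hypothetical names.
LOGSTASH_HOME="${LOGSTASH_HOME:-/usr/local/logstash}"
CONF_FILE="${CONF_FILE:-$LOGSTASH_HOME/logstash-1.conf}"

check_config() {
  # -t parses the configuration, reports problems, and exits
  "$LOGSTASH_HOME/bin/logstash" -t -f "$1"
}

if [ -x "$LOGSTASH_HOME/bin/logstash" ] && [ -f "$CONF_FILE" ]; then
  if check_config "$CONF_FILE"; then
    RESULT="valid"
  else
    RESULT="invalid"
  fi
else
  RESULT="skipped"
fi

echo "config check for $CONF_FILE: $RESULT"
```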

Run:

./logstash -f ../logstash-1.conf

[root@localhost bin]# ./logstash -f ../logstash-1.conf

Sending Logstash logs to /usr/local/logstash/logs which is now configured via log4j2.properties

[2023-08-29T10:30:50,169][INFO ][logstash.runner ] Starting Logstash {"logstash.version"=>"7.9.3",

"jruby.version"=>"jruby 9.2.13.0 (2.5.7) 2020-08-03 9a89c94bcc OpenJDK 64-Bit Server VM 25.242-b08 on 1.8.0_242

-b08 +indy +jit [linux-x86_64]"}

[2023-08-29T10:30:51,542][WARN ][logstash.config.source.multilocal] Ignoring the 'pipelines.yml' file because

modules or command line options are specified

[2023-08-29T10:30:55,077][INFO ][org.reflections.Reflections] Reflections took 82 ms to scan 1 urls, producing

22 keys and 45 values

[2023-08-29T10:30:58,205][INFO ][logstash.javapipeline ][main] Starting pipeline {:pipeline_id=>"main",

"pipeline.workers"=>4, "pipeline.batch.size"=>125, "pipeline.batch.delay"=>50, "pipeline.max_inflight"=>500,

"pipeline.sources"=>["/usr/local/logstash/logstash-1.conf"], :thread=>"#"}

[2023-08-29T10:30:59,713][INFO ][logstash.javapipeline ][main] Pipeline Java execution initialization time

{"seconds"=>1.48}

[2023-08-29T10:30:59,829][INFO ][logstash.javapipeline ][main] Pipeline started {"pipeline.id"=>"main"}

The stdin plugin is now waiting for input:

[2023-08-29T10:31:00,060][INFO ][logstash.agent ] Pipelines running {:count=>1, :running_pipelines=>

[:main], :non_running_pipelines=>[]}

[2023-08-29T10:31:00,664][INFO ][logstash.agent ] Successfully started Logstash API endpoint

{:port=>9600}

5.3 Test 3: configuration file + file input + standard output

vi logstash-2.conf

input {

file {

path => "/var/log/messages"

}

}

output {

stdout {

codec=>rubydebug

}

}

[root@localhost logstash]# vi logstash-2.conf

[root@localhost logstash]# ./bin/logstash -f logstash-2.conf

Sending Logstash logs to /usr/local/logstash/logs which is now configured via log4j2.properties

[2023-08-29T10:36:25,171][INFO ][logstash.runner ] Starting Logstash {"logstash.version"=>"7.9.3",

"jruby.version"=>"jruby 9.2.13.0 (2.5.7) 2020-08-03 9a89c94bcc OpenJDK 64-Bit Server VM 25.242-b08 on 1.8.0_242

-b08 +indy +jit [linux-x86_64]"}

[2023-08-29T10:36:37,490][INFO ][logstash.agent ] Successfully started Logstash API endpoint

{:port=>9600}

{

"@timestamp" => 2023-08-29T02:37:14.162Z,

"path" => "/var/log/messages",

"host" => "localhost.localdomain",

"message" => "Aug 29 10:37:13 localhost systemd-logind: Removed session 992.",

"@version" => "1"

}

{

"@timestamp" => 2023-08-29T02:37:14.231Z,

"path" => "/var/log/messages",

"host" => "localhost.localdomain",

"message" => "Aug 29 10:37:13 localhost systemd-logind: Removed session 993.",

"@version" => "1"

}

5.4 Test 4: configuration file + file input + Kafka output

[root@localhost logstash]# cat logstash-3.conf

input {

file {

path => "/var/log/messages"

}

}

output {

kafka {

bootstrap_servers => "10.10.10.71:9092,10.10.10.72:9092,10.10.10.73:9092"

topic_id => "osmessages"

}

}

[root@localhost logstash]# ./bin/logstash -f logstash-3.conf

On 10.10.10.71, consume the topic with the Kafka console consumer:

./kafka-console-consumer.sh --bootstrap-server 10.10.10.71:9092,10.10.10.72:9092,10.10.10.73:9092 --topic osmessages

Messages are printed:

2023-08-29T02:49:42.383Z localhost.localdomain Aug 29 10:49:41 localhost systemd-logind: New session 996 of user

root.

2023-08-29T02:49:42.300Z localhost.localdomain Aug 29 10:49:41 localhost systemd-logind: New session 997 of user

root.

2023-08-29T02:49:42.380Z localhost.localdomain Aug 29 10:49:41 localhost systemd: Started Session 997 of user

root.

2023-08-29T02:49:42.384Z localhost.localdomain Aug 29 10:49:41 localhost systemd: Started Session 996 of user

root.

2023-08-29T02:49:43.465Z localhost.localdomain Aug 29 10:49:42 localhost dbus[745]: [system] Activating service

name='org.freedesktop.problems' (using servicehelper)

2023-08-29T02:49:43.467Z localhost.localdomain Aug 29 10:49:42 localhost dbus[745]: [system] Successfully

activated service 'org.freedesktop.problems'

2023-08-29T02:50:01.531Z localhost.localdomain Aug 29 10:50:01 localhost systemd: Started Session 998 of user

root.
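By default the console consumer only prints messages that arrive after it starts, so an idle log file can make the topic look empty. As a hedged sketch, the `--from-beginning` and `--max-messages` options of kafka-console-consumer.sh replay what is already stored in the topic and exit after a few records. The KAFKA_BIN path is an assumption, since the article does not state where Kafka is installed.

```shell
#!/bin/sh
# Sketch: replay the first few messages already stored in the topic.
# KAFKA_BIN is a hypothetical install path; brokers and topic come from the test above.
KAFKA_BIN="${KAFKA_BIN:-/usr/local/kafka/bin}"
BROKERS="10.10.10.71:9092,10.10.10.72:9092,10.10.10.73:9092"
TOPIC="osmessages"

if [ -x "$KAFKA_BIN/kafka-console-consumer.sh" ]; then
  "$KAFKA_BIN/kafka-console-consumer.sh" \
    --bootstrap-server "$BROKERS" \
    --topic "$TOPIC" \
    --from-beginning \
    --max-messages 5
  CONSUMER_STATUS="ran"
else
  echo "kafka-console-consumer.sh not found under $KAFKA_BIN" >&2
  CONSUMER_STATUS="skipped"
fi

echo "consumer check: $CONSUMER_STATUS"
```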

5.5 Test 5: configuration file + Filebeat port input + standard output

Log-producing server (filebeat) ---> logstash server

[root@localhost logstash]# cat logstash-4.conf

input {

beats {

port => 5044

}

}

output {

stdout {

codec => rubydebug

}

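}

[root@localhost logstash]# ./bin/logstash -f logstash-4.conf

Once started, Logstash listens on port 5044 on the local machine. This port can be set to any value in the configuration file, as long as it does not conflict with a port already in use on the system.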

[root@localhost logstash]# netstat -anp | grep 5044

tcp6 0 0 :::5044 :::* LISTEN 10545/java

[root@localhost logstash]#

To go with this test, modify the configuration file on the Filebeat server:

# ------------------------------ Logstash Output -------------------------------

output.logstash:
  hosts: ["10.10.10.74:5044"]

## filebeat uses logstash as its output

# Optional SSL. By default is off.

# List of root certificates for HTTPS server verifications

#ssl.certificate_authorities: ["/etc/pki/root/ca.pem"]

# Certificate for SSL client authentication

#ssl.certificate: "/etc/pki/client/ce

After modifying the Filebeat configuration, restart Filebeat:

nohup /usr/local/filebeat/filebeat -e -c /usr/local/filebeat/filebeat-to-logstash.yml &

In testing, the Filebeat log content appeared in the Logstash output.
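If nothing shows up on the Logstash side, Filebeat's built-in `test` subcommands can help narrow the problem down before restarting: `test config` checks the YAML file and `test output` tries to connect to the configured output. A minimal sketch, assuming the paths used in the command above; the FILEBEAT_HOME, FILEBEAT_CONF, and CHECK variables are names introduced for this example.

```shell
#!/bin/sh
# Sketch: check the Filebeat config file and its connection to Logstash.
# FILEBEAT_HOME, FILEBEAT_CONF and CHECK are hypothetical names.
FILEBEAT_HOME="${FILEBEAT_HOME:-/usr/local/filebeat}"
FILEBEAT_CONF="${FILEBEAT_CONF:-$FILEBEAT_HOME/filebeat-to-logstash.yml}"

if [ -x "$FILEBEAT_HOME/filebeat" ] && [ -f "$FILEBEAT_CONF" ]; then
  # validate the configuration file
  "$FILEBEAT_HOME/filebeat" test config -c "$FILEBEAT_CONF"
  # try to reach the output defined in the file (here: Logstash on port 5044)
  "$FILEBEAT_HOME/filebeat" test output -c "$FILEBEAT_CONF"
  CHECK="ran"
else
  echo "filebeat or its config not found under $FILEBEAT_HOME" >&2
  CHECK="skipped"
fi

echo "filebeat check: $CHECK"
```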

5.6 Test 6: configuration file + Filebeat port input + output to Kafka

Scenario:

Log-producing server (filebeat) ---> logstash server ---> kafka server

[root@localhost logstash]# cat logstash-5.conf

input {

beats {

port => 5044

}

}

output {

kafka {

bootstrap_servers => "10.10.10.71:9092,10.10.10.72:9092,10.10.10.73:9092"

topic_id => "osmessages"

}

}

filebeat server:

The filebeat.yml configuration is the same as in test 5.

# ------------------------------ Logstash Output -------------------------------

output.logstash:

  hosts: ["10.10.10.74:5044"]

logstash server:

Configuration:

[root@localhost logstash]# cat logstash-5.conf

input {

beats {

port => 5044

}

}

output {

kafka {

# codec => json   # uncomment to send events as JSON; in my experience the JSON output is harder to read

bootstrap_servers => "10.10.10.71:9092,10.10.10.72:9092,10.10.10.73:9092"

topic_id => "osmessages"

}

}

./bin/logstash -f logstash-5.conf

kafka server:

./kafka-console-consumer.sh --bootstrap-server 10.10.10.71:9092,10.10.10.72:9092,10.10.10.73:9092 --topic osmessages

Consuming the topic shows the log entries forwarded from the Filebeat server.
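On the codec choice in this test: the kafka output defaults to the plain codec, which is why the consumer in test 4 showed one "timestamp host message" line per event. With `codec => json` each Kafka record carries the whole event as a JSON object, which is noisier to read on the console but keeps every field for a downstream consumer. The sketch below writes such a variant; the file name kafka-json-sketch.conf and the scratch directory are assumptions.

```shell
#!/bin/sh
# Sketch: the same beats -> kafka pipeline, but with JSON-encoded records.
# WORK_DIR and kafka-json-sketch.conf are hypothetical names for this example.
WORK_DIR="$(mktemp -d "${TMPDIR:-/tmp}/logstash-sketch.XXXXXX")"
CONF_FILE="$WORK_DIR/kafka-json-sketch.conf"

cat > "$CONF_FILE" <<'EOF'
input {
  beats {
    port => 5044
  }
}
output {
  kafka {
    # send the full event as JSON instead of the default plain text line
    codec => json
    bootstrap_servers => "10.10.10.71:9092,10.10.10.72:9092,10.10.10.73:9092"
    topic_id => "osmessages"
  }
}
EOF

echo "wrote $CONF_FILE"
```

If JSON is used here, a Logstash kafka input reading the same topic would typically also set `codec => json` so that the fields are restored instead of arriving as one long message string.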

5.7 Test 7: configuration file + Kafka input + output to ES

Log-producing server (filebeat) ---> kafka server --(pull data)--> logstash server ---> ES

Logstash configuration:

[root@localhost logstash]# cat f-kafka-logs-es.conf

input {

kafka {

bootstrap_servers => "10.10.10.71:9092,10.10.10.72:9092,10.10.10.73:9092"

topics => ["osmessages"]

}

}

output {

elasticsearch {

hosts => ["10.10.10.65:9200","10.10.10.66:9200","10.10.10.67:9200"]

index => "osmessageslog-%{+YYYY-MM-dd}"

}

}

./bin/logstash -f f-kafka-logs-es.conf

nohup /usr/local/logstash/bin/logstash -f /usr/local/logstash/f-kafka-logs-es.conf &
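To confirm that events actually reach Elasticsearch, the daily indices created by the `index` setting can be listed through the `_cat/indices` API. This is a sketch under assumptions: it queries only the first ES node from the configuration above, assumes plain HTTP without authentication, and derives today's index name from the UTC date because the `%{+YYYY-MM-dd}` part is filled in from the event's @timestamp, which is in UTC. The variable names are invented for this example.

```shell
#!/bin/sh
# Sketch: list the indices written by the pipeline above.
# ES_HOST, INDEX_PATTERN, TODAY_INDEX and URL are hypothetical names.
ES_HOST="${ES_HOST:-10.10.10.65:9200}"
INDEX_PATTERN="osmessageslog-*"
TODAY_INDEX="osmessageslog-$(date -u +%Y-%m-%d)"
URL="http://$ES_HOST/_cat/indices/$INDEX_PATTERN?v"

echo "expecting an index named $TODAY_INDEX"

if command -v curl >/dev/null 2>&1; then
  # short timeouts so the check fails fast when ES is unreachable
  curl -s --connect-timeout 3 --max-time 5 "$URL" \
    || echo "could not reach Elasticsearch at $ES_HOST" >&2
else
  echo "curl not found; open $URL in a browser instead" >&2
fi
```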

filebeat.yml configuration:

# ------------------------------ KAFKA Output -------------------------------

output.kafka:
  enabled: true
  hosts: ["10.10.10.71:9092","10.10.10.72:9092","10.10.10.73:9092"]
  version: "2.0.1"
  topic: '%{[fields][log_topic]}'
  partition.round_robin:
    reachable_only: true
  worker: 2
  required_acks: 1
  compression: gzip
  max_message_bytes: 10000000

nohup /usr/local/filebeat/filebeat -e -c /usr/local/filebeat/filebeat.yml &

This completes the output to Kafka.
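One detail the output section depends on: the topic `%{[fields][log_topic]}` is resolved per event from a custom field named log_topic, so the input section of filebeat.yml has to define that field. The article does not show its input section, so the following is only a sketch of what it might look like; the log path and the value osmessages are assumptions chosen to match the topic that Logstash reads in this test.

```shell
#!/bin/sh
# Sketch: a Filebeat input that sets fields.log_topic for the Kafka output.
# WORK_DIR and filebeat-inputs-sketch.yml are hypothetical names for this example.
WORK_DIR="$(mktemp -d "${TMPDIR:-/tmp}/filebeat-sketch.XXXXXX")"
SNIPPET_FILE="$WORK_DIR/filebeat-inputs-sketch.yml"

cat > "$SNIPPET_FILE" <<'EOF'
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/messages
  # custom field referenced by topic: '%{[fields][log_topic]}'
  fields:
    log_topic: osmessages
EOF

echo "wrote $SNIPPET_FILE"
cat "$SNIPPET_FILE"
```

Keeping the topic in a field lets several inputs in one filebeat.yml send to different Kafka topics without changing the output section.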

Done.