PyTorch Notes: Classification

Table of Contents

- Preface

- I. Importing Libraries

- II. Data Processing

- III. Building the Model

- IV. Training Loop

- V. Model Evaluation

- Summary

Preface

This note uses PyTorch to classify MNIST digits, wrapping and loading a local copy of the dataset with TensorDataset and DataLoader.

I. Importing Libraries

import numpy as np
import torch
from torch import nn, optim
from torch.utils.data import TensorDataset, DataLoader  # dataset utilities
from load_mnist import load_mnist  # local dataset loader

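II. Data Processing

1. Load the local dataset, cast the images to float32 and the labels to an integer type (int64), and build tensors.

2. Wrap the training and test sets with TensorDataset and DataLoader.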

# Load the data
x_train, y_train, x_test, y_test = \
    load_mnist(normalize=True, flatten=False, one_hot_label=False)

# Convert to tensors: float32 images, int64 class-index labels
x_train = torch.from_numpy(x_train.astype(np.float32))
y_train = torch.from_numpy(y_train.astype(np.int64))
x_test = torch.from_numpy(x_test.astype(np.float32))
y_test = torch.from_numpy(y_test.astype(np.int64))

# Wrap the tensors in datasets and loaders
train_dataset = TensorDataset(x_train, y_train)
test_dataset = TensorDataset(x_test, y_test)
batch_size = 64
train_loader = DataLoader(dataset=train_dataset,
                          batch_size=batch_size,
                          shuffle=True)   # reshuffle the training data every epoch
test_loader = DataLoader(dataset=test_dataset,
                         batch_size=batch_size,
                         shuffle=False)   # order does not matter for evaluation
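To see what the loaders yield, here is a minimal sketch that swaps the local MNIST files for random stand-in data. The sample count of 100 and the variable names `dataset`, `loader`, `xb`, and `yb` are assumptions for illustration and do not come from the original code.

```python
import numpy as np
import torch
from torch.utils.data import TensorDataset, DataLoader

# Stand-in data with MNIST-like shapes (assumption: 100 images of 28x28)
x = np.random.rand(100, 28, 28).astype(np.float32)
y = np.random.randint(0, 10, size=100).astype(np.int64)

dataset = TensorDataset(torch.from_numpy(x), torch.from_numpy(y))
loader = DataLoader(dataset, batch_size=64, shuffle=True)

print(len(dataset))   # number of samples: 100
print(len(loader))    # number of batches: 2 (64 + 36)

for xb, yb in loader:
    # The last batch is smaller because 100 is not a multiple of 64
    print(xb.shape, xb.dtype, yb.shape, yb.dtype)
```

Each iteration returns one (inputs, labels) pair, which is why the loops in the later sections can unpack `data` into `x` and `y`.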

III. Building the Model

Before the fully connected layer, each input batch must be flattened from (batch_size, 28, 28) to (batch_size, 784).
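The flattening step can be checked in isolation. This is a small sketch on a dummy batch of zeros. The comparison with `torch.flatten` is an added illustration, not something the original code uses.

```python
import torch

x = torch.zeros(64, 28, 28)        # one dummy batch of 28x28 images
flat = x.view(x.shape[0], -1)      # keep the batch dimension, merge the rest
print(flat.shape)                  # torch.Size([64, 784])

# torch.flatten with start_dim=1 produces the same result
same = torch.flatten(x, start_dim=1)
print(torch.equal(flat, same))     # True
```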

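nn.CrossEntropyLoss already includes Softmax (it applies log-softmax internally), so the model does not need a Softmax layer of its own.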
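The claim about the loss function can be verified numerically. The sketch below uses made-up logits and targets (assumptions for illustration) to compare nn.CrossEntropyLoss with an explicit log-softmax followed by the negative log-likelihood loss. It then shows what happens when probabilities are passed in place of raw scores.

```python
import math
import torch
from torch import nn
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 10)              # raw scores: 4 samples, 10 classes
target = torch.tensor([1, 0, 4, 9])      # class indices (int64)

criterion = nn.CrossEntropyLoss()
ce = criterion(logits, target)
manual = F.nll_loss(F.log_softmax(logits, dim=1), target)
print(torch.allclose(ce, manual))        # True

# If probabilities are passed instead of logits, softmax is applied twice.
# Even a perfect one-hot "prediction" then cannot reach a loss near zero:
one_hot = F.one_hot(target, num_classes=10).float()
floor = criterion(one_hot, target)
print(floor.item(), math.log(1 + 9 / math.e))   # both about 1.46
```

With 10 classes, a loss computed on Softmax outputs can never drop below log(1 + 9/e), which is one practical reason to feed raw logits to the loss.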

# Define the model by subclassing nn.Module
class FC(nn.Module):
    def __init__(self):
        super().__init__()
        self.fc1 = nn.Linear(784, 10)

    def forward(self, x):
        # Flatten each image to 784 values and return raw logits;
        # no Softmax here because nn.CrossEntropyLoss applies log-softmax itself
        return self.fc1(x.view(x.shape[0], -1))

# Instantiate the model, loss function, and optimizer
model = FC()
loss_function = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.1)

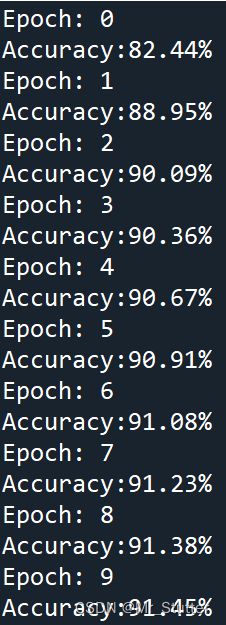

IV. Training Loop

Take x and y from the DataLoader one batch at a time, then run the forward and backward passes.

for epoch in range(10):
    print('Epoch:', epoch)
    for i, data in enumerate(train_loader):
        x, y = data
        y_pred = model(x)                 # forward pass
        loss = loss_function(y_pred, y)
        optimizer.zero_grad()             # clear gradients from the previous step
        loss.backward()                   # backward pass
        optimizer.step()                  # update the parameters
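The loop above prints only the epoch number. One possible extension, sketched here on hypothetical synthetic data (256 samples, 20 features, 3 classes, none of which come from the original post), is to accumulate the loss and report its mean per epoch so that progress is visible.

```python
import torch
from torch import nn, optim
from torch.utils.data import TensorDataset, DataLoader

torch.manual_seed(0)

# Hypothetical stand-in data: labels come from a random linear map,
# so a single linear layer is able to learn them
x = torch.randn(256, 20)
y = (x @ torch.randn(20, 3)).argmax(dim=1)
loader = DataLoader(TensorDataset(x, y), batch_size=64, shuffle=True)

model = nn.Linear(20, 3)
loss_function = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.1)

epoch_losses = []
for epoch in range(10):
    total = 0.0
    for xb, yb in loader:
        loss = loss_function(model(xb), yb)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        total += loss.item() * xb.shape[0]   # weight each batch by its size
    epoch_losses.append(total / len(loader.dataset))
    print(f'Epoch {epoch}: mean loss {epoch_losses[-1]:.4f}')
```

Weighting by batch size keeps the mean correct when the last batch is smaller than the others.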

V. Model Evaluation

Evaluate the model on the test set.

Use .item() to extract the Python number from a single-element tensor.

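correct = 0
model.eval()                              # switch to evaluation mode
with torch.no_grad():                     # gradients are not needed for evaluation
    for i, data in enumerate(test_loader):
        x, y = data
        y_pred = model(x)
        _, y_pred = torch.max(y_pred, 1)  # index of the highest score is the predicted class
        correct += (y_pred == y).sum().item()
acc = correct / len(test_dataset)
print('Accuracy:{:.2%}'.format(acc))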
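The two tensor operations used in the evaluation loop, `torch.max` along the class dimension and `.item()`, can be tried on a tiny hand-written example. The scores and labels below are made up for illustration.

```python
import torch

scores = torch.tensor([[0.1, 2.0, 0.3],
                       [1.5, 0.2, 0.1],
                       [0.0, 0.1, 3.0]])
labels = torch.tensor([1, 0, 1])

# torch.max with a dim argument returns (max values, their indices)
values, pred = torch.max(scores, 1)
print(pred)                           # tensor([1, 0, 2])

n_correct = (pred == labels).sum()    # still a 0-dim tensor
print(n_correct.item())               # 2, now a plain Python int
print(n_correct.item() / len(labels)) # accuracy on this toy batch
```

Converting with `.item()` before accumulating keeps `correct` an ordinary Python integer, so the final division yields a float that the format string can print as a percentage.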

Summary

This note records how to use TensorDataset and DataLoader. Building and training the model is similar to the previous post, "PyTorch Notes: Regression".